Abstract

This paper suggests a new method to extract the initial movement of moving objects in digital image data obtained in visual sensor networks. First, consecutive images are received as input. Then, the frames are partitioned into nonoverlapping square blocks of pixels, and finally, the block-based motion vectors, which represent the movement information between two adjacent frames, are extracted from the received images using a block-matching algorithm. The extracted motion vectors are subsequently applied to an outlier-elimination algorithm called robust estimation to discriminate between the background motion vectors and those of noise or moving objects. The motion vectors corresponding to the noise or objects are clustered with an unsupervised clustering algorithm to segment the individual moving objects. Experimental results prove that the proposed method can effectively detect the initial movement of objects in various indoor and outdoor environments.

1. Introduction

Owing to significant progress in visual sensor technology, wireless communication, and pattern recognition algorithms, the deployment of wide-area visual sensor networks has become practical and cost effective [1]. These networks have a wide range of commercial and military applications such as video surveillance, smart home, traffic monitoring, and antiterrorism [2–4]. Among these, the technique of automatically detecting the initial movement of objects is used in many applications including security, face recognition and tracking, gesture analysis, extraction of vehicular volume of traffic statistics, analysis of aerial photographs, and human-motion understanding [5–9]. This technique of detecting moving objects is basic and important. It is, however, a very difficult problem because of the problems resulting from the movement of the camera when capturing scenes in any direction, noise generated by various factors in capturing the scenes, partial occlusion of moving objects, and self-transformation of deformable objects.

In recent years, various research methods have been undertaken to effectively detect moving objects. These methods involve the use of optical flow measurement [10], difference image [11], model generation [12], or boundary-based method such as snake [13] and saliency maps [14].

The method using optical flows [10] can measure the direction of the object movement while it is sensitive to illumination changes in the surrounding environment. The method using difference images [11] detects objects by applying a difference operation to two adjacent images in a fixed-camera-setting environment. The algorithm is simple and relatively accurate. However, there is a constraint that the camera must be fixed. The model generation-based method [12] is a technique of detecting objects by generating a distribution model of moving objects or background. This has the advantage that it can trace the objects robustly. However, it has difficulty detecting the initial objects for generating the model. The boundary-based method [13] can accurately find the boundary region of the objects using a method of energy minimization. It has the disadvantage that it is difficult to detect moving objects relatively quickly. The method using saliency maps [14] forms a saliency map using the density of the pixel distribution in the time and space domain and uses this to detect moving objects. It detects moving objects relatively robustly, though it requires an effective method of integrating the map.

Although several techniques of detecting moving objects have been studied, the task still remains to be achieved effectively. In particular, in a condition where the camera is not fixed, it is difficult to use difference images, and the task of initially detecting moving objects accurately remains a challenge.

Therefore, in this paper, we propose a method of detecting the initial movement of objects using an outlier-elimination algorithm and unsupervised clustering based on block-based motion vectors in an environment where the camera is not fixed. The initial movement information of the objects detected in this paper can be used very effectively for such multiple tasks as object model generation, object tracking, and server device initialization.

The remainder of this paper is organized as follows. Section 2 presents the technique to extract the block-based motion vectors from an input image. Section 3 explains the method to filter outlier motion vectors using robust estimation. Section 4 describes the method of segmenting the moving objects through an unsupervised clustering algorithm. In Section 5, we present experimental results to demonstrate that the suggested method can robustly detect the initial movement of objects compared to other methods. The conclusions and future work are discussed in Section 6.

2. Extraction of Block-Based Motion Vectors

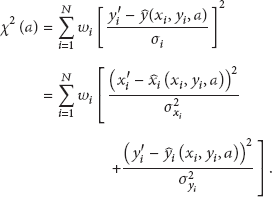

The proposed method, unlike the method using difference images, is not constrained by the requirement that the camera should be fixed. It can effectively detect the initial movement of objects in a free environment with considerable accuracy. Figure 1 briefly shows the overall configuration of the algorithm for detecting initial object movement.

Overall configuration of the algorithm.

As shown in Figure 1, the proposed system first receives input images and extracts N × N square block-based motion vectors representing the movement from adjacent images. The extracted motion vectors are applied to the algorithm for eliminating outlier vectors to complete the minimization step. Then, the unsupervised clustering algorithm is applied to cluster the motion vectors identifying the moving objects. Moving objects are detected when the size of the region of the clustered motion vectors is more than a certain threshold value.

The proposed method utilizes expanding block matching to extract the motion vectors more accurately [15]. The block-matching partitions the images into N × N square blocks of pixels, defines the features of each block, and applies a matching metric consisting of the defined features to the blocks within the search area to determine the block with the highest similarity. Then, the displacement of the centers between the reference block and the matched block is defined as a motion vector.

The expanding-block-matching algorithm expands the size of the matching template slightly and executes the matching process repetitively. It repeats this until it extracts a reliable maximum matching similarity, which, thereby, results in a decline of mismatching. The iteration of the block matching is determined through an evaluation function.

The evaluation function judges the matching discrimination of the block with the maximum matching similarity in the matching-similarity distribution calculated in the current search area and determines if the block matching should be repeated. In this paper, if the evaluation function has a positive value, we repeat the block matching while expanding the matching template. If it has a negative value, we use the matched corresponding block to determine the motion vector. The displaced matching-similarity function used in the proposed method is defined as follows:

In (1), n is the size of a block,

The expanding block matching performs the matching between the blocks by repetitively expanding the size of the matching template until it extracts the maximum matching similarity with discrimination. Two evaluation criteria are applied. First, the criterion for the expansion of the matching template is set. We evaluate whether there is a discrimination in the maximum matching similarity of the distribution of the matching similarity extracted from the given search area. Based on the block position with the maximum matching similarity

Figure 2 shows the change of matching similarity distribution as the template expands. The white circle represents the position with maximum matching similarity. As seen in Figure 2, when the size of template becomes 13 × 13, we determine that the maximum similarity with discrimination is extracted.

Similarity distribution with expansion of matching template.

3. Elimination of Outlier Vectors

The proposed method calculates camera movement information by applying the motion vectors extracted using expanding block matching to the outlier-elimination algorithm called robust estimation [16]. The robust estimation is a statistical method of calculating the parameters of the model with only nonoutliers by eliminating outliers included in the input data. The proposed method employs a robust estimation to calculate the camera motion parameters using the motion vectors as the input for the robust estimation. Therefore, the nonoutliers in the proposed method are the motion vectors in the background region including camera motion, while the outliers are the motion vectors, excluding the motion vectors in the background region, that are formed by object movement or other noises.

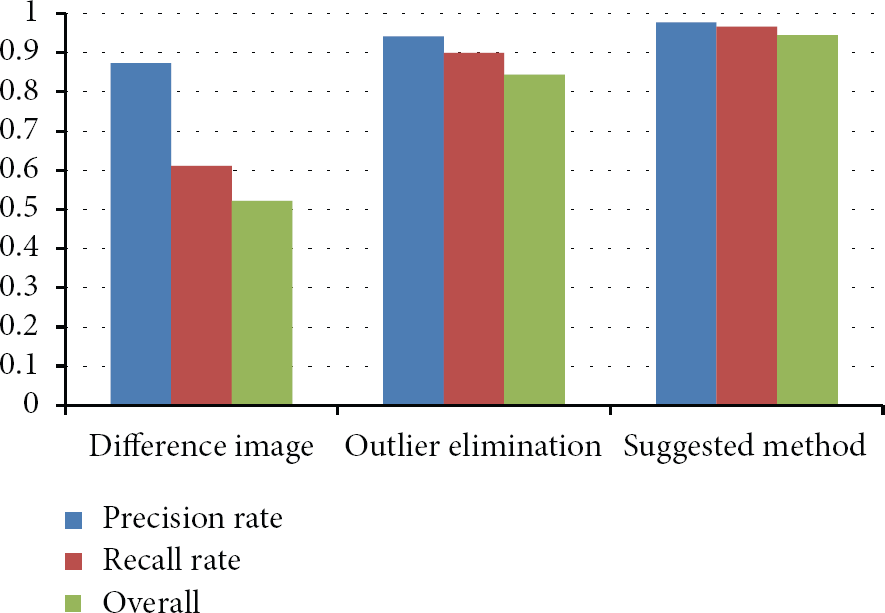

The robust estimation usually consists of two main steps. The first step eliminates the outliers from the input data. If outliers are included in the data, the parameters optimizing the model converge incorrectly. Therefore, the statistical distribution of the input data is analyzed to eliminate the outliers. In the second step, the model parameters are estimated using only the nonoutliers. In the process of finding the model parameters, a minimization technique is used. However, the existing robust estimation separates the nonoutliers from the outliers using a threshold value in the initial step of the minimization technique. Thus, as a step of the minimization technique repeats with the inaccurate separation between nonoutliers and outliers, the model parameters are incorrectly updated. Furthermore, each step of the minimization technique recalculates the weight function ignoring the correlation between the weighting factors in each step. Therefore, an irregular vibration of the weighted values occurs. Thus, the proposed method uses adaptive robust estimation that solves this problem.

In the proposed method, the measurement model used to perform outlier elimination is shown in (2). Generally, (2) is called an affine model [17, 18]:

In (2), a is the camera motion parameter that can be easily converted to viewing parameters such as tilt angle, pan angle, swing angle, and focal length using the existing camera conversion relation formula. In addition,

The adaptive robust estimation employed in the proposed method uses a continuous weight function, unlike the existing robust estimation that uses a binary weight function. In this paper, for the continuous weight function, a sigmoid function [19, 20] is used. The sigmoid weight function expresses the degree of belonging of nonoutliers and outliers more effectively than the binary weight function. Therefore, it can minimize the error that might occur in the repetitive initial step of the minimization technique. The weight function used in the proposed method is defined as

In (4),

The adaptive robust estimation is defined to ensure that it reflects the weights of the previous step in calculating the weights of the current step of the iteration process. Moreover, as the minimization algorithm repeats, it separates nonoutliers from outliers more flexibly by adjusting the sigmoid function in the form of a hard limit, as shown in Figure 3. The adjustment of the weighted values in the proposed method is performed by adjusting two factors

Adjusting the sigmoid function: (a)→(b)→(c)→(d).

4. Object Segmentation through Clustering

Because the motion vectors are processed by the outlier-elimination algorithm, camera-movement information parameters are extracted from the motion vectors of the background regions. The motion vectors corresponding to the moving object regions are judged as outliers as the repetitive procedure of the robust estimation is executed. Therefore, only the moving object part of the motion vectors is segmented. Thus, the proposed method clusters the motion vectors corresponding to the moving object regions, and segments them into object units to use as the initial movement information of each moving object.

Sample clustering generally includes different types of algorithms such as K-means, ISODATA (iterative self-organizing data analysis), and fuzzy C-means [21–23]. In this paper, the proposed method utilizes the ISODATA method, which is known to be an unsupervised clustering algorithm [24–26]. Ball and Hall suggested the ISODATA method, one of the most popular nearest-centroid clustering methods [24]. The algorithm does not require the number of clusters. Given a distance measure, the primary logic of the scheme is an updating loop that reassigns points to the nearest cluster each time the center is moved. The center is then moved because the points have been reassigned.

Typically, the basic internal ISODATA loop contains the following four stages:

it determines the initial parameters including the cluster center; it assigns the input patterns to their closest clusters; it calculates the cluster-related parameters and checks the conditions of split and merge; it performs split or merge; otherwise, it stops.

This is an iterative process that should converge to a stable cluster configuration from any starting set of cluster centers—if the number of clusters remains fixed. However, an incorrect initial choice of cluster centers could lead to an undesirable clustering. This iterative process is common to a number of squared-error algorithms. The difference is in the selection of the initial cluster centers and the criteria for splitting and merging the existing clusters. The above processes are performed repetitively until the parameters maintain a stable value. Algorithm 1 briefly describes the steps of the ISODATA algorithm.

Step 1. Read initial cluster means of points Step 2. Group each sample of the feature space to its nearest cluster center. Step 3. After all samples have been grouped, compute the coordinate values of each cluster center. Step 4. If any Step 5. If a split occurred in the previous step, regroup the data and compute new coordinates for the cluster centers. Step 6. If any cluster has too few members, eliminate that cluster. Step 7. If a cluster was eliminated in the previous step, regroup and compute new coordinates for the cluster centers. Step 8. If the distance between the two cluster centers is smaller than some Step 9. If any two clusters were combined in the previous step, compute the new coordinates for the cluster center. Step 10. Repeat Steps 2 through 9 until steady state occurs or until

Figure 4 shows the overall flowchart of the ISODATA clustering algorithm. The number of clusters finally extracted when the ISODATA algorithm is applied indicates the number of detected moving objects, and the mean of each cluster is the center position of each moving object.

Clustering algorithm.

5. Experimental Results

To test the proposed method, a computer with an Intel Pentium Core 2 Duo CPU and 4 GB of memory was used. The operating system was Microsoft Windows 7. Microsoft Visual C++ was used to implement the algorithm. For the input images, various types of image data received through visual sensor networks were used.

Figure 5 illustrates an example of extracting motion vectors showing the movement information using the expanding-block-matching algorithm from the adjacent images. Figure 5(a) is a scene in which a puppy is running to its owner, who is sitting. Figure 5(b) is an image in which a person is moving on a motor bike towards the front of the screen. Figure 5(c) is a scene in which a man is slowly walking to the left side of the screen. Figure 5(d) is an image in which a person is bouncing a ping-pong ball with the ball and part of the person's arm moving. As seen in the results of the extraction of motion vectors in Figure 5, some errors occur in the images captured in the various environments; however, we observe that the motion vectors are relatively correct.

Extracted motion vectors.

Figure 6 is the result of eliminating the noise judged as outliers when the motion vectors extracted by the block-matching algorithm were accepted as input for the outlier-elimination algorithm and the part of the motion vectors corresponding to the moving object region. In Figure 6(a), the camera movement was very fine; thus, the moving object regions were eliminated relatively correctly because they were judged as outliers. In Figure 6(b), the motion vectors corresponding to the moving objects and noise were eliminated; however, a considerable portion of the noise was not eliminated because of the overall low quality of the input images. In Figure 6(c), there was significant noise due to the low image resolution; yet, the motion vectors corresponding to the noise were eliminated relatively correctly. In Figure 6(d), the motion vectors corresponding to the moving objects and noise were correctly eliminated.

Outliers removal.

Figure 7 shows the results of eliminating motion vectors with very small regions by ISODATA clustering and those with extremely low compactness of regions among the motion vectors judged as outliers in Figure 6. The remainder was determined as moving object regions. Figures 7(a), 7(b), and 7(c) showed that it could detect the movement of moving objects correctly, except for several block regions, which were eliminated because they were outliers in the moving object regions. Figure 7(d) detected the ping-pong ball and the arm region movement relatively accurately, though it did not eliminate one region.

Object detection by clustering.

To compare the performance of the proposed method, experiments were conducted with a method using difference images, general outlier-elimination method, and the proposed method using outlier elimination and clustering. Figure 8 shows examples of detecting the movement of objects using (a) the method of using difference images and (b) the general outlier-elimination method. Each algorithm was applied to various image data that included the movement of objects. For the performance evaluation, the success and failure of the initial object detecting function of each algorithm were measured basically.

Results of existing methods.

To define the accuracy metric of the proposed initial object detection algorithm, we employ precision and recall rates that are usually used in pattern recognition and information retrieval with binary classification [27]. The precision, which is also called the positive predictive value, is the fraction of detected objects that are relevant, while the recall, which is also known as sensitivity, is the fraction of relevant objects that are detected. In simple terms, high recall means that an algorithm returned most of the relevant results, while high precision means that an algorithm returned substantially more relevant results than irrelevant. In the ideal case, when no false negatives and false positives are returned, we have precision = recall = one. However, neither precision nor recall alone can accurately assess the detection quality. In particular, recall can easily be maximized at the expense of a poor precision by returning all possible correspondences. On the other side, a high precision can be achieved at the expense of a poor recall by returning only few (correct) correspondences. Hence, it is necessary to consider both precision and recall or a combined measure. Therefore, in this paper, we employ the overall value for evaluation as

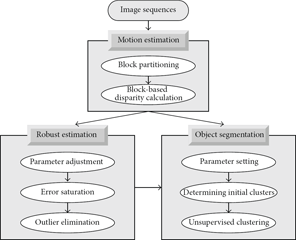

The computed precision, recall, and overall values for the existing and proposed object movement detection methods are shown in Table 1, and we can note that our approach outperforms others.

Overall value.

Figure 9 depicts the three values for each method. As can be seen from Figure 7, the method that involves the use of difference images operates accurately in environments where the camera is fixed. However, except for environments with very little camera movement, it did not detect the moving objects. The existing outlier-elimination algorithm detected moving objects with considerable accuracy, though its performance was not as good as the proposed method combining the clustering algorithm. The performance of the proposed method differed considerably depending on the images used; however, as the results of the experiment on various images prove, it detected the initial movement of objects relatively consistently and thus shows relatively good overall rates in accuracy. The proposed method failed to detect objects with very fast movement or to extract motion vectors from the images correctly with a sudden change in lighting.

Performance comparison.

6. Conclusions

This paper proposed a method for effectively detecting the initial movement of objects from dynamic images captured from natural environments where the camera was not fixed. The proposed method first splits the input image into

The method of detecting the initial movement of objects proposed in this paper is significant in that it can effectively detect object movement in various images captured in environments where the camera is not fixed. This is one of the most difficult problems for most techniques of detecting moving objects. Furthermore, the information regarding the initial movement of the objects detected in this paper is used positively in subsequent processing steps, for example, tracking the model of the objects and setting up the default values of the servo equipment controlling the camera motion.

In the future, we aim to work on a method for generating a deformable model of the moving objects detected by the proposed method and for tracing them robustly. Furthermore, we will focus on adaptively adjusting the initial parameters used in the unsupervised clustering algorithm through repetitive and empirical experiments.

Footnotes

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.

Acknowledgments

This research was supported by Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education, Science and Technology (2011-0021984).