Abstract

This study proposes a method for face recognition and tracking using stereo vision. The method differs from the feedback detection methods generally used with sensors: it disregards unimportant environmental changes and improves the overall performance of the recognition and tracking functions. Dual-CCD cameras on the visual system capture images of faces. Through image preprocessing, determination of the moving target, and localization of the target center, the captured image is matched with a sample image so that the robot can visually recognize and track stereo objects, including faces. The system also transmits the images wirelessly to a remote computer. A scheme that uses a hash function to strengthen authentication messages in wireless communications is proposed. Because the scheme also provides an encryption function, it improves authentication for wireless communications.

1. Introduction

The information technology industry has developed vigorously, and computer vision has grown in importance. Advances in computer technology have directed increasing attention to CPUs and DSPs. In addition, the annual decline in hardware cost has significantly reduced the computation time and cost of image processing and has thus increased the practicality of computer vision systems and their applications. Although such systems have improved greatly in terms of the theory, algorithms, and practical applications of image processing, the core technologies of computer vision still require breakthroughs and innovation. For instance, if a leaf falls on a moving car, a computer vision system should not mistake the leaf for an obstacle and brake or turn the car around [1–5].

To control data transmission in a wireless environment and to prevent illegal access to resources, users must be authorized [6]. Privacy is an important subject in wireless communications: users require protection against identity theft and against being traced. Anonymity techniques address this problem by preventing attackers from stealing user identities.

Chaos systems are characterized by their dynamic response and by high sensitivity to variations in initial values and control parameters, together with properties such as nonperiodicity and nonconvergence. Over the past 20 years, many methodologies and profound mathematical theories of chaos systems have been proposed for applications such as image encryption [7–9], secure communications [10, 11], and image processing [12, 13]. Huang et al. [7] proposed a scheme for implementing quasioptimal chaos random codes and for selecting the initial values of a chaos system to encrypt digital color images and enhance communication security. Pareek et al. [8] presented an encryption approach for the secure transfer of images based on chaotic logistic maps. Li et al. [10] proposed a scheme that improves cryptosystem sensitivity by using a magnifying-glass concept to enlarge and observe minor parameter mismatches.
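The sensitivity to initial values described above can be seen with the logistic map used in [8]. The following Python sketch is only an illustration of that property, not the encryption approach of [8]; the control parameter r = 4 (a standard fully chaotic setting) and the initial value 0.3 are assumptions chosen for the demonstration.

```python
def logistic_orbit(x0: float, r: float = 4.0, steps: int = 80) -> list:
    """Iterate the logistic map x[n+1] = r * x[n] * (1 - x[n]) starting from x0.

    r = 4.0 is an assumed, standard fully chaotic setting.
    """
    orbit = [x0]
    for _ in range(steps):
        x = orbit[-1]
        orbit.append(r * x * (1.0 - x))
    return orbit


# Two trajectories whose initial values differ by only 1e-10 (assumed values).
a = logistic_orbit(0.3)
b = logistic_orbit(0.3 + 1e-10)

for n in (0, 10, 20, 30, 40, 50):
    print(f"n={n:2d}  |a-b| = {abs(a[n] - b[n]):.3e}")
```

For r = 4 the gap roughly doubles per iteration on average, so after a few dozen steps the two sequences are effectively unrelated.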

This paper is organized into five sections. Section 1 (Introduction) outlines the study theme, describes the motivation and purpose of the study, reviews the literature, and discusses applications of the related techniques. Section 2 introduces the hardware structure used in this paper, briefly describes the specifications and control method of each device, and details the processing flow for image and distance estimation, including the method used to complete each step. Section 3 proposes the new secure authentication scheme. Section 4 presents the experimental data for shape identification and distance estimation and discusses the experimental results. Section 5 summarizes and discusses the findings of this paper.

2. Face Detecting and Tracking Methods

There are many methods for tracking objects, yet current techniques cannot fully account for changes across the whole environment. To overcome this limitation, 3D (stereo) vision and image recognition matching are used to estimate the distance to a face. The technique estimates the distance to a face and compares it with samples through image preprocessing, determination of the moving target and its center, and control of the cameras to track the moving face in real time. By triangulating the target location, the robot can also determine the distance to a face and track it.
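For two parallel cameras, as on the platform described in Section 2.2, triangulation reduces to the standard relation Z = f·B/d, where f is the focal length in pixels, B is the baseline between the cameras, and d is the horizontal disparity of the same point in the left and right images. The sketch below assumes rectified images with coordinates measured from each image center; the function name and the numbers in the example are hypothetical and are not taken from the paper.

```python
def stereo_depth(x_left: float, x_right: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d of a point seen by two parallel, rectified cameras.

    x_left, x_right: horizontal pixel coordinates of the same point (for example,
        the face center) in the left and right images, measured from each image center.
    focal_px: focal length expressed in pixels.
    baseline_m: distance between the two optical centers in meters.
    """
    disparity = x_left - x_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity


# Hypothetical values: 800 px focal length, 12 cm baseline, 40 px disparity.
print(stereo_depth(120.0, 80.0, focal_px=800.0, baseline_m=0.12))  # about 2.4 m
```

Depth is inversely proportional to disparity, so a one-pixel disparity error matters more for faces farther from the cameras.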

2.1. Digital Image Processing

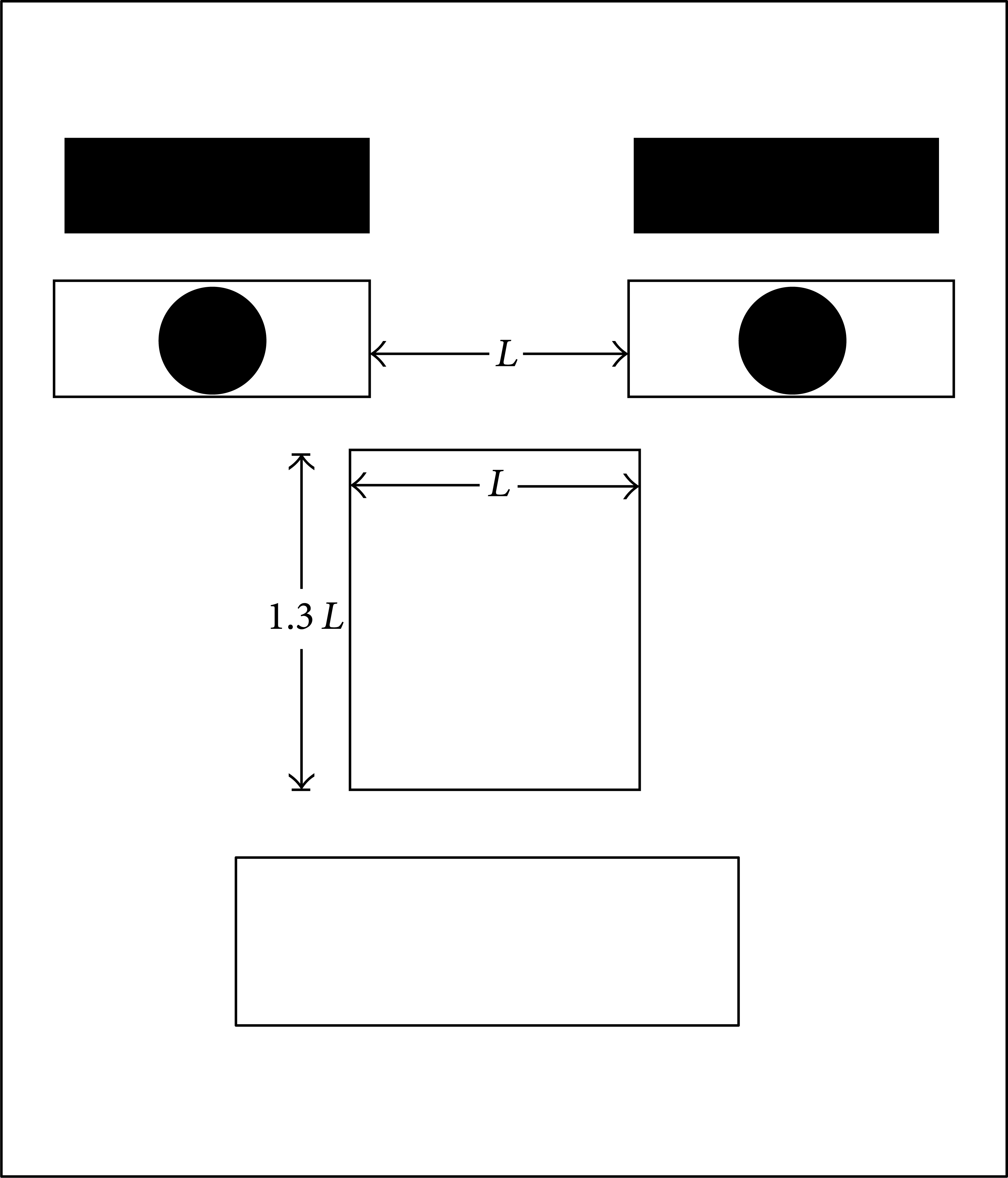

As the processing block diagram in Figure 1 shows, the original image captured by the CCD camera is processed into useful image information, which then becomes the actuating signal input to the motor drive system. The main processing steps are image preprocessing, identification of the moving target, and localization of the target center.

Image processing flowchart.

2.1.1. Grayscale Manipulation

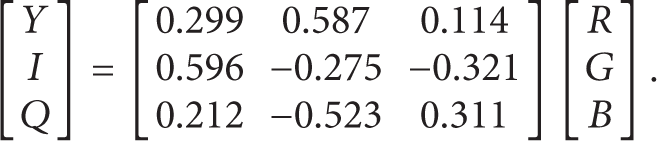

The image captured by a color CCD camera is an RGB color image, stored as a 3D matrix composed of three 2D matrices, one each for the R, G, and B channels. Color information is not needed, and discarding it allows the moving target to be recognized quickly. A grayscale image is a single 2D matrix, so it requires less storage and computation during image processing than the 3D matrix of an RGB color image. Therefore, RGB is converted into YIQ according to the following formula [5]:
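Only the luminance component Y of YIQ is needed as the grayscale image. A minimal NumPy sketch, assuming the commonly used NTSC luma weights (0.299, 0.587, 0.114); these weights are an assumption and may differ slightly from those in [5].

```python
import numpy as np


def rgb_to_gray(rgb: np.ndarray) -> np.ndarray:
    """Return the Y (luminance) channel of YIQ as a 2D grayscale image.

    rgb: H x W x 3 array with channels ordered R, G, B.
    Uses the standard NTSC luma weights (assumed, not taken from [5]).
    """
    weights = np.array([0.299, 0.587, 0.114])
    # Matrix product over the last axis collapses H x W x 3 to H x W.
    return rgb[..., :3].astype(np.float64) @ weights


frame = np.zeros((2, 2, 3), dtype=np.uint8)
frame[0, 0] = (255, 255, 255)  # white pixel
frame[0, 1] = (255, 0, 0)      # pure red pixel
gray = rgb_to_gray(frame)
print(gray.shape)  # (2, 2)
print(gray)
```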

2.1.2. Image Subtraction

Image subtraction exploits the consistency of the background across consecutive images. The 2D grayscale matrices of two consecutive images are subtracted, and the absolute value is taken. The resulting matrix reflects the difference between the two images, and the moving direction and position of the object can then be determined through edge detection.
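The subtraction step can be sketched in NumPy as follows. The cast to a signed type avoids wrap-around when subtracting unsigned 8-bit images; the binarization threshold of 25 gray levels is a hypothetical value, not one reported in this paper.

```python
import numpy as np


def frame_difference(prev_gray: np.ndarray, curr_gray: np.ndarray, threshold: int = 25) -> np.ndarray:
    """Binary motion mask from the absolute difference of two consecutive frames."""
    # int16 holds differences in [-255, 255] without uint8 wrap-around.
    diff = np.abs(curr_gray.astype(np.int16) - prev_gray.astype(np.int16))
    return (diff > threshold).astype(np.uint8)


# A bright 2x2 object on a uniform background moves one pixel to the right.
prev = np.full((5, 5), 100, dtype=np.uint8)
prev[1:3, 1:3] = 200
curr = np.full((5, 5), 100, dtype=np.uint8)
curr[1:3, 2:4] = 200

mask = frame_difference(prev, curr)
print(mask)
```

Only the trailing and leading columns of the object change; the overlapping column cancels out, which is why edge information is needed afterward to recover the object's shape and position.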

2.1.3. Detection of Image Edges

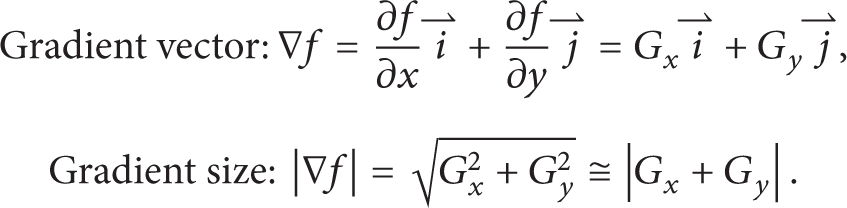

Image edge detection reveals the size, shape, and position of an object in an image. Edge detection retains the most important information while reducing the amount of data, which speeds up image processing. In this study, Sobel edge detection [14] is used, as expressed by (2).

The 3 × 3 image block in Figure 2 is used to obtain the following:

The magnitude of the gradient vector at each pixel is compared with a threshold. If the magnitude exceeds the threshold, the pixel is set to 1 and treated as an edge pixel of the object; otherwise, it is set to 0 and treated as a background pixel.

3 × 3 Image block.
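The Sobel step and the threshold test described above can be sketched in NumPy. The sketch assumes the standard Sobel kernels and the gradient magnitude $\sqrt{G_x^2 + G_y^2}$, which may differ in detail from (2) and (3); the threshold value is a hypothetical choice, and border pixels are simply set to 0.

```python
import numpy as np

# Standard Sobel kernels (assumed): SOBEL_X responds to left-right intensity change.
SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=np.float64)
SOBEL_Y = SOBEL_X.T


def filter3x3(gray: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Correlate a 2D image with a 3x3 kernel; border pixels are left at 0."""
    img = gray.astype(np.float64)
    h, w = img.shape
    out = np.zeros((h, w))
    for dy in range(3):
        for dx in range(3):
            out[1:h - 1, 1:w - 1] += kernel[dy, dx] * img[dy:h - 2 + dy, dx:w - 2 + dx]
    return out


def sobel_edges(gray: np.ndarray, threshold: float = 100.0) -> np.ndarray:
    """1 where the gradient magnitude exceeds the threshold (edge), else 0 (background)."""
    magnitude = np.hypot(filter3x3(gray, SOBEL_X), filter3x3(gray, SOBEL_Y))
    return (magnitude > threshold).astype(np.uint8)


# A vertical step edge between columns 2 and 3.
step = np.zeros((5, 6))
step[:, 3:] = 255.0
edges = sobel_edges(step)
print(edges)
```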

2.1.4. Image Restoration

Edges may become discontinuous after edge detection, and in serious cases this can impair identification of the real shape or of a continuous surface. Therefore, many studies apply image restoration (inpainting), for example, when extracting mouth features. Inpainting methods include dilation and erosion, and restoration functions include smoothing images, removing noise, bridging gaps, and reconnecting discontinuous edges.
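Bridging a gap in a broken edge with dilation followed by erosion (a morphological closing) can be sketched in NumPy as below. The 3 × 3 square structuring element is an assumption; treating pixels outside the image as foreground during erosion keeps the closing from eating into objects that touch the border.

```python
import numpy as np


def dilate(mask: np.ndarray) -> np.ndarray:
    """Binary dilation with a 3x3 square structuring element."""
    h, w = mask.shape
    padded = np.pad(mask.astype(bool), 1, mode="constant", constant_values=False)
    out = np.zeros((h, w), dtype=bool)
    for dy in range(3):
        for dx in range(3):
            out |= padded[dy:dy + h, dx:dx + w]
    return out


def erode(mask: np.ndarray) -> np.ndarray:
    """Binary erosion with a 3x3 square; pixels outside the image count as foreground."""
    h, w = mask.shape
    padded = np.pad(mask.astype(bool), 1, mode="constant", constant_values=True)
    out = np.ones((h, w), dtype=bool)
    for dy in range(3):
        for dx in range(3):
            out &= padded[dy:dy + h, dx:dx + w]
    return out


def close_gaps(mask: np.ndarray) -> np.ndarray:
    """Morphological closing: dilation followed by erosion."""
    return erode(dilate(mask)).astype(np.uint8)


# A horizontal edge broken by a one-pixel gap at column 4.
edge = np.zeros((5, 9), dtype=np.uint8)
edge[2, 2:4] = 1
edge[2, 5:7] = 1
print(close_gaps(edge)[2])  # [0 0 1 1 1 1 1 0 0]
```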

2.1.5. Sample Matching

Sample matching [15] uses the training sample images stored in the system. The sample images are processed into simplified feature values, which are then matched against the information extracted from the real-time consecutive images. During the final match, the threshold can be adjusted to control the required degree of similarity.
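The similarity measure is not specified here; as one possibility, the sketch below scores two binarized images of equal size by the fraction of pixels on which they agree and accepts the match when the score reaches an adjustable threshold. Both the measure and the default threshold of 0.9 are assumptions.

```python
import numpy as np


def match_score(sample: np.ndarray, candidate: np.ndarray) -> float:
    """Fraction of pixels on which two equally sized binary images agree (0.0 to 1.0)."""
    if sample.shape != candidate.shape:
        raise ValueError("sample and candidate must have the same shape")
    return float(np.mean(sample.astype(bool) == candidate.astype(bool)))


def is_match(sample: np.ndarray, candidate: np.ndarray, threshold: float = 0.9) -> bool:
    """Accept the candidate when its similarity reaches the adjustable threshold."""
    return match_score(sample, candidate) >= threshold


sample = np.zeros((2, 5), dtype=np.uint8)
candidate = sample.copy()
candidate[0, 0] = 1  # one of ten pixels differs
print(match_score(sample, candidate))               # 0.9
print(is_match(sample, candidate, threshold=0.95))  # False
```

Raising the threshold makes the matcher stricter, which is the adjustment described above.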

2.1.6. Feature Extraction

(1) Extraction of Eye Features. Figure 4 shows the extracted eye features. The eyes are generally detected first because they are more noticeable and stable than other facial features. Lam used the concavity of the eyes for detection, whereas Kawaguchi used their shape [16, 17]. After the facial features are located, processing moves from the whole image to local images around each feature, and the feature points of the eye are extracted from its local image. In this local image, the eye has a stronger horizontal edge component than the other features. Therefore, the image is sharpened with the Prewitt operator, implemented with the 3 × 3 horizontal mask shown in Figure 3.

Prewitt horizontal mask coefficient.

Eye feature points and binarization.

Sharpening the image through the Prewitt operator yields the positions of the canthus and upper eyelid as the feature points of the eye.

The features of the eyes can be extracted based on these feature points. The eye image is binarized through the image preprocessing steps of grayscale manipulation, binarization, and image restoration, as shown in Figure 4.
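The horizontal-edge sharpening step can be sketched as follows. The sketch assumes the standard Prewitt horizontal mask, which may differ in sign or layout from Figure 3; taking the row with the strongest response as a candidate eyelid line is a hypothetical simplification, not the paper's exact feature-point rule.

```python
import numpy as np

# Standard Prewitt mask for horizontal edges (intensity change from row to row).
PREWITT_H = np.array([[-1, -1, -1],
                      [0, 0, 0],
                      [1, 1, 1]], dtype=np.float64)


def prewitt_horizontal(gray: np.ndarray) -> np.ndarray:
    """Absolute Prewitt horizontal-edge response; border pixels are left at 0."""
    img = gray.astype(np.float64)
    h, w = img.shape
    out = np.zeros((h, w))
    for dy in range(3):
        for dx in range(3):
            out[1:h - 1, 1:w - 1] += PREWITT_H[dy, dx] * img[dy:h - 2 + dy, dx:w - 2 + dx]
    return np.abs(out)


def strongest_edge_row(gray: np.ndarray) -> int:
    """Hypothetical helper: index of the row with the largest total edge response."""
    return int(np.argmax(prewitt_horizontal(gray).sum(axis=1)))


# Bright skin above a darker eyelid region (illustrative values).
patch = np.full((6, 5), 200.0)
patch[3:, :] = 50.0
print(prewitt_horizontal(patch)[:, 2])
print(strongest_edge_row(patch))  # 2
```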

(2) Extraction of Mouth Features. Figure 5 shows the extracted mouth features. Besides the eyes, the lips can be used to verify the position of the face. In most cases, the mouth is therefore roughly located from the triangular geometric relationship between the eyes and the mouth [18, 19]. After the face is located, the local image of the mouth is extracted and processed. Mouth features are often extracted by template matching or from the brightness along the vertical axis. However, the shape of the mouth varies with facial expression, so the Prewitt horizontal edge operator is also used to extract feature points around the lips.

Mouth feature points and binarization.

The features of the mouth can be extracted based on these feature points. The mouth image is binarized through the image preprocessing steps of grayscale manipulation, binarization, and image restoration, as shown in Figure 5.
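The rough mouth localization from the eye-mouth triangle can be illustrated with a small geometric sketch. It assumes an isosceles triangle: the mouth center lies on the perpendicular bisector of the segment joining the eye centers, at `ratio` times the inter-eye distance below its midpoint. The function name and the default ratio of 1.0 are hypothetical and would need tuning against real facial proportions.

```python
import math


def estimate_mouth_center(left_eye, right_eye, ratio=1.0):
    """Hypothetical rough mouth position from two eye centers (x, y) in image coordinates.

    Image y grows downward. The mouth is placed on the perpendicular bisector of
    the eye segment, ratio * (inter-eye distance) away from its midpoint.
    """
    (x1, y1), (x2, y2) = left_eye, right_eye
    mid_x, mid_y = (x1 + x2) / 2.0, (y1 + y2) / 2.0
    dx, dy = x2 - x1, y2 - y1
    dist = math.hypot(dx, dy)
    if dist == 0:
        raise ValueError("eye centers must be distinct")
    # Unit normal to the eye line; with the left eye at smaller x it points down the face.
    nx, ny = -dy / dist, dx / dist
    return (mid_x + ratio * dist * nx, mid_y + ratio * dist * ny)


print(estimate_mouth_center((100, 100), (160, 100)))  # (130.0, 160.0)
```

A local search window around this estimate can then be passed to the Prewitt-based feature extraction.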

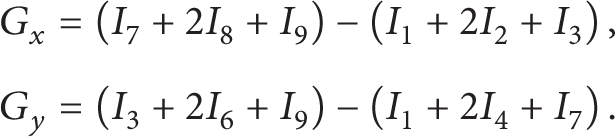

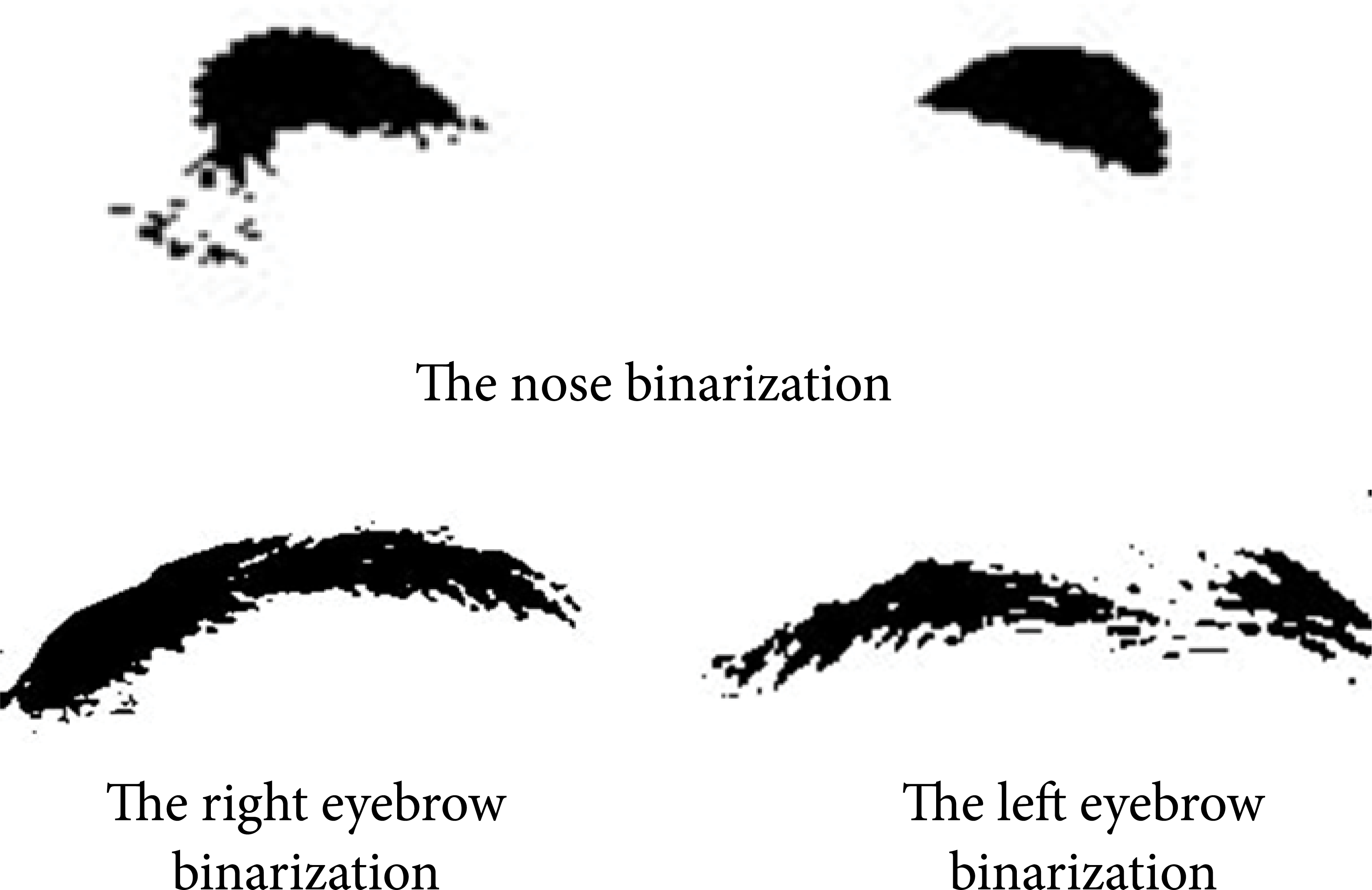

(3) Extraction of Eyebrow and Nose Features. Previous studies have located the face based on its oval shape, and facial features based on their symmetry, relative proportions, or positions. Features have also been located based on changes and proportions of coordinates and grayscale values. However, differences in eyebrow density produce different characteristics, and the apparent position of the nose varies with the viewing angle. Therefore, when locating the features of the human face, the eyes or the mouth are located first. They serve as the fundamental basis for positioning, and the computed facial proportions are then used to locate the nose and the eyebrows [20, 21]. The nose features are extracted from the nostrils, based on their shape and dark grayscale values. The extracted features of the nose, eyes, and mouth are then used to extract the eyebrow features based on facial proportions, as shown in Figure 6.

Nose and eyebrow binarization.

Based on the facial proportions shown in Figure 7, the feature values of all extracted facial features are processed to obtain the final sample image of facial features, shown in Figure 8. Finally, the processed training image and the image captured by the CCD camera undergo sample feature matching, and the target to be tracked is selected according to a weighted similarity score.

Facial proportion.

Synthesized binary image.
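A weighted similarity score over several facial features can be sketched as a normalized weighted sum of per-feature scores, with the stored sample of highest score chosen as the matching reference. The feature names, weights, and example scores below are hypothetical; the paper does not list them.

```python
def weighted_similarity(scores: dict, weights: dict) -> float:
    """Normalized weighted sum of per-feature similarity scores (each in [0, 1])."""
    total = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total


def best_sample(candidates: dict, weights: dict) -> str:
    """Name of the stored sample whose weighted similarity is highest."""
    return max(candidates, key=lambda name: weighted_similarity(candidates[name], weights))


# Hypothetical weights: eyes and mouth are trusted more than nose and eyebrows.
WEIGHTS = {"eyes": 0.4, "mouth": 0.3, "nose": 0.15, "eyebrows": 0.15}

candidates = {
    "sample_A": {"eyes": 0.5, "mouth": 0.5, "nose": 0.5, "eyebrows": 0.5},
    "sample_B": {"eyes": 0.9, "mouth": 0.8, "nose": 0.2, "eyebrows": 0.2},
}
print(best_sample(candidates, WEIGHTS))  # sample_B
```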

2.1.7. Shifting of Moving Target

When the target moves with only minute deformation (i.e., the target does not deform noticeably between two consecutive images captured rapidly within a short period), the moving target can be shifted to compensate for the delayed detection of the moving edge, as shown in Figure 9.

Schematic diagram of shifting of moving target.

Offset value:

where $x_d$ and $y_d$ are the offset values of the target between the second and third images. The target shifts to the right when $x_d$ is positive and to the left otherwise; it moves downward when $y_d$ is positive and upward otherwise. This is expressed in (4).
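One simple way to obtain $x_d$ and $y_d$, assumed here rather than taken from (3) and (4), is to difference the centroids of the target's binary mask in two consecutive images. Image coordinates are used, so a positive $x_d$ means a shift to the right and a positive $y_d$ a shift downward, matching the sign convention above.

```python
import numpy as np


def centroid(mask: np.ndarray) -> tuple:
    """(x, y) centroid of the nonzero pixels of a binary mask."""
    ys, xs = np.nonzero(mask)
    if xs.size == 0:
        raise ValueError("mask contains no target pixels")
    return float(xs.mean()), float(ys.mean())


def target_offset(prev_mask: np.ndarray, curr_mask: np.ndarray) -> tuple:
    """Offset (x_d, y_d) of the target between two consecutive masks."""
    (x0, y0), (x1, y1) = centroid(prev_mask), centroid(curr_mask)
    return x1 - x0, y1 - y0


prev = np.zeros((6, 6), dtype=np.uint8)
prev[1:3, 1:3] = 1
curr = np.zeros((6, 6), dtype=np.uint8)
curr[2:4, 3:5] = 1
print(target_offset(prev, curr))  # (2.0, 1.0): moved right and down
```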

2.1.8. Position of Target Center

The target position in the latest image can be obtained through the digital image processing algorithm and trigonometry. The image information is then converted into an actuating signal that controls the motor [5, 14]. The relationship between the target and the angle from the image center is shown in Figure 10.

Target and angle of image center.

Assume that L is the focal length, A is the edge of the field of view, A′ is the corresponding edge of the image, a is the distance between the image center and A′, B is the position of the target, B′ is the position of the target in the image, and b is the distance between the image center and B′. θ is the angle of view. If the angle between BB′ and the image center is θ′, then

The position of the target center is computed with (5), and the result becomes more accurate as the image resolution increases.
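Under the pinhole model of Figure 10, and assuming θ is measured from the optical axis to the edge of the field of view (half the full angle of view), $\tan\theta = a/L$ and $\tan\theta' = b/L$. Eliminating the focal length L gives $\theta' = \arctan\big((b/a)\tan\theta\big)$, so a and b can be measured directly in pixels. The sketch below implements this relation as an illustration under that assumption, not as a reproduction of (5); the function name and example values are hypothetical.

```python
import math


def target_angle_deg(b_px: float, a_px: float, half_view_deg: float) -> float:
    """Angle theta' (degrees) between the optical axis and the target.

    Assumes tan(theta) = a / L and tan(theta') = b / L for the same focal length L,
    so theta' = atan((b / a) * tan(theta)). b is signed: its sign gives the side.
    """
    tan_theta = math.tan(math.radians(half_view_deg))
    return math.degrees(math.atan(b_px / a_px * tan_theta))


# Hypothetical 640-pixel-wide image (a = 320 px) with a 30-degree half angle of view.
print(round(target_angle_deg(160, 320, 30.0), 1))  # 16.1
print(round(target_angle_deg(320, 320, 30.0), 1))  # 30.0
```

Because b is quantized to whole pixels, a finer image resolution yields a finer angle, consistent with the remark above.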

2.2. System Construction

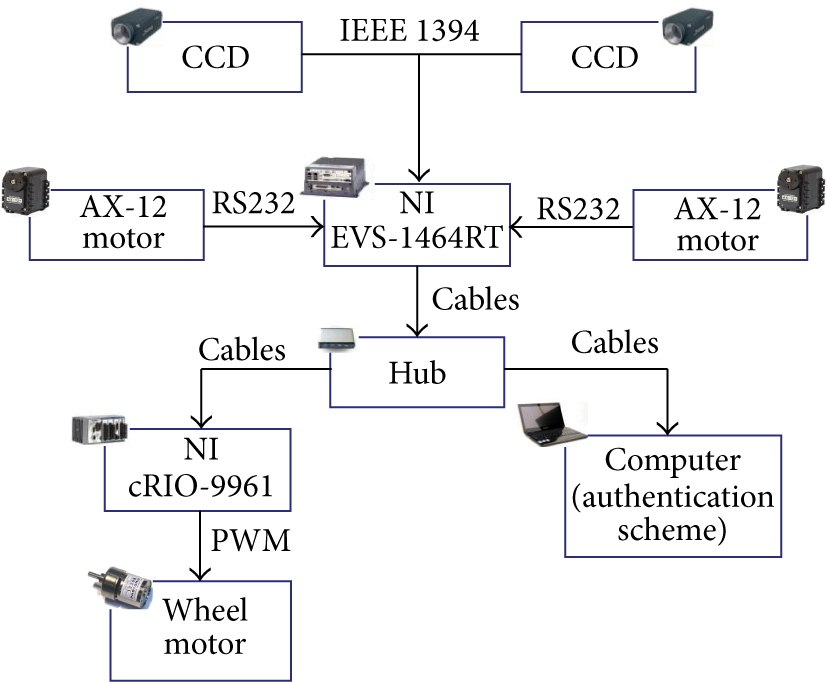

Two color CCD cameras are mounted parallel to each other and are moved by a two-wheel-driven sliding platform. The cameras are controlled by a personal computer, and LabVIEW is used as the development environment for image processing and the control algorithms. The image captured by the CCD cameras is converted into an RGB (3D matrix) image through an image capture board. Because a dual-CCD stereo imaging system is used, the two images must be captured simultaneously. These images undergo digital image processing at the main control terminal. After the algorithms are applied, the target is separated from the background, and the edge, size, and position of the object are recognized. The information is transferred via the IEEE 1394 interface to immediately update the motor control parameters in the controller. The controller then sends a signal to the motor driver based on the deviation between the target position and the center of the platform. The motor drives the CCD cameras to correct this deviation and to track and recognize the face. Figure 11 shows the overall mobile platform structure. The CCD cameras are used to record the repeated procedures [4].

Structure of the moving robot platform.
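The deviation-to-motor step can be illustrated with a simple proportional controller. This is an assumed control law, not the controller used on the platform: the gain and dead zone are hypothetical, and the command is clipped to the 45 cm/s maximum platform speed reported in Section 4.

```python
def speed_command(target_x: float, center_x: float, gain: float = 0.1,
                  max_speed: float = 45.0, dead_zone: float = 5.0) -> float:
    """Proportional speed command in cm/s for the sliding platform.

    Positive values move the cameras toward increasing image x.
    gain (cm/s per pixel) and dead_zone (pixels) are hypothetical tuning values.
    """
    error = target_x - center_x
    if abs(error) <= dead_zone:
        return 0.0  # target is close enough to the center; do not move
    command = gain * error
    return max(-max_speed, min(max_speed, command))  # respect the speed limit


print(speed_command(322, 320))   # 0.0 (inside the dead zone)
print(speed_command(420, 320))   # 10.0
print(speed_command(1000, 320))  # 45.0 (clipped)
```

The dead zone keeps the platform from oscillating around the center because of single-pixel noise in the target position.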

3. Proposed Scheme with Chaos System

The developed robot system supports remote monitoring and image capture over wireless channels. To strengthen authentication, a new scheme is proposed that uses a hash function to enhance authentication messages in wireless communications. Because the scheme also provides an encryption function, it improves authentication for wireless communications. The unique characteristics of chaos systems make them suitable for message encryption. First, trajectories are nonperiodic and nonconvergent, so they spread throughout the system. Second, within a limited region, trajectories are constantly stretched and folded. Last, because these systems are extremely sensitive to initial values, different initial values produce numerous unrelated chaos sequences. This study proposes a method that uses a chaos system to generate chaos sequences for encryption. The encryption messages are generated by high-security algorithms that are difficult to break. The encryption and decryption model used in this study is based on chaos theory, which is briefly introduced below. The notation used throughout the paper is listed in Table 1.

Units and corresponding symbols.

The chaos states of the Lorenz system are used as the encryption parameter in this paper. The Lorenz system is a three-dimensional chaos system. In the proposed scheme, t is a timestamp, and the chaos encryption function generates three states at time t. The timestamp t is always different. It is not used directly as a parameter in the authentication message; instead, it is used to generate the chaos states of the chaos system. We define a chaos encryption function X(t) that contains seven combinations of chaos states and generates different values of the chaos states under different conditions. The chaos encryption function is described as (6).

The encryption function computes the remainder q of the timestamp t divided by 7, as defined by the following equation:

$$q = t \bmod 7$$

There are therefore seven possible remainders, satisfying 0 ≤ q < 7. The remainder of the timestamp determines which combination of states, $X(t_U)$, is used as the secret parameter in the proposed scheme. The proposed chaos-based security scheme consists of the following phases.
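The generation of chaos states and the selection of a combination by $q = t \bmod 7$ can be sketched as follows. The exact form of X(t) in (6) may differ, so several details here are assumptions: the classic Lorenz parameters σ = 10, ρ = 28, β = 8/3; the initial state (1, 1, 1); fourth-order Runge-Kutta integration with step 0.01 up to time t; and, because three states have exactly $2^3 - 1 = 7$ nonempty subsets, the reading of the seven combinations as those subsets, each combined by summation. All function names are hypothetical.

```python
from itertools import combinations

SIGMA, RHO, BETA = 10.0, 28.0, 8.0 / 3.0  # classic Lorenz parameters (assumed)


def lorenz_derivative(state):
    """Right-hand side of the Lorenz equations."""
    x, y, z = state
    return (SIGMA * (y - x), x * (RHO - z) - y, x * y - BETA * z)


def rk4_step(state, dt):
    """One fourth-order Runge-Kutta step of size dt."""
    k1 = lorenz_derivative(state)
    k2 = lorenz_derivative(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = lorenz_derivative(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = lorenz_derivative(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(s + dt / 6.0 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def lorenz_states(t, dt=0.01, initial=(1.0, 1.0, 1.0)):
    """Chaos states (x, y, z) at time t, starting from an assumed initial state."""
    state = initial
    for _ in range(int(round(t / dt))):
        state = rk4_step(state, dt)
    return state


# The 2**3 - 1 = 7 nonempty subsets of the state indices {0: x, 1: y, 2: z},
# listed in a hypothetical fixed order so that index q selects one of them.
COMBINATIONS = [c for r in (1, 2, 3) for c in combinations(range(3), r)]


def select_combination(t):
    """Combination chosen by the remainder q = t mod 7 (0 <= q < 7)."""
    return COMBINATIONS[t % 7]


def chaos_parameter(t, states):
    """Hypothetical X(t): sum of the chaos states in the selected combination."""
    return sum(states[i] for i in select_combination(t))


t_u = 20  # integer timestamp (illustrative)
states = lorenz_states(t_u)
print(t_u % 7, select_combination(t_u))  # 6 (0, 1, 2)
print(chaos_parameter(t_u, states))
```

The HA can repeat exactly the same computation from the received $t_U$, which is what allows it to reconstruct $X(t_U)$ in the authentication phase.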

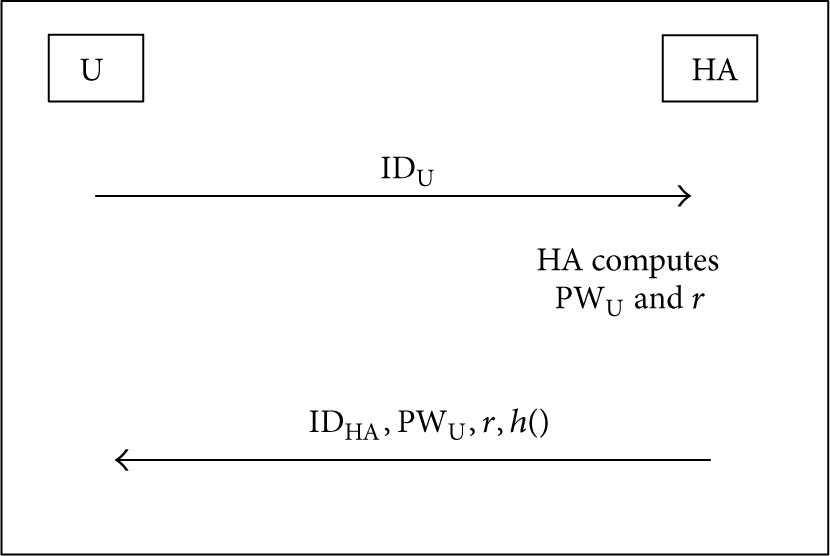

3.1. Registration Phase

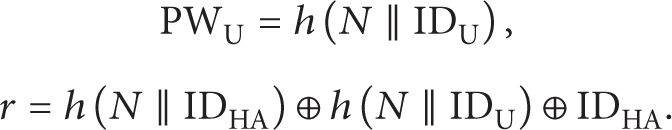

Figure 12 shows the communication in the user registration phase. A new user must submit a user identity $ID_U$ when registering with a home agent (HA). The HA computes r and $PW_U$ as follows:

The registration phase of our proposed scheme.

3.2. Authentication Phase

The user calculates n with a hash function over r, $PW_U$, and an encryption message $X(t_U)$ generated at timestamp $t_U$. $X(t_U)$ is one of the chaos state combinations; the remainder of the timestamp divided by 7 determines which combination is used in the scheme.

n is an authentication message that hides the user's real identity to prevent an attacker from stealing the user ID:

$$n = h(r \oplus PW_U \oplus X(t_U)) \oplus ID_U$$

An attacker who obtains the authentication message would have difficulty guessing the encryption parameter $X(t_U)$ needed to recover the actual user ID. Therefore, the proposed encryption scheme is more secure than conventional schemes.
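The masking relation $n = h(r \oplus PW_U \oplus X(t_U)) \oplus ID_U$ and its inversion by the HA can be sketched in Python. The hash function h is not specified here, so SHA-256 is assumed; r and $PW_U$ are assumed to be 32-byte values, $X(t_U)$ is encoded as a zero-padded 8-byte IEEE 754 double, and $ID_U$ is zero-padded to 32 bytes. These encodings and all helper names are hypothetical.

```python
import hashlib
import struct

BLOCK = 32  # SHA-256 digest size in bytes


def xor_bytes(*parts: bytes) -> bytes:
    """Bytewise XOR of equally long byte strings."""
    if len({len(p) for p in parts}) != 1:
        raise ValueError("all operands must have the same length")
    out = bytearray(len(parts[0]))
    for part in parts:
        for i, byte in enumerate(part):
            out[i] ^= byte
    return bytes(out)


def encode_chaos_value(x: float) -> bytes:
    """Hypothetical encoding of X(t_U): big-endian double, zero-padded to BLOCK bytes."""
    return struct.pack(">d", x).ljust(BLOCK, b"\x00")


def mask_key(r: bytes, pw: bytes, x_tu: float) -> bytes:
    """h(r XOR PW_U XOR X(t_U)) with h assumed to be SHA-256."""
    return hashlib.sha256(xor_bytes(r, pw, encode_chaos_value(x_tu))).digest()


def mask_identity(identity: bytes, r: bytes, pw: bytes, x_tu: float) -> bytes:
    """User side: n = h(r XOR PW_U XOR X(t_U)) XOR ID_U."""
    if len(identity) > BLOCK:
        raise ValueError("identity longer than the hash output")
    return xor_bytes(mask_key(r, pw, x_tu), identity.ljust(BLOCK, b"\x00"))


def unmask_identity(n: bytes, r: bytes, pw: bytes, x_tu: float) -> bytes:
    """HA side: ID_U = n XOR h(r XOR PW_U XOR X(t_U)); padding zeros are stripped."""
    return xor_bytes(mask_key(r, pw, x_tu), n).rstrip(b"\x00")


r = hashlib.sha256(b"example r").digest()  # illustrative 32-byte values
pw = hashlib.sha256(b"example PW_U").digest()
n = mask_identity(b"user-042", r, pw, x_tu=12.5)
print(unmask_identity(n, r, pw, x_tu=12.5))                 # b'user-042'
print(unmask_identity(n, r, pw, x_tu=12.6) == b"user-042")  # False
```

XOR with the hash output is its own inverse, so the HA recovers $ID_U$ only when it reproduces the same $X(t_U)$; any other value yields an unrelated byte string.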

Figure 13 shows the mutual authentication phase of the proposed scheme. The user, the foreign agent (FA), and the HA communicate using the following procedure.

The mutual authentication phase of our proposed scheme.

Step 1 (user sends messages to FA). In this step, the user sends n, $ID_{HA}$, $t_U$, and C to the FA, where

Step 2 (FA sends messages to HA). After receiving the messages from the user, the FA computes an encrypted message with its own secret (private) key, $E^{FA}_{S}(h(b, n, C, t_U, Cert_{FA}))$, where b is a random number generated by the FA.

FA → HA: b, n, C, $t_U$, $t_{FA}$, $E^{FA}_{S}(h(b, n, C, t_U, Cert_{FA}))$, $Cert_{FA}$.

Step 3 (HA verifies $ID_U$ for the user). After receiving all messages from the FA, the HA validates $t_U$. Let $t'_{HA}$ be the time at which the HA receives the messages from the FA, and let Δt be a time threshold used to decide whether to accept the messages, which prevents replay attacks. If $t'_{HA} - t_U \le \Delta t$, the HA accepts all messages and proceeds to the next step; otherwise, it rejects the connection. To obtain $ID_U$, the HA uses the relation $n = h(r \oplus PW_U \oplus X(t_U)) \oplus ID_U$, which first requires it to reconstruct $X(t_U)$ from the received $t_U$. First, the HA uses $t_U$ to calculate all the chaos states. Second, it computes the remainder $t_U \bmod 7$, which yields $X(t_U)$. Finally, it calculates $ID_U$ using $X(t_U)$.

After verifying $ID_U$, the HA computes the encrypted message W using the following equation:

HA → FA: C, W, $E^{HA}_{S}(h(b, C, h(W), Cert_{HA}))$, $Cert_{HA}$, $t_{HA}$.
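The timestamp test in Step 3 can be sketched as a one-line predicate. The time units and the threshold value in the example are hypothetical.

```python
def accept_timestamp(t_u: float, t_ha_received: float, delta_t: float) -> bool:
    """HA-side freshness test from Step 3: accept only if t'_HA - t_U <= delta_t."""
    return t_ha_received - t_u <= delta_t


DELTA_T = 5.0  # hypothetical threshold in seconds

# A fresh login message sent at t_U = 1000 s and received 2 s later is accepted.
print(accept_timestamp(1000.0, 1002.0, DELTA_T))  # True
# The same message replayed by an attacker a minute later is rejected.
print(accept_timestamp(1000.0, 1060.0, DELTA_T))  # False
```

Like the rule it mirrors, the predicate accepts any message whose timestamp is not older than Δt, including one stamped later than the HA's own clock.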

Step 4 (FA calculates session key). The FA uses its secret key to decrypt W and obtains $h(h(N \| ID_U))$, $p_0$, and p. The FA can then calculate the session key sk between the FA and U as follows:

Step 5 (FA sends messages back to user). After using its session key to decrypt $(TCert_U \| h(p_0 \| p))_{sk}$, the FA obtains $TCert_U$. U then sends $(p_0 \| TCert_U \| \text{Other Info})_{sk}$ to the FA for authentication.

4. Analysis and Experimental Results

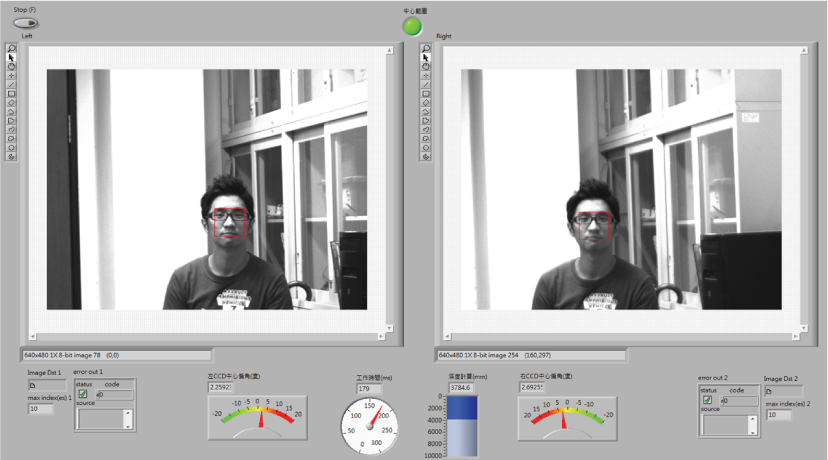

After the image information was simplified during image preprocessing, the sample image was saved, and the real-time image was used for matching. For example, 10 different faces can be sampled to increase matching accuracy. The algorithm is then applied to find the sample with the highest score, and the image of that sample serves as the matching reference. The required regions of the image are extracted to detect and track the target. Figure 14 shows the overall dual-CCD visual robot mobile platform used in this study. The robot can carry up to 60 kg, capture images with its dual-CCD cameras, and obtain results through the algorithms, as shown in Figure 15.

Visual robot platform with dual CCD.

Left camera image (a) and right camera image (b).

The platform normally moves at 20 cm/s and reaches a maximum speed of 45 cm/s. It begins detection and tracking immediately when an object suddenly approaches. However, before computation, the platform requires either a previously built sample-matching database or an environment image saved immediately beforehand.

Time is used as a password in our communication messages, and the timestamp also provides verification against replay attacks. Suppose an attacker intercepts a valid login message (n, $ID_{HA}$, $t_U$, and C) and tries to log in to the HA by replaying it. The timestamp verification at the HA rejects this connection because $t'_{HA} - t_U > \Delta t$, where $t'_{HA}$ is the HA's system time when it receives the replayed message. Thus, the timestamp in the scheme prevents replay attacks. In the proposed scheme, the chaos states are used as secret messages in communications. The state combination used is determined by the remainder of the timestamp modulo 7, so the secret message is not fixed and takes many different combinations. Even if attackers capture the timestamp and compute the values of the three states, they cannot decrypt the communication message without knowing which combination is used, and this is difficult for them to determine or guess. Thus, the scheme offers better security.

Finally, Figures 16, 17, and 18 illustrate the real-time object tracking experiment. The theory and steps described above formed the basis of the experiment, and the sample and the tracked target were used together as the target in the tests. The tests were carried out with the CCD cameras pointing to the center, left, and right, and the angle and range data of the target were observed to determine whether the platform operated normally.

Dual-CCD camera pointing to center.

Dual-CCD camera pointing to left.

Dual-CCD camera pointing to right.

5. Conclusions

In this research, different algorithms are applied to images to detect and track targets. A human-computer interface promotes understanding of the parameters used in the system. Most sensors must be replaced to achieve environmental perception ability and to reduce the complexity of the system. The effect of the environment must be eliminated, and the recognition rate of object tracking can be used to improve the performance of these sensors. Image tracking development and performance are also improved by automatically adjusting the focal length or aperture of the CCD camera lens. In addition, an encryption message is used to ensure good anonymity in every phase. The proposed scheme benefits from the advantages of chaos systems: the response of a chaos system is highly sensitive to its initial values, and its trajectory is unpredictable when used to encrypt an authentication message. The concepts of a one-time password and a timestamp are also incorporated in the scheme. Therefore, the scheme provides better security for wireless communications than previous schemes.

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.


Acknowledgment

This research was supported by the National Science Council, Taiwan, under Grant NSC 102-2221-E-152-007.