Abstract

Many studies have investigated the management of data delivered over sensor networks and attempted to standardize their relations. Sensor data come from numerous tangible and intangible sources, and existing work has focused on the integration and management of the sensor data itself. The data should be interpreted according to the sensor environment and related objects, even though the data type, and even the value, is exactly the same. This means that the sensor data should have semantic connections with all objects, and so a knowledge base that covers all domains should be constructed. In this paper, we suggest a method of domain terminology collection based on Wikipedia category information in order to prepare seed data for such knowledge bases. However, Wikipedia has two weaknesses, namely, loops and unreasonable generalizations in the category structure. To overcome these weaknesses, we utilize a horizontal bootstrapping method for category searches and domain-term collection. Both the category-article and article-link relations defined in Wikipedia are employed as terminology indicators, and we use a new measure to calculate the similarity between categories. By evaluating various aspects of the proposed approach, we show that it outperforms the baseline method, having wider coverage and higher precision. The collected domain terminologies can assist the construction of domain knowledge bases for the semantic interpretation of sensor data.

1. Introduction

Many studies have considered the integrated management of data received from sensor networks [1, 2]. In particular, some significant research has focused on ontology-based approaches for developing standardized and semantic relations between the data [3–6]. The data collected from sensors represents various tangible and intangible objects, such as temperature, acceleration, GPS, light, barometric pressure, magnetic degree, and acoustic measurements. Existing research deals with the integration of the sensor data itself, the definition of standard schemes, and management applications for understanding the sensor data. However, the data could be interpreted differently according to the environment and which objects are related to the sensor, even though the data type, and even its value, may be the same. For example, two 1°C measurements from a refrigerator and an aquarium will have very different interpretations. To make appropriate decisions in different situations, the conceptual idea of the sensor network domain should be related to other concepts in different domains. To attack this issue, knowledge bases incorporating ontology, taxonomy, folksonomy, or thesaurus information should first be constructed, allowing reliable connections to be formed between concepts of the sensor network and concepts of other domain knowledge bases. The fundamental step in constructing knowledge bases is to collect domain terminologies, and our research deals with a domain-term collection method.

Domain-terms, which are the main components of the knowledge, are words and compound words that have specific meanings in a specific context (definition of the term “Terminology”: http://en.wikipedia.org/wiki/Terminology). Constructing knowledge bases manually requires considerable labor, cost, and time and can sometimes result in conflict [7, 8]. Therefore, the automatic construction of a body of knowledge by extracting domain-terms from various sources is a popular area of research [8–15]. Nowadays, Wikipedia (WP) and similar repositories are widely employed as information sources [7, 16, 17]. WP contains diverse forms to explain concepts (hereafter, we use concepts, terms, and article as the same meaning), such as abstract information (specific and long definitions), tabular information, the main article content, article links, and category information. The term “article” is generally used in WP, but it also means “title of article.” In this paper, we use “article” and “term” interchangeably. Moreover, WP provides highly reliable and widely used content, because it is based on semantic information from the collective intelligence of contributors worldwide. However, WP has a couple of weaknesses in its category structure (we detail these with examples in Section 2). One is that it has loops in the category hierarchy, and the other is that a significant number of categories are unreasonably generalized. These weaknesses were similarly identified in previous work [7]. General methods of extracting domain-terms from knowledge, such as for Princeton WordNet [18], use a vertical search (top-down or bottom-up) that chooses a representative term (e.g., science) covering a field of interest and extracts as domain-terms all of the terms (e.g., natural science, life science, biology, botany, ecology, genetic science, morphology, anatomy, biomedical science, medical science, information science, and natural language processing) contained under the representative term [19]. Because of the weaknesses identified above, such methods cannot be applied to WP. This research proposes a horizontal method to resolve the difficulties of a vertical search. The method requires one domain category as input (multiple categories are possible, but we consider only the single case in this paper). The entry category contains many articles. We call these domain articles, and each domain article is involved in one or more categories. We consider the categories connected to the domain articles as candidate categories that can be deeply related to the entry category. Then, our method measures the similarity between the domain category and the candidate category. If the similarity matches or exceeds a predetermined threshold, the candidate and its articles are added to the domain category group and the domain article group, respectively. The method generates a similar category group and a domain terminology group through iterative processes and evaluates its category grouping and term collection performance.

The remainder of this paper is organized as follows. Section 2 describes our motivation for this research. Section 3 proposes the domain-term collection method through domain category grouping. Section 4 presents experimental results and evaluates the performance of the proposed approach, and finally, in Section 5, we summarize our research.

2. Motivation

Many applications employ various WP components for semantic information processing. WP is an agglomeration of knowledge that has been cultivated by contributors from diverse fields; thus, its content has wide coverage and high reliability. In particular, the hierarchical structure of categories and the semantic networks between articles appear similar to the human knowledge system. These strengths allow WP to be widely used; however, unfortunately, additional processes are needed. We indicate a couple of weaknesses of WP in this section. Box 1 shows a case of loop relations in the hierarchical structure, which represents one of the weaknesses.

Natural language processing → Computational linguistics → Natural language and computing → Human-computer interaction → Artificial intelligence → Futurology → Social change → Social philosophy → Human sciences → Interdisciplinary fields → Science → Knowledge → → Mental content → Consciousness → Philosophy of mind → Conceptions of self →

The category “Natural language processing” has “Concepts” as a supercategory, and each “Concept” has itself as one of the superconcepts a few steps later. The WP hierarchy contains many loop cases, and this poses difficulties during a vertical search. Even if this was resolved programmatically, there would be another obstacle, as shown in Box 2.

→ ⋯ → Artificial intelligence → ⋯ → Human sciences → Social sciences → ⋯ → Science → Knowledge → Concepts → Mental content → Consciousness → metaphysics → ⋯ → Form → Ontology → Reality → ⋯ → Scientific observation → Data collection → ⋯ → Probability → Philosophical logic → ⋯ → Neo-Marxism → Reasoning → Intelligence → ⋯ → organizations → Non-profit organizations by beneficiaries → Non-profit organizations → ⋯ → Constitutions → Legal documents → Documents → ⋯ → Crowd psychology →

Box 2 enumerates the supercategories of “Natural language processing” after its loop cases have been removed. The initial category is a computer science technology, but this soon becomes connected to “Mind,” “Marxism,” “Humans,” “Taxonomy,” “Classification systems,” “Libraries,” “Collective intelligence,” “Internet,” “World,” and “People.” Some of the connections are appropriate, but others suffer from excessive generalization between categories. We call this inappropriate generalization, and it causes undesirable categories and terms to be collected in a domain category during a vertical search. Therefore, we propose a method of searching horizontally for related categories.

This research considers a category of interest as the entry domain category and measures the similarity of article intersections between this domain and the other, candidate category. If the similarity is equal to or exceeds a predetermined threshold, the candidate is used as an element of the domain set. Some well-known similarity measures for the degree of article intersection, such as the Jaccard similarity coefficient (JSC) or the Dice coefficient (DC), have significant limitations, as shown in Table 1.

Similarity issues for bootstrapping methods.

Each case consists of two categories, and we wish to determine whether the candidate can be added to the domain set. In the first case, the categories have the same number of articles, 50 of which are shared as an intersection set. The similarity values are 0.333 and 0.5 according to the JSC and the DC, respectively. In the second case, almost all of the candidate articles are included in the domain category, but the similarities are lower than those of the first case. We believe that the second case should have higher similarity values, because the initial domain category was chosen by the user, which means that the domain has our trust. However, existing methods cannot provide a suitable measure of similarity. In this paper, we suggest simple new measurements to mitigate this limitation.

3. Domain-Term Extraction Based on Category Grouping

Articles included in the same WP category describe similar content. However, WP assigns many similar categories (e.g., “Word sense disambiguation,” “Ontology learning,” and “Data mining”) to a single category (e.g., “Natural language processing”). To collect domain-terms that have wide coverage, we suggest a bootstrapping method that remedies the weaknesses of WP and improves on the existing similarity measurements mentioned in the previous section. We now describe these processes in detail using real examples.

3.1. System Flow

The proposed method takes one category, which the user selects as an entry (trigger), and follows the flowchart shown in Figure 1. Starting from the entry category, we determine similar categories through a horizontal search. In the search, articles included in the entry act as “clues” for measuring the similarity and “bridges” for preparing the next candidate category. Figure 2 illustrates an example of a category-article network. If the category “Natural language processing” is given as the entry, the method finds articles for similarity measurement and prepares the categories of each article for the next candidates. This means that “Information science,” “Knowledge representation,” “Machine learning,” “Artificial intelligence applications,” “Data mining,” and so forth are processed individually as candidate categories. We now explain the process shown in Figure 1 using similar examples.

Flowchart for domain category grouping and domain-term selection.

Example of a category-article network.

3.2. Domain-Term Selection through Category Grouping (Bootstrapping Method)

To group similar categories, we choose a horizontal category search and propose new similarity measurements that enable the group to be enriched. The bootstrapping process proceeds as follows.

An initial domain category (DC) consists of a user-selected category: DC = {user selected category}. For example, DC = {Natural language processing}. The length of DC increases throughout the iterative process. The domain articles (DA) of one category are collected. At first, DA consists of the articles of the entry, but it gradually becomes enriched with articles from new domain categories. Explicitly, DA = {(art, dist, count, dw)

i

, 1 =< i =< n}, where art, dist, count, and dw denote an article, a distance from an entry category, an accumulated (overlapped) count, and a domain-term weight, respectively. The initial elements of DA have values of 1, 1, and 1.0 for dist, count, and weight, respectively. For example, DA = {(Concept mining, 1, 1, 1.0), (Information retrieval, 1, 1, 1.0), (Language guessing, 1, 1, 1.0), (Stemming, 1, 1, 1.0), (Content determination, 1, 1, 1.0), …}. There are two options to choose whether an article-link network is used in the similarity measurements. We explain the options using Figure 3 and Table 2. Figure 3 shows the network between the categories “Natural language processing” and “Data mining,” whereas Table 2 defines the network types.

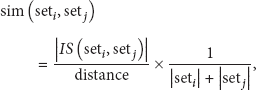

The first option considers only category-article networks, such as {Content determination, Information retrieval, Languageware, Concept mining, Document classification, Text mining, Automatic summarization, String kernel, Sentic computing, …} for “Natural language processing.” Type 1 in Table 2 is related to this option. The second option uses more complex networks that utilize the category-article network as well as article links. Types 2 and 3 in Table 2 represent this option. If the first option is chosen, the method goes to Step 4; otherwise, it goes to Step 11. Candidate categories (CC) are collected using one article of DA. If DC already contains a candidate, it is not included in CC. Explicitly, To prepare clues that indicate suitable categories, a set of candidate articles (CA) of one candidate category is formed. For example, CA (Data mining) = {Extension neural network, Big data, Data classification (business intelligence), Document classification, Web mining, Text mining, Concept mining,…}. If there is an intersection article, then an intersection set IS is constructed to measure the similarity: To eliminate the limitation described in Section 2, we propose a new similarity measurement:

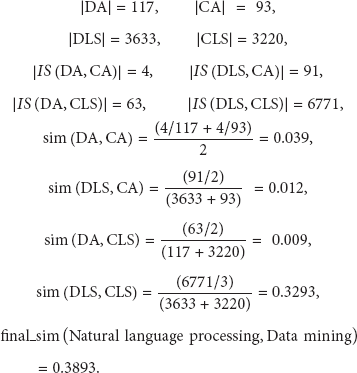

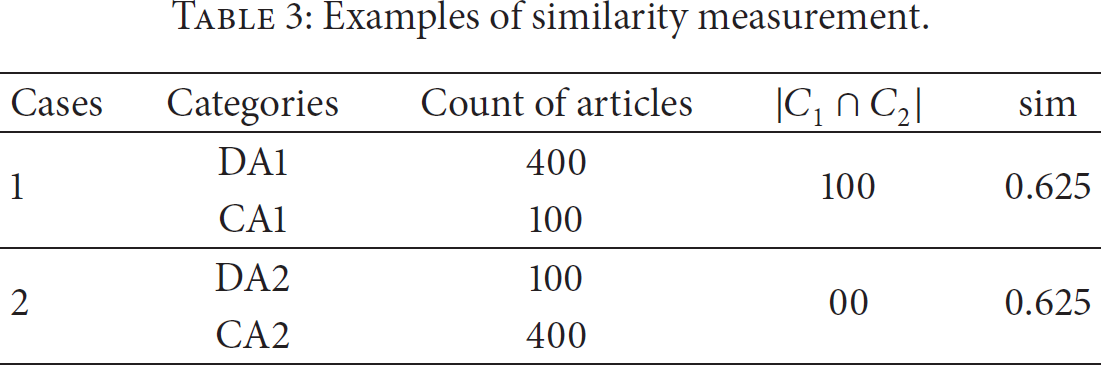

where, basically, we assign a value of 0.5 to α. Based on (1), we can calculate the similarities of Cases 1 and 2 in Table 1 to obtain values of 0.5 and 0.54, respectively. However, we must consider an additional constraint in the bootstrapping method. Table 3 shows another example. Based on (1), we find that both cases have the same similarity value, as shown in Table 3. Even so, Case 2 is inappropriate, because the coverage of DA is too narrow. This may cause the generalization problem. Thus, before calculating similarities in the bootstrapping method, the similarity constraint,

should be satisfied. According to this constraint and (1), the similarity between “Natural language processing” and “Data mining” is calculated as follows: If the similarity exceeds a predetermined threshold, go to Step 8; otherwise, we skip Step 8 and proceed to Step 9. If the candidate category has a similarity that is greater than the threshold, the system considers the candidate to be the domain and inserts the candidate and its articles into DC and DA. Here, new articles are accompanied by supplementary values of dist, count, and dw. Specifically, dist is the category distance from the entry category (in the case of “Data mining,” dist is 2), count is 1, and dw is the similarity calculated in Step 6. If DA already contains an article, the original supplementary values are increased by adding the new ones. These values are used later for domain-term selection. If there is at least one element in CC, we return to Step 4 for the next candidate category; otherwise, proceed to Step 10. If there is at least one element in DA, we return to Step 2 for the new CC of the next domain article; otherwise, proceed to Step 19. To use the links of articles as additional clues for the similarity measurement, a domain link set (DLS) is collected: DLS(DA) = {link_dom

j

, 1 =< j =< m}. For example, DLS = {Information, Metadata, Relational database, World Wide Web, Data (computing), Document retrieval, …}. This step is the same as Step 4. This step is the same as Step 5. Construct a candidate link set (CLS) with links from CA. Explicitly, CLS(CA ( If the similarity constraint is satisfied, (1) is applied to determine the category-article similarity. We use additional similarity measurements for article-link networks. The network can have different degrees of relatedness according to the network type (see Table 2). If two categories have complex, close connections, the similarity should be greater because of common features in the neighborhood. To apply both characteristics, we propose a further similarity measure:

where distance is the number of articles that exist on the network (this is different from dist in DA). According to (3), we can calculate sim (DLS, CA), sim (DA, CLS), and sim (DLS, CLS), which have distances of 2, 2, and 3, respectively. The final similarity (final_sim) between DA and CA is determined by summing sim (DA, CA) from (1) with sim (DLS, CA), sim (DA, CLS), and sim(DLS, CLS) from (3). The similarity between “Natural language processing” and “Data mining” can thus be calculated as follows:

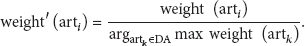

If the similarity exceeds the predetermined threshold, go to Step If the similarity exceeds the threshold, the system enriches DC, DA, and DLS. This step is similar to Step 8. If there is at least one element in CC, we return to Step Output the DC and DA acquired through the bootstrapping process. Terminate the bootstrapping process and evaluate DC and DA, which are increased in Step 8 or 17. For DA, the evaluations are divided into three types by supplementary value (dist, count, and dw). In the case of domain weight, we use values normalized according to

Network types and examples: C denotes a category; A, an article; and L, a link.

Bold: represents the category concepts connected with super and sub relations but inappropriate generalization in hierarchical structure.

Examples of similarity measurement.

Network structure between the categories “Natural language processing” and “Data mining.” Solid lines and dotted lines represent the category-article network and article-link network, respectively, whereas (C) denotes a category. Here, the link is a connection between WP articles.

We have described all of the bootstrapping steps for domain-term selection by grouping similar categories. In the next section, a few aspects of performance are evaluated.

4. Experimental Evaluations

This section considers the evaluation of DC and DA. One objective of our research is to select as many domain-terms as possible, for which we proposed the bootstrapping method for similar category grouping. In the process, DC and DA become enriched with categories and articles, respectively, and each article has supplementary values of dist, count, and dw.

To evaluate the quality of DC and DA, we used an article-category dataset and a Pagelinks dataset that are included among the WP components in DBpedia (DBpedia is a crowd-sourced community effort to extract structured information from Wikipedia and make this information available on the Web: http://dbpedia.org/About) version 3.7. To utilize the WP networks, we implemented two versions of our system, referred to as new similarity (NS) and new similarity with links (NSL). We varied the threshold of each system from 0.1 to 0.9. Moreover, we chose entry sets of 40 categories (each set has one category) from fields of computer science, such as “Natural language processing,” “Speech recognition,” and “Semantic Web.” The results are compared with those of a baseline method that employs the DC similarity measure. Table 4 shows some of the similar categories collected by NSL for the entry category “Semantic Web” with a threshold of 0.2.

Domain categories collected for “Semantic Web” on NLS with threshold value 0.2.

RE: relevance evaluation.

We invited domain specialists to examine the results, and each collection was manually checked by each evaluator. The RE field in Table 4 shows the actual checked results, where values of 1 and 0 were ascribed by the evaluators for relevance and irrelevance, respectively. Tables 5 and 6 present summaries of the DC evaluations.

DC evaluations with baseline and NS.

DC evaluations with NSL.

There was no improvement for thresholds greater than 0.6, and the bootstrapping was incomplete for a threshold of 0.1. Therefore, we present results for threshold values from 0.2 to 0.6. In the experiments, the baseline attained an extension rate of only 1.18 (40 categories extended by 7 categories), even though its precision was 100%. The aim of the information processing is to reduce the time taken to accomplish certain objectives, which implies that the information system should provide varied results. In this respect, we do not expect the baseline results to be helpful. However, it is apparent that NSL provides wide extension and high precision. The maximum extension rate was 24.45 with 799 appropriate categories for a threshold of 0.2. The minimum precision was around 84%, when the threshold was 0.3.

In addition to evaluating DC, we evaluated DA with the NSL results for a threshold of 0.2. To examine the influence of the distance, count, and domain weight, we analyzed the results according to each factor. Six DAs were selected at random, with a total of 1,769 articles (terms). Box 3 enumerates a part of collected articles for a domain “Semantic Web.” And Tables 7, 8, and 9 show the evaluation results with respect to distance, count, and weight.

DA evaluations based on distance, including articles within the indicated distance.

DA evaluations based on count (overlapped).

DA evaluations based on normalized weight.

XHTML + RDFa, Bath Profile, Sidecar file, COinS, Metadata publishing, MARC standards, WizFolio, Qiqqa, ISO-TimeML, TimeML, Metadata Authority Description Schema, Bookends (software), RIS (file_format), Metadata Object Description Schema, EndNote, Refer (software), ISO 2709, BibTeX, XML, S5 (file_format), Semantic HTML, Simple HTML Ontology Extensions, Opera Show Format, XOXO, XHTML Friends Network, StrixDB, Graph Style Sheets, TriX (syntax), TriG (syntax), RDF feed, Redland RDF Application Framework, RDF query language, Turtle (syntax), RDFLib, Notation3, SPARQL, D3web, Artificial architecture, NetWeaver Developer, Knowledge engineer, Frame language, …

The basic performance of the domain-term selection attained precision of 70.9%. As expected, the precision was inversely proportional to the distance; however, a distance of 4 produced almost all of the unrelated articles. The weight and count could be used as important criteria to select domain-terms; we found that the weight returned more refined results than the count (the weight returned 755 appropriate terms with 97.2% precision at a threshold of 0.3). At short distances, there are many names of people and organizations, such as “Squarespace,” “Rackspace Cloud,” and “Nsite Software (Platform as a Service).” These names were selected by the bootstrapping because their associated categories (e.g., “Cloud platforms,” “Cloud storage,” and “Cloud infrastructure”) were similar to the entry (e.g., “Cloud computing”). This situation is not caused by our method, but by the definition of the article-category relations of WP. We believe that this can be resolved by processing content (abstracts) or tabular information in the future.

5. Conclusions and Future Work

This paper has proposed a method of domain-term collection through a bootstrapping process to assist the semantic interpretation of data from sensor networks. To achieve this, we identified weaknesses in the WP category hierarchy (i.e., loops and inappropriate generalizations), and chose a horizontal, rather than vertical, category search. We proposed new semantic similarity measurements and a similarity constraint to surpass existing methods. Moreover, we employed category-article networks and article-link networks to elicit information for the category similarity measurement. In performance evaluations, our category grouping based on NSL yielded the greatest number of proper results. In terms of domain-term selection, we confirmed that the results obtained with normalized weights had the best precision and extension rate. The distance-based metric had no positive influence on our research. When the distance was greater than three, almost all of the terms were unrelated. However, we believe that the collected domain terminologies can assist the construction of domain knowledge bases for the semantic interpretation of sensor data.

WP has additional weaknesses to those mentioned in this paper, especially in the category-article relation. For example, the term “Paco Nathan” is a personal name that has “Natural language processing” as one of its categories. The relation between the two, that is, “Paco Nathan” has expertise in “Natural language processing,” causes noise and negatively influences semantic information processing. We think that this problem can be solved in future work by processing additional WP components, such as abstract or tabular information. Moreover, our research employed only the out-links of WP articles. If the in-links were considered, we expect that the results would be more significant, with wider coverage of domain-terms and higher relevance.

Footnotes

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.