Abstract

Omnidirectional images generally have nonlinear distortion in radial direction. Unfortunately, traditional algorithms such as scale-invariant feature transform (SIFT) and Descriptor-Nets (D-Nets) do not work well in matching omnidirectional images just because they are incapable of dealing with the distortion. In order to solve this problem, a new voting algorithm is proposed based on the spherical model and the D-Nets algorithm. Because the spherical-based keypoint descriptor contains the distortion information of omnidirectional images, the proposed matching algorithm is invariant to distortion. Keypoint matching experiments are performed on three pairs of omnidirectional images, and comparison is made among the proposed algorithm, the SIFT and the D-Nets. The result shows that the proposed algorithm is more robust and more precise than the SIFT, and the D-Nets in matching omnidirectional images. Comparing with the SIFT and the D-Nets, the proposed algorithm has two main advantages: (a) there are more real matching keypoints; (b) the coverage range of the matching keypoints is wider, including the seriously distorted areas.

1. Introduction

Keypoints matching between different images of the same scene is generally required by computer vision tasks such as target tracking, image retrieval, and 3D reconstruction. The SIFT based on perspective projection has been the most popular method in this field [1]. Nowadays, omnidirectional cameras such as the fisheye-lens cameras are widely used in panoramic photography, visual surveillance, robot vision, and virtual reality for the purpose of 180° and even 360° visual field coverage [2]. However, the SIFT algorithm does not work well in matching omnidirectional images, because large nonlinear distortion always exists in them, especially in their peripheries. Therefore, the problem of matching omnidirectional images has absorbed the attention of many researchers in these years.

Although many investigations have been made recently, the keypoint matching between omnidirectional images still remains an open problem, especially in the cases of images with large distortion [3]. Daniilidis et al. proposed a framework for filtering and flow computation in catadioptric images [4]. Castle et al. combined the methods of point-based recognition and localization with those of point-based monocular visual SLAM (simultaneous localization and mapping) to identify and recover the 3D geometry of objects [5]. Bülow put forth an equivalent Gaussian smoothing method based on spherical diffusion for 3D surface smoothing [6]. Using this method, Hansen et al. modified the SIFT algorithm and developed two new spherical-based descriptors: parabolic SIFT (pSIFT) and spherical SIFT (sSIFT) [7]. However, these two descriptors are only suitable for images with small distortion. If the images have large distortion, large-scale interpolation will be needed, which will inevitably lower the keypoint matching precision. In addition, the pSIFT and sSIFT methods may produce false keypoints because of the interpolation artifacts. To avoid interpolation, Lourenço et al. proposed the SIFT with radial distortion (sRD-SIFT) algorithm [8, 9] based on the adaptive Gaussian filter and the compensatory gradient operator, by referring to the distortion model of Fitzgibbon [10] and the SIFT descriptor. Although the sRD-SIFT algorithm improves the matching effect of images with small distortion, it is worse than SIFT in the cases of large distortion. For example, the matching result of the sRD-SIFT is unsatisfactory especially in the peripheries of omnidirectional images.

In an omnidirectional camera system, a line in the space is projected onto the image plane as a curve. This means that a curve going through two image points may correspond to a line in the space. If there is a certain way to determine a particular curve by two image points, one can construct a new descriptor to describe this curve. By using such a descriptor, the curves can be matched through their endpoints. According to this idea, the present paper proposes a spherical-based keypoint descriptor to establish a matching algorithm for omnidirectional images. The spherical model and the polynomial model are combined together to define a curve with two keypoints at first. Then, the pixel values on the curve are employed to generate a descriptor. Finally, a new keypoint matching algorithm for omnidirectional images is proposed. The proposed algorithm has three characteristics. Firstly, more precise matching can be achieved, because the distortion information is taken into consideration by means of the particular curve. Secondly, both the local information and the global information are used in keypoint matching simultaneously. Thirdly, the matching is performed directly on the original images, and so the interpolation is avoided.

The rest of the paper is organized as follows. Section 2 briefly reviews the spherical model for omnidirectional cameras and constructs the image of a space line through this model. The descriptor for a curve is introduced in Section 3. Section 4 presents a voting scheme for the keypoint matching of curves. Section 5 shows the matching results of our algorithm and compares it with two other algorithms. Finally, some conclusions are drawn in Section 6.

2. Spherical Model

2.1. Projection of a Point

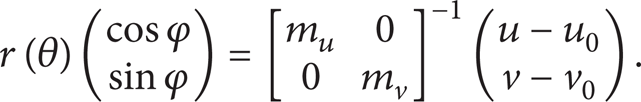

As shown in Figure 1, the projection process of the spherical model for an omnidirectional camera can be divided into two steps [11, 12]. Here, we take a point P(X, Y, Z) in the space to demonstrate the two steps. In the first step, it is linearly projected along the incident ray OP to a point

where r(θ) is the radial or polar distance from the image point to the origin of the world coordinate system, k1 is the focal length, and k2 is the distortion coefficient. u and v are the image coordinates, while u0 and v0 are the pixel coordinates of the principal point. φ is the angle between the x-axis and the radial line passing the image point p. m u and m v are the two scale factors specifying the number of pixels per unit distance in the horizontal and vertical directions, which should be known beforehand.

The projection of a point.

As shown in Figure 1, the mapping between the point P in the space and the image point p is reversible. The reversion can be done by using (2).

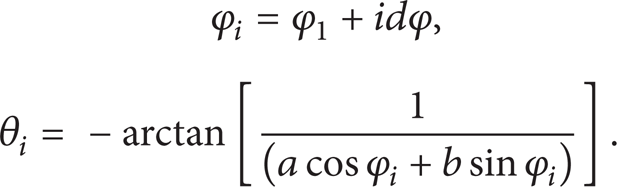

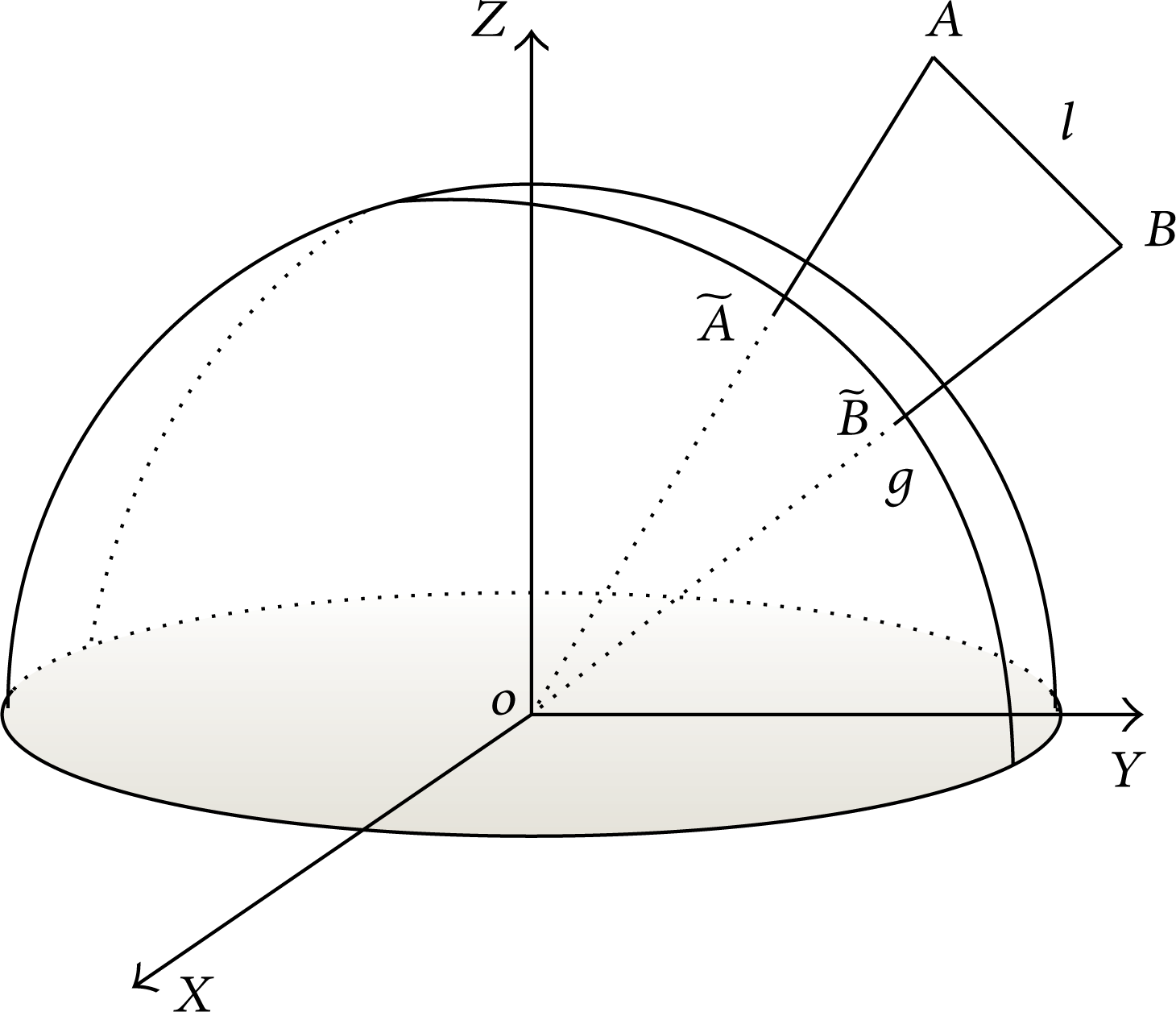

2.2. Projection of a Line

By extending the projection model of the point given in (2), one can obtain the projection of a line in the space [13]. Consider the line AB shown in Figure 2, which can be formulated as

where D = [D x , D y , D z ] T is the direction vector of AB and t is the distance from point B.

The projection of a line.

As a point begins moving from point B along the line AB, the angle θ in (1) will be a function of the distance t. Therefore, (2) can be extended as the following collinear model to formulate the image curve on the image plane [13]:

It should be noted that the unit sphere is centered at the origin of the world coordinate system OXYZ in Figure 2.

3. The New Keypoint Descriptor

3.1. Determination of the Image Curve

Similar to the case of a point, the projection of a line also contains two steps. At first, the line is projected as an arc segment of a unit circle on the unit sphere, and then the arc segment is further projected as a curve on the image plane. For example, consider the line l shown in Figure 2. At first, its two end points A and B are mapped to the unit circle g, and then the arc segment

It follows from (2) that the inverse mapping from an image point p(u, v) to its corresponding sphere point

By moving the origin of the image coordinate system to the image center (u0, v0) T , the image point p(x, y) T can be projected back to the sphere (θ, φ) T , where θ and φ satisfy

Projecting the two points a(x1, y1) and b(x2, y2) in the image plane back to the sphere (θ, φ)

T

, one gets two sphere points

where

The image of an arbitrary space line on the unit sphere is a spherical circle described by (7). After the spherical circle is determined, the space line's image on the image plane can be obtained from (4) and (5). In other words, the two keypoints on the image plane can be used to determine a curve on the image plane, which is the image of a space line. Therefore, a descriptor can be constructed based on a pair of keypoints for the matching relation of the spherical model. Even though the positions of the two keypoints are changed, the matching relation between the image curve and the space line keeps unchanged. That is to say, the image curve will not be affected by nonlinear distortion, and thus the uniqueness requirement can be satisfied.

3.2. The Feature Vector

As stated above, the keypoint pair is important for the spherical model based matching between two omnidirectional images. In fact, the keypoint pair is not a new concept in the area of computer vision. By using pixel histogram, Yang et al. [14] proposed a keypoint pair descriptor for food image recognition. Although the pixel histogram descriptor is effective in food recognition, it is not necessarily suitable for finding correspondences between omnidirectional images. By using the keypoint pair, we present a new descriptor here based on the Descriptor-Nets (D-Nets) [15] for the matching of omnidirectional images. The keypoint pair is sampled from the image curve in the image plane, and so the descriptor contains the feature information of nonlinear distortion and the image matching is robust.

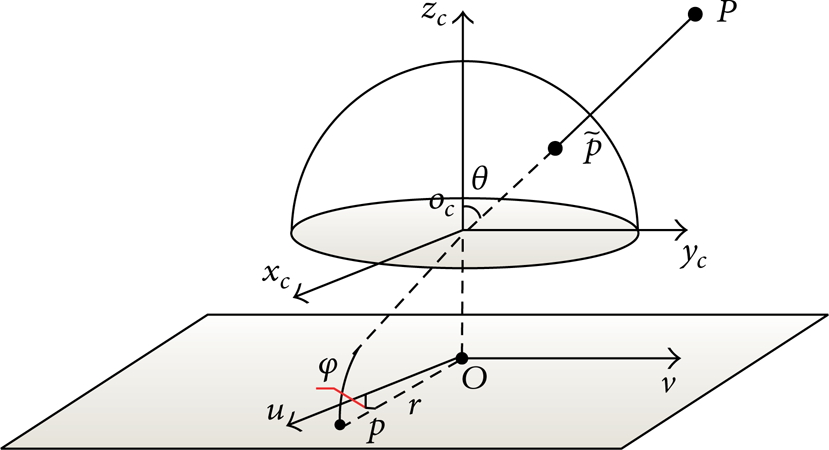

An omnidirectional image is shown in Figure 3, where the points A(θ1, φ1) and B(θ2, φ2) constitute a keypoint pair. During the sampling on the image curve, the angle φ varies uniformly with the sampling interval being dφ. When the sampling points are projected back to the sphere, the pixels distribute nonuniformly on the sphere. In addition, it can be inferred from (7) that the coordinates (θ i , φ i ) of the ith sampling point satisfy the following condition:

where the two coordinates are determined as follows:

Keypoints sampled from the image.

Each sampling step generates a pixel value, which can be specified as an element of a vector. Such a vector composed of all the pixel values in the sampling order is called the feature vector, whose dimension will be changeable, if the step length Δφ of the sampling is not fixed. For the convenience of treatment, the dimension of the feature vector is fixed as s here, and it is in return used to determine the sampling step length.

4. The New Image-Matching Algorithm

After obtaining the feature vectors for all the image curves, we can construct a new algorithm to match different images. Consider two images I and I′, whose feature points are V = {a1, a2,…, a n } and V′ = {b1, b2,…, bn′}, respectively. Choose a directed curve ε = {(a i , a j ) ∣ i≠j} from a i to a j and another one ε′ = {(b k , b l ) ∣ k≠l} from b k to b l . Assume that ε matches with ε′; it means that a i matches with b k , and a j matches with b l . So, the corresponding keypoints of these two images will match with each other through the curve.

Because each keypoint can generate n − 1 keypoint pairs with the other n − 1 keypoints and each keypoint pair corresponds to a vector of s dimension, large-scale calculation may have to be performed for the matching, which will inevitably reduce the matching speed. In order to enhance the matching efficiency, the value of each dimension is expressed by a binary number of b bits, like in the case of D-Nets [15]. Therefore, the feature vector of each curve has a group of binary numbers of s*b total bits.

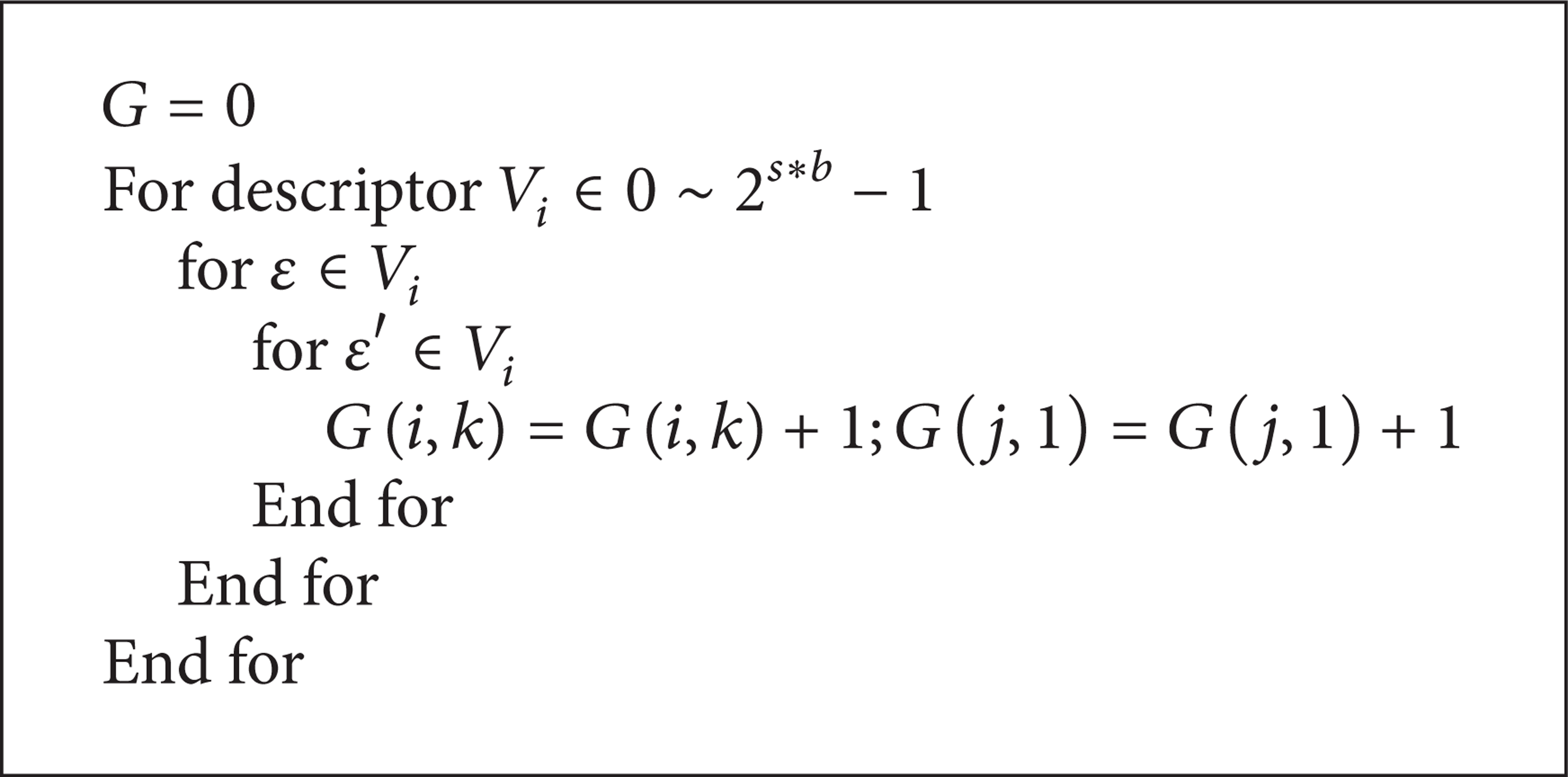

However, there is a problem that a keypoint of an image may match with many keypoints of another image. To achieve a more precise matching, a voting algorithm is put forth here. For an integer in the interval [0, 2 s*b − 1], there exist η1 curves in image I and η2 curves in image I′, respectively. If a keypoint a i in image I matches with the keypoint b k in image I′, we give a vote to the variable G(i, k); that is, we add 1 to the value of G(i, k). By traversing all the curves, one can find that the maximum G(i, k) corresponds to the case that a i matches with b k . The voting algorithm is illustrated in Algorithm 1.

The voting algorithm.

Each keypoint can generate 2*(n − 1) directed curves with the other n − 1 keypoints, and all the keypoints make contributions to the matching process of these curves. The result of image matching is improved because both the local information and global information of the images are used to characterize the keypoints.

5. Experimental Results and Discussion

In this section, the three image pairs shown in Figure 4 are used to illustrate the effects of the proposed image-matching algorithm. Three kinds of camera pose changes including scaling, translating, and affine changing are involved in these image pairs, respectively. To demonstrate the advantages of our algorithm, its matching performance is compared with SIFT and D-Nets. For this purpose, the number of real matching and the distribution range of the matching keypoints are specially discussed.

Three image pairs in the experiments.

5.1. Real Matching in Different Views

In this subsection, the SIFT, D-Nets, and our spherical-based algorithm are applied to match the image pairs shown in Figure 4, respectively. During the matching process, the RANSAC method [16] is employed to remove the wrong matching.

The matching results are shown in Figure 5, and the numbers of real matching points are given in Table 1. In Table 1, the total match gives the number of corresponding points after matching process, while the real match stands for the number of corresponding points in two images that represent the same space content. In the three algorithms, the proposed one has the best matching performance, while the D-Nets have the worst effect. As compared with the SIFT, the proposed algorithm improves the matching performance by 29.8%, 45.8%, and 43% under the conditions of scaling, affining, and translating, respectively.

Matching results of the three algorithms.

The matching results of the three image pairs by three different algorithms.

5.2. Distributions of the Matching Keypoints

The proposed matching algorithm is more invariant to distortion than other algorithms, because the spherical-based keypoint descriptor contains the distortion information of omnidirectional images. The matching keypoints distribute at not only the center of the image but also its periphery. As shown in the first row of Figure 5, the distortion at the image center is negligibly small. However, the distortion and scale on the image periphery are remarkable, which makes the traditional algorithms unsuitable to the peripheral area. When the images have distortion, affine, and translation simultaneously, the matching will become more complicated. In the second and third rows of Figure 5, the matching keypoints distribute in areas where the synthetic distortion is relatively small. However, they may distribute anywhere in the images, if the camera pose changes.

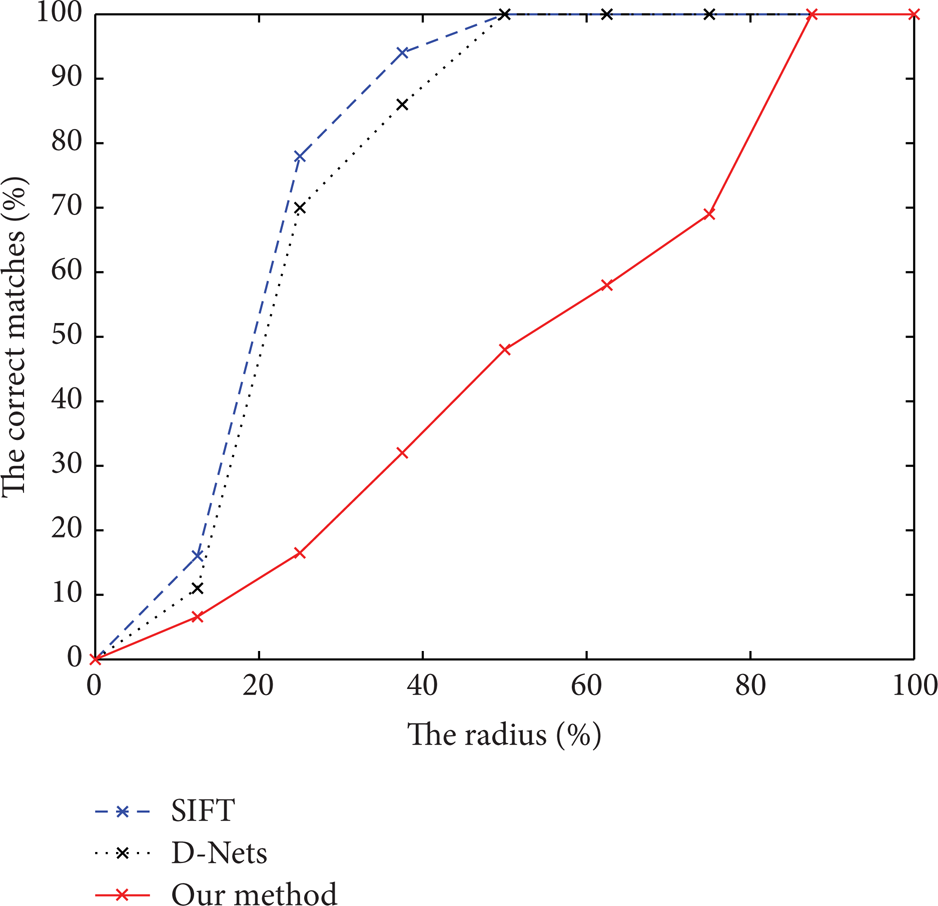

Figure 6 shows the distributions of the matching keypoints on the image. The keypoints of either SIFT or D-Nets distribute in an area whose radius is less than 0.5R. Obviously, the area covered by the keypoints of the proposed algorithm is much larger, including the peripheral area with large distortion. This is because the distortion information of the images is contained in the spherical-based keypoint descriptor. The coverage of the distorted image periphery by the keypoints naturally produces better matching between omnidirectional images. It seems that the result of our algorithm is worse than others in the center of the first image pair, which is because the image pair changes the scale mainly, especially in the center of the image. They are similar to each other in this area. However, this area is only a little part of the whole image. The matching points locate at this area only with the traditional matching algorithm, while our algorithm can get more matching points in the area of whole image. Moreover, according to Table 1, our algorithm can get the more real matching points when considering the more general camera pose changing.

Distributions of the matching keypoints on the image.

6. Conclusions

In this paper, the problem of keypoint matching in omnidirectional images is investigated. Based on the spherical model and the D-Nets algorithm, a new voting algorithm is proposed to remove the effect of image distortion. Keypoint matching experiments are performed on three pairs of omnidirectional images which are captured by CANON SIGMA 8 mm F3.5 EX DG Circular Fisheye lens, and comparison is made among the SIFT, the D-Nets, and the proposed algorithm. It is indicated that the proposed algorithm has the best matching result especially when the images have large distortion. As compared with the SIFT and the D-Nets, the advantages of the proposed algorithm are embodied mainly in two aspects: (a) there are more real matching keypoints; (b) the coverage range of the matching keypoints is wider, including the distorted peripheral areas. The proposed algorithm is generally more robust and more precise than the existing SIFT and D-Nets algorithms in matching omnidirectional images that are taken from quite different views.

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.

Footnotes

Acknowledgments

This work is supported by the Natural Science Foundation of China (no. 61175031), the Science Foundation of Ministry of Education of China (no. 110204004), and the National High Technology Research and Development Program of China (863 Program) (no. 2012AA041402).