Abstract

The increase in urbanisation is making the management of city resources a difficult task. Data collected through observations (utilising humans as sensors) of the city surroundings can be used to improve decision making in terms of managing these resources. However, the data collected must be of a certain quality in order to ensure that effective and efficient decisions are made. This study is focused on the improvement of emergency and nonemergency services (city resources) through the use of participatory crowdsourcing (humans as sensors) as a data collection method (collect public safety data), utilising voice technology in the form of an interactive voice response (IVR) system. This study proposes public safety data quality criteria which were developed to assess and identify the problems affecting data quality. This study is guided by design science methodology and applies three driving theories: the data information knowledge action result (DIKAR) model, the characteristics of a smart city, and a credible data quality framework. Four critical success factors were developed to ensure that high quality public safety data is collected through participatory crowdsourcing utilising voice technologies.

1. Introduction

Local government accepts responsibility for maintaining the city's infrastructure, as well as for providing a safe living environment [1]. Currently, cities around the world are facing challenges in their attempts to keep up with the rate of urbanisation. Accordingly, city resources are unable to support all members of the public simply because the ratio of citizens to city resources (e.g., water and electricity distribution or public safety emergency and nonemergency units) is high. This is not because city resources are scarce, but because they are not managed effectively and efficiently [2]. Therefore, improving the management of city resources would assist cities in adapting to increased urbanisation.

The data information knowledge action result (DIKAR) model can be used to explain how the management of city resources can be improved. This model, illustrated in Figure 1, explains that processed data becomes information, and one gains knowledge by interpreting information. Knowledge is then used to decide on a course of action, which drives a result. Essentially, the DIKAR model explains that data is required to improve decision-making procedures. Therefore, the collection of relevant data is required if the management of city resources is to be improved. However, the data collected must be of sufficient quality to facilitate effective decision making regarding the management of city resources.

Figure 1: DIKAR model [3].

This paper is focused on the collection of high quality public safety data in order to facilitate decision making relating to the management of emergency and nonemergency units, ultimately leading to the creation of a safer living environment. Because of citizens' knowledge of their surroundings, crowdsourcing has been found to be the most appropriate method for collecting public safety data. “Crowdsourcing” is a term that refers to the collection of large volumes of data or reports on certain events, by making use of the geographical dispersion of people [4]. Data can thus be collected and/or reported using applications that make use of images, captured with camera phones; voice, through a phone call; and text, through instant messaging, email, or social networking [5].

This paper will discuss how crowdsourcing (humans as sensors) can be used as a smart city initiative to reduce or mitigate the problems associated with urbanisation, specifically those relating to public safety. The paper will centre on efforts to ensure that high quality public safety data is collected. Before this is discussed, the relationship between the smart city and crowdsourcing will be placed in context. The paper will then discuss a specific public safety crowdsourcing project, which assisted in the identification of critical success factors for ensuring the collection of high quality public safety data through participatory crowdsourcing utilising voice technologies. In the following section, the research methodology used for this study will be discussed.

2. Materials and Methods

A mixed method approach was undertaken in this study guided by design science methodology. Design science guidelines were followed throughout this study, leading towards the development of the critical success factors. Design science is a problem-solving paradigm which “seeks to create innovations that define the ideas, practices, technical capabilities, and products through which the analysis, design, implementation, management, and use of information systems can be effectively and efficiently accomplished” [6, page 76]. Design science consists of seven guidelines, all of which must be considered [7]. Figure 2 graphically depicts the way in which the seven guidelines were followed throughout this study. Note that the design science guidelines presented by Hevner et al. [7] have been rearranged for the purpose of this study.

Figure 2: Applied design science guidelines.

Guideline 1: Problem Relevance. The problem identified in this study refers to the presence of low quality data, which would result in ineffective decisions being made on the management of city resources. This study will focus specifically on the data quality of public safety reports provided by citizens through participatory crowdsourcing.

Guideline 2: Research Rigour. Secondary data was used to create and support all logical conclusions during the development of the critical success factors. Theories were used to guide the research and validate any assumptions. Subsequently, two expert reviews were conducted for the purpose of assessing the critical success factors.

Guideline 3: Design as an Artefact. This study was intended to produce the critical success factors required during the collection of high quality public safety data through participatory crowdsourcing utilising voice technologies.

Guideline 4: Design Evaluation. The proposed critical success factors were evaluated by applying an expert review. Accordingly, the proposed artefact was developed after considering the content of the research, and the material was then presented to seven experts, with sufficient and relevant educational background and experience, for evaluation. After considering the feedback from the expert review process, the artefact was appropriately modified.

Guideline 5: Design as a Search Process. The proposed artefact was constructed from related primary and secondary data. The comments and recommendations from the expert groups allowed the proposed artefact to be refined by removing ambiguity and ensuring completeness.

Guideline 6: Research Contributions. The design artefact takes the form of critical success factors. These address the issue of receiving poor quality public safety data, since decisions based on such data produce ineffective results.

Guideline 7: Communication of Research. This study will provide the management audience with an awareness of the importance of data quality, as well as the way in which data can be assessed to discover factors which produce low data quality. From a technological perspective, the way in which high quality data can be achieved through the use of technology will be illustrated.

In the next section, the details and findings of this study will be discussed.

3. Discussion and Findings

The discussion and findings section will firstly define “smart city” and “crowdsourcing” before illustrating the relationship between them. The next section will discuss the Crowdsourcing Safety Initiative (CSI) participatory project and its data collection method before the public safety data quality criteria are presented. The presentation of the critical success factors will follow, and they will then be linked to the identified public safety data quality problems. The last section under the heading “Discussion and Findings” presents the relationship between the crowdsourcing areas and the critical success factors.

3.1. Conceptualisation of a “Smart City”

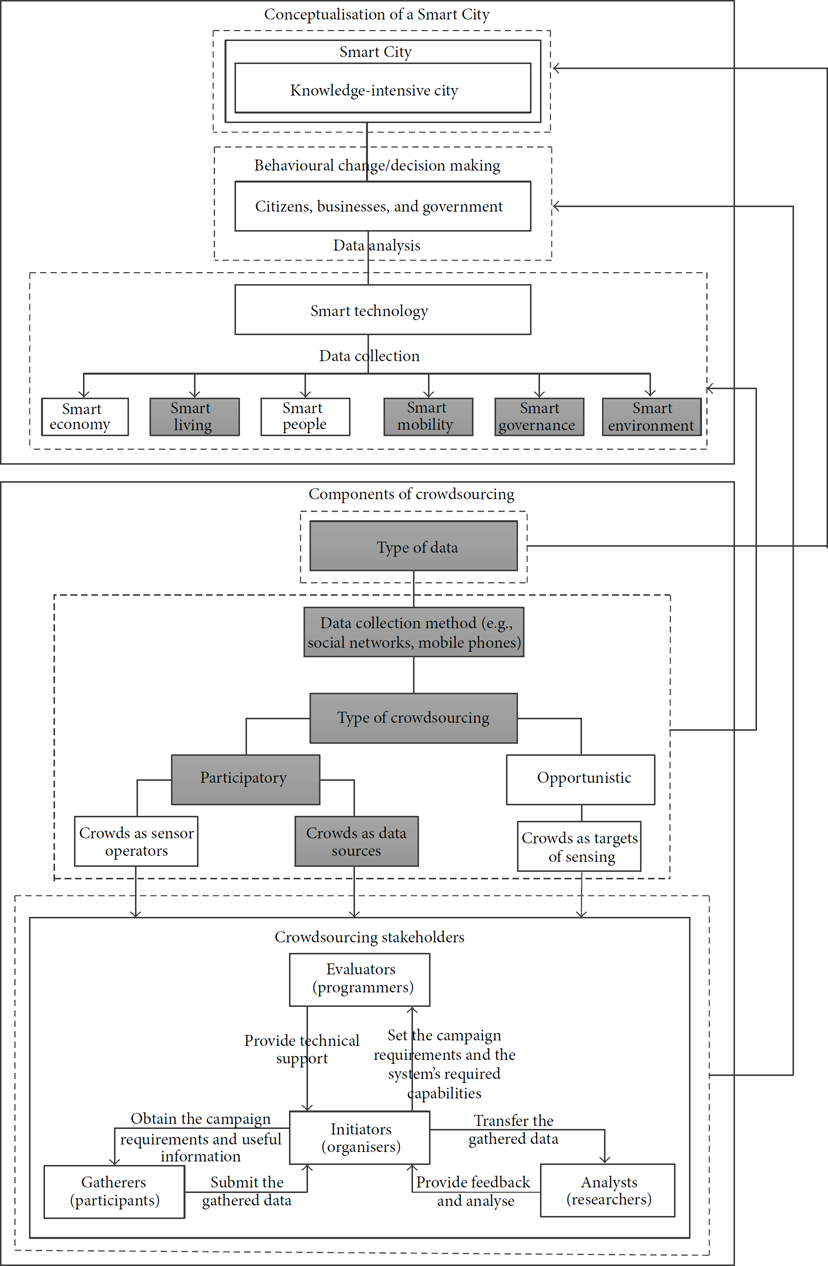

Nam and Pardo [8] explain that the most important consideration when defining a smart city is to view it as one “large organic system.” When conceptualising the smart city it is important to consider the stakeholders involved, smart technology, goals, and influential areas of the smart city. The “Conceptualisation of a smart city” section in Figure 3 incorporates all these components and can be used to explain the smart city concept. Note that the six boxes (smart economy, smart living, smart people, smart mobility, smart environment, and smart governance) refer to the six characteristics of a smart city, constructed by Giffinger et al. [9]. The characteristics were constructed on the basis of theories relating to traditional neoclassical and regional urban growth and development. This has allowed the characteristics to be used as a theoretical framework by a number of authors such as Caragliu et al. [10], Lombardi et al. [11], and Desourdis [12]. The grey boxes in the “Conceptualisation of a smart city” section in Figure 3 indicate the areas relevant to public safety, which is the focus of this paper (public safety data quality).

Figure 3: Smart city and crowdsourcing relationship.

Smart technologies are used to collect data on, or directly influence, certain areas of the city (economy, living, people, mobility, environment, and governance) [13]. Smart technologies are used by citizens, organisations, and government, usually in a collaborative effort, as limitations exist in individual endeavours. For example, because citizens are widely dispersed, they can use such technologies to act as sensors and report traffic accidents, which will assist the traffic department in creating safer roads and managing traffic flow. These initiatives contribute to making the city smarter and more knowledge driven. Participatory crowdsourcing is the specific smart technology that will be discussed in this paper. Consequently, the way in which this can contribute to the smart city, more specifically to public safety under “smart living,” will be explained.

3.2. Crowdsourcing Defined

Various authors use the terms “crowdsourcing” and “crowdsensing” interchangeably. This has been accepted by many publishers, but to avoid any confusion, this paper will use the term “crowdsourcing.” Crowdsourcing refers to a group of individuals who collect data or report on certain events of a similar nature and pool all the data collected [4]. This is the underlying concept of crowdsourcing. Accordingly, tasks can be solved or data generated by taking advantage of the wide geographical dispersion of citizens [14]. The dynamic mobility of citizens and their observations and knowledge of their surroundings make them the perfect candidates for collecting data on activities occurring in their environment. In this manner, large volumes of data can be collected in a short period of time, at a low cost. This data can, in turn, be used to make decisions [3], identify patterns [15], solve problems [14], and even influence behavioural change [5].

Before a crowdsourcing initiative can be implemented, one must determine exactly what data will be collected from crowds. For example, data on weather conditions will entail temperature, wind speed, and a geographical location. The data that is expected to be collected will influence the method by which it is collected. Methods for collecting data can range from social networks to sophisticated sensor devices. In all such initiatives, a common decision that must be made, regardless of the method chosen, is the type of crowdsourcing initiative to be undertaken.

3.3. Types of Crowdsourcing

Crowdsourcing initiatives can be divided into three categories based on the role and extent of participation [16]. Srivastava et al. [16] strongly emphasise that these three categories are not mutually exclusive; however, they can be used to understand the broad range of crowdsourcing initiatives. Therefore, a crowdsourcing initiative can incorporate a combination of characteristics from more than one category. The three types of crowdsourcing are tabulated in Table 1. When the crowdsourcing initiative has been clearly defined, the stakeholders involved can be selected.

Table 1: Types of crowdsourcing [16].

3.4. Crowdsourcing Stakeholders

A typical crowdsourcing project has four stakeholder groups: (1) evaluators; (2) initiators; (3) gatherers; and (4) analysts [15]. The “Crowdsourcing Stakeholders” section in Figure 3 graphically illustrates the four stakeholder groups and their general roles and responsibilities in a crowdsourcing project. Note that an entity can play the role of more than one stakeholder group; for example, initiators can also be analysts. Additionally, a stakeholder group can comprise more than one entity. The next section will discuss how all the considerations of crowdsourcing are combined to create an effective crowdsourcing initiative.

3.5. Components of Crowdsourcing

The discussion above shows that there are four components of a typical crowdsourcing initiative. These four components include (1) the type of data collected; (2) the method used to collect the data; (3) the type of crowdsourcing initiative; and (4) the stakeholder groups involved in the crowdsourcing initiative. The “Components of crowdsourcing” section in Figure 3 incorporates all four components to illustrate what needs to be considered for a typical crowdsourcing initiative to facilitate its data collection purpose successfully. Note that the bottom box (labelled “Crowdsourcing Stakeholders”) was constructed by Yang et al. [15].

Before one can decide on the most appropriate and feasible crowdsourcing option and the stakeholders required, one must first understand the type of data that needs to be collected. For example, traffic accidents will require a description of the incident, time, and geographical location. One must then determine the best data collection method which is feasible and will allow high quality data to be collected. The grey boxes in Figure 3 (in the “Components of crowdsourcing” section) indicate the decision adopted by the CSI participatory project. Before discussing the CSI participatory project in more detail, the link between smart city and crowdsourcing will be provided.

3.6. Relationship between Smart City and Crowdsourcing

It is important to link smart city and crowdsourcing to emphasise the significance of this study and the contribution it makes to the body of knowledge. This will also add to an understanding of the way the critical success factors were decided upon and why they were deemed important (critical) to the research area of this study. Figure 3 illustrates the relationship between smart city and crowdsourcing, but more specifically how participatory crowdsourcing can contribute to the smart city.

The link between “Type of data” and “smart city” illustrates that high quality data will create a robust knowledge-intensive city. The relationship between “Citizens, businesses, and government” and the “Crowdsourcing Stakeholders” emphasises that crowdsourcing stakeholders should be made up of citizens, businesses, and local government. The link between “smart technology” (and the smart city characteristics) and the “Data collection method” (and “Type of crowdsourcing”) emphasises that different types of crowdsourcing can be used as smart technology to contribute to six areas (smart economy, smart living, smart people, smart mobility, smart governance, and smart environment) of a city. The next section will provide further details on the CSI participatory project.

3.7. CSI Participatory Project

The University of Fort Hare (located in East London, South Africa) and IBM have pooled their resources in order to run a public safety crowdsourcing pilot study in East London, South Africa. East London is a small district situated in Buffalo City, which is located in the developing country of South Africa. Figure 4 indicates the location of East London with the letter “A.”

Figure 4: East London (Google Maps).

The first thing that needs to be decided is exactly what data will be collected from the crowds. In the case of the CSI participatory project, public safety data will be gathered. Therefore, the initiative will contribute towards the smart living area of a smart city. Because of the vast array of public safety issues, general data common to all safety incidents should be collected so as to avoid data overload. At the same time, the data should be sufficient to facilitate an effective and efficient response. Through conversational analysis between IBM and the University of Fort Hare, it was found that the data should include the type of incident, the date and time of the incident, and the geographical location of the incident. The next step was to choose the data collection method to be used, for example, social networks, mobile phones, and blogs.
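As an illustration, the three data elements identified above (type of incident, date and time, and geographical location) could be held in a simple record. The following is a minimal Python sketch only; the class name `PublicSafetyReport`, its field names, the `missing_fields` helper, and the sample values are hypothetical and are not taken from the CSI project.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class PublicSafetyReport:
    """One citizen report; a field is None when the caller did not supply it."""
    incident_type: Optional[str]      # type of incident
    occurred_at: Optional[datetime]   # date and time of the incident
    location: Optional[str]           # geographical location of the incident

    def missing_fields(self) -> list[str]:
        # A field counts as missing when it is None or an empty string.
        return [name for name, value in vars(self).items() if value in (None, "")]


# Hypothetical example: the caller gave the incident and time but no location.
report = PublicSafetyReport(
    incident_type="traffic accident",
    occurred_at=datetime(2013, 5, 14, 8, 30),
    location=None,
)
print(report.missing_fields())
```

Keeping the record to the three common elements mirrors the stated aim of avoiding data overload while still allowing a response to be dispatched.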

The CSI participatory project utilised mobile and landline phones for the collection of voice data. This was deemed the most appropriate data collection method based on the country's technological infrastructure (access to internet and network connection speeds), access to computers and smartphones, its 11 official languages, low literacy rates, and low computer literacy rates. Additionally, reporting through speech is faster and requires less effort than typing a message. It is safe to assume that the majority of the public are capable of making a simple phone call from a mobile or landline phone. When phone calls are used as a data collection method, one must decide if calls will be managed by computer interaction (message prompts), human interaction, or a combination of both. In the case of the CSI participatory crowdsourcing project, participants were directed by message prompts (computer interaction).

The next decision was how the chosen data collection method would be used to collect the data; this was influenced by the type of crowdsourcing used. The type of crowdsourcing used by the CSI participatory project was crowds as data sources (participatory sourcing). This method was chosen as all other methods were found to be impractical. Because of South Africa's low literacy rate, text messaging may prevent some participants from reporting; making a phone call was therefore decided on as an appropriate method of reporting for this project.

The CSI participatory project made use of an interactive voice response (IVR) system for an audio user interface (in the form of message prompts). IVR systems comprise an interactive telephonic interface [20] through which pre-coded messages are provided to the user, who in turn supplies audio input to the system [21]. The IVR system serves as a substitute for the World Wide Web by sending data to, and receiving data from, people through their telephones [22]. The CSI project took advantage of the IVR system's voice recognition functionality when developing the message prompts to direct the caller to respond effectively.

The message prompts are intended to instruct the caller to provide the correct information so that the public safety data collected can be used appropriately. After a number of discussions on and iterations of potential message prompts, a free-flowing data reporting method (see Figure 5) was agreed on. The audio interface is vital for data collection as it directs the user to provide the required information on public safety issues. Greeff et al. [20] found that at times users have difficulty understanding the message prompts. Accordingly, the presentation of message prompts to the user has to be clear, ensuring that no ambiguity is present. This is no simple task as lengthy message prompts and countless options generally result in user dissatisfaction [23]. Therefore, the whole instruction process must be clear and concise. Numerous articles [20, 24, 25] have presented methods for developing an audio interface that will ensure that message prompts do not negatively affect user satisfaction. These methods were considered in conjunction with other methods that ensure that quality data is captured by the system, with the ultimate aim of ensuring that quality data is collected without compromising user satisfaction. Wang and Strong's [26] Data Quality Framework was used to develop criteria for public safety data quality for the CSI participatory project.

Figure 5: Crowdsourcing message prompts.
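To make the idea of a short, unambiguous prompt sequence concrete, the following Python sketch walks a caller through one prompt per required data element. It is an illustration under stated assumptions: the prompt wording, the one-prompt-per-field structure, the `run_call` function, and the single retry are all hypothetical, and the prompts actually used by the project are those shown in Figure 5. The `listen` argument stands in for whatever the IVR platform uses to play a prompt and return the recognised speech.

```python
from typing import Callable

# Hypothetical prompt wording, one short prompt per required data element.
PROMPTS = [
    ("incident_type", "Please describe the type of incident."),
    ("date_time", "Please say the date and time of the incident."),
    ("location", "Please say where the incident took place."),
]


def run_call(listen: Callable[[str], str], max_retries: int = 1) -> dict[str, str]:
    """Play each prompt in order and collect the caller's replies.

    `listen` plays a prompt and returns the recognised reply ("" if nothing
    was understood). An empty reply is re-prompted up to `max_retries` times,
    keeping the call short rather than looping indefinitely.
    """
    answers: dict[str, str] = {}
    for field, prompt in PROMPTS:
        reply = ""
        for _ in range(max_retries + 1):
            reply = listen(prompt).strip()
            if reply:
                break
        answers[field] = reply
    return answers


# Simulated caller: the second prompt is not understood the first time.
scripted = iter(["robbery", "", "today at nine in the morning", "Oxford Street"])
print(run_call(lambda prompt: next(scripted)))
```

Limiting retries reflects the point made above that lengthy prompts and countless options tend to reduce user satisfaction; a field left empty can still be flagged later during the data quality assessment.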

Figure 3 illustrates that there are four types of stakeholder groups in a typical crowdsourcing initiative. As mentioned above, an entity can perform the role of more than one stakeholder group, which is the case in many crowdsourcing initiatives. The CSI participatory project also follows this stakeholder map, with the University of Fort Hare acting as the initiator, while both the University of Fort Hare and IBM play the joint role of analysts and evaluators (strictly in terms of setting up the IVR system); the participants comprise the citizens of East London.

3.8. Data Quality Framework

Wang and Strong's [26] Data Quality Framework organises data quality attributes into four categories, namely: (1) intrinsic data quality; (2) contextual data quality; (3) representational data quality; and (4) accessibility data quality. Note that the data quality attributes organised into the four categories include only those deemed important to a data consumer [26]. A data consumer is described as a person or organisation that accesses or uses the data [26]; therefore, the data consumer within the CSI participatory project would be the entity responsible for acting on the data collected (in this case, emergency and nonemergency services). The Data Quality Framework is used to construct criteria for public safety data quality and to assess the presence of the data quality attributes in the data collected from the citizens through the CSI project.

The Data Quality Framework was used to construct “yes or no” data quality assessment questions. Therefore, if all questions result in “yes” after a public safety report is assessed, then the data provided in the report is considered to be of high quality. Consequently, any “no” result will indicate that data quality is compromised, and based on the data quality attribute, one would be able to identify the problem area. Note that no weights will be allocated to questions used in the public safety data quality criteria as all questions are considered equally important.
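The “yes or no” rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the study's instrument: the question identifiers `Q1` to `Q3` and their pairing with attributes are hypothetical placeholders (the actual questions are those of Table 2), although completeness, timeliness, and interpretability are attributes that appear in Wang and Strong's framework.

```python
# Hypothetical question ids mapped to the data quality attribute each probes.
QUESTION_ATTRIBUTE = {
    "Q1": "completeness",
    "Q2": "timeliness",
    "Q3": "interpretability",
}


def assess_report(answers: dict[str, bool]) -> tuple[bool, list[str]]:
    """Return (is_high_quality, attributes of the questions answered "no").

    All questions are unweighted: a single "no" is enough to mark the
    report's data quality as compromised.
    """
    problem_attributes = [
        QUESTION_ATTRIBUTE[question]
        for question in QUESTION_ATTRIBUTE
        if not answers[question]
    ]
    return (not problem_attributes, problem_attributes)


print(assess_report({"Q1": True, "Q2": False, "Q3": True}))
```

Returning the attributes behind each “no” is what allows the problem area to be identified, as described above.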

Table 2 lists the questions that were constructed and indicates the data quality attributes (and the data quality dimension to which each belongs, based on Wang and Strong's [26] Data Quality Framework) that were considered when constructing each individual question. This collection of questions is referred to as the public safety data quality criteria. These quality criteria were used to assess 100 public safety reports collected from the CSI project. A score of 94 or lower was taken as the benchmark for assuming quality problems in the public safety reports. Therefore, any question that scored lower than 95 is seen as a data quality issue or a potential data quality issue. The questions that scored below 95 are highlighted in bold in Table 2.
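The benchmarking step can be sketched as follows. The sketch assumes that a question's score is the number of reports, out of the 100 assessed, for which the answer was “yes”; the text does not state the scoring rule explicitly, so this is an interpretation. The function name `flag_questions`, the question identifiers, and the simulated answers are hypothetical.

```python
BENCHMARK = 95  # a question scoring below this value is flagged


def flag_questions(
    reports: list[dict[str, bool]], benchmark: int = BENCHMARK
) -> dict[str, int]:
    """Count the "yes" answers per question and return those below the benchmark.

    Assumes exactly 100 reports, so that a raw count is comparable to the
    benchmark; with a different number of reports a percentage would be needed.
    """
    scores: dict[str, int] = {}
    for answers in reports:
        for question, is_yes in answers.items():
            scores[question] = scores.get(question, 0) + int(is_yes)
    return {q: score for q, score in scores.items() if score < benchmark}


# Simulated assessment of 100 reports (illustrative values only):
# Q1 is "yes" for all reports, Q2 for 94 of them, Q3 for 95 of them.
reports = [{"Q1": True, "Q2": i < 94, "Q3": i < 95} for i in range(100)]
print(flag_questions(reports))
```

In this simulated case only `Q2` is flagged, since a score of exactly 95 meets the benchmark.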

Table 2: Public safety data quality criteria.

Following the assessment of the public safety reports, nine problems were found that affect the quality of the data. These problems were grouped and are presented in Table 5 together with the question number/s from Table 2 in brackets to indicate the question/s that identified the problems. These problems were used as a guide when compiling the critical success factors.

3.9. Critical Success Factors

Based on Wang and Strong's [26] Data Quality Framework, four critical success factors were proposed. These were compiled from both the secondary and the primary data and are presented in Table 3. After the feedback from the first expert review, the names of the critical success factors were made more descriptive, and a fifth critical success factor was added. After the second expert review, the critical success factors were repositioned as design principles [6], and the five refined critical success factors were reduced back to four. The finalised critical success factors indicate what should be in place and considered to ensure that quality data is collected when implementing a crowdsourcing initiative. The finalised critical success factors are also presented in Table 3, to illustrate the changes between the proposed and the final (refined) critical success factors.

Table 3: Critical success factors for this study.

A detailed explanation of each refined critical success factor is provided in Table 4.

Table 4: Refined critical success factors.

Table 5: Critical success factors and problem areas.

3.10. Critical Success Factors and Problem Areas

The critical success factors discussed above are the most important (critical) areas that need to be considered to ensure success in their respective environments. In other words, all major problems jeopardising the success of collecting quality public safety data in a participatory crowdsourcing project will be mitigated or reduced if one considers the critical success factors. Table 5 emphasises the importance of all the critical success factors by illustrating the problems that they address. The data quality problems were identified by assessing 100 public safety reports. This is also helpful for understanding which critical success factors require more attention than others.

Table 5 illustrates the areas (critical success factors) that need to be addressed to ensure that the problems affecting public safety data quality can be solved. This shows that if these factors are considered, nine problems can be solved and, subsequently, the quality of public safety data in participatory crowdsourcing through audio data collection can be increased. This will make this method of participatory crowdsourcing suitable for use as a smart city initiative and for reducing the public safety problem. Although Table 5 shows which critical success factors address the specific problems affecting data quality, it does not illustrate which stakeholders are responsible for the specific critical success factors. This will be discussed in the next section.

3.11. Critical Success Factors and the Theoretical Background

Since the construction of the critical success factors was supported by Wang and Strong's [26] Data Quality Framework, it is valuable to illustrate the relationship between a typical crowdsourcing project and the supporting factors of the Data Quality Framework. This is presented in Figure 6.

Figure 6: Critical success factors and Wang and Strong's [26] Data Quality Framework.

Through conversational analysis on the CSI participatory project, it was found that the specific data that had to be collected included the type of incident and the date, time, and location (street/landmark and suburb/highway) of the incident. Based on the limitations of the city (East London), such as technological accessibility and literacy rate, voice was chosen as the data collection method using mobile and landline phones. The same constraints were considered when participatory crowdsourcing (crowds as data sources) was selected. A typical crowdsourcing project, regardless of the type of crowdsourcing option selected (participatory or opportunistic), involves four stakeholder groups. Since the CSI project is a joint effort by the University of Fort Hare and IBM, these two entities undertook the responsibility for multiple stakeholder groups (gatherers: East London citizens; initiators: University of Fort Hare; analysts: University of Fort Hare and IBM; evaluators: University of Fort Hare and IBM).
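The four components summarised above can be written down as a small configuration record, which also makes it easy to check that every stakeholder group has been assigned. The following Python sketch is illustrative only; the class names, field names, and helper methods are hypothetical, while the values filled in for the CSI project are those stated in the text.

```python
from dataclasses import dataclass
from enum import Enum


class StakeholderGroup(Enum):
    EVALUATORS = "evaluators"
    INITIATORS = "initiators"
    GATHERERS = "gatherers"
    ANALYSTS = "analysts"


@dataclass(frozen=True)
class CrowdsourcingInitiative:
    data_fields: tuple[str, ...]   # (1) type of data collected
    collection_method: str         # (2) method used to collect the data
    crowdsourcing_type: str        # (3) type of crowdsourcing initiative
    stakeholders: dict[StakeholderGroup, tuple[str, ...]]  # (4) group -> entities

    def unassigned_groups(self) -> list[StakeholderGroup]:
        # Groups with no entity assigned; empty for a fully staffed project.
        return [g for g in StakeholderGroup if not self.stakeholders.get(g)]

    def roles_of(self, entity: str) -> list[StakeholderGroup]:
        # An entity may play the role of more than one stakeholder group.
        return [g for g in StakeholderGroup if entity in self.stakeholders.get(g, ())]


csi = CrowdsourcingInitiative(
    data_fields=("type of incident", "date and time", "location"),
    collection_method="voice (mobile and landline phones, IVR prompts)",
    crowdsourcing_type="participatory (crowds as data sources)",
    stakeholders={
        StakeholderGroup.GATHERERS: ("East London citizens",),
        StakeholderGroup.INITIATORS: ("University of Fort Hare",),
        StakeholderGroup.ANALYSTS: ("University of Fort Hare", "IBM"),
        StakeholderGroup.EVALUATORS: ("University of Fort Hare", "IBM"),
    },
)
print(csi.unassigned_groups())
print([g.value for g in csi.roles_of("IBM")])
```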

4. Conclusion

The CSI participatory project aims to provide an environment in which the public may communicate safety issues, that is, data relating to public safety, to the Buffalo City Municipality (responsible for East London). The collection of high quality public safety data from reports (participants successfully reporting quality public safety data), through participatory crowdsourcing (using humans as sensors), will result in an increased ability to resolve the issues raised by the public [27]. This will enable a significant contribution to be made to the smart city.

The study firstly established the components of a smart city and crowdsourcing and explained how crowdsourcing can be used as a smart city initiative (see Figure 3). This created context for the data quality problem experienced when using crowdsourcing. Subsequently, public safety data quality criteria were developed to assess the public safety reports collected from the CSI project. Following the data quality assessment of 100 public safety reports, it was found that there were nine common problems affecting the quality of data. These problems can be reduced or mitigated by considering the four critical success factors. These factors will also ensure that any other problems that could affect data quality are reduced or eliminated.

The critical success factors indicate which areas need to be considered to ensure that quality data is collected. In terms of future research, it would be interesting to examine the implementation of participatory crowdsourcing in order to determine how these areas can be optimally addressed to ensure that high quality public safety data is collected. These solutions can then be applied in practice to test the effectiveness and efficiency of the data collected. In addition, all participatory crowdsourcing initiatives rely on participation to be successful. This study assumes (supported by the Rational Choice Theory [18]) that if the participants' reporting is effortless (simplification of the crowdsourcing process), participants will be more likely to participate. In addition, if participants perceive a crowdsourcing project as an effective initiative and anonymity is ensured, they will be more likely to cooperate and even make the extra effort to provide high quality data. Measuring the extent to which these assumptions hold would be interesting research for future studies.

Acknowledgments

This work is based on the research supported in part by the National Research Foundation (NRF) of South Africa, the International Business Machines Corporation (IBM), the Govan Mbeki Research and Development Centre (GMRDC), the University of Fort Hare (UFH), and the citizens of East London. The authors acknowledge that the opinions, findings, and conclusions expressed are those of the authors and that the NRF, IBM, GMRDC, and UFH accept no liability whatsoever in this regard.