Abstract

Automated and algorithmic music composition rely on logic operations in which music parameters are set according to the desired musical style or emotion. Computer-generated music can be integrated with other domains through suitable mapping techniques, as in intermedia arts that use music technology. This paper discusses the possibility of integrating automatic composition and motion devices with an Emotional Identification System (EIS), using emotion classification and emotion parameters transmitted over ZigBee wireless communication. The corresponding music pattern and motion path are driven simultaneously by the movement of a cursor on the Emotion Map (EM) over time. An interactive music-motion platform is established accordingly.

1. Introduction

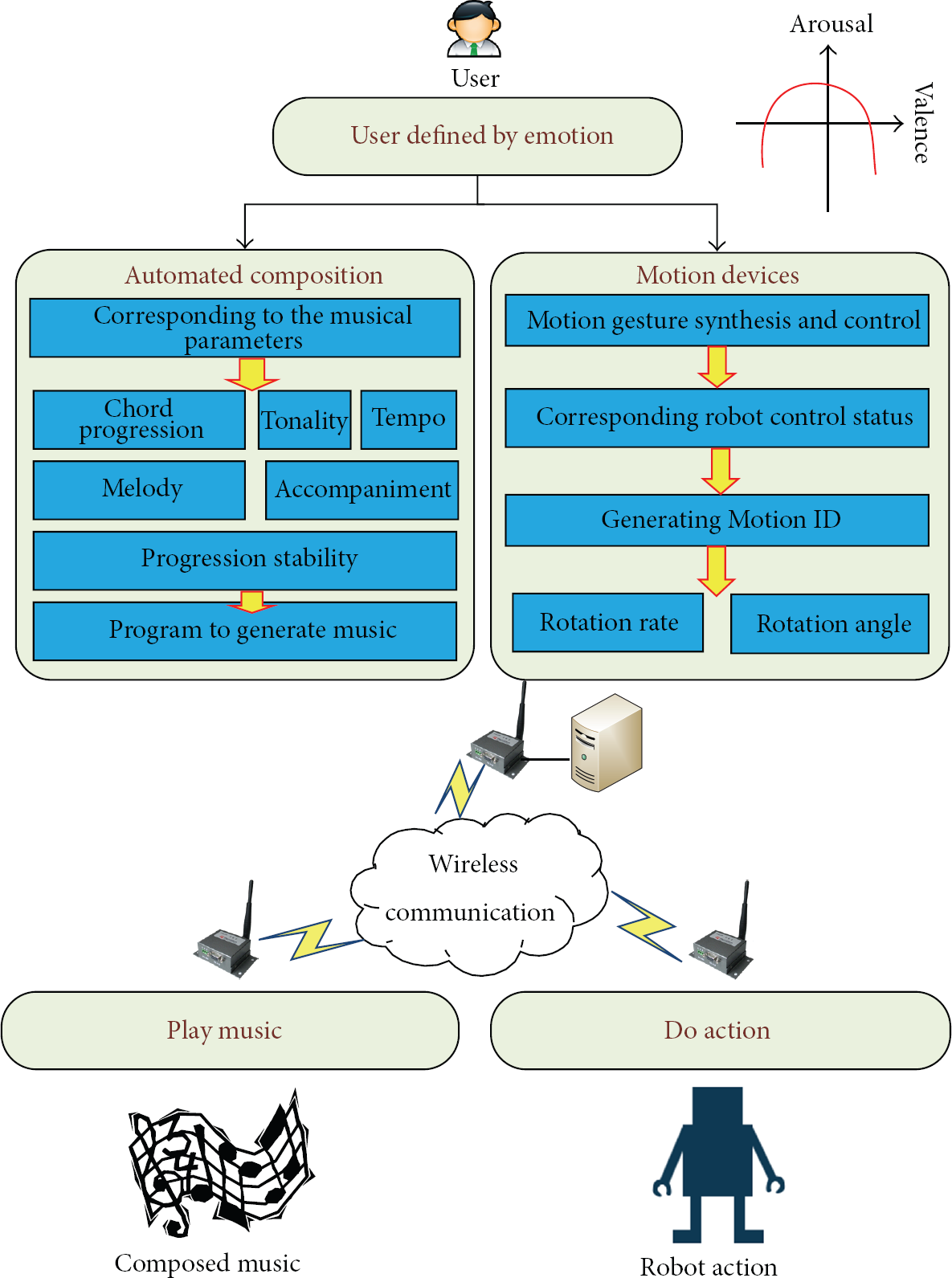

Figure 1 shows the main schema of the system discussed in this paper.

Automated emotion music generation with robotic motion based on emotion patterns.

The main idea of the proposed music-motion generation system is mood analysis based on the Emotion Map (EM), which is introduced in the next section. The mood parameters are read from the EM as x-axis and y-axis values and are used to produce a Music ID, which selects the music parameters, and a Motion ID, which selects the movement action. The Music ID drives the real-time automated composition of music patterns in Max/MSP [1]. The Motion ID is handled by the RoboBuilder development board [2], which controls the servomotors so that the robot performs the corresponding action. Both IDs are transferred to the client over ZigBee wireless communication. ZigBee supports ubiquitous computing [3], a concept built on five basic elements (5A): any time, anywhere, any service, any device, and any security.

In the system architecture diagram (Figure 1), the dashed lines indicate components planned for future integration: the Emotional Identification System (EIS), intermedia audio-visual art, a digital music learning system, and interactive multimedia devices, which can be combined with audio and with interactive toys that use motion sensors. For the EIS, for example, future work can incorporate brain wave research: by analyzing a user's brain wave data, the system could capture the emotional response and derive objective emotional parameters. In addition, the Music ID could be generated from a motion sensor so that the algorithmic composition has more parameters to work with. The ultimate goal of the entire system is to integrate automatic composition with interactive technology, so that more music technologies can be applied in cross-disciplinary research and in industry. Automatic composition [4] has become more mature and can now be applied even to video games.

2. Background

2.1. Cognitive Emotion Map and Emotional Parameters Analysis

Studies that evaluate cognitive responses to musical mood have shown in many experiments that arousal (activation) and valence (pleasantness), plotted on a two-dimensional x-y diagram, are the dominant representation in music cognition [6].

For emotion mapping, research at the University of Augsburg, Germany [7], shows that four categories of human emotion (happiness, anger, sorrow, and joy) retrieved from brain waves can be expressed by their degree of excitement (arousal) and degree of pleasantness (valence) and placed on a two-dimensional emotion plane.

In research published by the University of Montreal [8], happy, sad, scary, and peaceful musical excerpts were played to subjects in order to evaluate their cognitive responses to music of different emotion types. Our system uses the results of that experiment as the link between emotional and cognitive responses.

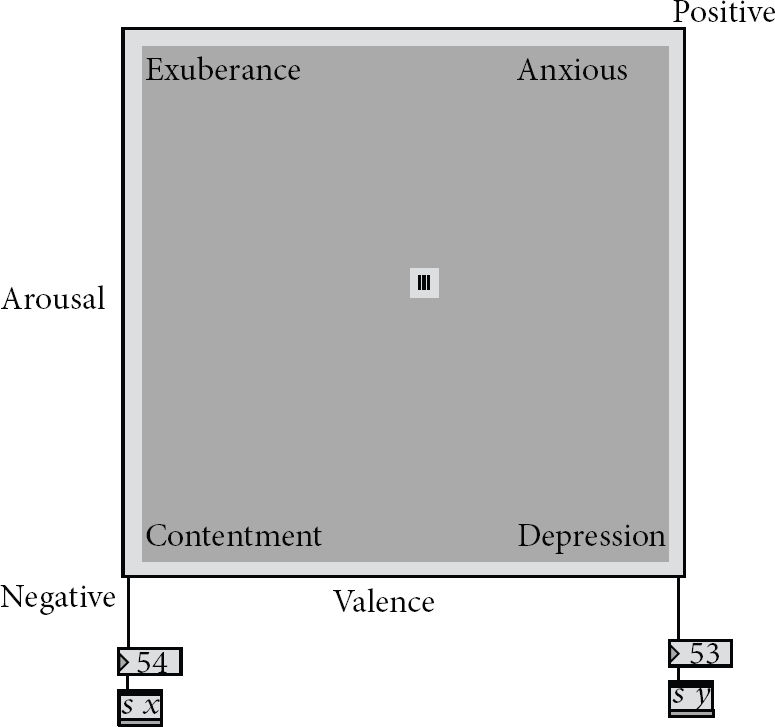

Combining the above experimental results, we adopt the two-dimensional cognitive model 2DER [5] shown in Figure 2 and call it the “Emotion Map” (EM) in our research.

Emotional and cognitive reaction of corresponding figure [5].

The EM is implemented in Max/MSP; a screenshot of its user interface is shown in Figure 3. The user draws a time-varying emotion trajectory on the EM, and the system outputs the arousal and valence values that correspond to the x and y values of each point on the trajectory.

Two-dimensional Emotion Map (2DER).
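The way a cursor position on the EM could be turned into emotion values can be sketched in C as follows. This is a minimal illustration and not the authors' Max/MSP patch. It assumes that valence lies on the x-axis and arousal on the y-axis, that both are normalized to [-1, 1], and that the panel uses pixel coordinates with the origin at the top left. The names `EmPoint`, `EmQuadrant`, `em_from_cursor`, and `em_quadrant` are hypothetical.

```c
#include <assert.h>

/* Hypothetical quadrant labels, named by valence sign and arousal level. */
typedef enum {
    EM_POSITIVE_HIGH_AROUSAL,
    EM_NEGATIVE_HIGH_AROUSAL,
    EM_NEGATIVE_LOW_AROUSAL,
    EM_POSITIVE_LOW_AROUSAL
} EmQuadrant;

typedef struct {
    double valence; /* assumed x-axis, normalized to [-1, 1] */
    double arousal; /* assumed y-axis, normalized to [-1, 1] */
} EmPoint;

/* Convert a cursor position in pixels to normalized EM coordinates.
   Assumes a width x height panel whose origin is the top-left corner
   and whose y coordinate grows downward. */
EmPoint em_from_cursor(int px, int py, int width, int height)
{
    EmPoint p;
    assert(width > 1 && height > 1);
    p.valence = 2.0 * px / (width - 1) - 1.0;
    p.arousal = 1.0 - 2.0 * py / (height - 1);
    return p;
}

/* Classify a point into one of the four EM quadrants.
   Points on an axis are counted as positive / high by convention. */
EmQuadrant em_quadrant(EmPoint p)
{
    if (p.arousal >= 0.0)
        return p.valence >= 0.0 ? EM_POSITIVE_HIGH_AROUSAL
                                : EM_NEGATIVE_HIGH_AROUSAL;
    return p.valence >= 0.0 ? EM_POSITIVE_LOW_AROUSAL
                            : EM_NEGATIVE_LOW_AROUSAL;
}
```

Sampling the cursor at regular intervals and calling `em_from_cursor` on each sample would give a time-varying emotion trajectory of the kind described above.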

2.2. Music Parameters Analysis

Music features such as pitch, tempo, velocity, and rhythm can be retrieved through the Musical Instrument Digital Interface (MIDI), which makes most elements of music quantifiable as integers in the range 0 to 127.
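As a small illustration of this quantification, the following C sketch maps a normalized value onto the 0 to 127 MIDI data range. The linear mapping, the [-1, 1] input range, and the function names `midi_clamp` and `midi_from_unit` are assumptions made for the example; the paper does not specify how its values are scaled.

```c
/* Clamp an integer to the 7-bit MIDI data range 0..127. */
int midi_clamp(int v)
{
    if (v < 0)
        return 0;
    if (v > 127)
        return 127;
    return v;
}

/* Map x in [-1, 1] linearly onto [lo, hi] and round to the nearest
   integer. Inputs outside [-1, 1] are clamped first, and the result is
   always a valid MIDI data value. */
int midi_from_unit(double x, int lo, int hi)
{
    double t = (x + 1.0) / 2.0; /* rescale to [0, 1] */
    if (t < 0.0)
        t = 0.0;
    if (t > 1.0)
        t = 1.0;
    return midi_clamp((int)(lo + t * (hi - lo) + 0.5));
}
```

For example, `midi_from_unit(arousal, 40, 120)` could serve as a note velocity that grows with arousal, with the bounds 40 and 120 chosen purely for illustration.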

Table 1, based on previous research [9, 10], lists the music parameters and their corresponding descriptions.

Music parameters with their corresponding relations.

2.3. Music Parameters and the Correspondent Cognitive Emotion Map

In the experiment of Gomez and Danuser [11], volunteer subjects listened to several music fragments of different musical types and rated their moods, and the ratings were analyzed in emotional and cognitive terms. Figure 4 shows the statistical ratings together with the expert analysis.

Music parameters and the corresponding cognitive reaction relationship.

These experimental results support the following conclusions.

Sound intensity in positive low arousal is lower and is relatively high in negative high arousal.

Tempo in positive areas (

Rhythm in negative high arousal area is vaguer but is more explicit than that in the other three quadrants.

Accentuated rhythms and arousal are positively correlated.

Rhythmic articulation in positive area (

As pleasantness increases, melodic direction has descending tendencies.

Pitch range in negative high arousal is broader and in the other three quadrants appears to be narrower.

Mode (major/minor) in negative high arousal tends to minor mode and in positive high-arousal tends to major mode.

Harmonic complexity in negative area (

Consonance declines from positive low-arousal position to negative high-arousal position.

2.4. Sieve and Markov Model

Both methods are important in computer composition. A Markov model [12] is essentially a nondeterministic finite automaton with a probability associated with each state transition. Figure 5 shows an example for chords, in which the transitions follow a set of rules.

Markov model in chord.
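A Markov chord model of this kind can be sketched in C as a transition table plus a sampling step. The four chords and all probabilities below are illustrative assumptions and are not the values of Figure 5. The names `TRANSITION`, `markov_next`, and `markov_step` are hypothetical.

```c
#include <assert.h>
#include <stdlib.h>

enum { CHORD_I, CHORD_IV, CHORD_V, CHORD_VI, NUM_CHORDS };

/* Illustrative transition probabilities; each row sums to 1.
   Row = current chord, column = next chord. */
static const double TRANSITION[NUM_CHORDS][NUM_CHORDS] = {
    /* to:      I     IV    V     vi  */
    /* I  */ { 0.10, 0.40, 0.30, 0.20 },
    /* IV */ { 0.30, 0.10, 0.50, 0.10 },
    /* V  */ { 0.60, 0.10, 0.10, 0.20 },
    /* vi */ { 0.20, 0.50, 0.20, 0.10 },
};

/* Pick the next chord for a uniform random number u in [0, 1) by
   walking the cumulative distribution of the current chord's row. */
int markov_next(int current, double u)
{
    double cumulative = 0.0;
    int next;
    assert(current >= 0 && current < NUM_CHORDS);
    for (next = 0; next < NUM_CHORDS; ++next) {
        cumulative += TRANSITION[current][next];
        if (u < cumulative)
            return next;
    }
    return NUM_CHORDS - 1; /* guards against rounding when u is near 1 */
}

/* Convenience wrapper that draws u from the C library generator. */
int markov_step(int current)
{
    double u = rand() / ((double)RAND_MAX + 1.0);
    return markov_next(current, u);
}
```

Separating `markov_next` from the random draw keeps the transition logic deterministic, so it can be checked against the table by hand.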

Figure 6 is the flowchart of the sieve. The system generates a random number and uses the modulus operator to determine whether the number belongs to the scale. If it does, it becomes a musical note; otherwise, the system generates a new number. Following pitch class theory [13], the random number is reduced modulo 12 for this test.

Sieve dataflow.
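The sieve loop described above can be sketched in C as follows. The C major scale, the note range, and the names `C_MAJOR`, `in_scale`, and `sieve_note` are assumptions chosen for illustration; the actual scale used by the system depends on the selected mode.

```c
#include <assert.h>
#include <stdlib.h>

/* Membership table over the 12 pitch classes (C = 0).
   C major is used here purely as an example scale. */
static const int C_MAJOR[12] = { 1, 0, 1, 0, 1, 1, 0, 1, 0, 1, 0, 1 };

/* Return 1 if the note's pitch class (note modulo 12) is in the scale. */
int in_scale(int note, const int scale[12])
{
    return scale[((note % 12) + 12) % 12];
}

/* Draw random notes in [low, high] until one passes the sieve.
   The range must span at least one octave so that every pitch class
   of a non-empty scale is reachable and the loop terminates. */
int sieve_note(int low, int high, const int scale[12])
{
    assert(high - low >= 11);
    for (;;) {
        int candidate = low + rand() % (high - low + 1);
        if (in_scale(candidate, scale))
            return candidate; /* accepted: becomes a musical note */
        /* rejected: generate a new number */
    }
}
```

A call such as `sieve_note(48, 72, C_MAJOR)` would return a random MIDI note between C3 and C5 that belongs to C major.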

2.5. ZigBee Model

The ZigBee Alliance [14] is an association of companies working together to develop standards and products for reliable, cost-effective, low-power wireless networking [15]. ZigBee supports several network topologies, for example, mesh, star, and cluster tree, which allow it to be applied in many fields.

In this study, a mesh network was chosen. The point-to-point links of a mesh make it easy to isolate faults. Node-by-node relaying allows a longer transmission distance, which makes the system more portable and less limited by range.

2.6. Algorithmic Composition

Algorithmic composition uses various algorithms to compose music automatically. Fully randomized music is easy to generate with a random function, but it lacks musical rules or algorithms to control the musical progression. The software “MusicSculptor,” developed by Professor Phil Winsor, therefore controls the progression of music parameters through probability distributions [16, 17], as shown in Figure 7.

The pitch class and octave range distribution table in MusicSculptor.

Furthermore, Professor David Cope has produced refined works of algorithmic composition, using a computer composing program to generate music in particular styles with suitable music parameter settings, and has discussed many of the issues surrounding the program, including artificial intelligence [18, 19].

3. Method

For music analysis and automated composition, we use Max/MSP to implement the generative music. The motion device is programmed in C, and the speed and direction of the motors can be controlled in real time. Algorithm 1 is part of the program; it sends the position move command that moves the robot to another position.

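```c
/********************************************/
/* Function that sends Position Move Command to wCK module */
/* Input: ServoID, SpeedLevel, Position */
/* Output: Current */
/********************************************/
/* SendOperCommand, GetByte, and TIME_OUT1 are defined elsewhere in the program. */
char PosSend(char ServoID, char SpeedLevel, char Position)
{
    char Current;

    /* Shift the speed level left by 5 bits and OR in the servo ID. */
    SendOperCommand((SpeedLevel << 5) | ServoID, Position);
    GetByte(TIME_OUT1);           /* first response byte is read and discarded */
    Current = GetByte(TIME_OUT1); /* second response byte is returned */
    return Current;
}
```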

The system flowchart is shown in Figure 8.

System flowchart.

3.1. Sentiment Analysis

Traditional algorithmic composition relies on random or probability functions, which are not intuitive for general users. In this research we therefore provide a friendly composition interface. Following the 2DER, users draw an emotion trajectory on the EM, whose emotional quadrants are defined in Figure 2, to obtain the corresponding arousal and valence values, which are then mapped into the automated music composition program (Max/MSP) and the motion planning program.

3.2. Automated Composition

The studies discussed above identify a variety of musical parameters that can be related directly to the cognitive EM. The implementation uses the following music parameters.

Tonality. Tonality is divided into major and minor chords. The valence value controls how likely major and minor chord progressions are to appear: a large valence value raises the probability of major chords, whereas a small valence value raises the probability of minor chords.

Chord Progression and the Main Melody. Chord progression is based on the “scale degree,” and cadences can serve as rules, with a Markov chain controlling the chord transition probabilities. The major or minor scale is determined by the EM mode, and the main melody is generated by the Max/MSP program from chord tones with passing tones added.

Tempo. In the high-arousal condition the tempo is faster, and in the low-arousal condition it is slower.

Accompaniment. Accompaniment parts use fixed music patterns.

Progression Stability. In the high-arousal condition chords change more often. Low arousal makes chords change less frequently and tend to remain on the “tonic chord.”
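The tonality and tempo rules above can be sketched in C as simple mappings from valence and arousal. The linear shapes, the [-1, 1] input range, the 60 to 180 BPM tempo range, and the function names are all assumptions for illustration; the paper states only the direction of each relationship, not its exact form.

```c
/* Clamp x to [-1, 1]. */
static double clamp_unit(double x)
{
    if (x < -1.0)
        return -1.0;
    if (x > 1.0)
        return 1.0;
    return x;
}

/* Probability of choosing a major chord: rises linearly from 0 at
   valence -1 to 1 at valence +1 (assumed shape). */
double major_probability(double valence)
{
    return (clamp_unit(valence) + 1.0) / 2.0;
}

/* Decide major (1) or minor (0) for a uniform random number u in [0, 1). */
int choose_major(double valence, double u)
{
    return u < major_probability(valence);
}

/* Tempo in beats per minute: 60 at arousal -1, 120 at 0, 180 at +1
   (assumed range), rounded to the nearest integer. */
int tempo_bpm(double arousal)
{
    return (int)(120.0 + 60.0 * clamp_unit(arousal) + 0.5);
}
```

The same pattern could express progression stability, for example by letting the probability of leaving the current chord grow with arousal.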

The “real-time automated composition program” is built in Max/MSP. The generated music can be saved as a MIDI file and analyzed in a MIDI sequencer for its pitch distribution, as shown in Figure 9.

Pitch distribution of different types of emotion music generated by real-time automated composition program.

In Figure 9, the results of the automated composition program show that high arousal (joy and anger) produces many short notes, whereas low arousal produces more long notes.

3.3. Motion Gesture Synthesis and Control

The motion unit is a RoboBuilder robot (see Figure 10), a low-priced, full-featured humanoid robot made in Korea and suited to research. Control signals are sent to its motors for motion gesture synthesis and control.

RoboBuilder.

To control the robotic motion with emotion data, the robot's movements must be defined in a table. The action parts, indicated as Motion ID in Figure 1, are synthesized by the C program, which maps each Motion ID to an action as shown in Table 2.

Robotic Motion ID and its motion definition.

The emotion-controlled robot motion is expressed by the corresponding parameters shown in Table 3.

Emotional states and their correspondent motion.

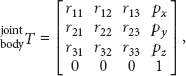

To place the robot in space, kinematics is used to relate the robot's joint space to Cartesian space. The static positioning problem can be solved with forward and inverse (backward) kinematics [20]. Kinematic analysis of the robot system is important for integrating robotic motion control precisely with the music emotion. For instance, if a humanoid robot system is used as the motion mechanism, its forward kinematics can be described as follows:

Music emotion driven robot control architecture.

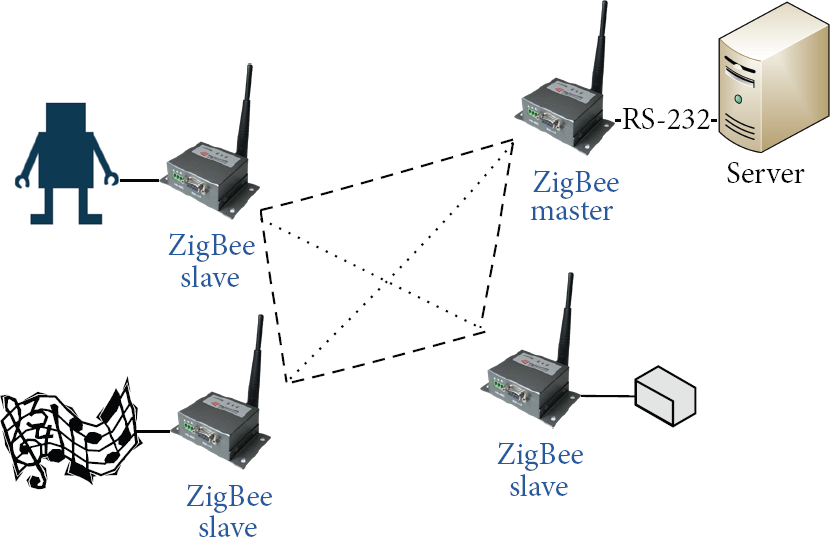

3.4. ZigBee Communication

Figure 12 is the flowchart of the ZigBee communication. The ZigBee master sends data from the server and receives data from the ZigBee slaves. Through ZigBee, the server can transmit the generated MIDI files and motion commands to a remote speaker and robot.

ZigBee communication flowchart.
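To make the master-to-slave exchange concrete, the following C sketch packs a Music ID and a Motion ID into a small payload and validates it on the receiving side. The 4-byte layout, the start byte `0xA5`, the XOR checksum, and the function names are entirely hypothetical; they are not part of the ZigBee standard and are not taken from the authors' implementation. The radio transport itself is not shown.

```c
#include <stddef.h>
#include <stdint.h>

#define FRAME_START 0xA5u /* hypothetical start-of-frame marker */
#define FRAME_LEN   4u    /* start, music ID, motion ID, checksum */

/* Build a payload carrying one Music ID and one Motion ID.
   Returns the number of bytes written to out. */
size_t frame_encode(uint8_t music_id, uint8_t motion_id,
                    uint8_t out[FRAME_LEN])
{
    out[0] = FRAME_START;
    out[1] = music_id;
    out[2] = motion_id;
    out[3] = (uint8_t)(out[0] ^ out[1] ^ out[2]); /* XOR checksum */
    return FRAME_LEN;
}

/* Validate a received payload and extract both IDs.
   Returns 1 on success, 0 if the length, start byte, or checksum is wrong. */
int frame_decode(const uint8_t *in, size_t len,
                 uint8_t *music_id, uint8_t *motion_id)
{
    if (len != FRAME_LEN || in[0] != FRAME_START)
        return 0;
    if ((uint8_t)(in[0] ^ in[1] ^ in[2]) != in[3])
        return 0;
    *music_id = in[1];
    *motion_id = in[2];
    return 1;
}
```

In such a scheme the master would call `frame_encode` before handing the bytes to the radio module, and each slave would call `frame_decode` and ignore any payload that fails the check.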

4. Experimental Result and Analysis

In the experiment, emotion data are input to the system, which transforms them into music features and Motion IDs. Each specific emotion generates music and drives the robot to perform actions. We recorded the system output as videos for four emotions: joy, anger, sadness, and happiness. All videos are available on the internet at http://140.113.156.190/robot/.

SPSS was used to obtain the statistical results (see Figure 13). Of the 12 subjects, 75% are male and 25% are female; 75% have studied music for more than five years, and 66.7% often listen to music. Most of the subjects are therefore familiar with music, which lends credibility to the test.

Subjects' data.

The reliability coefficient (Cronbach's α) is 0.813, which exceeds the 0.6 threshold and indicates that the subjects' emotion discrimination is reliable. A questionnaire survey was conducted to determine whether the generated music and the robot's motion match the corresponding emotion, rated on a 5-point Likert scale (1: strongly disagree, 2: disagree, 3: neither agree nor disagree, 4: agree, and 5: strongly agree). According to Table 4, the robot and music expressing sadness received good feedback in this questionnaire.

Statistics results.
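For readers unfamiliar with the reliability coefficient reported above, the following C sketch computes Cronbach's α from a table of questionnaire scores using the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of total scores), where k is the number of items. It is an illustration only and not the SPSS procedure used in the study. The function names and the row-major data layout are assumptions.

```c
#include <assert.h>

/* Sample variance (n - 1 denominator) of n values spaced stride apart. */
static double sample_variance(const double *values, int n, int stride)
{
    double mean = 0.0, sum_sq = 0.0;
    int i;
    for (i = 0; i < n; ++i)
        mean += values[i * stride];
    mean /= n;
    for (i = 0; i < n; ++i) {
        double d = values[i * stride] - mean;
        sum_sq += d * d;
    }
    return sum_sq / (n - 1);
}

/* Sum of one subject's scores over all items. */
static double row_total(const double *row, int n_items)
{
    double total = 0.0;
    int i;
    for (i = 0; i < n_items; ++i)
        total += row[i];
    return total;
}

/* Cronbach's alpha for scores stored row-major as [subject][item].
   The variance of the total scores must be nonzero. */
double cronbach_alpha(const double *scores, int n_subjects, int n_items)
{
    double item_var_sum = 0.0, mean = 0.0, sum_sq = 0.0, total_var;
    int s, i;
    assert(n_subjects > 1 && n_items > 1);
    for (i = 0; i < n_items; ++i)
        item_var_sum += sample_variance(scores + i, n_subjects, n_items);
    for (s = 0; s < n_subjects; ++s)
        mean += row_total(scores + s * n_items, n_items);
    mean /= n_subjects;
    for (s = 0; s < n_subjects; ++s) {
        double d = row_total(scores + s * n_items, n_items) - mean;
        sum_sq += d * d;
    }
    total_var = sum_sq / (n_subjects - 1);
    assert(total_var > 0.0);
    return (double)n_items / (n_items - 1)
           * (1.0 - item_var_sum / total_var);
}
```

With Likert ratings from 12 subjects, the call would take the form `cronbach_alpha(scores, 12, n_items)`, where `n_items` is the number of questionnaire items.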

Compared with conventional algorithmic composition programs, the proposed system is more practical: automated composition is performed with matching auxiliary motion gestures through an emotion-based graphic interface, rather than through the input of a large number of music parameters, which makes music composition easier and more engaging.

5. Conclusions

This study integrates automated music composition with a motion device. Its main features and advantages are discussed, and both music and motion are synthesized from the emotion data that the user enters as EM x-y values. The EM interface is friendlier than a traditional algorithmic composition interface. Findings from the music psychology literature are used to perform the automated composition, and because emotion is the input parameter, users can compose music easily through emotion-mapped EM input without having to learn complicated music theory.

Future research can integrate the music with brain wave data, so that the computer-generated music relates properly to the user's current mood or emotion.

This system also performs the emotion-based automated composition with the integration of a small robot, according to the user's mood selection.

Wireless communication makes the system more portable and reliable. In the future, both the wireless communication and the automated music composition can be brought to the commercial market, applying this research to multimedia edutainment with music technology.

Acknowledgment

The authors are appreciative of the support from the National Science Council Projects of Taiwan: NSC 101-2410-H-155-033-MY3 and NSC 101-2627-E-155-001-MY3.