Abstract

Intelligent CAD garment design is becoming increasingly popular, attracting attention from both manufacturers and professional stylists. Existing garment CAD systems and clothing simulation software provide neither user-friendly interfaces nor dynamic recommendation during the garment creation process. In this paper, we propose an intelligent hypertouch garment design system, which dynamically predicts possible solutions as the design proceeds. User behavioral information and dynamic shape matching are used to learn and predict the desired garment patterns. We also propose a new hypertouch concept of gesture-based interaction for our system. We evaluate our system with a prototype platform. The results show that our system is effective, robust, and easy to use for quick garment design.

1. Introduction

During the last decade, garment design, modeling, and animation have been among the most popular topics in the fields of CAD and computer graphics. Traditional garment design usually requires pattern technologists (tailors) to create 2D patterns from the designer's sketches, which are then cut out and sewn together to form a complete garment. CAD systems and software [1] are widely used in clothing manufacturing to model these 2D clothes patterns according to the specifications from the designers and tailors.

However, operating a CAD system requires extensive learning effort. Most commercial garment design systems are based on rigid shape creation and editing with primitives such as B-splines and Bézier curves. Such operations are inflexible to perform, and validity assessment is inefficient. Moreover, a complete shape input is often required before the CAD system starts processing, which costs efficiency in the garment CAD process. It would be better to have a proactive clothes shape recommendation mechanism that captures user preferences and predicts and suggests suitable solutions in such a dynamic drawing environment. This is the first motivation of our work.

Our second motivation is the fast development of multitouch technology [2]. Current CAD platforms for garment design are mainly conceived for 2D modeling of geometric clothes shapes and generally do not provide a high-level interactive environment with aesthetic and functional features. With the emergence of electronic touch-sensing equipment, it is now possible to provide designers with a more intuitive and flexible means of interaction. Multitouch systems allow people to interact with the system using multiple fingers simultaneously. More information is processed, and higher degrees of freedom are available than in mouse-based operations. This in turn reduces the time and effort needed for prior training. How to build an efficient and easy-to-use garment design platform has become one of the main challenges in the domain of textile garment production. We propose a concept of hypertouch interaction to enrich the diversity and functionality of current touch-based interfaces.

Contributions. We propose a proactive recommendation system that returns possible clothes shapes as the design progresses, based on user behavior patterns. The work we present in this paper has three main contributions, which are given as follows.

User Behavior Pattern Extraction. We extract the behavior patterns from the apparel design database to capture user preferences. A domain knowledge base with these user behavior patterns is constructed accordingly. We employ the user behavior tree from our previous work [3] to model these user patterns. Different drawing habits with respect to both spatial and temporal properties are taken into consideration.

Dynamic Clothes Shape Recommendation. We introduce a new feature of dynamic recommendation, also called recommendation in motion, to current apparel design systems. We provide a proactive mechanism that dynamically returns fitting shapes as the designer inputs clothes contours. This speeds up the apparel design process to a large extent. The selection of the matching shapes is determined by a partial matching algorithm between the incomplete input shape and the patterns in the knowledge base.

Nonrigid Hypertouch Interaction. We propose a new hypertouch interaction concept for apparel design. Compared to the existing touch-based systems, the hypertouch interface gives the user higher degrees of freedom as well as more information throughput. A flexible and natural interface is developed to benefit the apparel design task towards a more efficient and user-friendly direction.

2. Related Work

Recent touch-sensing techniques [2] enable people to interact with complex systems through multipoint control and have become the focus of a great deal of research and commercial activities [4]. These techniques support gesture-based interaction [5, 6], with or without the requirement of direct contact with the touch screen or the so-called hypertouch [7], to provide effective and practical manipulations or controls of the objects.

Wu and Balakrishnan [6] explored a variety of gestural interaction techniques with multifingers or whole hands. Their techniques are towards tabletop displays as if interacting with physical objects on real tables. ShadowGuides [8] is an interactive system for multitouch and hand gestures with in situ learning. Wobbrock et al. [9] presented a method to design tabletop gestures that relies on eliciting gestures from nontechnical users by first portraying the effect of a gesture and then asking users to perform its cause.

There are some gestural systems developed to facilitate the processing of clothing manipulation. Igarashi and Hughes [10] proposed a technique for mapping sketching clothes marks onto a 3D model by building up correspondence between 2D sketches and 3D models. In [11], authors proposed a garment creation and modeling system with sketches. The operations are performed by stroke symbols designed especially for different editing operations, such as break, corner detection, and folding.

These systems carry out clothing design tasks on virtual human models, and do not provide domain knowledge during the design process. Moreover, all operations correspond to a single-finger dragging and moving action of different paths and patterns, while a multitouch concept is not involved.

3. System Architecture

In this paper, we present an intelligent recommendation system for garment design. The proposed method provides an easy-to-use and flexible touch interface, as well as dynamic shape prediction based on user behavior patterns. Figure 1 shows the system architecture and framework of our proposed method.

Figure 1: The architecture of our intelligent hypertouch recommendation system for apparel design.

Three phases are involved in our proposed system, including (1) user behavior capture, (2) hypertouch interaction, and (3) intelligent apparel design.

For the first phase of our system, a variety of user behavior patterns are captured by constructing a drawing pattern database offline. We first invite designers to perform apparel design to generate clothes shapes. The garment creation process is recorded and the drawing behavior patterns from designers and tailors are extracted and retrieved. These user drawing behavior patterns are formulated into a tree-structured model, which is called the user behavior tree (UBT) in our previous work [3]. A knowledge base storing the UBTs of the designers and tailors is constructed accordingly.

For the second phase, a hypertouch interface is provided to perform the apparel design task. By using the interface, the garment shapes are depicted freely and quickly. Both freehand sketching and gesture interaction are supported. New shape inputs and commands are created by designers with stylus or fingers on our tabletop display device. The raw input data are first regularized and recognized. Geometric features and topological features of the shape are then extracted. In this paper, bisegment descriptors [12] are used to model these shape features.

The third phase is online dynamic recommendation. This is a Google-like process: possible matched shapes are proactively returned to the user as suggestions and references according to domain knowledge and user preferences. A partial matching algorithm proposed in our previous work [13] is used to dynamically return, in real time, the shapes with maximal similarity to the current input drawing. We develop a prototype system to evaluate the effectiveness, efficiency, and user friendliness of the proposed method.

4. Hypertouch Interaction

In this paper, we propose a new type of interaction called hypertouch (HT) interaction. Hypertouch interaction is an extension of the existing touch-based interfaces [2]. It exploits richer ways of interaction and applies them to a broader range of uses.

In this section, we first define the concept of hypertouch interaction. Second, we define the elementary items forming hypertouch interaction and discuss their characteristics.

4.1. The Hypertouch Concept

The richness and diversity of user interfaces are closely related to the available degrees of freedom (DOF), particularly continuous DOF. Typical touch-based interfaces rely on fingers moving across a 2D touch-sensitive surface. Direct touch on the surface, either with a stylus or fingers, is required in order to perform practical controls on the objects. This results in 2 DOF for each finger touch and places a 2D spatial constraint on the interaction. Sometimes this is not adequate to capture the richness of input that we encounter in real-world interaction.

In order to enable more channels for communication, we propose the concept of hypertouch interaction, which is given in Definition 1.

Definition 1 (hypertouch interaction). Hypertouch interaction is a manner of human-computer interaction with a touch screen, in which each touch event gives two or more degrees of freedom (DOF).

By providing higher DOF, the hypertouch interaction opens up the diversity of touch functionalities. It alleviates the 2D spatial constraint for current touch interfaces that the user has to actually touch the screen to operate. In other words, it extends the touch actions into space-immersed manipulations with higher dimensions. The conventional 2D touch-based interfaces are enriched with larger freedom and flexibility in a broader spatial area.

The common 2D touch-based interfaces are the most popular and typical hypertouch systems. However, these touch-based systems offer only 2 DOF for each finger touch. In addition to the 2D touch interface, hypertouch interaction also takes place in 2.5D and 3D spaces. Higher DOF allows the user to interact more freely and flexibly. This produces a friendlier user experience and improves working efficiency.

The 2.5D hypertouch interaction is also called zero touch or touch-free, which means the interaction takes place in an area very close to the touch surface (usually within 20 cm in distance). This activates the depth sensing feature of the operations, which provides a far higher potential for rich interaction than the current binary touch interaction.

The 3D hypertouch is also known as 3D gesture control or 3D depth sensing technique. It allows the user to interact with any display screen from any distance. Depth tracking devices, for example, 3D cameras and 3D gesture recognition, are required to perform the 3D hypertouch interaction.

These spatial attributes are among the most intrinsic characteristics of hypertouch interaction and make it more comprehensive than current 2D touch-based interaction. They are formalized and discussed in detail in the following subsection.

4.2. Characteristics of Hypertouch Interaction

Hypertouch interaction is composed of a series of hypertouch events under a certain context. The diversity of a hypertouch event is determined by two factors: the multitouch threads and the gesture depth channel. Definition 2 gives the concept of a hypertouch event.

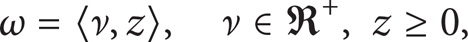

Definition 2 (hypertouch event). A hypertouch event ω is a 2-tuple which defines the hypertouch action involving ν finger touches at distance z and is denoted as

$$\omega = \langle \nu, z \rangle,$$

where ν is the number of touch threads and z is the gesture depth from the hands to the screen along the Z-axis.

Figure 2 shows the principle of the hypertouch event, in which the two axes denote the touch threads and the depth channel, respectively. As Figure 2 shows, the interaction space is described by these two dimensions. The flexibility of gestures (the red line) grows with the distance to the screen and the number of touches, because more touch points and a broader interaction space allow freer and more natural communication, as in our daily lives. On the other hand, high flexibility results in low efficiency and accuracy. Since additional time is needed in 2.5D and 3D interaction to track the hand position and perform 3D gesture recognition, the efficiency of the interaction (the green line) decreases as the gesture depth increases. The goal of the hypertouch event is to achieve a tradeoff between flexibility and efficiency. This means that hypertouch events usually take place in the upper subspace (the shaded area) in order to produce a satisfying user experience. In other words, users tend to use multiple touches across multiple spatial dimensions to achieve an optimal combination of flexibility and efficiency. This also explains why the current 2D multitouch interface is not enough to provide flexible and constraint-free gesture functionalities.

Figure 2: The principle of hypertouch event. The hypertouch event takes action in the upper subspace, while multitouch event and remote control are performed in the left and right subspace, respectively.

A hypertouch event and its corresponding context information constitute a hypertouch scene. A set of hypertouch scenes constitutes the whole hypertouch interaction space. The concepts of hypertouch scene and interaction space are defined in Definitions 3 and 4, respectively.

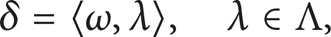

Definition 3 (hypertouch scene). A scene δ is a scenario in which the users perform their hypertouch actions. A scene is composed of an 〈event, context〉 pair as

$$\delta = \langle \omega, \lambda \rangle, \quad \lambda \in \Lambda,$$

where ω is the hypertouch event and λ is the context of the scene from the context set Λ.

Definition 4 (hypertouch space). An interactive hypertouch space Ψ consists of a set of hypertouch scenes, which are aware of the user, performance, application, and environment. It is denoted as

$$\Psi = \{\delta \mid \delta = \langle \omega, \lambda \rangle,\ \lambda \in \Lambda\},$$

where δ stands for a specific scene.

The context information is taken into consideration in order to model the hypertouch scene more comprehensively. The context is related to the specific environmental parameters and attributes of the hypertouch event. It encodes the operating characteristics from multiple aspects, including time, location, number of users, interaction mode, software API, and hardware. Figure 3 shows an illustration of the multiple dimensions of the context information.

Figure 3: The illustration of various attributes in the hypertouch context from different aspects.
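Definitions 2 to 4 can be read as a small data model. The following Python sketch is only an illustration under stated assumptions: the class and field names are hypothetical, depth is measured in centimetres, and the 20 cm boundary between 2.5D and 3D interaction is taken from the "usually within 20 cm" remark in Section 4.1 rather than from a fixed rule of the system.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Assumed boundary: the text says 2.5D interaction "usually" happens within 20 cm.
NEAR_RANGE_CM = 20.0


@dataclass(frozen=True)
class HypertouchEvent:
    """A hypertouch event: `touches` plays the role of nu, `depth_cm` the role of z."""
    touches: int
    depth_cm: float

    def mode(self) -> str:
        """Classify the event by its gesture depth."""
        if self.depth_cm <= 0:
            return "2D"      # direct contact with the surface
        if self.depth_cm <= NEAR_RANGE_CM:
            return "2.5D"    # touch-free, close to the surface
        return "3D"          # remote gesture control


@dataclass
class HypertouchScene:
    """A scene: an event paired with its context (time, location, users, ...)."""
    event: HypertouchEvent
    context: Dict[str, str] = field(default_factory=dict)


# A hypertouch space is modelled here simply as a collection of scenes.
space: List[HypertouchScene] = [
    HypertouchScene(HypertouchEvent(2, 0.0), {"mode": "sketching"}),
    HypertouchScene(HypertouchEvent(1, 10.0), {"mode": "zoom"}),
    HypertouchScene(HypertouchEvent(2, 150.0), {"mode": "remote"}),
]
modes = [scene.event.mode() for scene in space]  # ["2D", "2.5D", "3D"]
```

Keeping the event immutable and the context as a free-form mapping mirrors the split between the two event dimensions and the open-ended context attributes of Figure 3.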

5. Intelligent Garment Recommendation

Intelligent garment design is a real-time interaction process between the designer and the computer. The ultimate goal of the system is to support dynamic recommendations along with the shape generation process. To this end, we employ graph-based shape description and matching methods to proactively return possible apparel shape solutions.

5.1. The Criteria for Recommendation Selection

In order to determine which apparel shapes are chosen from the database for recommendation, a partial matching algorithm is employed. The shapes with the highest similarities to the input drawing are selected and returned to the designer in real time once a new command input is detected.

There are generally three cases. Firstly, if a candidate garment shape includes the incomplete input shape, they are considered to be highly similar since the input shape can be finished later. Secondly, if the partial input shape includes certain components that are not included in the candidate shape or includes more components than the candidate, the candidate shape is regarded not to conform to the user requirement, and the similarity should be very low even if other corresponding parts may be very similar. Thirdly, if the partial input shape is included by two or more candidate garment shapes, the one with the fewest components will have the highest similarity.
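The three cases can be illustrated with a deliberately simplified score. The sketch below is not the matching metric of this paper (which is graph based and described in Section 5.2); it assumes, purely for illustration, that every shape is reduced to a set of component labels, and the function names and the sample database are hypothetical.

```python
from typing import Dict, FrozenSet, List, Tuple


def containment_score(partial: FrozenSet[str], candidate: FrozenSet[str]) -> float:
    """Toy similarity in [0, 1] that respects the three selection cases."""
    if not partial:
        return 0.0  # nothing drawn yet, nothing to match
    if not partial <= candidate:
        # Case 2: the input has components the candidate lacks
        # (this also covers an input with more components than the candidate).
        return 0.0
    # Case 1: the candidate contains the input, so the score is positive.
    # Case 3: among containing candidates, fewer components gives a higher score.
    return len(partial) / len(candidate)


def recommend(partial: FrozenSet[str],
              database: Dict[str, FrozenSet[str]],
              k: int = 5) -> List[Tuple[str, float]]:
    """Return the k best-scoring candidates, highest score first."""
    scored = [(name, containment_score(partial, parts)) for name, parts in database.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


database = {
    "shirt": frozenset({"collar", "sleeve", "body"}),
    "coat": frozenset({"collar", "sleeve", "body", "belt", "pocket"}),
    "skirt": frozenset({"waist", "hem"}),
}
ranking = recommend(frozenset({"collar", "sleeve"}), database, k=3)
names = [name for name, _ in ranking]  # ["shirt", "coat", "skirt"]
```

Here the shirt outranks the coat because both contain the partial input but the shirt has fewer components, and the skirt scores zero because it lacks the drawn components.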

5.2. Dynamic Matching Procedure

There are mainly two modules involved in our intelligent apparel recommendation procedure, including garment shape description and partial matching.

In the shape description module, both geometrical and topological features of the garment shapes are extracted. A bisegment descriptor proposed in our previous work [3, 12, 13] is used to model these shape features into graph structures. In this way, the raw sketch input data is transformed into graph representations, which are then used for the following partial matching. We introduce the calculation of the bisegment graph (BSG) shape descriptor in the following.

Definition 5 (bisegment graph). A BSG is defined as a 6-tuple $G = (V, E, R_E, \Theta_E, \Lambda_E, C_E)$, where

$V$ is the set of graph nodes (every node corresponds to a segment in the garment shape),

$E \subseteq V \times V$ is the set of edges of the graph (if there is a bisegment combining two neighbor segments $v_i$ and $v_j$, an edge $e_{ij} = (v_i, v_j)$ exists),

$R_E : E \to \Sigma_R$ is a function assigning the feature of binary topology relations $\Sigma_R = \{R_{l,l}, R_{l,c}, R_{c,c}\}$ to the edges,

$\Theta_E : E \to (0, \pi]$ is a function extracting the geometrical features by calculating the inner angle of the two adjacent segments of a bisegment,

$\Lambda_E : E \to (0, 1]$ is a function extracting the geometrical features by calculating the ratio between the segment lengths of the bisegment,

$C_E : E \to [0, \pi) \times [0, \pi)$ and $C_E(e_{ij}) = (\kappa_1, \kappa_2)$, where $\kappa_1$ and $\kappa_2$ are the curvatures of the two segments at their intersection point (here $\kappa_1$ and $\kappa_2$ are sorted in ascending order to account for rotation invariance).

Figure 4 shows the procedure of bisegment graph calculation. The original shape is first regularized and segmented into {a, b, c, d}, and the segments are then decomposed into a bisegment sequence {B1, B2, B3, B4}. The features are then extracted, and a bisegment graph is constructed based on Definition 5.

Figure 4: The calculation of the bisegment graph of a garment shape. (a) A regularized shape for the sleeve part of a garment. (b) The decomposition of the bisegments. (c) The graph construction of the corresponding BSG model.
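As a rough illustration of Definition 5, the sketch below computes the inner angle and the length ratio for bisegments of an open polyline. It is a simplification under stated assumptions: all segments are straight lines, so every edge is given a line-line relation (reading R_{l,l} as "line-line" is itself an assumption) and zero curvatures; the function names and the dictionary layout are hypothetical.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def bisegment_features(p_prev: Point, p_joint: Point, p_next: Point) -> Tuple[float, float]:
    """Inner angle (radians) and length ratio of two straight segments sharing p_joint."""
    ax, ay = p_prev[0] - p_joint[0], p_prev[1] - p_joint[1]
    bx, by = p_next[0] - p_joint[0], p_next[1] - p_joint[1]
    len_a, len_b = math.hypot(ax, ay), math.hypot(bx, by)
    if len_a == 0 or len_b == 0:
        raise ValueError("segments must have non-zero length")
    # atan2(|cross|, dot) gives the unsigned angle between the two vectors, in [0, pi].
    angle = math.atan2(abs(ax * by - ay * bx), ax * bx + ay * by)
    # Shorter length over longer length keeps the ratio in (0, 1].
    ratio = min(len_a, len_b) / max(len_a, len_b)
    return angle, ratio


def build_bsg(points: List[Point]) -> Dict[Tuple[int, int], Dict[str, object]]:
    """Nodes are segment indices of an open polyline; edge (i, i + 1) is a bisegment."""
    edges: Dict[Tuple[int, int], Dict[str, object]] = {}
    for i in range(len(points) - 2):
        theta, lam = bisegment_features(points[i], points[i + 1], points[i + 2])
        edges[(i, i + 1)] = {
            "relation": "R_l,l",        # assumed: both segments are straight lines
            "theta": theta,             # inner angle
            "lambda": lam,              # length ratio
            "curvature": (0.0, 0.0),    # straight segments have zero curvature
        }
    return edges


# Three segments of lengths 1, 2, 1 meeting at right angles.
bsg = build_bsg([(0.0, 0.0), (1.0, 0.0), (1.0, 2.0), (0.0, 2.0)])
```

For this polyline both bisegments have an inner angle of π/2 and a length ratio of 0.5. Curved segments would need a curvature estimate at the joint, which is outside the scope of this sketch.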

In the partial matching module, a partial matching algorithm is developed to calculate the similarity for dynamic apparel shape recommendation. The partial matching of garment shapes is performed between the precollected shapes in the apparel database and the user's input shape from the hypertouch interface. The calculation of the similarity is asymmetric. We carry out the partial matching based on a product graph spanning algorithm [13]. A weighted product graph of two bisegment graph models representing the difference between two garment shapes is derived. The similarity is then calculated from the minimum spanning tree (MST) of the weighted product graph.

The process of our intelligent apparel recommendation is shown in Figure 5, which gives an example of the matching procedure between two garment shapes. Figure 5 (a) is a candidate garment shape in the database. Its corresponding bisegment graph representation is shown in Figure 5 (b). Figure 5 (c) is the incomplete sketch shape input drawn by the designer. The bisegment feature of the input is calculated online to generate the bisegment graph descriptor in Figure 5 (d). The product graph of the bisegment graphs for the candidate shape and input shape is derived from the Kronecker product operation [13], and the result is given in Figure 5 (e). We will explain the details of the dynamic partial matching algorithm in the following.

Figure 5: The partial matching procedure. (a) A candidate garment shape with three segments. (b) The bisegment graph extraction of (a). (c) An incomplete garment shape from the designer with two segments. (d) The bisegment graph extraction of (c). (e) The weighted direct product graph of (b) and (d) by a modified Kronecker product operation.

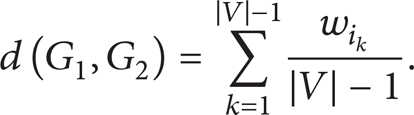

5.2.1. Product Graph Construction

We will first define some basic concepts.

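Definition 6 (modified Kronecker product). Given two real matrices $A \in \mathbb{R}^{m \times n}$ and $B \in \mathbb{R}^{r \times s}$, the modified Kronecker product is defined as

$$A \,\tilde{\otimes}\, B = \begin{bmatrix} \operatorname{abs}([A_{11}]_{r \times s} - B) & \cdots & \operatorname{abs}([A_{1n}]_{r \times s} - B) \\ \vdots & \ddots & \vdots \\ \operatorname{abs}([A_{m1}]_{r \times s} - B) & \cdots & \operatorname{abs}([A_{mn}]_{r \times s} - B) \end{bmatrix},$$

where $[A_{ij}]_{r \times s}$ denotes an $r \times s$ real matrix whose elements all equal $A_{ij}$, and $\operatorname{abs}(\cdot)$ takes the elementwise absolute value of an $r \times s$ matrix.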
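To make the block structure of Definition 6 concrete, the following sketch computes the product for matrices given as nested lists. It follows the textual description (each entry of A is replaced by the elementwise absolute difference between that entry and B); the function name and the sample matrices are hypothetical, and the layout of blocks is assumed to match the ordinary Kronecker product.

```python
from typing import List

Matrix = List[List[float]]


def modified_kronecker(a: Matrix, b: Matrix) -> Matrix:
    """Replace every entry a[i][j] by the r x s block abs(a[i][j] - b)."""
    r, s = len(b), len(b[0])
    result: Matrix = []
    for a_row in a:                 # block row i
        for k in range(r):          # row k inside the block
            row: List[float] = []
            for a_ij in a_row:      # block column j
                row.extend(abs(a_ij - b[k][l]) for l in range(s))
            result.append(row)
    return result


# Weight matrices of two single-edge graphs with (hypothetical) weights 5 and 2.
w1 = [[0, 5],
      [5, 0]]
w2 = [[0, 2],
      [2, 0]]
w12 = modified_kronecker(w1, w2)
# w12 == [[0, 2, 5, 3],
#         [2, 0, 3, 5],
#         [5, 3, 0, 2],
#         [3, 5, 2, 0]]
```

The entry 3 in the first row is |5 - 2|, the difference between the two edge weights. Entries such as 5 = |5 - 0| pair an edge with a non-edge, so they only become meaningful once the edge set of the product graph restricts which entries are used.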

The product of two weighted graphs can then be defined based on Definition 6.

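Definition 7 (weighted direct product graph (WDPG)). Given two graphs $G_1(V_1, E_1)$ and $G_2(V_2, E_2)$ with weighted matrices $W_1 \in \mathbb{R}^{m \times n}$ and $W_2 \in \mathbb{R}^{r \times s}$, respectively, their weighted direct product graph $G_{1 \times 2}$ is a graph with vertex set $V_{1 \times 2} = \{(u_i, v_r) : u_i \in V_1, v_r \in V_2\}$, edge set $E_{1 \times 2} = \{((u_i, v_r), (u_j, v_s)) : (u_i, u_j) \in E_1 \wedge (v_r, v_s) \in E_2\}$, and weighted matrix

$$W_{1 \times 2} = W_1 \,\tilde{\otimes}\, W_2,$$

that is, the modified Kronecker product of $W_1$ and $W_2$ from Definition 6.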

From Definition 7, the WDPG $G_{1 \times 2}$ is a product graph in which each vertex is a vertex pair over the two graphs $G_1$ and $G_2$, an edge indicates that the corresponding vertices are neighbors in both original graphs, and the weights of the edges are given by the modified Kronecker product of the weighted matrices $W_1$ and $W_2$ (Definition 6).

The construction of the WDPG of two graphs of different sizes is shown in Figure 5. A vertex in the product graph corresponds to a vertex pair in the original graphs, and an edge exists if and only if the corresponding vertices are neighbors in both original graphs. The weights of the product graph are set by calculating the absolute difference of the weights of the corresponding edges from the original graphs. For instance, the weight of edge (A1, B2) in the product graph is set to 3, which is the absolute difference between the weights of edges (A, B) and (1, 2) in the original graphs.
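The construction just described can be sketched directly on edge lists. The code below is an illustration under assumptions: graphs are undirected and stored as dictionaries from an edge to its weight, the function name is hypothetical, and the weights 5 and 2 are made-up values chosen so that the edge between (A, 1) and (B, 2) receives weight 3 as in the example above (the actual weights of Figure 5 are not reproduced here).

```python
from itertools import product
from typing import Dict, Hashable, Tuple

Vertex = Hashable
Edge = Tuple[Vertex, Vertex]
WeightedGraph = Dict[Edge, float]  # undirected edge (u, v) -> weight


def weighted_direct_product(g1: WeightedGraph, g2: WeightedGraph) -> Dict[Tuple[Edge, Edge], float]:
    """Join (u_i, v_r) and (u_j, v_s) whenever (u_i, u_j) and (v_r, v_s) are both edges.

    The weight of a product edge is the absolute difference of the original weights.
    """
    result: Dict[Tuple[Edge, Edge], float] = {}
    for ((ui, uj), w1), ((vr, vs), w2) in product(g1.items(), g2.items()):
        weight = abs(w1 - w2)
        # For undirected graphs both endpoint pairings are neighbours in both graphs.
        result[((ui, vr), (uj, vs))] = weight
        result[((ui, vs), (uj, vr))] = weight
    return result


candidate = {("A", "B"): 5.0, ("B", "C"): 1.0}   # hypothetical weights
partial = {(1, 2): 2.0}                          # hypothetical weight
wdpg = weighted_direct_product(candidate, partial)
weight_a1_b2 = wdpg[(("A", 1), ("B", 2))]  # 3.0
```

Because the two input graphs may have different numbers of vertices and edges, the same routine serves both complete and partial shapes.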

5.2.2. Similarity Measurement

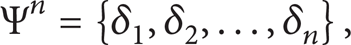

Here we give the matching metric based on the MST.

For a product graph, given its minimal spanning tree with edge set denoted as $\{e_{i_1}, e_{i_2}, \ldots, e_{i_{|V|-1}}\}$ and the weight set as $\{w_{i_1}, w_{i_2}, \ldots, w_{i_{|V|-1}}\}$, then the proposed similarity metric between two original graphs is determined by

According to our calculation of the weights of the product graph, which encodes the difference of weights between two corresponding edges in two original graphs, the edge-matching cost is captured by the minimal spanning tree of the product graph. In this way, the above measurement based on minimal spanning tree can capture the minimal matching cost between two original graphs.

In this paper, Prim's algorithm [14] is used to compute the minimal spanning tree of the product graph. The time complexity is O(nm log(n) + |E|), where n and m (m ≤ n) denote the sizes of the original graphs and |E| denotes the number of edges of the product graph (given two BSGs G and G′ of sizes n and m, their product graph has nm vertices).

Also note that since the product graph can be constructed between graphs of different sizes, the proposed matching metric is capable of both complete and partial matching.
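A minimal sketch of the spanning-tree step is given below, assuming the product graph is stored as a dictionary from undirected edges to weights. It uses a binary heap, so it runs in O(|E| log |V|) rather than the Fibonacci-heap bound quoted above, and it only spans the connected component of the start vertex. The function names are hypothetical, and the code stops at the total tree weight: how the tree weights are turned into the final similarity value follows the metric of this section and is not reproduced here.

```python
import heapq
from collections import defaultdict
from itertools import count
from typing import Dict, Hashable, List, Tuple

Vertex = Hashable


def prim_mst(edges: Dict[Tuple[Vertex, Vertex], float]) -> List[Tuple[Vertex, Vertex, float]]:
    """Prim's algorithm on an undirected weighted graph given as {(u, v): weight}."""
    adjacency = defaultdict(list)
    for (u, v), w in edges.items():
        adjacency[u].append((w, v))
        adjacency[v].append((w, u))
    if not adjacency:
        return []
    tie = count()  # tie-breaker so the heap never has to compare vertices
    start = next(iter(adjacency))
    visited = {start}
    heap = [(w, next(tie), start, v) for w, v in adjacency[start]]
    heapq.heapify(heap)
    tree: List[Tuple[Vertex, Vertex, float]] = []
    while heap:
        w, _, u, v = heapq.heappop(heap)
        if v in visited:
            continue  # this edge would close a cycle
        visited.add(v)
        tree.append((u, v, w))
        for w_next, nxt in adjacency[v]:
            if nxt not in visited:
                heapq.heappush(heap, (w_next, next(tie), v, nxt))
    return tree


def matching_cost(edges: Dict[Tuple[Vertex, Vertex], float]) -> float:
    """Total weight of the minimal spanning tree, i.e. the accumulated edge differences."""
    return sum(w for _, _, w in prim_mst(edges))


graph = {("a", "b"): 1.0, ("b", "c"): 2.0, ("a", "c"): 4.0, ("c", "d"): 3.0}
cost = matching_cost(graph)  # 6.0: edges a-b, b-c and c-d are selected
```

A lower total weight means that the matched edges of the two original graphs differ less, which is the sense in which the spanning tree captures the minimal matching cost.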

6. Performance Evaluation

In order to evaluate the efficiency and the effectiveness of our proposed method, we develop a prototype of our intelligent recommendation system for hypertouch apparel design. The experimental setup is a Windows 7 workstation with an Intel Core i7 2.8 GHz CPU, 8 GB RAM, and GeForce GTX 1792 MB. The hypertouch interaction environment is built upon a large freehand tabletop surface [15], which offers dynamic finger tracking and gesture recognition.

First, the garment shape database in our previous work [3, 12] is extended. Ten garment designers are invited to create standard garment shapes using CAD software, and we obtain a new shape database of 454 garment shapes. The database is categorized based on the standard panel models. Given an input shape, a retrieved target is considered successful if it falls into the same category as the input shape. We compare the proposed method with the state-of-the-art garment shape matching methods [3, 12, 16]. The demonstration of our hypertouch interface and the results of intelligent apparel recommendation by dynamic matching are given in the following.

6.1. Gesture Interaction for Hypertouch Garment Design

The designing process is described as follows:

(1) the designer starts off with a virtual mannequin;

(2) the designer uses the hypertouch interface to carry out manipulations in the hypertouch environment;

(3) the designer draws an outline of the desired clothing over the mannequin;

(4) our system converts the outline into incomplete clothes shapes and searches for similar garment shapes in the database;

(5) garments that best fit the input shapes are returned to the user dynamically along with the drawing process.

Figure 6 gives a demonstration of our hypertouch gesture interaction for interactive apparel shape design. The comments from designers indicate that our interface helps with fast prototyping of apparel shapes and garment design. From Figure 6 we can see that the apparel modeling system provides intelligent computer support while still offering a feel similar to real-life design on a mannequin. Flexible and quick controls of the garment shape allow the design task to be accomplished quickly.

Figure 6: Demonstrations of hypertouch gestures for apparel design. (a) A rotation gesture performed by one hand pressing and holding on the object and the other hand specifying the direction of the movement. (b) A 2.5D zoom-in gesture by waving the object closer to the user. (c) A shape blending gesture by two fingers moving apart. (d) A color control gesture by one hand selecting the color and the other hand adjusting the gradient degree.

6.2. Results for Intelligent Apparel Recommendation

Figure 7 shows the dynamic recommendation results given a front and a skirt shape input. As we can see, the user's intended shape is predicted as the shape drawing is completed. Recommendations of the garment shape are returned dynamically once a user input is detected. In this way, the apparel design process is considerably sped up by our intelligent recommendation.

Figure 7: Dynamic recommendation for the apparel design. The recommendation results are shown on the right side of the design panel, with similarities descending from left to right and top to bottom. (a)–(c) The intelligent designing process of a front garment shape by one user. (d)–(f) The intelligent designing process of a skirt garment shape by another user.

We then compare the performance of the proposed dynamic panel matching algorithm with other matching methods. We calculate the similarity between the input shapes and the garment shapes in the database. The top-K (K = 5,10,15,20) garment shapes are returned as the matched results. All results are averaged over all input trials. The same shape features are used for all comparing algorithms.

The well-known precision and recall rates are used to evaluate the matching performance of the similarity metrics. As the number of returned items increases, precision tends to decrease while recall increases. Recall-precision curves can be plotted from the recall and precision pairs, in which a higher curve indicates better matching performance. The response time of the matching procedure is also measured. We compare our proposed matching method with the state-of-the-art garment shape matching methods, including user behaviour tree (UBT) matching [3, 12] and attribute strings matching [16].
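For reference, precision and recall at a return window of size K can be computed as in the sketch below. It assumes the usual definitions (hits divided by K, and hits divided by the number of relevant shapes), with a returned shape counted as relevant when it falls into the same category as the input; the function name and the sample identifiers are hypothetical.

```python
from typing import Hashable, Sequence, Set, Tuple


def precision_recall_at_k(ranked: Sequence[Hashable],
                          relevant: Set[Hashable],
                          k: int) -> Tuple[float, float]:
    """Precision and recall of the top-k entries of a ranked result list."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for item in ranked[:k] if item in relevant)
    precision = hits / k
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical ranking of shape identifiers and the set of same-category shapes.
ranked = ["s1", "s2", "s3", "s4", "s5"]
relevant = {"s1", "s3", "s9", "s10"}
p1, r1 = precision_recall_at_k(ranked, relevant, 1)  # (1.0, 0.25)
p5, r5 = precision_recall_at_k(ranked, relevant, 5)  # (0.4, 0.5)
```

The two calls show the tradeoff described above: widening the window from K = 1 to K = 5 lowers precision from 1.0 to 0.4 while raising recall from 0.25 to 0.5.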

The matching accuracy with K returned items is given in Figure 8. The proposed matching approach achieves the best performance among all methods. The average matching precision reaches 0.95 with K = 5, which shows that our metric is effective for calculating the similarity between two garment shapes.

Figure 8: Average precision rates of different return window sizes.

The recall-precision curves are shown in Figure 9. As above, the proposed method achieves the highest performance among all methods. This further confirms that our proposed method can reach better performance than the state-of-the-art methods.

Figure 9: The recall-precision curves of different panel matching methods.

We also calculate the average response time of the proposed approach, which is about 50 ms. Moreover, for a novice user, the whole garment design process takes around 2 minutes, including drawing, editing, correcting, and selecting candidate garments. This is sufficiently fast for real-time garment design tasks.

7. Conclusions

In this paper, we propose an intelligent apparel design system that supports dynamic recommendation, the so-called recommendation in motion, to capture user preferences online. Prior domain knowledge is extracted, and a database of user drawing patterns is constructed accordingly. A dynamic partial matching algorithm based on the minimal spanning tree is used to calculate the similarity between the input shape and the candidate shapes in the apparel database. Possible shape solutions are ranked in descending order of similarity and returned to the user proactively as the shape is created. In this way, the efficiency of apparel design is largely improved. Meanwhile, the designer and the computer system communicate in a more intelligent way than with traditional CAD software.

We also present a new concept of hypertouch interaction. Higher degrees of freedom and more information are achieved in the hypertouch environment. Nonrigid sketching and gesture interaction are also supported with hypertouch features. This benefits the touch-based interface towards a more flexible, intuitive, efficient, and easy-to-use user experience.

One direction of our future work is the development of a 3D hypertouch interface. How to robustly track the hands and recognize gestures in 3D still attracts much attention. Using Kinect or Leap Motion instead of a tabletop display is a possible solution. Another direction is to increase the volume of the apparel database to accommodate a larger variety of garment shapes.

Conflict of Interests

The authors declare that there is no conflict of interests regarding the publication of this paper.

Acknowledgments

This work is supported by The Hong Kong Research Grants Council General Research Fund Grants (nos. PolyU 5101/09E, PolyU 5101/08E), The National Science Foundation of China (nos. 61272276, 61305091), The National Twelfth Five-Year Plan Major Science and Technology Project (no. 2012BAC11B00-04-03), The Fundamental Research Funds for the Central Universities (no. 2100219038), and Shanghai Pujiang Program (no. 13PJ1408200). The authors would also like to thank the anonymous reviewers for their valuable comments and suggestions.