Abstract

This paper presents a new method for calibrating a robot visual measurement system. A laser tracker is used to calibrate the robot twist angles. Each axis of the robot is moved to many positions, and the positions measured by the laser tracker are fitted to a plane. The normal vectors of the planes are the directions of the Z axes, and the twist angle errors are calculated according to the definition of the robot kinematic model parameters. The joint angle zero offsets are calibrated using the constraint that the rotation between the world frame and the robot base frame is constant. A planar target with several parallel lines is used to obtain the pose of the camera relative to the target from the lines in the target plane and the vanishing line of the plane. The quantum-behaved particle swarm optimization (QPSO) algorithm is used to calculate the parameters. Experimental results show that the accuracy of the robot visual measurement system is improved by a factor of about 10 after calibration.

1. Introduction

3D surface topography measurement and the aiming and positioning of butt joints are key technologies in the manufacture and assembly of large-scale equipment. With the development of computer vision, visual measurement, as a noncontact measurement method, is used increasingly widely. Besides being noncontact, it offers high measuring precision and high measuring speed. When the tested objects have fixed features to be measured or are mass-produced, a fixed framework structure is used. However, as mechanical parts become structurally more complex and require higher machining precision, measurement requirements become increasingly strict, and a fixed framework structure can no longer meet them. A flexible measurement system that can measure any part of the tested object is therefore needed. A robot visual measurement system combines a robot with a visual measurement system. Robot vision is regarded as the most important sensory ability of a robot [1]. Cameras fixed on the robot arm give the system both the large, flexible global motion of the robot and the advantages of visual measurement.

Industrial robots generally have high repeatability but low absolute accuracy, and this characteristic prevents robot visual measurement systems from meeting the requirements in some situations. Therefore, improving the absolute accuracy of the robot is important for enhancing the measuring accuracy of robot visual measurement systems. Five error sources are known to cause robot inaccuracy: errors in geometric parameters, environment, measurement, calculation, and use. Geometric parameter errors are the dominant source, accounting for 90% of the errors [2], so it is important to identify them. There has been extensive research on robot calibration [3–10], most of it based on the distance constraint. In such methods, although the robot kinematic parameters are calibrated, the results remain inaccurate because the rotation constraint is not fully exploited. In this paper, the rotation constraint is introduced. The pose of the world frame relative to the camera frame is obtained from a planar target with parallel lines, using the properties of vanishing points and vanishing lines.

For a robot visual measurement system, it is important to know the relationship between the robot and the camera; determining it is called hand-eye calibration. Hand-eye calibration methods are mature [11–14], and most of them solve the equation AX = XB: the rotation is calculated first, and then the translation is obtained. Therefore, the procedure for calculating the hand-eye relationship is not described in this paper.

For most conventional optimization methods, for example the least squares algorithm [15], which is based on a quadratic error function, the initial values of the iteration strongly affect the results. As a result, the optimization may converge only to an approximate local extremum in the solution space rather than to the global optimum. Because traditional methods cannot deal with nonlinear systems effectively, methods drawn from biology have been proposed as alternatives: genetic algorithms (GA) [16–19], artificial neural networks [20–22], and the particle swarm optimization algorithm [23, 24] have been used to identify parameters and improve robot accuracy. Hassan et al. [25] showed that the particle swarm optimization algorithm had better optimization capability than other optimization algorithms. Although the PSO algorithm [26] can solve some problems efficiently, it does not guarantee the global optimum [27, 28]. To overcome this shortcoming, the QPSO algorithm was proposed [29]. In this paper, the QPSO algorithm is used to obtain the true joint angle zero offsets.

According to the D-H model, the rotation part of the transformation from the robot end effector to the robot base frame depends only on the robot twist angles and joint angles, so the twist angles and joint angles can be calibrated using the rotation constraint. Errors in the twist angles and joint angles contribute more to robot inaccuracy than errors in the link lengths and link offsets. The proposed method is therefore a simple way to calibrate the robot when the measurement requirement is not too strict.

This paper is organized as follows. The calibration principle is presented in Section 2, experimental results are shown in Section 3, and conclusions are given in Section 4.

2. Calibration Principle

As shown in Figure 1, a camera is fixed on the robot end effector, and the camera frame is denoted by $F_c$. The world frame $F_w$ is attached to the top-left corner of the planar target, which carries black and white lines. The robot base frame $F_r$ and the world frame $F_w$ are relatively stationary. The camera is moved with the robot to different positions, and the transformations between these frames are determined at each position.

Robot visual measurement system calibration model.

2.1. Calibration of Twist Angles

According to the definition of the kinematic parameters of the D-H model, the twist angle is the angle between the Z axes of adjacent links. For a given robot, the twist angles are fixed, so the actual Z axes must be determined first in order to calibrate the twist angles. A laser tracker is used to measure the actual directions of the robot Z axes. The reflector is fixed on the robot end effector, and the first axis of the robot is moved uniformly through its joint angle range. The reflector then traces a spatial circular arc, and the normal vector of the arc plane is the direction of the Z axis of the first link.

Suppose that the positions of the reflector are $(x_{1i}, y_{1i}, z_{1i})$, where $i$ indexes the positions reached as the first axis is moved. The fitted plane can then be written as

$$a_1 x + b_1 y + c_1 z + e_1 = 0, \qquad a_1^2 + b_1^2 + c_1^2 = 1, \tag{1}$$

where $\mathbf{v}_1 = (a_1, b_1, c_1)$ is the unit normal vector of the plane fitted to the positions $(x_{1i}, y_{1i}, z_{1i})$ and $e_1$ is a constant. $\mathbf{v}_1$ is also the direction of the Z axis of the first link.

The same procedure is applied to the remaining links, and the resulting Z-axis directions are denoted $\mathbf{v}_k = (a_k, b_k, c_k)$, $k = 2, 3, 4, 5, 6$.
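The plane fit can be sketched in Python as below. This is a minimal sketch, not the paper's implementation: it assumes the reflector positions of one axis are an ordered list of (x, y, z) tuples in the laser-tracker frame and uses a total least-squares fit, in which the plane normal is the eigenvector of the point covariance matrix with the smallest eigenvalue (computed with a small Jacobi eigen-solver to stay within the standard library). Because a fitted normal has an arbitrary sign, the hypothetical helper `fit_axis_direction` orients it by the right-hand rule of the recorded motion order.

```python
import math


def _jacobi_eigen_sym3(a, sweeps=30):
    """Eigen-decomposition of a symmetric 3x3 matrix by cyclic Jacobi rotations.

    Returns (values, vecs); column k of vecs is the eigenvector of values[k].
    """
    a = [row[:] for row in a]
    v = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]
    for _ in range(sweeps):
        off = a[0][1] ** 2 + a[0][2] ** 2 + a[1][2] ** 2
        diag = a[0][0] ** 2 + a[1][1] ** 2 + a[2][2] ** 2
        if off <= 1e-30 * diag:
            break
        for p in range(2):
            for q in range(p + 1, 3):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle that zeroes the (p, q) element.
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                for k in range(3):  # a <- a J
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(3):  # a <- J^T a
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
                for k in range(3):  # v <- v J accumulates the eigenvectors
                    vkp, vkq = v[k][p], v[k][q]
                    v[k][p], v[k][q] = c * vkp - s * vkq, s * vkp + c * vkq
    return [a[i][i] for i in range(3)], v


def fit_axis_direction(points):
    """Unit normal of the least-squares plane through ordered reflector positions.

    The sign follows the right-hand rule of the motion order, so the normal
    points along the rotation axis for increasing joint angle.
    """
    n = len(points)
    centroid = [sum(p[k] for p in points) / n for k in range(3)]
    d = [[p[k] - centroid[k] for k in range(3)] for p in points]
    cov = [[sum(r[i] * r[j] for r in d) for j in range(3)] for i in range(3)]
    values, vecs = _jacobi_eigen_sym3(cov)
    k = min(range(3), key=lambda i: values[i])  # direction of least spread
    normal = [vecs[0][k], vecs[1][k], vecs[2][k]]
    # Sense of rotation of consecutive positions about the centroid.
    turn = 0.0
    for r0, r1 in zip(d, d[1:]):
        cx = r0[1] * r1[2] - r0[2] * r1[1]
        cy = r0[2] * r1[0] - r0[0] * r1[2]
        cz = r0[0] * r1[1] - r0[1] * r1[0]
        turn += cx * normal[0] + cy * normal[1] + cz * normal[2]
    if turn < 0.0:
        normal = [-x for x in normal]
    length = math.sqrt(sum(x * x for x in normal))
    return tuple(x / length for x in normal)
```

Applied to the pause points of each axis, the function returns one unit direction per axis; reversing the order of the points flips the returned normal.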

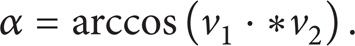

The angle between the Z axes of the first and second links is then

$$\varphi_1 = \arccos(\mathbf{v}_1 \cdot \mathbf{v}_2). \tag{2}$$

The sign is determined by the definition of the twist angle, so the true twist angle can be written as

$$\alpha_1' = \pm\varphi_1. \tag{3}$$

The remaining twist angles are obtained in the same way from (2) and (3), and the twist angle errors are

$$\Delta\alpha_i = \alpha_i' - \alpha_i, \tag{4}$$

where $\alpha_i'$, $\alpha_i$, and $\Delta\alpha_i$ ($i = 1, 2, \ldots, 6$) are the true value, the ideal value, and the error of the twist angles, respectively.
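One way to make the sign rule explicit is to compute the angle with `atan2`, which returns the signed rotation from one Z axis to the next about a chosen common-normal direction. In this minimal sketch, the `x_axis` argument is an assumption: it stands for the D-H common-normal direction, for example taken from the nominal kinematics once the measured directions are expressed in the robot base frame. When `x_axis` is a unit vector perpendicular to both Z axes, the magnitude of the result equals the arc-cosine of their dot product. The function names are hypothetical, and angles are in radians.

```python
import math


def _dot(u, v):
    return u[0] * v[0] + u[1] * v[1] + u[2] * v[2]


def _cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def measured_twist_angle(z_prev, z_next, x_axis):
    """Signed angle (rad) that rotates z_prev onto z_next about x_axis."""
    return math.atan2(_dot(_cross(z_prev, z_next), x_axis), _dot(z_prev, z_next))


def twist_angle_error(z_prev, z_next, x_axis, alpha_nominal):
    """Twist angle error: measured (true) value minus nominal (ideal) value."""
    return measured_twist_angle(z_prev, z_next, x_axis) - alpha_nominal
```

For example, for a nominal twist of −90°, a measured angle of −89.94° gives an error of about +0.06°.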

Note that link 2 and link 3 are coupled by a linkage: when link 2 is moved, link 3 moves with it, but the reverse does not hold. Therefore, when the operation is performed on link 2, the reflector should be fixed on the arm of link 2 rather than on the robot end effector.

2.2. Calibration of Joint Angles

The resolution of the robot axes is about 0.01°, so errors in the recorded joint angle readings can be ignored. The joint angle errors mentioned here therefore refer to errors in the joint angle zero offsets.

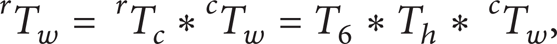

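The robot base frame $F_r$ and the world frame $F_w$ are relatively stationary. Therefore, the following transformation is constant:

$$T_6\, T_h\, T_w^c = T_w^r = \text{const}, \tag{5}$$

where $T_h$ is the hand-eye transformation, which has already been obtained, $T_w^c$ is the pose of the world frame relative to the camera frame, and $T_w^r$ is the pose of the world frame relative to the robot base frame. The matrices $T_6$ and $T_w^c$ change with the robot position, whereas the product in (5) does not.

Taking the rotation part of (5), we have

$$R_6\, R_h\, R_w^c = R_w^r = \text{const}, \tag{6}$$

where $R_6$, $R_h$, $R_w^c$, and $R_w^r$ are the rotation matrices of $T_6$, $T_h$, $T_w^c$, and $T_w^r$, respectively.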

2.2.1. Solving R6

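The robot is a six-degree-of-freedom serial industrial robot. According to the D-H model, the pose of link $i$ relative to link $i-1$ can be expressed as

$$A_i = \begin{bmatrix} c\theta_i & -s\theta_i\, c\alpha_i & s\theta_i\, s\alpha_i & a_i\, c\theta_i \\ s\theta_i & c\theta_i\, c\alpha_i & -c\theta_i\, s\alpha_i & a_i\, s\theta_i \\ 0 & s\alpha_i & c\alpha_i & d_i \\ 0 & 0 & 0 & 1 \end{bmatrix}, \tag{7}$$

where $c\theta$ and $s\theta$ denote the cosine and sine of the angle $\theta$ (and likewise for $\alpha$), and $\theta_i$, $d_i$, $a_i$, and $\alpha_i$ are the joint angle, link offset, link length, and twist angle of link $i$. The kinematic parameters are shown in Table 1.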

IRB1400 robot kinematical parameters.

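The transformation from the robot end effector to the robot base frame can be written as

$$T_6 = A_1 A_2 A_3 A_4 A_5 A_6 = \begin{bmatrix} R_6 & \mathbf{p}_6 \\ \mathbf{0}^T & 1 \end{bmatrix},$$

where $A_i$ is the transformation of link $i$ given by (7), and $R_6$ and $\mathbf{p}_6$ are the rotation and position parts of $T_6$.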

From (7), R6 depends only on the twist angles α and the joint angles θ, because the link lengths and link offsets appear only in the translation column of each link transformation.
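The property that R6 depends only on α and θ can be checked numerically. The following minimal sketch assumes the standard D-H link transform Rz(θ) Tz(d) Tx(a) Rx(α); the helper names are hypothetical and angles are in radians.

```python
import math


def dh_link(theta, d, a, alpha):
    """Standard D-H link transform A_i = Rz(theta) Tz(d) Tx(a) Rx(alpha)."""
    ct, st = math.cos(theta), math.sin(theta)
    ca, sa = math.cos(alpha), math.sin(alpha)
    return [[ct, -st * ca, st * sa, a * ct],
            [st, ct * ca, -ct * sa, a * st],
            [0.0, sa, ca, d],
            [0.0, 0.0, 0.0, 1.0]]


def matmul(A, B):
    """Plain nested-list matrix product."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]


def forward_kinematics(thetas, offsets_d, lengths_a, alphas):
    """T6 = A1 A2 ... A6 for six sets of D-H parameters."""
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    for theta, d, a, alpha in zip(thetas, offsets_d, lengths_a, alphas):
        T = matmul(T, dh_link(theta, d, a, alpha))
    return T


def rotation_part(T):
    """Upper-left 3x3 block, i.e. R6 when T is T6."""
    return [row[:3] for row in T[:3]]
```

Evaluating `rotation_part(forward_kinematics(...))` with different link offsets and lengths but the same joint and twist angles yields the same R6, which is why only α and θ enter the rotation constraint.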

2.2.2. Solving the Rotation of the World Frame Relative to the Camera Frame

The camera is calibrated using the method proposed by Zhang [30].

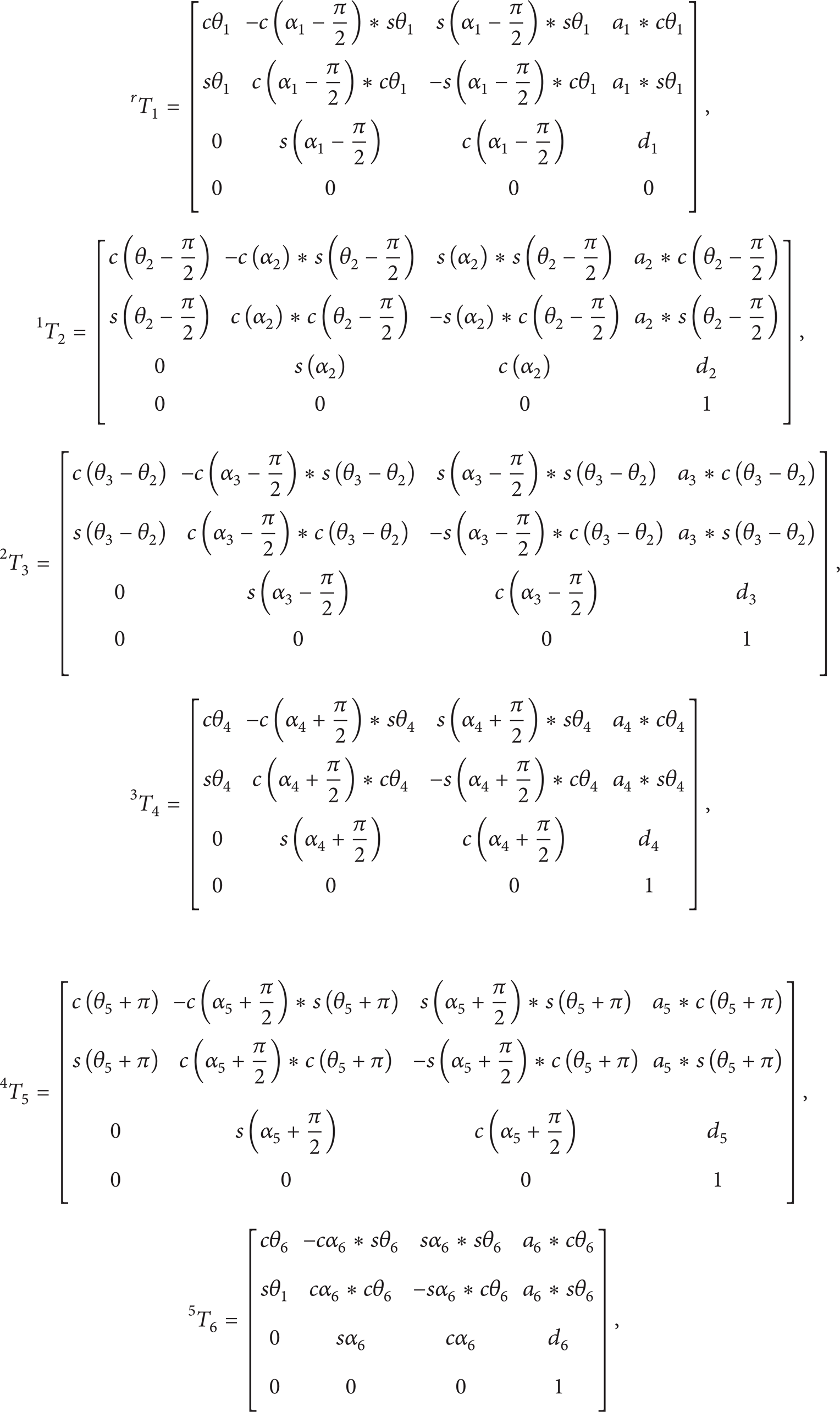

If there are at least three coplanar, equally spaced parallel lines on the scene plane, the vanishing line of the plane can be determined from their images.

The planar target carries a set of coplanar, equally spaced parallel lines. The images of three equally spaced lines are represented in homogeneous coordinates as $\mathbf{l}_1$, $\mathbf{l}_2$, and $\mathbf{l}_3$, with $\mathbf{l}_2$ the middle one.

The vanishing point of the three parallel lines, $\mathbf{v} = (x_v, y_v, 1)^T$, is the common intersection point of their images; for example, $\mathbf{v} \simeq \mathbf{l}_1 \times \mathbf{l}_3$ up to scale.

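According to the relation $\mathbf{v} \simeq K\mathbf{d}$ (equality up to scale) between a vanishing point and the 3D direction $\mathbf{d}$ of the corresponding lines, the direction of the parallel lines measured in the camera frame can be expressed as

$$\mathbf{d} = \frac{K^{-1}\mathbf{v}}{\|K^{-1}\mathbf{v}\|},$$

where $K$ is the matrix of intrinsic calibration parameters of the camera.

The direction $\mathbf{d}$ is the Y axis of the world frame expressed in the camera frame.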
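The vanishing-point step can be sketched as follows, assuming a pinhole intrinsic matrix K = [[fx, skew, cx], [0, fy, cy], [0, 0, 1]] as estimated by Zhang's method, with lens distortion already removed from the line coordinates. The helper names are hypothetical. With noisy data, the intersection of only two lines would normally be replaced by a least-squares intersection of all imaged lines; the sketch also fails with a division by zero if the two imaged lines are parallel.

```python
import math


def cross3(u, v):
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]


def line_through(p, q):
    """Homogeneous image line through pixels p = (x, y) and q = (x, y)."""
    return cross3([p[0], p[1], 1.0], [q[0], q[1], 1.0])


def vanishing_point(l_a, l_b):
    """Intersection of two imaged parallel lines, scaled to (x_v, y_v, 1)."""
    v = cross3(l_a, l_b)
    return [v[0] / v[2], v[1] / v[2], 1.0]


def direction_from_vanishing_point(v, fx, fy, cx, cy, skew=0.0):
    """d = K^-1 v / ||K^-1 v|| for an upper-triangular pinhole K."""
    y = (v[1] - cy) / fy
    x = (v[0] - cx - skew * y) / fx
    r = [x, y, 1.0]
    norm = math.sqrt(sum(c * c for c in r))
    return [c / norm for c in r]
```

The returned direction always has a positive Z component; whether it or its negative is the world Y axis has to be decided from the known layout of the target.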

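Denoting the images of three consecutive, equally spaced lines on the planar target by $\mathbf{l}_1$, $\mathbf{l}_2$, and $\mathbf{l}_3$ (homogeneous coordinates, with $\mathbf{l}_2$ the middle line), the vanishing line of the target plane is

$$\mathbf{l}_\infty = \big[(\mathbf{l}_1 \times \mathbf{l}_3)^T(\mathbf{l}_2 \times \mathbf{l}_3)\big]\,\mathbf{l}_2 + 2\big[(\mathbf{l}_1 \times \mathbf{l}_2)^T(\mathbf{l}_3 \times \mathbf{l}_2)\big]\,\mathbf{l}_3.$$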

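According to the relation $\mathbf{l}_\infty \simeq K^{-T}\mathbf{n}$ between the vanishing line $\mathbf{l}_\infty$ of a plane and the plane normal $\mathbf{n}$, the orientation of the target plane can be represented in the camera frame as

$$\mathbf{n} = \frac{K^T\mathbf{l}_\infty}{\|K^T\mathbf{l}_\infty\|}.$$

The direction $\mathbf{n}$ is the Z axis of the world frame expressed in the camera frame.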
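The vanishing line and the plane normal can be sketched as follows. The sketch assumes the three imaged lines are homogeneous 3-vectors given in order, with the middle line second, and uses the projective identity $\mathbf{l}_\infty \simeq [(\mathbf{l}_1 \times \mathbf{l}_3)^T(\mathbf{l}_2 \times \mathbf{l}_3)]\mathbf{l}_2 + 2[(\mathbf{l}_1 \times \mathbf{l}_2)^T(\mathbf{l}_3 \times \mathbf{l}_2)]\mathbf{l}_3$ for the images of three equally spaced parallel lines; this is a standard construction and may differ in form from the equation used in the paper. The normal then follows from $\mathbf{n} \simeq K^T\mathbf{l}_\infty$ and is defined only up to sign. The helper names are hypothetical.

```python
import math


def cross3(u, v):
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]


def dot3(u, v):
    return u[0] * v[0] + u[1] * v[1] + u[2] * v[2]


def vanishing_line(l1, l2, l3):
    """Vanishing line from images of three equally spaced parallel lines (l2 in the middle)."""
    c2 = dot3(cross3(l1, l3), cross3(l2, l3))
    c3 = 2.0 * dot3(cross3(l1, l2), cross3(l3, l2))
    return [c2 * a + c3 * b for a, b in zip(l2, l3)]


def plane_normal(l_inf, fx, fy, cx, cy, skew=0.0):
    """n = K^T l / ||K^T l|| for K = [[fx, skew, cx], [0, fy, cy], [0, 0, 1]]."""
    a, b, c = l_inf
    n = [fx * a, skew * a + fy * b, cx * a + cy * b + c]
    norm = math.sqrt(sum(t * t for t in n))
    return [t / norm for t in n]
```

The expression is invariant to the arbitrary scale of each line vector, because both terms scale by the same product of the three scale factors.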

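Then, the X axis of the world frame expressed in the camera frame is obtained as

$$\mathbf{x} = \mathbf{d} \times \mathbf{n}.$$

Therefore, the rotation of the world frame relative to the camera frame is

$$R_w^c = \begin{bmatrix} \mathbf{x} & \mathbf{d} & \mathbf{n} \end{bmatrix}.$$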
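Because measured Y and Z directions are never exactly perpendicular, stacking them directly gives a slightly non-orthogonal matrix. The hypothetical helper below is a minimal sketch of one common fix, assumed here rather than taken from the paper: it keeps the plane normal as the Z axis, forms X = Y × Z, and recomputes Y as Z × X so that the result is a proper rotation. Projecting the raw matrix onto the nearest rotation with an SVD is an alternative that spreads the correction over all three axes.

```python
import math


def _normalize(u):
    s = math.sqrt(sum(c * c for c in u))
    return [c / s for c in u]


def _cross(u, v):
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]


def world_rotation_in_camera(d, n):
    """Rotation whose columns are the world X, Y, Z axes in the camera frame.

    d: measured world Y axis (from the vanishing point).
    n: measured world Z axis (from the vanishing line), kept exactly.
    """
    z = _normalize(n)
    x = _normalize(_cross(d, z))  # X = Y x Z
    y = _cross(z, x)              # re-orthogonalized Y; unit because z, x are orthonormal
    return [[x[i], y[i], z[i]] for i in range(3)]
```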

2.2.3. Quantum Behaved Particle Swarm Optimization

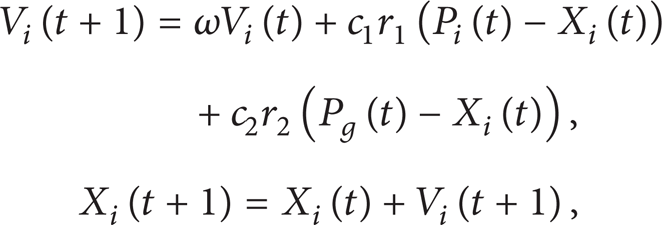

Particle swarm optimization (PSO) was first introduced by Kennedy and Eberhart. In PSO, each particle of the swarm represents a candidate solution. First, the particles are generated randomly in the search space. The fitness of each particle is evaluated with the objective function of the optimization problem, the best position found so far by each particle is kept as its personal best, and the best position of the whole swarm is the global best. The particles then adjust their velocities and positions dynamically according to the personal and global best positions:

$$V_i(t+1) = \omega V_i(t) + c_1 r_1 \big(P_i(t) - X_i(t)\big) + c_2 r_2 \big(P_g(t) - X_i(t)\big),$$

$$X_i(t+1) = X_i(t) + V_i(t+1),$$

where $c_1$ and $c_2$ are the acceleration coefficients, $\omega$ is the inertia weight, $r_1$ and $r_2$ are random numbers in the range $(0, 1)$, $X_i(t)$ and $V_i(t)$ are the position and velocity of the $i$th particle, $P_i(t)$ is the personal best position of the $i$th particle, and $P_g(t)$ is the global best position of the entire population.

Each particle continuously updates itself using these best positions, so the swarm moves toward better and better solutions in the search space.
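The PSO update can be sketched as a small global-best minimizer. This is a minimal sketch: the inertia weight and acceleration coefficients (w = 0.7, c1 = c2 = 1.5) are assumed typical values, not values from the paper, positions are not clamped to the search range, and the function name is hypothetical.

```python
import random


def pso_minimize(f, dim, bounds, n_particles=30, iters=200,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Global-best PSO: V <- wV + c1 r1 (P_i - X) + c2 r2 (P_g - X); X <- X + V."""
    rng = random.Random(seed)
    lo, hi = bounds
    X = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    V = [[0.0] * dim for _ in range(n_particles)]
    P = [x[:] for x in X]                      # personal best positions
    P_val = [f(x) for x in X]
    g = min(range(n_particles), key=P_val.__getitem__)
    for _ in range(iters):
        G = P[g][:]                            # global best P_g(t) for this iteration
        for i in range(n_particles):
            for k in range(dim):
                r1, r2 = rng.random(), rng.random()
                V[i][k] = (w * V[i][k]
                           + c1 * r1 * (P[i][k] - X[i][k])
                           + c2 * r2 * (G[k] - X[i][k]))
                X[i][k] += V[i][k]
            val = f(X[i])
            if val < P_val[i]:                 # update the personal best
                P[i], P_val[i] = X[i][:], val
        g = min(range(n_particles), key=P_val.__getitem__)
    return P[g], P_val[g]
```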

In the PSO algorithm, the particles follow a particular trajectory after several iterations and may then be trapped in local optima, so global convergence cannot be guaranteed, as proved by van den Bergh. The QPSO algorithm was proposed to let the particles escape from local minima. In QPSO, the state of a particle is described not by its velocity but by the probability density function of its position. The particles have no fixed trajectory; constrained by a δ potential well, a particle can appear at any position in the feasible solution space with a certain probability. Therefore, QPSO guarantees global convergence, as proved by Sun.

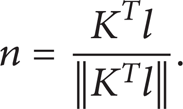

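The particle positions are updated as follows:

$$m_{\text{best},j} = \frac{1}{M}\sum_{i=1}^{M} P_{ij},$$

$$p_{ij} = \phi\, P_{ij} + (1 - \phi)\, G_j,$$

$$x_{ij}(t+1) = p_{ij} \pm \alpha\, \big|m_{\text{best},j} - x_{ij}(t)\big| \ln(1/u),$$

where $j = 1, \ldots, D$ and $i = 1, \ldots, M$, the sign $\pm$ is chosen with equal probability, $\phi \sim U(0, 1)$, $u \sim U(0, 1)$, $D$ is the dimension of the problem space, $M$ is the population size, $x_i = (x_{i1}, x_{i2}, \ldots, x_{iD})$ is the current position of particle $i$, $P_i = (P_{i1}, P_{i2}, \ldots, P_{iD})$ is its personal best position, $G = (G_1, G_2, \ldots, G_D)$ is the global best position, $m_{\text{best}}$ is the mean of the personal best positions, and $\alpha$ is the contraction-expansion coefficient, the only parameter of the QPSO algorithm. The coefficient $\alpha$ is decreased as the iterations proceed, as a function of the current iteration number $t$ and the maximum iteration number MAXITER.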
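A minimal QPSO sketch following these update rules is shown below. The linear decrease of α from 1.0 to 0.5 over the iterations is an assumed, commonly used schedule (the exact schedule is not reproduced in this text), clamping positions to the search range is an added assumption, and the function name is hypothetical.

```python
import math
import random


def qpso_minimize(f, dim, bounds, n_particles=60, max_iter=1000,
                  alpha_max=1.0, alpha_min=0.5, seed=0):
    """Minimize f over the box bounds = (lo, hi) with quantum-behaved PSO."""
    rng = random.Random(seed)
    lo, hi = bounds
    X = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    P = [x[:] for x in X]                       # personal best positions P_i
    P_val = [f(x) for x in X]
    g = min(range(n_particles), key=P_val.__getitem__)
    for t in range(max_iter):
        # Contraction-expansion coefficient: assumed linear decrease alpha_max -> alpha_min.
        alpha = (alpha_max - alpha_min) * (max_iter - t) / max_iter + alpha_min
        mbest = [sum(p[j] for p in P) / n_particles for j in range(dim)]
        G = P[g]                                # global best position
        for i in range(n_particles):
            for j in range(dim):
                phi = rng.random()
                attractor = phi * P[i][j] + (1.0 - phi) * G[j]
                u = 1.0 - rng.random()          # u in (0, 1], so ln(1/u) is finite
                step = alpha * abs(mbest[j] - X[i][j]) * math.log(1.0 / u)
                x_new = attractor + step if rng.random() < 0.5 else attractor - step
                X[i][j] = min(max(x_new, lo), hi)  # clamp to the search range
            val = f(X[i])
            if val < P_val[i]:
                P[i], P_val[i] = X[i][:], val
        g = min(range(n_particles), key=P_val.__getitem__)
    return P[g], P_val[g]
```

For the calibration problem, `f` would be the objective of Section 2.2.4 evaluated on the recorded data with `dim=6`; the settings reported in Section 3.3 correspond to `n_particles=60`, `max_iter=20000`, and `bounds=(-1, 1)`.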

2.2.4. Objective Function

The joint angle zero offsets are computed using the QPSO algorithm.

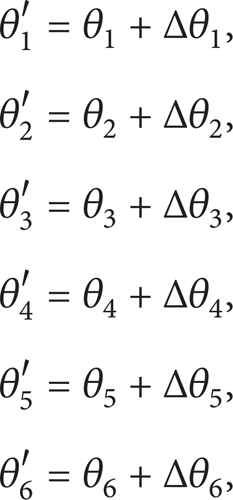

Suppose the true joint angle values are expressed as

$$\theta_i' = \theta_i + \Delta\theta_i, \tag{21}$$

where $\theta_i'$, $\theta_i$, and $\Delta\theta_i$ ($i = 1, 2, \ldots, 6$) are the true value, the ideal value, and the error of the joint angle zero offsets, respectively.

Substituting (4) and (21) into (7) gives the true rotation R6′, which is then substituted into (6).

After the robot has been moved to several positions, the objective function is obtained as

where n is the number of the positions and

3. Experimental Results

3.1. Experiment Setup

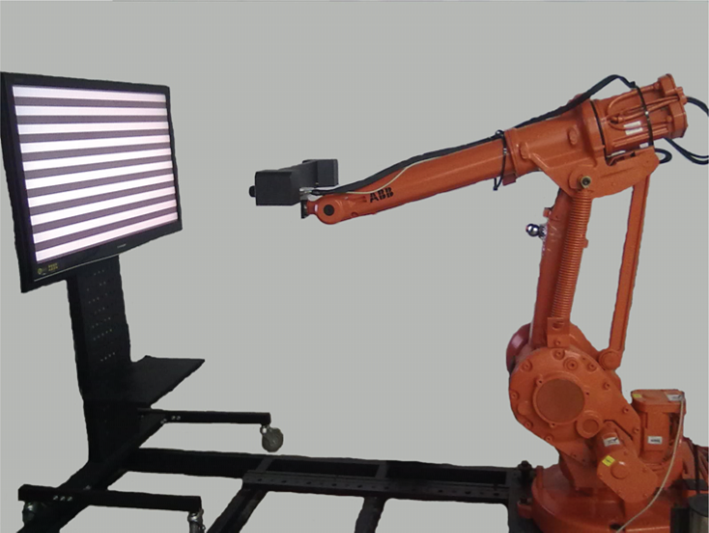

The robot visual measurement system is composed of an industrial robot and a visual measurement system. The visual measurement system consists of a binocular stereo vision system, laser generators, a computer, and an image processing system. The robot is an IRB1400 industrial robot from ABB (a Swiss-Swedish conglomerate). The binocular stereo vision system consists of two AVT F504B industrial cameras with 16 mm lenses from Mage. The image resolution of each camera is 2452 × 2056 pixels, the field of view is about 300 mm × 300 mm, and the working distance is about 600 mm. The laser generators are HK130209307-10 from HUAKE. The laser beams produced by the laser generators are projected onto the tested surface and form light stripes on it. The light stripes follow the contour of the tested surface, which makes the surface simple to measure.

The two cameras are calibrated using Zhang's method [30]. The left camera frame is taken as the camera frame $F_c$, and the right camera serves as an auxiliary camera for measurement. The planar grid target is placed in the robot workspace, and the cameras are moved with the robot to capture images of the planar target from different positions.

In the calibration procedure, a laser tracker and a planar target are used. The laser tracker is a Leica AT901-B with a measuring resolution of 0.01 mm. The planar target carries parallel lines with a resolution of 5 μm.

The workspace of the measuring system is about 1500 mm × 1500 mm × 1000 mm. The system is shown in Figure 2.

The robot visual measurement system.

3.2. Calibration of Twist Angles

As shown in Figure 3, an adapting part is fixed on the robot end effector, and the reflector of the laser tracker is fixed on the other end of the adapting part. Each axis is moved individually through its joint angle range, as described in Section 2.1, and 100 pause points are captured uniformly. Note that, when axis 2 is moved, the reflector is fixed on the arm of axis 2, as shown in Figure 4. The pause points are measured by the laser tracker, planes are fitted to these points, and the normal vectors of the planes give the directions of the Z axes, from which the twist angles are obtained. Only five twist angles can be obtained: the last frame is defined on the robot end effector, and the sixth twist angle is zero and cannot be calibrated by the proposed method. The calibration results of the twist angles are shown in Table 2.

The errors of the twist angles.

Adapting part.

The reflector on the axis 2.

3.3. Solving the Joint Angle Zero Offsets

The camera is moved with the robot to 100 different positions, and at each position at least three parallel lines on the planar target are captured by the left camera. The images are saved and the corresponding joint angles are recorded at the same time. According to Sections 2.2.1 and 2.2.2, R6 and the rotation of the world frame relative to the camera frame are then computed for each position.

Using the objective function in Section 2.2.4, the errors of the joint angle zero offsets are computed with the QPSO algorithm.

The population size is 60, the dimension is 6, the maximum number of iterations is 20000, and the search range of each parameter is (−1, 1). The errors of the joint angle zero offsets are shown in Table 3.

The errors of the joint angle zero offsets.

3.4. Verified Experiments

To verify the validity of the proposed method, a verification experiment is set up. A target with round holes is placed in the robot workspace, as shown in Figure 5.

Verification experiment.

The centers of the holes on the target are measured by the binocular stereo vision system and then expressed in the robot base frame through the transformation matrices $T_6$ and $T_h$, where $T_6$ is computed from the D-H model with the calibrated parameters $\alpha_i'$ and $\theta_i'$. The same hole centers are also measured with the laser tracker.

Five randomly selected hole centers on the left target and four on the right target are recorded, and the distance between every pair of hole centers is calculated. The distances measured by the robot visual measurement system are compared with those measured by the laser tracker; the results are shown in Table 4.
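The comparison relies on distances between pairs of hole centers, which do not depend on the coordinate frame, so the laser-tracker points and the robot-system points need not be expressed in the same frame. A minimal sketch with hypothetical function names, assuming both point lists give the same hole centers in the same order:

```python
import itertools
import math


def pairwise_distances(points):
    """Distance between every pair of points, keyed by index pair (i, j) with i < j."""
    return {(i, j): math.dist(points[i], points[j])
            for i, j in itertools.combinations(range(len(points)), 2)}


def distance_errors(measured, reference):
    """Per-pair distance errors of `measured` against `reference`, plus max |error| and RMS."""
    dm = pairwise_distances(measured)
    dr = pairwise_distances(reference)
    errors = {pair: dm[pair] - dr[pair] for pair in dm}
    max_abs = max(abs(e) for e in errors.values())
    rms = math.sqrt(sum(e * e for e in errors.values()) / len(errors))
    return errors, max_abs, rms
```

With nine hole centers (five on the left target and four on the right), this yields 36 pairwise distances per measuring system.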

Verification experiment results.

Table 4 shows that the measuring accuracy is improved after calibration, by a factor of about 10.

4. Conclusion

This paper presents a two-step calibration method for the robot visual measurement system. First, the twist angles are calibrated using a laser tracker. Then, using the constraint that the world frame and the robot base frame are relatively stationary, the joint angle zero offsets are calibrated. Introducing this rotation constraint improves the accuracy, and the results show that the proposed calibration method improves the measuring accuracy significantly. Several key points should be noted in the calibration procedure.

To improve accuracy, as many pause points as possible should be captured when calibrating the twist angles.

During calibration, the camera should be adjusted so that the planar target lies within its depth of field.

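The image plane should be tilted as much as possible relative to the planar target to reduce the effect of vanishing point position errors when calibrating the rotation parameters; when the two planes are nearly parallel, the vanishing points lie far from the image and are poorly determined.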

The robot should be moved to cover the entire actual measuring space to ensure measuring accuracy.

The calibration method proposed in this paper can be applied broadly, including to many other kinds of robot visual measurement systems. After calibration, no additional measuring equipment is needed during measurement. The proposed method is simple and easy to perform, and it meets the requirements of large-scale measurement. However, if the measurement requirements are stricter, the distance constraint should also be used, and the link lengths and link offsets should be calibrated. The effects of temperature and elasticity are not discussed here and are left for future work.