Abstract

We consider a large-scale wireless network that uses sensors along its edge to estimate the characteristics of interference from neighboring networks or devices. Each sensor makes a noisy measurement of the received signal strength (RSS) from an interferer, compares its measurement to a threshold, and then transmits the resulting bit to a cluster head (CH) over a noisy communication channel. The CH computes the maximum likelihood estimate (MLE) of the distance to the interferer using these noise-corrupted bits. We propose and justify a low-complexity threshold design technique in which the sensors use nonidentical thresholds to generate their bits. This produces a dithering effect that provides better performance than previous techniques that use different non-identical thresholds or the case in which all the sensor motes use an identical non-optimal threshold. Our proposed technique is also shown (a) to be of low complexity compared to previous non-identical threshold approaches and (b) to provide performance that is very close to that obtained when all sensors use the identical, but unknown, optimal threshold. We derive the Cramér-Rao bound (CRB) and also show that the MLE using our dithered thresholds is asymptotically both efficient and consistent. Simulations are used to verify these theoretical results.

1. Introduction

Large-scale deployments of wireless LANs in unlicensed RF bands are often subject to interference from many sources. We have encountered this problem in the e-Stadium wireless testbed [1], which enables football fans in the stadium at Purdue to access multimedia content related to the game [2] via 802.11b and 3G networks. The locations and coverage areas of the 802.11b access points (APs) in the stadium are shown in Figure 1. The two interfering APs also shown in the figure may disrupt eStadium services for fans sitting along the outer edge of the stands or in the tailgating area north of the stadium. The locations and settings of these interfering access points or devices change over time. The eStadium testbed must sense these changes and adapt its channel assignments and power levels to ensure that its users experience satisfactory Quality of Service (QoS).

The eStadium testbed at Purdue's Ross Ade stadium includes (1) twelve 802.11 APs with directional antennas that provide coverage, shaded in orange, of the outdoor seating area (2) ten APs with omni antennas that cover the indoor seating areas, (3) a Vivato network that serves the parking area north of the stadium, and (4) clusters of sensors in the concourse area of the stadium. Interfering APs are generally WiFi networks operating in the vicinity of the stadium.

The eStadium testbed includes clusters of wireless sensors that are distributed along the concourse area of the stadium [3]. They currently gather and process information from this area and make it available to fans and security personnel during games. We propose that these sensors perform the additional task of characterizing the sources of interference with the 802.11b-based portion of the eStadium testbed.

The information gathered about interferers by these sensors is not directly available to the eStadium APs because of their directional antennas or shadowing by the stadium's structure. A Smart WiFi system [4] would thus not be able to sense and adjust to the presence of these external interferers. This proposed use of the sensors, combined with algorithms to alter the 802.11b channel assignments and power settings, is thus a cognitive networking approach to enabling the coexistence of many systems in unlicensed bands.

The contributions of this paper include the following.

Analysis and improvement of MLE approaches in which sensors conserve energy by minimizing the amount of data they transmit. Each sensor thresholds its noisy measurement of the interferer's signal strength, adds the resulting bit to a packet carrying other data, and transmits the packet to the CH via a noisy channel. We consider and justify the case in which each sensor uses a different threshold and compare it with cases in which every sensor uses the same threshold. Proofs of the asymptotic efficiency and consistency of this new MLE approach and derivation of the Cramér-Rao bound (CRB). Given that the thresholds in our case are non-identical, the standard proofs of asymptotic consistency and efficiency do not hold. Verification that the MLE based on the use of non-identical thresholds by the sensors performs: (i) as well as one based on the use of identical optimal thresholds, even when the number of sensors is small, (ii) significantly better than the one based on the use of identical non-optimal thresholds, and (iii) is of lower complexity and has a performance that is better than or as good as existing non-identical threshold techniques. Because the identical optimal threshold case requires prior knowledge of the true value of the unknown parameter, and existing non-identical techniques involve the solution of a complex optimization problem, our non-identical threshold approach is much more practical and of lower complexity in terms of the design of the thresholds.

In Section 2, we review the most relevant prior work in this area. Sections 3 and 4 describe the system model and the formulation of the sensor fusion problem. The performance of the algorithm is evaluated and compared with existing techniques in Section 5, and conclusions are provided in Section 7.

2. Related Work

In the context of transmitter location estimation for wireless networks, the authors in [5–7] propose expectation maximization (EM) algorithms to locate multiple transmitters using received power levels at arbitrarily placed receivers. The authors in [8] consider a cognitive wireless network in which secondary users measure signal strengths from primary users to estimate the distance to the primary users. They propose estimation algorithms under different channel fading conditions. In [9], the authors study an 802.11 cognitive mesh network (COMNET) in which mesh clients (MC) calculate their distances from the primary stations with triangulation-based localization techniques that use RSS measurements. These localization techniques assume that the MCs are synchronized and that they are located on the edge of the secondary 802.11 network; otherwise, they may not be able to detect the interference from the primary stations. In the above papers, the authors do not consider the use of thresholded measurements or the transmission of measurements over noisy channels.

In the context of distributed estimation in wireless sensor networks, the authors in [10, 11] consider 1-bit quantization using identical and non-identical thresholds and obtain the maximum-likelihood estimate of an unknown parameter. They account for measurement noise that is Gaussian or has an unknown distribution but do not consider other sources of error, such as noise in the communication channel. A similar situation is examined in [12], where multiple-bit quantized information is used to locate a target in a sensor field under error-free channel conditions. A nonparametric approach to density estimation using a sensor network was introduced in [13]. The authors considered the case where 1-bit quantized data is transmitted to the CH using random thresholds from a pmf. As with the previous papers, however, processing by sensors and the wireless channel between the sensors and the CH are considered to be error free, which is typically not the case in realistic situations. In [14], the authors study channel aware target localization using 1-bit quantized data. They analyze the effects on the root mean squared error (RMSE) of the MLE due to communication channel impairments. They consider the BSC, Rayleigh fading with coherent reception and non-coherent reception. However, in their work, they do not address the design of the 1-bit quantization algorithm for transmission over noisy communication channels.

In [15, 16], the authors consider a 1-bit quantization framework for noisy Gaussian communication links. However, it is assumed that either all sensors use the same threshold or use one of two thresholds. More recently, in [17], the authors have proposed an MLE-based distributed estimation algorithm using 1-bit quantized data that is transmitted over a BSC. The context in which the estimation algorithm is analyzed is for secure data transmission in wireless sensor networks, but it is assumed that all the sensors use identical thresholds.

Several other papers, including [18–21], also study the distributed estimation problem, but their approach is based on the best linear unbiased estimate (BLUE) at the CH instead of the MLE. This approach is typically not appropriate or optimal when the received data at the CH is a nonlinear function of the unknown parameter.

Our approach is unique because it (a) exploits and justifies the use of different thresholds by the sensors when quantizing their noisy measurements and (b) accounts for noisy measurements by the sensors and error-prone processing and communications between the sensors and the CH. Our analysis of the MLE in this new approach will show that uniformly-spaced thresholds produces a dithering effect that leads to near-optimal performance when the only information available about the parameter being estimated is its support. This is very important because previous approaches based on the use of an identical threshold by all sensors work well only when the chosen threshold is either very near the unknown true value, or the number of sensors is very large. Furthermore, our non-identical threshold design algorithm is significantly less complex and performs as good as or better than existing non-identical threshold techniques, such as the one in [10].

3. System Model and Problem Motivation

3.1. System Characteristics and Considerations

The following characteristics of the wireless sensor network and sensor fusion algorithm are assumed in this paper. They are motivated by the eStadium project but are relevant to many other systems operating in unlicensed bands.

(a) Architecture

The system consists of a network of wireless APs and a single-hop cluster of N spatially distributed wireless sensors deployed along the edge of the wireless network. Each sensor measures the RSS from an interfering AP, compares it to its threshold, and relays the resulting bit to the CH. The CH fuses the bits it receives to produce an estimate of the signal strength and distance to the interfering AP.

(b) Measurement Errors

Due to large-scale fading and shadowing effects, the RSS

(c) Energy Limits

Energy efficiency is critical in the design of battery-powered sensor networks, so sensor k thresholds the RSS value it observes to produce only one bit, denoted by

(d) Communication Channel

The wireless channel between each sensor, and the CH is modeled as a binary symmetric Channel (BSC) with a crossover probability of ϵ [25]. We assume that the value of ϵ is perfectly estimated at the CH; this can be achieved by the use of pilot training data transmitted by the sensors to the CH during the initialization process. We further assume that the communication channel is static for a period of T seconds; hence, ϵ does not change during the estimation process. For completeness, in Section 5, we also analyze the performance degradation of our algorithms due to a mismatch between the estimated value of ϵ and the true value of ϵ. The choice of a symmetric channel is not required for our analysis but does simplify comparisons. Collisions of bits/packets in the communication channel are assumed to result in their loss and subsequent retransmission. The BSC model thus applies to transmitted bits/packets that are not involved in collisions. Alternatively, one could assume that the sensors' transmissions are scheduled via a collision-free protocol, such as the one in [26], or that they use the TDMA-based frame that is available under the 802.15.4 (ZigBee) protocol [24].

(e) Reliability and Trustworthiness

Noisy communications may not be the only source of errors affecting the sensors' decisions before they reach the CH. The sensors may make processing or storage errors. They may be compromised and intentionally report incorrect results with some probability in order to compromise performance without being detected. These additional error sources can be aggregated with the communication errors, resulting in a larger crossover probability. These chapter's results can thus be used to characterize the sensitivity of fusion algorithms to these error sources.

(f) Industrial, Scientific, and Medical (ISM) Band

The true mean value of the RSS distribution (see (b), above), denoted by

Plot shows the relationship between μ and R with

(g) Periodic Updates

We assume that the estimates μ and R are updated every T seconds to capture any changes in the set of interferers. T is assumed to be greater than the time required for all sensors to collect a measurement and transmit their bits to the CH. In this paper, we focus on the performance of estimates for a single time period of length T. The better the estimate produced in this period, the faster an iterative algorithm based on it will converge. An iterative algorithm that builds on the results is presented in [28].

In summary, sensor k transmits a single bit of information

System block diagram for a cluster of wireless sensors. The sensor' RSS measurements are quantized using the dithered quantization technique. The resulting 1-bit values are transmitted over a BSC to the CH, which calculates the MLE of R.

3.2. Optimal Identical Threshold Design

The optimal threshold can be defined to be the threshold that minimizes the Cramér-Rao bound (CRB) for any unbiased estimator of μ. This optimization criteria is similar to that proposed in [10, 11] for the error-free communication scenario. In this case, all the sensor motes use the same optimal threshold derived by solving the optimization problem. The thresholds can be expressed as

An interesting observation is that the optimal identical threshold choice does not depend on

3.3. Threshold Design for Dithered Quantization

Because use of the optimal identical threshold for the 1-bit quantization step requires prior knowledge of the unknown parameter, we propose a framework in which each sensor uses a different threshold. This ensures that at least a few thresholds will be close to the true value of the unknown parameter. Furthermore, using non-identical thresholds at the sensors for the 1-bit quantization produces a dithering effect that reduces the bias in the estimator (as shown in Section 5). We thus refer to this technique as dithered quantization. Since it is well known that the uniform distribution has maximum entropy among all continuous distributions with compact support [25], we assume that μ is uniformly distributed over the interval

3.4. Binning-Based Nonidentical Threshold Design

Another approach to the use of non-identical threshold was proposed in [10]. They consider designing the threshold vector

and

Using numerical techniques to approximate the numerator of 𝒦,

For the rest of the paper, we refer to this technique as the binning-based non-identical threshold approach. In Section 5, we compare the performance of all three threshold design schemes.

3.5. Effect of Network Topology on Threshold Design

Wireless sensor networks change with time because sensors can fail as their batteries die, or new sensors may be added to an existing network. Our threshold design is robust to these changes, since when the number of sensors is large, the thresholds are densely distributed over the interval, the loss of a few sensors will not result in a significant loss of information. When the number of sensors is small, the thresholds are coarsely spread over the interval. A few failures might thus result in a significant decrease in the number of bits received by the CH. The CH can then reassign thresholds by communicating with the sensors over the wireless channel. When a new sensor joins the network, it can request a threshold from the CH, choose one based on its unique MAC address, or the CH can reassign all sensors' thresholds according to the algorithm in Section 3.3. On the other hand, the binning-based non-identical threshold technique requires the solution to the optimization problem (8) each time the thresholds need to be assigned. Hence, compared to our proposed approach, the binning-based approach is of much higher computational complexity and therefore cannot easily adapt to changes in the network topology.

4. Sensor Fusion Algorithm

We use maximum-likelihood estimation (MLE) techniques to estimate μ and then use the invariance property of MLE's [29] obtain an estimate for R. For sensor mote k, we denote

Let

Thus, the MLE

5. Performance Analysis of Dithered Quantization Approach

This section contains (i) proofs of the asymptotic consistency and efficiency of the MLE for μ and R using the proposed dithered quantization approach (ii) derivation of the Cramér-Rao bound (CRB) for the variance of any unbiased estimator for μ and R, and (iii) Monte Carlo simulations that validate the asymptotic results and compare the performance of the dithered quantization technique with the identical threshold-based quantization approach. Since the constrained ML optimization in (15) is analytically intractable, there is no closed-form expression for the estimates or the distribution of the estimates. Hence, to study the bias of the MLE's, we prove that the estimators are asymptotically strongly consistent and hence asymptotically unbiased. Furthermore, although the

5.1. Asymptotic Properties

For the following results, we define

Proposition 1.

The MLE

Proof.

See Appendix A.

Proposition 2.

Proof.

See Appendix B.

The following two corollaries are immediate consequences of the invariance property of functions of MLE's and the one-to-one mapping between μ and R.

Corollary 1.

The MLE

Corollary 2.

5.2. Cramér-Rao Bound

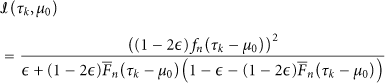

In this subsection, we derive the CRB for any unbiased estimator of μ denoted by

Theorem 1.

The CRB for any unbiased estimator

Proof.

See Appendix C.

Using the CRB for

Lemma 1.

If

Proof.

A proof of this lemma can be found in [29, Chapter 3, Appendix

Corollary 3.

The CRB for any unbiased estimator for

Proof.

Using Lemma 1 and the relationship between R and μ given by

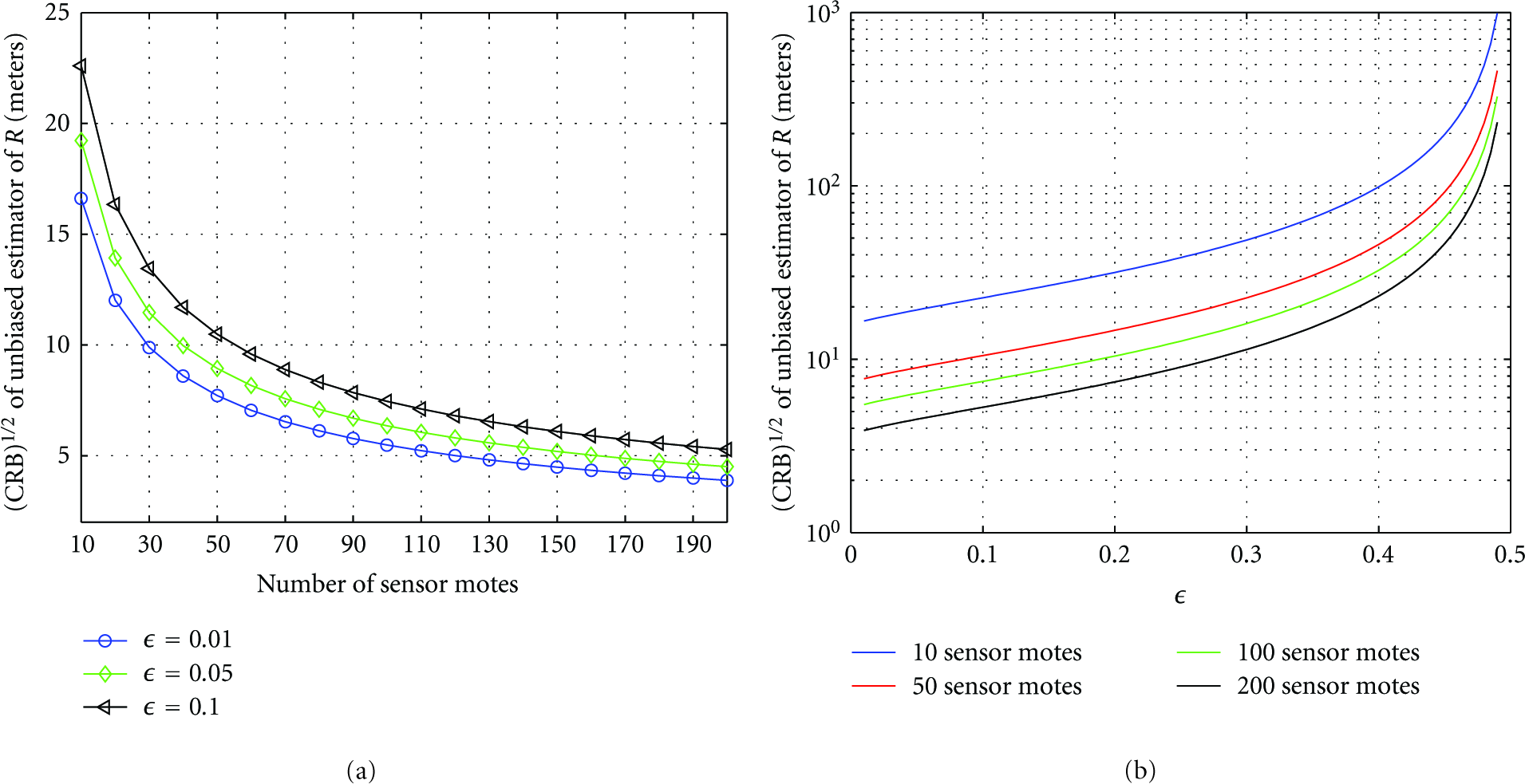

Figures 4(a) and 4(b) show the behavior of the square root of the CRB of

5.3. Numerical Simulation Results

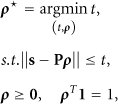

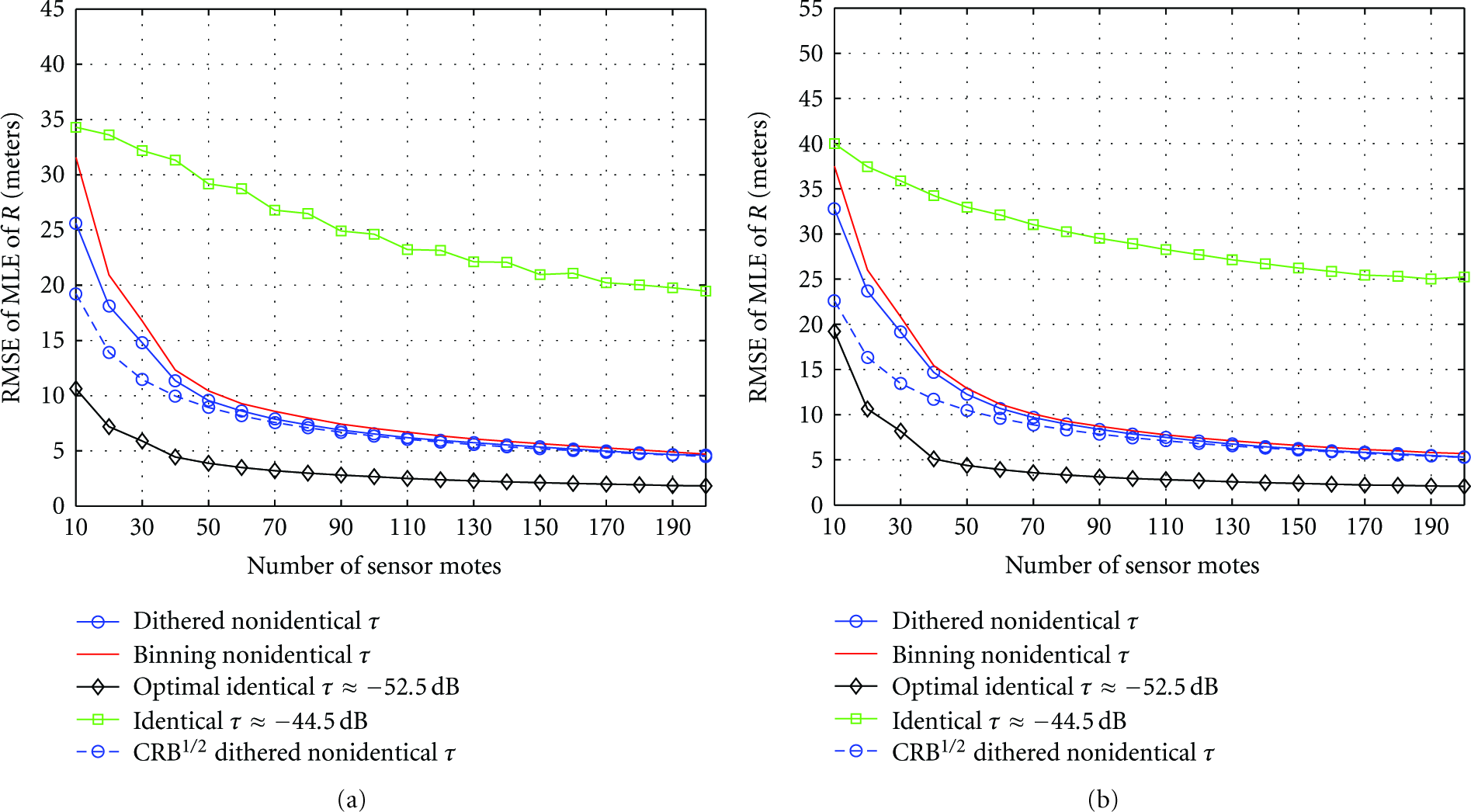

To evaluate the performance of the dithered quantization scheme and compare it with the identical and the binning-based non-identical threshold approaches, we now conduct numerical Monte Carlo simulations. In these simulations (i) the number of sensors is varied from 10 to 200 and (ii) both a low ϵ of 0.05 and a high ϵ of 0.1 are considered. The value of

The results obtained were averaged over 2000 realizations of the measurement noise process. It can easily be shown (see Appendix D) that the log-likelihood function is not a concave function of μ for both non-identical threshold-based approaches. Hence, we implemented an 1-dimensional iterated grid search algorithm [31] to locate an approximate maximal value of the log-likelihood function denoted by ℒ. We employed an equidistance grid of size n points given as:

To characterize the computational complexity of the optimization algorithm, we note that the local optimization using SQP has quadratic convergence rate. The computational complexity of the iterative grid search algorithm can be expressed as

The non-identical thresholds for the dithered quantization technique are generated using the technique described in Section 3, and, for the binning-based technique the SOCP 7 is solved in Matlab using cvx software [32]. As mentioned in [10], the threshold spacing for the binning technique is set to

In Figures 5(a) and 5(b), we plot the root mean squared error (RMSE) of

RMSE of

Figures 6(a) and 6(b) show the bias of

Bias of

In Figures 7(a) and 7(b), we compare the performance loss incurred using the different thresholding techniques. We define the

Figure 8 shows the robustness of our scheme due to a mismatch in the true value of ϵ and the value of ϵ used by the CH for the ML estimation. We notice that even if there is a mismatch, the performance degradation is not significant. The performance of having a perfectly estimated value of ϵ can be replicated using more sensor motes under the condition of a mismatch in ϵ. Furthermore, the gap between the performance between the perfectly estimated ϵ and the mismatched ϵ decreases as the number of sensor motes increases.

shows the RMSE of

In a realistic situation in which the mean of the RSS distribution at the sensor nodes is unknown priori, there is no way to choose the optimal τ for the identical threshold case. It will thus be difficult to achieve good/reliable estimates using non-optimal identical thresholds. In this type of practical scenario, if both approaches (non-optimal identical and non-identical thresholds) use the same number of sensors, and each sensor samples the RSS once, then using the non-identical threshold approach would produce a much more accurate estimate.

Although both non-identical thresholding approaches require fewer sensors than the non-optimal identical threshold technique to achieve near-optimal performance, we observe that compared to the binning approach our thresholding scheme is of low complexity, as it does not involve solving any complex optimization problem to design the thresholds. Furthermore, the dithered quantization technique performs better in terms of having a lower RMSE, variance, and bias compared with the binning and non-optimal identical thresholding techniques, particularly when the number of sensors is small, which is the typical case in a practical scenario.

In summary, the dithered quantization technique has significant advantages over other techniques in terms of either the number of sensors required to achieve a given accuracy or the accuracy of estimates achievable with a fixed number of sensors.

6. Drawbacks of the Identical Threshold Approach

In this section, we study the disadvantages of using the identical threshold approach and the effect on

In (a) the CRB and the Chernoff bound for

7. Conclusion

In this paper, we proposed and analyzed a sensor fusion algorithm for interference characterization in wireless networks. To minimize energy usage, each wireless sensor in the network transmits only 1-bit of quantized, noisy RSS information to the FC over a BSC.

Unlike previous approaches that used identical thresholds for all sensors or use non-identical thresholds based on solving a complex optimization problem, we propose a simple and low complexity threshold design technique of uniformly distributing the thresholds over the range of possible values of the mean of the RSS distribution. This approach performs (i) as well as the optimal identical threshold approach and (ii) significantly better than the identical non-optimal threshold design approaches and has a performance that is more accurate or as good as previously proposed non-identical threshold techniques. The optimal identical threshold case is highly unrealistic because the optimal threshold cannot be known in advance; our non-identical threshold technique is much more practical, reliable, and of low complexity design compared to previously proposed techniques based on either using non-identical or identical non-optimal thresholds.