Abstract

Many operations are characterized by a trade‐off between speed and quality. This calls for an exploration of effective strategies to balance the two metrics. This study investigates the separate and joint effects of quantity and quality feedback on both performance metrics in order picking. We collected data from two real‐effort experiments with a total of 212 participants, who conducted an order picking task in an experimental warehouse. The results of the main experiment show that giving only quantity feedback improves productivity without compromising quality. No significant effects of quality feedback were identified. Furthermore, combining quantity and quality feedback creates neither a synergistic nor a weakening effect on either performance dimension. The second experiment was used as a robustness check in a different setting, and its results show that quantity feedback not only positively impacts productivity, but can also improve quality. We further decompose the aggregate productivity improvement into the separate impacts on pickers who perceive different relative positions. We find that the top‐ranked and middle‐ranked workers in their group accelerate less relative to themselves than the bottom‐ranked workers do, and the acceleration gap between the top‐ranked and the bottom‐ranked pickers is smaller when quantity feedback is present. Our findings show that managers can achieve both fast and faultless performance by carefully designing the performance feedback given to their employees.

Introduction

The boom of e‐commerce requires companies and warehouses to deliver products to increasingly demanding customers in a timely and accurate manner. This forces warehouses to operate at a high level of efficiency and productivity (Grosse et al. 2017). Order picking, the retrieval of products from their storage locations in the warehouse to satisfy orders of specific customers, is an essential activity in warehouse operations. It has a strong influence on the responsiveness of a warehouse and thereby on the smooth operation of supply‐chain processes (Chen et al. 2010). It is considered the most labor‐intensive and time‐consuming process in a warehouse (Grosse et al. 2017), and constitutes a substantial part of its operating costs (Tompkins et al. 2010). In addition, order picking significantly influences the service quality provided to customers in terms of picking errors (e.g., damages, mispicks, and shorts) (Battini et al. 2015, Berger and Ludwig 2007, Van Gils et al. 2018). Picking errors are costly because they need to be corrected either immediately, which can cause delivery delays and a loss of customers (Bateman and Ludwig 2004, Grosse and Glock 2013), or later as a refund or extra compensation to the customers, which leads to financial loss for the warehouse (Bateman and Ludwig 2004, Berger and Ludwig 2007). Order picking is therefore perceived as the highest‐priority activity in warehouses in terms of performance improvement (De Koster et al. 2007, Tompkins et al. 2010). However, studies on order picking mostly focus on a single performance dimension (Chen et al. 2010). Ignoring either dimension leads to an overestimation of the real performance if a trade‐off exists, or an underestimation of the performance if a joint gain can be achieved. This motivates us to focus on productivity and quality performance simultaneously. We are particularly interested in ways to better balance the two performance dimensions.

Organizations have implemented various strategies to motivate workers and enhance performance. Providing relative performance feedback is one of the most popular and promising levers, especially in organizations where workers retain discretion over how to perform tasks (Song et al. 2018). According to McGregor (2006), more than one‐third of US corporations use various forms of relative performance feedback in their employee evaluation and management systems. However, a recent Gallup survey revealed that only 25% of employees “strongly agree” that they receive meaningful feedback that helps or motivates them to do better work (Ben Wigert 2017). Such a low rate reflects the inefficiency of most feedback strategies in use, as well as the necessity of relevant research in this field. Another factor that should not be neglected is that giving immediate feedback is costly for the organizations because of the time, resource, and technology investment (Eriksson et al. 2009). For these reasons, it is vital for organizations to justify the effectiveness of their feedback strategies.

Previous feedback studies focus primarily on a specific metric of performance (e.g., productivity or quality) and use feedback strategies accordingly. For example, productivity‐specific feedback (e.g., quantity or quantity‐based ranking) is mainly aimed at improving productivity performance (e.g., Eriksson et al. 2009, Jordi Blanes and Nossol 2011, Jung et al. 2010, Schultz et al. 2003, Song et al. 2018). Similarly, quality‐specific feedback (e.g., errors) is mainly provided for quality control (e.g., Berger and Ludwig 2007, Berglund and Ludwig 2009, Park et al. 2019). The results suggest that “the clearer the feedback information is, the greater the effect” (Schultz et al. 2003, p. 90). However, researchers are concerned that feedback could improve some performance indicators at the expense of others (DeNisi and Kluger 2000). Given the importance of both productivity and quality performance in many operational contexts, we investigate both feedback strategies, hereinafter referred to as quantity feedback and quality feedback, and examine their separate and combined consequences on the two performance dimensions simultaneously.

In addition, working in groups is common in many workplaces, such as banks, restaurants, assembly lines, and warehouses. In these environments, workers can interact with each other, and adjust work speed for various reasons such as congestion, social pressure, workload, and deadlines (for a review of how social pressure impacts worker behavior and productivity, see Allon and Kremer 2018). It is important for managers to monitor group dynamics and to take promotive or preventive measures accordingly. A well‐acknowledged group phenomenon is the “regression‐to‐the‐mean effect” (Doerr et al. 1996, 2004, Schultz et al. 1998, 1999, 2010), in which the slower workers in a group speed up and the faster workers slow down. In other words, people perceiving different performance positions in a group exert different effort on the follow‐up tasks. This conclusion is drawn mainly from systems with serial and interdependent tasks. In such systems, starvation or blocking occurs and leads to low system efficiency. To reduce the pressure of being the cause of the starvation or blocking, the slowest workers speed up and the fastest workers slow down (Schultz et al. 1998). However, the situation is quite different in parallel systems where tasks are independent. The issue of system starvation or blocking becomes less prominent, and productivity improvement depends on the sum (or average) of the effort of all workers instead of on the slowest worker. Schultz et al. (2010) investigated the group dynamics in a parallel task and found widely varying individual reactions, but they did not mention how the group workers were incentivized. Whether proper incentives are present makes a big difference in motivating group workers (Hannan et al. 2008, Siemsen et al. 2007). For example, if a group incentive or fixed payment were used, poor performers would reduce effort and free‐ride on others' effort, and top performers would also be demotivated when they found that their peers were free‐riding on them (Kerr 1983). When motivations are different, it becomes unclear whether the “regression‐to‐the‐mean” conclusion still holds. We build on these papers by exploring the group dynamics in parallel tasks where workers are paid for performance, and by revealing the influence (if any) of feedback strategies on them. We specifically address the following research questions by studying order picker behavior and performance (i.e., productivity and quality) with different feedback strategies (i.e., no feedback, quantity feedback, quality feedback, combined quantity and quality feedback): (1) To what extent do quantity feedback and quality feedback (separately and jointly) influence order picking performance, in terms of both productivity and quality? (2) How does the perceived relative position of pickers impact their effort in parallel order picking?

We examine these questions by conducting a real‐effort experiment in a laboratory warehouse with 126 participants, and a robustness study with 86 participants. The remainder of this study is organized as follows. In the next section, we introduce the activities in manual order picking, examine the theories on relative performance feedback and perceived relative position, and formulate our hypotheses. Section 3 describes the experiment design and measures for this study. The results, analyses, and a robustness study are presented in Section 4. Section 5 offers the theoretical and practical implications, limitations, and suggestions for future research, followed by a conclusion in Section 6.

Literature Review and Hypotheses Development

Order Picking

Order picking involves the process of clustering and scheduling customer orders, assigning stock to order lines, releasing orders to the floor, picking articles from storage locations, and disposing of the picked articles (De Koster et al. 2007). In spite of the emergence of automated or semi‐automated warehousing systems where robots and information and communication technology are used, a large share of all orders will still be picked manually in the coming decade, and humans will continue to play an essential role in the order picking process in most warehouses (Azadeh et al. 2019). One reason could be management concerns about the risk of interrupting warehouse operations during implementation periods or in the event of system failure, and about the loss of flexibility in the longer run (Hackman et al. 2001). Another reason is that the main advantage of automation (e.g., savings in labor costs) may be offset by high automation investments, which can only be earned back in the medium and longer term (Azadeh et al. 2019, Hackman et al. 2001). Moreover, humans are more flexible than machines and can react to unexpected changes, especially when a change requires logical reasoning, something that machines cannot yet do (Grosse et al. 2015). For these reasons, research on how to improve the competitiveness of manual order picking systems is needed. In this study, we specifically concentrate on such manual order picking systems and pay special attention to the human operators.

A variety of ways have been proposed to improve manual order picking performance. Many deal with the adoption of picking devices (for a comparison, see Battini et al. 2015) and optimization problems in system design (for a review, see Van Gils et al. 2018). The literature on optimization problems covers a wide range of areas such as storage assignment (Le‐Duc and De Koster 2005, Yu et al. 2015), order batching (Gademann and Van de Velde 2005, Matusiak et al. 2014), and routing problems (Roodbergen and De Koster 2001). However, optimal solutions or work schedules may not be the best choice in practice. One reason is that the proposed solutions and schedules are sometimes complex and difficult for order pickers to understand (Gademann and Van de Velde 2005). Another reason is that the established decision support models either completely ignore human factors (e.g., learning effects) or involve simplified representations of human behavior in their assumptions (Boudreau et al. 2003, Gino and Pisano 2008, Grosse et al. 2017). For instance, assumptions that workers are independent, emotionless, deterministic, and effectively identical to one another are commonly used, but are not true in practice (Boudreau et al. 2003). In fact, order pickers do not work alone and can interact (i.e., learn, compete, cooperate, or share knowledge) with other pickers in the same group or shift. They learn by doing, that is, they remember and over time acquire knowledge about the warehouse layout, the shortest pick route, the location of items on shelves, and the retrieval and verification procedure (Grosse and Glock 2013).

Accordingly, researchers have recently been paying more attention to behavioral operations, where the effects of system design on the human‐in‐the‐system and the human influences on system performance are simultaneously considered. Research has shown that significant differences in worker skills, speed, ability, and variability (heterogeneity) exist, even for simple manual tasks (Boudreau et al. 2003, Doerr and Arreola‐Risa 2000). Matusiak et al. (2017) found that significant improvement in total order processing time can be achieved by considering these skill differences among pickers in the batching and routing methods. Research has also validated the effect of learning and experience on reducing order picking time, especially in search activities in a chaotic storage context (Batt and Gallino 2019). Order fulfillment productivity improves greatly when task details are properly matched with the workers (i.e., their learning and experience). In a controlled field experiment, De Vries et al. (2016a) studied how different incentive systems (competition‐based vs. cooperation‐based) and regulatory foci (prevention‐focus vs. promotion‐focus) influence order picking productivity in different picking systems, and found that productivity can be considerably improved by aligning order picking methods and incentive systems for the right pickers. Jordi Blanes and Nossol (2011) found that giving workers feedback on their relative performance resulted in a large and long‐lasting increase in productivity performance in a warehouse. We refer to Grosse et al. (2017) for more order picking works considering human factors.

Several studies also consider the quality performance of order picking. By implementing feedback interventions such as voice‐assisted technology (Berger and Ludwig 2007) and frequent and specific feedback (Park et al. 2019), the error rate of order picking can be reduced substantially. This effect was found to be particularly large for pickers who made the most errors (Berger and Ludwig 2007). De Vries et al. (2016b) investigated various order picking tools (e.g., voice, handheld, RF‐terminal, and paper technologies) and compared the resulting productivity and quality performance simultaneously. They found that increased productivity and quality performance can be achieved by assigning the right pickers to work with a particular picking tool or method. These findings jointly highlight the importance of human factors and the necessity of incorporating them in traditional order picking planning models. Detailed procedures for incorporating them are described by Grosse et al. (2015, 2017). We extend this stream by exploring the effect of different feedback policies, rather than picking tools, on human behavior and the resulting influence on both productivity and quality performance.

Relative Performance Feedback

Workers have an inherent need to know how well they are doing when performing a task (DeNisi and Kluger 2000, Jung et al. 2010). Relative performance feedback fulfills this need by providing information to workers about their performance on specific metrics compared to a standard or to that of others (Jordi Blanes and Nossol 2011). This intervention is assumed to improve performance in multiple ways. It facilitates a social comparison process, which is useful to improve one's performance through direct comparison with peers (Monteil and Huguet 1999). Falk and Ichino (2006) and Mas and Moretti (2009) reported that just allowing people to observe each other's performance has a positive influence on their performance even in settings with flat wage schemes. This is commonly known as the “positive peer effect” (Kandel and Lazear 1992). Relative performance feedback is also an important source of task motivation and its presence produces increased satisfaction and motivation (Hackman and Oldham 1976, Jung et al. 2010). Evidence from decision‐making models shows that individuals learn from the outcomes of their decisions or behavior (DeNisi and Kluger 2000), and enhancements to feedback facilitate even better learning‐by‐doing behavior and lead to optimal choices (Bolton and Katok 2008). Furthermore, Williams et al. (1981) reported that social loafing, the tendency for people to exert less effort to achieve a goal when they are in a group, is more pronounced when a worker's individual effort is not visible and diminishes when feedback allows him to identify individual outcomes. Performance feedback combats social loafing by creating social recognition or approval (i.e., positive reinforcement) for front runners and unpleasantness or uneasiness (i.e., negative reinforcement) for laggards (Jung et al. 2010).

However, performance feedback is not always as effective as is typically assumed (DeNisi and Kluger 2000). Poorly implemented feedback strategies can actually hurt rather than improve performance, and some feedback strategies improve performance for some performance indicators, but actually hinder performance for other indicators (DeNisi and Kluger 2000). For example, in a laboratory experiment, Eriksson et al. (2009) found that feedback does not enhance individual productivity performance, and at the same time, it reduces the quality of the low performers. Regardless of the possible conflicts between performance metrics, most feedback studies focus primarily on a specific metric of performance and use feedback strategies accordingly. Typically, quantity‐based feedback is used for productivity improvement, and quality‐based feedback is used for quality improvement. Limited attention is given to the side effects of each feedback strategy on the other performance indicator, or to the potential synergistic effects of the two feedback strategies. Investigating the influence on different outcomes enables us to make a more comprehensive evaluation of the effectiveness of feedback strategies. In view of this, our paper distinguishes itself by focusing on the two performance dimensions (i.e., productivity and quality) simultaneously, and by investigating the situation when quantity and quality feedback strategies are combined. The work by Gardner (2020) closely relates to our study, investigating the influence of four types of feedback (i.e., speed, accuracy, joint speed and accuracy, and yields) on production performance. The current study differs from Gardner (2020) in two important ways. First, we focus on a group working environment and provide feedback information publicly, while Gardner (2020) focuses on individual working and presents individual performance feedback privately. As a result, social comparison is absent in Gardner (2020), which makes the motivations for subjects in the two contexts substantially different. Second, we investigate the speed‐quality trade‐off without considering the effect of rework, while Gardner (2020) explicitly accounts for rework. Rework provides participants with the opportunity to correct their errors, which makes the underlying trade‐off mechanisms in the two studies different.

Table 1 shows the focus and position of our paper in the feedback literature, as well as the associated hypotheses to be tested in this study. In Table 1, a positive influence of specific feedback (quantity or quality) on the matching performance dimension (productivity or quality) is suggested (i.e., H1a, H2b). These are generally the focus and main conclusions of previous works (see examples in Table 1). Some studies on quantity‐based feedback additionally discuss (or control) quality performance (Eriksson et al. 2009, Gino and Staats 2011, Jordi Blanes and Nossol 2011, Jung et al. 2010, Song et al. 2018), but reveal mixed influences on quality. We predict a negative influence of specific feedback (quantity or quality) on the other performance indicator (quality or productivity) (i.e., H1b, H2a). The consequences of combined quantity and quality feedback are not yet predictable, and we leave the direction of these hypotheses unspecified (i.e., H3a, H3b). We explain the hypotheses in more detail in the next sections.

Quantity‐Based Feedback

Based on the multiple mechanisms through which feedback can work and the generally positive conclusions from previous feedback studies (e.g., Jordi Blanes and Nossol 2011, Jung et al. 2010, Schultz et al. 2003, Song et al. 2018), we predict that order pickers work faster when quantity information about their effort is displayed. This is reflected in hypothesis 1a.

Table 1. Focus and Position of Our Paper in the Feedback Literature (Bold Type Signifies the Most Tested Hypotheses, “+” Means Positive Effect, “−” Means Negative Effect, and “?” Means Undirectional Effect)

Hypothesis 1a (H1a). Pickers who receive only quantity‐based feedback are more productive than those who are not given this feedback.

Prior research has documented the existence of a “speed‐quality trade‐off,” signifying that higher working speed leads to lower quality (Anand et al. 2011). As stated in H1a, quantity feedback stimulates higher productivity, which implies a faster working pace and could consequently sacrifice quality. In addition, giving feedback informs workers that their performance is closely monitored by managers. The exercise of monitoring performance per se, as well as the social comparison, could make workers feel stressed and anxious, especially those who perform poorly relative to their peers (Eriksson et al. 2009, Holman et al. 2002). In a laboratory experiment of concentration tasks, Eriksson et al. (2009) found that subjects who lag behind and face more pressure make more mistakes. Feedback intervention itself also distracts and disrupts subjects, and thereby causes more errors (Eriksson et al. 2009). Moreover, quantity‐based feedback gives no indication of errors. This could transmit a misleading signal to workers that quality performance is less important, negligible, or even unmonitored, so that workers become indifferent to errors. Based on the above analyses, we propose that giving quantity‐based feedback to order pickers increases picking errors. This is reflected in hypothesis 1b.

Hypothesis 1b (H1b). Pickers who receive only quantity‐based feedback make more errors than those who are not given this feedback.

Quality‐Based Feedback

As mentioned before, feedback influences behavior and performance through social comparison. Quality feedback displays only errors, thereby allowing workers to compare and compete in quality performance, rather than in productivity. As a result, quality feedback is unable to effectively motivate workers toward productivity improvement. In addition, quality feedback intensifies the consequences of errors and weakens the influence of productivity. This intervention shifts workers' attention to errors, and drives them to exert more caution and effort to avoid making errors. According to the “speed‐quality trade‐off” (Anand et al. 2011), they may even slow down their working pace, which ultimately leads to lower productivity.

Giving quality feedback may also require workers to correct errors before proceeding (Bateman and Ludwig 2004, Batt and Gallino 2019, Berglund and Ludwig 2009, Terrell 1990). Correcting an error needs extra effort (e.g., returning the wrong products, re‐picking the correct products), and obviously such effort does not create additional value. More importantly, giving too many warnings and correcting behavior can disrupt the smooth running of operations and decrease overall productivity. For these reasons, we propose that giving quality feedback decreases productivity. This is stated in hypothesis 2a.

Hypothesis 2a (H2a). Pickers who receive only quality‐based feedback are less productive than those who are not given this feedback.

As discussed above, quality feedback highlights the importance of quality and induces a behavior change toward quality improvement. It also gives workers information about the type, extent, and direction of errors so that they may be located and corrected (Forza and Salvador 2000). More support for the positive impact on quality can be found in systems that use voice technology to give corrective feedback (Bateman and Ludwig 2004, Berglund and Ludwig 2009). Given the above information, we propose that providing quality feedback to pickers increases their picking quality. This is tested in hypothesis 2b.

Hypothesis 2b (H2b). Pickers who receive only quality‐based feedback make fewer errors than those who are not given this feedback.

The analyses above generally recognize the influence of feedback strategies, and the hypotheses indicate a trade‐off between productivity and quality performance when quantity feedback and quality feedback are implemented separately. On this basis, we analyze the situations when the two feedback strategies are used simultaneously.

Combining Quantity and Quality Feedback

Since quantity feedback and quality feedback are expected to have an opposite impact on quality performance and productivity performance, respectively, we may expect a weakening effect on each performance dimension when they are combined. For example, because quality feedback is expected to negatively impact productivity, the extent of productivity improvement when combining quantity and quality feedback will be lower than when only quantity feedback is provided. The remaining expectations can be motivated in the same manner.

However, a synergistic effect could also be possible. In fact, using quantity and quality feedback together goes beyond simply combining the two feedback strategies. More specific information can be inferred from the combined information through simple calculation. For instance, a worker who is given combined quantity and quality feedback knows not only his total picked quantity and errors, but also the number of correct picks, which basically reflects the overall distribution of his effort. In this regard, using a combined quantity and quality feedback strategy provides more specific information to workers than using either strategy separately. Following the finding from feedback studies that more specific feedback information results in a more effective impact (Schultz et al. 2003), we can expect a synergistic effect. Several studies have also identified exceptional cases in which specific feedback functions differently. For example, it has been found that global feedback and specific feedback lead to comparable results when social comparison feedback is additionally provided (Williams and Geller 2000), when the feedback is more frequently delivered (Park et al. 2019), or when participants are compensated based on an individual incentive scheme (Hannan et al. 2008). Lee et al. (2014) found that global feedback is more effective than specific feedback in improving performance dimensions beyond the scope of the feedback. That is, providing global feedback allows workers to engage in an active exploration of all relevant behaviors or performance, whereas specific feedback confines people's focus to only certain target behaviors or performance.

Taken together, the weakening and synergistic effects could occur separately or simultaneously, and lead to very different results. Therefore, we formulate hypotheses on the interaction of quantity and quality feedback, but do not specify a direction. By comparing the outcomes when the two feedback strategies are combined with those when each strategy is used alone, we can test the following hypotheses:

Hypothesis 3a (H3a). An interaction effect between quantity and quality feedback exists in predicting productivity performance, such that the effect of combined quantity and quality feedback differs from the sum of the separate effects (no direction predicted).

Hypothesis 3b (H3b). An interaction effect between quantity and quality feedback exists in predicting quality performance, such that the effect of combined quantity and quality feedback differs from the sum of the separate effects (no direction predicted).
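The interaction hypotheses can be written compactly as a two‐by‐two factorial model. The following is only an illustrative sketch: the symbols $Y_i$, $QN_i$, $QL_i$, $\beta_0,\dots,\beta_3$, and $\varepsilon_i$ are notation assumed here for exposition and are not taken from the study's own analysis.

```latex
% Assumed notation: Y_i is picker i's outcome (productivity or quality),
% QN_i = 1 if picker i receives quantity feedback (0 otherwise),
% QL_i = 1 if picker i receives quality feedback (0 otherwise).
\begin{align}
  Y_i &= \beta_0 + \beta_1\, QN_i + \beta_2\, QL_i
         + \beta_3\,(QN_i \times QL_i) + \varepsilon_i \\[4pt]
  \text{no feedback:}       &\quad E[Y_i] = \beta_0 \\
  \text{quantity only:}     &\quad E[Y_i] = \beta_0 + \beta_1 \\
  \text{quality only:}      &\quad E[Y_i] = \beta_0 + \beta_2 \\
  \text{combined feedback:} &\quad E[Y_i] = \beta_0 + \beta_1 + \beta_2 + \beta_3
\end{align}
```

Under this sketch, the combined effect relative to no feedback is $\beta_1 + \beta_2 + \beta_3$, so it equals the sum of the separate effects exactly when $\beta_3 = 0$. The interaction hypotheses then correspond to $\beta_3 \neq 0$ for the productivity outcome and for the quality outcome, respectively, with no sign specified.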

In fact, the heterogeneous results of feedback are due not only to the diversity of feedback itself, but also to the confounding effects of other factors. Factors such as task characteristics (e.g., complexity, duration) (DeNisi and Kluger 2000, Gardner 2020, Kluger and DeNisi 1996), situational variables (e.g., goal setting, incentive programs) (Alvero et al. 2001, Erez 1977), personality (Bateman and Ludwig 2004), as well as the validation of best practices being shared (Song et al. 2018) can all be moderators. Research shows that feedback combined with these factors produces more consistent effects than feedback alone (Alvero et al. 2001). By its very nature, feedback is usually paired with incentive programs (Bateman and Ludwig 2004), and a pay‐for‐performance incentive in particular is adopted when the output can be directly or efficiently measured. In a parallel group‐working context with a single customer queue, Shunko et al. (2018) found that workers who were paid for performance tried to work as fast as possible even when the queue‐length visibility was blocked (i.e., limited feedback). Therefore, building on existing literature, our paper adopts a pay‐for‐performance incentive. Appendix A provides a more detailed summary of studies that simultaneously investigate the influence of feedback with incentives on different performance dimensions, as well as how they differ from our study.

Effect of Perceived Relative Position

The above hypotheses on aggregate performance do not necessarily guarantee an identical effect on all workers. The effect may vary from worker to worker (Jordi Blanes and Nossol 2011). According to Bucklin et al. (2004), these complicated mechanisms largely depend on the performers' historical behavior. When making comparisons to others, people may focus on more productive colleagues or less productive colleagues, and behave differently according to the reference groups (Roels and Su 2013). In a non‐interactive context where the top‐performers' best practice is regularly shared, bottom‐ranked workers attain greater performance improvement than the reference group, that is, middle‐ranked workers (Song et al. 2018). In the context of conjunctive tasks, slower workers speed up and faster workers slow down (Doerr et al. 1996, 2004, Schultz et al. 1999). However, the behavioral change and consequences in the context of interactive and parallel tasks are not clear, because the motivation and emotion for workers perceiving different relative positions vary.

Workers in parallel systems do not have to worry about being the cause of system blocking or starvation. However, they experience different emotions depending on the saliency of the comparison with their coworkers (Gino and Staats 2011). For instance, a perceived front runner may experience a positive affective state (e.g., joy), which could further increase his confidence in his ability to perform well. A perceived laggard, on the other hand, could experience a negative affective state (e.g., tension), which could amplify feelings of shame due to poor performance. These affective states (e.g., joy, disappointment, tension) can increase or decrease a worker's motivation (Gino and Staats 2011) and impact goal‐directed behavior and performance on subsequent tasks (Ilies and Judge 2005). Nevertheless, the exact behavioral consequence is still unpredictable. A front runner may assume that his colleagues are not working hard and hence reduce his effort to gain equity (Jackson and Harkins 1985). Alternatively, the front runner could be more enthusiastic and increase effort to challenge his potential (Eriksson et al. 2009). A laggard, on the other hand, may work harder to preserve pride and avoid the embarrassment or shame of being in last place (Eriksson et al. 2009, Kuziemko et al. 2014). Laggards could also become demotivated, exert less effort, and even quit the task (Ashraf et al. 2014, Eriksson et al. 2009). Given these conflicting findings, it is difficult to predict a direction or magnitude of the pure influence of perceived relative position on workers' dynamic effort adjustment in parallel tasks. This is tested in hypothesis 4.

Hypothesis 4. Pickers' perceived relative position influences their effort on subsequent tasks (no direction or magnitude predicted).

Methodology

Participants

We test how people react to feedback. For this purpose, we collected data from 126 participants and conducted a laboratory experiment in a controlled setup. The participants were students from one university in China recruited through flyers posted on campus, and they were divided into three‐person groups to represent workers who are working in parallel and paid for performance. The participants registered for the experiment through an individual online registration link and chose three available time slots, and were accordingly scheduled together with two other participants. The purpose of this procedure was to reduce the chance that friends or familiar individuals were assigned to the same group. The effects of peer familiarity on behavior may be stronger in the company of friends vs. strangers (Gardner and Steinberg 2005).

Of the 126 participants, 48% were male, and the average age was 22 (range: 17 to 30). None of the participants had any order picking experience. All participants received the same instructions prior to the picking task, and they were paid based on individual performance. The order picking task in the experiment was easy to learn. As summarized by Ederer and Manso (2013), pay‐for‐performance incentives are effective for tasks where physical effort is the main determinant of productivity. Specifically, participants received the following instruction: “Your earnings depend on your performance, which is the number of correctly picked products. You can earn between 10 (i.e., the show‐up fee) and 50 RMB at the end of the experiment” (approximately equivalent to $1.55 and $7.73). The piece rate for a correct product was 0.1 RMB, and average earnings throughout the experiment were 28.3 RMB.
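The payment rule quoted above can be illustrated with a short sketch. It assumes, although the text does not state this explicitly, that earnings equal the show‐up fee plus the piece rate times the number of correctly picked products, capped at the stated maximum of 50 RMB. The function name and the cap handling are hypothetical and serve only as an illustration.

```python
SHOW_UP_FEE_RMB = 10.0    # minimum payment stated in the instruction
PIECE_RATE_RMB = 0.1      # payment per correctly picked product
MAX_EARNINGS_RMB = 50.0   # maximum payment stated in the instruction


def earnings_rmb(correct_products: int) -> float:
    """Earnings under the ASSUMED rule: fee + rate * correct picks, capped."""
    if correct_products < 0:
        raise ValueError("correct_products must be non-negative")
    pay = SHOW_UP_FEE_RMB + PIECE_RATE_RMB * correct_products
    return round(min(pay, MAX_EARNINGS_RMB), 2)


if __name__ == "__main__":
    for picks in (0, 100, 183, 500):
        print(picks, earnings_rmb(picks))
```

Under this assumed rule, the reported average earnings of 28.3 RMB would correspond to roughly 183 correctly picked products per participant.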

Procedure

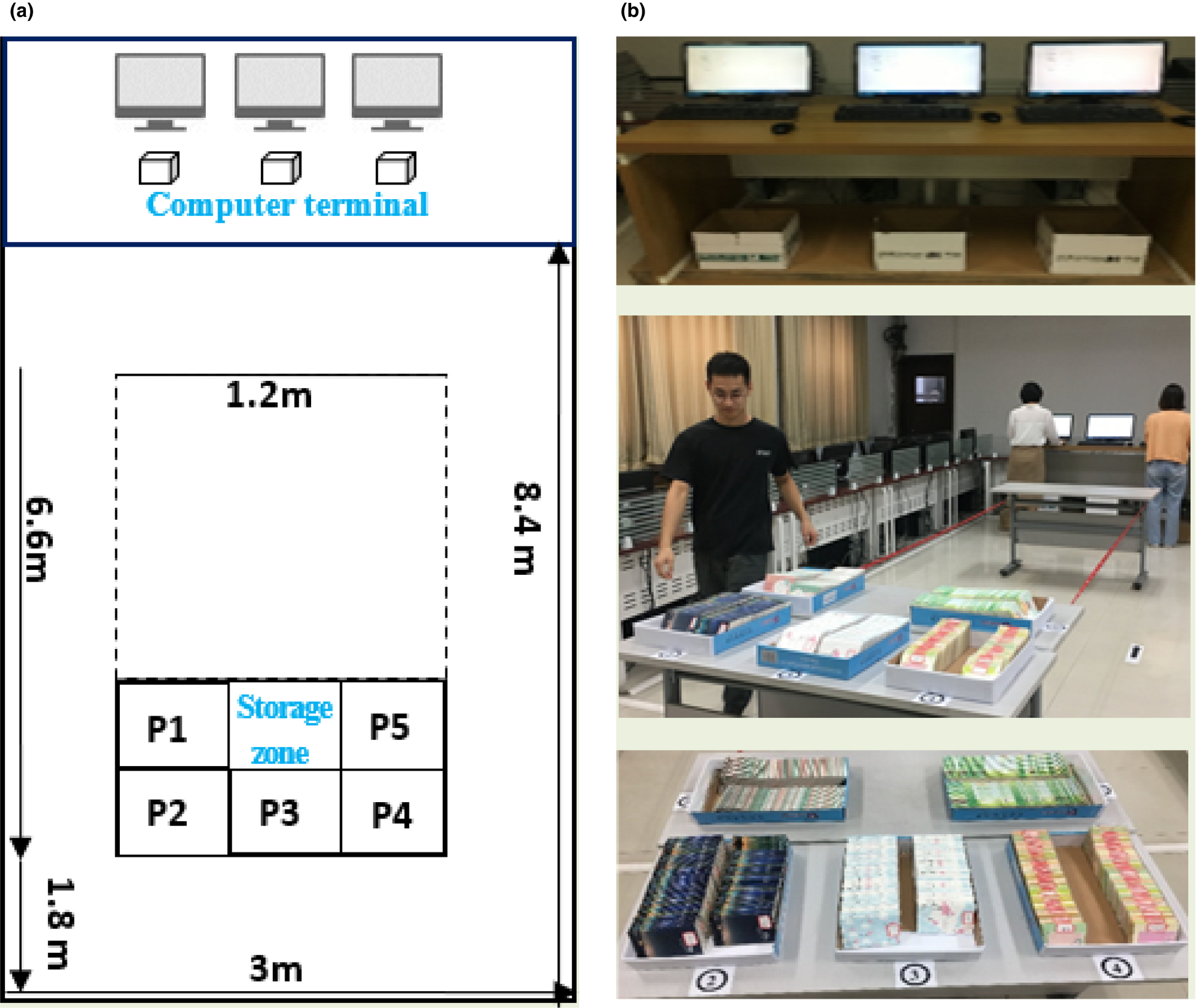

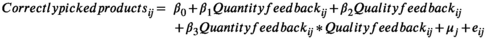

The experiment was conducted in a laboratory warehouse (see layout on the left of Figure 1). The warehouse consisted of two parts: computer terminals (one for each subject) and product storage zone. Three computer terminals were arranged compactly, and programs were set for subjects to receive and confirm customer orders, and to provide feedback information depending on the experimental treatment for each group. Below each computer there was an empty box to collect picked products (see the real setting on the top right of Figure 1). At the storage zone, there were five numbered locations and each location stocked one product (see products on the bottom right of Figure 1). The products were small notepads and sticky notes with different colors. We used a six‐digit code to distinguish the five products in the computer system, and the code was also pasted on both sides of each product (e.g., 010101, 101010). Figure 1b in the middle shows a snapshot of the laboratory where three pickers were working in the experiment.

(a) Laboratory Warehouse Layout and (b) Experiment Setting, Pickers, and Products

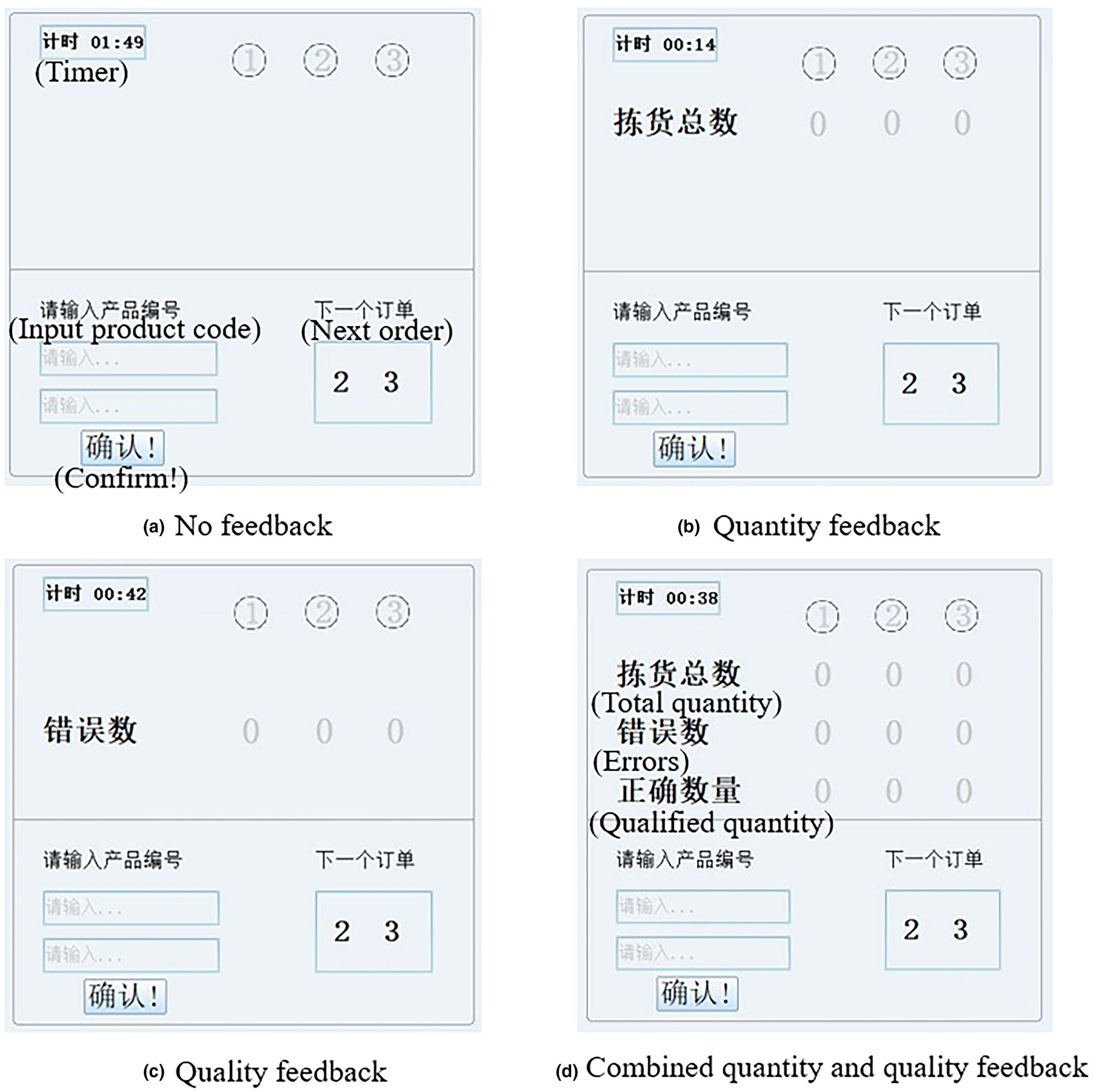

After entering the laboratory, the participants first completed a short questionnaire on demographics, and conducted a trial run of two orders. Each order included two products, each with one unit. A typical procedure to finish an order consisted of the following activities: (a) a picker received an order from the terminal which showed the locations of the two products; (b) the picker walked to the storage zone, searched for and picked the required products and went back to the terminal in a counter‐clockwise direction; (c) the picker input the six‐digit code on each product into two textboxes on the bottom left of the screen in sequence (see Figure 2); and (d) the picker clicked the confirmation button after inputting and dropped off the products into the collection box below his terminal. The terminals were programmed to process the inputs, save the accumulated results dynamically, and display some information immediately on the computer screen depending on which treatment was used. Then, in the following real picking run of 30 minutes, the three‐person group was randomly assigned a feedback condition. The total experiment lasted approximately 45 minutes.

Four Experiment Setups (Words in Brackets are the Translation of the Chinese Words)

Experimental Manipulations

One hundred and twenty‐six participants were randomly assigned to four experimental conditions: (a) no feedback; (b) quantity feedback; (c) quality feedback; and (d) combined quantity and quality feedback. The experiment interface was originally designed in Chinese, and we attached the English translation below (see Figure 2a for basic setup translation and Figure 2d for feedback translation).
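The four conditions form a 2 × 2 design (quantity feedback present or absent, quality feedback present or absent). A minimal sketch of how such conditions are typically coded as indicator variables with an interaction term is given below. The variable names and the dictionary layout are hypothetical and are not taken from the authors' analysis scripts.

```python
# Indicator coding (quantity, quality) for each experimental condition.
CONDITIONS = {
    "no feedback": (0, 0),
    "quantity feedback": (1, 0),
    "quality feedback": (0, 1),
    "combined quantity and quality feedback": (1, 1),
}


def design_row(condition: str) -> dict:
    """Return the two main-effect indicators and their interaction."""
    quantity, quality = CONDITIONS[condition]
    return {
        "quantity": quantity,
        "quality": quality,
        "quantity_x_quality": quantity * quality,  # 1 only in the combined condition
    }


if __name__ == "__main__":
    for name in CONDITIONS:
        print(name, design_row(name))
```

With this coding, the interaction indicator equals 1 only in the combined condition, so its coefficient captures any synergistic or weakening effect beyond the two main effects.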

Measures

Although subjects worked in groups, the data were collected and analyzed on an individual basis. We analyzed the aggregate performance on both productivity and quality.

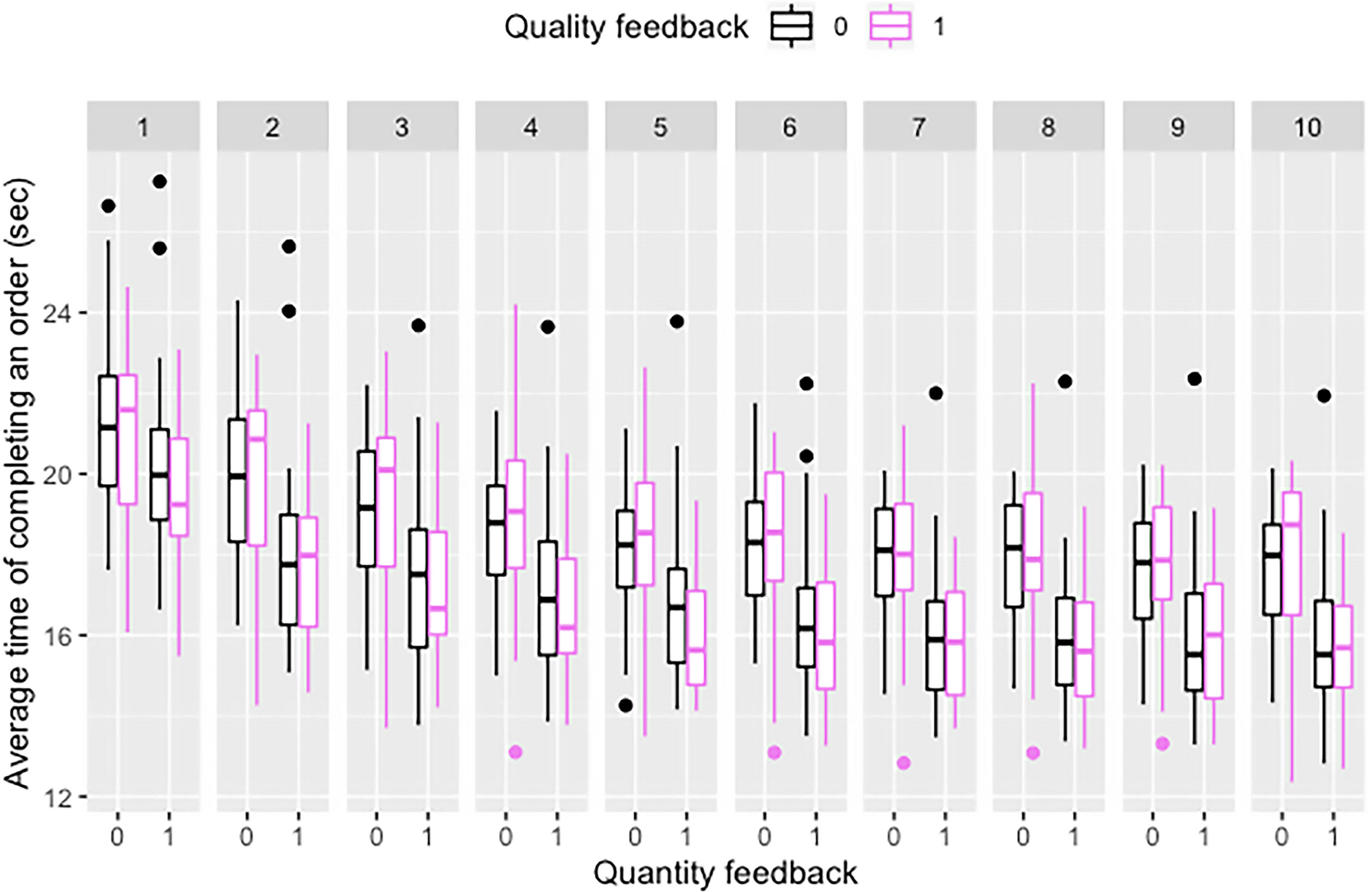

We also collected the quantity and error information of each participant at 3‐minute intervals (i.e., 10 times) for further analyses of pickers' behavioral mechanisms in the process. The following indicators were transformed from the dynamic process data (i.e., time‐based), and served as the process outcome and important drivers, respectively.

In addition, we used the variable “

Average Time of Completing an Order Throughout the Process (10 Intervals)

Results

Table 2 shows the distribution of participants and groups across the four conditions. In the condition of combined quantity and quality feedback, the data of two participants from two groups were removed from the sample, because they did not fully understand the instructions and made excessive errors due to incorrect operations. The descriptive statistics in Table 2 are calculated after the removal.

Descriptive Statistics and Distribution of Participants and Groups Across Conditions

: The results of two participants were removed from the sample.

To test the hypotheses and identify the most effective feedback strategy, we first specify the basic model and then conduct a series of analyses. The results are presented from two perspectives. First, we study the influences of feedback strategies on a picker's aggregate productivity, and then examine the process in more depth to investigate how these influences, if any, vary across pickers with different perceived relative positions and across different stages (i.e., progress stage vs. steady stage). Second, we analyze the influences of feedback strategies on picker quality performance.

Model Specification

Since three pickers pick in a group and the process data are collected for each picker every 3 minutes, we need to consider dependence among the pickers in the same group and among the observations per picker. We first take productivity as an example to specify our basic model. We conducted a multilevel analysis with participants nested in groups to assess the variance in individual productivity that could be explained by group level. The analysis was performed based on the steps explained by Bliese (2009), using the multilevel package in R 3.4.2 (R Core Team 2017). The intraclass correlation coefficients ICC(1) and ICC(2) were calculated first to evaluate the reliability (Table 3). The value of 0.59 for ICC(1) indicates that 59% of the variance in individual productivity can be explained by group level, and the value of 0.81 for ICC(2) indicates that groups can be reliably differentiated in terms of average productivity (Fleiss 2011). Subsequently, we employed a random group resampling (RGR) procedure with 9996 pseudo groups to test whether the within‐group variances of individual productivity from the real groups were significantly smaller than those from the random groups. The RGR z‐value of −4.08 indicates that the variance in productivity in the actual groups is significantly smaller than the variance in the randomly created groups (i.e., high agreement in real groups). The results in Table 3 jointly reveal that the group level explains a significant part of the variance in individual productivity.
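For readers less familiar with these reliability indices, the following minimal sketch shows how ICC(1) and ICC(2) are commonly obtained from the mean squares of a one‐way ANOVA with group as the factor. It is not the authors' R code; the function names are hypothetical, and equal group sizes are assumed for simplicity.

```python
from statistics import mean


def icc_from_groups(groups):
    """Return (ICC1, ICC2) for a list of equally sized groups of scores."""
    k = len(groups[0])
    if any(len(g) != k for g in groups):
        raise ValueError("this sketch assumes equal group sizes")
    n_groups = len(groups)
    grand_mean = mean(x for g in groups for x in g)
    # Between-group and within-group sums of squares of the one-way ANOVA.
    ss_between = k * sum((mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    msb = ss_between / (n_groups - 1)
    msw = ss_within / (n_groups * (k - 1))
    icc1 = (msb - msw) / (msb + (k - 1) * msw)
    icc2 = (msb - msw) / msb
    return icc1, icc2


def icc2_from_icc1(icc1, k):
    """Spearman-Brown step-up: reliability of the mean of k group members."""
    return k * icc1 / (1 + (k - 1) * icc1)


if __name__ == "__main__":
    print(round(icc2_from_icc1(0.59, 3), 2))
```

With ICC(1) = 0.59 and three pickers per group, the step‐up formula gives approximately 0.81, which agrees with the ICC(2) value reported above under the equal‐group‐size assumption.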

Group‐Level Properties

We then compared a model without a random intercept with one that contained a random group intercept using the “lme” and “gls” function in the “nlme” package (Pinheiro et al. 2007) in R 3.4.2 (R Core Team 2017). The −2 log‐likelihood value for the random intercept model (i.e., deviance value = 1082.8, df = 3) was significantly (

Effects of Feedback Strategies on Picker Productivity and Dynamic Effort

We estimate aggregate productivity using the following model at the participant level:

In Equation (2), “Correctly picked products

Empirical Model on Aggregate Productivity (Model 1)


The significant likelihood ratio test demonstrates that Model 1 as a whole fits the data relatively well. The results in Table 4 show that giving only quantity feedback has a positive and significant (

After establishing that quantity feedback significantly increases productivity, it is still unclear how a group of workers are affected and how such influence varies over the process. To answer these questions, we analyzed the dynamic process data with a stepwise regression method (see Table 5). We used the dynamic indicator “relative picked products after” as the dependent variable. Since the data were collected from a single picker every 3 minutes, we employed models with a random participant and group intercept for subsequent analyses of the varying behaviors.

Stepwise Regression with Feedback Strategies, Dynamically Perceived Rank, and Progress Stage Interactions

Notes: A bold font for coefficients indicates significance at the 5% level.

First, the control variable (i.e., progress stage), the main and interaction effects of quantity feedback and quality feedback are entered into the regression as a block (Model 2). The significant likelihood ratio test demonstrates that the model as a whole fits the data better than a null model (

Second, the dynamically perceived relative position (i.e., rank) and its interaction with quantity feedback are entered into regression Model 3 as a block (reference group: last ranked). In a pretest we also tested for potential interaction effect between quality feedback and rank on process effort, but this was not identified. Dynamically perceived relative position is the combined outcome of effort of a picker and his group members. The significant likelihood ratio test shows that Model 3 fits the data better than Model 2 (

Third, the interaction of progress stage and quantity feedback is entered as a block (Model 4). This block is introduced to further understand whether the above influence of the progress stage varies across quantity feedback conditions. Results of Model 4 show that this block does not account for a significant amount of incremental variance in the dependent variable compared to Model 3. The non‐significant coefficient for the interaction indicates that the positive influence of the progress stage does not change with the presence of quantity feedback.

Effects of Feedback Strategies on Picker Quality Performance

For the analysis of quality (i.e., errors), we first calculated the intraclass correlation coefficient (ICC) using the “sjstats” package (Lüdecke 2018) in R 3.4.2 (R Core Team 2017). The ICC value for the null model, which only has a random intercept, is 0.017, indicating that group level only explains a small proportion of the variance in individual errors. Therefore, we did not include the influence of group level in the quality analysis. We used a negative binomial count model as opposed to a normally distributed continuous model to estimate the effects of predictors on picking errors, because the number of picking errors is a non‐negative discrete variable and is not normally distributed (

In Equation (3), “total errors” is the number of errors a picker makes. “Total products” is the total effort of a picker, and equals the sum of “correctly picked products” and “total errors.” This variable is used as a predictor to test whether a trade‐off between speed and quality exists. The model used for this analysis was fit using the “MASS” package (Venables and Ripley 2002) in R 3.4.2 (R Core Team 2017). Table 6 shows the results.

Empirical Model on Quality (Dependent Variable: Total Errors)


The joint significance test indicates the model fits the data better than a null model (

Overview of Hypotheses and Test Results

The bold font indicates significance (

Robustness Check of the Impact of Quantity Feedback

Experiment Setting

The results in Tables 4 and 6 jointly show the effectiveness of quantity feedback. We conducted the order picking activity in a different setting to test the robustness of quantity feedback. The setting differs in the following aspects.

(1) The experiment was executed at a Western European university, a setting that substantially differs culturally from the experimental context of the main study. Western European culture is characterized by a high degree of individualism, where society members are encouraged to develop a differentiated identity and personal goals and needs. Chinese culture is an example of high collectivism, where members value the group interest and seek to maintain group harmony (Oyserman et al. 2002). Therefore, testing the results in a different culture can help us further understand the applicability and generalizability of quantity feedback.

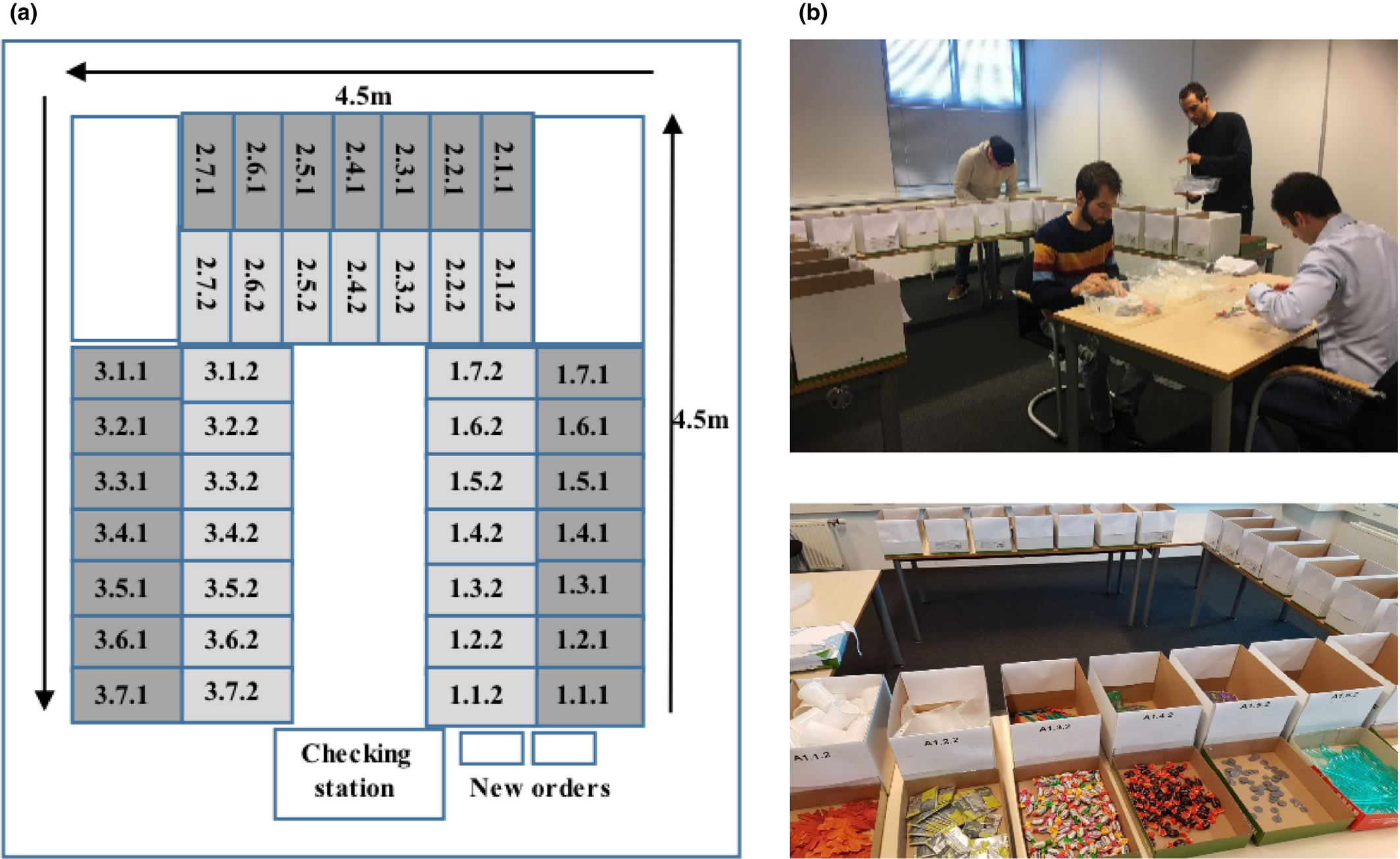

(2) We used a higher variety of products and orders in this setting (see Figure 4). The experimental warehouse stored 42 different products, some of which were very similar in appearance. The orders contained on average 8.8 different products (

(a) Laboratory Warehouse Layout and (b) Experiment with Pickers, Inspectors, and Products in Robustness Study

(3) Picking errors were more common due to the higher product and order variety. The margin for error was much larger because either a wrong product or a wrong quantity could raise an error. With a larger variance in errors, we were better able to identify and understand the role of quantity feedback in predicting quality performance.

(4) We used a different individual incentive system to pay the participants. All participants in the robustness experiment were told that “Your reward is determined by your own performance, which will be compared with 360 participants in previous sessions. You can earn €15 if you are among the top performers.” Each participant could earn between €5 (i.e., the show‐up fee) and €15 (approximately equivalent to $5.65 and $16.94) at the end of the experiment. We did not specify the performance of prior sessions to avoid the confounding effect of goals, since a clear reference performance could be easy for one worker and be too hard for another and thereby create different motivations. This incentive system is used to check whether quantity feedback could still stimulate workers whose payments were contingent on the performance comparison with unknown subjects or standards. For practical reasons, each participant received €15 in the end.

The warehouse (see the layout in Figure 4a) had three storage zones, organized in an inverted U‐shape. Each zone had seven locations with two levels. The locations were numbered according to the zone, location, and level. For instance, location number 2.4.2 represents zone 2, location 4, and level 2 (higher level). Each location stocked one kind of product. After entering the laboratory, the participants first completed a short questionnaire on demographics and conducted a trial run of three full orders. A typical procedure to finish an order mainly consisted of the following activities: (a) a picker got a picking box with a new order form from the starting position and filled in his own number on the top of the order form; (b) the picker read the information on the pick list, walked to the first specified storage location and searched for the items from the outside of the inverted U‐shape; (c) the picker picked the items, dropped them in the picking box, and walked to the next picking location to pick other items; and (d) after collecting all the items on the order form, the picker dropped off the picking box with the order form at the checking station for a quality and productivity check executed by two experimenters. The participants were required to place a checkmark on the order form at the end of each order line after each pick to confirm the pick, since confirmation is critical for order accuracy (Tompkins et al. 2010). Completed order lines with the correct products, the right quantity, and a checkmark on the order form counted toward productivity. Then, in the following real picking run of 40 minutes, the group was randomly assigned a feedback condition. After that, the participants were debriefed and paid. The total experiment took approximately 60 minutes.

Since the experiment was conducted in multiple sequential sessions, it was necessary to prevent early participants from informing later ones about payment or experiment details. We took several measures to prevent such potential spoilers: (i) The entrance and the exit of the laboratory area were different so that participants in a later round did not physically meet the participants of the previous round. (ii) The schedule of participation information was only known by the experimenters, so that participants had limited information about their participation sequence. (iii) At the end of the experiment, all participants received the same debriefing that “You are all among the top performers, so each of you earns €15.” (iv) The participants were kindly requested to keep the payment and the experiment details confidential until all sessions had finished, and we did not receive any information suggesting that this confidentiality had been compromised.
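The zone.location.level numbering of the storage locations described above can be made concrete with a small sketch. The type and function names are hypothetical, and the validity ranges are taken from the stated layout of three zones, seven locations per zone, and two levels.

```python
from typing import NamedTuple

ZONES, LOCATIONS_PER_ZONE, LEVELS = 3, 7, 2  # layout as described in the text


class Location(NamedTuple):
    zone: int
    location: int
    level: int


def parse_location(code: str) -> Location:
    """Parse a code such as '2.4.2' into zone, location, and level."""
    parts = code.split(".")
    if len(parts) != 3:
        raise ValueError(f"expected zone.location.level, got {code!r}")
    zone, location, level = (int(p) for p in parts)
    if not (1 <= zone <= ZONES
            and 1 <= location <= LOCATIONS_PER_ZONE
            and 1 <= level <= LEVELS):
        raise ValueError(f"location {code!r} is outside the warehouse layout")
    return Location(zone, location, level)


def all_location_codes() -> list:
    """Enumerate every valid location code in the layout."""
    return [f"{z}.{loc}.{lvl}"
            for z in range(1, ZONES + 1)
            for loc in range(1, LOCATIONS_PER_ZONE + 1)
            for lvl in range(1, LEVELS + 1)]


if __name__ == "__main__":
    print(parse_location("2.4.2"))
    print(len(all_location_codes()))
```

Enumerating the codes yields 3 × 7 × 2 = 42 locations, which is consistent with the 42 different products stored at one product per location.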

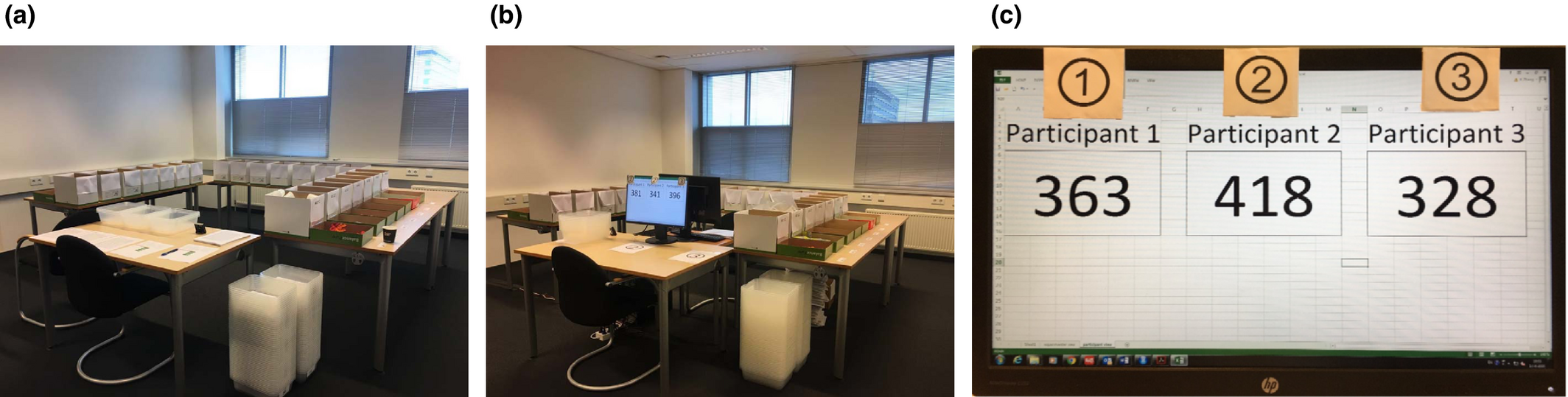

We created two feedback conditions named “No feedback(R)” and “Quantity feedback(R),” respectively (see the setups in Figure 5). In the “No feedback(R)” condition (Figure 5a), no productivity or quality information was provided. In the “Quantity feedback(R)” condition (Figure 5b), the accumulated “total picked lines” of each participant was continuously displayed on a shared screen (see Figure 5c). We collected data from 86 university student participants.

Two Experiment Setups: (a) No Feedback(R) vs. (b, c) Quantity Feedback(R)

Preparation of the Data

Table 8 shows the descriptive statistics and distribution of participants and groups across the two feedback conditions. Among the 30 groups, four groups in “No feedback(R)” condition consisted of two pickers because of the last‐minute cancelations of participants, and the other groups consisted of three pickers. We first checked the influence of two‐person vs. three‐person groups on both productivity and quality performance within the “No feedback(R)” condition, and found that two‐person groups do not significantly differ in the two dimensions. For productivity influence, we used an ANOVA to compare the difference of individual productivity between two‐person and three‐person groups. Results show no significant productivity difference between two‐person and three‐person groups in the “No feedback(R)” condition (

Descriptive Statistics and Distribution of Participants and Groups Across Conditions in Robustness Study

Note: Values in square brackets indicate the number of groups comprising two pickers.

Results on Aggregate Performance

We conducted analyses similar to those in the main study on aggregate performance: mixed‐effects linear regression for productivity, and negative binomial regression for quality. Because the orders contained a varying number of lines, ignoring this fact would lead to an overestimation of the effort of pickers who by luck handled several large orders rather than many small ones. We therefore used “order density” (i.e., the average lines per order) as a control variable in the analyses. Table 9 shows the fit results for productivity and quality. The results demonstrate that quantity feedback significantly improves productivity (

Empirical Models on Productivity and Quality in Robustness Check


Note that we do not directly compare the numerical results between the two experiments, because of different setups and treatment conditions. Instead, we compare the most important conclusions of both experiments, while taking the differences between them into account. Combining the findings of the main study and robustness study, quantity feedback demonstrates higher effectiveness than the other three feedback strategies (i.e., no feedback, quality feedback, and combined quantity and quality feedback) in terms of balancing productivity and quality performance.

Discussion

In the main experiment of this study, we used four relative performance feedback strategies (no feedback, quantity feedback, quality feedback, and combined quantity and quality feedback) in parallel order picking tasks in a laboratory warehouse, and investigated their influence on productivity and quality performance simultaneously. The results show that quantity feedback is effective in improving productivity performance, while no evidence was found for a simultaneous decrease in quality. At the same time, we did not find evidence showing that quality feedback could produce better productivity or quality performance. Combining quantity and quality feedback does not have a synergistic or a weakening effect on either performance dimension. A robustness check in a different setting not only confirms the positive effect of quantity feedback on productivity, but also shows the potential of quantity feedback for quality improvement. We further decomposed this aggregate productivity improvement into the separate impact on pickers with different relative performance levels. The top‐ranked and middle‐ranked pickers accelerate less relative to themselves than the bottom‐ranked pickers do, and the acceleration gap between the top‐ranked and the bottom‐ranked pickers narrows when quantity feedback is present.

Implications for Theory

This study makes several contributions to the literature on operations management and performance feedback. First, the speed‐quality trade‐off is an important issue in operations management, and we address this question with the help of relative performance feedback strategies. We find that giving only quantity feedback improves productivity performance, and this improvement is achieved without compromising on quality. On that basis, adding more specific details such as qualified number and errors does not create a synergistic or a weakening effect for either performance dimension. This does not confirm findings that more specific feedback results in more effective outcomes (Schultz et al. 2003). However, it is in line with studies revealing that specific feedback is not always more effective than global feedback (Hannan et al. 2008, Lee et al. 2014, Park et al. 2019, Williams and Geller 2000). The public, real‐time feedback setting and the explicit pay‐for‐performance incentives in our study are essentially a combination of the exceptional cases in the literature. The comparable results of global and specific feedback hold not only for productivity (target performance), but also for quality (nontarget performance). In addition, we argue that, in time‐pressured tasks, feedback that is too specific gives people too much information. Processing such an excessive amount of information is time consuming and distracts people from the main task.

Second, we did not find evidence for a significant improvement of quality performance when only quality feedback is provided. This is not fully in line with most literature (Bateman and Ludwig 2004, Berglund and Ludwig 2009, Park et al. 2019), in which feedback on incorrect responses is found to be effective for quality performance improvement. We speculate that two major reasons could be accountable for this inconsistency. One reason is related to the operational design and its underlying motivation. Previous literature employed corrective feedback by using instruments such as voice technology, and subjects were allowed to correct the errors when they receive the error feedback (Bateman and Ludwig 2004, Berglund and Ludwig 2009). In some computer‐based cases, subjects were even unable to proceed until they gave a correct response (Terrell 1990). It is then justifiable that the errors would be reduced under such a “forced correct response” intervention. In this regard, our finding on quality performance is not comparable, because the feedback errors in our setting cannot be corrected. They function as an antecedent notification to a subject that “You (or others) just made an error,” and the subject is then expected to be more cautious in completing subsequent tasks. The underlying motivation of such a design is to cultivate a proactive awareness of error prevention which is beneficial for continuous quality improvement. Another reason could be relevant to target performance and incentive systems that are matched with feedback strategies. It is suggested that effective incentive systems should possess the contingent nature to generate the desired behavior change, which means that the reward should be earned based upon specific performance criteria (Stajkovic and Luthans 2001). “Productivity” serves as the target performance and reward base in our study, but quality feedback does not reveal sufficient information about this criterion. 
To resolve such misalignment, error‐contingent incentive systems (e.g., forfeiting money for errors) should be applied with error‐specific feedback (e.g., Bateman and Ludwig 2004). Further investigation of the effectiveness of such alignment needs more empirical or statistical evidence, which is beyond the scope of this study. In addition, the existence of a “floor effect” could also be an alternative interpretation of the quality‐related outcomes of our study. That is, the number of errors in the main study is low in general and the room for improvement is limited. In the robustness check where error variance becomes larger, we found a marginally significant improvement in quality performance when quantity feedback is introduced. Therefore, we believe that the influence of feedback strategies on quality performance would be more prominent in contexts with larger error variance.

When making a direct comparison between quantity and quality feedback, it could be argued that part of the effect of the two feedback criteria might be attributed to framing. As is common in practice, quantity feedback is presented in a positive frame in our study, reminding people of their progress and success in doing the task. Quality feedback, on the other hand, is more negatively framed, with a focus on errors and failure. As shown in the feedback literature (e.g., Roney et al. 1995), positive feedback typically has a motivating effect while negative feedback might hurt motivation and subsequent performance. As such, it is plausible that framing effects play a role in the current study. However, the potential effects of framing in our setup are even more complex with the presence of social comparison. For instance, while quantity feedback is positively framed, providing quantity feedback to the lowest‐ranked member of the group also generates negative framing by reminding him how far he is lagging behind. Similarly, quality feedback to the one who makes the fewest errors conveys a positive message, informing the picker how well he is doing on the task. Because of these complex mechanisms, we do not attribute the full set of findings regarding performance feedback to framing, and interpret the results mainly from the perspective of social comparison. For a more elaborate discussion on the effects of positive and negative feedback valence, we refer to Gino and Staats (2011).

Furthermore, we disentangle the complicated mechanisms of quantity feedback provided to a group of workers in parallel tasks using social comparison theory. Our process results show that on average all workers accelerate in the process, but the workers who perceive themselves to be top ranked and middle ranked in their group accelerate less relative to themselves than those who perceive themselves to be bottom ranked. Such group dynamics partly correspond to the “regression‐to‐the‐mean effect,” which describes that slower workers speed up and faster workers slow down (Doerr et al. 1996), and to the finding in Schultz et al. (1998) that only slower workers speed up in conjunctive‐task systems. Fast workers in conjunctive tasks slow down mainly because they want to avoid being the cause of system blocks (Schultz et al. 1998), or because they experience the most interruptions by their adjacent colleagues through both blocking and starvation (Doerr et al. 2004). In parallel tasks where workers are paid based on individual performance, our finding can be jointly explained by the existence of a ceiling effect and peer effects. The ceiling effect describes the fact that top‐ranked workers cannot work much faster because they already approximate their maximum level of effort (Pritchard et al. 1988). The bottom‐ranked workers are predicted to have more room to improve their effort than the top‐ranked workers, and positive peer effects prescribe that last‐ranked workers adjust their effort to that of coworkers who rank ahead of them (Kandel and Lazear 1992). We further investigated how such group dynamics in parallel tasks interact with feedback intervention, and found that the difference in process effort between top‐ranked and bottom‐ranked workers is smaller when quantity feedback is present. This generally indicates the effectiveness of quantity feedback in motivating top‐ranked workers, making their acceleration rate similar to that of the bottom‐ranked workers. 
For a simple but strenuous task which has a clear distinction between the progress and steady stage, the results show that pickers accelerate more in the progress stage than in the steady stage when individual learning decreases and fatigue increases. We did not consider the effect of group learning in this group activity, because the tasks were independent and the transfer of knowledge among group members was rarely observed in the experiment.

Implications for Practice

Since slight differences in feedback implementation could cause large differences in outcomes, findings on this topic are of great importance to practitioners. Although the participants in our experiment were university students who may have motivations and behaviors that differ from those of workers employed in warehouses, we believe that our results can be generalized to practice, especially in industries where repetitive tasks are performed by people. De Vries et al. (2016b) revealed similar patterns of experimental effects on order picking performance among professionals, university students, and vocational students, strengthening confidence in the generalizability of our results. In particular, the pay‐for‐performance design is especially generalizable to flexible workers whose earnings are partly tied directly to their productivity.

Several key findings of our research are directly or indirectly pertinent to managers in people‐centric industries, and to industries where both productivity and quality have important implications. First, providing quantity feedback with pay‐for‐performance incentives improves productivity by approximately 10% on average compared to a situation giving no feedback, and this significant improvement is not achieved at the expense of quality. A robustness check in a different setting even shows that quantity feedback decreases expected errors by 28% (i.e., 1 − exp(−0.33)). These results indicate that giving quantity feedback to group workers can serve a double purpose in organizations, that is, productivity improvement and quality control.
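The 28% figure follows from the log link of the negative binomial model: a coefficient β multiplies the expected error count by exp(β), so the relative change is exp(β) − 1. A minimal sketch of this arithmetic is given below, using the coefficient −0.33 quoted above; the function name is hypothetical.

```python
import math


def percent_change_in_expected_count(beta: float) -> float:
    """Percent change in the expected count implied by a log-link coefficient."""
    return (math.exp(beta) - 1.0) * 100.0


if __name__ == "__main__":
    # Coefficient of quantity feedback on errors, as quoted in the text.
    print(round(percent_change_in_expected_count(-0.33), 1))
```

A negative result indicates a reduction: for β = −0.33 the expected number of errors falls by about 28%, all other predictors held constant.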

Our results show that adding more specific details (e.g., correct numbers and errors) on top of quantity feedback does not create extra value for these two performance dimensions. This deserves special attention from managers who are fully convinced that more specific is more effective. Besides yielding comparable output, giving only global feedback also has practical advantages over specific feedback in terms of inputs, such as costs, time, and effort (Lee et al. 2014). For example, specialized programming and extra space were needed in this study for delivering more specific information. These inputs are naturally important variables to consider in real settings. The results together should stimulate managers to choose the simple but effective feedback strategy (i.e., quantity feedback) in their daily operations, and should reduce their concerns about the speed‐quality trade‐off in repetitive tasks if proper interventions are taken.

Next, we did not find a positive effect of quality feedback on quality performance. This result should be interpreted with caution due to the specific pay‐for‐performance incentives (i.e., subjects are paid based on correct productivity) and overall low error rates in the study. Organizations usually have well‐defined target performance (e.g., productivity, quality, or both) based on which proper incentive systems and feedback strategies should be well aligned. Bateman and Ludwig (2004) showed an example by using an adapted incentive system (i.e., workers first forfeit money for each error and then have the opportunity to earn the disincentive money back upon attainment of certain quality goals) with quality feedback (i.e., error rate), and achieved significant quality improvement.

In addition, we show managers a clear picture of how group workers with different perceived relative positions perform in parallel tasks, and how such group dynamics change with quantity feedback. Managers may not want to discourage any workers when implementing a new policy. A good understanding of this picture informs managers which group of workers would benefit most, and what the consequences would be. Our findings from the dynamic analyses show that laggards on average accelerate more than front runners do relative to themselves. By recognizing the behavioral mechanisms, managers could introduce interventions to prevent demotivation in groups. For instance, giving quantity feedback reduces the difference in process effort between top‐ranked and bottom‐ranked workers, which effectively motivates the top‐ranked pickers to accelerate similarly to bottom‐ranked pickers.

We used a low‐tech picking system in which workers pick by memory in the main study and by paper in the robustness study. Nevertheless, we believe that the effects can be applied to systems using more advanced forms of IT as long as effort and attention are the main determinants of productivity. Technological tools such as pick‐to‐light, pick‐by‐voice, or RF‐terminals provide an efficient way to instruct workers and have been implemented in many warehouses. Managers can take full advantage of technological advances, and decide how and what feedback elements could be integrated. Our results show that providing workers with quantity feedback which allows them to make social comparisons is an option. For contexts where quality performance outweighs productivity, a “forced correct response” intervention supported by IT is also effective in preventing and correcting errors. In addition, managers can tailor feedback strategies (such as content, framing) with the help of IT to mitigate potential demotivation.

Limitations and Future Research

Although we tried to arrange the laboratory warehouse as realistically as possible to represent the situation of order picking in practice, our research has some limitations and the results should be interpreted accordingly. For example, we employed order lines as the feedback criterion for productivity, which is fair to every participant in the experiment, because the products were small and light and all workers followed the same picking route. However, in practice products can vary considerably in size and weight, and in some warehouses with zone picking, a worker is responsible only for a specific area. In this case, such a simple feedback criterion may cause fairness concerns and even worker dissatisfaction. Anecdotal evidence from a Dutch company revealed employees complaining about the unfairness of order lines as the feedback criterion, claiming that the effort of picking one bottle of water is quite different from picking 10 bottles of water but they are treated the same. Therefore, companies need to take fairness into operational consideration and implement feedback on a correct and fair basis.

Next, a 30‐minute laboratory experiment (40 minutes in the robustness check) is sufficient for us to investigate people's immediate response to feedback information, but it is too short to be compared with day‐to‐day operations in practice. Future research could replicate and extend the current experiment by collecting data from real order pickers in real warehouses or other people‐centric settings. However, real settings have a much more complex working environment where workers can be influenced by stress, fatigue, boredom, and other factors over an extended period of time. It is worth mentioning that Blanes i Vidal and Nossol (2011) found a positive and long‐lasting influence of a specific feedback strategy in a real warehouse.

We have found a variety of effects of quantity feedback on pickers with different perceived positions. Future studies could also test whether this is caused by individual differences. De Vries et al. (2016b) found significant effects of picker personality on productivity and its interaction with picking tools. In a similar manner, it would be interesting to know whether feedback interventions interact with individual characteristics. This is important for both practitioners and academia. An interaction could explain the varied influences of the same intervention on different workers. Consequently, tailored feedback strategies could be applied to specific workers, which is easy to realize with the assistance of advanced technologies.

In addition, this study investigated the role of feedback strategies in warehouse operations where customers are not directly involved. However, speed‐quality competition is also pervasive in many customer‐intensive service operations such as hospitals and restaurants. These contexts have very different measures for quality performance, such as the post‐discharge mortality rate (Kc and Terwiesch 2009) and service duration (Li et al. 2016). Whether the results generalize to different outcome measures is still unknown. Furthermore, we have not distinguished the sources of errors. In our setting, an error could have been caused by a picking mistake, a typing mistake, or a combination of both. Knowing the exact sources and causes helps to prevent or correct errors through targeted measures. Future research could be conducted in contexts with larger error variance and with the distinction of error sources under investigation. When quality performance is under consideration, other interventions like incentive programs should also be updated to better match the organization's strategies. It is also possible to introduce advanced technologies to mitigate errors. With various types of technologies being available, potential interaction between technological tools and feedback strategies could be estimated.

Conclusion

Our research contributes to both researchers and managers interested in identifying the determinants of fast and faultless performance, by examining the isolated and combined consequences of quantity and quality feedback simultaneously. The real‐effort and rigorously controlled setting enables us to draw relevant conclusions that contribute to academic theory, and simultaneously offer concrete input to managers about improving process designs and feedback systems.

Footnotes

Acknowledgments

The authors thank the editors and anonymous reviewers for their helpful and constructive suggestions that substantially improved the paper. Co‐author XiaoLi Zhang gratefully acknowledges the financial support from the China Scholarship Council (CSC), co‐author Jelle de Vries is funded by the Dutch Research Council (NWO) VENI Project 016.Veni.195.292, and co‐author ChenGuang Liu is funded by the National Natural Science Foundation of China (Grant no: 71671139).

Throughout this study, HE or HIS refers to both men and women.