Abstract

How a soft-body 3D robot, grasped with the palm and fingers, can effectively convey directional cues remains an open problem. When a soft robot is grasped, the controlled stimuli recruit encapsulated mechanoreceptors through the active deformation of the soft body, in contrast to conventional fingertip haptics that use rigid-body robots. Specifically, when a 3D continuum soft robot (cSR) is used as a haptic device, it is unclear how the longitudinal, azimuthal, and curvature deformation coordinates actively convey directional haptic perception. This gives rise to an emerging topic, identified here as a novel paradigm of soft haptics in view of the highly compliant interaction behavior of the cSR. In this article, we address the question of what directional cues can be conveyed when grasping a 3D cSR probe, as a step toward a soft haptic display. A complex cSR dynamic structure is considered, in closed loop with a known nonlinear controller, and a 10-subject user study is implemented. Findings show that 3D directional information can be conveyed with high accuracy, with curvature being the dominant deformation coordinate for perception. Results offer insights into developing a soft haptic methodology, and its design principles, for an actionable and augmented 3D cSR directional user interface; a variable stiffness method is still required to convey force cues and develop a complete soft-haptic interface.

Introduction

A variety of methods and techniques have been proposed to convey haptic stimuli from a rigid machine or robot to humans, with the aim of creating effective human–robot physical interfaces (HRpI). In the realm of haptics, rigid-body robots pose serious challenges: to name a few, the limited range of stiffness that induces nonpassive behavior, the bulky rigid materials that lead to heavy bodies with a high energy transfer coefficient, and the lack of mechanical compliance that hinders natural interaction during natural human motion. These challenges have promoted the study of soft-body robots, whose low density and high compliance make them an option for HRpI, trading off high precision and resolution while remaining within the specifications of some HRpI applications. This raises the question of how to exploit the apparently promising characteristics of soft robots as haptic devices for HRpI, which we refer to as soft haptics. Thus, in contrast to the established field of haptics, which relies predominantly on rigid-body robots, the emerging paradigm of soft haptics presents a seemingly contradictory challenge: how to convey active haptic stimuli to humans through a highly deformable continuum soft robot (cSR).

It is not surprising that a preliminary literature review reveals essentially no proven methods for developing a soft haptic interface, particularly one made of a continuum soft body, or for conveying directional information, which is one of the primary tasks a haptic interface could perform (the second being the rendering of force feedback, and the third, bilateral active command). On the other hand, haptic guidance tasks, which require the rendering of directional cues, have been addressed empirically in the literature, with evidence that guidance assists short-term improvement of kinematic patterns, although it impedes long-term motor learning, 1 which is key in some tasks. For instance, when learning to drive a car, discriminating directional cues is crucial for maneuvering through a rigid-body interface, such as the steering wheel, especially for novice drivers. Notably, a novice driver gradually acquires the ability to discriminate directional cues while holding the steering wheel rigidly, increasing muscle stiffness for a high energy transfer, 2 which may counteract the training action and thus reduce kinesthetic sensitivity and perception, in contrast to a skilled driver who holds it gently. This observation motivates us to ask whether a cSR can effectively convey directional cues as a haptic probe, since its highly compliant body leads to gentle grasping by wrapping with the palm and fingers.

The display of force feedback and directional information (change of coordinate frame) has been addressed with rigid-body haptic devices. Recently, Lacote 3 proposed a rigid-body cylindrical handle to convey 2D directional cues, where stimuli are provided by an array of vibrators, reaching 70% accuracy. Soft object manipulation using rigid-body haptic interfaces has also been addressed, 4 representing a complementary research avenue. However, the use of rigid-body haptic probes has raised concerns about human safety and passivity.5,6 Hence, nonrigid alternatives for haptic probes have also been studied, including wearable fabrics that stretch to convey pressure cues. 7 Interestingly, wearable fabric straps (extremely soft and adhesive) have been placed over the finger dorsum and pulled to provide a skin-stretching sensation. 8 Koehler 9 proposed a novel cSR for local shape perception using inflatable pneumatic membranes for local fingertip tactile stimuli, with a high Pearson correlation coefficient, and recently, Wang 10 scaled this up to 3D local shape perception using 9 membranes. Sebastian 11 considered a noninertial one-pressure-chamber cylindrical probe with 60% accuracy of stiffness perception, obtained while wrapping the probe with the palm. The probe was equipped with passive interchangeable polymer sleeves to customize the surface stiffness under pressure regulation, without any motion. A soft pneumatic button for fingertip stimuli was tested to compare normal pressure versus vibration stimuli under various conditions, favoring the button option. 12 Also, a parallel mechanism equipped with elastic intermediate beams has been proposed as a nonrigid haptic probe alternative, 13 with limited results. So far, soft haptics with cSR remains unexplored in the literature. Now, consider a cSR composed entirely of elastomeric soft material, consisting of an aggregate of an infinite number of hyperelastic particles, 14 forming the soft body. This particular class of cSR has burst onto the robotics arena with promising proofs of concept; however, many research unknowns remain. The deformable body of the cSR makes it a premier candidate for HRpI systems, given its high compliance, low weight, and low inertia, which render low natural frequency characteristics. However, little is known about how to make a cSR a feasible human-in-the-loop coupling device for robotic applications, for which haptic feedback (magnitude and direction of the contact force vector) is fundamental.

Soft robots and human–robot interaction stand at the frontier of multidisciplinary and interdisciplinary research, whose intersection has yet to unveil its potential as a safe but effective soft HRpI system. A haptic interface made of a cSR is a type of HRpI wherein the coupled human–robot system is tightly physically intertwined, integrated at the sensory as well as at the actuation layer through highly nonlinear dynamics. When the human grasps the soft body, the fingers and palm perturb the cSR in a complex manner because operational forces are projected as interaction torques at each pressure contact point, assuming lumped parameters, with respect to the cSR’s center of mass for each given configuration, giving rise to a complex bilateral interaction of this pair. So far, these human–machine dynamics have been overlooked in the literature. Consequently, as a first step toward the soft haptics paradigm, the problem statement can be phrased as how to design a cSR with potential as a haptic interface that conveys effective directional cues. Clearly, control of the highly nonlinear dynamics of the perturbed cSR is at the core of this human-in-the-loop problem, with the stability analysis underneath. However, to focus on the human perception of directional cues, we consider a very well-known controller that satisfies the motion requirements 1 from a system approach for haptics. In this way, the objective of this study is to determine which 3D deformation coordinates of the cSR effectively convey directional cues, and how. We hypothesize that curvature is the dominant deformation coordinate for conveying effective directional information through palm-finger contact when the user synergistically follows its motion.

Our contribution amounts to a nonlinear system dynamic approach to elucidate how directional information can be conveyed to the user through the deformation coordinates of a cSR. We report high recognition accuracy, which supports this as an effective approach for direction perception. This can be extremely useful for critical applications in which rigid-body robots jeopardize safe interfacing with humans. Our findings support the conclusion that a cSR is a viable soft-body probe and a safer HRpI, and that it can potentially render stiffness over a broader range than rigid-body robots.

Materials and Methods

A. The cSR as a wrapping 3D haptic probe

The 3D cSR is a cylindrically shaped 3D body made of deformable hyperelastic elastomer, and commanded by three embedded pneumatic chambers. The nonlinear dynamic model is well posed, with the following kinematics and dynamics modeling. For further details, the interested reader is referred to Trejo-Ramos 14 and Ramos-Velasco. 15

Kinematic model

Assume a 3D inertial robot frame fixed at its base with unitary axis

This procedure can be generalized to an s-frame

Dynamic model

The Lagrangian modeling formalism yields, after considering the body to be composed of a continuum of infinitesimal elastomeric particles and inner viscoelastic forces, the following dynamic model:

Assuming three evenly distributed pressure chambers (see Fig. 1b), 17 the following well-posed invertible (rank 3) input matrix is obtained, for curvature

The human–robot interaction torques

The human hand wraps the soft body at finite, but multiple contact areas, whose center of pressures can be considered contact points, with corresponding contact forces

B. Directional control design

Aiming at analyzing the potential scalability of the cSR as a haptic deformable probe, we now consider an off-the-shelf control structure to make matters simple, although a model-free robust and fast controller can be used.18,19 The control objective is to converge to the target configuration

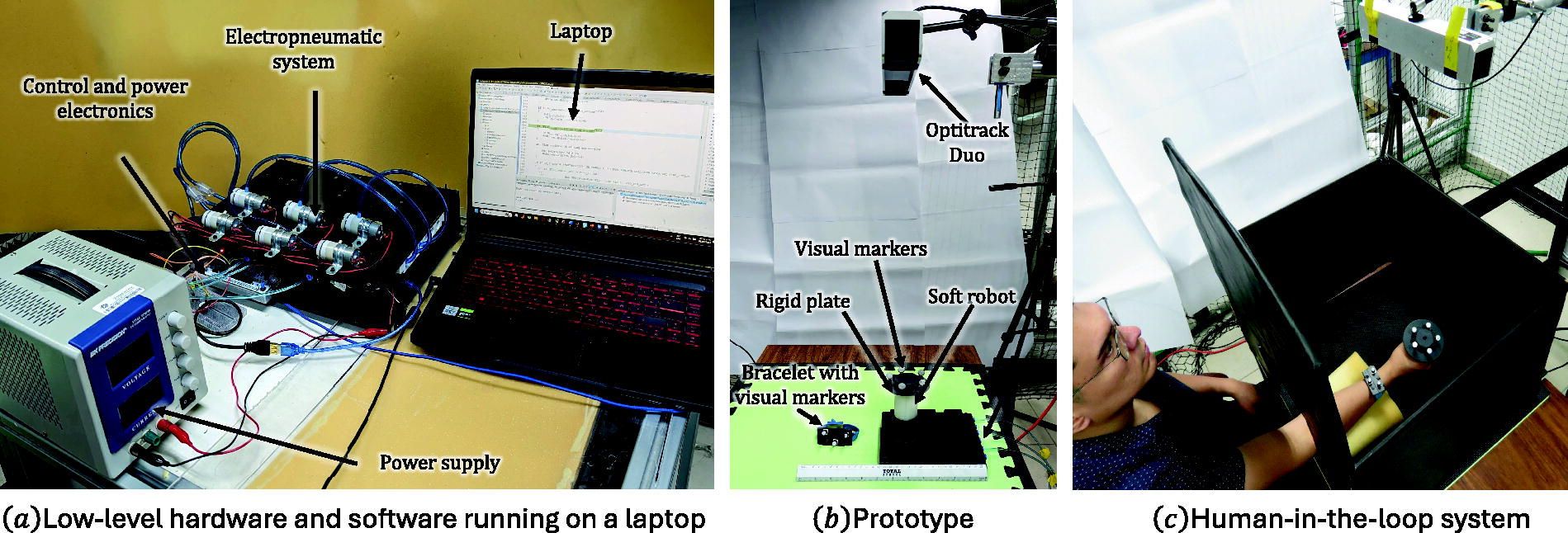

C. The experimental testbed

A mechatronics approach was adopted to develop an open-architecture prototype; Figure 2 shows the system components and the human-in-the-loop functional testbed, complemented by the schematics in Figure 3. The electropneumatic system consists of six PWM-controlled pumps (two per pneumatic chamber, for pressurization and depressurization) driven at a PWM frequency of 1 kHz, governed by a ZHV-0519 electrovalve to ensure air expulsion only during pump activation. Chamber pressure is measured by a 100 kPa-range HL100D sensor and digitized by a 16-bit analog-to-digital converter. A high-performance microcontroller module NUCLEO-144 STM32H7 (model 55ZI-Q) processes data at 10 kHz over I2C. The cSR is manufactured using ECOFLEX-0030 elastomer, following a well-established process, 21 with a rigid plate placed at the top, on which 5 infrared visual markers are fixed. These markers are tracked by an OptiTrack V120:Duo vision system at a frequency of 120 Hz. The system is installed above the table, with the optical axis aligned to the vertical pose of the cSR. OptiTrack’s Motive software platform is programmed to detect the markers of two rigid bodies in the visual field: one determines the distal point of the cSR and the other measures the position of a bracelet on the volunteer’s forearm. The 3D Cartesian coordinates of the two rigid bodies are streamed using Motive’s NatNet SDK to a Matlab wrapper that computes the deformation coordinates using inverse kinematics. A robust differentiator yielded asymptotically generalized coordinates velocities

Components

Schematic of the mechatronic architecture of the human-in-the-loop prototype.
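The inverse-kinematics step mentioned in the testbed description (tip position from the vision system mapped to deformation coordinates) can be illustrated with a minimal sketch. It assumes the common constant-curvature arc model with the base tangent along the vertical axis; the kinematic model actually used in this work is given in the cited references and may differ. The function names and the numeric tolerance are hypothetical.

```python
import math

def forward_kinematics(length, azimuth, curvature):
    """Tip position (x, y, z) of a constant-curvature arc whose base tangent is +z.

    Assumption: constant-curvature model, used here only for illustration.
    """
    if abs(curvature) < 1e-9:                    # straight configuration
        return 0.0, 0.0, length
    theta = curvature * length                   # total bending angle
    rho = (1.0 - math.cos(theta)) / curvature    # radial offset from the base axis
    z = math.sin(theta) / curvature
    return rho * math.cos(azimuth), rho * math.sin(azimuth), z

def inverse_kinematics(x, y, z):
    """Recover (length, azimuth, curvature) from the tip position.

    Valid for bending angles 0 <= theta < 2*pi under the same assumption.
    """
    rho = math.hypot(x, y)
    if rho < 1e-9:                               # tip on the base axis: azimuth undefined
        return z, 0.0, 0.0
    azimuth = math.atan2(y, x)
    curvature = 2.0 * rho / (rho * rho + z * z)  # from rho^2 + z^2 = 2*rho/curvature
    theta = 2.0 * math.atan2(rho, z)             # from tan(theta/2) = rho/z
    return theta / curvature, azimuth, curvature
```

In this sketch the azimuth is set to zero when the body is straight, since the bending direction is undefined at null curvature; a real implementation would need to handle that singularity according to its own model.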

The desired pneumatic pressure

D. Design of experiments

The user experimental study 2 aims at determining the human ability to recognize 3D directional information provided by a soft handle fixed at a table.

The initial position (configuration) was set at length

Desired configurations for each of the six directions are shown in different colors, where the dot closest to the ground denotes the smallest length ld1 and subindexes follow the enumeration shown in Table 1. Centered at

Coordinates used for desired configurations di, where d0 denotes initial state

Participants were directed to take a seat in front of a table, gaze forward, and extend their dominant arm comfortably onto the table, ensuring they could reach the handle workspace. To avoid distraction, the laboratory was closed and an opaque screen was placed in front of the participants to prevent them from seeing their hand and the handle, see Figure 2c. Participants were also provided with a picture illustrating the six directional choices. At the beginning of each trial, participants were instructed to gently grasp the handle, which started from a slightly bent vertical position, using a power grasp with their dominant hand. They were explicitly instructed not to exert any force on the handle, but to passively follow its motion. After the handle moved in the next direction, participants verbally reported their perceived direction of motion; the handle then returned to the initial position. Subsequently, they were asked to rate their confidence in their response using a 9-point Likert scale.

The experiment was divided into three blocks, each separated by a 5–10-minute break. In each block a desired azimuth angle indicated the intended direction, denoted by

B1 handle length is regulated at a low value (

B2 handle length is regulated at medium value (

B3 handle length is regulated at maximum value (

Each block is composed of five repetitions of the six directions at three different curvatures, presented in random order, for a subtotal of 90 trials per block (Fig. 5 depicts an example of cSR movement in each block). A total of 270 trials were performed per participant (6 directions × 5 repetitions × 3 lengths × 3 curvatures). The experiment lasted about 1 hour and the sequence of the three blocks was counterbalanced across participants.
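The trial arithmetic above can be checked with a short sketch that builds one participant's schedule. The curvature and block labels are placeholders, and the counterbalancing rule (cycling through the six possible block orders by participant index) is an assumption for illustration; the article does not state which scheme was used.

```python
import itertools
import random

DIRECTIONS = ["left", "right", "up", "down", "front", "back"]
CURVATURES = ["k1", "k2", "k3"]        # placeholder labels for the three curvatures
LENGTH_BLOCKS = ["B1", "B2", "B3"]     # one handle length per block
REPETITIONS = 5

def block_trials(rng):
    """Return the 90 (direction, curvature) trials of one block in random order."""
    trials = [(d, k)
              for d in DIRECTIONS
              for k in CURVATURES
              for _ in range(REPETITIONS)]
    rng.shuffle(trials)
    return trials

def block_order(participant_index):
    """Hypothetical counterbalancing: cycle through the 6 permutations of the blocks."""
    orders = list(itertools.permutations(LENGTH_BLOCKS))
    return orders[participant_index % len(orders)]

def session(participant_index, seed=0):
    """Full schedule for one participant as (block, direction, curvature) tuples."""
    rng = random.Random(seed + participant_index)
    return [(b, d, k)
            for b in block_order(participant_index)
            for d, k in block_trials(rng)]
```

Calling `session(0)` yields 270 trials, 90 per block, with every direction and curvature pair appearing five times within each block.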

Soft robot desired configurations for the same given azimuth Φd, where columns show the same length for three different curvatures κ = {κ1, κ2, κ3} and rows present the same curvature for three different lengths l = {l1, l2, l3}.

E. Participants

Before recruiting participants for the study, the G*Power 3.0.10 program was employed to ascertain the minimum sample size. We considered a within-factors repeated measure analysis (1 group, 3 lengths or 3 curvatures) with a power of 0.85 and

Results and Discussions

A. Results

Figure 6 shows the confusion matrix of the recognition rates for the six directions at each combination of curvature and length of the handle. It can be noticed that the highest ratings are consistently found along a diagonal from the bottom left to the top right, which corresponds to the correct answers. It is noteworthy that stimuli characterized by the smallest curvature

Confusion matrix of recognition rates for the six directions, each of them as presented in Fig. 5.
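For readers who wish to reproduce this kind of analysis, the following minimal sketch shows how a row-normalized confusion matrix and an overall recognition rate can be computed from paired lists of presented and reported directions. The function names and the data layout are assumptions for illustration, not the authors' analysis code.

```python
from collections import Counter

DIRECTIONS = ["left", "right", "up", "down", "front", "back"]

def confusion_matrix(presented, reported, labels=DIRECTIONS):
    """Row-normalized confusion matrix.

    Entry [i][j] is the fraction of trials with presented direction labels[i]
    that were reported as labels[j]. Rows with no trials are left at zero.
    """
    counts = Counter(zip(presented, reported))
    matrix = []
    for p in labels:
        row_total = sum(counts[(p, r)] for r in labels)
        matrix.append([counts[(p, r)] / row_total if row_total else 0.0
                       for r in labels])
    return matrix

def recognition_rate(presented, reported):
    """Fraction of trials in which the reported direction matches the presented one."""
    correct = sum(p == r for p, r in zip(presented, reported))
    return correct / len(presented)
```

In this layout the correct answers fall on the main diagonal; whether that diagonal runs from bottom left to top right, as in the figure, depends only on how the rows are ordered when plotted.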

Average recognition rates and average confidence scores across participants and trials for the three stimuli curvatures and lengths are displayed in Figure 7. It is notable that the lowest recognition rates are associated with the smallest curvature

Recognition rate (top) and confidence score (bottom) for the different stimuli with curvature κ and length l.

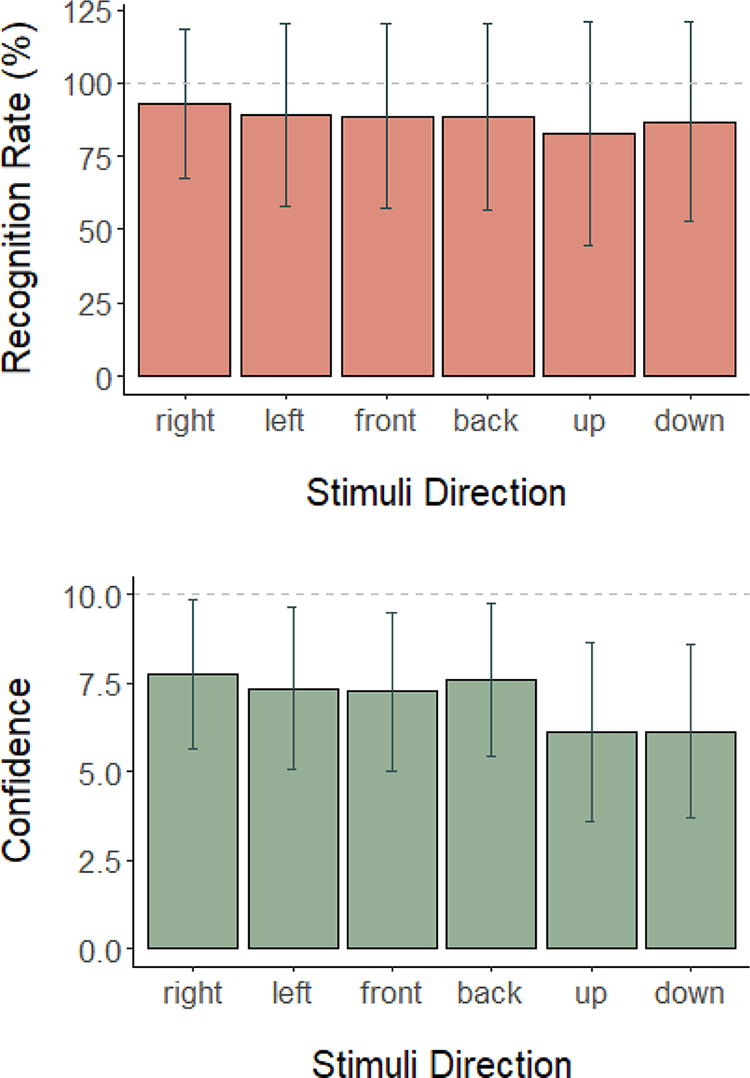

Average recognition rates and average confidence scores across participants and trials per direction are shown in Figure 8. The highest recognition rate (92.9%) and confidence score (7.8) were obtained for the right direction, and the lowest values were obtained for the up direction (82.7% and 6.1, respectively). Kruskal–Wallis tests revealed a significant effect of direction on recognition rate [H(5) = 24, p < 0.001] and confidence [H(5) = 209, p < 0.001]. Wilcoxon tests indicated a significant difference in the recognition rate between right and up directions (p < 0.001) and between right and down directions (p < 0.001). Furthermore, they indicated a significant difference in the confidence score between all directions, except among back and left, front and right directions (p’s < 0.001), as well as among left and right directions (p’s < 0.001), and between down and up directions (p < 0.001). These results indicate that recognizing the up and down directions was significantly more difficult than recognizing the right direction. Furthermore, participants gave similar confidence scores and achieved similar recognition rates for opposite directions (e.g., left–right). The difficulty with the up and down directions is consistent with the difficulty in recognizing any direction at a small curvature, since both are produced with the robot configuration near null curvature.

Recognition rate (top) and confidence score (bottom) for the different stimuli directions.
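As an illustration of the Kruskal–Wallis statistic reported above, the sketch below computes H with the standard tie correction in plain Python. With six direction groups, the degrees of freedom are 6 − 1 = 5, which matches the H(5) notation. How the scores were grouped for the reported tests is not detailed in the article, so the input format here (one list of scores per direction) is an assumption, and a statistics package would normally be used to obtain the p-value.

```python
from collections import Counter

def kruskal_wallis_h(groups):
    """Kruskal-Wallis H statistic with tie correction, and its degrees of freedom.

    `groups` is a list of lists of scores, one list per condition.
    Assumes at least two distinct values overall (otherwise H is undefined).
    """
    pooled = sorted(v for g in groups for v in g)
    n_total = len(pooled)

    # Average rank for each distinct value: ties share the mean of their positions.
    rank_of = {}
    i = 0
    while i < n_total:
        j = i
        while j < n_total and pooled[j] == pooled[i]:
            j += 1
        rank_of[pooled[i]] = (i + 1 + j) / 2.0   # mean of ranks i+1 .. j
        i = j

    # H = 12 / (N (N + 1)) * sum(R_i^2 / n_i) - 3 (N + 1), with R_i the rank sum of group i.
    rank_term = sum(sum(rank_of[v] for v in g) ** 2 / len(g) for g in groups)
    h = 12.0 / (n_total * (n_total + 1)) * rank_term - 3.0 * (n_total + 1)

    # Tie correction: divide by 1 - sum(t^3 - t) / (N^3 - N) over tie-group sizes t.
    ties = Counter(pooled)
    correction = 1.0 - sum(t ** 3 - t for t in ties.values()) / (n_total ** 3 - n_total)
    return h / correction, len(groups) - 1
```

The returned H is compared against a chi-square distribution with the returned degrees of freedom to obtain the p-value.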

B. Discussions

The required spatial configurations of the soft robot were achieved using the well-known “PD controller plus desired inverse dynamics” controller. This model-based controller was chosen for two reasons. First, it guarantees fast convergence at the expense of requiring exact knowledge of the model; this is not a limitation here since the testbed is custom made, and therefore the model and parameters have been characterized. Second, the discrimination tasks were sufficiently contrasting, consisting of six cSR configurations (left, right, up, down, front, and back), so they do not require a high-precision controller. Clearly, for tracking tasks or those requiring high precision, an advanced smooth nonlinear controller that guarantees fast and robust tracking will be required.
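The structure of a "PD plus desired inverse dynamics" law can be illustrated on a toy system. The sketch below uses a 1-DOF mass-spring-damper as a stand-in plant, which is an assumption made purely for illustration and is not the cSR model of this article; all parameter values and the function name are hypothetical. The control input is the plant dynamics evaluated along the desired trajectory plus PD feedback on the error.

```python
def simulate_pd_plus_feedforward(q_target=1.0, m=1.0, b=0.5, k=2.0,
                                 kp=25.0, kd=10.0, dt=1e-3, t_end=5.0):
    """Regulate the toy plant m*ddq + b*dq + k*q = u to a constant target.

    Control law: u = (m*ddq_d + b*dq_d + k*q_d) - kp*(q - q_d) - kd*(dq - dq_d).
    All parameters are illustrative assumptions, not values from the article.
    """
    q, dq = 0.0, 0.0
    q_d, dq_d, ddq_d = q_target, 0.0, 0.0        # constant target configuration
    for _ in range(int(t_end / dt)):
        u_ff = m * ddq_d + b * dq_d + k * q_d    # desired inverse dynamics (feedforward)
        u_fb = -kp * (q - q_d) - kd * (dq - dq_d)  # PD feedback on the tracking error
        ddq = (u_ff + u_fb - b * dq - k * q) / m   # plant acceleration
        dq += ddq * dt                           # semi-implicit Euler integration
        q += dq * dt
    return q
```

With an exact model, the error dynamics in this toy case reduce to a linear second-order system, m·ë + (b + kd)·ė + (k + kp)·e = 0, which is why convergence is fast when the parameters are well characterized.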

Conclusions

The pneumatic, soft, highly compliant structure, comfortably and safely wrapped by the hand, is an actionable interface. It represents a cost-effective soft probe through which the user accurately detects directional stimuli. Some additional conclusions are now in order, including future work to extend its usage as a haptic interface.

A. Concluding remarks

The confusion matrix offers a glimpse of how the deformation coordinates combine to convey directional stimuli. It seems clear that the larger the curvature, the better the directional perception, without much correlation to longitudinal deformation. The high accuracy comes from curvature at a constant azimuth, with some confusion for pure elongation coordinates that have null curvature. However, it cannot be concluded that skin-stretch mechanoreceptors play no role in detecting sliding from elongation deformation coordinates, because the experiments were designed so that the user follows the deformation to perceive directional information using the proposed inertial (fixed-base) 3D cSR. In this case, it was observed that the user tends to move the hand along the deformation coordinates, and thus with near-zero elongation velocity, which minimizes its perception.

B. Future work

To broaden the research scope of this study, it is imperative to address the fundamental questions pointed out below. A major pending research issue is to provide an effective method to convey force stimuli at a given coordinate, by either static or quasi-static modulation of the viscoelastic or stiffness properties of the cSR. There is a lot of ongoing research on varying stiffness, including implicit (impedance or admittance) or explicit force control methods with handy force display controllers to convey force as a haptic display. The latter is advantageous because a cSR can display force over a wider range than rigid-body haptics, or even elastic actuators, 24 for a safer haptic interface. Experiments are underway considering redundancy of actuation (six pressure chambers instead of three) to synthesize an active variable stiffness body, using the new method of minimum norm solution in actuator space. So far, theory and simulations show robust and fast convergence to a dynamic variable stiffness using a dynamic sliding PID control. 18 Such experiments will address a wide range of neuromuscular strength training of the hand.

When the user’s hand wraps the cSR, complex compliant skin-stretch effects arise from the intertwined, highly nonlinear dynamics, which stimulate the palm and fingers in an unknown and unexplored way through different skin mechanoreceptors (Meissner corpuscles for light touch, Pacinian corpuscles for vibration, Ruffini endings for cutaneous tension, and Merkel disks for sustained pressure) to provide sensory information to the central nervous system. We surmise that all these skin mechanoreceptors are involved when using a 3D cSR to determine spatial and force cues, although under various factors not yet tested. Certainly, flexible electronics are needed to enable force and pressure sensing over the cSR surface to fully unveil a functional haptic interface. Internal pressure sensing can be used for model-based inference of the user’s wrapping force.

This article focused on the design and performance evaluation of directional guidance with a soft robot and serves as a point of departure for crucial unexplored theory, namely a human–robot interaction model, including the virtual force model, a compliance model vector, the just-noticeable difference (JND), the sampling rate for soft skin–robot contact, stretch haptics, and palm haptics, to name a few. Overall, we believe these research issues will give rise to a novel paradigm that properly addresses soft haptics as a field, with promising advantages over the mature field of rigid-body haptics.

Acknowledgments

The authors acknowledge support from the National Council of Humanities, Science, and Technology (CONAHCYT) under PhD Scholarships #1007401 and #1144012 granted to the first and fourth authors, respectively.

Authors’ Contributions

The authors contributed equally: CEVG developed the cSR testbed and conducted the experiments, NGH conducted and supervised the protocol implementation and synthesis of results, VPV coordinated the project and the control system implementation, SEUC programmed the vision system and assisted in the low-level integration, and EOD verified the cSR model and its implementation.

Author Disclosure Statement

The authors declare neither competing nor personal financial interests.

Funding Information

No specific research grant provided funds for this research.