Abstract

Over 10 million uninsured individuals are eligible for subsidized health insurance coverage through the Affordable Care Act (ACA) marketplaces, and millions more were projected to become eligible with the end of the federal COVID-19 Public Health Emergency in 2023. Individual studies on behaviorally informed interventions designed to encourage enrollment suggest that some are more effective than others. This study summarizes evidence on the efficacy of these interventions and suggests which administrative burdens might be most relevant for potential enrollees. Published and unpublished studies were identified through a systematic review of studies assessing the impact of behaviorally informed interventions on ACA marketplace enrollment from 2014 to 2022.

Thirty-four studies comprising over 18 million participants were included (32 randomized controlled trials and 2 quasiexperimental studies). At the time of data extraction, 8 were published. Twenty-seven of the studies qualified for inclusion in a meta-analysis, which found that the average rate of enrollment was about 1 percentage point higher for those who received an intervention (0.009, P < 0.001), a 24% increase relative to control households; for every 1000 people who receive an intervention, that would correspond to about 9 additional enrollees. When stratifying by intervention intensity, support-based interventions increased enrollment by 2 percentage points (0.020, P = 0.004), while information-based interventions increased enrollment by 0.6 percentage points (0.006, P < 0.001).

The meta-analysis found that behaviorally informed interventions can increase ACA marketplace enrollment. Interventions aimed at alleviating compliance costs by providing enrollment support were about three times as effective as information alone.

Introduction

The passage of the American Rescue Plan Act in 2021 and Inflation Reduction Act in 2022 dramatically expanded the Patient Protection and Affordable Care Act’s (ACA) subsidies through the end of 2025. These policies have contributed to record-high marketplace enrollment and record-low uninsured rates. 1 Despite these coverage gains, an estimated 10.9 million individuals eligible for subsidized health insurance remain uninsured, likely due in part to a lack of awareness of low-cost coverage options available through the marketplaces. 2 In addition, an estimated 18 million individuals are projected to lose Medicaid coverage with the end of the federal COVID-19 Public Health Emergency in 2023 and will be at risk of gaps in coverage. 3

Given the dual challenges of addressing enrollment frictions for the remaining uninsured and for those transitioning from Medicaid, state and federal marketplace administrators need to understand what strategies are effective at preserving—and expanding upon—the coverage gains in recent years. This systematic review and meta-analysis evaluate and synthesize the evidence from published and unpublished studies on the effects of behaviorally informed interventions on ACA marketplace enrollment.

Methods

The authors conducted a systematic review and meta-analysis, following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines. 4

Search strategy and data sources

The study selection protocol is detailed in Supplementary Appendix SA1. It involved English-language searches of three databases in September 2022 (PubMed, CINAHL, and Google Scholar) and four registries from September to November 2022 (PROSPERO systematic review registry, Open Science Framework, the American Economic Association Randomized Controlled Trials registry, and ClinicalTrials.gov). The following search terms were used: (“The ACA” OR “The Affordable Care Act” OR Obamacare OR “The Patient Protection and Affordable Care Act”) AND (enrollment OR “take up” OR uptake OR takeup OR “take-up”) AND “health insurance” AND (intervention OR increas* OR strateg* OR nudg* OR impact OR evidence).
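The search string combines four concept groups. Within a group, terms are joined with OR. Across groups, the clauses are joined with AND. The sketch below rebuilds the string from those groups, which can help anyone trying to reproduce the search. The quoting rule is an assumption inferred from the string as printed: quote phrases and hyphenated terms, and leave wildcard stems such as `increas*` bare. Each database (PubMed, CINAHL, Google Scholar) has its own query syntax, so the output may still need per-database adjustment.

```python
# Sketch: rebuild the Boolean search string from its concept groups.
# Assumed rule (inferred from the printed string): quote terms containing a
# space or hyphen, parenthesize multi-term groups, and join groups with AND.

CONCEPT_GROUPS = [
    ["The ACA", "The Affordable Care Act", "Obamacare",
     "The Patient Protection and Affordable Care Act"],
    ["enrollment", "take up", "uptake", "takeup", "take-up"],
    ["health insurance"],
    ["intervention", "increas*", "strateg*", "nudg*", "impact", "evidence"],
]


def format_term(term: str) -> str:
    """Quote phrases and hyphenated terms; leave single words and wildcards bare."""
    if " " in term or "-" in term:
        return f'"{term}"'
    return term


def build_query(groups: list[list[str]]) -> str:
    parts = []
    for group in groups:
        clause = " OR ".join(format_term(t) for t in group)
        # Only groups with more than one term need parentheses around the OR clause.
        parts.append(f"({clause})" if len(group) > 1 else clause)
    return " AND ".join(parts)


if __name__ == "__main__":
    print(build_query(CONCEPT_GROUPS))
```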

The search strategy also included efforts to mitigate potential publication bias and to reflect the understanding that practitioners may conduct studies for internal use without publishing the findings. Because of issues such as p-hacking, reviewing only published work on ACA interventions could lead to misleading conclusions. 5 To identify gray literature and unpublished studies, the authors hand-searched reference lists and conducted outreach to researchers and practitioners. They met with staff from four US federal government organizations and four nongovernmental research organizations, and they placed a call for studies in a relevant organization’s newsletter. They also reached out to state-based marketplaces and all-payer claims databases in 6 states; the authors heard from all 6 and met with 4. In particular, one author (A.F.) extracted data from unpublished randomized controlled trials conducted by Covered California, California’s ACA marketplace. In addition, the authors discussed the project with representatives from two private health insurance brokers and with three teams of academic researchers.

Study selection

For the systematic review, selection criteria required studies to be credibly causal 6 empirical evaluations (randomized controlled trials or quasiexperimental studies) of interventions designed to increase ACA marketplace enrollment. Accordingly, studies focused on coverage for children or older adults through the Children’s Health Insurance Program or Medicare were excluded. Only studies focused on marketplace enrollment were included; research on continuity of care or on helping people find an optimal plan was set aside. Studies conducted after January 2014, when most major provisions of the ACA were in force, were included.

One author (L.M.) screened the identified studies, removed duplicates, and reviewed the title and abstract to assess whether each met the inclusion criteria, in consultation with other authors. If a study’s abstract met the criteria, the full paper was reviewed. To validate these decisions, two authors (A.K.C. and A.F.) coded a random sample of 20 studies each, with full concurrence on final inclusion decisions. A.F. screened 20 unpublished studies by Covered California to identify which met the eligibility criteria.

To qualify for inclusion in the meta-analysis, studies identified in the systematic review: (1) compared marketplace enrollment outcomes between a treatment group and a group that did not receive any intervention as a result of the study; (2) measured both treatments and outcomes at the individual/household level, such that all of the constituent studies have similar outcomes (a binary measure of ACA enrollment); and (3) reported a sample size, an effect size, and a measure of variance, either directly or by providing enough information that they could be calculated.
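The three criteria work as a conjunction: a study enters the meta-analysis only if all of them hold. The sketch below expresses that screening rule. It assumes a hypothetical `StudyRecord` type whose fields stand in for the coded study characteristics; they are not the authors' extraction schema. It also assumes that for criterion (3), sample size, effect size, and variance have already been filled in wherever they could be derived from reported information.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StudyRecord:
    # Hypothetical fields for illustration only.
    name: str
    has_untreated_control: bool      # (1) comparison group received no study intervention
    individual_level: bool           # (2) treatment and outcome measured per individual/household
    binary_enrollment_outcome: bool  # (2) outcome is a 0/1 ACA enrollment indicator
    sample_size: Optional[int]       # (3) reported or derivable; None if unavailable
    effect_size: Optional[float]
    variance: Optional[float]


def meta_analysis_eligible(study: StudyRecord) -> bool:
    """Return True only if all three inclusion criteria are satisfied."""
    reports_stats = (
        study.sample_size is not None
        and study.effect_size is not None
        and study.variance is not None
    )
    return (
        study.has_untreated_control
        and study.individual_level
        and study.binary_enrollment_outcome
        and reports_stats
    )
```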

One author (L.M.) screened studies identified in the systematic review for inclusion and created study covariates related to intervention types (eg, information-only treatments vs. treatments that included a support component or personalized vs. generic interventions), in consultation with other authors (A.F., E.S., and A.K.C.). An independent reanalyst validated this process. This narrowed the list of 34 studies identified in the systematic review to 27 that were eligible for inclusion in the meta-analysis.

Data extraction and synthesis

For each eligible study, information was extracted regarding the study design and methods, population of interest, intervention type, outcomes, and variance of measured effects. The full list of variables the team attempted to extract for each study is included in the appendix. To identify study-level risk of bias, studies were coded using the JAMA Quality Rating Scheme for Studies and Other Evidence, which rates studies from 1 (properly powered and conducted randomized clinical trial) to 5 (opinion of respected authorities; case reports). 7

Studies identified in the systematic review tested interventions aimed at people looking for health insurance through the ACA marketplaces. The authors coded these interventions into two categories: information-based interventions and interventions that provided some enrollment assistance. These categories align with two types of administrative burdens that might affect prospective enrollees: learning costs (eg, understanding eligibility, benefits of enrollment, and the steps involved) and compliance costs (eg, navigating a marketplace, submitting an application, and completing the enrollment process). 8 Information-only interventions could help address learning costs; interventions that involve enrollment support might alleviate compliance costs as well. For many consumers, navigating the many choices offered on the marketplaces is not only confusing but also overwhelming. 9 For these consumers, support-based interventions may also address a third category of administrative burden: psychological costs. 8

To explore effect size heterogeneity across subgroups of studies in the meta-analysis, the authors conducted analyses to see if findings differed by study context, including location and timing relative to the January 31, 2020, federal determination of the COVID-19 Public Health Emergency; characteristics of the intervention, including whether it addressed learning or compliance costs, and personalization of content; and whether study participants had previously interacted with the marketplace or not. Intervention cost was closely aligned with whether the intervention included a support component, and there was no variation in study quality among the studies that qualified for the meta-analysis, so it was not possible to test for heterogeneity of effects across these categories.

Statistical Analysis

A random effects meta-analysis model was used to estimate an average pooled treatment effect across outreach interventions. 10,11 Weighting effect sizes by the inverse of their variance ensured that more precise effect sizes contributed relatively more to estimates. 12 The authors accounted for within-study statistical dependence using a correlated and hierarchical effects (CHE) approach. 13 Note that because all effect sizes in the sample are based on similar comparisons between a randomized outreach intervention and a true control group, and because the inclusion criteria required studies to be closely aligned in terms of outcome measurement—a household-level, binary indicator of ACA enrollment—unstandardized effect sizes were chosen for ease of interpretation.
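Inverse-variance random-effects pooling can be made concrete with a short example. The sketch below uses the simpler DerSimonian–Laird estimator. That is a swapped-in technique: it assumes every effect size is independent, whereas the authors' CHE model handles multiple treatment arms within a study. The inputs in the usage lines are hypothetical, not data from the included studies.

```python
import math


def random_effects_dl(effects, variances):
    """DerSimonian-Laird random-effects pooling of independent effect sizes.

    Returns (pooled_estimate, standard_error, tau_squared).
    """
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("need at least two effect sizes with matching variances")
    # Fixed-effect inverse-variance weights and estimate.
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    # Cochran's Q and the method-of-moments estimate of between-study variance.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c)
    # Random-effects weights add tau^2 to each sampling variance, so more
    # precise estimates still count more, but less overwhelmingly.
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return pooled, se, tau2


if __name__ == "__main__":
    # Hypothetical effect sizes (as proportions) and their sampling variances.
    est, se, tau2 = random_effects_dl([0.004, 0.006, 0.020, 0.012],
                                      [1e-6, 4e-6, 2.5e-5, 9e-6])
    print(f"pooled = {est:.4f} (SE {se:.4f}), tau = {math.sqrt(tau2):.4f}")
```

Because the effects are unstandardized proportions, the pooled estimate reads directly as a percentage-point difference in enrollment.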

Below, this article provides estimates for the average effect of ACA outreach across the studies included in this meta-analysis, summarizes various estimates of the degree of treatment effect heterogeneity, and reviews results from the subgroup analyses. All analyses were performed in R Statistical Software. 14 Supplementary Appendix SB1 outlines the meta-analysis strategy in more detail, presents results for all findings reported in the text, reviews the relationships between some of the subgroup variables, and discusses a series of robustness checks.

Systematic Review Results

Description of the evidence

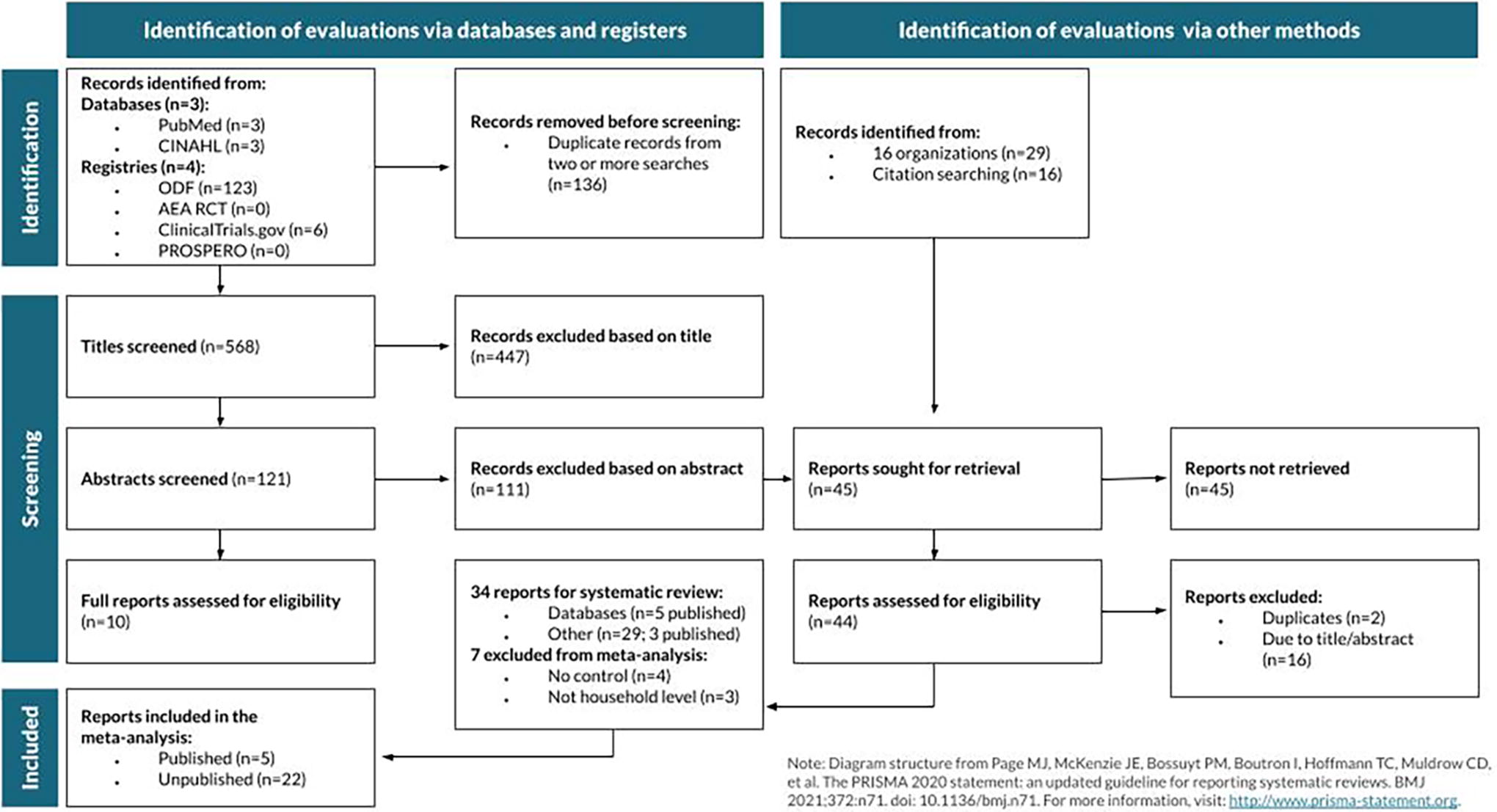

The search process, outlined in Figure 1, identified 703 studies through databases and registries and 45 studies through hand searches and individual outreach; 34 met the inclusion criteria for the systematic review. Of these 34, 32 were randomized controlled trials, covering nearly 18 million participants. Two were quasiexperimental studies: one used matching to compare enrollment rates across states with and without outreach, and one used a regression discontinuity approach to identify the effects of advertisements. At the time of data collection in 2022, 8 were published and 26 were unpublished. Eight were studies of national (or near-national) interventions, 22 were of interventions in California, and 1 each took place in Kentucky, Massachusetts, Maryland, and Colorado. Data collection years ranged from 2014 to 2022.

Most studies that met the inclusion criteria for the systematic review and meta-analysis were unpublished.

Interventions aimed at learning costs

Most of the studies evaluated interventions aimed at learning costs; these studies are described in Table 1. The interventions in this category were diverse, delivering information via text message, e-mail, direct mail, television advertisements, and website banners. Interventions were largely delivered during enrollment periods and generally contained information about the enrollment process, such as upcoming deadlines, marketplace web or contact information, estimated costs, and recommended enrollment options. Many incorporated components informed by behavioral science, such as action language or social norm messaging, and some included an element of personalization, such as a premium estimate tailored to the recipient. Supplementary Appendix SD1 describes three examples in more detail and explains the decision to code them as information-based interventions.

Table 1. Twenty-Four Studies of Interventions Aimed at Learning Costs

*P < 0.1.

**P < 0.05.

***P < 0.01.

indicates studies published at the time of data collection in 2022.

indicates a quasiexperimental study, rated 4 on the JAMA Quality Rating Scheme. All other studies are randomized controlled trials, rated 1.

indicates a study that qualified for inclusion in the systematic review but not in the meta-analysis. All other studies were included in the meta-analysis.

indicates a study that did not report P values for estimated effects.

indicates a study on the effects of an intervention on enrollment in any plan, including Medicaid.

Of these 24 studies, 7 were national studies (including one that covered the 37 states on HealthCare.gov in 2015), 14 were based in California, and 1 each took place in Kentucky, Maryland, and Colorado. Twenty-three were randomized controlled trials covering sample populations totaling 17,678,399; 1 was a quasiexperimental study. Effect sizes ranged from 0.006 to 2.95 percentage points, and 19 were unpublished at the time of data collection for the meta-analysis in 2022.

Interventions aimed at learning and compliance costs

Ten studies evaluated interventions aimed at alleviating burdens associated with both learning and compliance costs; these studies are described in Table 2. The key difference between these support-based interventions and the information-based interventions described above is that they included some element of assistance beyond the passive provision of information. For example, many of these interventions included a phone call in which a representative could give a potential enrollee personalized support as they began to consider and navigate the enrollment process. Others built in mechanisms to support potential enrollees once they started the process, such as a streamlined enrollment process. Supplementary Appendix SD1 outlines two examples.

Table 2. Ten Studies of Interventions Aimed at Learning and Compliance Costs

*P < 0.1.

**P < 0.05.

***P < 0.01.

indicates studies published at the time of data collection in 2022.

indicates a quasiexperimental study, rated 4 on the JAMA Quality Rating Scheme. All other studies are randomized controlled trials, rated 1.

indicates a study that qualified for inclusion in the systematic review but not in the meta-analysis. All other studies were included in the meta-analysis.

indicates a study that did not report P values for estimated effects.

Of these 10 studies, 9 were randomized controlled trials and 1 was a quasiexperimental study; the randomized studies covered a total sample population of 310,127. One of these studies was nationwide, 1 was in Massachusetts, and 8 were in California. Seven were unpublished at the time of data collection. Most effect sizes were larger than those of the information-only interventions described above; effect sizes in this category ranged from 0.4 to 5.9 percentage points. The meta-analysis that follows explores this difference in effects by intervention strategy more systematically.

Meta-analysis results: Average estimated effects and heterogeneity

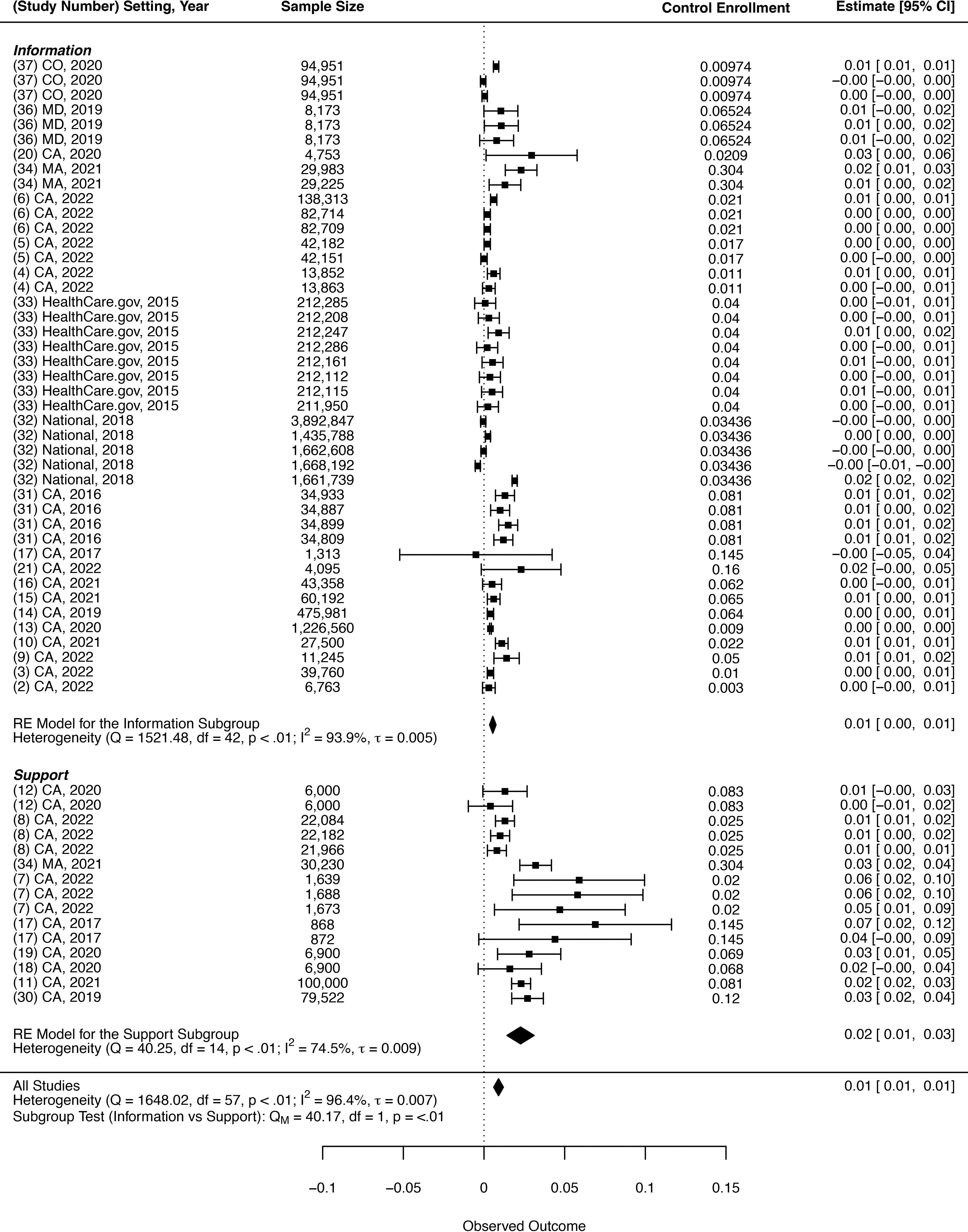

Twenty-seven of the studies identified in the systematic review qualified for inclusion in the meta-analysis, covering 58 treatment arms; 22 of these studies were unpublished at the time of data collection. Across interventions, the average enrollment rate in the control groups, weighted by sample size, was 3.8%. For those who received an intervention, the meta-analysis indicates that the enrollment rate was 0.9 percentage points higher, a 24% increase (0.009, P < 0.001; 95% confidence interval (CI) [0.006, 0.012]).
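The headline figures follow from two numbers in the text: the 3.8% weighted control-group enrollment rate and the 0.009 pooled effect. The sketch below reproduces the 24% relative increase and the roughly 9 additional enrollees per 1000 people reached. It also adds the reciprocal of the effect, analogous to a number needed to treat, which is derived here and not reported by the authors.

```python
def summarize_effect(control_rate: float, effect: float, per: int = 1000):
    """Translate an absolute effect (a proportion) into relative and per-capita terms."""
    relative_increase = effect / control_rate   # effect relative to the control-group rate
    additional_enrollees = effect * per         # extra enrollees per `per` people reached
    reached_per_enrollee = 1.0 / effect         # analogue of a number needed to treat
    return relative_increase, additional_enrollees, reached_per_enrollee


rel, extra, nnt = summarize_effect(control_rate=0.038, effect=0.009)
print(f"relative increase: {rel:.0%}")
print(f"additional enrollees per 1000 reached: {extra:.0f}")
print(f"people reached per additional enrollee: {nnt:.0f}")
```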

The 95% prediction interval in which the effects of a new intervention might fall, if its target population were selected at random from the combined population of the meta-analysis, was −0.006 to 0.024. Prediction intervals are analogous to confidence intervals, incorporating effect size heterogeneity as an additional source of uncertainty. The prediction interval was wider than the confidence interval by approximately 1.2 percentage points in either direction, suggesting noteworthy heterogeneity in the impacts of ACA outreach interventions.
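A prediction interval widens the confidence interval by adding the between-study variance τ² to the squared standard error: θ̂ ± z·√(τ² + SE²). The sketch below backs out the SE from the reported 95% CI, assuming that interval is symmetric and normal-based. It then combines that SE with τ = 0.007, the value reported in the next paragraph. This normal approximation is a simplification: the authors' CHE model has more than one variance component and may use a t reference distribution, so the result is only approximate.

```python
import math
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # two-sided 95% standard normal quantile


def se_from_ci(lower: float, upper: float, z: float = Z_95) -> float:
    """Back out a standard error from a symmetric 95% confidence interval."""
    return (upper - lower) / (2 * z)


def prediction_interval(estimate: float, se: float, tau: float, z: float = Z_95):
    """Normal-approximation 95% prediction interval for the effect in a new setting."""
    half_width = z * math.sqrt(tau ** 2 + se ** 2)
    return estimate - half_width, estimate + half_width


se = se_from_ci(0.006, 0.012)
low, high = prediction_interval(0.009, se, tau=0.007)
print(f"SE ~ {se:.4f}; 95% PI ~ [{low:.3f}, {high:.3f}]")
```

With these rounded inputs the sketch gives roughly −0.005 to 0.023, close to the reported −0.006 to 0.024. A gap of this size is expected from the rounding and the simpler model.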

Further heterogeneity estimates are provided in Figure 2, a forest plot. Cochran’s Q test rejects the null hypothesis of no effect size heterogeneity (Q = 1648.02, df = 57, P < 0.01). The authors also estimated τ = 0.007, which can be interpreted as the standard deviation of the distribution of possible true effect sizes; this value is almost as large as the pooled effect estimate of 0.009. Lastly, the I-squared estimate of 96.4% suggests that the overwhelming majority of the variance in effect sizes across the sample stems from true heterogeneity rather than sampling error.
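I-squared can be checked roughly from Q and its degrees of freedom using the classic Higgins–Thompson formula, I² = (Q − df)/Q. The sketch below applies it to the reported values and also compares τ with the pooled effect. The classic formula gives about 96.5%, slightly above the reported 96.4%. The small difference probably reflects a multilevel I² definition suited to the CHE model; that is an assumption, not something stated in the text.

```python
def i_squared(q: float, df: int) -> float:
    """Higgins-Thompson I^2: share of observed variation attributed to true heterogeneity."""
    return max(0.0, (q - df) / q)


i2 = i_squared(q=1648.02, df=57)
print(f"I^2 ~ {i2:.1%}")
print(f"tau / pooled effect ~ {0.007 / 0.009:.2f}")
```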

Interventions with support components aimed at learning and compliance costs increased enrollment more than information alone.

The forest plot illustrates that treatments that involved support, ie, those aimed at alleviating compliance costs, were more effective than information-only interventions, which aim to alleviate learning costs. Support-based interventions increased enrollment by 2 percentage points (0.020, P = 0.004; 95% CI [0.010, 0.030]), while information-based interventions increased enrollment by 0.6 percentage points (0.006, P < 0.001; 95% CI [0.004, 0.008]). A cluster-robust Wald test rejected the hypothesis that average effects are equal across these subgroups (P = 0.010).
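The statement that support was "about three times as effective" compares the two subgroup point estimates. The sketch below computes that ratio and restates both effects as additional enrollees per 1000 people reached. It uses point estimates only; the support-based interval ([0.010, 0.030]) is wide, so the ratio itself is imprecise.

```python
support_effect = 0.020  # support-based interventions, as a proportion (2 percentage points)
info_effect = 0.006     # information-only interventions (0.6 percentage points)

ratio = support_effect / info_effect
per_1000 = {name: eff * 1000 for name, eff in
            [("support", support_effect), ("information", info_effect)]}

print(f"support / information ~ {ratio:.1f}x")
print({name: round(extra) for name, extra in per_1000.items()})
```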

Personalized interventions were also more effective. Personalized interventions increased enrollment by 1.2 percentage points (0.012, P < 0.001; 95% CI [0.008, 0.016]), while interventions with no personalized component increased enrollment by 0.5 percentage points (0.005, P = 0.011; 95% CI [0.002, 0.009]). A cluster-robust Wald test rejected the hypothesis that average effects are equal across these subgroups (P = 0.045).

In addition, outreach interventions may be more effective among people with some prior interaction with the marketplaces, such as those who have initiated the enrollment process by submitting an application. Among those with some prior interaction, interventions increased enrollment by 1.2 percentage points (0.012, P < 0.001; 95% CI [0.007, 0.017]), compared with 0.6 percentage points (0.006, P = 0.004; 95% CI [0.002, 0.010]) among those with no prior interaction. A cluster-robust Wald test rejected the hypothesis that average effects are equal across these subgroups (P = 0.014).

In contrast, there was no evidence of statistically significant differences in estimated effect sizes across study setting, year, or timing relative to the declaration of the federal COVID-19 Public Health Emergency.

Discussion

As policymakers seek to help the remaining uninsured obtain health insurance and to facilitate coverage transitions for those losing Medicaid, this systematic review and meta-analysis found that interventions aimed at addressing administrative burdens caused statistically significant increases in ACA take-up. Although information-based interventions targeting learning costs increased ACA enrollment, support-based interventions targeting both learning and compliance costs were significantly more effective. Outreach with personalized elements also produced significantly larger increases in ACA enrollment than outreach without them. Finally, outreach was more effective among people who had already interacted with the marketplace in some way, such as by starting or submitting an application. These findings underscore the benefits of behaviorally informed outreach interventions while also documenting variation in their relative efficacy.

Many states are conducting outreach, creating resources, training assisters, and taking other proactive steps to increase enrollment. 15 Prior research has found that informational interventions tend to be more effective among those less in need, 16,17 whereas support-based interventions tend to help more disadvantaged subpopulations. 18,19 For example, in California’s ACA marketplace, informational nudges delivered via mail and email were most effective among penalty payers and healthier individuals, while personalized enrollment assistance was most effective among older consumers, low-income consumers, and those with a Spanish language preference. With that in mind, policymakers concerned about equitable take-up will need to deploy more support-based interventions. 20

One of the key considerations in implementing these more effective and more equitable support-based interventions is that they may be more resource-intensive than alternatives. Cost estimates were not available for all the interventions covered in this systematic review and meta-analysis, so additional research on the cost effectiveness of providing support in addition to information would be constructive. However, a prior randomized evaluation of support-based outreach indicated that even more resource-intensive outreach interventions can be cost-effective for agencies, providing a two-to-one return on investment. 18

In addition, the cost difference between providing support or personalization and providing information alone may be modest. In one study, nonpersonalized letters were associated with an overall cost per new enrollee of $191. 21 In comparison, an evaluation of personalized telephone outreach tested an intervention that cost approximately $224 per new member acquired. 18 Personalization may be an even lower-cost alternative: a study testing personalized messages found an overall cost per new enrollment of approximately $50 during a special enrollment period and $41 during an open enrollment period. 17
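Cost per additional enrollee scales with outreach cost and inversely with effect size. Halving the effect doubles the cost of each new enrollee at the same spending. The sketch below shows that generic relationship, which is an assumption for illustration and not a formula taken from the cited studies. It also computes the relative gap between the two per-enrollee costs quoted above. Those studies may define "cost per new enrollee" differently, so the comparison is only approximate. The $1 cost per person contacted is a hypothetical input.

```python
def cost_per_additional_enrollee(cost_per_contact: float, effect: float) -> float:
    """Outreach cost per person contacted divided by the enrollment gain per person."""
    if effect <= 0:
        raise ValueError("effect must be positive")
    return cost_per_contact / effect


# Relative gap between the two per-enrollee costs reported in the text.
letters, phone = 191.0, 224.0
print(f"phone outreach costs {phone / letters - 1:.0%} more per new enrollee")

# Hypothetical inputs: $1 per person contacted and a 0.9-percentage-point effect.
print(f"${cost_per_additional_enrollee(1.0, 0.009):,.0f} per additional enrollee")
```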

Yet even when states offer enrollment assistance, take-up remains incomplete. Structural approaches, such as autoenrollment, may be necessary to connect people to benefits for which they are eligible. An evaluation of a streamlined enrollment process, which required a marketplace to manually process paper forms returned by mail, benchmarked effects to the reduction in premiums needed for a commensurate increase in enrollment: a decrease in premiums of $39 per year would be necessary to increase enrollment as much as streamlined enrollment. 22

Limitations

Systematic reviews and meta-analyses carry risks, which the authors worked to mitigate. Publication bias is a concern, so the systematic review process included a thorough review of unpublished studies. This was intended both to broaden the knowledge base around interventions to support health insurance enrollment and to reduce the chance of overestimating effects in the meta-analysis, as published studies are often more likely than unpublished studies to report statistically significant findings. The value of mitigating publication bias by including unpublished evaluations outweighs any potential risks due to methodological differences between published and unpublished evaluations; including these evaluations also extends the knowledge base beyond prior systematic reviews of published studies. Effect sizes also tend to be larger in quasiexperiments than in randomized experiments, 23 so the meta-analysis included only experiments with true controls; excluding quasiexperimental studies, however, limits the types of interventions covered. Finally, it is possible that the systematic review search missed relevant studies.

Another challenge for meta-analyses is the potential for study-level biases, which can produce inaccurate pooled averages. However, because this study focuses on exploring sources of heterogeneity rather than simply estimating an overall pooled effect size, this is less of a concern. In addition, the authors’ assessments of study-level quality suggest minimal risk of bias among the included studies.

All 27 of the studies included in the meta-analysis are randomized controlled trials, which require high organizational capacity to plan, execute, and analyze. It is possible that high research capacity is correlated with stronger tools overall or a focus on innovation at the organizational level, which would limit the generalizability of these results to settings in which organizations are working with fewer resources or different orientations toward innovation.

In addition, most of the studies included took place in California, which could raise questions around generalizability. However, effect sizes were statistically similar across California and non-California studies, eligibility criteria for marketplace coverage are broadly similar across states such that marketplaces serve similar types of consumers, and the reported interventions sought to address commonly cited barriers to take-up such as limited awareness and the complexity of the application and enrollment process. Furthermore, California’s demographics reflect predicted demographic trends in the rest of the United States, 24 so findings in California could have future relevance elsewhere. For these reasons, these results are well-positioned to inform outreach efforts across the ACA marketplaces.

Conclusions

This systematic review and meta-analysis demonstrate that outreach interventions can increase ACA marketplace enrollment; even with small effect sizes, the implications at a population level are still meaningful. Interventions aimed at alleviating compliance costs by providing enrollment support were about three times as effective as information alone. These support-based interventions are likely to be more resource-intensive, but they have been found to be cost-effective. 18 Amid the end of the federal COVID-19 Public Health Emergency and the availability of enhanced marketplace subsidies, these findings have implications for policymakers looking for effective strategies to preserve the coverage gains in recent years.

Author Disclosure Statement

This publication does not represent the views of the General Services Administration (GSA) or the federal government. The co-authors of this article granted unlimited and unrestricted rights to GSA to use and reproduce all materials in connection with the co-authorship. This publication was written independently by the authors and did not go through internal Office of Evaluation Sciences (OES) review beyond the analyses presented in the OES abstract. For validation, the publication’s analyses relied on the journal’s peer review process rather than the OES project process.

Supplementary Material

Supplementary Appendix SA1

Supplementary Appendix SB1

Supplementary Appendix SC1

Supplementary Appendix SD1

References
