Abstract

Translation of spinal cord injury (SCI) therapeutics from pre-clinical animal studies into human studies is challenged by effect size variability, irreproducibility, and misalignment of evidence used by pre-clinical versus clinical literature. Clinical literature values reproducibility, with the highest grade evidence (class 1) consisting of meta-analysis demonstrating large therapeutic efficacy replicating across multiple studies. Conversely, pre-clinical literature values novelty over replication and lacks rigorous meta-analyses to assess reproducibility of effect sizes across multiple articles. Here, we applied modified clinical meta-analysis methods to pre-clinical studies, comparing effect sizes extracted from published literature to raw data on individual animals from these same studies. Literature-extracted data (LED) from numerical and graphical outcomes reported in publications were compared with individual animal data (IAD) deposited in a federally supported repository of SCI data. The animal groups from the IAD were matched with the same cohorts in the LED for a direct comparison. We applied random-effects meta-analysis to evaluate predictors of neuroconversion in LED versus IAD. We included publications with common injury models (contusive injuries) and standardized end-points (open field assessments). The extraction of data from 25 published articles yielded n = 1841 subjects, whereas IAD from these same articles included n = 2441 subjects. We observed differences in the number of experimental groups and animals per group, insufficient reporting of dropout animals, and missing information on experimental details. Meta-analysis revealed differences in effect sizes across LED versus IAD stratifications; for instance, severe injuries had the largest effect size in LED (standardized mean difference [SMD] = 4.92), but mild injuries had the largest effect size in IAD (SMD = 6.06). Publications with smaller sample sizes yielded larger effect sizes, while studies with larger sample sizes had smaller effects. The results demonstrate the feasibility of combining IAD analysis with traditional LED meta-analysis to assess effect size reproducibility in SCI.

Introduction

Spinal cord injury (SCI) affects up to 580,000 new patients worldwide every year, and an estimated 1.5–5.2 million patients are suffering from the consequences of SCI.1,2 Despite rising optimism, 3 no pharmaco-biological interventions are widely effective in human SCI. Translation of SCI therapeutics from pre-clinical animal studies into humans is limited by fundamental disconnects in how the pre-clinical and clinical scientific literature weigh evidence.4–6 Pre-clinical results are judged based on the biological novelty of findings and elegance of the methods, whereas clinical findings are judged on their stability in the face of clinical variation. In clinical research, systematic reviews and meta-analyses demonstrating reproducible therapeutic effects are considered the highest quality of evidence (class-I evidence), representing a major tool for weighing evidence for clinical decision support. 7 Conversely, pre-clinical literature values primary data analysis over secondary analysis to assess reproducibility. The lack of reproducibility testing in pre-clinical literature has led some clinical researchers to question the translational value of animal models for predicting clinical trial outcomes. 8 Yet, clinical translation in SCI is relatively new 1 and depends on sustained pioneering efforts to drive ongoing bidirectional “translational dialogue.”9–11 Aligning the evidentiary basis of pre-clinical and clinical findings represents a fundamental gap, bridgeable through meta-science.

The overall goal of the present study is to assess the feasibility of developing class-I evidence in pre-clinical research through meta-analysis. The SCI community has pioneered efforts to recover individual animal data (IAD) from inaccessible article records (“dark data”), 12 making them findable, accessible, interoperable, and reusable (FAIR) for translational decision support in the Open Data Commons for SCI (ODC-SCI).13,14 This opens the possibility of meta-analysis including literature-extracted data (LED) and IAD analyzed in parallel. Applying meta-analytical methodologies to animal studies to identify, appraise, select, and synthesize all available high-quality research evidence remains a challenging task. Pioneering efforts by the Collaborative Approach to Meta-Analysis and Review of Animal Data in Experimental Studies (CAMARADES) group starting in the early 2000s focused on pre-clinical stroke research.15,16 CAMARADES methods have been extended to numerous neurological disease models, including SCI.17–19

Heterogeneity and reporting biases within pre-clinical studies have been proposed to contribute to the translational disconnect of pre-clinical and clinical SCI research. 18 The ARRIVE (Animal Research: Reporting of In Vivo Experiments) guidelines provide a checklist to articulate dropout of animals because of morbidity/mortality; however, this practice is often not followed.20,21 Statistical tools that detect when subjects have been selectively removed from published analyses (publication bias) suggest that data on “dropout” in animal studies lead to substantial overstatement of efficacy in SCI experiments by up to 40.9%. 18 In clinical literature, “loss-to-follow-up” is a common problem that is acknowledged, reported, and statistically managed. This inflation of effect sizes because of publication bias is common across fields. 22 One limitation of literature-based meta-analysis is that statistical tools used to infer missing data rely on data estimable from published literature, in which author curation and editorial decisions shaped the nature of the data selected for inclusion. With the rise of data-sharing mandates from funders and journals, it is now possible to directly analyze individual data points undergirding the published literature. 23 Hence, investigating individual data represents an opportunity to directly quantify the impact of publication bias and the relevance of noncurated data for effect size.

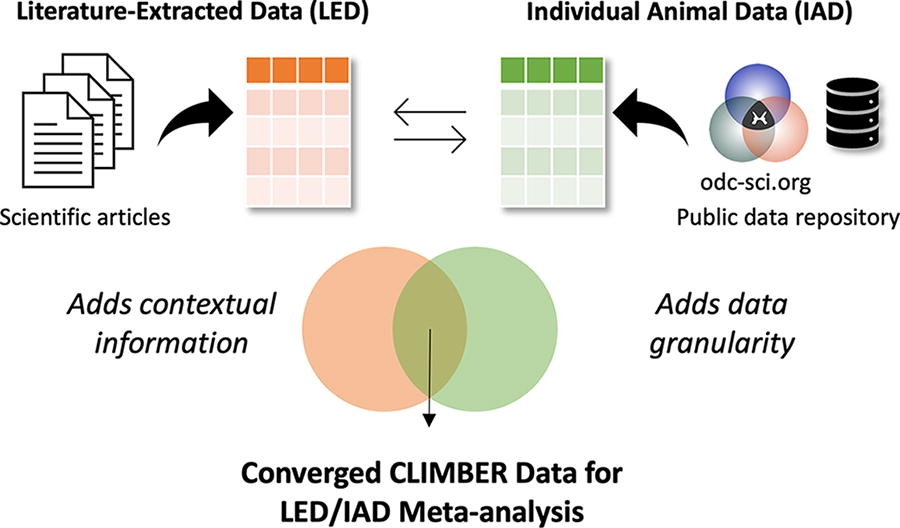

In this study, we performed LED and IAD meta-analysis to study the following: (1) the effects missing data have on published findings, (2) predictors of significant functional improvement (neuroconversion) in animals from numerous studies, and (3) feasibility of benchmarking translation across pre-clinical research (Fig. 1).

Fig. 1. Diagram representing the process of CLIMBER meta-analysis. Converging data from public data repositories (odc-sci.org) and scientific articles allows for the unique meta-analysis done by CLIMBER.

Methods

Search and selection of publicly available articles

Articles were selected through a methodical review of publications with the corresponding IAD publicly accessible within ODC-SCI 13 (odc-sci.org, RRID:SCR_016673) as of October 2021 and the VISION-SCI data repository. 24 As of April 2024, the ODC-SCI is the only National Institutes of Health (NIH)-supported specialist repository that complies with federal data management and sharing policies. 25 When publicly available raw data on subjects are posted on ODC-SCI, information about corresponding article publication is listed under “Provenance and Originating Publications” on the dataset landing page. We linked the datasets to their published literature using the PubMed identifiers and publication information posted with datasets in ODC-SCI. To prepare for a comparable and reproducible analysis, publications needed to meet the following criteria: (1) have available corresponding IAD, (2) be a pre-clinical SCI study, (3) include neurobehavioral outcomes with time points, (4) report the number of animals per experimental group, and (5) report the mean effect size and its variance (standard deviation or standard error of the mean). All articles included one of three animal species (mice, rats, nonhuman primates). After selecting articles for inclusion, we confirmed that our IAD matched with LED shown in the selected peer-reviewed article by taking advantage of the fact that the Open Data Commons for Traumatic Brain Injury (ODC-TBI) system requires data uploaders to report unique subject identifiers and experimental groups for each subject. This allowed us to compare the reported sample sizes for each group from the published article to the IAD, cross-reference subject groupings, and align IAD to LED.
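The group-level cross-check described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the function name `compare_group_sizes`, the record fields `subject_id` and `group`, and the example numbers are all hypothetical.

```python
from collections import Counter


def compare_group_sizes(led_group_n, iad_records):
    """Compare per-group n reported in an article (LED) with counts
    derived from subject-level rows (IAD)."""
    # Count subjects per group; rows with no group label cannot be assigned.
    iad_n = Counter(rec["group"] for rec in iad_records if rec.get("group"))
    report = {}
    for group in sorted(set(led_group_n) | set(iad_n)):
        reported = led_group_n.get(group)  # None if absent from the article
        observed = iad_n.get(group)        # None if absent from the raw data
        report[group] = {
            "led_n": reported,
            "iad_n": observed,
            "match": reported == observed,
        }
    return report


# Hypothetical example data (not taken from any study in the paper).
led = {"vehicle": 10, "treated": 10}
iad = (
    [{"subject_id": f"A{i}", "group": "vehicle"} for i in range(12)]
    + [{"subject_id": f"B{i}", "group": "treated"} for i in range(10)]
    + [{"subject_id": "C0", "group": None}]  # unassignable subject is skipped
)
result = compare_group_sizes(led, iad)
```

In this toy case the vehicle group would be flagged (10 reported, 12 observed), which is the kind of mismatch in animals per group that the comparison is meant to surface.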

Data extraction from publications

Two independent reviewers systematically extracted values from the figures and tables of published articles. When data points were only expressed graphically, we used WebPlotDigitizer (v.4.5) to extract data. Data points expressed serially were recorded numerically. On some occasions, information was impossible to extract because of poor plot quality or digitization issues (e.g., pseudo-3D plots with distorted proportionality). We included every time point for which all experimental groups had an outcome score. Additional categorical variables were collected for subgroup analyses. Datasets from the two independent reviewers were merged and reviewed to eliminate errors. Discrepancies were adjudicated through supervised discussions by a third reviewer. The final dataset included data and information from all selected articles with many variables, including the following: animal age, species, sex, outcome measure, outcome scale, injury details, author information, and publication information. The full LED analysis dataset is publicly available at odc-sci.org (RRID:SCR_016673; https://dx.doi.org/10.34945/F5DG6D).

Identification of IAD

After extraction of data from published articles, corresponding IAD were exported from ODC-SCI and combined with VISION-SCI. Datasets contained different approaches for expressing identical information, such as different labels and units (e.g., time postoperation was reported in both days and weeks). To ensure consistency and facilitate meaningful comparisons, datasets were merged and variables were harmonized to consistent units across the unified dataset. To minimize the potential for human error, the process of merging and harmonizing this dataset was executed exclusively with the tidyverse package in R (version 4.3.0).26,27 The full IAD dataset for analysis is publicly available at odc-sci.org (RRID:SCR_016673; https://dx.doi.org/10.34945/F5J59P).
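As an illustration of the unit harmonization step, the sketch below converts time-postoperation values to days. The authors did this in R with the tidyverse; this Python version is only an analogue, and the function name `to_days` and the accepted unit labels are assumptions.

```python
def to_days(value, unit):
    """Convert a time-postoperation value to days.

    The accepted unit labels are illustrative; real datasets may use others.
    """
    factors = {"day": 1, "days": 1, "dpo": 1, "week": 7, "weeks": 7, "wpo": 7}
    key = unit.strip().lower()
    if key not in factors:
        # Fail loudly rather than silently guessing an unknown unit.
        raise ValueError(f"Unknown time unit: {unit!r}")
    return value * factors[key]
```

For example, `to_days(6, "weeks")` gives 42, so a six-week assessment lines up with a 42 DPO assessment from another dataset.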

Inclusion criteria for meta-analysis

Stringent inclusion criteria helped manage heterogeneity between studies. We only included publications that featured data from common injury models and standardized end-points. As contusive injuries made up 48.6% (895/1841) of LED, we excluded publications reporting other injury models. Included publications reported at least one of the following assessment measures: the Basso, Beattie, and Bresnahan Locomotor Scale (BBB Score), 28 Grooming Test, 29 Forelimb Open Field Scores, 30 the Basso Mouse Scale (BMS Score), and BMS Subscores. 31 Each study included neurobehavioral recovery scores over time. Variations between the durations of behavioral assessments prompted selection of specific time points for analysis to establish a more uniform time frame across the different studies. Studies were included if they reported outcome assessment scores obtained between 0 and 3 days postoperative (DPO) and again at 42 DPO (±14 DPO). There were no inclusion or exclusion criteria related to study intervention. In the IAD, we were sometimes unable to determine which experimental group a subject belonged to because of missing values or lack of information. Subjects with undetermined experimental grouping were excluded.
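The time-window rule can be sketched as below: a baseline between 0 and 3 DPO and an endpoint between 28 and 56 DPO (42 ± 14), taking the assessment closest to 1 DPO and 42 DPO, respectively. The function names are hypothetical, and breaking ties in favor of the first listed day is an assumption, since the text does not say how ties were handled.

```python
def pick_time_point(days, target, low, high):
    """Return the assessment day within [low, high] closest to target, or None."""
    candidates = [d for d in days if low <= d <= high]
    if not candidates:
        return None
    # min() keeps the first candidate on ties (an assumption of this sketch).
    return min(candidates, key=lambda d: abs(d - target))


def select_window(days):
    """Return (baseline_day, endpoint_day), or None if either is missing."""
    baseline = pick_time_point(days, target=1, low=0, high=3)
    endpoint = pick_time_point(days, target=42, low=28, high=56)
    if baseline is None or endpoint is None:
        return None
    return baseline, endpoint
```

A study assessed at days 1, 7, 14, 28, and 42 would contribute the pair (1, 42), whereas a study with no assessment between 28 and 56 DPO would not qualify.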

Analysis

To compare diverse outcomes, we used standardized mean difference (SMD) as the effect size metric, in accordance with meta-analysis guidelines. 32 Standardized effect size allows for comparable results across studies with different outcome scales and measurement. We selected outcome measures that were closest to 1 DPO (within 0 and 3 days) serving as baseline and 42 DPO (within 28 and 56 days) to determine the respective effect for each experimental group. To derive standardized effect sizes for this percent change, we calculated SMD for paired samples using the formula from Borenstein 32:

The variance of d was calculated using the following:

And the standard error of d was calculated using the square root of Vd.
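For orientation, the paired (pre-post) SMD in Borenstein and colleagues' meta-analysis text is usually written as below. This is a sketch of that standard form, not a confirmed transcription of the authors' equations. Here the mean difference is taken between the endpoint (about 42 DPO) and baseline (about 1 DPO) scores, S_diff is the standard deviation of those differences, n is the number of animals in the group, and r is the baseline-endpoint correlation. The value of r is not stated in the text, so any value plugged in is an assumption.

```latex
d = \frac{\bar{Y}_{\mathrm{diff}}}{S_{\mathrm{within}}}, \qquad
S_{\mathrm{within}} = \frac{S_{\mathrm{diff}}}{\sqrt{2\,(1 - r)}}

V_d = \left( \frac{1}{n} + \frac{d^{2}}{2n} \right) 2\,(1 - r), \qquad
SE_d = \sqrt{V_d}
```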

SMD effect size is a standardized statistical entity, reflecting the degree of improvement relative to the baseline. This standardized measure enables us to compare diverse end-points and therapies using a consistent benchmark.

A random-effects meta-analysis was performed to calculate an overall estimate of effect size, using the metagen function from the meta package in R with a significance level set at

Results

Literature search and selection

The initial search and data selection are shown in Figure 2. The initial search identified 11 articles from the ODC-SCI (Table 1) and 24 articles from the VISION-SCI data set (Table 2), for a total of 35 publications. Of these publications, 10 were excluded because of the following: histology only (no function), unavailable comparison data, duplicated studies, and studies with incomparable data (bioenergetics and review articles). This resulted in 25 included publications with n = 1841 for LED and n = 2441 for IAD. After applying inclusion criteria, 7 eligible publications were included for LED (n = 311 subjects; 25 experimental groups; Supplementary Table S1) and IAD (n = 304 subjects; 21 experimental groups; Supplementary Table S2). We then performed individual experimental group matching, aligning IAD and LED. In the IAD, there were instances where the experimental grouping for some subjects was not reported, which impaired our ability to directly compare with the animals in the LED as we were unable to determine their appropriate cohort. Owing to these incomplete data and insufficient information, 37 subjects were excluded. Our final analysis resulted in 21 experimental groups for LED (n = 285 animals; Table 3) and 20 experimental groups for IAD collated from the VISION-SCI database and uploaded to the ODC-SCI repository (n = 293; Table 4). We observed misalignment of the numbers of experimental study groups in two cases, as well as differences in the reported scores and in the number of animals per group.

Fig. 2. Flowchart illustrating the process of literature screening and selection. The initial search identified 35 articles with corresponding data in ODC-SCI and VISION-SCI. Ten of these articles were excluded for various reasons, which resulted in 25 articles with n = 1841 for literature-extracted data and n = 2441 for subject-level data. Inclusion criteria allowed for 7 eligible publications with n = 311 subjects (25 experimental groups) for literature-extracted data and n = 304 subjects (21 experimental groups) for individual animal data. Groups and subjects with lack of information were eliminated because of the inability to match subjects from literature-extracted data to individual animal data. Final inclusion resulted in n = 285 (21 experimental groups) animals for literature-extracted data and n = 293 (20 experimental groups) for individual animal data. ODC, open data commons; SCI, spinal cord injury.

Table 1. Corresponding ODC-SCI DOI Numbers of Evaluated Articles

ODC, open data commons; SCI, spinal cord injury.

Table 2. Evaluated Articles from VISION-SCI Data Repository

SCI, spinal cord injury.

Table 3. Summary of Experimental Groups from Literature-Extracted Data

BBB, Basso, Beattie, and Bresnahan Locomotor Scale; BMS, Basso mouse scale open-field score.

Table 4. Summary of Experimental Groups from Individual Animal Data

BBB, Basso, Beattie, and Bresnahan Locomotor Scale; BMS, Basso mouse scale open-field score.

Comparison of published and IAD experimental groups

Random-effects meta-analyses on the 21 groups from the LED and the 20 groups from the IAD are depicted in Figure 3. For the LED (Fig. 3a), the random-effects model (HK) yielded an SMD of 4.23 (95% CI: [3.14; 5.31]), showing beneficial effect sizes across the groups. Expected between-study heterogeneity was confirmed by heterogeneity analysis (I² = 95%, τ² = 4.7084, p < 0.01). An identical meta-analysis model on the corresponding IAD is shown in Figure 3b. The random-effects model on IAD revealed a larger SMD of 4.83 (95% CI: [3.14; 6.52]) but a similar pattern to LED. Significant heterogeneity was detected in IAD (I² = 91%, τ² = 10.0634, p < 0.01).
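To make the quantities reported here (pooled SMD, τ², I²) concrete, the sketch below implements a basic random-effects pooling. It is deliberately simplified and does not reproduce the authors' analysis: it uses the DerSimonian-Laird τ² estimator and a normal-based confidence interval, whereas the paper used the `metagen` function from the R meta package with an HK-labeled model, whose τ² estimator is not stated in the text. The function name and example inputs are hypothetical.

```python
import math
from statistics import NormalDist


def random_effects_dl(effects, variances, alpha=0.05):
    """Random-effects pooling with the DerSimonian-Laird tau^2 estimator.

    Simplification: Wald (normal) interval, not the adjustment used in the paper.
    """
    k = len(effects)
    w = [1.0 / v for v in variances]  # inverse-variance (fixed-effect) weights
    sw = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c) if c > 0 else 0.0
    i2 = max(0.0, (q - (k - 1)) / q) * 100 if q > 0 else 0.0
    # Random-effects weights add tau^2 to each within-group variance.
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return {
        "smd": pooled,
        "se": se,
        "ci": (pooled - z * se, pooled + z * se),
        "tau2": tau2,
        "i2": i2,
    }


# Hypothetical inputs: two groups with SMDs of 1 and 3 and variance 0.1 each.
fit = random_effects_dl([1.0, 3.0], [0.1, 0.1])
```

With these toy inputs the between-group variance dominates the within-group variance, which is the situation a high I² describes.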

Fig. 3. Identical random-effects models (HK) run on both the literature-extracted and individual animal data.

Subgroup analysis

To assess the impact of various factors within both meta-analyses, we performed a stratified analysis on the subgroups as demonstrated in Figure 4. When assessing the LED, stratification for the animal type (p < 0.01, Fig. 4b), level of injury (p < 0.01, Fig. 4d), animal strain (p < 0.01, Fig. 4e), and sample size (p < 0.01, Fig. 4f) accounted for a significant proportion of between-study heterogeneity. For the IAD, all stratifications accounted for statistically significant proportions of heterogeneity; however, the LED did not confirm this for animal sex (Fig. 4a) and injury severity (Fig. 4c).
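One common way to test whether a stratification explains heterogeneity is a between-subgroup Q statistic computed from the subgroup pooled estimates. The sketch below shows that calculation under stated assumptions: whether the authors' software computed the subgroup test in exactly this way is not stated in the text, and the function name and inputs are hypothetical.

```python
def q_between(estimates, standard_errors):
    """Between-subgroup Q statistic and its degrees of freedom.

    estimates: pooled SMD per subgroup; standard_errors: their standard errors.
    Under the null of no subgroup difference, Q is compared with a
    chi-square distribution on (number of subgroups - 1) degrees of freedom.
    """
    weights = [1.0 / se ** 2 for se in standard_errors]
    pooled = sum(w * e for w, e in zip(weights, estimates)) / sum(weights)
    q = sum(w * (e - pooled) ** 2 for w, e in zip(weights, estimates))
    return q, len(estimates) - 1


# Hypothetical subgroup estimates (not values from the paper).
q, df = q_between([1.0, 3.0], [1.0, 1.0])
```

A large Q relative to its degrees of freedom indicates that the subgroup means differ by more than their standard errors would suggest.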

Fig. 4. A stratified analysis on subgroups was performed to assess impact of various factors.

Although we observed comparable SMD values for some stratifications, the absolute effect sizes did not match across the majority of the stratifications. For injury severity (Fig. 4c), level of injury (Fig. 4d), and animal strain (Fig. 4e), we did not observe comparable trends in the reported effect sizes. Within LED, severe contusion injuries showed the highest effect size (Fig. 4c, SMD: 4.92 [95% CI: 3.40; 6.44]), whereas for the IAD, mild contusion injuries had the highest effect size (Fig. 4c, SMD: 6.06 [95% CI: 3.40; 8.71]). Cervical injuries revealed the lowest effect size within the LED (Fig. 4d, SMD: 1.91 [95% CI: 0.98; 2.83]). In IAD, the lowest effect size was detected for lumbar injuries (SMD: 1.29 [95% CI: −4.30; 6.88]). Within the stratification for animal strain, Long–Evans rats showed the lowest effect size in the LED (Fig. 4e, SMD: 2.93 [95% CI: 1.41; 4.44]); however, Sprague–Dawley rats performed worst in the IAD (SMD: 2.43 [95% CI: 0.12; 4.73]). Figure 4 shows a side-by-side comparison of the subgroup analyses for the literature-extracted and individual animal data. The separate analyses can be viewed in the supplementary material: see Supplementary Figure S1 for LED and Supplementary Figure S2 for IAD.

Discussion

We performed a matched meta-analysis to directly compare IAD with their literature-reported summaries. We observed notable mismatches between the LED and the IAD in the number of experimental groups, reported number of animals per group, and reported recovery effect sizes. Notably, IAD analysis had smaller error bars, reflecting higher power than LED. This echoes work in the clinical literature reporting that individual participant data meta-analysis improves sensitivity for detecting true effects over LED.6,17,18,37

Starting with publicly available IAD in the ODC-SCI, we identified 25 matching SCI articles reporting results derived from the same cohort. We then applied the CAMARADES meta-analysis data extraction protocol to the full text (Fig. 2). We observed large differences in the number of experimental groups, number of animals per group, and group labels. Focused analysis on the most commonly used injury models and behavioral outcome measurement reduced the pool from 25 to 7 publications and reduced the total number of animals for the LED by 83% (from n = 1841 to n = 311; n = 285 after group matching), yielding a more homogeneous dataset for analysis. Subsequent comparisons of LED with IAD revealed the impact of reporting bias. Our analysis revealed notable effect size differences between LED and IAD analyses (Fig. 4). For example, IAD analysis uncovered significant sex differences that did not reach significance in the LED meta-analysis. In addition, LED and IAD nominated discrepant effects of strain on outcome.

Stratification by injury severity revealed the largest effect sizes for mild contusion injuries in IAD, confirming a common biological assumption of SCI researchers that greater sparing provides a better substrate for recovery. However, the LED nominated severe contusions as having the largest effect size, a counterintuitive finding from a clinicopathological standpoint. We also observed distinct trends for neurobehavioral recovery depending on the segmental level of injury, with LED analysis nominating cervical SCI as having the smallest effect sizes, whereas IAD nominated lumbar SCI as having the smallest effect sizes. Results also suggested that groups with smaller sample sizes had larger effect sizes, which has been previously interpreted to reflect that researchers terminate studies early upon seeing large effects, even though these effects likely reflect statistical noise rather than true effect sizes.18,38 This analysis highlights potential inflation of effect sizes in inadequately powered small studies. Prior studies have shown that smaller sample sizes may lead to overoptimistic conclusions, with effect sizes appearing higher than they would be with adequate sample size. 39 It is noteworthy that LED analyses often produced unrealistically small error bars when compared with the IAD from the same subjects (Fig. 4f).

The results provide opportunities for translational dialogue with clinical meta-analysis, the major tool for ranking evidence in clinical decision support. 7 The clinical classes of evidence (CoE) grading system places meta-analysis of randomized controlled clinical trials as the highest grade of clinical evidence (Class I). 40 Pre-clinical and mechanistic studies are ranked as the weakest evidence, meaning that pre-clinical research has little impact on clinical decision-making. The clinical CoE system is so different from the pre-clinical literature definition of “robust effects” that it is difficult for clinicians to gauge pre-clinical research and make objective, informed decisions about which therapies should advance into clinical studies. As a result, translation may occur in a haphazard manner based on journal impact and citations rather than rigor and reproducibility. Prior reports suggest that most pre-clinical therapies reported in high-impact journals cannot be independently replicated, 41 leading to the perception that pre-clinical literature lacks standardization and relies on underpowered studies with questionable evidentiary basis. 42 Yet, pre-clinical research makes up the majority of biomedical scientific literature and represents the majority of the global research investment in biomedicine. For example, a recent report from the U.S. NIH suggests that it spends over 50% of its budget on basic science and continues to view pre-clinical science as the bedrock of biomedical discovery. 43 Yet, considerably fewer resources are dedicated to assessing the reproducibility of these discoveries, resulting in what some have called “canonization of false facts.” 44

Specifically for the field of pre-clinical SCI research, the current study confirms that publications as a final “scientific product” contain highly selected datasets. Reporting selective subsets of data may unintentionally sway results to more desirable outcomes, potentially obscuring true effect sizes. These findings place an emphasis on the need for better transparency in reporting, including sharing individual subject data, instead of letting it become dark data, data inaccessible to the research community.12,45 Without access to IAD, it is difficult to evaluate which experimental therapeutics are best candidates for clinical translation.

This work has some limitations. Because of the 37 subjects with missing experimental grouping information, we could only partially register individual subjects to their corresponding representation in published articles. This may have affected the reported neurobehavioral recovery, as attrition bias (e.g., the removal of outliers) has been reported to significantly modify results even if only very few animals had been removed from a particular experimental group. 46 In addition, our analysis cannot discriminate whether the chosen outcome parameters were truly justified to detect the best possible effect in all the published experiments. This may influence the results since outcome testing modalities need to be chosen carefully with respect to the specific injury model, severity, and location. 47

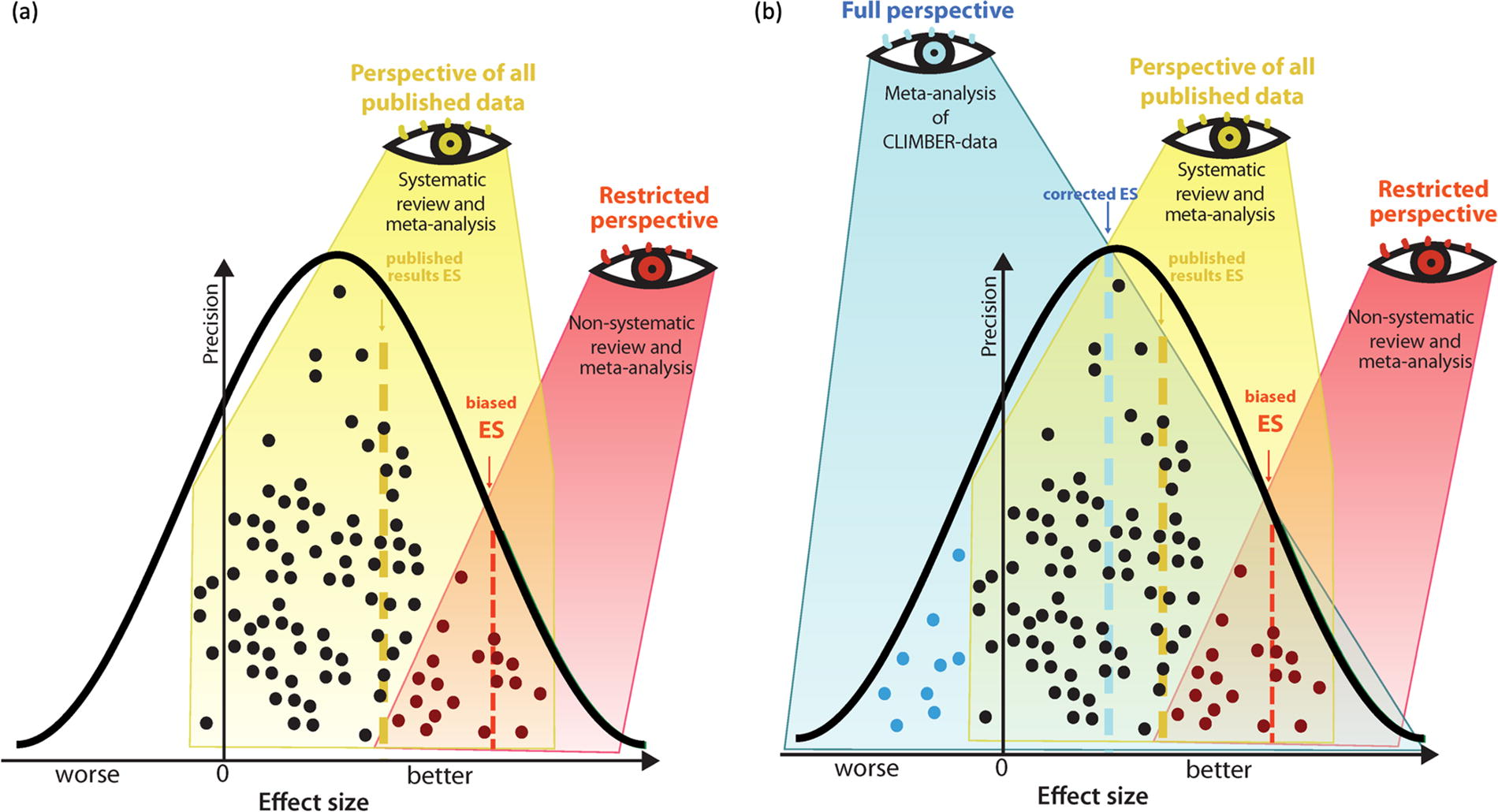

Together, the results demonstrate the utility of combining IAD analysis and traditional literature-sourced meta-analysis to explore effect size reproducibility in SCI. By combining IAD with LED, future work will be able to gain additional insights on findings across diverse studies, helping to improve the predictive value for clinical translation (Fig. 5). Augmented, combinatorial analysis strategies may allow for higher evidence levels of pre-clinical findings. The results underline the added value of the FAIR data sharing (ODC-SCI) to better understand missing data to improve robustness of translation.

Fig. 5. Traditional meta-analysis versus meta-analysis of CLIMBER data.

Footnotes

Acknowledgments

The authors are thankful to all the laboratories and colleagues for their efforts, transparency, trust, and generosity in partnering and uploading their data to the ODC-SCI database, which allowed us to conduct this analysis.

Authors’ Contributions

E.G.I.: Data curation, methodology, visualization, formal analysis, writing—original draft, and reviewing and editing. A.K.: Data curation, methodology, formal analysis, writing—original draft, and reviewing and editing. K.A.F.: Data curation, methodology, and writing—reviewing and editing. A.V.K.: Conceptualization, supervision, and writing—reviewing and editing. L.M.D.: Resources and writing—reviewing and editing. J.M.S.: Conceptualization, visualization, supervision, and writing—reviewing and editing. A.R.F.: Conceptualization, supervision, writing—reviewing and editing, and funding acquisition. A.T.-E.: Methodology, investigation, visualization, formal analysis, writing—reviewing and editing, and supervision. R.W.: Methodology, investigation, formal analysis, visualization, writing—original draft, reviewing and editing, and supervision.

Author Disclosure Statement

There are no conflicts of interest for any of the authors.

Funding Information

R.W. and A.K. received funding from the Ministeriums fuer Wissenschaft, Forschung and Kunst (MWK), Baden-Württemberg, Germany (MWK#1030015201). J.M.S. received funding support from the National Institutes of Neurological Disorders-NIH (Grant R01NS118200), the Craig H. Nielsen Foundation (CHNF#596764), the Wings-for-Life Spinal Cord Research Foundation (#WfL-US-08/22), and the W.E. Hunt & C.M. Curtis Endowment. J.M.S. is a Discovery Theme Initiative Scholar (Chronic Brain Injury) at the Ohio State University. A.T.-E. received funding support from the Wings for Life Spinal Cord Research Foundation and the Canadian Institute of Health Research (CIHR#496623). A.R.F. received funding from Wings for Life Foundation as the major sponsor of the current work, as well as funds from NIH grants: R01NS122888; UH3NS106899, U24NS122732; U.S. Veterans Affairs: I01RX002245, I01RX002787, I01BX005871, I50BX005878; Craig H. Nielsen Foundation, and the UC Noyce Initiative.

