Abstract

Timely outbreak detection and response can translate into illnesses averted and lives saved. As such, timeliness is an important criterion for evaluating performance of infectious disease surveillance systems. Through the use of clearly defined outbreak milestones, timeliness metrics can capture the speed of outbreak detection, verification, response, and other key actions across the timeline of an outbreak and evaluate progress over time. In this article, we describe a series of country-level pilot studies designed to assess the feasibility and utility of tracking timeliness metrics and highlight key findings. We then discuss subsequent efforts to develop a timeliness metrics measurement framework through expert consultation and provide recommendations for implementation. National surveillance programs, international agencies, and donor organizations can use timeliness metrics to identify gaps in surveillance performance and track progress toward improved global health security.

Introduction

The frequency of infectious disease outbreaks is increasing globally, posing a substantial risk to both population health and economic activity. 1 In June 2018, for the first time, active outbreaks of Ebola, Middle East respiratory syndrome, Zika, Nipah virus, Lassa fever, and Rift Valley fever occurred simultaneously, all of which are considered World Health Organization (WHO) priority diseases. 2 The current COVID-19 pandemic illustrates that emerging diseases are a constant threat, resulting in grave consequences when not quickly detected and contained.3-5 Early detection and rapid response are crucial for mitigating the impact of outbreaks of emerging and reemerging diseases. Rapidly identifying causative pathogens, ascertaining modes of transmission, and implementing control measures can translate into a marked reduction in morbidity and mortality, minimizing social and economic disruption.6,7 Given this reality, it is imperative to routinely assess the world's ability to quickly find and contain any high-consequence outbreak at its source. Monitoring the timeliness of outbreak detection, verification, notification, and response can provide insight on surveillance capabilities and indicate where performance might be improved. 8

Evaluations of outbreak detection and response timeliness are difficult without consistent, standardized methods for analyzing timeliness trends across key surveillance functions. Previous studies in this area have focused on the timeliness of reporting (notification) of cases or outbreaks to national notifiable disease surveillance systems to identify areas for improvement.8,9 Other studies have examined the length of outbreak investigations and the timeliness of analytical studies to characterize those outbreaks. 10 In the Netherlands, a study assessed the impact of reporting delays on outbreaks, noting that realistic improvements in measles and mumps case reporting, when combined with sufficient vaccine coverage, could make notable improvements in outbreak control conditions. 11 While each of these studies makes valuable contributions, they do not establish guidance for routine timeliness evaluations of disease surveillance functions at the national or subnational level. In this article, we state the case for tracking the timeliness of outbreak surveillance actions and share guidance for capturing such measures. At the same time, we acknowledge that timeliness is but a single important aspect for evaluation of disease surveillance systems, and should be considered alongside features such as validity, flexibility, acceptability, stability, and cost of implementation. 12

Tracking timeliness of outbreak surveillance actions complements existing evaluative frameworks currently used at the country level. Health authorities are required by the 2005 revisions to the International Health Regulations (IHR) to define the etiologic agent, source, and mode of spread when an outbreak occurs within their jurisdiction, and to notify the international community of such information. 13 Country capacity for conducting core surveillance activities identified by the IHR is monitored through State Parties Self-Assessment Annual Reporting to WHO and the Joint External Evaluation (JEE) process. The State Parties Self-Assessment Annual Reporting (SPAR) tool enables countries to report on 24 indicators related to the 13 IHR capacities needed to detect, assess, notify, report, and respond to public health risk and acute events of domestic and international concern. 14 The JEE is a voluntary, collaborative process to periodically assess country capacity to (1) prevent, detect, and rapidly respond to public health risks; (2) assess country-specific progress; and (3) recommend priority actions to be taken across 19 technical areas. 15 As of April 7, 2020, the JEE process had been completed by 113 countries. 16

While the SPAR and JEE tools have proven valuable in identifying gaps and driving preparedness planning and investments, they focus on assessing country capabilities rather than performance. A framework for assessing the timeliness of disease surveillance can complement such efforts by providing a set of quantitative performance measures for tracking progress routinely and consistently, as efforts like the JEE are implemented only on a periodic basis.

Methods

Chan et al 17 measured and analyzed the trends in timeliness using 4 outbreak milestones applied to events from the WHO's Disease Outbreak News from 1996 to 2009. The authors found that the global speed of outbreak discovery and public communication had improved overall by 7.3% and 6.2% per year, respectively. In 2016, a 5-year update to the Chan et al study indicated improvements in global capacity for timely disease detection had continued, but at a slower rate. 18 However, in both studies the ability to stratify by disease type or examine geographic factors at a level more granular than WHO regions was limited. More granular data at the national and subnational levels must be examined to better characterize these trends and provide actionable insights for governments.

Since 2014, Ending Pandemics has focused on developing definitions for outbreak milestones and corresponding timeliness metrics that can be used at the national and subnational level for monitoring performance in disease surveillance. Ending Pandemics partnered with HealthMap of Boston Children's Hospital, the International Society for Infectious Diseases' Program for Monitoring Emerging Diseases, and the Task Force for Global Health's Training Programs in Epidemiology and Public Health Interventions to adapt the methods from Chan et al for use by national health agencies to assess timeliness of outbreak surveillance actions. 17

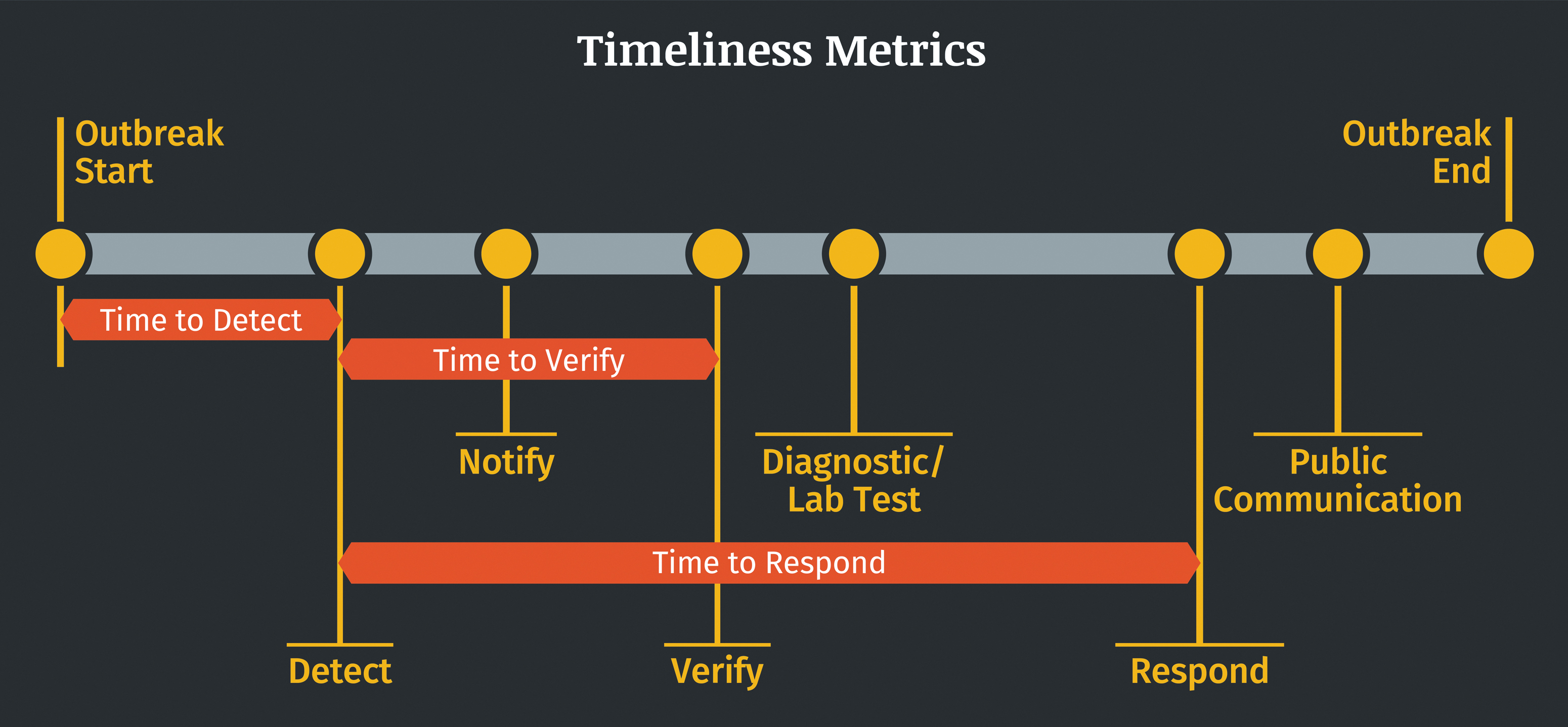

At the crux of this effort was identifying and defining key outbreak milestones from which timeliness metrics could be calculated. The identified outbreak milestones represent the dates of key surveillance actions, including date of detection, date of verification, and date of response to an outbreak. Timeliness metrics are defined as the time interval between 2 outbreak milestones. For example, timeliness of outbreak detection is calculated as the time interval between the Outbreak Start and the Detect milestones (Figure 1). Following methods established by Chan et al, 17 the median time for each timeliness metric can be calculated for outbreaks during the selected time period, and a univariate Cox proportional hazards regression analysis can be applied to analyze the changes in median timeliness for each metric. The initial version of the outbreak milestones used to establish the timeliness metrics for country-level studies is described in Smolinski et al 19 and provided in Table 1.
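As a minimal sketch of the calculation described above, the following Python snippet computes a timeliness metric as the number of days between 2 outbreak milestones and then takes the median across outbreaks. The record layout, the field names (`outbreak_start`, `detect`), and all dates are hypothetical and chosen only for illustration; they are not drawn from any pilot study.

```python
from datetime import date
from statistics import median

# Hypothetical outbreak records; field names and dates are invented for illustration.
outbreaks = [
    {"outbreak_start": date(2013, 3, 1), "detect": date(2013, 3, 4)},
    {"outbreak_start": date(2013, 6, 10), "detect": date(2013, 6, 20)},
    {"outbreak_start": date(2013, 9, 2), "detect": date(2013, 9, 7)},
]


def timeliness_days(record, start_key, end_key):
    """Return the days between two milestones, or None if either date is missing."""
    start, end = record.get(start_key), record.get(end_key)
    if start is None or end is None:
        return None
    return (end - start).days


# Timeliness of outbreak detection: Outbreak Start -> Detect.
intervals = [timeliness_days(r, "outbreak_start", "detect") for r in outbreaks]
intervals = [d for d in intervals if d is not None]  # drop outbreaks with missing dates
median_detection = median(intervals)

print(intervals, median_detection)
```

The same helper can be reused for any other pair of milestones (for example, detection to response) by changing the two keys.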

Figure 1. Illustration of example outbreak milestones and timeliness metrics.

Table 1. Pilot Study Outbreak Milestone Definitions

Notes: (a) As described in Smolinski et al 19; (b) outbreak threshold refers to the minimum number of cases and other criteria required to declare an outbreak for a particular disease.

Using the outbreak milestones developed for use at the national level, Ending Pandemics provided financial support and technical assistance for a series of retrospective studies designed to assess the feasibility of measuring the timeliness of key disease surveillance actions. Of primary interest was assessing the availability of outbreak milestones (date variables) within existing disease surveillance systems. These data were identified, extracted, and in many cases supplemented by additional documentation. Available outbreak milestones were then leveraged to assemble outbreak timelines and measure timeliness trends. Studies were initiated by field epidemiology training programs (FETPs) representing 18 countries: Barbados, Haiti, India, Indonesia, Kenya, Pakistan, Paraguay, Senegal, Taiwan, Tanzania, Zimbabwe, and a Central American collaboration through the Council of Ministers of Health of Central America that included Belize, Costa Rica, Dominican Republic, El Salvador, Guatemala, Honduras, and Panama. Two studies were conducted in India, 1 by the Chennai-based FETP (part of the National Institute of Epidemiology) and 1 by the Delhi-based FETP (housed within the Ministry of Health).

Similar pilots were developed with 4 countries in the Mekong Basin Disease Surveillance (MBDS) network (Cambodia, Laos, Myanmar, Vietnam) and 5 countries in the Southeast European Center for Surveillance and Control of Infectious Diseases (SECID) network (Albania, Bosnia and Herzegovina, Bulgaria, Kosovo, Macedonia). Both MBDS and SECID operate as multicountry, regional disease surveillance networks focused on detection and control of disease outbreaks.20-22

Countries established time periods for inclusion of retrospective outbreak data ranging between 5 and 12 years. Studies conducted by FETPs assessed infectious disease outbreaks during their selected time period, in many cases applying some of the exclusion criteria established by Chan et al, 17 such as excluding foodborne outbreaks, nonnatural cases due to laboratory accidents, or single cases of disease. Further detail on exclusion criteria can be found in Table 2. The MBDS and SECID networks each selected specific priority diseases for evaluation within their respective networks.21,22 When possible, countries stratified results by pathogen, disease type, and/or subnational geography to assess gaps in surveillance. Some countries also examined timeliness trends before and after key public health interventions to assess their potential impact on timeliness.

Table 2. Retrospective Pilot Study Results From Field Epidemiology Training Programs

Direct cross-country comparisons are not advised due to variations in time period, disease type, and total number of outbreaks examined. Select results from the studies are shown here as examples.

Abbreviations: FETP, field epidemiology training program.

Results

Data collection, analysis, and reported results varied by country due to differing priority diseases selected and wide variation in data availability. We will not attempt to comprehensively detail such a diverse set of studies, but we do share key findings. In total, 19 of the initial 27 countries participating in the retrospective studies were able to complete analyses of timeliness metrics, resulting in 20 studies to draw from due to the participation of 2 India-based FETPs. Of the 8 countries that did not complete analysis of timeliness metrics, most cited lack of data availability as the primary barrier to execution. The remaining 20 studies collectively reviewed 153 years of outbreak data. Individual country studies ranged in size from 56 outbreaks to 7,092 outbreaks over time frames ranging from 5 to 12 years, spanning 2003 through 2017. Results reported here are drawn from a series of published papers, unpublished project reports submitted by FETPs, and workshop presentations. A summary of results from FETP studies can be found in Table 2. Published papers and presentations are cited for the MBDS and SECID network results.21,22

Data availability for outbreak milestones varied considerably from country to country. No participating country consistently collected structured data for every outbreak milestone, although many were able to calculate trends for multiple timeliness metrics. In many countries, identifying the dates of outbreak milestones required extensive data extraction. Missing data and a lack of standardized data collection practices were common. Of the 20 studies, 15 (75%) were able to describe results for timeliness of outbreak detection and 12 (60%) described the timeliness of outbreak notification. In addition, 14 studies (70%) described the timeliness of outbreak response. Results for timeliness of laboratory confirmation and public communication were less common, with 7 (35%) and 9 (45%) studies reporting these metrics, respectively.
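Because a timeliness metric needs both of its milestone dates, a simple completeness check can show which metrics a dataset is able to support. The sketch below is one hypothetical way to run such a check; the field names and records are invented and do not reflect any country's surveillance system.

```python
from datetime import date

# Hypothetical milestone field names; real systems will use their own variables.
MILESTONES = ["outbreak_start", "detect", "notify", "respond"]

# Invented records with some milestone dates missing (None).
records = [
    {"outbreak_start": date(2015, 1, 5), "detect": date(2015, 1, 8),
     "notify": date(2015, 1, 9), "respond": None},
    {"outbreak_start": date(2015, 2, 1), "detect": None,
     "notify": date(2015, 2, 6), "respond": date(2015, 2, 7)},
    {"outbreak_start": date(2015, 4, 12), "detect": date(2015, 4, 13),
     "notify": None, "respond": date(2015, 4, 15)},
    {"outbreak_start": None, "detect": date(2015, 7, 2),
     "notify": date(2015, 7, 2), "respond": date(2015, 7, 4)},
]


def availability(rows, fields):
    """Share of records in which each milestone date is present."""
    return {f: sum(r.get(f) is not None for r in rows) / len(rows) for f in fields}


def metric_coverage(rows, start_key, end_key):
    """Share of records with both dates needed to compute one timeliness metric."""
    usable = sum(
        r.get(start_key) is not None and r.get(end_key) is not None for r in rows
    )
    return usable / len(rows)


per_milestone = availability(records, MILESTONES)
detection_coverage = metric_coverage(records, "outbreak_start", "detect")
print(per_milestone, detection_coverage)
```

In this invented example each milestone is present in 75% of records, yet only half of the records support the detection metric, which illustrates how coverage for a metric can be lower than coverage for either of its milestones.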

Of the 20 studies reported here, 13 included data on timeliness trends for each year included in the study timeline. The 7 studies not reporting yearly results calculated median measures of timeliness for the entire study period to provide a baseline from which further progress can be measured. Of the 13 studies with yearly timeliness data, 10 noted improvements in surveillance timeliness and 3 reported either negligible change or mixed results across specific timeliness metrics. For example, Senegal reported improvement in outbreak detection time, from a median of 10 days in 2003 to a median of 3.5 days in 2013. 23 Zimbabwe also found an overall significant reduction in outbreak detection time, from a median of 6 days in 2003 to a median of 1 day in 2013. 24 Furthermore, Honduras and Belize saw an overall improvement in timeliness of outbreak detection, from a median of 12 days in 2007 to a median of 2.5 days in 2016. 25
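The two reporting approaches described above, yearly medians and a single baseline median for the whole study period, can be sketched in a few lines of Python. The values below are invented for illustration and are not results from any pilot study.

```python
from collections import defaultdict
from statistics import median

# (year of outbreak start, days from outbreak start to detection); invented values.
detection_days = [
    (2011, 9), (2011, 12), (2011, 7),
    (2012, 6), (2012, 8),
    (2013, 3), (2013, 5), (2013, 4),
]

# Group the intervals by year, then take the median within each year.
by_year = defaultdict(list)
for year, days in detection_days:
    by_year[year].append(days)

yearly_median = {year: median(values) for year, values in sorted(by_year.items())}

# A single baseline for the full study period, for studies without yearly results.
overall_median = median(days for _, days in detection_days)

print(yearly_median, overall_median)
```

Years with very few outbreaks produce unstable medians, so a yearly series of this kind is best read alongside the number of outbreaks in each year.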

Timeliness metrics were used by some pilot countries to assess the impact of specific interventions designed to improve disease surveillance. Indonesia cited implementation of the Early Warning Alert and Response System as contributing to improvements in the speed of outbreak detection; a Cox proportional hazards regression demonstrated that the speed of outbreak detection had improved (hazard ratio, 1.8; 95% confidence interval [CI], 1.4 to 2.4) from 9 days (range 3 to 27) to 6 days (range 4 to 8). 26 Meanwhile, a Cox proportional hazards regression indicated that Integrated Disease Surveillance and Response implementation in Senegal was correlated with average annual improvements in timeliness of outbreak detection (15.2%), outbreak reporting (14.8%), laboratory confirmation (12.1%), public health response (14.3%), and public communication (9.8%) over a 10-year period. 23
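To make the link between a hazard ratio and a faster milestone more concrete, the sketch below fits a univariate Cox proportional hazards model in plain Python using Newton-Raphson on the partial likelihood. It is an illustration under stated assumptions, not the analysis used in any pilot study: the data are invented, every outbreak is assumed to have reached the milestone (no censoring), ties are handled with the Breslow approximation, and reading (hazard ratio - 1) x 100 as an annual percentage improvement is an assumption about interpretation. In practice, an established statistical package would normally be used, which also provides confidence intervals.

```python
import math


def cox_univariate(times, x, iterations=25):
    """Estimate the Cox coefficient for one covariate by Newton-Raphson.

    Assumes no censoring; tied event times use the Breslow approximation.
    """
    beta = 0.0
    for _ in range(iterations):
        grad, info = 0.0, 0.0
        for t_i, x_i in zip(times, x):
            # Risk set: outbreaks whose milestone had not yet occurred before t_i.
            risk = [x_j for t_j, x_j in zip(times, x) if t_j >= t_i]
            weights = [math.exp(beta * x_j) for x_j in risk]
            s0 = sum(weights)
            s1 = sum(w * x_j for w, x_j in zip(weights, risk))
            s2 = sum(w * x_j * x_j for w, x_j in zip(weights, risk))
            grad += x_i - s1 / s0                 # score contribution
            info += s2 / s0 - (s1 / s0) ** 2      # information contribution
        if info == 0:
            break
        step = grad / info
        beta += step
        if abs(step) < 1e-10:
            break
    return beta


# Invented data: years since a baseline year, and days from outbreak start to detection.
years_since_baseline = [0, 0, 0, 1, 1, 1, 2, 2, 2, 3, 3, 3]
days_to_detect = [12, 9, 5, 10, 6, 8, 7, 4, 9, 3, 6, 2]

beta = cox_univariate(days_to_detect, years_since_baseline)
hazard_ratio = math.exp(beta)
# Assumed interpretation: a hazard ratio above 1 per year means detection is reached sooner.
annual_change_pct = (hazard_ratio - 1) * 100

print(round(hazard_ratio, 2), round(annual_change_pct, 1))
```

A hazard ratio above 1 for the year covariate indicates that the milestone tends to be reached sooner in later years; a value below 1 would indicate slowing.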

The establishment of an FETP in some countries was correlated with both improvements in timeliness and an increase in outbreak reporting. For example, in Paraguay, establishment of an FETP in 2011 was correlated with a decrease in median outbreak reporting time from 25 days to 8 days, and a decrease in median response time from 4 days to 1 day. 27 Paraguay also noted a statistically significant increase of 87% in the overall number of outbreak reports available for the period after the FETP was established. A similar trend was also noted in Zimbabwe, with 86% of outbreak reports available during the study period following the establishment of an FETP in 2005.

Several countries stratified data at the subnational level to further describe differences in timeliness, including India, Indonesia, Pakistan, Senegal, Taiwan, and Zimbabwe. For example, the Chennai-based FETP in India noted a small but statistically significant gap between urban and rural settings in Tamil Nadu, with outbreak response times in rural communities taking twice as long (median of 2 days) as their urban counterparts (median of 1 day). 28 Furthermore, the study conducted by the Indonesia FETP found that timeliness varied across the country, as outbreaks on Java Island (median of 6 days) were detected twice as fast compared with outbreaks in other areas (hazard ratio, 2.1; 95% CI, 1.5 to 2.9). 26

In the Mekong Basin, results from studies conducted in Cambodia, Laos, Myanmar, and Vietnam are available in a study by Lawpoolsri et al, 21 with median reporting times, response times, and time to public communication broken down by year and disease. The countries agreed to examine a shared list of priority diseases that included dengue, food poisoning and diarrhea, diphtheria, measles, H5N1 and H1N1 influenza, rabies, and pertussis. Each country examined 6 years' worth of outbreak data from 2010 to 2015, ranging from 288 outbreaks to 715 outbreaks per country. The timeliness of outbreak notification, response, and communication to the public varied greatly, depending on the country, disease, and year.

An early analysis of SECID country data by Mersini et al, 22 presented at the International Meeting on Emerging Diseases and Surveillance in November 2018, examined timeliness trends from Albania, Bosnia and Herzegovina, Bulgaria, Macedonia, and Kosovo. In addition to median time to outbreak detection, reporting, and laboratory confirmation, the 5 SECID countries also reported results on the median time to patient hospitalization and median time to patient treatment. Each country selected priority diseases of particular relevance to them, including anthrax, brucellosis, Crimean-Congo hemorrhagic fever, measles, and tularemia. Across the participating countries, the median detection time was found to be 1 day; median time to laboratory confirmation, 8 days; median time to patient hospitalization, 3 days; and median time to treatment, 4 days. A full analysis of SECID network data was in development at the time of this writing.

Discussion

Development of a timeliness metrics framework arose out of a desire to assist countries in monitoring progress toward meeting the requirements of the IHR. When the first round of pilot studies was initiated in 2014, nearly 80% of the countries were either not meeting their IHR obligations or failing to report on their progress. 29 At that time, the JEE process was early in development, with the initial Global Health Security Agenda pilot assessments taking place in 2015 and the first JEE tool published by WHO in February 2016. 30 Initial planning for the Global Health Security Index, published in 2019, was only just beginning.31,32 Our goal was to develop and advocate for a set of key indicators that could be measured through existing surveillance infrastructure and that were robust enough to enable identification of gaps and areas of success.

Timeliness metrics are a critical and complementary evaluation tool for pandemic preparedness. Scores from the Global Health Security Index and the JEEs have not necessarily correlated with a country's ability to respond to the COVID-19 pandemic, emphasizing the need to monitor both capabilities and performance. 33 Hence, timeliness metrics generated by countries for the purpose of improving their own systems may help monitor progress toward meeting the IHR and provide metrics that can feed into larger evaluation efforts.

In the pilot studies highlighted here, the majority of country participants found the timeliness metrics to be useful for evaluating their infectious disease surveillance systems. Examining timeliness trends helped to identify gaps in surveillance capacity and data collection practices. Challenges and limitations, however, surfaced during the retrospective studies, including limited resources for data collection and extraction activities, lack of date variables for establishing outbreak milestones, and incomplete data. Nevertheless, the results reported here demonstrate the feasibility of measuring timeliness for outbreak surveillance, which in turn provides a starting point for understanding influencing factors that can be targeted for investment.

Ending Pandemics convened 2 international expert consultations in 2018 and 2019 with more than 60 representatives from 43 health agencies, nongovernmental organizations, universities, and foundations from the human, animal, and environmental health sectors to disseminate lessons learned from the pilots and address the aforementioned challenges. The meetings were conducted in partnership with the Salzburg Global Seminar to spearhead revisions to the outbreak milestone definitions, identify strategies to enable routine data collection, and consider how timeliness trends can be interpreted to inform improvements to surveillance systems across the One Health spectrum. One Health is a collaborative, multisectoral, and transdisciplinary approach that recognizes the interconnection between people, animals, plants, and their shared environment. 34

In-depth discussion and rigorous debate at the initial meeting resulted in 8 outbreak milestones with updated definitions for use by both public health agencies and other health organizations. The meeting summary and list of participants can be found in the meeting report, Finding Outbreaks Faster: How Do We Measure Progress? 35 The subsequent meeting in 2019 focused on revising the outbreak milestones further to incorporate environmental monitoring for prediction and prevention, as well as for use in animal outbreak surveillance. 36 The 11 outbreak milestones found in Table 3 reflect the broadening of definitions to ensure applicability across the One Health spectrum. 36

Table 3. Updated Outbreak Milestone Definitions, 2019

Definitions have been updated to reflect a One Health approach and ensure applicability across human, animal, and environmental health sectors. The sequence of the milestones may vary by outbreak. In some cases, a single action may represent more than 1 milestone. The definition of an outbreak may vary by disease, geography, or sector.

The after-action review milestone is included to inspire the necessary collaborations among sectors for operationalizing One Health.

These standardized outbreak milestones enable countries and public health organizations to define and calculate timeliness metrics relevant to them. Importantly, it was agreed that routine collection of the outbreak milestones would alleviate many of the challenges identified in the pilot studies. Modifications to event management systems and after-action report forms provide clear opportunities to routinely capture outbreak milestones where many of these variables may already be collected. Using such opportunities as starting points, countries can explore how to capture outbreak milestones in all relevant surveillance systems to ensure complete and continual data capture.

Guidance to support implementation of these metrics is being developed in partnership with several stakeholders. The outbreak milestones are now included in WHO's country guidance on after action reviews and simulation exercises 37 while also being used by the US Centers for Disease Control and Prevention to evaluate event-based surveillance tools. 38 Furthermore, WHO has made timeliness of detection, response, and notification to WHO a key component of their methods for measuring progress toward the Thirteenth General Programme of Work goal of “1 billion people better protected from health emergencies.” 39 Most recently the WHO Regional Office for Africa published a study examining the dates of onset, detection, notification, and outbreak end for 296 events between 2017 and 2019 using similar methods to those shared here. 40

Evaluation frameworks that account for the drivers of timeliness trends can help assess the impact of investments in global health security for improving disease surveillance. Outbreak milestones captured by existing disease surveillance systems can be leveraged to identify gaps and highlight areas for improvement. The timeliness metrics can also enable the identification of high-performing programs and spur further exploration into the reasons for success.

Regional disease surveillance networks such as MBDS and SECID provide a platform to examine regional disease surveillance strengths and weaknesses. That said, caution should be exercised when comparing timeliness metrics between or among countries due to variable capabilities, burden of disease, and socioeconomic factors. The timeliness metrics presented in this article are intended to help individual countries assess their progress over time rather than function as comparative metrics across countries.

The outbreak milestones and associated timeliness metrics were developed with the recognition that not all countries or organizations have the capacity or intent to capture each of the outbreak milestones. The pilot studies previously described have demonstrated country-level variance for which outbreak milestones may be captured by existing routine surveillance systems. Countries may consider establishing a minimum subset of outbreak milestones that can be integrated into all routine surveillance systems. Adoption of the entire set of milestones may provide the impetus to revise surveillance systems, outbreak investigations forms, and related systems to capture all necessary data points. As previously noted, the timeliness metrics identified by WHO to measure progress toward the Thirteenth General Programme of Work goal of “1 billion people better protected from health emergencies” may provide a reasonable starting point for many countries.

Due consideration should also be given to the expanded use of timeliness metrics in the animal and environmental health sectors. Embracing a One Health approach within the timeliness metrics framework may further our understanding of how a multisectoral approach better prepares the world to react to emerging health threats. Ending Pandemics will continue to promote the use of timeliness metrics across the human, animal, and environmental health sectors, including efforts to work with partner countries to integrate outbreak milestones into their event management systems, DHIS2 platforms, and other surveillance tools. These activities also include coordinating with WHO, the Food and Agriculture Organization of the United Nations, and the World Organisation for Animal Health to capture timeliness metrics in their systems, enabling the use of timeliness metrics through training workshops for FETPs, and linking with workforce development efforts to ensure the inclusion of content that highlights these methods. This work will further inform best practices for capturing these milestones and metrics, and shape future recommendations and guidance. The resulting timeliness data may not only help identify factors influencing surveillance performance, but also have the potential to inform economic and impact analyses that link detection and response times with outcomes.

The pilot studies cited focused on assessing feasibility of acquiring outbreak milestone data and tracking timeliness trends. This article is not intended to be an in-depth assessment of timeliness trends reported by each country; rather, it focuses on the potential utility of such an approach and provides suggestions for future use. Pilot study exclusion criteria varied by country, and, therefore, interpretation of timeliness trends should be done with particular consideration for what types of diseases may have been excluded from the study. Pilot studies report a wide range in the total number of outbreaks across the study periods. Hence, timeliness trends from studies with a particularly low number of outbreaks should be interpreted with care. The outbreak milestone definitions are intentionally broad to ensure applicability across a wide range of health organizations; further specificity may be needed for individual organizations to operationalize the use of outbreak milestones.

Acknowledgments

The authors would like to acknowledge our many partners who led the pilot studies discussed here, including Aslam Pervaiz (Pakistan FETP), Augustina Silva (Paraguay FETP), Daniella Azor (Haiti FETP), Donewell Bangure (Zimbabwe FETP), David Rodriguez (Council of Ministers of Health of Central America and the Dominican Republic), Hao Yuan-Cheng (Taiwan FETP), Ibrahima Sonko (Senegal FETP), Joan Karanja (Kenya FETP), Nur Aini Kusmayanti (Indonesia FETP), Preeti Padda and Viduthalai Virumbi (India FETPs), Shaba Kilasi (Tanzania FETP), and our colleagues at Training Programs in Epidemiology and Public Health Interventions Network (TEPHINET). In addition, we would like to thank our partners in MBDS and SECID for their work in applying this framework in a collaborative, multicountry approach in Southeast Asia and Southeastern Europe, respectively. Finally, we would like to thank all of the participants from the “Finding Outbreaks Faster” convenings at the Salzburg Global Seminar in 2018 and 2019 whose contributions helped to refine and advance this framework.