Abstract

Meta-analyses have found that social cognition training (SCT) has large effects on the emotion recognition ability of people with a psychotic disorder. Virtual reality (VR) could be a promising tool for delivering SCT. Presently, it is unknown how improvements in emotion recognition develop during (VR-)SCT, which factors impact improvement, and how improvements in VR relate to improvement outside VR. Data were extracted from task logs from a pilot study and a randomized controlled trial on VR-SCT (n = 55). Using mixed-effects generalized linear models, we examined: (a) the effect of treatment session (1–5) on VR accuracy and VR response time for correct answers; (b) main effects and moderation of participant and treatment characteristics on VR accuracy; and (c) the association between baseline performance on the Ekman 60 Faces task and accuracy in VR, and the interaction of Ekman 60 Faces change scores (i.e., post-treatment − baseline) with treatment session. Accounting for the task difficulty level and the type of presented emotion, participants became more accurate at the VR task (b = 0.20, p < 0.001) and faster (b = −0.10, p < 0.001) at providing correct answers as treatment sessions progressed. Overall emotion recognition accuracy in VR decreased with age (b = −0.34, p = 0.009); however, no significant interactions between any of the moderator variables and treatment session were found. An association between baseline Ekman 60 Faces and VR accuracy was found (b = 0.04, p = 0.006), but there was no significant interaction between difference scores and treatment session. Emotion recognition accuracy improved during VR-SCT, but improvements in VR may not generalize to non-VR tasks and daily life.

Introduction

Deficits in social cognition, including problems in emotion perception, are common in people with a psychotic disorder 1 and predict problems in social functioning (e.g., Refs. 2,3). Social Cognition Training (SCT) combines compensatory strategy training with repeated practice with social stimuli. Meta-analyses4–7 have found large effects of SCT on emotion perception. In the past years, studies have explored using Virtual Reality (VR) to train social cognition.8–10

Compared with conventional SCT, VR-SCT has the potential added benefit of facilitating practice in an interactive, dynamic and realistic environment. Two8,10 preliminary studies observed moderate to large improvement in emotion perception. However, a randomized controlled trial (RCT; n = 83) by our research group, comparing a VR-SCT, “DiSCoVR” (Dynamic Interactive Social Cognition Training in Virtual Reality), with an active control condition (VR relaxation) showed no effects on emotion perception or Theory of Mind. 11

Given that this intervention was based on effective protocols and findings from previous meta-analyses of SCT, it was unclear why no effect was found; this could be due to ineffectiveness of the protocol in general, or due to a lack of generalization of VR improvements to non-VR measures.

In fact, little is known in general about how SCT works, and for whom; to our knowledge, only meta-analyses have investigated this issue, with inconsistent results.6,8,12 Moreover, SCT studies to date only report pre- and post-treatment emotion perception scores. These demonstrate improvements after SCT, but neither reveal which factors are relevant for improvement, nor what happens during SCT. Cella and Wykes 13 investigated treatment processes during Cognitive Remediation Training, a form of cognitive training similar to SCT, but aimed at neurocognition (e.g., memory).

The therapeutic alliance during training predicted improvements in functioning, whereas other variables (e.g., number of tasks completed) predicted gains in neurocognition. The only study to report data during SCT is a single case study of a person with traumatic brain injury, 14 showing session-by-session improvement of emotion perception accuracy, particularly for negative emotions. After starting treatment, an increase in response time occurred, trending downward as sessions progressed.

Thus, how improvements following SCT develop over time, and which factors are relevant for treatment success, is still largely unknown. Studying this process is particularly relevant for understanding why SCT might (or might not) be effective, because session-by-session measurements can demonstrate when and how improvements occur.

Computerized interventions could provide a unique opportunity to track this treatment process, as several parameters can be logged that might be more difficult to track in analog exercises (e.g., reaction time). VR also provides an immersive, interactive setting with control of its parameters (e.g., difficulty) and its content,15,16 in which behavior can be recorded unobtrusively. For example, Freeman et al. 17 used VR to treat persecutory delusions and tracked movement in VR.

Participants receiving cognitive behavioral therapy moved a greater distance (i.e., explored the environment more) than people receiving only exposure. However, tracking parameters to examine treatment processes during VR exercises has not been used for SCT. Moreover, the relationship between data recorded during VR treatment and improvement outside VR has, to our knowledge, not been investigated.

In this study, we investigate the treatment process and potentially relevant factors for treatment success. Given the lack of effects of DiSCoVR on social cognition measures, we investigated whether a treatment effect was present in VR, and whether this potential effect generalized. Given the dearth of knowledge on factors contributing to treatment efficacy, we opted to use an exploratory approach, selecting variables from our data that have previously been investigated as predictors of emotion recognition18–20 and moderators of SCT efficacy.6,8,12 We investigated the following research questions:

(1a) Does emotion perception accuracy during VR-SCT improve over time, while accounting for the difficulty level and the type of emotion shown?

(1b) Do participants become faster at correctly identifying emotions during VR-SCT, while accounting for the difficulty level and the type of emotion shown?

(2a) Do participant characteristics (i.e., age, gender, baseline neurocognition, education level, premorbid intelligence, number of hospitalizations, baseline emotion perception, baseline symptoms) predict changes in emotion perception accuracy over time during VR-SCT sessions?

(2b) Do treatment characteristics (i.e., strategy use, duration of VR practice, duration of sessions, time taken to complete treatment) predict changes in emotion perception accuracy over time during VR-SCT sessions?

(3) Is (improvement in) accuracy in the VR environment associated with accuracy on a conventional task of emotion perception?

Materials and Methods

Design

This study combines data from two studies: a single-group, uncontrolled pilot study and a single-blind RCT on DiSCoVR, a VR-SCT, as the emotion recognition module was nearly identical in both studies. For detailed information on these studies and DiSCoVR, see Refs. 8,11,21.

Participants

Inclusion criteria

- Age 18–65.

- Diagnosis of psychotic disorder, determined in the past 3 years with a structured diagnostic instrument or verified at baseline using the Mini-International Neuropsychiatric Interview. 22

- Social cognitive deficits as indicated by a referring clinician.

Exclusion criteria

- A relevant neurological disorder, such as dementia (pilot study only).

- Substance dependence (pilot study only).

- (Photosensitive) Epilepsy.

- Inadequate proficiency of Dutch language.

- A diagnosis of an intellectual disability and/or estimated IQ under 70.

Participants were recruited through clinical referral and self-enrollment from mental health institutions in the Netherlands: University Medical Center Groningen (both studies), GGZ Drenthe (both studies), GGZ Delfland (both studies), Zeeuwse Gronden (RCT), and GGZ Westelijk Noord-Brabant (RCT).

Intervention

DiSCoVR consisted of 16 individual, twice-weekly treatment sessions of 45–60 minutes each, guided by a trained therapist. The intervention was modeled after existing, effective SCT protocols (e.g., Roberts 23 and Westerhof-Evers et al. 24 ) and was designed to gradually increase the complexity of training content. Sessions consisted of face-to-face discussion (e.g., about goals and strategies) and practice with social stimuli in VR.

The intervention encompassed three modules, each targeting a different domain of social cognition (i.e., emotion recognition; theory of mind and social perception; and social interaction). The latter two modules are described only briefly, as only data from the first module were used. The VR environments were developed by CleVR BV.

Module 1 (sessions 1–5) targeted emotion recognition. Personal social goals (e.g., “Having a conversation with a stranger”) were established, and compensatory strategies for emotion recognition were introduced (Supplementary Appendix SA1). A strategy was selected before every VR practice session. Participants were encouraged to use these in daily life (Supplementary Appendix SA2). In VR, participants encountered stationary characters (“avatars”) in a shopping street (Fig. 1).

Figure 1. Screenshots of the VR environment (emotions and interface). VR, virtual reality.

On approach, avatars showed one of six basic emotions (happiness, sadness, fear, disgust, anger or surprise) or no emotion (neutral). Participants selected the emotion shown from a multiple-choice menu. For incorrect answers, the avatar expressed the emotion again more strongly and participants could try again. These emotions were based on the facial action coding units proposed by Ekman and Friesen; 25 a study by our research group showed comparable recognition performance between the VR emotions and conventional tasks of emotion recognition.

The difficulty of the exercises could be altered, for example, by making emotions more subtle, or by changing the amount of time allotted to answer. To make the software easier to use for therapists, we designed standard VR levels which gradually increased the difficulty of all parameters simultaneously (Table 1).

Table 1. Parameters of Virtual Reality Levels

Six emotion profiles were used: happiness, sadness, anxiety, anger, disgust, and surprise. Finally, an emotion profile with an emotion strength of 0 percent was used, representing a neutral face. Thus, in total, seven different emotion options existed.

Due to technical limitations, the maximum number of avatars (stationary+walking) in any level was 40.

The practice level was not used in the analyses, as participants were still getting used to the VR environment in this level, and no emotions were shown (only neutral faces).

VR, virtual reality.

In the second module (sessions 6–9), participants viewed social interactions between avatars, which contained questions about the mental state of the avatar. Outside of VR, participants learned a technique to understand social situations. In the third module (sessions 10–16), participants role-played personally relevant social situations with the therapist, through VR. Outside of VR, participants learned a social problem-solving technique.

Participants explored the VR environments and selected answers using a joystick controller (Microsoft Xbox One). We used a set-up (Fig. 2) consisting of two computers and two monitors: one computer ran the VR environment, its monitor showing the participant's point of view in VR. A second, connected computer displayed the VR user interface, which was used to set up and control the VR environment by the therapist. Generally, participants used the VR headset (Oculus Rift Consumer Version 1 head-mounted display) while standing. Participants could withdraw from the VR session at any time. We did not record participants' prior exposure to VR.

Figure 2. DiSCoVR hardware setup. DiSCoVR, Dynamic Interactive Social Cognition Training in Virtual Reality.

Measurements

Demographic, clinical, and diagnostic measures

Demographic and clinical variables were recorded in a baseline interview (see Procedure section). Premorbid IQ was estimated by administering the National Adult Reading Test 26 (Dutch Version 27 ). The number of correct pronunciations of 50 increasingly uncommon words was recorded (score range 0–100). The Mini-International Neuropsychiatric Interview Plus 22 was administered to verify diagnoses.

VR measurements

The following VR parameters were available:

- Emotion expressed by the avatar.

- Answer given by the participant (correct = 1; incorrect = 0).

- VR level (Table 1).

- Response time, in seconds.

- Total time spent in the virtual environment, in seconds.

The following parameters were extracted from self-report therapist session forms:

- Treatment session dates.

- Total duration of the treatment sessions, in minutes.

- Strategies used for practice (see Supplementary Appendix SA1 for a list of standard strategies).

Emotion perception

The Ekman 60 Faces Test 28 was used as a conventional emotion perception measure at baseline and post-treatment (see Procedure section). In a computerized test, participants select emotions portrayed by 60 photos (total score range 0–60).

Information processing

In the Trailmaking Test, 29 participants connect the circles with numbers (TMT-A) or numbers and letters (TMT-B) in the correct order. The task completion time (in seconds) is recorded. A TMT-B score corrected for TMT-A was calculated (TMT-B divided by TMT-A).

Positive and negative symptoms

The positive (7 items, score range 1–7) and negative (7 items, score range 1–7) subscales of the Positive and Negative Syndrome Scale (PANSS 30 ) semi-structured interview were used to assess psychotic and negative symptoms.

Procedure

Participants expressing interest in the study were contacted by the research team. After a week of consideration, written informed consent was signed, the baseline assessment (T0) meeting took place, and DiSCoVR started. Within 2 weeks after the last session, participants completed the post-treatment measurement (T1). Both studies were approved by the Medical Ethical Committee of the University Medical Center Groningen (pilot: ABR NL55477.042.16, METc 2016/050; RCT: ABR NL63206.042.17, METc 2017/573).

Analysis

We explored the data using descriptive statistics and visualization using the ggplot2 R package. 31 We used only outcome data from session 2 and level 1 onward for analysis.

Each research question was analyzed with a mixed effects generalized linear model (ME-GLM), with treatment session at level 1, and participant at level 2. All models included the predictors treatment session (with session 2 coded as 0, range 0–3) and VR level (with level 1 coded as 0, range 0–5, to account for the difficulty level of the stimuli).
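The predictor coding described above can be sketched as follows. This is a minimal illustration, not the authors' code (the analyses were run in R); the function name is hypothetical, and the assumption that the analysed data cover sessions 2–5 and levels 1–6 is inferred from the stated coded ranges (0–3 and 0–5).

```python
def code_predictors(session, level):
    """Shift raw session and VR level so the first analysed value is 0.

    Assumes analysed sessions 2-5 and levels 1-6 (inferred from the
    coded ranges 0-3 and 0-5 given in the text).
    """
    if session not in range(2, 6):
        raise ValueError("session outside the analysed range 2-5")
    if level not in range(1, 7):
        raise ValueError("level outside the analysed range 1-6")
    # Session 2 becomes 0 and level 1 becomes 0, so the model intercept
    # refers to the first analysed session at the easiest analysed level.
    return session - 2, level - 1


print(code_predictors(2, 1))  # first analysed session, easiest level
print(code_predictors(5, 6))  # last session, hardest level
```

With this coding, the intercept describes performance at session 2 and level 1 rather than at a session or level of zero that does not occur in the data.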

In the models for RQ1a and RQ1b, we also included the emotion shown (using “happy” as the reference category since it is generally recognized best 28 ). In the models for RQ2a-b and RQ3, we were interested in the general change over time and predictors thereof, rather than specific accuracy of the various emotions, and therefore refrained from including the emotions shown.

The candidate models were defined using the following attributes: linear or quadratic predictors (of treatment session and VR level) and random effects structures (i.e., random slopes and/or intercepts). To estimate the ME-GLM, we used the R packages lme4 32 (for regression model estimation) and lmerTest 33 (for p values). The significance level was set at α = 0.05.

For RQ1a, using a logistic ME-GLM, the odds of correctly identifying an emotion were predicted by the treatment session (linear effect), the VR level practiced (quadratic effect), and the emotion shown. For RQ1b, only correct trials were modelled. Using a ME-GLM with a Gamma distribution with a log link function, the response time (in seconds) was predicted by the treatment session (linear effect), the VR level (quadratic effect), and the emotion shown.
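The two RQ1 models can be written out as a sketch. Several elements here are assumptions made for illustration: the indices (observation i within participant j), the symbol names, the number K of emotion dummy variables, and in particular the random-intercept-only structure, since the text states only that random slopes and/or intercepts were considered as candidates.

```latex
% RQ1a: logistic ME-GLM for a correct answer y_{ij} in {0, 1}
\[
\operatorname{logit}\Pr(y_{ij} = 1)
  = \beta_0 + \beta_1\,\mathrm{session}_{ij}
  + \beta_2\,\mathrm{level}_{ij}^{2}
  + \sum_{k=1}^{K} \gamma_k\,\mathrm{emotion}_{k,ij}
  + u_{0j},
\qquad u_{0j} \sim \mathcal{N}(0, \sigma_u^{2})
\]

% RQ1b: Gamma ME-GLM with log link, fitted to correct trials only
\[
\log \mathbb{E}\!\left[\mathrm{RT}_{ij}\right]
  = \alpha_0 + \alpha_1\,\mathrm{session}_{ij}
  + \alpha_2\,\mathrm{level}_{ij}^{2}
  + \sum_{k=1}^{K} \delta_k\,\mathrm{emotion}_{k,ij}
  + v_{0j},
\qquad v_{0j} \sim \mathcal{N}(0, \sigma_v^{2})
\]
```

Here the emotion terms are dummy variables with "happy" as the reference category, so each emotion coefficient compares one emotion with happy faces at the same session and level.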

For RQ2a-b, we fitted a separate logistic ME-GLM for each of the participant characteristics (RQ2a) and treatment characteristics (RQ2b) of interest. We refrained from modelling these characteristics jointly in a single model because of multicollinearity. The odds of correctly identifying an emotion were predicted by the treatment session, the VR level practiced (quadratic), the participant or treatment characteristic (to estimate the main effect of the predictor), and the interaction between that characteristic and treatment session (to evaluate whether the characteristic moderated the treatment session effect). A Benjamini-Hochberg false discovery rate (FDR) correction was applied to the p values to correct for multiple tests.
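The multiple-testing correction named above can be sketched in a few lines. This is a generic implementation of the Benjamini-Hochberg step-up adjustment, not the authors' code; the function name and the example p values are hypothetical and are not taken from the study.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p values in the input order."""
    m = len(p_values)
    # Indices of the p values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p value down, scaling each by m / rank and
    # enforcing monotonicity with a running minimum.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical unadjusted p values from four separate covariate models.
raw = [0.01, 0.04, 0.03, 0.005]
for p, q in zip(raw, benjamini_hochberg(raw)):
    print(f"unadjusted p = {p:.3f}, FDR-adjusted p = {q:.3f}")
```

An effect is then called significant when its adjusted p value falls below α = 0.05, which controls the expected proportion of false discoveries across the family of covariate models.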

For RQ3, using a logistic ME-GLM, the odds of correctly identifying an emotion were predicted by the treatment session, the VR level practiced (quadratic effect), the baseline Ekman 60 Faces Test score, and an interaction between the difference score (T1–T0) on Ekman 60 Faces Test and treatment session. To investigate whether an improvement in the Ekman 60 Faces Test score was present, we conducted a paired t test.
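The difference score and paired t test used for RQ3 can be sketched as below. This is an illustration under stated assumptions: the function name and the scores are hypothetical (they are not study data), and only the test statistic and degrees of freedom are computed, not the p value.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(baseline, post):
    """Paired t statistic and degrees of freedom for post minus baseline."""
    if len(baseline) != len(post):
        raise ValueError("each participant needs both a T0 and a T1 score")
    # Difference score per participant: T1 minus T0.
    diffs = [t1 - t0 for t0, t1 in zip(baseline, post)]
    n = len(diffs)
    standard_error = stdev(diffs) / sqrt(n)
    return mean(diffs) / standard_error, n - 1


# Hypothetical Ekman 60 Faces totals (range 0-60) for five participants.
t0_scores = [40, 42, 38, 45, 41]
t1_scores = [43, 44, 39, 45, 45]
t_stat, df = paired_t(t0_scores, t1_scores)
print(f"t = {t_stat:.2f}, df = {df}")
```

Because the degrees of freedom equal the number of complete pairs minus one, a reported df of 43 corresponds to 44 participants with both a baseline and a post-treatment score.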

Results

Descriptives

Demographic and clinical characteristics, as well as descriptive statistics of the VR task and treatment sessions are shown in Table 2.

Table 2. Descriptive Statistics (Demographic, Clinical, Virtual Reality Task Data, Treatment Session Data)

In seconds.

NART, National Adult Reading Test; NLV, Nederlandse Leestest voor Volwassenen; PANSS-N, positive and negative syndrome scale, negative symptoms subscale; PANSS-P, positive and negative syndrome scale, positive symptoms subscale; SD, standard deviation; TMT, Trailmaking Test.

Recognition accuracy over time (RQ1a)

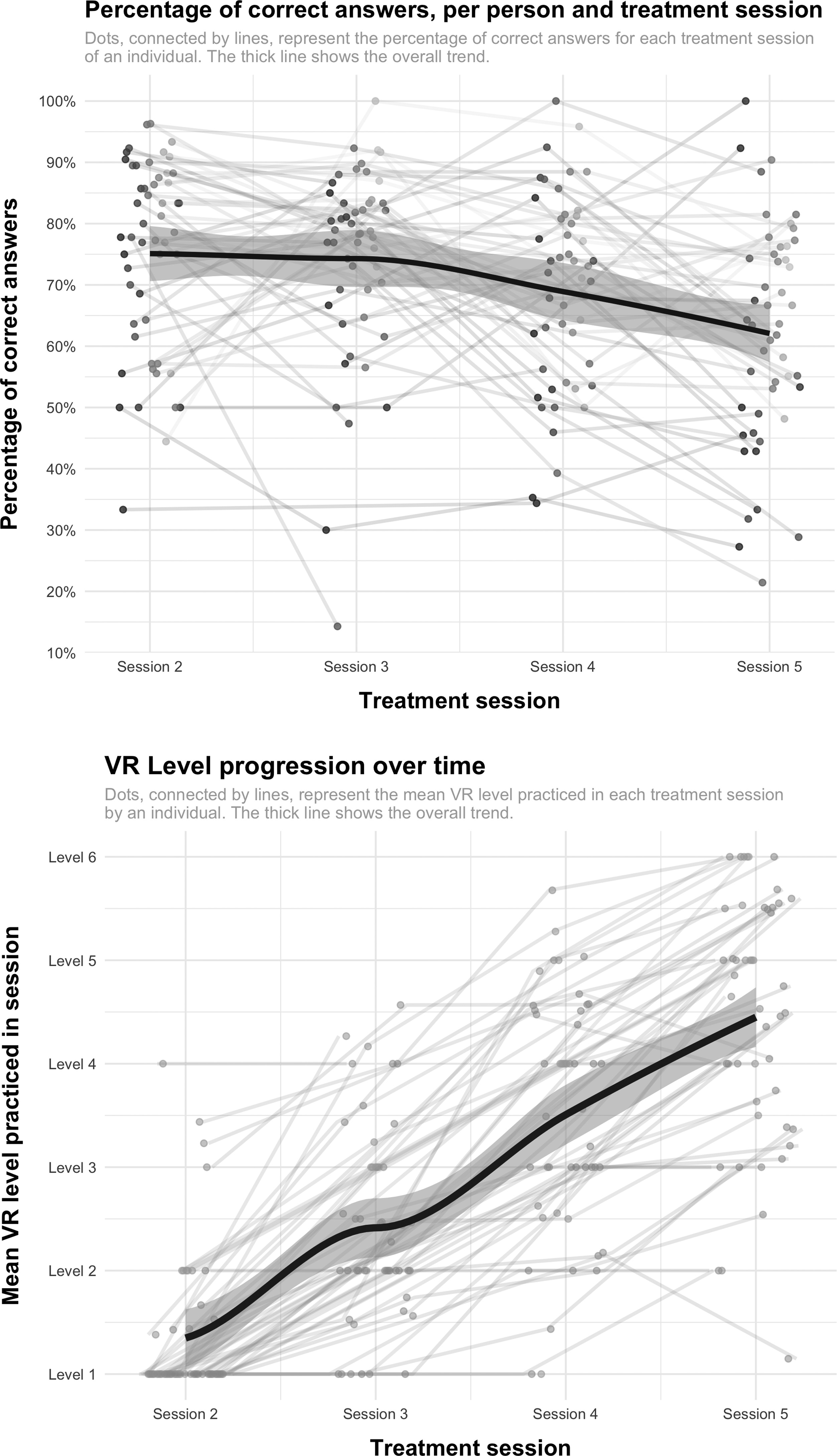

Plots of performance over time are shown in Figure 3. The first panel shows a moderate to high percentage of correct answers (>50 percent), with variation over individuals and over time. Overall accuracy decreased over time. The second panel shows (mean) VR level progression. The plot suggests an association between treatment session and VR level; the repeated measures correlation was 0.77 (p < 0.001). Together, the plots suggest that participants practiced more difficult levels as sessions progressed, at the cost of a slight decrease in accuracy.

Figure 3. VR parameters over time (percentage of correct answers and mean level practiced).

The logistic mixed-effects regression model (Table 3) showed a significant effect on response accuracy of treatment session, VR level (quadratic), and the emotions anxious, angry, sad, surprised, and disgusted. Thus, all other things being equal, the odds of correctly identifying a given emotion increased as the treatment sessions progressed and decreased as the level increased. Compared with happy faces, the odds of correctly identifying anxious, angry, sad, and disgusted faces were significantly lower, but significantly higher for surprised faces.

Table 3. Fixed Regression Coefficients and Test Parameters of the Mixed Effects Generalized Linear Models (for RQ1a, RQ1b, RQ2a-b, RQ3)

All covariates were scaled. If a squared covariate was used, it was squared first, then scaled, to facilitate model estimation.

Covariate models represent separate mixed-effects regression analyses; only main effects of covariates and interactions with treatment session (fixed effects) are shown in this table. The intercept and effects of treatment session (linear) and VR level (squared) were included in the models, but have been omitted from this table for the sake of parsimony.

Unadjusted p value of model parameter.

p Value of model parameter after applying an FDR correction.

Significant at α = 0.05.

FDR, false discovery rate; PANSS, Positive and Negative Syndrome Scale; SE, standard error.
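The Table 3 note that squared covariates were squared first and then scaled can be illustrated with a short sketch. It assumes that "scaled" means standardizing to mean 0 and sample standard deviation 1 (the default behavior of R's `scale()`); the function names are hypothetical.

```python
from statistics import mean, stdev


def scale(values):
    """Standardize to mean 0 and sample SD 1 (assumed meaning of 'scaled')."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


def squared_then_scaled(values):
    """Square the covariate first, then standardize the squared values."""
    return scale([v * v for v in values])


coded_levels = [0, 1, 2, 3, 4, 5]  # VR level with level 1 coded as 0
quadratic_term = squared_then_scaled(coded_levels)
print([round(z, 2) for z in quadratic_term])
```

The order matters: squaring the raw values and then standardizing keeps the quadratic term monotone in the level, whereas standardizing first and then squaring would give low and high levels the same value.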

Emotion recognition reaction speed over time (RQ1b)

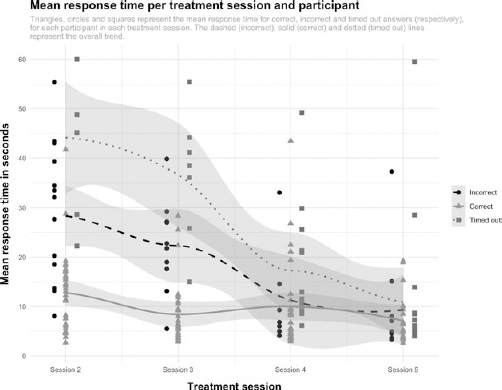

A plot of mean response time for correct, incorrect, and timed-out responses over time is shown in Figure 4. As shown in Table 2, response times decreased over time. The results of the mixed-effects generalized linear model using a Gamma distribution (Table 3) showed that, all other things being equal, response times for correct answers decreased as treatment sessions progressed and increased with level progression. Compared with happiness, response times were longer for all emotions except surprise.
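Because the accuracy model uses a logit link and the response time model a log link, exponentiating a coefficient gives an odds ratio or a multiplicative change in expected response time, respectively. The sketch below applies this to the treatment session coefficients reported in the Abstract (b = 0.20 and b = −0.10). The function names are hypothetical, and because the covariates were scaled, "one unit" is assumed to mean one unit of the scaled treatment session predictor rather than one session.

```python
import math


def odds_ratio(b_logit):
    """Odds ratio for a one-unit increase, given a logit-link coefficient."""
    return math.exp(b_logit)


def time_ratio(b_log):
    """Multiplicative change in expected response time (log-link coefficient)."""
    return math.exp(b_log)


accuracy_or = odds_ratio(0.20)   # treatment session effect on accuracy
rt_ratio = time_ratio(-0.10)     # treatment session effect on response time
print(f"odds ratio: {accuracy_or:.2f}")
print(f"response time ratio: {rt_ratio:.2f}")
```

Under these assumptions, the odds of a correct answer are multiplied by about 1.22 and the expected response time for correct answers by about 0.90 per unit increase in the scaled session predictor, holding level and emotion constant.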

Figure 4. Response time across treatment sessions (for correct, incorrect, and timed-out answers).

Participant and treatment predictors of recognition accuracy (RQ2a-b)

The results of the regression analyses investigating moderators of treatment session effects are shown in Table 3. After correction, there was only a significant main effect of age (quadratic and scaled): as age increased, the overall odds of correct identification decreased (cf. Supplementary Fig. S1 in Supplementary Appendix SA3). No interactions between any of the investigated variables and treatment session were found.

Performance on VR task versus conventional task (Ekman 60 Faces) (RQ3)

A paired t test showed a significant improvement in the total Ekman 60 Faces Test score (t = 2.72, df = 43, p = 0.009) from baseline to post-treatment. The model for RQ3 in Table 3 shows that the T0 Ekman 60 Faces Test score was a significant predictor of correct emotion identification. However, there was no significant interaction between treatment session and Ekman 60 Faces Test difference score, suggesting that improvement across treatment sessions was not different for people with higher or lower Ekman 60 Faces Test difference scores.

Discussion

Main findings

We found that, while accounting for the difficulty level of the exercises and the specific emotion to be recognized, emotion perception performance on a VR task improved as treatment progressed. Participants also provided faster correct responses over time. We found no evidence that treatment or participant characteristics moderated treatment effects.

Finally, baseline emotion recognition performance on a photo task (Ekman 60 Faces) predicted correct emotion identification in VR, but there was no evidence that people who benefited more from VR training also showed larger improvement on the Ekman 60 Faces task.

Emotion recognition in VR

We did not find that improvement in VR was associated with improvement outside VR, suggesting a discrepancy between the VR emotion recognition task and non-VR tasks of social cognition. The question, therefore, remains to what degree improvements in VR generalize to performance on other social cognitive tasks and daily life. As noted in the Methods, a study 18 using the same VR environment found that performance and confusion patterns across the VR task, a photo task (Ekman 60 Faces Test), and a video task 34 were comparable in healthy individuals.

This is in line with our finding that baseline Ekman 60 Faces scores were predictive of VR emotion recognition accuracy. While this suggests that the VR task tapped into similar skills as conventional social cognition tasks, relationships between performance on the different tasks were not directly investigated in the aforementioned study.

The lack of an interaction between improvement in VR and change in emotion perception outside VR suggests that DiSCoVR may have had limited generalizability, a possible reason for the lack of effects of DiSCoVR in our RCT. 11 It is possible that, due to graphical limitations, the way emotions are currently rendered in VR is too simplistic, and therefore not applicable to real-life emotions. For example, it has repeatedly been found that the emotion disgust is recognized better on photographs of faces than in VR.19,35

Ultimately, VR emotions are a simplification, and may not (yet) adequately represent the complexity of real emotions.19,36 Graphical limitations aside, it is also possible that the VR exercises did not adequately simulate real-life social interactions, in which people are a participant rather than an observer and a personally relevant social context is present. These components of social interactions have been proposed as essential qualities of ecologically valid social cognition tasks; 37 without them, the added value of using VR to practice may be limited.

Given that the widespread adoption of VR as a therapeutic tool is relatively recent, only a few studies on social interaction difficulties in psychotic disorders have investigated the added value of VR by comparing VR treatment with non-VR treatment. Tsang and Man 38 (n = 95) compared VR vocational rehabilitation training with a non-VR, therapist-led equivalent and with conventional vocational training, and found that VR led to greater improvement in cognition, whereas the analog format led to greater improvement in a face-to-face work performance test.

Park et al. 39 compared VR social skills training to conventional social skills training, and found that VR training led to greater motivation, improvements in conversational skills and assertiveness, but conventional training had a greater effect on expressive nonverbal skills.

No clear patterns have, therefore, emerged regarding target processes that are more effectively improved by interventions using VR than by a non-VR equivalent. A blended approach is possibly necessary, where emotion recognition practice occurs both in VR and in real-life social situations.

Moderators of improvement in VR

We found a main effect of age on emotion recognition accuracy, replicating other studies.18,19 Contrary to previous meta-analyses on (non-VR) SCT finding associations between emotion perception and gender, hospitalization status, clinical symptoms and antipsychotic treatment, 20 we found no other predictors or moderators of accuracy. This could be due to the absence of an effect, interference due to the interrelatedness of time and task difficulty, and/or insufficient power to detect moderation effects.

On the other hand, our results regarding moderators of treatment effects are consistent with previous meta-analyses.6,7,40 Thus, while more research is needed regarding the optimal approach to (VR) emotion recognition training, the present evidence suggests it can be beneficial regardless of the exact training parameters (e.g., session duration) and participants' demographic and clinical characteristics.

Strengths and limitations

This study is the first to investigate the learning process of participants engaging in emotion recognition training, and SCT in general. It is also the first to use VR for this purpose. Given that the primary purpose of the sessions investigated here was therapeutic, these data provide a relatively accurate picture of how SCT might work in a clinical setting.

However, the process of data collection was not optimal for research, as data collection was not the main goal of the treatment sessions. Particularly, the interrelatedness of treatment session and VR level makes it difficult to disentangle the effects of difficulty versus time. Further, data from two separate studies were combined. We cannot exclude the possibility that impactful differences existed between the studies.

Implications and suggestions for future research

Our results suggest that external validity could be an issue for DiSCoVR, and possibly VR emotion recognition training in general. Given that ecological validity is regarded as one of the main benefits of VR, it is vital to investigate further how improvements in performance in VR relate to performance on other social cognitive tasks and real-world social functioning.

Our results may imply that VR emotions do not sufficiently resemble real facial emotions. Technological advancements in facial rendering and VR resolution may partially address this issue. Future research could study whether VR facial expressions can be improved by adding micro-expressions, mixed emotions, and individual variability in emotional expression.

In addition, complementing VR-SCT with additional types of practice material (e.g., photos, videos) could help participants develop a broader range of recognition skills. Moreover, studies should investigate the strengths and weaknesses of VR-SCT compared with conventional training, to identify for which target processes VR can be used successfully.

It is possible that VR is not (yet) appropriate for targeting complex perceptual processes requiring highly realistic VR stimuli. For other treatment targets, however, VR interventions have repeatedly been shown to be effective and generalizable. For example, RCTs on VR cognitive behavioral therapy and exposure have found improvement in social participation, persecutory delusions, and hallucinations.17,41–43 Thus, targeting emotional or cognitive experiences with VR could (currently) lead to greater generalization.

Finally, this study demonstrated the value of investigating treatment processes during treatment. Future studies could investigate additional parameters during interventions, such as gaze and approach versus avoidance behavior. This could be supplemented with experience sampling methods to investigate relevant processes in daily life. This way, procedural changes during treatment can be investigated, and VR tasks and treatments potentially further improved.

Footnotes

Authors' Contributions

S.A.N., W.V., and G.H.M.P. designed and carried out the DiSCoVR studies. M.E.T. designed the statistical analyses, which were conducted by S.A.N. S.A.N. wrote the manuscript, which was edited and approved by W.V., G.H.M.P. and M.E.T.

Author Disclosure Statement

The authors have no competing interests to declare.

Funding Information

Development of DiSCoVR was funded by the Dutch Research Council (Dutch: Nederlandse Organisatie voor Wetenschappelijk Onderzoek, NWO) (grant 628.005.007). GGZ Drenthe funded the position of author S.A.N. The University of Groningen funded participant compensation (staff grants for G.H.M.P. and S.A.N.). These organizations did not play any further role in study design, interpretation, or otherwise.

