Abstract

The uncanny valley (UCV) model is an influential human–robot interaction theory that explains the relationship between the resemblance that robots have to humans and attitudes toward robots. Despite its extraordinary worth, this model remains untested in certain respects. One current limitation is that the model has only been examined in general or context-free situations. Given that humanoids function in the world beyond laboratories, investigating the UCV in specific and actual situations is critical. Additionally, few studies have examined the impact of the affective responses presented in the UCV on other appraisals of humanoids. To address these issues, this study explored affective and cognitive responses to humanoids in specific service situations. In particular, we examined the effect of affective responses on trust, which is regarded as a critical cognitive factor influencing technology adoption, in two service contexts: hotel reception (low expertise) and tutoring (high expertise). By providing a richer understanding of both affective and cognitive human reactions to humanoids, our findings expand the UCV theory and ultimately contribute to research regarding user adoption of service robots.

Introduction

Despite the diffusion of service robots, some practical warning signs have emerged. Many stores and companies that used SoftBank's Pepper robots to interact with consumers have decided to discontinue their use because of customer resistance. 1 While robots' data management and multilingual capabilities are attractive to consumers, their impersonality and inability to understand informal language (e.g., slang) are reportedly regarded as significant concerns. 2 Accordingly, the diffusion of robots in the social realm increases individuals' interactions with robots and requires a profound understanding of human–robot interactions (HRIs).

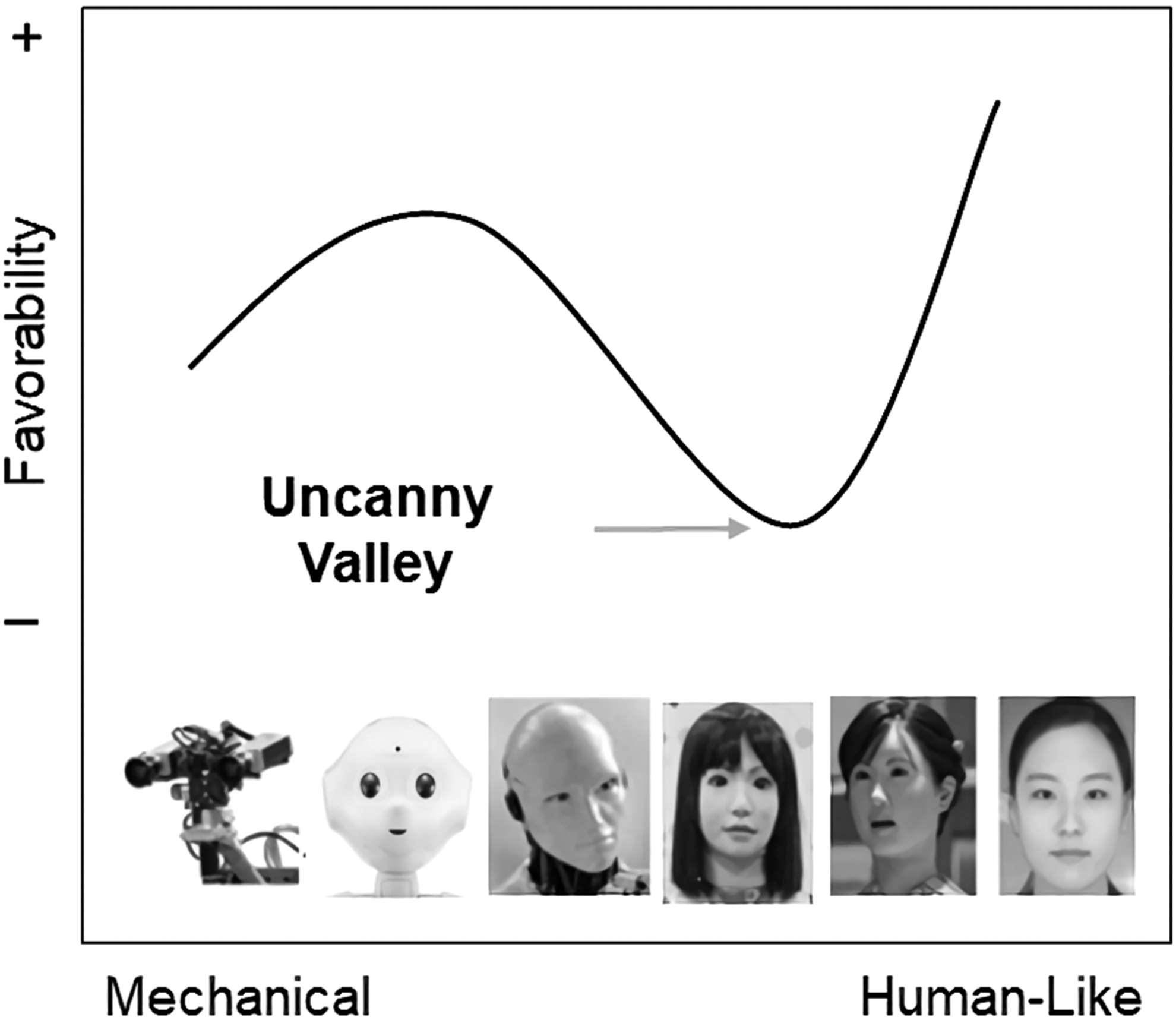

The uncanny valley (UCV), which refers to the negative feeling humans derive from interactions with even highly realistic human-like robots, 3 could be another significant cause of individuals' unfavorable feelings toward robots. Although some researchers have argued that anthropomorphizing robots has a positive effect on individuals' attitudes, 4 many studies have produced inconsistent or even contrary results 5 in this regard. The UCV theory can explain such contingent findings. The theory posits the existence of a nonlinear relationship between anthropomorphism and favorability; while anthropomorphized robots generally increase favorability, their imperfect resemblance to humans can cause extremely negative feelings (Fig. 1).

The uncanny valley.

The use of robots for individual users or customers is increasingly widespread in diverse social and business contexts. In particular, robots empowered by artificial intelligence (AI) can operate in ever more diverse service contexts. 6 This trend highlights the importance of examining the UCV in specific use contexts. Users' responses to robots have reportedly been significantly influenced by the types of interactions they engage in with robots. 7,8 Although the effects of human likeness or anthropomorphism can be contingent on situational factors, 9 our knowledge of how the UCV works in different application contexts remains limited. Thus, our first research question was as follows: Does the context of human interactions with humanoid robots affect the uncanny valley? More specifically, this study investigated the UCV in different expertise contexts. Advances in AI technologies have resulted in the creation of humanoid robots with diverse levels of expertise and led to their deployment in diverse contexts. 10 As users interact more with robots of differing expertise, the expertise context becomes more significant in understanding users' responses to robots. Another reason for choosing the expertise context is that robots' expertise can affect users' attitudes toward robots. The expertise of service providers is regarded as a significant factor influencing consumers' evaluation of service quality, 11 and this effect has been demonstrated in diverse contexts of user adoption of technology, including chatbots. 12 We therefore think that the UCV (i.e., attitude toward robots) can be affected by their expertise. Additionally, expertise is a dimension of trust, 13 and thus, we expect that robots' expertise is a significant factor influencing users' overall trust in robots, which is examined as users' cognitive response in this study. Prior research also posits that the expertise of chatbots affects consumers' trust in services. 14

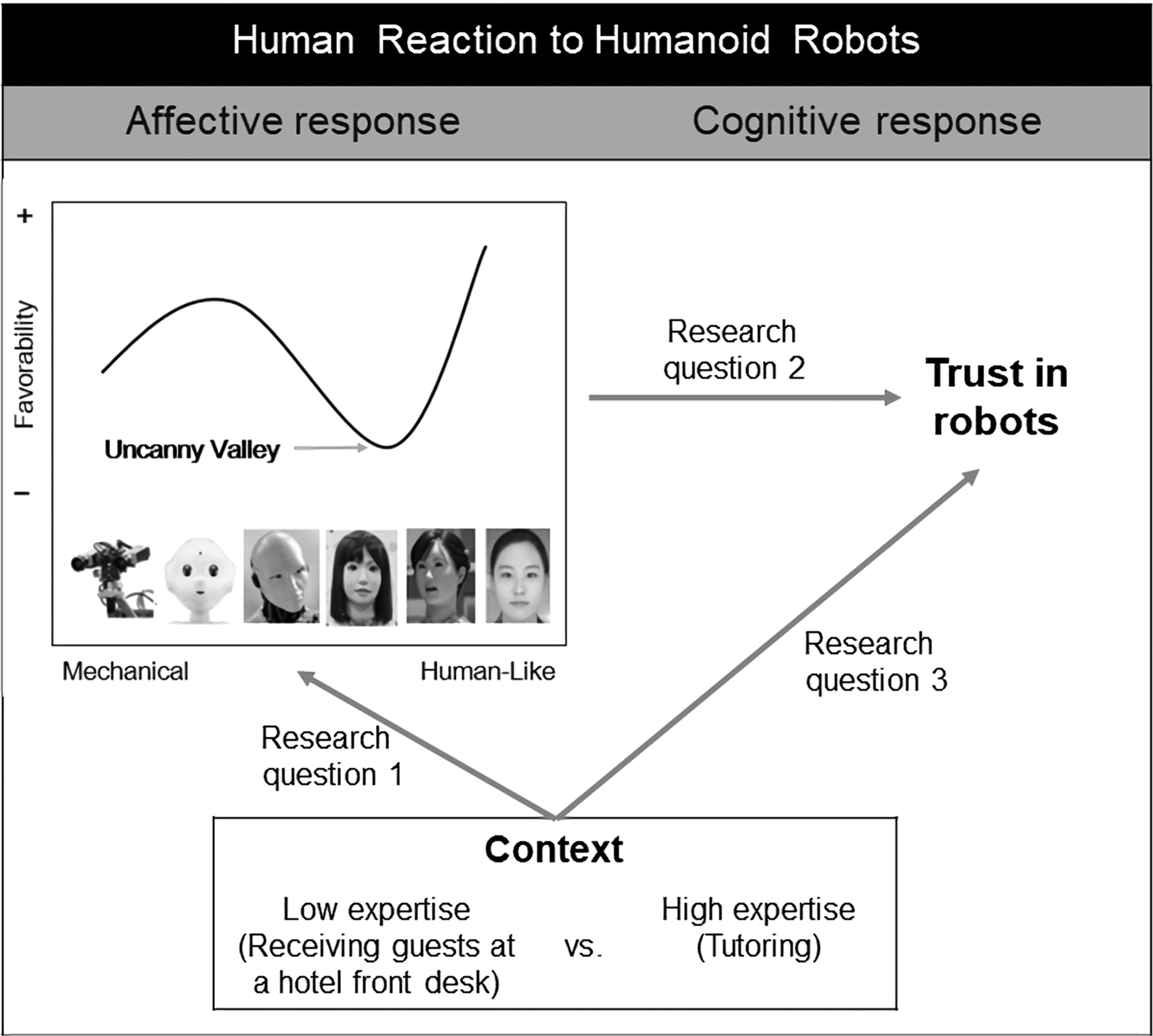

Another limitation of the UCV hypothesis is that it only examines emotional responses to humanoid robots without considering cognitive responses. Since users' interactions with humanoid robots are growing in volume and sophistication, a more comprehensive understanding of their reactions is required. Both affective and cognitive processes contribute to human decision-making and behavior. 15 Prior research has shown that individuals' cognitive assessments of humanoid robots are significantly related to the affective responses presented in the UCV. 16 In particular, trust in robots can be an important cognitive factor in humans' interaction with robots. 8 Humanoid robots imitate human characteristics and behave in human-like ways. People may assume that their interactions with humanoid robots are comparable to interactions with humans; in other words, the individual (trustor) expects the robot (trustee) to perform a particular action in his or her own interest. The unfamiliarity of interactions with humanoids, however, can provoke suspicion even regarding satisfactory interactions. Trust in technologies has typically been regarded as a central factor in their adoption. 17 Similarly, trust in humanoid robots plays a critical role in HRIs and may be essential in the current preliminary stage of service robot development. Recognizing that affective responses influence the formation of trust in other parties during interactions, 18 our second and third research questions were as follows: How is trust in humanoid robots reflected in the affective responses presented in the uncanny valley? Do the contexts of interactions with humanoid robots influence humans' trust in robots? Given that trust could reflect context-dependent affective responses, we assumed that trust could be influenced by the context. Empirical research has also shown that environmental factors significantly impact trust in robots. 19 Figure 2 shows our research model.

Research model.

Trust in Humanoid Robots

Although the UCV has been examined extensively, previous UCV studies have not considered individuals' cognitive responses to various anthropomorphized robots. In this study, we examined the relationship between the UCV (emotional reaction) and trust (cognitive reaction) in robots. Trust reflects an individual's willingness to make themselves vulnerable to another party's behavior. 20 Trust facilitates interactions among individuals and has been widely used to explain individuals' behavior in numerous computer-mediated environments. 21

While many studies have employed trust-in-people to explain individuals' Internet-mediated interaction practices, some studies have assumed that information technology (IT) itself can be a trustee and examined trust-in-technology. Given that trust formation depends on the characteristics of counterparties, 20 individuals may evaluate the trustworthiness of a given IT based on the attributes it manifests in an interactive context. For example, Wang and Benbasat 22 revealed that trust is a critical factor in users' interactions with online recommendation agents. In their study, they regarded the recommendation agent software as a trustee. Similarly, people may assess humanoid robots' trustworthiness when interacting with them. People may even be more inclined to treat humanoid robots like humans than they are other types of IT, since humanoid robots have the characteristics of humans in terms of appearance and engage in social interactions with humans. 23 In fact, trust has been regarded as a critical indicator in assessments of the quality of human–computer interactions. 24 Its persuasive function in social interactions could affect individuals' acceptance of robots. 25

Hancock et al. 19 conducted a meta-analysis to examine the factors that influence trust in robots by classifying them as user-related (e.g., prior experience), robot-related (e.g., robot attributes), and environmental factors (task type). They found that while robot-related and environmental factors significantly influenced trust in robots, user-related factors had little effect. Supposing that the UCV mirrors robots' traits, trust could be closely related to the UCV. Individuals' emotional states influence their decision-making. 26 When individuals make judgments, their feelings serve as critical reference points. 27 The relationship between affect and decision-making also applies in trust formation. Although rational models of trust posit that trust development depends on careful and deliberate processing, trust is significantly influenced by affect, which is derived from available cues. 28 It has been argued that individuals with positive emotions are more likely to trust other parties. 18 The significant impact of feeling on believing has been employed in IT contexts. Research has revealed that the affective responses presented in the UCV deeply influence individuals' assessment of humanoid robots' trustworthiness. 14

As Hancock et al. 19 found, environmental components also influence trust in robots. Some studies have examined the impact of context on trust in robots. Gaudiello et al. 8 found that users have more trust in robots in functional contexts than in social contexts. Salem et al. 25 posited that the revocability of the outcomes of tasks conducted by robots affects users' acceptance of robots' recommendations. The fact that the use of robots in various personal and social contexts has become increasingly common highlights the need for further investigation of robot trust in a wider range of contexts.

Methodology

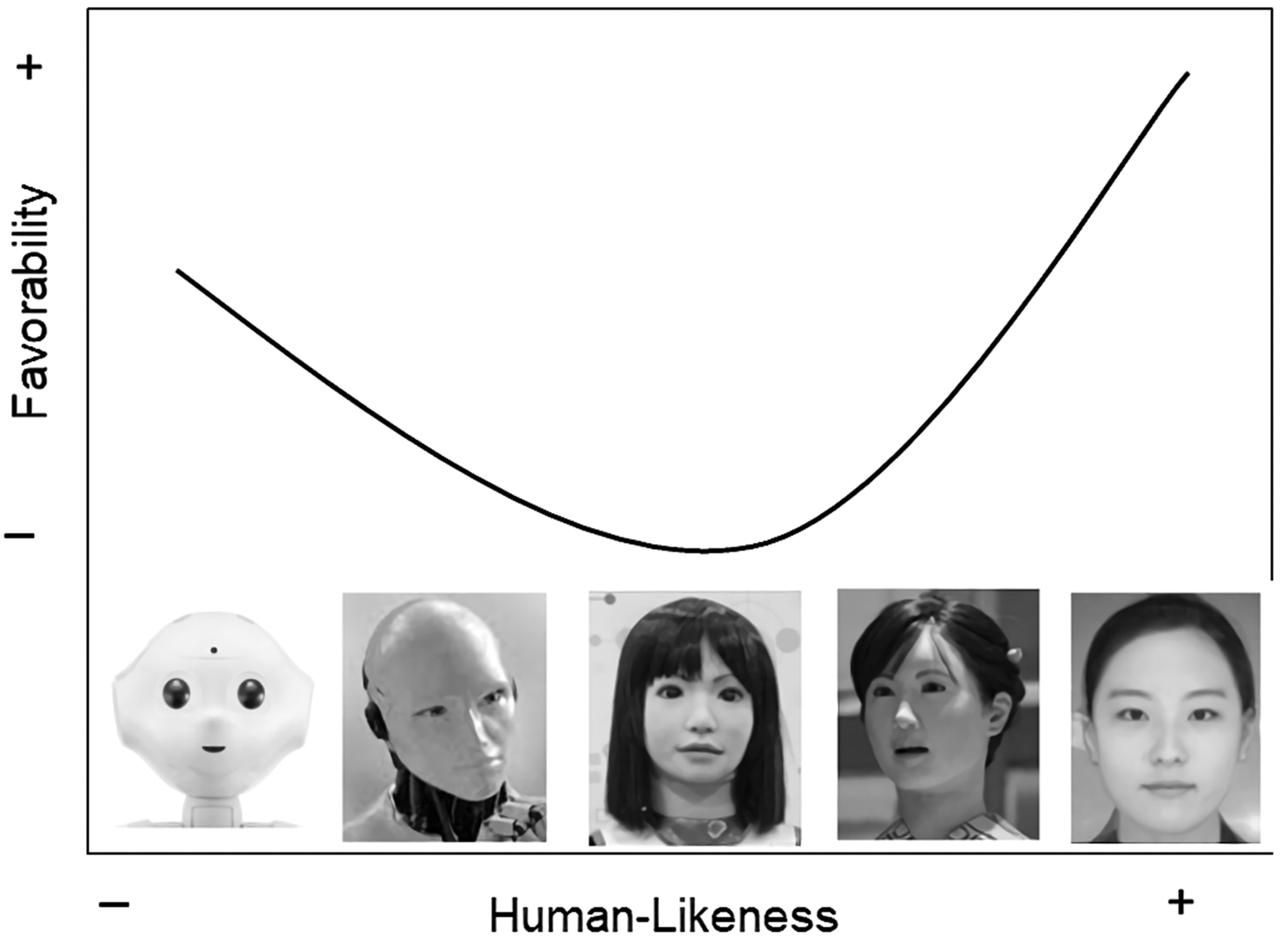

To select pictures of humanoids to present in an experimental questionnaire, we reviewed pictures of humanoids that were used in previous relevant studies as well as photos on the Internet that we found using the keyword “humanoid.” In the initial phase, we collected 10 humanoid pictures. Through a pilot test that asked about the human resemblance of each picture, we ultimately chose five pictures that presented a clear UCV gradation for parsimonious analyses (Fig. 3). In addition, in the pilot test, we checked the expertise levels required in two task contexts: receiving guests at a hotel front desk versus tutoring. We chose these two situations because they have been widely presented as possible or actual applications of intelligent robots. 5 Prior literature suggests that executing transactions with customers is regarded as a simple task for service robots, whereas educating customers is a professional job for robots. 29 The results of our pilot test confirmed that respondents perceived different expertise levels in the two contexts (t = 2.910, p < 0.01).
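The expertise check reported above rests on a t statistic. The text does not say whether the pilot comparison was paired or independent, so the following is only a minimal sketch that assumes two independent groups and uses Welch's formula. The function name `welch_t` and the ratings are made up for illustration and are not the study's data.

```python
import math
import statistics


def welch_t(sample_a, sample_b):
    """Return Welch's t statistic for two independent samples."""
    mean_a = statistics.mean(sample_a)
    mean_b = statistics.mean(sample_b)
    # Sample variances (n - 1 denominator) for each group.
    var_a = statistics.variance(sample_a)
    var_b = statistics.variance(sample_b)
    standard_error = math.sqrt(var_a / len(sample_a) + var_b / len(sample_b))
    return (mean_a - mean_b) / standard_error


# Hypothetical 7-point ratings of the expertise each task requires.
tutoring_ratings = [6, 5, 7, 6, 6]
reception_ratings = [4, 3, 5, 4, 4]

t_statistic = welch_t(tutoring_ratings, reception_ratings)
print(f"t = {t_statistic:.3f}")
```

A positive statistic here means the tutoring task was rated as requiring more expertise. Turning it into a p-value needs the t distribution, which a statistics package would normally supply.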

Humanoid pictures in an experimental questionnaire.

We adopted measurement questions that have been used in prior studies of the UCV 30 and added questions regarding the degree of human likeness of humanoids (“Do you agree that the robot in this picture looks like a human?”) and favorability (“Do you feel uneasy or unfriendly when looking at the robot in this picture?”). We also developed a question to measure overall trust in robots: “Do you believe that the robot in this picture would be trustworthy as a hotel reception staff (or tutor)?” All items were measured using 7-point Likert scales ranging from “strongly disagree” to “strongly agree.”

We conducted a between-subjects experimental survey in which participants answered questions about their affective responses (favorability) and cognitive responses (trust) to five different humanoid robot photos in one of two situations (receiving guests at a hotel front desk vs. tutoring). We collected data from the panel members of an online research firm in South Korea and administered web-based surveys over a 1-week period in March 2019. We gathered data separately for the two study contexts. After eliminating invalid responses, we included a final sample of 505 participants in the analysis (251 in the hotel reception context and 254 in the tutoring context). The mean age of the participants was 39.3 years. In the first stage of the survey, participants assessed the human likeness of the five humanoid pictures. Next, they read a short scenario describing the context and provided their responses regarding favorability and trust for each of the five humanoid pictures, which were presented in random order. Finally, participants answered demographic questions.

Results

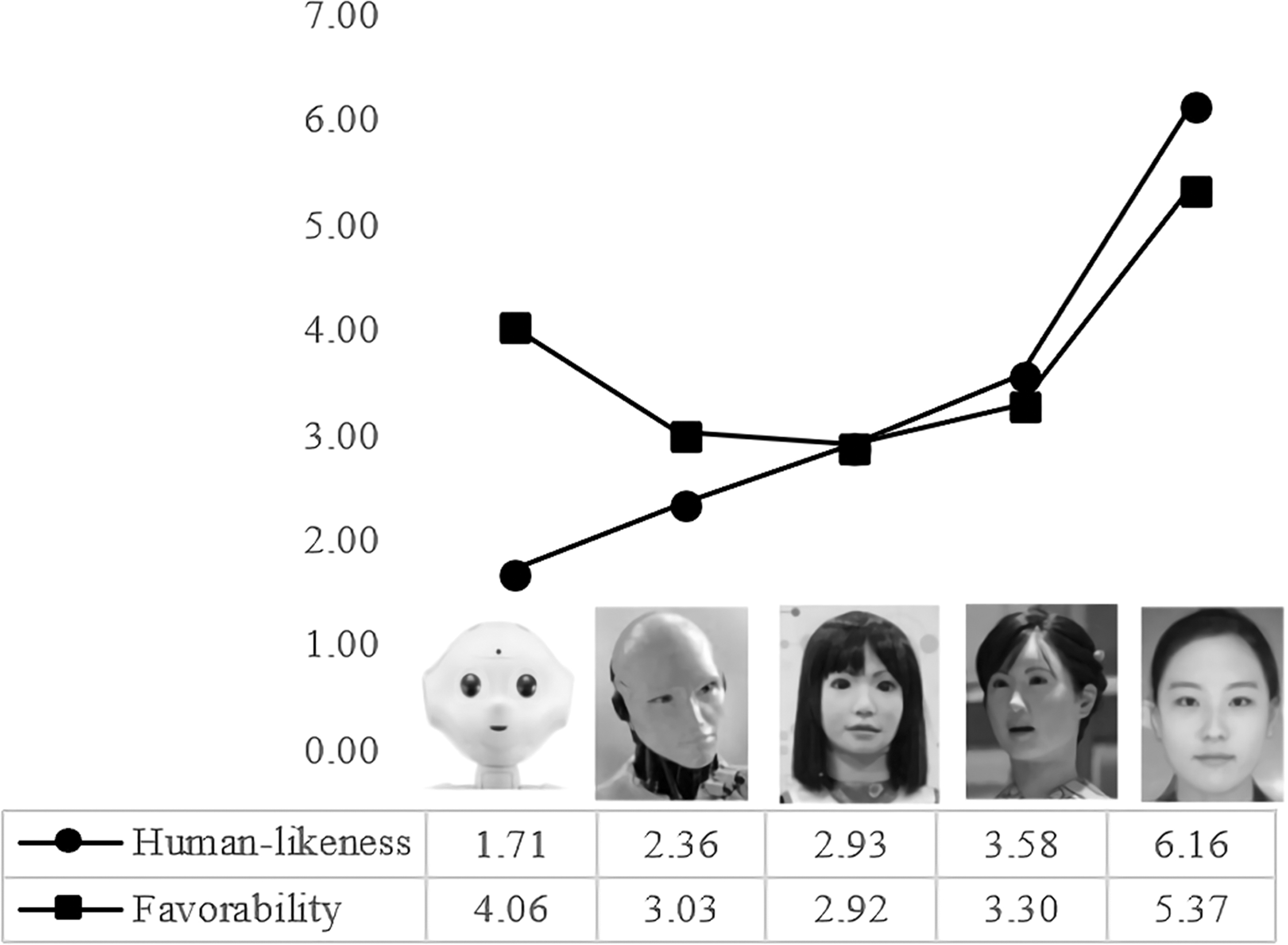

The results of a paired difference test confirmed a human-likeness hierarchy among the five humanoid pictures. Consistent with the results of the pilot test, favorability across the pictures followed a U-shaped curve, which is evidence of the UCV effect (Fig. 4).
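A paired difference test of this kind can be illustrated by computing a paired-samples t statistic for each pair of adjacent pictures. The sketch below assumes every respondent rated all five pictures, and that the hierarchy is checked picture by picture. The helper `paired_t` and the ratings are hypothetical, not the study's data.

```python
import math
import statistics


def paired_t(ratings_x, ratings_y):
    """Return the paired-samples t statistic for ratings_y minus ratings_x."""
    if len(ratings_x) != len(ratings_y):
        raise ValueError("paired samples must have equal length")
    differences = [y - x for x, y in zip(ratings_x, ratings_y)]
    mean_diff = statistics.mean(differences)
    # Standard deviation of the differences; must be non-zero.
    sd_diff = statistics.stdev(differences)
    return mean_diff / (sd_diff / math.sqrt(len(differences)))


# Hypothetical human-likeness ratings (7-point scale) from five respondents,
# one list per picture, ordered from least to most human-like.
ratings_by_picture = [
    [1, 2, 1, 2, 2],
    [3, 3, 3, 4, 3],
    [4, 5, 4, 5, 5],
    [5, 6, 6, 6, 6],
    [7, 7, 6, 7, 7],
]

# Compare each picture with the next one in the assumed ordering.
adjacent_t = [
    paired_t(lower, higher)
    for lower, higher in zip(ratings_by_picture, ratings_by_picture[1:])
]
for stage, t_value in enumerate(adjacent_t, start=1):
    print(f"picture {stage} vs {stage + 1}: t = {t_value:.3f}")
```

If every adjacent comparison yields a positive and significant statistic, the pictures form a strict human-likeness ordering. Significance would again require the t distribution with n - 1 degrees of freedom.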

Human likeness and favorability of five humanoid pictures.

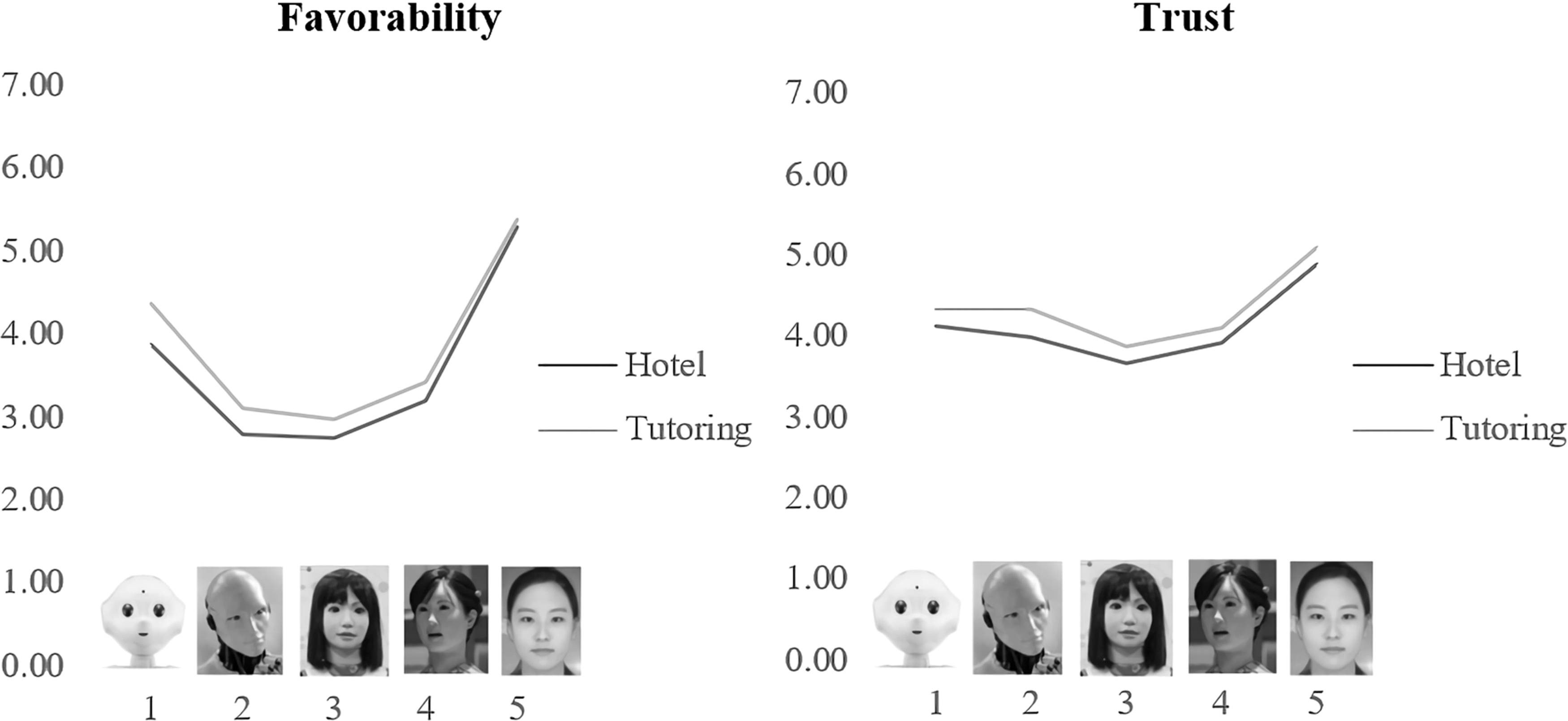

We conducted an analysis of variance (ANOVA) to examine differences by context. The data satisfied the ANOVA assumption of homogeneity of variance, and the results showed significant contextual differences in favorability and trust for all stages except for favorability in the last stage (Table 1 and Fig. 5). Participants evaluated both favorability and trust more positively in the tutoring context than in the hotel reception context, although the F-values (reflecting the mean differences) show that the size of this gap varied. The contextual effect on participants' appraisals was more powerful for favorability in stages I, II, and III. We also conducted a regression analysis to examine the influence of favorability on trust. In the hotel reception context, the adjusted R² was 0.308 and the coefficient of favorability was 0.557 (t = 10.595, p < 0.001); in the tutoring context, the adjusted R² was 0.310 and the coefficient of favorability was 0.559 (t = 10.708, p < 0.001). These results show that favorability had a significant impact on trust.
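The two analyses in this paragraph can be sketched with plain arithmetic. The example below computes a one-way ANOVA F statistic for two contexts, then a simple least-squares regression of trust on favorability with its adjusted R². All data are made up, and the function names are hypothetical. Whether the reported coefficients are standardized, and how the repeated ratings per participant were handled, is not stated in the text, so this is an assumption-laden illustration rather than the authors' procedure.

```python
import statistics


def one_way_anova_f(groups):
    """Return the one-way ANOVA F statistic for a list of groups."""
    all_values = [value for group in groups for value in group]
    grand_mean = statistics.mean(all_values)
    ss_between = sum(
        len(group) * (statistics.mean(group) - grand_mean) ** 2
        for group in groups
    )
    ss_within = sum(
        (value - statistics.mean(group)) ** 2
        for group in groups
        for value in group
    )
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)


def simple_regression(x, y):
    """Return (slope, intercept, adjusted R^2) for y regressed on x."""
    n = len(x)
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_total = sum((yi - mean_y) ** 2 for yi in y)
    ss_resid = sum(
        (yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y)
    )
    r_squared = 1 - ss_resid / ss_total
    # One predictor, so the residual degrees of freedom are n - 2.
    adj_r_squared = 1 - (1 - r_squared) * (n - 1) / (n - 2)
    return slope, intercept, adj_r_squared


# Hypothetical trust ratings for one picture in each context.
hotel_trust = [2, 3, 4]
tutoring_trust = [4, 5, 6]
f_statistic = one_way_anova_f([hotel_trust, tutoring_trust])

# Hypothetical favorability and trust ratings within one context.
favorability = [1, 2, 3, 4, 5]
trust = [2, 2, 4, 4, 5]
slope, intercept, adj_r2 = simple_regression(favorability, trust)

print(f"F = {f_statistic:.3f}")
print(f"slope = {slope:.3f}, adjusted R^2 = {adj_r2:.3f}")
```

With only two groups, the F statistic equals the square of the pooled-variance t statistic, which is why a two-context comparison can be reported either way.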

Comparison of favorability and trust by context.

Analysis of Variance Results for Favorability and Trust by Context

Discussion

This study contributes to service robot research by elucidating the effects of robots' anthropomorphism. Human-like robots or humanoids have been broadly utilized in service areas, and thus, anthropomorphism has been regarded as a significant factor that influences consumers' attitudes toward humanoid robots. Previous studies have produced inconsistent findings regarding the effects of anthropomorphized robots. Many studies have demonstrated that robot anthropomorphism generates warmth 4 or adoption intentions, 31 whereas others have revealed anthropomorphism's contingent 32 or negative 5 effects. This study explicates anthropomorphism's effects in service fields by employing the UCV theory.

Furthermore, this study provides a better account of these effects by showing perceptual differences regarding anthropomorphism in different service contexts. Applying the UCV perspective, this study examined how people's affective and cognitive responses to humanoid robots differed in two service contexts, hotel reception and tutoring. As far as the power of the UCV is concerned, our analyses revealed ambivalent results. On the one hand, we found that favorability had a significant effect on trust, implying that affective appraisals of humanoids play a role in initial impressions, which are the foundation for further cognitive evaluations. This finding, therefore, suggests that the UCV describes the principal reaction to humanoids, and its influence is not limited to affective responses but is applicable to cognitive responses to humanoids as well. On the other hand, the depth of the UCV (i.e., differences in favorability across the stages) decreased trust. This result implies that the impact of the UCV was attenuated by trust in robots. Because interactional evaluations (e.g., trust) can form primary attitudes toward robots in real-world settings, the impact of the UCV might be weakened in real contexts. In conclusion, although the UCV, which indicates an affective evaluation of robots, influences people's attitudes toward them, its impact might decline in actual contexts. Our findings suggest that researchers should examine individuals' affective and cognitive responses to robots in actual application environments while also scrutinizing how such responses lead to outcome behaviors, such as continuous use, service satisfaction, and compliance with robots. 33

Our analysis also revealed that affective and cognitive responses were more positive for the high-expertise humanoid (tutoring) than for the low-expertise humanoid (hotel reception) in all stages of the UCV except for the last stage, where the humanoid's face is the same as a human's face. This finding suggests that when people form impressions of humanoids conducting certain tasks for them, their assessments differ based on the task type. More specifically, people's attitudes are less influenced by humanoids' peripheral cues (e.g., appearances) in tasks requiring higher levels of expertise. This result can be explained by the elaboration likelihood theory, which proposes that humans process stimuli via two distinct routes (i.e., a central route and a peripheral route). 34 When elaborating on a message, the purposeful and conscious processing of an argument (stimulus) based on an individual's ability occurs via the central route, while heuristic processing based on general impressions occurs via the peripheral route. 34 In our context, when a humanoid's task is complicated or knowledge-intensive, people's dominant attention to its ability to successfully complete the task mitigates the influence of the humanoid's appearance on their reaction to it. This result implies that people may evaluate robots differently based on the robots' level of expertise. Accordingly, researchers need to examine the UCV phenomenon in diverse real-world contexts (e.g., static robots vs. movable robots, intraorganizational contexts vs. consumer service contexts). Managers should also take service type into consideration when developing service robots; in particular, managers trying to use simple service robots must be careful about the UCV. Managers might be better off using modestly human-like robots (the first-stage example in our experiment) to offer simple services to customers rather than incompletely human-like ones (the second-, third-, and fourth-stage examples in our experiment).

One limitation of this study is the use of a single item to measure each factor. We adopted this approach to mitigate respondents' fatigue from a long survey process. To reduce the weakness of a single-question scale, we asked fundamental and straightforward questions (e.g., “Do you agree that the robot in this picture looks like a human?” for measuring human likeness). However, there are well-developed measurement scales for anthropomorphism or human likeness, 35 trust, 36 and favorability. Accordingly, future research should employ multi-item measurement to improve rigor.

As robots are equipped with AI and HRI becomes prevalent in social and commercial life, the consequences of such interaction can become more complicated and unpredictable. By revealing the dynamics of humans' responses to robots, this study contributes to the understanding of future HRI. Researchers also envisage that advances in AI will make robots more intelligent and even give them a sense of self within the next few decades. 37 Such evolution of robots implies that they will be able to fully acquire knowledge and make decisions, and ultimately be indistinguishable from humans. 10 Accordingly, future research needs to examine the UCV in human interaction with more intelligent or emotional robots beyond the current focus on robots' tangible attributes such as appearance or voice. 38

Footnotes

Author Disclosure Statement

No competing financial interests exist.

Funding Information

This work was supported by the Ministry of Education of the Republic of Korea and the National Research Foundation of Korea (NRF-2019S1A3A2099973) and the MSIT (Ministry of Science and ICT), Korea, under the ITRC (Information Technology Research Center) support program (IITP-2020-0-01749) supervised by the IITP (Institute of Information & Communications Technology Planning & Evaluation).