Abstract

Robots are becoming an integral part of society, yet the extent to which we are prosocial toward these nonliving objects is unclear. While previous research shows that we tend to take care of robots in high-risk, high-consequence situations, this has not been investigated in more day-to-day, low-consequence situations. Thus, we used an experimental paradigm (the Social Mindfulness “SoMi” paradigm) that involved a trade-off between participants' own interests and their willingness to take their task partner's needs into account. In two experiments, we investigated whether participants would take the needs of a robotic task partner into account to the same extent as when the task partner was a human (Study I), and whether this was modulated by participants' anthropomorphic attributions to the robot (Study II). In Study I, participants were presented with a social decision-making task, which they performed once by themselves (solo context) and once with a task partner (either a human or a robot). In Study II, participants performed the same task, but this time with both a human and a robotic task partner. The task partners were introduced via neutral or anthropomorphic priming stories. Results indicate that humanizing a task partner increases our tendency to take their needs into account in a social decision-making task. However, this effect was found only for a human task partner, not for a robot. Thus, while anthropomorphizing a robot may lead us to save it when it is about to perish, it does not make us more socially considerate of it in day-to-day situations.

Introduction

A total of 41.8 million robots are projected to be part of households around the world by 2020 1 to perform tasks that humans find menial, such as domestic assistance. 2 While these technological developments can enrich people's lives, 3 they also pose challenges. For example, the appearance of robots is becoming increasingly human-like, causing us to view them as more than tools. 4 In situations where the life of a human-like robot is threatened, people are willing to sacrifice a group of anonymous humans to save that robot's “life” 5 or empathize with a robot when it is physically mistreated. 6

Yet, these kinds of scenarios do not reflect our day-to-day interactions with robotic devices. On a day-to-day basis, the majority of our prosocial behavior toward other humans consists of small acts of prosociality (e.g., giving someone directions or considering someone's perspective when making a decision). Clearly, not wanting someone or something to perish involves different affective motivations than giving directions. Indeed, prosocial behavior increases as a function of urgency 7 and potential harm. 8 Similarly, people empathize more with a robot that is being severely maltreated than with a robot that is being treated kindly. 9 Thus, while previous research shows that we can be moral or empathetic toward robots in urgent and high-consequence situations, we do not know whether day-to-day, low-consequence acts of prosocial behavior occur as well. Yet, since robotic devices are becoming increasingly common in the household, it is pertinent to understand the mechanisms that ground our common, day-to-day interactions with them. Are we prosocial toward robotic devices, and if so, which factors influence this?

Prosocial behavior consists of actions that benefit people other than oneself, such as helping, sharing, or comforting. 10 In human–human interaction, prosocial behavior allows us to form and maintain relationships with people. For us to behave in a way that benefits another individual, we need to be able to consider that individual's needs and desires. Thus, prosocial behavior involves the ability to take another person's perspective, 11 understand that their needs and desires may be different from our own, 12 and possibly experience empathic concern toward them.13,14

Prosocial behavior presupposes that the target of our prosocial action has mental states and affective experiences. Arguably, currently available robots do not have emotional experiences or needs similar to those of humans. 15 Yet, humans have a strong tendency to perceive nonhuman agents in human terms by attributing mental states, emotions, and intentions to them: a phenomenon called anthropomorphism.16,17 With respect to human–machine interaction specifically, the Media Equation theory 18 and the Computers As Social Actors (CASA) paradigm 19 claim that humans will respond to any type of object (such as a computer) as if it were human, provided enough social cues are present.

The link between prosociality and the attribution of mental states to nonhuman agents opens up the intriguing possibility that we act prosocially toward robots when we anthropomorphize them. Indeed, anthropomorphizing a nonhuman agent has been shown to lead to more interpersonal connectedness 20 and higher trust. 21 Specifically, anthropomorphizing a robot leads to processing its movement as human, 22 joint attention, 23 increased trust, 24 and moral care.5,6,9 However, previous research on prosocial behavior toward robots was conducted in urgent and high-consequence situations. Therefore, its results cannot simply be generalized to low-consequence situations.

An established paradigm for measuring everyday, low-consequence acts of prosociality is the Social Mindfulness (SoMi) paradigm. 25 The SoMi paradigm measures our willingness to consider another individual's needs before our own. In the SoMi task, participants repeatedly choose among three items of the same category (e.g., pens). Crucially, two items are identical, while one item differs in a certain aspect (e.g., two blue pens and one black pen). Participants are told that they have to choose an item, but that someone else will then pick from the remaining items. The measure is how often the participant picks one of the two identical items: this is the socially mindful choice, because it leaves the task partner a choice between two different items. The overall proportion of socially mindful versus nonsocially mindful choices thus indicates a participant's overall willingness to consider the task partner's needs. In human–human interaction, participants have been shown to be more socially mindful in their decision-making when another individual has to pick after them. 26 Furthermore, SoMi scores have been shown to correlate with measures such as general empathy. 25
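The scoring rule described above can be made concrete with a short sketch. It assumes each scored test trial is recorded as the list of offered items plus the item the participant took; the function names and item labels are hypothetical and not taken from the study's materials.

```python
from collections import Counter


def is_socially_mindful(options, choice):
    """Return True if `choice` is one of the duplicated items in `options`.

    Taking a duplicated item leaves the next chooser two *different* items;
    taking the unique item leaves them two identical ones (no real choice).
    """
    if choice not in options:
        raise ValueError(f"{choice!r} is not among the options {options!r}")
    return Counter(options)[choice] > 1


def somi_proportion(trials):
    """Proportion of socially mindful picks over (options, choice) test trials."""
    if not trials:
        raise ValueError("need at least one test trial")
    mindful = sum(is_socially_mindful(opts, pick) for opts, pick in trials)
    return mindful / len(trials)


# Hypothetical test trials: two identical items and one that differs.
trials = [
    (["blue pen", "blue pen", "black pen"], "blue pen"),          # mindful
    (["red mug", "red mug", "green mug"], "green mug"),           # not mindful
    (["apple", "apple", "pear"], "apple"),                        # mindful
    (["AA battery", "AA battery", "AAA battery"], "AA battery"),  # mindful
]
print(somi_proportion(trials))  # 0.75
```

Only test trials are scored in this sketch; a trial offering two items of each type has no unique item, so it has no socially mindful option to count.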

The current research investigated social mindfulness toward robots. Two separate studies were conducted utilizing the SoMi paradigm.a In Study I, participants were presented with two experimental blocks: in one, they performed the SoMi task alone, that is, they had to make choices between items without a partner. In the other block, they performed the classic SoMi task with a partner (a human or a robot). We hypothesized that (1a) more socially mindful choices would be made in the social condition than in the solo condition; and we further expected that (1b) participants would make more socially mindful decisions when the partner was another human compared with a robot.

In Study II, we investigated the effect of anthropomorphic attributions on the level of social mindfulness. Participants performed the SoMi task with another human and a robot, both of which were described either in human-like, anthropomorphic terms or in neutral, mechanical terms. We hypothesized that (2a) participants in the anthropomorphic condition would be more socially mindful than participants in the neutral condition, (2b) regardless of whether their partner was a human or a robot.

Study I

Methods

Participants

The minimum required sample was determined to be n = 134 (67 participants per condition), using G*Power (with α = 0.05, power 1 − β = 0.80, dz = 0.35). The effect size was based on an effect size from a previous study comparing responses to humans and robots, 5 since that study used a similar experimental design. This effect size was divided by two to obtain a conservative estimate.
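As a rough cross-check of the per-condition figure, the sketch below assumes a two-tailed paired (matched-pairs) t test, which is what a dz effect size implies; the exact G*Power test family is not stated in the text. It uses a common normal approximation with a small-sample correction rather than the exact noncentral t distribution that G*Power evaluates, so its answer (66) is expected to land a participant or so below the 67 reported above.

```python
import math
from statistics import NormalDist


def paired_t_sample_size(dz, alpha=0.05, power=0.80):
    """Approximate n for a two-tailed paired t test (normal approximation).

    n = ((z_{1-alpha/2} + z_{power}) / dz)^2 + z_{1-alpha/2}^2 / 2
    The second term is a small-sample correction; exact tools such as
    G*Power use the noncentral t distribution and can differ slightly.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # two-tailed critical value
    z_power = z.inv_cdf(power)          # power = 1 - beta
    n = ((z_alpha + z_power) / dz) ** 2 + z_alpha ** 2 / 2
    return math.ceil(n)


print(paired_t_sample_size(0.35))  # 66
```

Note that the 0.80 in the power analysis is the target power (1 − β), not β itself; β is the accepted Type II error rate of 0.20.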

A total of n = 136 undergraduate students were recruited to participate in this study in exchange for course credit. All provided informed consent before participation. Based on preregistered exclusion criteria, 14 participants were excluded from the analysis: 12 for completing the study in less than 3 minutes and 2 for taking more than 90 minutes. This resulted in a final sample of 122 students (mean age = 22.11 ± 4.98 years, 83 females).
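The duration-based exclusion rule can be expressed as a small filter. This is a sketch assuming completion times are available in minutes per participant; the participant IDs and durations below are invented toy values, not study data. Participants at exactly 3 or 90 minutes are kept, since the criteria exclude only times below 3 or above 90 minutes.

```python
def apply_duration_exclusions(durations, min_minutes=3.0, max_minutes=90.0):
    """Split participant IDs by completion time in minutes.

    Returns (kept, too_fast, too_slow); boundaries are inclusive for keeping.
    """
    kept, too_fast, too_slow = [], [], []
    for pid, minutes in durations.items():
        if minutes < min_minutes:
            too_fast.append(pid)
        elif minutes > max_minutes:
            too_slow.append(pid)
        else:
            kept.append(pid)
    return kept, too_fast, too_slow


# Hypothetical completion times (minutes), not the study's data.
durations = {"p01": 18.5, "p02": 2.1, "p03": 25.0, "p04": 131.0, "p05": 3.0}
kept, too_fast, too_slow = apply_duration_exclusions(durations)
print(kept, too_fast, too_slow)  # ['p01', 'p03', 'p05'] ['p02'] ['p04']
```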

Materials and procedure

Participants completed the experiment on their own computer in a quiet environment using Qualtrics. After signing up, participants received a link to the online experiment. Before the experiment started, participants were instructed to ensure they would not be interrupted for the duration of the experiment (approximately 20 minutes).

All participants completed the solo condition. Participants were randomly assigned to either the social-human or the social-robot condition. The presentation order of the solo and social blocks was counterbalanced. Each block consisted of 12 trials (6 test and 6 distractor trials). During test trials, participants were presented with three items from which they had to choose one. In all test trials, two objects were identical and one object was different. The distractor trials contained four similar items: two of each type. The presentation order of the test and distractor trials and the order of items were randomized.
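The block structure above can be sketched as follows. The study ran in Qualtrics, so this is only an illustration of the design, not the authors' implementation; the item labels, the `build_block` and `block_order` helpers, and the alternating counterbalancing scheme are all hypothetical.

```python
import random


def build_block(test_trials, distractor_trials, rng=random):
    """Return one randomized block of 12 trials as (kind, items) pairs.

    Test trials hold three items (two identical, one different); distractor
    trials hold four items (two of each type). Items are copied so that
    shuffling never mutates the caller's lists.
    """
    if len(test_trials) != 6 or len(distractor_trials) != 6:
        raise ValueError("expected 6 test and 6 distractor trials")
    block = [("test", list(items)) for items in test_trials]
    block += [("distractor", list(items)) for items in distractor_trials]
    rng.shuffle(block)      # randomize the order of test and distractor trials
    for _, items in block:
        rng.shuffle(items)  # randomize item positions within each trial
    return block


def block_order(participant_index):
    """One possible counterbalancing scheme: alternate which block comes first."""
    return ("solo", "social") if participant_index % 2 == 0 else ("social", "solo")


rng = random.Random(2024)  # seeded so a given ordering is reproducible
tests = [["blue pen", "blue pen", "black pen"]] * 6
distractors = [["red mug", "red mug", "green mug", "green mug"]] * 6
for kind, items in build_block(tests, distractors, rng):
    print(kind, items)
```

Copying each item list before shuffling matters here because `[...] * 6` repeats references to the same list object.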

In the solo condition, participants were instructed to imagine that they could take the object they chose home with them. In the social condition, participants were informed that someone else would choose from the remaining items. In both social conditions (human and robot), a picture of the task partner was displayed.5,27 Subsequently, participants provided their age and gender, were thanked, debriefed, and awarded course credit. The experimental procedure was approved by the institutional review board of the affiliated university. Specific examples of our materials as well as details of the data analysis procedure can be found in the Supplementary Material.

Results

Participants' choices in the social mindfulness trials were analyzed using a binomial mixed-effects logit model 28 in R. No significant effect of task partner was found, p = 0.697. The proportion of socially mindful choices did not differ between the solo (M = 0.53 ± 0.20) and the social-human (M = 0.55 ± 0.23) or social-robot (M = 0.56 ± 0.25) conditions.
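The descriptive values above (M ± value per condition) summarize proportions of socially mindful choices. The sketch below assumes that ± denotes the standard deviation of per-participant proportions, which the text does not state explicitly. It covers only the descriptives: the inferential test is a binomial mixed-effects logit model fitted in R (commonly done with lme4's `glmer` and a binomial family), which this sketch does not reproduce. The toy data are invented.

```python
from collections import defaultdict
from statistics import mean, stdev


def condition_summary(choices):
    """Summarize socially mindful choices per condition.

    choices: iterable of (participant_id, condition, mindful) tuples, one per
    scored test trial, where mindful is True for a socially mindful pick.
    Returns {condition: (mean, sd)} computed over per-participant proportions.
    """
    picks = defaultdict(list)
    for pid, condition, mindful in choices:
        picks[(pid, condition)].append(bool(mindful))

    proportions = defaultdict(list)
    for (_pid, condition), trial_picks in picks.items():
        proportions[condition].append(sum(trial_picks) / len(trial_picks))

    # statistics.stdev needs at least two participants per condition.
    return {c: (mean(p), stdev(p)) for c, p in proportions.items()}


# Toy data for two hypothetical participants (not the study's data).
toy = (
    [("p1", "solo", m) for m in (True, False, True, False)]
    + [("p2", "solo", m) for m in (True, True, True, False)]
)
m, sd = condition_summary(toy)["solo"]
print(f"M = {m:.3f} ± {sd:.3f}")
```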

Discussion

Contrary to hypotheses 1a and 1b, we could not detect any differences between the solo and social conditions, nor between the human and robot task partners in the social condition.

Looking at the proportions of socially mindful choices, our results align with other “neutral” conditions in the SoMi paradigm.25,26 Similar to our findings, van Doesum et al. 25 report social mindfulness proportions at chance level when participants are not given any explicit instructions; only after explicit instruction to take their partner's perspective do they find a significant increase in social mindfulness. In addition, in a real-life version of the task, van Doesum et al. 26 report a chance level of social mindfulness when no partner is present. However, when a partner is present, the rate of social mindfulness increases significantly.

Comparing this with our findings, we can draw two preliminary conclusions. First, the level of social mindfulness in our solo condition is on par with similar human–human baseline conditions in the literature. Second, our social condition did not sufficiently prompt participants to take the perspective of their partner, likely because we presented only a picture and provided no other information. Because previous research used explicit perspective-taking instructions, we designed a follow-up study in which vignettes were used to induce participants to take their partner's perspective. In this study, we assessed participants' social mindfulness toward a robotic and a human task partner, each introduced by a vignette intended to induce attributions of mental states.

Study II

Methods

Participants

The minimum required sample size was n = 128 (64 per group), using G*Power (α = 0.05, power 1 − β = 0.80, ηp² = 0.059). A total of 128 participants (mean age = 26.54 ± 11.10 years, 78 females) took part in the study in exchange for course credit. Participants were recruited both through the participant pool of the researchers' local university and through social media. All participants provided informed consent before their participation.
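G*Power takes Cohen's f as the effect-size input for F tests. Assuming the standard conversion from partial eta squared, the reported ηp² = 0.059 corresponds to f ≈ 0.25, conventionally labeled a medium effect:

```latex
f = \sqrt{\frac{\eta_p^2}{1 - \eta_p^2}}
  = \sqrt{\frac{0.059}{0.941}}
  \approx \sqrt{0.0627}
  \approx 0.25
```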

Materials and procedure

This experiment had a 2 (Partner: human vs. robot; within-subjects) by 2 (Condition: anthropomorphic vs. neutral; between-subjects) mixed design. Participants were randomly assigned to conditions. The materials and procedure were similar to Study I except that no solo condition was present. The presentation order of the human and robot block was counterbalanced.

Each block consisted of an introduction to the task partner followed by 12 SoMi trials. Participants were introduced to their task partner with a vignette. In the anthropomorphic condition, these vignettes described the task partner in a humanized manner, that is, by emphasizing mental states such as their emotions and intentions. In the neutral condition, vignettes described the task partner in a neutral manner, without referring to their mental states (for more details about the vignettes, see Majdandžić et al. 29; Nijssen et al. 5). A picture of the task partner was presented next to the vignettes. The combination of the vignettes and pictures was counterbalanced. The task partner was consistently referred to with a letter (e.g., “H”). Importantly, participants in the anthropomorphic condition read a humanized vignette for both the robotic partner and the human partner; likewise, participants in the neutral condition read a neutral vignette for both partners. The humanizing versus neutral effect of the vignettes was validated in previous work.5,29 Subsequently, participants provided their age and gender, were thanked, debriefed, and awarded course credit. The experimental procedure was approved by the institutional review board of the affiliated university. Specific examples of our materials as well as details of the data analysis procedure can be found in the Supplementary Material.

Results

Participants' choices in the social mindfulness trials were analyzed using a binomial mixed-effects logit model 28 in R. A significant main effect of condition was found (β = −0.29, Wald Z = −2.95, p < 0.001), with a larger proportion of socially mindful choices made in the anthropomorphic (M = 0.64 ± 0.19) versus the neutral (M = 0.52 ± 0.20) condition. No significant main effect was found for task partner (β = 0.10, Wald Z = 1.58, p = 0.087). However, a significant interaction effect of condition and task partner (β = −0.14, Wald Z = −2.19, p = 0.017) was found.

Participants made significantly more socially mindful decisions toward the human partner in the anthropomorphic (M = 0.69 ± 0.23) than in the neutral (M = 0.52 ± 0.24) condition (χ² = 24.68, p < 0.001). For the robot partner, this difference did not reach statistical significance (M = 0.60 ± 0.24 in the anthropomorphic condition vs. M = 0.53 ± 0.27 in the neutral condition; p = 0.074). The significant interaction thus indicates that the difference in social mindfulness between the neutral and anthropomorphic conditions was significantly larger for the human partner than for the robot partner.
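On the raw proportion scale, the descriptive means above give the following condition differences. This is only an illustration of the direction of the interaction: the logit model tests it on the log-odds scale, so these differences are not the model's estimates.

```latex
\Delta_{\text{human}} = 0.69 - 0.52 = 0.17, \qquad
\Delta_{\text{robot}} = 0.60 - 0.53 = 0.07, \qquad
\Delta_{\text{human}} - \Delta_{\text{robot}} = 0.10
```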

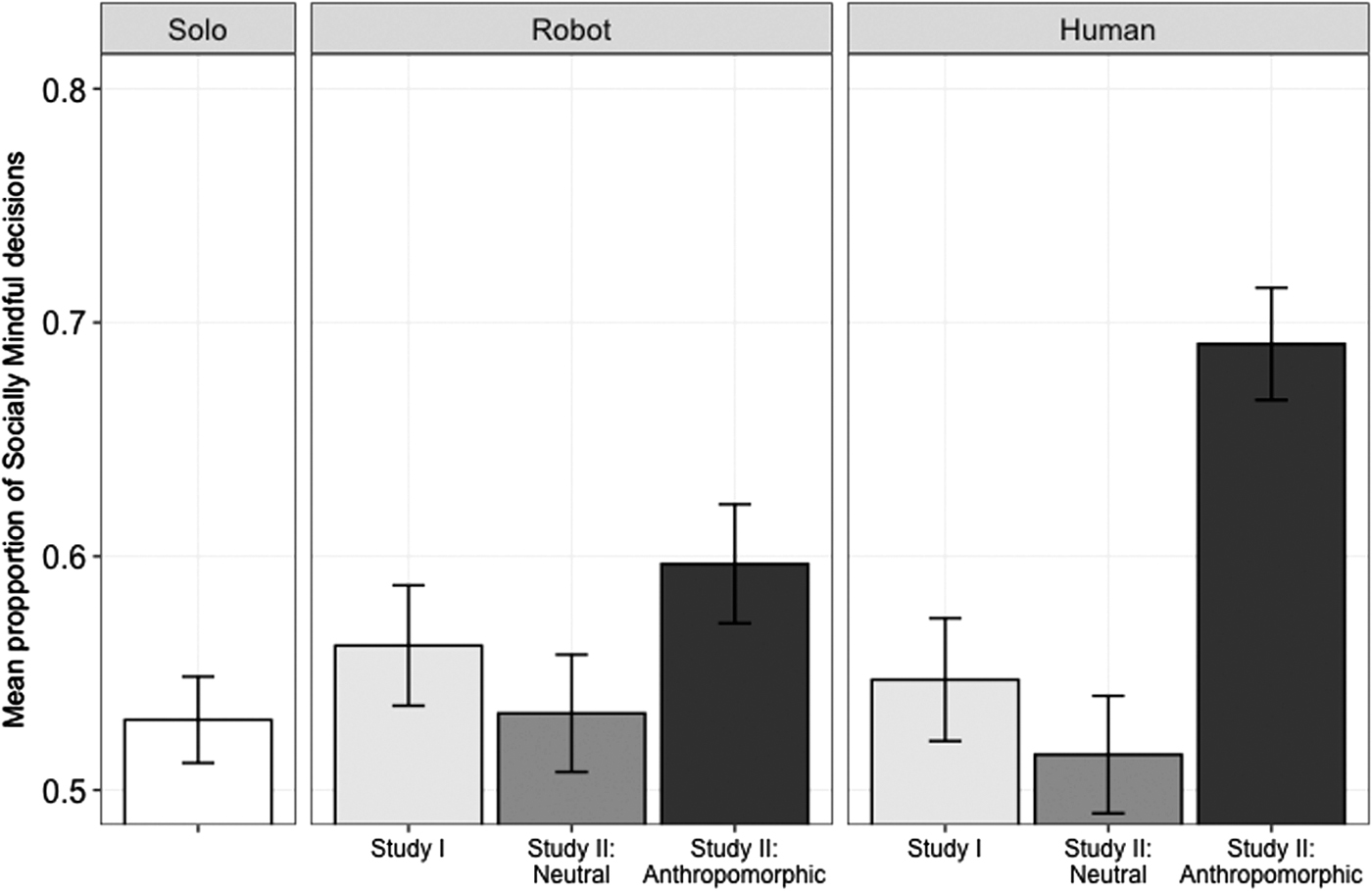

Two additional binomial mixed-effects logit models with the social condition (picture-only) from Study I as the reference group (Fig. 1) showed no significant difference between conditions for the robot partner (all ps > 0.396). For the human partner, the anthropomorphic condition yielded significantly more socially mindful choices than the social (picture-only) condition from Study I (β = 0.72, Wald Z = 3.53, p < 0.001). The neutral condition from Study II and social (picture-only) condition from Study I did not statistically differ (β = −0.14, Wald Z = −0.74, p = 0.338).

Fig. 1. Overview of statistical results. In the left-hand panel, the proportion of socially mindful choices in the solo condition of Study I is displayed. In the middle panel, the proportion of socially mindful choices with a robot task partner is illustrated, in the picture-only condition of Study I as well as in the neutral and anthropomorphic conditions of Study II. The same conditions are displayed for the human task partner in the right-hand panel.

General Discussion

The current research investigated whether anthropomorphizing robots would affect prosocial behavior in a social decision-making task. In Study I, the proportion of socially mindful decisions did not differ between the solo context and the social context with a human or robot partner. Results of Study II show that participants were significantly more socially mindful in the anthropomorphic condition, thus confirming hypothesis 2a. However, this effect was found only for the human partner: participants took the needs of their human partner into account more often when the partner's mental states and emotions were emphasized than when they were not. For the robot partner, the difference in the level of social mindfulness between the neutral and anthropomorphic conditions was not significant, so hypothesis 2b was not supported.

This research used an experimental paradigm that allowed us to measure day-to-day, low-consequence prosocial behavior. This distinguishes it from previous research on prosocial behavior toward robots, which relied on urgent and high-consequence scenarios. While previous research showed that people in such scenarios are indeed more likely to, for example, protect an anthropomorphized robot from harm, 5 trust an anthropomorphized car more despite it causing an accident, 21 or empathize more with a robot that is being physically mistreated, 6 our results indicate that this effect of anthropomorphism does not necessarily extend to more common, low-consequence prosocial considerations. This is in line with previous research showing that participants empathize more with a suffering than with a nonsuffering robot. 9 Moreover, our results match the dynamics of prosocial behavior in human–human interaction: people tend to be more prosocial toward someone as the urgency 7 or potential harm 8 of that person's situation increases. In our two experiments, the social decision-making trials were not constructed as highly urgent or highly harmful situations: the task partner simply lost out on an opportunity to choose between everyday items. In sum, our findings point to urgency as a relevant boundary condition for the effects of anthropomorphism in human–robot interaction.

It should be noted, however, that the neutral vignettes used in this study also included some anthropomorphic cues regarding a robot's autonomy. Moreover, it could be argued that the content of the vignettes confounded participants' perception of the agent. However, the distinct effects of the neutral versus humanizing vignettes on mentalizing, liking, and empathy were confirmed in previous research.5,29 In addition, previous studies used several different vignettes for each category (humanizing vs. neutral) and found no differences between the individual vignettes within each category; furthermore, the vignettes that previous studies had quantitatively confirmed as most distinctly emphasizing humanness versus neutrality were selected for the current study. Although previous work thus supports the humanizing versus neutral effects of the manipulation, it would be interesting to include measures of empathy and liking as potential mediators in follow-up research.

This research is among the few investigations of human–robot interaction that directly compared participants' behavior toward robots with behavior toward humans. Many experiments on human–robot interaction focus solely on how anthropomorphizing versus nonanthropomorphizing affects certain behavioral parameters. 24 However, our results show a clear distinction in the effects of our manipulation on human versus robot partners. Thus, if studies on human–robot interaction want to investigate certain behavioral parameters and draw conclusions about how those behaviors compare with human–human interaction, including an experimental condition in which those parameters are measured vis-à-vis another human seems pertinent.

Our findings are not in line with the long-standing Media Equation theory and the CASA paradigm.18,19 While these models of human–machine interaction hold that we treat any machine as a human as long as it displays sufficient social cues, in our study, anthropomorphic vignettes did not significantly increase participants' social mindfulness toward robots. This may be because our study did not involve any real-life interaction, in contrast to the empirical studies by Nass and Moon. 19 Our results should thus be corroborated in future research, especially in real-life human–robot interaction settings.

Given the increasing role of robotic devices in our daily lives, a better understanding of the mechanisms that support our interactions with them is pertinent. Our results show that the effects of anthropomorphism cannot be generalized across different types of social interactions: that we may be inclined to care for an anthropomorphic robot when it is about to be demolished does not mean that we take its needs and desires into account in ordinary situations.

Footnotes

References

Supplementary Material


For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.