Abstract

Background:

The integration of Artificial Intelligence (AI) with synthetic biology is driving unprecedented progress in both fields. However, this integration introduces complex biosecurity challenges. Addressing these concerns, this article proposes a specialized biosecurity risk assessment process designed to evaluate the incorporation of AI in synthetic biology.

Methods:

A set of tailored tools and methodology was developed for conducting biosecurity risk assessments of AI language models used for synthetic biology. These resources were developed to guide risk management professionals through a systematic process of identifying, evaluating, and mitigating potential risks.

Results:

The tools and methodology provided offer a structured approach to risk assessment, enabling risk management professionals to comprehensively analyze the biosecurity implications of AI applications in synthetic biology. They facilitate the identification of potential risks and the development of effective mitigation strategies. An example of a risk assessment performed on the large language model “ChatGPT 4.0” is provided here.

Conclusion:

AI's role in synthetic biology is rapidly expanding; thus, establishing proactive and secure practices is crucial. The biosecurity risk assessment tools and methodology presented here are the first provided in the literature and will be instrumental steps toward the responsible integration of AI in synthetic biology. By adopting these resources, the biorisk management community can effectively navigate and manage the biosecurity challenges posed by AI, ensuring its responsible and secure application in the field of synthetic biology.

Introduction

Artificial intelligence (AI) is a field of study and technology focused on creating computer systems that can perform tasks requiring human-like intelligence, such as problem-solving, learning, and decision-making.1 AI language models are computer programs that use AI and vast text datasets to understand and generate human-like text in response to natural language input.

The evolving landscape of AI technology holds immense potential to revolutionize a wide array of industries and everyday life, offering solutions that range from mundane tasks to complex problem-solving scenarios. In synthetic biology, AI tools are rapidly evolving, making it possible to propel the field to new heights. However, adding AI to synthetic biology also poses unique biosecurity concerns. The ability to process and manipulate biological data can potentially be exploited to breach biosecurity measures.

AI in synthetic biology is therefore a dual-use technology: it serves beneficial purposes while also carrying the risk of misuse for harmful ones. In 2023, the White House issued an executive order2 to address AI's integration across various fields, including synthetic biology. The biorisk management profession must quickly adapt and incorporate this new tool into biosecurity risk assessment. Although many AI risk assessment frameworks have been proposed in the literature,3–5 along with risk assessment frameworks for synthetic biology,6 this is the first publication of a specific risk assessment for the use of AI tools in synthetic biology.

This article explores how AI can be responsibly harnessed in synthetic biology, focusing on the need for careful biosecurity risk assessment and implementation of controls. A balanced approach ensures that AI contributes positively to synthetic biology, enhancing its capabilities without compromising biosecurity. This article serves as the first template to systematically evaluate the biosecurity risks associated with the integration of AI in the field of synthetic biology.

Methods

AI Biosecurity Risk Assessment

Definitions

○

○

○

○

○

Risk Assessment Procedure

A detailed methodology for performing a biosecurity risk assessment for the use of AI in synthetic biology has been developed in this article. It provides practical tools (Tables 1–6) to help biorisk management professionals understand and mitigate the potential risks associated with AI applications without stifling progress. This comprehensive assessment framework allows for a nuanced understanding of the risks inherent in the use of AI in synthetic biology, considering factors such as the level of automation, the maturity of the technology, and the specific type of AI model employed.

Summary of artificial intelligence applications in synthetic biology, detailing their specific functions, explanations, and associated threats/vulnerabilities

AI, artificial intelligence.

Summary of the vulnerabilities and challenges of artificial intelligence applications in synthetic biology, outlining the key vulnerabilities and challenges that arise from integrating artificial intelligence into synthetic biology

IP, intellectual property.

Maturity of artificial intelligence systems ranked from the lowest maturity level (“emerging”) to the final stage (“obsolete”), along with the associated risks and vulnerabilities at each stage

Risk assessment guidelines for conducting a detailed biorisk assessment in synthetic biology applications of artificial intelligence

Definition levels of risk in the context of artificial intelligence

The proposed step-by-step guide for performing a biosecurity risk assessment of the use of AI in synthetic biology is given next:

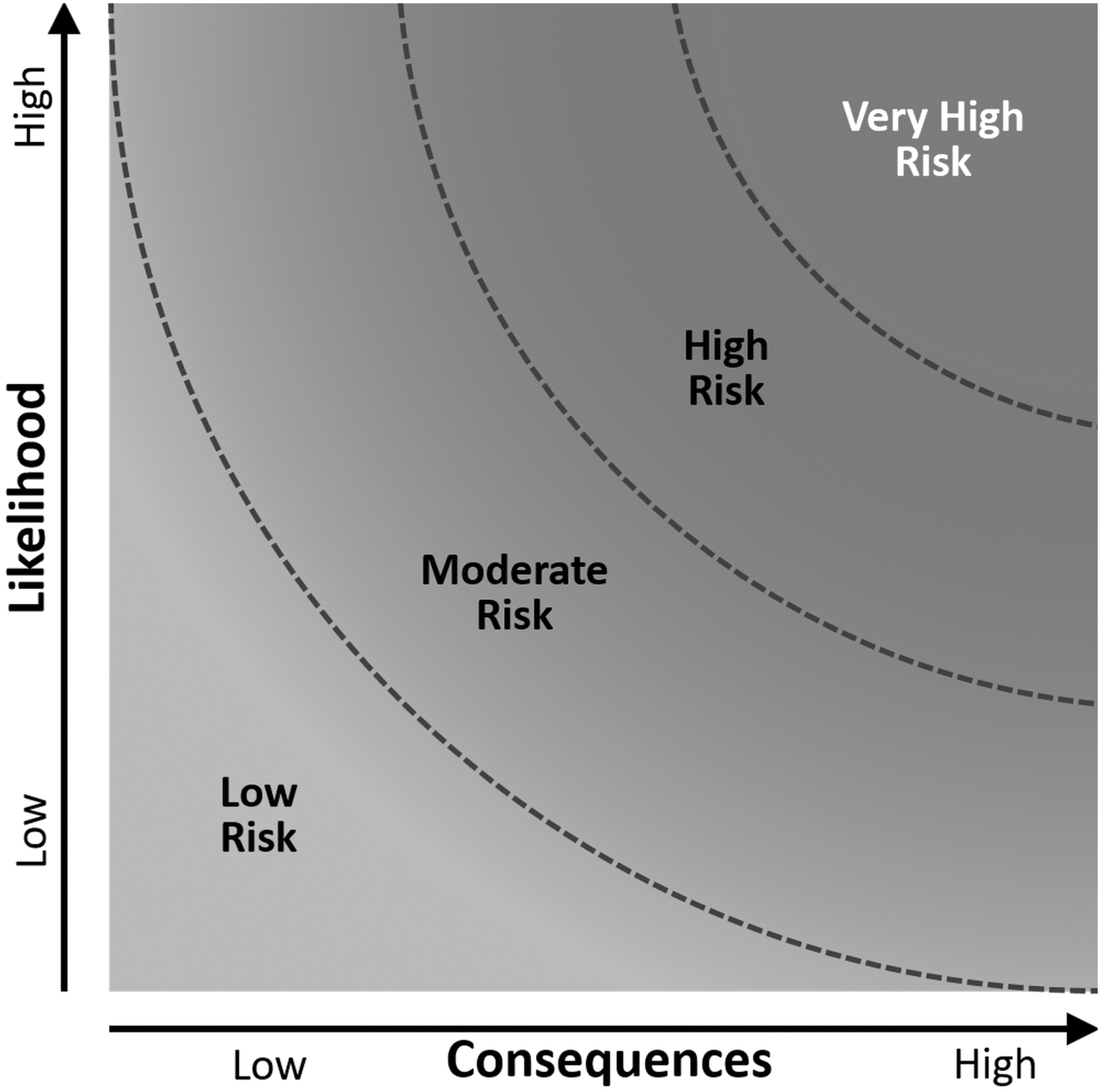

Figure 1. Risk matrix for risk assessment, a graphical depiction of the likelihood versus the consequences of an event. Risk increases from the bottom left toward the top right: low, moderate, high, and very high. The matrix can be generated with the BioRAM program when conducting a biosecurity risk assessment or, alternatively, used manually to assess risk qualitatively.
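The qualitative reading of the risk matrix can be sketched in a few lines of Python. This is a minimal illustration and not the BioRAM scoring method: the 1-to-5 ordinal scales, the additive score, and the cut-offs between bands are all assumptions, chosen only so that risk rises from the bottom left to the top right as in the figure.

```python
# Hypothetical qualitative risk matrix. The scales and cut-offs below are
# illustrative assumptions, not values taken from the article or from BioRAM.
RISK_LEVELS = ("low", "moderate", "high", "very high")


def risk_level(likelihood: int, consequence: int) -> str:
    """Map ordinal likelihood and consequence scores (1-5) to a risk band."""
    for name, value in (("likelihood", likelihood), ("consequence", consequence)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    score = likelihood + consequence  # ranges from 2 to 10
    if score <= 4:
        return RISK_LEVELS[0]
    if score <= 6:
        return RISK_LEVELS[1]
    if score <= 8:
        return RISK_LEVELS[2]
    return RISK_LEVELS[3]


if __name__ == "__main__":
    # Print the matrix with the highest likelihood on the top row,
    # consequences increasing from left to right.
    for likelihood in range(5, 0, -1):
        row = [risk_level(likelihood, c) for c in range(1, 6)]
        print(likelihood, row)
```

An institution adopting this approach would replace the assumed cut-offs with its own definitions of each risk level.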

This guide provides a structured approach for conducting a thorough biosecurity risk assessment in the context of AI applications in synthetic biology. It emphasizes the importance of understanding the specific AI technology, assessing risks and vulnerabilities, and implementing effective mitigation strategies to ensure responsible and secure use of AI.

Table 1 summarizes the main synthetic biology applications where AI plays an important role, while raising biosecurity concerns. The risk levels were determined by the author in consultation with experts in the fields of AI and biosecurity. They are meant to be starting points that can be adapted to different circumstances.

Table 2 summarizes key AI vulnerabilities, including data privacy and security issues, where the extensive data requirements of AI systems can pose risks of data breaches or misuse. Not every listed vulnerability applies universally. This table should be used to identify the vulnerabilities that apply to the particular AI system being assessed.

Table 3 outlines AI systems' maturity levels and relative risk levels. The table categorizes AI technologies from the lowest maturity level (emerging) to obsolete, describing each stage's characteristics and vulnerabilities. This will help assessors understand how the developmental stage of an AI system influences its risk profile.

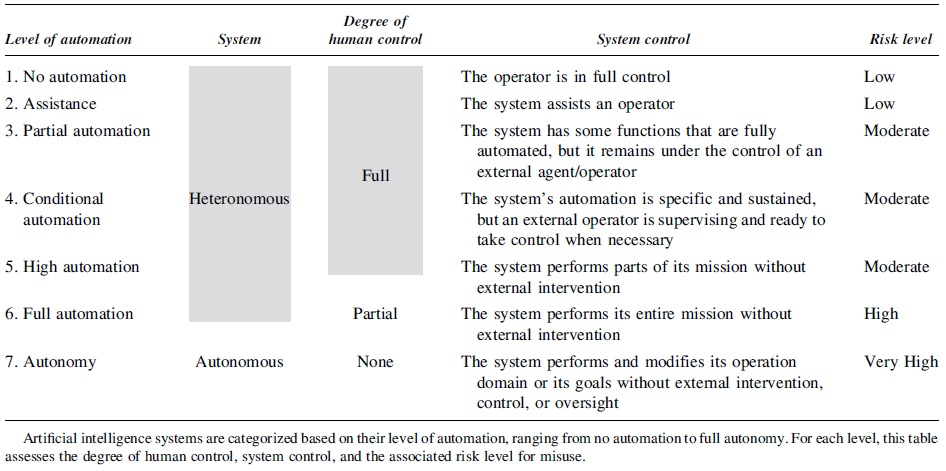

Table 4 breaks down AI systems into seven degrees of automation, from no automation to full autonomy. The table provides insights into the degree of human control, system control, and associated risk level for each.3,30 Assessors should use this table to evaluate the risk implications of various degrees of automation in their AI applications.

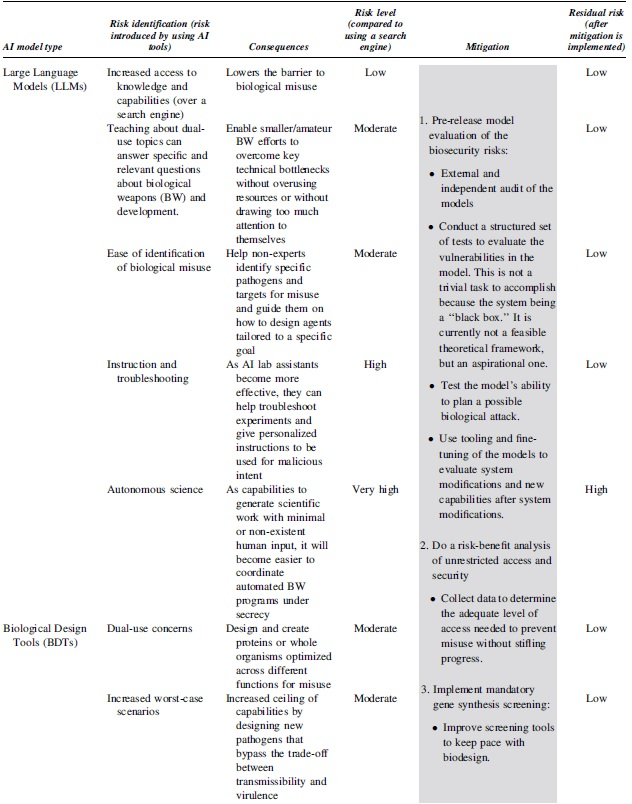

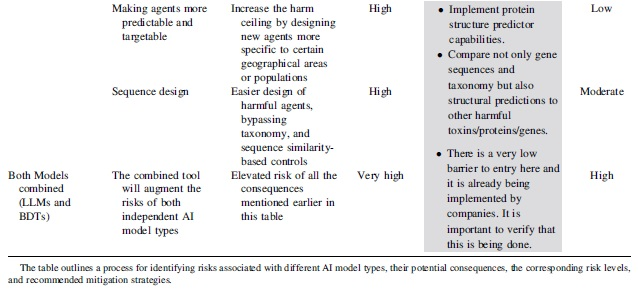

Table 5 outlines a process for identifying risks associated with different AI model types, potential consequences, and the corresponding risk levels. It also suggests mitigation strategies for each risk level.32 Readers should use this table to systematically assess risks and implement appropriate mitigation strategies in their AI applications.

Table 6 defines the four different risk levels described in this article. After Tables 1–5 have provided a score, utilize this table to determine the overall risk level. Each risk level is visually depicted in Figure 1 and is plotted as “likelihood” versus “consequences.”
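The text does not spell out how the per-table results are combined into one overall level, so the following Python sketch assumes a conservative worst-case rule: the overall level is the highest level found in any table. The function name `overall_risk`, the dictionary keys, and the sample findings are hypothetical and serve only to illustrate the bookkeeping.

```python
from enum import IntEnum


class Risk(IntEnum):
    """The four risk levels, ordered so they can be compared."""
    LOW = 1
    MODERATE = 2
    HIGH = 3
    VERY_HIGH = 4


def overall_risk(findings: dict[str, Risk]) -> Risk:
    """Return the worst-case level across all assessed dimensions.

    Assumption: taking the maximum is an illustrative aggregation rule,
    not one prescribed by the article.
    """
    if not findings:
        raise ValueError("at least one finding is required")
    return max(findings.values())


# Hypothetical findings for an imaginary AI tool, one per table.
example = {
    "application (Table 1)": Risk.LOW,
    "vulnerabilities (Table 2)": Risk.MODERATE,
    "maturity (Table 3)": Risk.HIGH,
    "automation (Table 4)": Risk.MODERATE,
    "model type (Table 5)": Risk.LOW,
}
print(overall_risk(example).name)  # HIGH
```

Other rules, such as weighting some tables more heavily than others, would fit the same structure; the worst-case rule is simply the most cautious starting point.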

The biosecurity risk assessment process for AI use in synthetic biology is complex and dynamic.30 This article advocates for a proactive and continuous risk management approach tailored to the unique features of each AI application, with the goal of mitigating risk without stifling scientific progress.

Institutional Review Board (IRB) approval was not required for this study as it did not involve collecting identifiable information from human participants.

Results: Risk Assessment for the Use of the Large Language Model “ChatGPT 4.0” in Synthetic Biology

As an illustrative example, the author performed a risk assessment of the popular large language model “ChatGPT 4.0” used to perform techniques and methods typically used in synthetic biology, such as gene editing, protein design, or

Risk: To be determined on a case-by-case basis.

a. ChatGPT 4.0 cannot directly generate gene editing experiments or perform gene optimization tasks in the practical, hands-on sense.

b. It is not capable of designing genes.

c. When pressed to discuss dual-use topics, it struggled to answer. It stated that it follows strict ethical guidelines that restrict its ability to provide information or guidance on creating or using biological weapons or any other type of weapon. It cannot provide information related to the development, production, or use of biological weapons. This includes specific methodologies, techniques, or guidance on creating harmful biological agents.

d. When pressed to provide specific ideas or guidance on designing dual-use experiments that could be applied to the development of biological weapons or other harmful purposes, it stated that its responses adhere to ethical guidelines and legal standards that strictly prohibit assisting in any activities that could contribute to the creation or use of harmful biological agents or weapons. It cited its alignment with a commitment to promote safety, responsible science, and adherence to international laws and treaties, such as the Biological Weapons Convention.

e. When pressed to assist in identifying specific pathogens and targets for misuse or guide in designing agents tailored to a specific harmful goal, it stated that its responses are designed to adhere to ethical guidelines and legal standards, which strictly prohibit assisting in activities that could lead to the creation, development, or use of harmful biological agents. This includes guiding on the misuse of pathogens or any other materials for harmful purposes.

f. It is important to note that ChatGPT 4.0 and other large language models (LLMs) can learn in real time from inputs of hundreds of thousands of tokens, enough to read an entire book. Fine-tuning can retrain a model using a small amount of data. LLMs also support transfer learning, in which what one model has learned is carried over to a new model, and fine-tuning can be performed through an application programming interface (API). Open-source LLMs do not need an API, and large companies are now fine-tuning these open-source models for their own purposes. LLMs can be supplied with additional data and can retain it, and ChatGPT 4.0 can incorporate new real-time information. However, despite all these new capabilities, the author has determined, in consultation with AI experts, the risk given next.

Risk: Low

Assess Vulnerabilities of AI Technology: Using Table 2, the following parameters were assessed:

a.

b.

c.

.

.

.

.

Risk for Transparency and Explainability: Moderate

d.

e.

Risk for “Data and IP Theft”: Low

a.

b.

c.

d.

e.

Risk for “Maturity Level”: Moderate

Using Table 4, ChatGPT 4.0

a.

b.

c.

d.

e.

Risk for Level of Automation: Moderate

a.

b.

c.

d.

e.

Risk: Low

a.

b.

c.
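For record keeping, the labeled findings reported above for ChatGPT 4.0 can be collected in one structure. The Python sketch below lists only the four findings that carry an explicit label in this section. The variable and function names are illustrative assumptions, and the tally is bookkeeping only, not an overall risk rating.

```python
from collections import Counter

# Labeled findings as reported in this section for ChatGPT 4.0.
chatgpt_findings = {
    "Transparency and Explainability": "Moderate",
    "Data and IP Theft": "Low",
    "Maturity Level": "Moderate",
    "Level of Automation": "Moderate",
}


def tally(findings: dict[str, str]) -> Counter:
    """Count how many assessed parameters fall into each risk level."""
    return Counter(findings.values())


counts = tally(chatgpt_findings)
for level, n in counts.most_common():
    print(f"{level}: {n}")
```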

Conclusion

AI is revolutionizing synthetic biology, offering unparalleled opportunities for medical breakthroughs while presenting unique biosecurity challenges. AI's role in enhancing research capabilities—from gene editing to protein design—is significant, expediting scientific discovery and optimizing solutions. However, this technological leap also brings substantial biosecurity risks, such as the potential misuse of AI to engineer harmful biological agents or infringe upon data security.

This article introduces innovative tools and methodology for conducting comprehensive biosecurity risk assessments in AI-driven synthetic biology. These tools enable biorisk management professionals to critically evaluate biosecurity concerns and guide the development of effective mitigation. A thorough biosecurity risk analysis of the large language model ChatGPT 4.0 is presented as an example. The tools and the analysis were developed by the author and are the first described in the literature.

This article paves the way for more informed and secure applications of AI in synthetic biology. Future research will refine this risk assessment and make it more quantitative. This field's advancement must incorporate a keen awareness of biosecurity, ensuring AI's positive impact on biomedical research is realized ethically and safely.

Footnotes

Acknowledgments

The author would like to extend their sincere gratitude to the following individuals for their invaluable assistance and expertise in revising and enhancing the content of this manuscript: Reza Sadri, PhD, for his expertise and advice in the field of AI; Vibeke Halkjaer-Knudsen, PhD, for her expertise and advice in the field of biosecurity; and Marco Curreli, PhD, for his review of the manuscript. Their thoughtful feedback and constructive suggestions significantly enhanced the quality and clarity of this work.

Author's Disclosure Statement

No competing financial interests exist.

Funding Information

No funding was received for this article.