Abstract

Quality assurance activities have been well established in medicine and surgery for over 20 years. More recently, clinical indicators have been developed and applied in a variety of settings where they have been associated with improvements in standards of care. Within psychiatry, indicators have been developed for use in inpatient psychiatric units, but not for specialist psychiatric programs such as consultation–liaison (C–L) psychiatry. In order to address this, the Inner West Area Mental Health Service-Royal Melbourne Hospital (IWAMHS-RMH) C–L service developed a program that incorporates clinical indicators into clinical practice using a simple and flexible data collection system.

The need to monitor service delivery in C–L psychiatry has been stimulated by a number of recent challenges. First, general hospitals are treating increasing numbers of patients with shorter lengths of stay [RMH clinical coding services, personal communication]. At RMH, the average length of stay in general medical and surgical beds is 5.5 days. Second, general hospital psychiatry units have been closed. Patients with medical–psychiatric comorbidity are now managed on general wards with C–L support. Third, funding formulas have placed limits on C–L services. Overall, it has been argued that an emphasis on the treatment of ‘serious mental illness’ has been associated with a decrease in the provision of resources to patients with medical–psychiatric comorbidity [1,2].

The nature of C–L work in emergency departments is also changing. Patients previously seen in psychiatric hospital admitting offices are now seen in the emergency department. These patients are often acutely unwell and behaviourally disturbed. This population is different from that seen in inpatient C–L and has different needs. The resources allocated to C–L have to be spread between these two populations.

The changing role and contemporary challenges for C–L highlight the need for effective data collection and monitoring of care. It is difficult to adapt a service to changing circumstances without knowing the nature of those changes. The collection of a select number of core parameters of service activity can perform this function. Referral and contact information are two examples of core parameters. Simple analysis of these parameters will identify areas of need, such as units with high referral rates. Changes over time can also be followed. These changes may include increased referrals from the emergency department or new referrals arising from a liaison attachment.

Clinical indicators provide information as to the quality of service provision. This complements the quantitative data outlined in the previous paragraph. Clinical indicators are markers. They are objective, arbitrary and simplified. The degree to which they are useful depends on their capacity to shed light on the core processes of a program. It is important, as a consequence, that their development and use should be guided by those providing direct clinical care. This improves the chances not only that the indicators will reflect real components of care, but also that other clinicians will respond to them in a meaningful way.

Clinical indicators and quality assurance

The Australian Council on Healthcare Standards (ACHS) commenced a voluntary program of accreditation of hospitals in 1974. Medical clinical indicators were introduced into the ACHS accreditation program in 1993 [3]. Clinical indicators have been devised as objective measures of clinical management and care. They are used to identify problems within a service and to compare performances between services. A clinical indicator should be relevant to clinical practice and practical to measure.

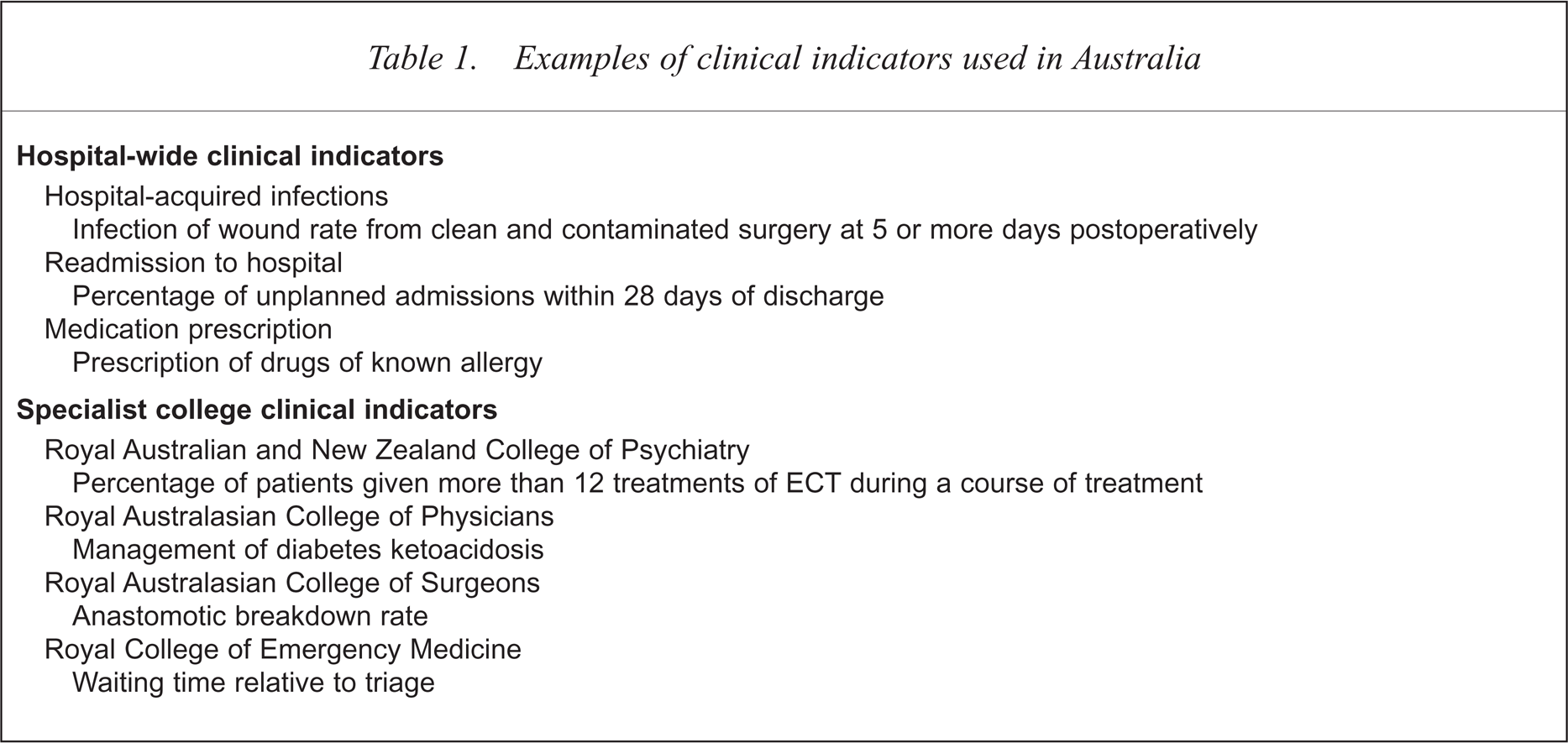

The ACHS Care Evaluation Program was established in 1989. Through this program specialist colleges became involved in the development of clinical indicators [3]. As shown in Table 1, both hospital-wide and specialist indicators have been developed. Indicators may measure either an outcome of care, such as morbidity, or a process, such as compliance with management criteria. Under the guidelines for accreditation, the ACHS does not specify the number or type of indicators to be used. The expectation is rather that the hospitals use those most relevant, and thereby display an active and appropriate quality assurance program.

Table 1. Examples of clinical indicators used in Australia

A clinical indicator is used within a cycle of quality improvement. First, the indicator is measured over a period of time. If performance by the hospital or unit is maintained above the threshold as defined in the indicator, then the indicator may be replaced or the threshold raised. If there is a failure to achieve the threshold, then an intervention is planned and undertaken. The effects of this intervention are monitored, thereby completing the cycle. In reviewing the use of clinical indicators in a variety of Australian settings, Portelli and others have argued that they are ‘useful tools for facilitating improvements in the quality of patient care’ [4,5].
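The decision step in this cycle can be illustrated with a minimal sketch. It is not drawn from the ACHS program or from the service described here; the names `Action` and `review_indicator` are hypothetical, and "maintained above the threshold" is read, as an assumption, to mean that every measurement period met or exceeded the threshold.

```python
from enum import Enum


class Action(Enum):
    """Possible next steps in the quality improvement cycle (hypothetical labels)."""
    RAISE_OR_REPLACE = "replace the indicator or raise the threshold"
    INTERVENE = "plan an intervention, then continue monitoring"


def review_indicator(period_rates, threshold):
    """Choose the next step from rates measured over several periods.

    period_rates: fraction of cases meeting the indicator in each period (0.0 to 1.0).
    threshold: target fraction defined in the indicator.
    """
    if not period_rates:
        raise ValueError("at least one measurement period is required")
    # Assumption: performance is "maintained" only if every period met the threshold.
    if all(rate >= threshold for rate in period_rates):
        return Action.RAISE_OR_REPLACE
    return Action.INTERVENE
```

Under these assumptions, a unit that met a 0.9 threshold in every period would be a candidate for a higher threshold, whereas a single period below it would prompt an intervention followed by further measurement.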

The first version of clinical indicators for psychiatry was written jointly with the Royal Australian and New Zealand College of Psychiatrists in 1996 [6]. A second version was published in 1998 [7]. The indicators issued are relevant only to psychiatric unit inpatient care. They are not appropriate for use in C–L psychiatry.

The Royal Melbourne Hospital consultation–liaison service

The RMH is a 400-bed teaching hospital in northern inner-Melbourne. Psychiatric services located at the hospital are integrated with the Inner West Area Mental Health Service. The C–L service has close links with the University of Melbourne Department of Psychiatry, and the Neuropsychiatry Unit, which is funded as a state-wide service by the state Department of Human Services.

The Royal Melbourne Hospital C–L service provides 24-hour consultation to all medical and surgical wards and the emergency department. Liaison attachments have been established with the neurology and infectious diseases units. The Neuropsychiatry Unit has close involvement with the Comprehensive Epilepsy Program. The C–L service is staffed by two full-time psychiatry trainees, one psychiatric nurse based in casualty, the equivalent of a full-time consultant divided among four individuals, and a half-time secretary. The trainees are typically in the third year of training. The nurse is a level 4 with experience in both emergency and C–L psychiatry. The trainees are on site during normal hours and on call after hours. The nurse is on site during normal working hours. The consultants are on call at all times.

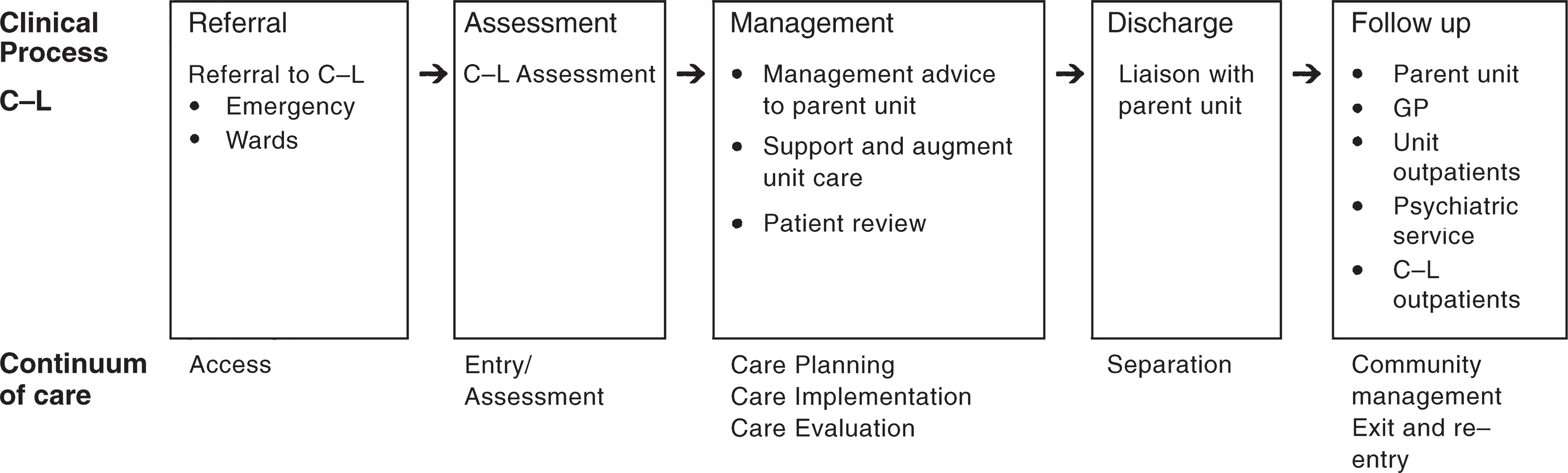

The consultation process can be summarised using the ACHS continuum of care format. This is demonstrated in Fig. 1. Referrals from the inpatient medical staff are made to a C–L trainee using the hospital paging system. A written referral is completed concurrently and left in the patient notes. A trainee initially assesses the patient. The patient is subsequently reviewed by a consultant, unless precipitous discharge occurs. The neuropsychiatry unit conducts neuropsychiatric assessments when requested by the referring unit or the general C–L service.

Figure 1. Continuum of care in consultation–liaison psychiatry

Referrals from the emergency department are made verbally to the liaison nurse or the rostered trainee. The liaison nurse will conduct an initial assessment and discuss the patient with the registrar or request a separate review. A consultant is available to discuss the patient and may be directly involved for complex or difficult problems.

All current cases are discussed at weekly review meetings. Separate meetings are convened for inpatient and emergency C–L. They are attended by the trainees, the coordinating consultant and, in the latter case, the C–L nurse.

Follow up may be arranged in a variety of settings. These include follow up by the referring unit, a general practitioner, a private psychiatrist, the Area Mental Health Service, or at a weekly C–L outpatient clinic. The C–L clinic is staffed by two consultants and two trainees.

Development of the University of Melbourne Department of Psychiatry-Royal Melbourne Hospital consultation–liaison database and clinical indicators

In February 1998, the IWAMHS-RMH consultation–liaison service undertook the development of a new data collection process designed to support clinical care, training and research activities. A working group was established, led by the academic staff of the University of Melbourne. The aims of the group were to: (i) develop and trial an appropriate data collection process and database; (ii) develop a process to ensure that the data were collected in a timely and accurate manner; (iii) devise and implement clinical indicators for C–L; and (iv) integrate the model into clinical practice by making it relevant, efficient and easy to use.

The working group explored a range of pre-existing data collection systems [8–10]. The MICRO-CARES system [11] and the work of the European Consultation–Liaison Workgroup (ECLW) [12–14] were important examples.

The MICRO-CARES optical scan clinical database system had been the common platform used by C–L services in a range of Australian settings [15–18]. The system was originally established primarily as a tool for internationally standardised research and has provided valuable information on the characteristics of patients referred to C–L services and on patterns of treatment. The system, however, had significant limitations in addressing the aims of our project. The MICRO-CARES forms are complex and time-consuming to complete. This has led to dissatisfaction among those completing them, especially trainees, and to difficulties with compliance. Furthermore, the data domains used in MICRO-CARES are inflexible, making adaptation to local needs and the incorporation of clinical indicators difficult.

The ECLW collaborative study provides another example of data collection in C–L psychiatry [12–14]. A key aim of the ECLW study was to describe different patterns of C–L service delivery in Europe with a view to developing minimal standards for C–L services. One of the instruments devised for the study was a patient registration form [13]. This form included information on admission and referral characteristics, sociodemographic variables, past history, medical and psychiatric diagnoses, clinical state and C–L input. The ECLW saw these parameters as fundamental and as fulfilling a number of functions, including stimulating service development and guiding health care policy.

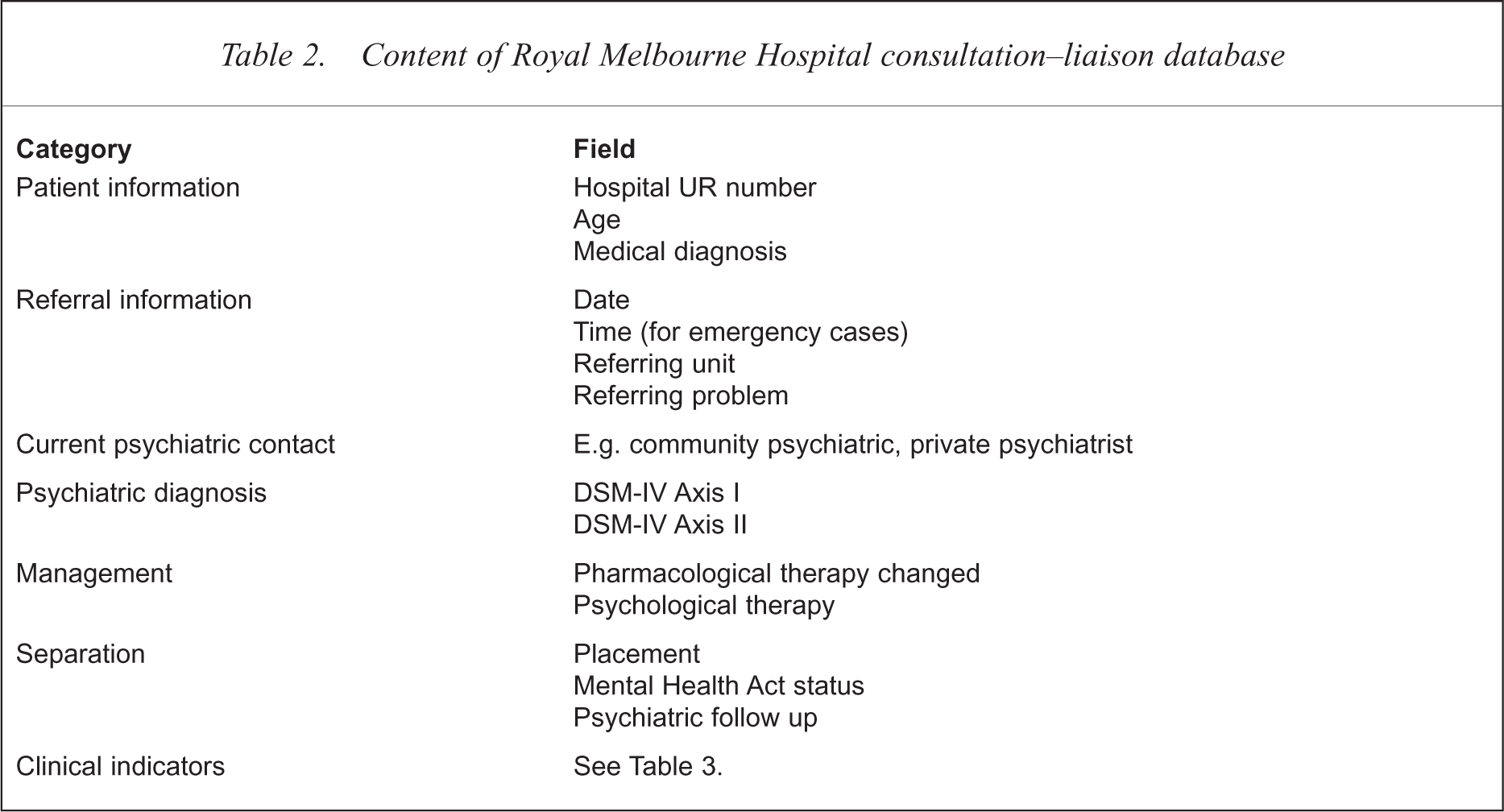

Following the literature review, the working group defined the parameters that would be collected within the RMH database. The format contained seven key areas, as summarised in Table 2.

Table 2. Content of Royal Melbourne Hospital consultation–liaison database

The process for the formulation of the clinical indicators was similar to that used to decide on the database fields. The hospital-wide and college-specific indicators already in use were reviewed [3–5]. In contrast to the extensive literature on improved outcomes with C–L interventions, however, no articles on the use of clinical indicators in C–L were found.

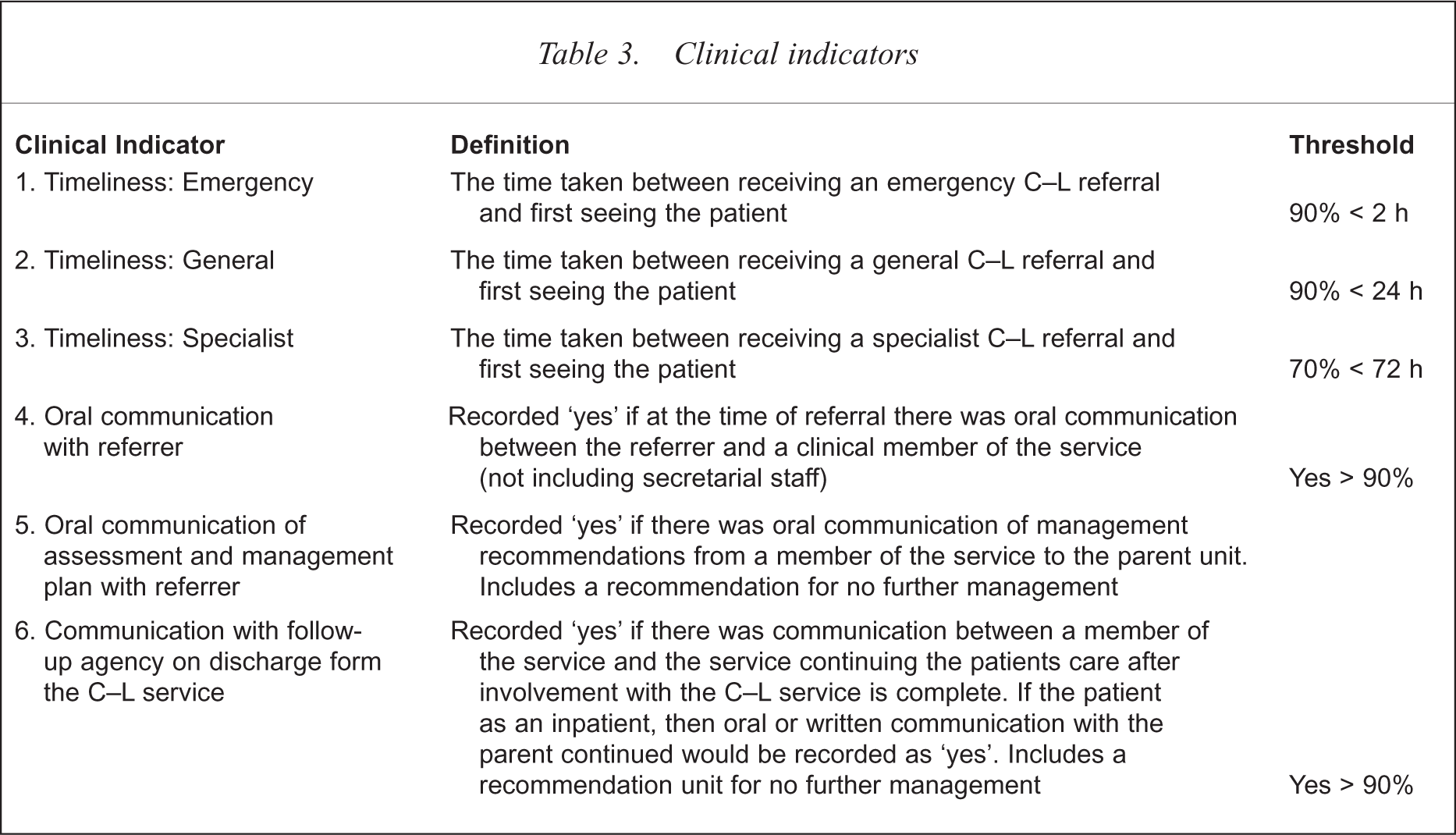

The continuum of care in C–L psychiatry seen in Figure 1 was used in the development of the clinical indicators. Key processes are defined in each box. After exploring a range of options, timeliness and communication were chosen as functions to be developed into indicators. In defining the timeliness clinical indicators, a distinction was made between patients seen in the emergency department, those seen by the general C–L service and those receiving specialist neuropsychiatric assessment. Communication, a central tenet of the consultation process, was recorded at assessment, after formulation of a management plan and at discharge (Table 3).

Table 3. Clinical indicators

A ‘tick-a-box’ data collection sheet was designed on which to record the data. Information was recorded at referral, assessment and at discharge from the service. The sheets took about 2 min to complete. Contact information required for the State Department of Health and Human Services was also recorded.

The final task of the working group was to develop a computerised database. Microsoft Access 97 (Microsoft, Redmond, WA, USA) was used as the database platform. The program was written so as to generate time-span reports relating to each of the parameters of service delivery and the clinical indicators. The program is adaptable. For example, new fields can be added and additional reports devised.
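The idea of a time-span report can be sketched in a few lines. This is an illustration only and does not reproduce the Access 97 program; the function `referrals_by_unit`, the field names `referred_on` and `referring_unit`, and the sample rows are all hypothetical and invented for the example.

```python
from collections import Counter
from datetime import date


def referrals_by_unit(records, start, end):
    """Count referrals per referring unit with start <= referred_on <= end."""
    return Counter(
        r["referring_unit"] for r in records if start <= r["referred_on"] <= end
    )


# Made-up rows for illustration; they are not data from the service.
records = [
    {"referred_on": date(1998, 8, 3), "referring_unit": "neurology"},
    {"referred_on": date(1998, 8, 17), "referring_unit": "emergency"},
    {"referred_on": date(1998, 8, 21), "referring_unit": "neurology"},
    {"referred_on": date(1998, 9, 2), "referring_unit": "emergency"},
]

# Report for a single calendar month; any start and end dates could be used.
august = referrals_by_unit(records, date(1998, 8, 1), date(1998, 8, 31))
```

Because the grouping field is simply a key in each record, a report on a newly added field would require no change to the counting logic, which is one way to read the adaptability described above.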

Collection and use of consultation–liaison database and clinical indicators

The system of data collection, clinical indicators and weekly reviews was fully implemented in August 1998. Data collection sheets were commenced for all new referrals. At the time the referral was received, the trainee or nurse recorded the patient and referral information. Once an assessment was completed, the diagnostic and management information was recorded. The diagnosis was derived from a clinical assessment conducted by the trainee and usually reviewed by the consultant. DSM-IV categories were used [19]. The final information on separation was completed after discharge.

The forms relating to current and recently discharged patients were reviewed at the weekly clinical review meetings. All patients seen in the previous week were discussed briefly using the data collection sheet as a patient summary and prompt. Any incomplete or ambiguous fields were corrected. This process allowed the consultant to review referral and diagnostic problems not previously addressed and to monitor discharge planning and follow-up arrangements. The reviews also facilitated the monitoring of workload, referral patterns and systemic problems affecting the service. After the weekly review meetings, the sheets of patients who had been discharged were forwarded to the C–L secretary for entry into the computerised database. The secretary required approximately 1 hour of training in order to feel confident about data entry. Any incomplete or unclear forms were returned to the review meetings for correction.

The feedback of information occurred at a monthly administration meeting. All C–L service staff attended this meeting. The monthly reports generated from the computerised database were tabled. These reports provided information on the nature of referrals, patient diagnoses and patient discharges over the previous month.

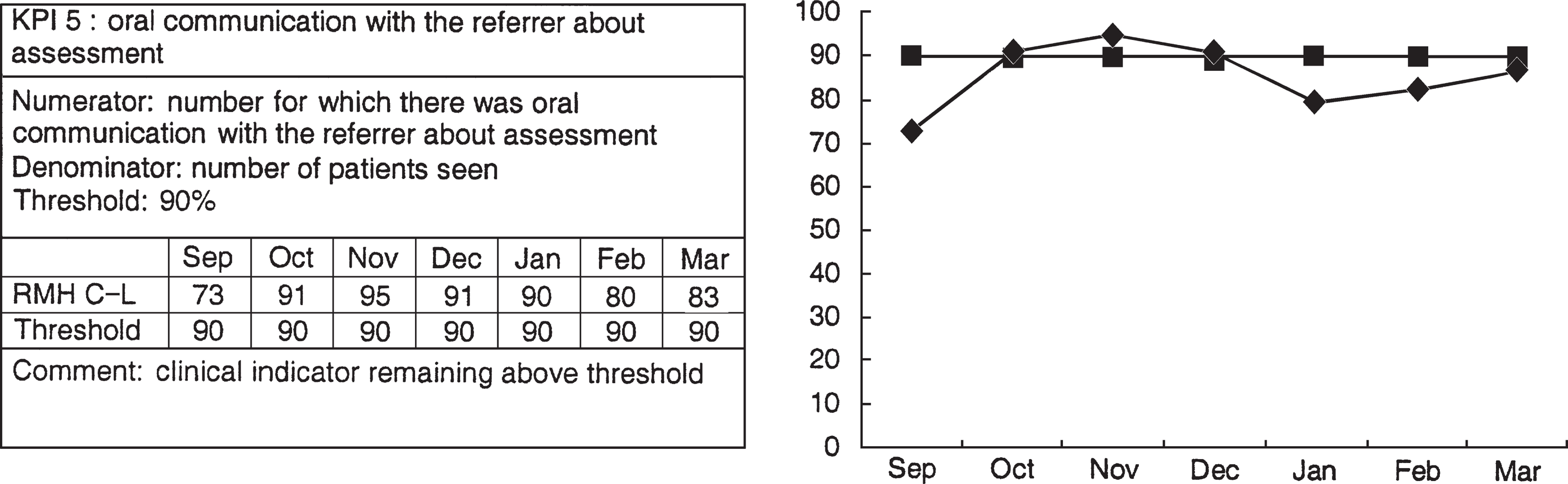

The clinical indicators were also presented at the monthly administration meeting. The IWAMHS-RMH format for Key Performance Indicators (KPIs) was used, as illustrated in Figure 2. Each KPI is presented relative to its target threshold. In the case of KPI number 5, for example, the threshold was set at 90%. In other words, performance was deemed adequate if there was oral communication with the referrer about the results of the assessment in 90% of cases. The KPIs, along with comments from the meeting, were forwarded to the Area Mental Health Service Clinical Standards Development Committee.

Figure 2. Clinical indicator presented in Key Performance Indicator format
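The threshold comparison described for KPI number 5 can be expressed as a short calculation. The sketch below is illustrative and is not the service's reporting program; the name `kpi_summary` is hypothetical, and it assumes that a result of exactly 90% counts as meeting the threshold.

```python
def kpi_summary(outcomes, threshold_percent=90):
    """Return (percent achieved, threshold met) for one reporting period.

    outcomes: one boolean per case, True if the monitored action was recorded
    as performed (for example, oral communication with the referrer).
    """
    total = len(outcomes)
    if total == 0:
        raise ValueError("no cases recorded for this period")
    achieved = sum(1 for done in outcomes if done)
    percent = 100 * achieved / total
    # Integer comparison avoids rounding problems at exactly the threshold.
    # Assumption: reaching the threshold exactly counts as meeting it.
    met = 100 * achieved >= threshold_percent * total
    return percent, met
```

With ten cases in a month, nine recorded communications would meet a 90% threshold and eight would not, which is the kind of result tabled at the monthly meeting.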

Discussion

The University of Melbourne–Royal Melbourne Hospital C–L database and clinical indicators were developed in order to address a number of needs. These included a desire to explore the usefulness of clinical indicators in C–L in view of their increasing application in other areas of medicine. The development of an underlying database was central to this process and complemented an established school of thought highlighting clinically relevant data collection as an important component of a modern C–L program. It was hoped that the use of these two tools (clinical indicators and clinically relevant data collection) would be useful in addressing some of the current and future challenges for C–L psychiatry.

The development of the system occurred on a platform of clinical and academic experience. This allowed for a course to be plotted between the often conflicting demands of clinical work and administration. This balance seemed particularly important given the difficulties experienced with other data collection systems. The project had support from both the clinical staff and hospital administration. This support was important in its successful implementation, especially so given the time frame between commencement and implementation. The new system required staff education and changes to timetables. In order for similar systems to be used in other settings, a similar degree of motivation and willingness to change would be required.

The experience of the system to date has been positive. The tracking of patients along the continuum of care has resulted in an increased confidence that patients are not ‘falling between the cracks’. The clinical indicators appear valuable in a number of ways. First, the recording of them highlights the importance of the process being monitored. This improves the likelihood of its implementation in the clinical setting. Second, the indicators provide a measure of how well the continuum of care is operating. This reflects the quality of service the program is providing to patients and the hospital. Third, clinical indicators can identify specific problems and monitor the effectiveness of interventions conducted at the program level.

The use of the database has led to an increased understanding of the role and function of the C–L service within the general hospital. It has also helped clarify the role of C–L within the Area Mental Health Service. The demonstration of the number and nature of psychiatric contacts in the emergency department has promoted a closer working relationship with the community mental health services. The detection of patients presenting to the emergency department who are currently engaged with community psychiatric services has led to improved feedback about these patients to their case managers. Information from the database has also been useful when describing the C–L service to general hospital units.

The system has been adopted with minor alterations by other C–L services within the Healthcare Network. A network C–L committee has been established to oversee this development. Data are being collected by C–L services in other hospitals. The potential exists for meaningful comparisons of service use and clinical performance between sites, and for collaboration in research.

A number of difficulties can be identified in the system. The collection and review of data have the potential to unduly impinge on clinical resources. Time is required to complete the forms, submit them, enter the data and review the results. This occurs in addition to the other data collection already established within mental health and hospital services. There is some duplication of data between the hospital, mental health and C–L systems. This is clearly an inefficiency that will continue until there is integration between hospital, mental health and programmatic databases.

Compliance will always be an important issue in data collection in C–L. It may be argued that compliance was maintained as a consequence of the initial enthusiasm about the project. With time, compliance may fall. Ultimately, the degree to which data collection becomes an accepted part of clinical practice in C–L is likely to depend on the ease of collection and the utility of the data. We endeavoured to address both these factors in the development of our system. For example, the use of the data collection forms at the clinical reviews gives the process immediate, recognisable benefits for the trainees and consultants. Likewise, the monthly distribution of information provides rapid feedback about the data collected.

The reliability of the data and clinical indicators may be questioned. The fields relating to reason for referral and management, for example, require judgement in choosing the categories of best fit. The diagnosis is obtained through clinical assessment rather than structured interview. The clinical indicators that require the recording of whether an action was successfully performed are also open to a degree of interpretation. Validity was optimised, as far as possible, through the use of clinical supervision and the circulation of definitions.

The implementation of clinical indicators in Australian medicine is one method of trying to improve standards of care. It is clearly not the only method. The use of objective measures of clinical practice may be viewed suspiciously by those who are concerned about managerial interference in medicine. Our experience to date has been encouraging. The use of clinical indicators in C–L psychiatry, complemented by a flexible database, can be of value. Furthermore, an active involvement in the development of these clinical indicators has led to a better understanding of the service and of the processes of quality improvement. It has also informed debates about service provision within the current managerial environment. It seems better to have mastered clinical indicators than to fear mastery by them.

Acknowledgements

We wish to acknowledge Joyce Goh for her advice on the development of Key Performance Indicators, and Irene Esquival for providing invaluable IT technical support.