Abstract

During a dialogue, agents exchange information with each other and thus need to deal with incoming information. For that purpose, they should be able to reason effectively about the trustworthiness of information sources. This paper proposes an argument-based system that allows an agent to reason about its own beliefs and about information received from other sources. An agent's beliefs are of two kinds: beliefs about the environment (like the window is closed) and beliefs about trusting sources (like agent i trusts agent j). Six basic forms of trust are discussed in the paper, including the most common one, trust in sincerity. Starting with a base which contains such information, the system builds two types of arguments: arguments in favour of trusting a given source of information and arguments in favour of believing statements which may be received from other agents. We discuss how the different arguments interact and how an agent may decide to trust another source and thus to accept information coming from that source. The system is then extended in order to deal with graded trust (like agent i trusts agent j to some extent).

Keywords

Introduction

An increasing number of software applications are being conceived, designed, and implemented using the notion of autonomous agents. These applications range from email filtering (Maes, 1996), through electronic commerce (Rodriguez, Noriega, Sierra, & Padget, 1997; Wellman, 1993), to large industrial applications (Jennings et al., 1996). In all of these disparate cases, the agents are autonomous in the sense that they have the ability to decide for themselves which goals they should adopt and how these goals should be achieved (Wooldridge & Jennings, 1995). In most such applications, the autonomous components need to interact with one another because of the inherent interdependencies between them. They need to communicate in order to resolve differences of opinion and conflicts of interest that result from differences in preferences, to work together to find solutions to dilemmas and to construct proofs that they cannot manage alone, or simply to inform each other of pertinent facts. In other words, they need the ability to engage in dialogues. Consequently, agents should be able to manage and deal with trust in information sources. In negotiation dialogues, for instance, one makes contracts with trustworthy agents. More generally, agents consider information coming from other sources only if these sources are trustworthy. Because of this requirement to provide agents with the ability to deal with trust, a significant body of work has been produced. Two main categories of work can be distinguished:

Works on understanding and formalising the notion of trust in information sources. Such works try to answer the question: what does the sentence ‘agent x trusts agent y’ mean? Examples of answers can be found in Castelfranchi (2011), Castelfranchi and Falcone (2000), Falcone, Piunti, Venanzi, and Castelfranchi (2013) and Marsh (1994). In Demolombe (1998, 2001), it is argued that trust is generally not absolute but rather concerns some properties of an agent, like his or her competence, sincerity, cooperativity …

Works on reasoning about trust. The idea is to decide whether or not to trust a given source of information. Two categories of models have been proposed: (i) statistics-based models (Matt, Morge, & Toni, 2010; Shi, Bochmann, & Adams, 2005), which rely on the past behaviour of a source in order to predict its future behaviour, and (ii) logical models (Demolombe, 2004; Demolombe & Lorini, 2008), which infer trust in some properties from trust in other properties.

Moreover, since the seminal book by Walton and Krabbe (1995), in which they distinguished six types of dialogue, there has been much work on providing agents with the ability to engage in such dialogues. Typically, these works focus on one type of dialogue, such as persuasion (Amgoud, Maudet, & Parsons, 2000), inquiry (Black & Hunter, 2009), negotiation (Sycara, 1990) or deliberation (McBurney, Hitchcock, & Parsons, 2007). Furthermore, Walton and Krabbe emphasised the need to argue in dialogues in order to convince other parties to accept opinions or offers. Consequently, in most works on modelling dialogues, agents are equipped with argumentation systems for reasoning about their own beliefs, building arguments and evaluating arguments received from other sources. While this use of argumentation is a common theme in all the work mentioned above, none of these proposals considers trust in information sources when dealing with incoming information or when making deals with other agents. They rather assume that agents are trustworthy and accept any information (respectively, offer) sent by any agent as soon as it does not contradict their own beliefs (respectively, it satisfies their goals). However, agents are not necessarily sincere or reliable, as argued in the extensive literature on trust in information sources. This means that in existing works, agents may accept claims even if their sources are not trustworthy. They may also make deals with unreliable agents.

This paper fills this gap by proposing an argumentation system that agents may use in dialogues for reasoning about different kinds of beliefs, including beliefs about trust in information sources. The system thus fulfils three tasks. It determines whether:

(i) to believe a given statement; (ii) to trust a given source or not; (iii) to accept or reject a piece of information (or an offer) received from a source.

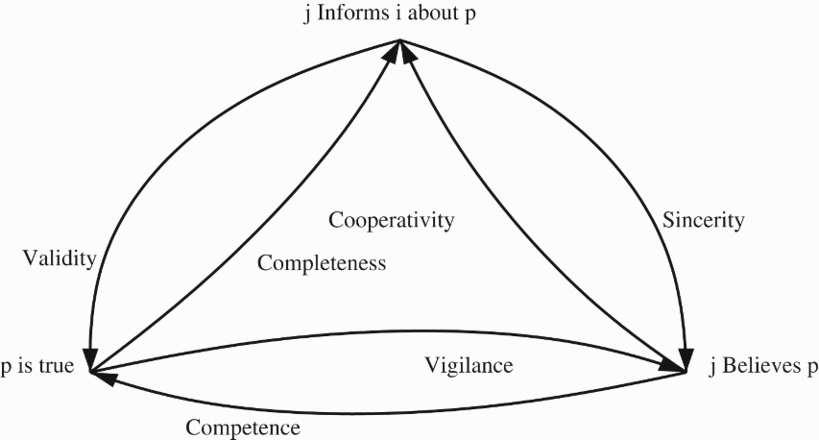

We consider a fine-grained notion of trust as opposed to absolute trust. Indeed, an agent trusts (or distrusts) another agent with respect to a given property and not in an absolute way. For instance, one may trust someone in his sincerity but not in his competence. In this paper, we focus on the six properties identified by Demolombe (1998, 2004), namely validity, completeness, sincerity, cooperativity, competence and vigilance. In the first part of the paper, trust is considered as a binary notion, i.e. an agent either trusts a given property of an entity or not. The system starts with a belief base which is encoded in modal logic and which contains formulas expressing information about the environment (e.g. my car is red) and information about trust (e.g. agent i trusts in the sincerity of agent j). It builds arguments in favour of statements and establishes the attacks between them. The arguments are evaluated using Dung's semantics (Dung, 1995), and finally the inferences to be drawn from the base are identified. We show that the system satisfies desirable properties, namely the rationality postulates defined in Amgoud (2013) about consistency and closure under the consequence operator. In the second part of the paper, the system is extended in order to deal with graded trust as developed in Demolombe (2009) and in Demolombe and Liau (2001). The logical language used for representing beliefs is extended so as to encode certainty degrees of beliefs (such as, agent i has some doubts about climate change) and regularity degrees of relationships between facts (such as, if we are in London, it rains almost every day). From these two kinds of degrees, each argument is assigned an importance level, which may differ from one argument to another. Finally, arguments are evaluated using not only the attack relation but also a preference relation derived from the importance levels of arguments.

The paper is structured as follows: Section 2 introduces the logical formalism that will be used for representing and reasoning about agents' beliefs. Section 3 defines the six forms of trust that were initially introduced in Demolombe (2004) and Lorini and Demolombe (2008) in the case of binary trust. Section 4 presents the argumentation system as well as its properties. Section 5 presents the graded version of trust as proposed in Demolombe (2009) and Demolombe and Liau (2001), and an argumentation system that can take into account varying degrees of trust and beliefs. Section 6 compares our model with existing works on argumentation-based trust. The last section concludes.

Logical formalism

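This section introduces the logical framework (i.e. the logical language and the axiomatics of the logic) that will be used for representing and reasoning about agents' beliefs. The language is built from the following sets:

ATOM: a set of atomic propositions, denoted by p, q, …

AGENT: a non-empty set of agents, denoted by i, j, …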

The language Sometimes we abuse notation and write

The axiomatics of the logic is that of a propositional multimodal logic (Chellas, 1980): in addition to the axiomatics of classical propositional calculus, we have the following axiom schemas and inference rules.

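(K) $Bel_i(\varphi \rightarrow \psi) \rightarrow (Bel_i\varphi \rightarrow Bel_i\psi)$

(D) $\neg(Bel_i\varphi \wedge Bel_i\neg\varphi)$

(Nec) If $\vdash \varphi$, then $\vdash Bel_i\varphi$.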

Roughly speaking, the intuitive meaning of (K) is that agent i can apply the modus ponens rule to derive consequences, (D) means that i’s beliefs are consistent, and (Nec) means that i believes all logical truths.

The modal operator If

The intuitive meaning of (EQV) is that informing actions about two logically equivalent formulas have the same effects. For instance, informing someone that John is at home and John is working has the same effects as informing them that John is working and John is at home. The meaning of (CONJ) is that informing someone that John is at home and informing them that John is working has the same effects as informing them that John is at home and working. The justification of this axiom schema is that informing actions are considered at an abstract level: two distinct concrete actions may be considered as the ‘same’ action if they produce the same effect on the receiver's beliefs. The axiom schemas (OBS) and (OBS’) state that if an agent j informs (respectively, does not inform) an agent i about φ, then i is aware of this fact. This amounts to assuming that communication channels are perfect.

According to Chellas’ terminology, modalities such as $Bel_i$ obey a normal system KD and modalities of the kind

In the sequel, the symbol ⊢ refers to the consequence operator that is based on the previous axiom schemas. Besides, a belief base is a subset of

Throughout this section, we consider two interacting agents i and j and assume that i receives a piece of information

It is worth mentioning that the fact that an agent i believes in the sincerity of another agent j regarding proposition φ does not mean that i believes φ: the claim may be false without j being aware of it. A stronger version of sincerity is the property of validity.

Trust in validity: validity is the relationship between what the trustee says and what is true. For instance, the fact that Romeo trusts Juliet in her validity about the fact that Juliet loves Romeo means that Romeo believes that if Juliet says to Romeo that she loves him, then it is true that she loves him. The general definition is: the truster (i) believes that if he or she is informed by the trustee (j) about some proposition, then this proposition is true.

Trust in completeness: completeness is the relationship between what is true and what the trustee says; it is the dual of validity. For instance the fact that Romeo trusts Juliet in her completeness about the fact that Juliet loves Romeo means that Romeo believes that if it is true that Juliet loves him, then Juliet will tell Romeo that she loves him. The general definition is: the truster believes that if some proposition is true, then the truster is informed by the trustee about this proposition.

Trust in cooperativity: cooperativity is the relationship between what the trustee believes and what he says; it is the dual of sincerity. For instance, the fact that Juliet trusts Romeo in his cooperativity about the fact Juliet is beautiful means that Juliet believes that if Romeo believes that she is beautiful, then Romeo says to her that she is beautiful. The general definition is: the truster believes that if the trustee believes that some proposition is true, then the truster is informed by the trustee about this proposition.

Trust in competence: competence is the relationship between what the trustee believes and what is true. For instance, the fact that Juliet trusts Romeo in his competence about the fact that the door of her house is closed means that Juliet believes that if Romeo believes that the door of her house is closed, then it is true that the door is closed. The general definition is: the truster believes that if the trustee believes that some proposition is true, then this proposition is true.

Trust in vigilance: vigilance is the relationship between what is true and what the trustee believes; it is the dual of competence. For instance, the fact that Juliet trusts Romeo in his vigilance about the fact that the door of her house is closed means that Juliet believes that if it is true that the door of her house is closed, then Romeo believes that the door of her house is closed. The general definition is: the truster believes that if some proposition is true, then the trustee believes that this proposition is true.
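The six definitions share one shape: the truster believes an implication between two of three facts, namely that j has informed i about φ, that j believes φ, and that φ holds. The Python sketch below builds these formulas as strings. The rendering `Bel_x(...)` and `Inf_j,i(...)` is a hypothetical notation chosen for illustration, not the paper's syntax, and the sincerity schema (what j says implies what j believes) is assumed from its stated duality with cooperativity.

```python
def bel(agent: str, phi: str) -> str:
    """Hypothetical rendering of 'agent believes phi'."""
    return f"Bel_{agent}({phi})"


def inf(sender: str, receiver: str, phi: str) -> str:
    """Hypothetical rendering of 'sender has informed receiver about phi'."""
    return f"Inf_{sender},{receiver}({phi})"


def trust(kind: str, i: str, j: str, phi: str) -> str:
    """Formula stating that truster i trusts trustee j in property `kind` about phi."""
    said = inf(j, i, phi)     # j has informed i about phi
    believed = bel(j, phi)    # j believes phi
    # Each property is an implication (antecedent, consequent) believed by i.
    schemas = {
        "validity": (said, phi),
        "completeness": (phi, said),
        "sincerity": (said, believed),
        "cooperativity": (believed, said),
        "competence": (believed, phi),
        "vigilance": (phi, believed),
    }
    antecedent, consequent = schemas[kind]
    return bel(i, f"{antecedent} -> {consequent}")


print(trust("validity", "romeo", "juliet", "loves"))
# Bel_romeo(Inf_juliet,romeo(loves) -> loves)
```

Swapping antecedent and consequent turns each property into its dual: validity and completeness, sincerity and cooperativity, competence and vigilance.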

In Parsons et al. (2012), other properties, called argument schemes, are discussed, such as trust in an agent's reputation or in an agent's character. For the purpose of this paper, we focus only on the six properties above and propose a formal framework for reasoning with and about them.

It is worth mentioning that the presented definitions of trust are specific to particular propositions. For instance, a patient (p) may trust in the competence of his or her doctor (d) regarding diagnosis g1. This is represented by the formula

As said before, completeness is the dual of validity, cooperativity is the dual of sincerity and vigilance is the dual of competence (Figure 1). The dual properties play a significant role. Consider the case where the trustee is a guard in charge of informing the people living in a building if the elevator fails. If these people trust the guard's completeness, they infer that the elevator is working from the fact that they have not received a warning from the guard.
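The elevator inference is contraposition. Treating "the elevator fails" and "the guard has warned the residents" as propositional atoms (illustrative names, not the paper's), the sketch below enumerates truth assignments and keeps those compatible with trust in the guard's completeness and with the absence of a warning, which the residents are aware of by (OBS’). Only assignments in which the elevator does not fail survive. The modal layer is left implicit, on the assumption that (K) lets i draw the same conclusion inside its beliefs.

```python
from itertools import product


def implies(a: bool, b: bool) -> bool:
    return (not a) or b


# Propositional core of the example (atom names are illustrative):
#   fails  - the elevator fails
#   warned - the guard has informed the residents that the elevator fails
def completeness(fails: bool, warned: bool) -> bool:
    return implies(fails, warned)  # trust in the guard's completeness


def no_warning(fails: bool, warned: bool) -> bool:
    return not warned              # the residents know no warning was sent


# Keep only the assignments compatible with both beliefs.
models = [
    {"fails": f, "warned": w}
    for f, w in product([False, True], repeat=2)
    if completeness(f, w) and no_warning(f, w)
]
print(models)  # [{'fails': False, 'warned': False}]
```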

Relationships between believing, informing and truth.

It is also easy to show that the six properties are not independent. Indeed, trust in validity follows from trust in sincerity and trust in competence. Similarly, trust in completeness follows from trust in vigilance and trust in cooperativity. In formal terms we have:
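Both dependencies reduce to chaining implications: sincerity (said implies believed) and competence (believed implies true) give validity (said implies true), and symmetrically for completeness. The sketch below checks this by truth table, treating "j informed i about φ", "j believes φ" and "φ holds" as independent atoms under i's belief. This is a propositional approximation (an assumption of the sketch); for the positive cases it is justified because (K) and (Nec) carry any propositional entailment inside Bel_i.

```python
from itertools import product


def implies(a: bool, b: bool) -> bool:
    return (not a) or b


# Propositional core of each property over three atoms:
#   said = j informed i about phi, believed = j believes phi, true = phi holds
PROPERTIES = {
    "validity": lambda said, believed, true: implies(said, true),
    "completeness": lambda said, believed, true: implies(true, said),
    "sincerity": lambda said, believed, true: implies(said, believed),
    "cooperativity": lambda said, believed, true: implies(believed, said),
    "competence": lambda said, believed, true: implies(believed, true),
    "vigilance": lambda said, believed, true: implies(true, believed),
}


def entails(premises: list[str], conclusion: str) -> bool:
    """Truth-table check that the premises propositionally entail the conclusion."""
    for values in product([False, True], repeat=3):
        if all(PROPERTIES[p](*values) for p in premises) and not PROPERTIES[conclusion](*values):
            return False
    return True


print(entails(["sincerity", "competence"], "validity"))        # True
print(entails(["vigilance", "cooperativity"], "completeness"))  # True
print(entails(["validity"], "sincerity"))                       # False
```

The last line shows, at the propositional level, that the converse fails: j may report a true φ that j does not believe.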

The effects of informing actions depending on the different kinds of trust are summarised below:

Property (E2) (resp. (E4)) shows sufficient conditions about trust that guarantee that performing (resp. not performing) the action

The effects of informing actions can be derived from the different kinds of assumptions about the trust relationships between agents. For instance, if the truster i trusts j’s sincerity about the proposition φ and j informs i about φ, the truster can infer that the trustee believes what s/he has transmitted to him or her (i). If, in addition, the truster trusts j’s competence (i.e. the formula

Argumentation is a reasoning process in which arguments are built and evaluated in order to increase or decrease the acceptability of a given standpoint. The latter may be a belief, an action, a goal, etc. Over the last 20 years, argumentation has become a key topic in artificial intelligence. In essence, argumentation can be seen as a particularly useful and intuitive paradigm for non-monotonic reasoning. Its advantage is that the reasoning process is composed of modular and quite intuitive steps, and thus avoids the monolithic approach of many traditional logics for defeasible reasoning. An argumentation process starts with the construction of a set of arguments from a given knowledge base. As some of these arguments may attack each other, one needs to apply a criterion for determining the sets of arguments that can be regarded as acceptable: the so-called extensions.

In what follows, we propose an argumentation system for reasoning about the different kinds of beliefs an agent i may have, in particular beliefs about trust in information sources. The system instantiates the abstract framework of Dung (1995) and uses one of its semantics in order to evaluate arguments. Before presenting the system, we start by recalling briefly Dung's framework and then show how arguments in favour of beliefs can be built and how these arguments may interact with each other.

Dung's abstract argumentation framework

The most abstract argumentation framework in the literature was proposed by Dung (1995). It consists of a set of arguments and a binary relation expressing attacks between the arguments. Both notions (i.e. arguments and attacks) are abstract entities and thus their origin and structure are left unspecified.

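An argumentation framework is a pair $\langle \mathcal{A}, \mathcal{R} \rangle$, where $\mathcal{A}$ is a set of arguments and $\mathcal{R} \subseteq \mathcal{A} \times \mathcal{A}$ is an attack relation.

A pair $(a, b) \in \mathcal{R}$ means that a attacks b.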

An argumentation framework

Let

It is worth recalling that stable extensions are maximal (for set inclusion) non-conflicting sets of arguments.

Let us consider the argumentation framework

This framework has five maximal (for set inclusion) non-conflicting sets of arguments:

It has one stable extension

An argumentation framework may be infinite, i.e. its set of arguments may be infinite. Consequently, it may have an infinite number of extensions (under a given semantics).
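For finite frameworks, stable extensions can be found by brute force: enumerate every subset of arguments and keep those that are conflict-free and attack every argument outside them. The Python sketch below does exactly that on a hypothetical framework. It is exponential in the number of arguments and is meant only to make the definition concrete, not to serve as an efficient solver.

```python
from itertools import combinations


def stable_extensions(args, attacks):
    """Brute-force stable extensions of a finite framework (args, attacks).

    A set E is stable if no argument in E attacks an argument in E
    (conflict-free) and every argument outside E is attacked by some member of E.
    """
    args = list(args)
    extensions = []
    for size in range(len(args) + 1):
        for subset in combinations(args, size):
            ext = set(subset)
            conflict_free = not any((a, b) in attacks for a in ext for b in ext)
            attacks_outside = all(
                any((a, b) in attacks for a in ext) for b in args if b not in ext
            )
            if conflict_free and attacks_outside:
                extensions.append(ext)
    return extensions


# Hypothetical framework: a attacks b, b attacks c, c attacks d.
chain = stable_extensions("abcd", {("a", "b"), ("b", "c"), ("c", "d")})
print([sorted(e) for e in chain])  # [['a', 'c']]
```

An odd cycle such as a → b → c → a has no stable extension, and the function returns an empty list in that case; so does a single self-attacking argument.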

This section introduces an argumentation system for reasoning about the different kinds of beliefs an agent i may have. As already said, argumentation is an alternative approach for reasoning with inconsistent information. It follows three main steps: (i) constructing arguments and counterarguments from a logical belief base, (ii) defining the status of each argument, and (iii) specifying the conclusions to be drawn from the base. In what follows, we focus on a given agent i and propose a model for reasoning about his beliefs. The model instantiates Dung's framework by defining all the above items.

Starting from the logic

The system is a logical instantiation of the abstract framework proposed by Dung (1995) in his seminal paper. It consists thus of a set of arguments, an attack relation between the arguments and a semantics for evaluating the arguments. The arguments are built from the base

An argument built from a belief base is a pair (H, h) such that H is a subset of the base, H is consistent, and H ⊢ h.

H is called the support of the argument and h its conclusion.
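To make the notion concrete, the sketch below enumerates arguments over a purely propositional base; the modal operators are omitted, which is a simplification. Consistency and entailment are checked by truth table. The sketch also keeps only minimal supports, a condition common in the argumentation literature but added here as an assumption. The base {p, p → q, ¬q} is hypothetical and inconsistent, yet it still yields consistent arguments for q and for ¬p.

```python
from itertools import combinations, product
from typing import Callable

Assignment = dict[str, bool]
Formula = tuple[str, Callable[[Assignment], bool]]  # (label, truth function)

ATOMS = ["p", "q"]


def assignments():
    """All truth assignments over ATOMS."""
    for values in product([False, True], repeat=len(ATOMS)):
        yield dict(zip(ATOMS, values))


def consistent(formulas) -> bool:
    return any(all(f(v) for _, f in formulas) for v in assignments())


def entails(formulas, goal) -> bool:
    return all(goal(v) for v in assignments() if all(f(v) for _, f in formulas))


def arguments(base: list[Formula], goal) -> list[list[str]]:
    """Supports H of `goal`: H is a subset of base, consistent, entails goal, and minimal."""
    found = []
    for size in range(len(base) + 1):  # smaller subsets first
        for H in combinations(base, size):
            if consistent(H) and entails(H, goal):
                if not any(set(prev) <= set(H) for prev in found):  # minimality
                    found.append(H)
    return [[label for label, _ in H] for H in found]


# Hypothetical inconsistent base {p, p -> q, ~q}.
base: list[Formula] = [
    ("p", lambda v: v["p"]),
    ("p->q", lambda v: (not v["p"]) or v["q"]),
    ("~q", lambda v: not v["q"]),
]
print(arguments(base, lambda v: v["q"]))      # [['p', 'p->q']]
print(arguments(base, lambda v: not v["p"]))  # [['p->q', '~q']]
```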

Let us illustrate this notion of argument with an example.

Assume the following belief base of agent i:

The previous arguments support various beliefs of agent i. Some of them, like (4) and (5), make use of beliefs about trust in information sources. In other words, they rely on the agent's trust in order to make inferences. Such arguments are very useful in dialogue systems, where agents may receive new information from other entities and must decide whether to accept it.

Arguments may also support the six forms of trust discussed in Section 3. They show whether agent i should or should not trust another agent with respect to one of the properties (validity, completeness, sincerity, cooperativity, competence and vigilance). Let us consider the following example.

Assume the following base:

Note that the argument (3) is in favour of trusting in the sincerity of agent j regarding proposition φ.

The second component of an argumentation framework is its attack relation, which expresses conflicts that may arise between arguments. In the argumentation literature, several relations have been proposed (see Gorogiannis and Hunter (2011) for a summary of relations proposed for propositional frameworks). Some of them, like the well-known rebutting, are symmetric. However, Amgoud and Besnard (2009) showed that any argumentation framework which is grounded on a Tarskian logic (Tarski, 1956) and uses a symmetric attack relation may violate the rationality postulates proposed in Caminada and Amgoud (2007), namely the one on consistency. Indeed, such a framework may have an extension which supports inconsistent conclusions. Since modal logics are particular cases of Tarskian logics, the argumentation system we propose here would suffer from the same problem, as shown in the following example.

Let us consider the following belief base:

Let

In what follows, we therefore avoid symmetric relations and discuss various forms of attack. The first one is the so-called assumption-attack proposed in Elvang-Gøransson, Fox, and Krause (1993). It consists of weakening an argument by undermining one of its premises (i.e. an element of its support).

Let

Let us illustrate this relation on the following example.

Let us consider the following base:

It is worth mentioning that this attack relation concerns all types of arguments that may be built from a belief base (i.e. arguments supporting ordinary beliefs and those supporting trust in information sources). The following definition introduces another way of attacking arguments in favour of trust in an agent's sincerity. The basic idea is to exhibit a case where the trusted agent sent a piece of information that s/he does not believe. In other words, the attack consists of proving that the trustee may lie.

Let

An argument in favour of trust in validity may also be undermined by an argument whose conclusion is a formula which is sent by the trusted agent and which is invalid (i.e. it does not hold).

Let

Similarly, an argument in favour of trust in completeness may be attacked. Recall that such an argument provides a reason for believing that if a given formula holds, then the truster agent will be informed about it by the trustee. An attacker highlights a formula which holds and for which the trustee does not send any message.

Let

Recall that trust in the cooperativity of an agent means that if he believes a statement, then he will inform the truster about it. An attack against an argument supporting such information consists of presenting a case where the trustee was not cooperative.

Let

An argument in favour of trust in the competence of an agent may be attacked by an argument supporting a statement that is believed by this agent but which is not true.

Let

Trust in an agent's vigilance may be attacked by exhibiting a claim which holds but is ignored by the agent.

Let

It is worth mentioning that the assumption-attack relation is conflict-dependent, i.e. if (H, h) attacks (H′, h′), then H ∪ H′ is necessarily inconsistent. This is not the case for the six other relations, as shown in the following example.
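The six trust-specific attacks described above follow one pattern: each trust property is an implication believed by the truster, and the attacker's conclusion exhibits a counterexample in which the antecedent holds and the consequent fails (a lie for sincerity, a false report for validity, an unreported fact for completeness, and so on). The sketch below encodes that pattern with hypothetical fact labels. It is not the paper's formal definitions, which are stated over formulas of the logic, but a compact way to see which property a given counterexample undermines.

```python
# Hypothetical fact labels describing what an attacker's conclusion establishes:
#   "said": j informed i about phi, "believed": j believes phi, "true": phi holds.
PATTERNS = {
    # property: (antecedent, consequent) of the implication believed by the truster
    "validity": ("said", "true"),
    "completeness": ("true", "said"),
    "sincerity": ("said", "believed"),
    "cooperativity": ("believed", "said"),
    "competence": ("believed", "true"),
    "vigilance": ("true", "believed"),
}


def counterexample(kind: str) -> dict[str, bool]:
    """Facts that refute the property: antecedent holds, consequent fails."""
    antecedent, consequent = PATTERNS[kind]
    return {antecedent: True, consequent: False}


def undermines(kind: str, facts: dict[str, bool]) -> bool:
    """True if `facts` contain a counterexample to trust in `kind`."""
    return all(facts.get(label) == value for label, value in counterexample(kind).items())


lie = {"said": True, "believed": False}  # j said phi without believing it
print([k for k in PATTERNS if undermines(k, lie)])  # ['sincerity']
```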

Let us consider the following base:

The seven forms of attacks are captured by a binary relation on the set of arguments which is denoted by ℜ.

Let (H, h) and (H′, h′) be two arguments built from the belief base. (H, h) attacks (H′, h′), denoted ((H, h), (H′, h′)) ∈ ℜ, iff (H, h) assumption-attacks (H′, h′), or (H, h) sinc-attacks (H′, h′), or (H, h) val-attacks (H′, h′), or (H, h) com-attacks (H′, h′), or (H, h) coop-attacks (H′, h′), or (H, h) comp-attacks (H′, h′), or (H, h) vigi-attacks (H′, h′).
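Since ℜ is simply the union of the seven relations, it can be assembled from one predicate per attack type. The sketch below shows this composition with only a simplified assumption-attack, in which the attacker's conclusion is the syntactic negation of one of the target's premises (an assumption made to keep the example small); the six trust-specific predicates would be added to the same list. On the hypothetical pair of arguments used here, the resulting relation is not symmetric.

```python
from typing import Callable

# Hypothetical argument encoding: (support, conclusion), with the support a
# tuple of formula strings and "~" marking negation.
Argument = tuple[tuple[str, ...], str]
AttackRel = Callable[[Argument, Argument], bool]


def negate(formula: str) -> str:
    return formula[1:] if formula.startswith("~") else "~" + formula


def assumption_attack(a: Argument, b: Argument) -> bool:
    """Simplified assumption-attack: a's conclusion negates a premise of b."""
    return negate(a[1]) in b[0]


def union_attack(args: list[Argument], relations: list[AttackRel]) -> set:
    """The relation R: a attacks b iff at least one given attack relation holds."""
    return {(a, b) for a in args for b in args if any(rel(a, b) for rel in relations)}


def is_symmetric(rel: set) -> bool:
    return all((b, a) in rel for a, b in rel)


a: Argument = (("s", "s->~r"), "~r")
b: Argument = (("r", "r->t"), "t")
R = union_attack([a, b], [assumption_attack])
print(R == {(a, b)}, is_symmetric(R))  # True False
```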

The following example shows that the attack relation ℜ is not symmetric.

It is easy to check that there is only one attack between arguments of

Next we show that the relation ℜ may admit self-attacking arguments.

Let us consider the following base:

An argumentation system for reasoning about the beliefs of an agent is defined as follows.

An argumentation system built over a belief base

Since arguments may be conflicting, it is important to define the acceptable ones. For that purpose, we use the stable semantics proposed in Dung (1995). This semantics makes it possible to partition the powerset of the set of arguments into two sets: stable extensions and non-extensions. The extensions are used in order to define the inferences to be drawn from the belief base

Let

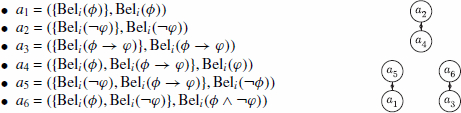

Let us consider the belief base

The following figure summarises the attacks between the eight arguments:

It can be checked that the argumentation system

It is worth noticing that the argument a4 belongs to the three extensions. Thus,

Cont

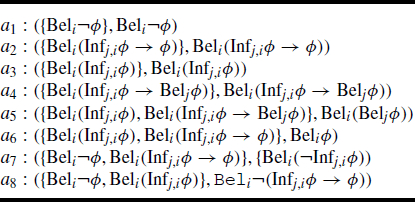

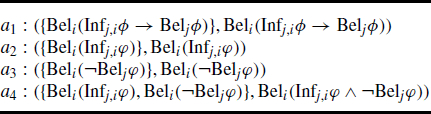

The table below shows some arguments that may be built from

The following figure summarises the attacks between the four arguments:

It can be checked that the argumentation system

Properties of the system

Recall that the belief base of an agent may be inconsistent. We show that the set of inferences drawn from that base using the argumentation system is nevertheless consistent. Before giving the formal result, we start with another property, which shows that every stable extension of the system supports a consistent set of beliefs. Note that this property corresponds exactly to the rationality postulate on consistency that was proposed in Caminada and Amgoud (2007) for rule-based logics and later generalised in Amgoud (2013) for Tarskian logics.
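Assuming the common sceptical reading of stable semantics, in which a formula is inferred when every stable extension contains an argument concluding it, the inference step can be sketched as an intersection of per-extension conclusions. The extensions and conclusions below are hypothetical. Note that q and ¬q are each supported in one extension but neither is inferred, which illustrates how the output can stay consistent even when the base is not.

```python
def sceptical_conclusions(extensions, conclusion_of):
    """Formulas concluded by some argument in every stable extension.

    Returns the empty set when there is no stable extension (a conservative
    convention chosen for this sketch).
    """
    if not extensions:
        return set()
    per_extension = [{conclusion_of[arg] for arg in ext} for ext in extensions]
    return set.intersection(*per_extension)


# Hypothetical extensions and argument conclusions.
conclusion_of = {"a1": "p", "a2": "q", "a3": "~q", "a4": "r"}
extensions = [{"a1", "a2", "a4"}, {"a1", "a3", "a4"}]
print(sorted(sceptical_conclusions(extensions, conclusion_of)))  # ['p', 'r']
```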

Let The set The set

Let

It is worth mentioning that the set of formulas used in the arguments of a stable extension is a consistent subbase of the belief base

From this property of the system, it follows that the set

Let

From Definition 4.20, it follows that

The next property concerns another rationality postulate in Amgoud (2013) which claims that the extensions should be closed under sub-arguments. The idea is that accepting an argument in a given extension implies accepting all its sub-parts in that extension.

Let

Let

The next property concerns the third rationality postulate in Amgoud (2013) which claims that the extensions should be closed under the consequence operator, ⊢ in our case. This property guarantees that the system does not forget intuitive conclusions. Before presenting the formal result, let us first introduce a useful notation.

For

Let

Let

We show next that the set

Let

Let

Assume now that

This means, for instance, that if

In most situations, it is an over-simplification to say that an agent i trusts (or does not trust) another agent j. Rather, in informal terms, we may say that i has limited trust in j, or that i’s trust in j is high. We are thus faced with the question: ‘what is the meaning of graded trust?’.

Demolombe (2009) proposed two different answers to this question. The first answer, when trust is represented by a formula of the form

The second answer by Demolombe (2009) is: ‘i believes that the set of $\varphi_j$ worlds is partially included in the set of $\psi_j$ worlds’. In such a case, the fact that i’s trust in j’s sincerity is high can be interpreted as: i believes that in almost all circumstances, if j informs i about p, then j believes p. According to this interpretation, the trust level refers to the regularity level of the relationship between the fact that $\varphi_j$ is true and the fact that $\psi_j$ is true. Graded trust is thus formally represented by the formula:

For the purpose of our proposal, graded trust may refer to both kinds of levels (uncertainty and regularity). It is thus represented by formulas of the form:

Extended logic

In what follows, we extend the logical language of Section 2 for reasoning about graded trust. Let us first recall the intuitive meaning of the new operators:

□φ: φ holds in all the situations.

The operator □ is introduced for formal purposes that are explained below. We also assume two additional sets that contain levels of beliefs and regularity:

GRB: finite set of belief levels.

GRR: finite set of regularity levels.

Notice that no particular assumption is made on the nature of the elements of these sets. However, we assume that they are both equipped with a preordering ≤ (i.e. a reflexive and transitive binary relation). For x, y ∈ GRB (respectively, x, y ∈ GRR), x ≤ y means that y is at least as strong as x. The strict relation associated with ≤ is denoted by < and defined as follows: x < y iff x ≤ y and not y ≤ x.

We use the same notation for the minimal element and for the maximal element in GRB and in GRR, although they are not necessarily identical. The context allows us to avoid ambiguities.

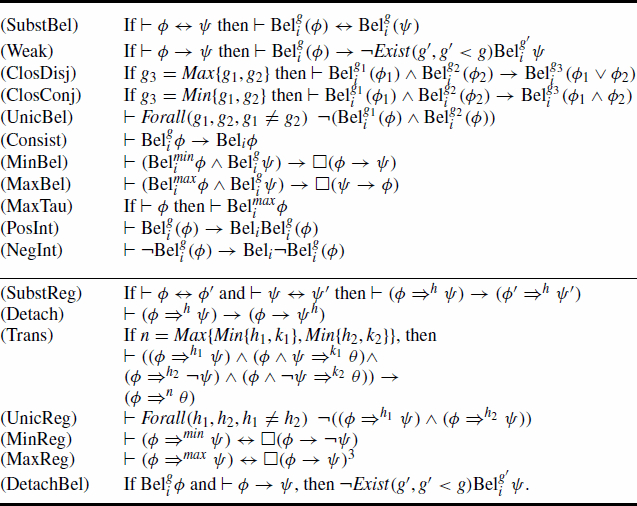

The logic associated with the extended language is based on the following inference rules and axiom schemas.3

Notice that

The first rule (SubstBel), states that in

In the sequel,

There is a clear consensus in the literature that arguments do not necessarily have the same strength. It may be the case that an argument relies on certain information while another argument is built on less certain information, or that an argument promotes an important value while another promotes a weaker one. In both cases, the former argument is clearly stronger than the latter. These differences in arguments’ strengths make it possible to compare them. Consequently, several preference relations between arguments have been defined in the literature (Amgoud, 1999; Benferhat, Dubois, & Prade, 1993; Cayrol, Royer, & Saurel, 1993; Simari & Loui, 1992). There is also a consensus on the fact that preferences should be taken into account in the evaluation of arguments (see Amgoud & Cayrol, 2002; Bench-Capon, 2003; Modgil, 2009; Prakken & Sartor, 1997; Simari & Loui, 1992).

In Amgoud and Cayrol (2002), a first abstract preference-based argumentation framework was proposed. It takes as input a set of arguments, an attack relation, and a preference relation ⪰ between arguments. For two arguments a and b, a ⪰ b means that the argument a is at least as strong as b. The relation ⪰ is abstract and can be instantiated in different ways. However, it is assumed to be a (total or partial) preordering (i.e. reflexive and transitive). The strict version associated with ⪰ is denoted by ≻ and is defined as follows: a ≻ b iff a ⪰ b and not b ⪰ a. Whatever the source of this preference relation, the idea is to ignore an attack if the attacked argument is stronger than its attacker. Dung's semantics are then applied to the remaining attacks. This approach is particularly appropriate when the attack relation is symmetric. However, when the attack relation is not symmetric, like the relation given in Definition 4.17, the extensions of the argumentation framework may be conflicting, thus leading to counter-intuitive results. Consequently, Amgoud and Vesic (2009) proposed a new approach which consists of inverting the direction of an attack whenever the attacker is weaker than its target, as follows:

Let

Dung's semantics are then applied to the new framework
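The repair step attributed above to Amgoud and Vesic (2009) can be sketched as a function that reverses each attack whose target is strictly preferred to its attacker and keeps every other attack unchanged. In this sketch, preference is supplied as a predicate, and the integer strengths in the example are hypothetical. Dung's semantics would then be applied to the repaired relation.

```python
from typing import Callable, Hashable

Attack = tuple[Hashable, Hashable]


def repair_attacks(attacks: set[Attack],
                   strictly_preferred: Callable[[Hashable, Hashable], bool]) -> set[Attack]:
    """Reverse each attack (a, b) whose target b is strictly preferred to a."""
    repaired = set()
    for a, b in attacks:
        if strictly_preferred(b, a):
            repaired.add((b, a))  # attacker is weaker: invert the attack
        else:
            repaired.add((a, b))  # otherwise keep the attack unchanged
    return repaired


# Hypothetical strengths; higher means stronger.
strength = {"a": 1, "b": 3, "c": 2}


def stronger(x, y):
    return strength[x] > strength[y]


print(sorted(repair_attacks({("a", "b"), ("b", "c")}, stronger)))
# [('b', 'a'), ('b', 'c')]
```

Because only strict preference triggers an inversion, attacks between equally strong arguments are kept as they are.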

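An argument built from a belief base of the extended language is a pair (H, h) such that H is a subset of the base, H is consistent, and H ⊢* h.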

Arguments attack each other as shown in Definition 4.17, i.e. with the relation used in the binary case. However, they may have different strength levels: the strength level of an argument is the level of the weakest (i.e. least certain) formula used in its support.

Let (H, h) be an argument such that

These strengths are used in order to compare arguments. The idea is to prefer the one with the greatest strength level, i.e. the one whose support is based on more certain information.
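The weakest-link strength and the resulting preference can be sketched in a few lines. The sketch assumes that levels are integers on a total scale, whereas GRB is only required to be preordered, so `min` is a simplification. The formula labels and levels are hypothetical and only loosely inspired by the example that follows; they are not the paper's values.

```python
def level(support, certainty):
    """Strength of an argument: the level of the weakest formula in its support."""
    return min(certainty[f] for f in support)


def at_least_as_strong(a_support, b_support, certainty):
    """Preference: a is at least as strong as b if its level is not lower."""
    return level(a_support, certainty) >= level(b_support, certainty)


# Hypothetical integer levels (higher means more certain).
certainty = {
    "car_in_car_park": 3,
    "car_in_car_park -> at_office": 1,  # a weakly believed rule
    "in_meeting": 2,
    "in_meeting -> ~at_office": 3,
}
office_arg = ["car_in_car_park", "car_in_car_park -> at_office"]
meeting_arg = ["in_meeting", "in_meeting -> ~at_office"]
print(level(office_arg, certainty), level(meeting_arg, certainty))  # 1 2
print(at_least_as_strong(meeting_arg, office_arg, certainty))       # True
```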

Let

The argumentation framework

Let us consider the following base:

The intuitive justification of these belief strength levels is that agent i has observed that Luis’ car is in the car park, and i is not strongly convinced that this fact guarantees that Luis is at his office. Moreover, i has been informed that Luis is attending a meeting, and i knows that a meeting cannot happen at Luis’ office.

From

a1=(H1, h1), where H1 is

From Level(a) definition we have:

From the inference rules (SubstBel) and (Weak), we also have the argument:

a2=(H1, h2), where

We also have the arguments:

a3=(H3, h3), where H3 is

a4=(H3, h4), where

In the logic presented in Section 5 graded beliefs are assumed to be standard beliefs (see schema (Consist)) and standard beliefs must be consistent in the sense of schema (D). According to this logic arguments a2 and a4 lead to an inconsistency. This kind of inconsistency can be removed if it is accepted that

In the same context we could have the following knowledge base

In

a5=(H5, h5), where H5 is

We may have a more complex knowledge base

Now, we have the argument a6:

a6=(H6, h6) where H6 is

Since the consequence h6 of a6 is

Trust modelling has become an active research topic over the last 10 years, and more than 20 definitions have been proposed for this complex concept. Among others, the following one was proposed by Falcone and Castelfranchi (2001):

Trust is a mental state, a complex attitude of an agent i towards another agent j about the behaviour/action a relevant for the goal g.

Gambetta (1990) defines trust as a subjective probability with which an agent i expects another agent j to perform a given action on which i's welfare depends. In Liau (2003), trust is represented in terms of an agent's beliefs, and the author focuses on trust in validity and its impact on the assimilation of information received from the trustee. The basic idea is the following: if agent i believes that agent j has told him or her that φ is true, and i trusts the judgment of j on φ, then i will also believe φ. Our formalism follows this line of research and considers six forms of trust, including validity, sincerity, and competence. It shows how to build arguments in favour of (respectively, against) each form of trust, and how to use beliefs about the trustworthiness of other agents in order to infer new beliefs.

Some attempts at combining argumentation theory and trust have been made in the literature. Based on the representation proposed in Liau (2003), Villata, Boella, Gabbay, and van der Torre (2011) presented an instantiation of the meta-argumentation model of Boella, Gabbay, van der Torre, and Villata (2009) for reasoning about trust in validity. The technique of meta-argumentation applies Dung's theory of abstract argumentation to itself. The instantiation contains arguments built from beliefs, as well as meta-arguments. An example of a meta-argument is Trust_i, meaning that ‘agent i is trustworthy’. Our formalism is more general since it reasons about more forms of trust. Moreover, it is much simpler since it directly instantiates Dung's framework with a clear and intuitive logical language in which various kinds of beliefs are represented.

An argumentation-based model for reasoning about inconsistent and uncertain information was proposed in Tang, Cai, McBurney, Sklar, and Parsons (2012). It is an instantiation of the preference-based argumentation framework proposed in Amgoud and Cayrol (2002), where arguments do not necessarily have the same strength and are thus compared using a binary relation expressing preferences. The arguments are built from a base containing beliefs pervaded with degrees of certainty. These degrees are then combined to compute the certainty levels of the supports of arguments, which in turn are used for comparing arguments. The distinctive feature of the model is its use of trusted information in order to assign degrees to inferred beliefs. Indeed, the model takes as input a simple network whose nodes are agents and whose edges represent trust relationships between them. For instance, an arc from agent i to agent j means that agent i trusts agent j. Weights are associated with edges and express degrees of trust. Our formalism is based on a richer model of trust: it distinguishes between six forms of trust instead of the absolute notion of trust used in Tang et al. (2012). Moreover, our formalism not only uses trusted information in order to infer new beliefs, but also reasons about trust itself and infers beliefs about trust.

More recently, in Parsons et al. (2012), the authors identified 10 sources of trust and presented them in terms of argument schemes, i.e. syllogisms justifying trustworthiness in an agent. Examples of sources are authority, reputation, and expert opinion (which corresponds to competence in our formalism). Critical questions showing how each argument scheme can be attacked were also proposed. While some of the proposed sources make sense, others are debatable. For instance, trust because of pragmatism says that an agent i may decide to trust another agent j because it serves i’s interests to do so. This amounts to a form of wishful thinking, which is not compatible with the view that trust is a belief.

Another interesting contribution on the combination of argumentation theory and trust was made in Stranders, de Weerdt, and Witteveen (2007). The focus is on computing to what extent agent i trusts agent j, using both statistical data and arguments. The model is an instantiation of the abstract decision model proposed in Amgoud and Prade (2009). Our formalism does not use statistical data. Moreover, it is an inference model and not a decision-making one.

Finally, in Matt et al. (2010), the authors proposed a model for evaluating the trust an agent may have in another. For that purpose, arguments in favour of trust are built. These arguments are mainly grounded on statistical data, which makes this approach different from the one followed in the present paper.

Conclusion

This paper tackled the important questions of formalising and reasoning about trust in information sources. It proposed a formal model based on the construction and evaluation of arguments. The model presents several advantages. First, it is grounded on an accurate and simple logical language for representing trust in information sources. Indeed, modal logic is used for distinguishing between what is true (respectively, false) and what is believed by an agent. Second, unlike existing works that define absolute trust in an agent, our model uses a fine-grained notion of trust. It distinguishes between six forms of trust, including trust in the sincerity of an agent and trust in his competence. Third, our model plays two distinct roles: (i) it shows how to take trust in information sources into account in order to deal with and reason about information coming from those sources, and (ii) it determines whether or not to trust a given source of information on the basis of available beliefs. This makes our model a good candidate for dialogue systems.

There are a number of ways to extend this work. One future direction is to investigate the properties of the model under other semantics, in particular preferred semantics. We have shown that the attack relations we defined are unusual in that they are not grounded on inconsistency. Consequently, even though arguments are consistent, self-attacking arguments may exist, which may prevent the existence of stable extensions.
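Why self-attacking arguments can rule out stable extensions can be checked on a toy abstract framework. The brute-force enumeration below is a sketch for small examples only (it is exponential in the number of arguments), works on abstract arguments rather than the paper's attack relations, and its function name `stable_extensions` is hypothetical. A self-attacking argument that no other argument attacks can neither belong to a conflict-free set nor be attacked from outside, so no stable extension exists; if another argument attacks it, a stable extension may still exist.

```python
from itertools import combinations
from typing import List, Set, Tuple


def stable_extensions(args: Set[str], attacks: Set[Tuple[str, str]]) -> List[Set[str]]:
    """Enumerate all S that are conflict-free and attack every argument outside S."""
    ordered = sorted(args)
    result: List[Set[str]] = []
    for k in range(len(ordered) + 1):
        for subset in combinations(ordered, k):
            s = set(subset)
            # no attack between two members of s (this also excludes self-attackers)
            conflict_free = not any((x, y) in attacks for x in s for y in s)
            # every argument outside s is attacked by some member of s
            attacks_rest = all(
                any((x, y) in attacks for x in s) for y in ordered if y not in s
            )
            if conflict_free and attacks_rest:
                result.append(s)
    return result
```

For instance, `stable_extensions({"a", "b"}, {("a", "a")})` yields no extension, whereas adding the attack `("b", "a")` yields the single stable extension `{"b"}`.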

Another interesting future direction consists of refining the logical language by considering the notion of topic. The basic idea is to represent information such as: Agent i trusts the competence of agent j in psychology but not in philosophy. Our formal definitions can be extended in this direction thanks to the logic of aboutness developed by Demolombe and Jones (1995). The logical language of this logic contains a predicate A(t, φ) whose intuitive meaning is that formula φ is about topic t. This predicate can be used, for instance, to express the fact that i trusts the validity of j for any sentence about a given topic t: