Abstract

We present word frequencies based on subtitles of British television programmes. We show that the SUBTLEX-UK word frequencies explain more of the variance in the lexical decision times of the British Lexicon Project than the word frequencies based on the British National Corpus and the SUBTLEX-US frequencies. In addition to the word form frequencies, we also present measures of contextual diversity part-of-speech specific word frequencies, word frequencies in children programmes, and word bigram frequencies, giving researchers of British English access to the full range of norms recently made available for other languages. Finally, we introduce a new measure of word frequency, the Zipf scale, which we hope will stop the current misunderstandings of the word frequency effect.

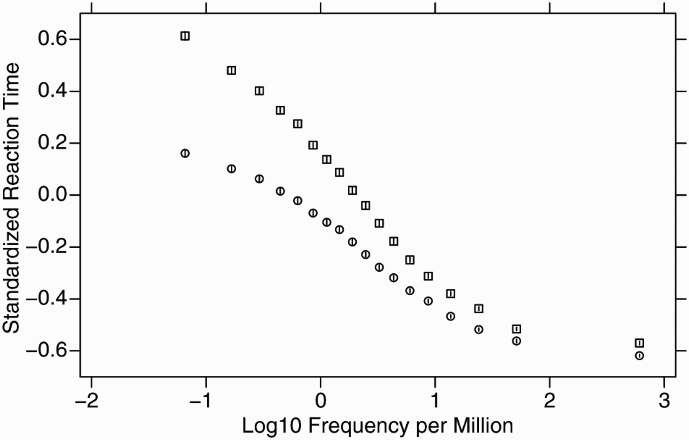

Word frequency arguably is the most important variable in word recognition research (Brysbaert, Buchmeier, et al., 2011). Words that are often encountered are processed faster than words that are rarely encountered. Figure 1 shows the course of the word frequency effect. It includes mean standardized reaction times ( The course of the word frequency effect in mean standarized reaction times from the British Lexicon Project (squares) and the English Lexicon Project (circles). The standard errors are represented by whiskers.

Research in American English and other languages has suggested that word frequencies based on film and television subtitles are better predictors of word processing times than word frequencies based on books and other written sources (Brysbaert, Buchmeier, et al., 2011; Brysbaert, Keuleers, & New, 2011; Brysbaert & New, 2009; Cai & Brysbaert, 2010; Cuetos, Glez-Nosti, Barbon, & Brysbaert, 2011; Dimitropoulou, Duñabeitia, Avilés, Corral, & Carreiras, 2010; Ferrand et al., 2010; Keuleers, Brysbaert, & New, 2010; New, Brysbaert, Veronis, & Pallier, 2007). This is an important finding, because the more variance can be explained by word frequency the fewer other variables are needed to account for word processing times. Brysbaert and Cortese (2011), for example, found that word familiarity did not explain much extra variance in lexical decision times to monosyllabic English words when the SUBTLEX-US subtitle frequency measure was used (Brysbaert & New, 2009) instead of a commonly used, outdated frequency measure based on a small corpus of written sources (Kučera & Francis, 1967).

Although word frequency estimates based on American subtitles can be used (and have been used) in British word recognition research, some precision is lost, because some words have a different spelling (e.g., labor vs. labour) or a different meaning (e.g., biscuits, pants) in the two languages. The divergences between American and British word usage imply that British researchers should limit their research to the words fully shared among the languages if they use American subtitle frequencies. Otherwise, their findings risk overestimating the impact of nonfrequency variables, such as age of acquisition, word familiarity, word length, or similarity to other words. Suboptimal frequency estimates also increase the risk of stimulus selection errors. This will be the case when words must be selected on the basis of frequency information (e.g., words having different numbers of closely resembling words, so-called orthographic neighbours, with higher frequencies) or when words of different conditions must be matched on frequency (e.g., highly emotional words vs. neutral words).

To address the limitations that researchers working with British English are confronted with, we decided to collect subtitle-based UK word frequency norms. In addition, because we were able to directly capture the subtitles from a variety of television programmes, for the first time we also collected subtitle frequencies from channels specifically aimed at children. Below we describe the collection of the data, the summary statistics calculated, and the first validation studies we ran.

Method

Corpus collection

In line with UK regulations, since 2008 the British Broadcasting Corporation (BBC) subtitles all scheduled programmes on its main channels, to help the hearing impaired. 1 These subtitles are not broadcasted through the main channel, but can be superimposed on the programme by those who wish so (e.g., by using Teletext). To have the widest possible range of language input, we collected the words and word pairs of the subtitles from nine channels (BBC1–BBC4, BBC News, BBC Parliament, BBC HD, CBeebies, and CBBC) broadcasted over a period of three years (January 2010–December 2012). Of these channels, BBC1 is the most popular and extensive (aimed at all types of audiences). The other channels have more limited hours. Of further interest is that the CBeebies channel is meant for preschool children (0–6 years) and the CBBC channel for primary school children (6–12 years). This allowed us to compile frequency norms for these groups.

Notwithstanding the provisions relating to “fair dealing” provided under Section 29 of the Copyright Designs & Patents Act 1988 (Government United Kingdom, 1988), the full textual content of the relevant subtitles was not stored or reproduced for the purpose of this research. A count of individual words and consecutive words was undertaken, obtainable from public transmissions. The method employed does not detract from or otherwise undermine the value of this evaluative work.

Text cleaning

The broadcasts were cleaned semiautomatically for doubles (programme repeats) and subtitle-related information not broadcasted to the viewers. Also the parts of the subtitles not related to the conversation were eliminated (e.g., the words “silence” or “thunder” to describe the ongoing scene; these are usually presented in upper case, or in a different font or colour in the subtitle). After the cleaning we obtained a total of 201.7 million words, coming from 45,099 different broadcasts. This is larger than the other existing subtitle corpora (Brysbaert & New, 2009; Cai & Brysbaert, 2010; Cuetos et al., 2011; Dimitropoulou et al., 2010; Keuleers et al., 2010) 2 and allowed us to calculate more precise parts-of-speech dependent frequencies and word bigrams.

Word Frequency Measures

Word frequency counts

A first decision to be made was what to do with hyphenated words. In British English, words are often hyphenated when they function as adjectives. So, a potion that saves lives can be described as “a life-saving potion”. This phrase could be counted as consisting of three word types (a, life-saving, potion) or four word types (a, life, saving, potion). The problem was particularly relevant for the BBC subtitles, because nearly one out of four word types contained a hyphen in the first analysis of the data. The vast majority of these hyphenated entries were of low frequency (fewer than 100 observations on a total of 200 million words). Because there are no a priori considerations about how to handle this finding (also because there is quite some individual variability in the use of hyphens; Kuperman & Bertram, 2013), we decided to use a pragmatic criterion and looked at which word frequencies correlated most with the 28 thousand lexical decision times of the BLP (Keuleers et al., 2012). As this clearly favoured the dehyphenated word frequencies (a difference in variance explained of 5%), we decided to dehyphenate the data before counting the words. 3

The dehyphenated subtitles resulted in a total of 332,987 different word types for a total of 201,712,237 tokens. Of these, 31,368 types were in the CBeebies subtitles with a total of 5,860,275 tokens, and 70,755 types were in the CBBC subtitles with a total of 13,644,165 tokens. Because the vast majority of words observed in a single broadcast were typos and other nonword-like structures (like “aaaarrrrgh” or “zzzzzzzzzzzz”), we decided to take out all entries observed in a single broadcast only. This reduced the number of types to 159,235 with a total token count of 201,335,638 for the complete corpus, 5,848,083 for the CBeebies subcorpus (27,236 types), and 13,612,278 for the CBBC subcorpus (58,691 types).

A standardized frequency measure: The Zipf scale

Although the frequency counts are the most versatile measure (as will become clear later, when we calculate all types of derived measures), they have one big disadvantage. The interpretation of the frequency measure depends on the size of the corpus. Therefore, authors have looked for a standardized frequency measure, an index with the same interpretation across all corpora collected.

Thus far, the most popular standardized frequency measure has been frequency per million words (fpmw). It is the frequency measure that we made available in our previous work on subtitle frequencies as well. However, we increasingly noticed that this measure leads to an incorrect understanding of the word frequency effect.

Because their corpus contained only 1 million words, the lowest value in the word frequencies made available by Kučera and Francis (1967) was 1 fpmw. This contributed to the assumption that 1 fpmw is the lowest possible frequency. Obviously, this is no longer the case for larger corpora. As it happens, about 80% of the word types in SUBTLEX-UK have a frequency of less than 1 fpmw (i.e., fewer than 200 occurrences in all broadcasts). Second, as shown in Figure 1, nearly half of the word frequency effect is situated below 1 fpmw, and there is very little difference above 10 fpmw. The frequency effect of lexical decision times between 0.1 fpmw and 1 fpmw is equal to or larger than the effect between 1 fpmw and 10 fpmw. A logarithmic transformation of frequency measures, as is routinely performed, alleviates this problem. However, the logarithms of fpmw become negative for frequencies lower than 1 (as again shown in Figure 1), which uninformed users tend to avoid. Because of these properties, fpmw as a standardized measure puts users on the wrong foot.

To make the word frequency effect easier to understand, one needs a scale with the following properties:

It should be a logarithmic scale (e.g., like the decibel scale of sound loudness). It should have relatively few points, without negative values (e.g., like a typical Likert rating scale, from 1 to 7). The middle of the scale should separate the low-frequency words from the high-frequency words. The scale should have a straightforward unit.

Once we know what the scale should look like, it is not so difficult to come up with a good transformation. In particular, when we take the log10 of the frequency per billion words (rather than fpmw), the scale fulfils the first three requirements. To meet the last requirement, we propose to call the new scale the

The Zipf scale is a logarithmic scale, like the decibel scale of sound intensity, and roughly goes from 1 (very-low-frequency words) to 6 (very-high-frequency content words) or 7 (a few function words, pronouns, and verb forms like “have”). The calculation of Zipf values is easy as it equals log10 (frequency per billion words) or log10 (frequency per million words) + 3. So, a Zipf value of 1 corresponds to words with frequencies of 1 per 100 million words, a Zipf value of 2 corresponds to words with frequencies of 1 per 10 million words, a Zipf value of 3 corresponds to words with frequencies of 1 per million words, and so on.

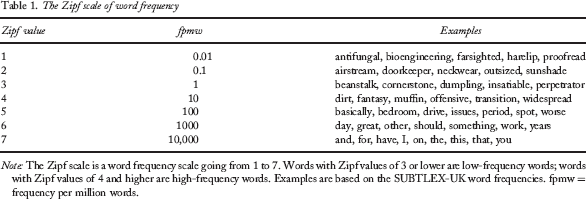

The Zipf scale of word frequency

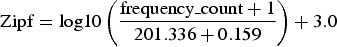

One more addition that is of interest for the Zipf scale is the possibility to include words with frequency counts of 0 (i.e., words not observed in the corpus). Although these words are less common in large corpora, they are by no means absent. Such words pose a problem for the Zipf scale as a result of the logarithmic transformation (given that the logarithm of 0 is minus infinity). In a recent review, Brysbaert and Diependaele (2013) concluded that the best way to deal with 0 word frequencies is the Laplace transformation. Rather than working with the raw frequency counts, one works with the frequency counts + 1. This means that all frequency values are (slightly) elevated. The proper application of the algorithm also implies that the theoretical size of the corpus is a little larger than the actual size, because one is leaving room for

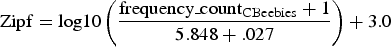

In practice, the following equation is needed to calculate the Zipf values on the basis of the frequency counts of the total corpus:

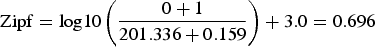

The values in the denominator are the size of the corpus in millions plus the number of word types in millions. Specifically, the Zipf value of an unobserved word type will be:

The Zipf value of a word type observed once in the complete corpus will be 0.997; that of a word observed 10 times will be 1.737, and so on.

To calculate the Zipf values for the CBeebies corpus, we have to use the following equation:

Specifically, this means that words with a 0 frequency in the CBeebies corpus get a Zipf value of 2.231; those with a 0 frequency in the CBBC corpus get a Zipf value of 1.864. The higher values for unobserved word types are due to the smaller sizes of the corpora and also mean that one should be sensible in their use. There is no point in blindly using these values for all missing words in the lists, as one assumes that the missing words are known to preschoolers (CBeebies) or primary school children (CBBC). As we see below, this may be one reason why the childhood frequencies are not correlating very well with the lexical decision times of the British Lexicon Project when calculated across all words.

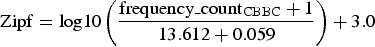

Frequencies used in two classical studies of the word frequency effect, expressed as frequency per million words and as Zipf values

Contextual diversity

Adelman, Brown, and Quesada (2006; see also Adelman & Brown, 2008; Perea, Soares, & Comesaña, 2013; Yap, Tan, Pexman, & Hargreaves, 2011) argued that not so much the frequency of occurrence of a word matters, but the number of contexts in which the word appears. Words only encountered in a small number of contexts (say, a word with a frequency of 100 occurring in one or two television episodes) will be more difficult to process than equally frequent words encountered in a variety of contexts (e.g., a word with a frequency count of 100 used in 80 different broadcasts). A good proxy for contextual diversity (CD) is the number of television programmes/films (or the percentage of programmes/films) in which the word appears. Brysbaert and New (2009) indeed observed that log(CD) explained up to 4% of variance more in lexical decision times than log(frequency). Part of the advantage was methodological, however. Two factors were involved. First, the effect of log(CD) on reaction times (RTs) is more linear than the effect of log(frequency), which becomes flat for high-frequency words, as can be seen in Figure 1. When nonlinear regression analysis was used, the difference between CD and frequency became smaller than 2%. Another part of the difference was due to the fact that some words occurred with very high frequency in a few films because they were the names of main characters (e.g., archer, bay, brown). The CD statistic is less influenced by these instances than the frequency statistic.

Still, the CD measure seems to have added value. Therefore, we provide this information for the different corpora we used (full corpus, CBeebies, CBBC). The values are available both as the total number of television programmes in which the word occurred and as the percentage of television programmes in which the word was encountered. As indicated above, the total number of broadcasts in the complete corpus was 45,099. The number of broadcasts in CBeebies was 4847; in CBBC it was 4848. 4

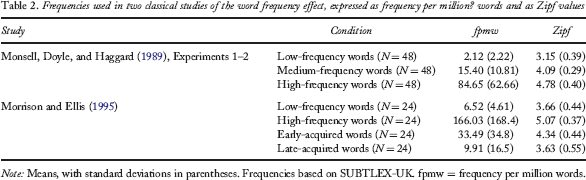

Part-of-Speech dependent frequencies

For many purposes it is good to know what roles words play in sentences and the relative frequencies of these roles (Brysbaert, New, & Keuleers, 2012). This enables researchers interested in nouns, for instance, to limit their stimulus materials to words that are always (or mostly) used as nouns. It also allows researchers to know whether an inflected word is used more often as an adjective (e.g., appalling) or as a verb (e.g., played). This is important information to decide which words to include in rating studies (e.g., Kuperman, Stadthagen-Gonzalez, & Brysbaert, 2012).

Part-of-Speech (PoS) frequencies can only be obtained after the corpus has been parsed (i.e., the sentences broken down into their constituent parts) and tagged (i.e., the words given their correct part of speech in the sentence). For a long time this was virtually impossible given the amount of work involved. However, the development of automatic PoS taggers has made it possible to get a reasonably good (though not perfect) outcome in reasonable time and at an affordable price. For a long time, the CLAWS tagger developed at the University of Lancaster was the golden standard (Garside & Smith, 1997; Lancaster University Centre for Computer Corpus Research on Language, n.d.). It was used for the BNC corpus, and we also used it for our SUBTLEX-US corpus (Brysbaert et al., 2012). However, in recent years the Stanford tagger (initial version: Toutanova, Klein, Manning, & Singer, 2003; The Stanford Natural Language Processing Group, n.d.) has become a worthy competitor. As it happens, the outcome of the first analyses with the Stanford tagger correlated more with the BLP word processing times than the outcome of the CLAWS tagger did. As indicated in Footnote 3, this was due to the fact that the Stanford tagger is more consistent in dehyphenating words than CLAWS. When the subtitles were cleared of hyphens before running the taggers, both gave comparable output.

Example of the PoS analysis

Bigram frequencies

Because extra information can be obtained from word combinations (Arnon & Snider, 2010; Baayen, Milin, Filipovic Durdevic, Hendrix, & Marelli, 2011; Siyanova-Chanturia, Conklin, & van Heuven, 2011), we also collected word bigram frequencies in the entire corpus (i.e., the frequency with which word pairs were observed). This resulted in over 1.5 million lines of consecutive word pairs observed in the corpus. For each pair we give information about the number of times it was observed, the symbols written between the words (space, punctuation mark, hyphen, …) and their respective frequencies. This makes it possible for everyone to calculate interesting additional metrics. For instance, it allowed us to add the 787 hyphenated words with a frequency count of more than 100 (fpwm = 0.5) to the database. 7 It also allowed us to warn researchers when a compound word is more likely to be written as two separate words than as a single word (for instance, the word “makeup” is observed 308 times in the subtitles (Zipf = 3.18), but the spellings “make-up” and “make up” have a combined frequency of 8998, making “makeup” a bad choice for a low-frequency word).

Correlations with Lexical Decision Measures

Given the ease with which word frequencies can be collected nowadays, it is important to check whether a new frequency measure adds something extra to the existing ones. On the basis of previous research, we can expect this to be the case given the superiority of subtitle-based frequency estimates, but still it is good to test this explicitly, also to make sure no calculation errors have been made. The most interesting dataset is the BLP (Keuleers et al., 2012), which provides lexical decision reaction times and accuracy measures of British students for over 28 thousand monosyllabic and disyllabic words. The main competitors to the SUBTLEX-UK word frequencies are the BNC frequencies, the CELEX frequencies, and the SUBTLEX-US frequencies. Words not observed in a corpus were assigned a frequency of 0, and log frequencies were the Zipf values (with Laplace transformation). The Laplace transformation was also used for the CD measure.

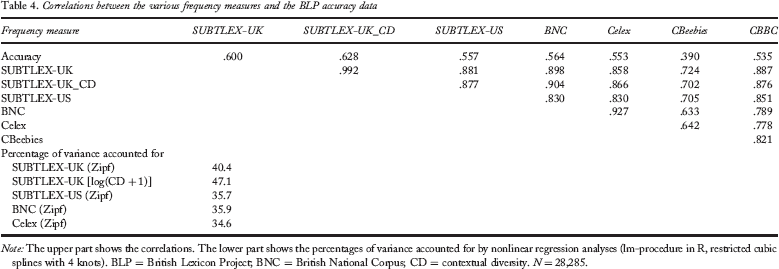

Correlations between the various frequency measures and the BLP accuracy data

Interestingly, the correlations with the childhood frequencies are much lower, in particular the correlation with the CBeebies frequencies (preschool children). Two reasons for this are the smaller sizes of the corpora (including the many missing words not known to children but given rather high Zipf estimates) and the fact that the overall SUBTLEX-UK frequencies include the subtitles from CBeebies and CBBC television programmes (almost 10% of the total SUBTLEX-UK).

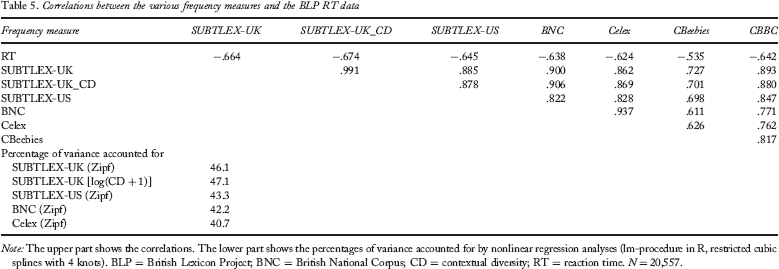

Correlations between the various frequency measures and the BLP RT data

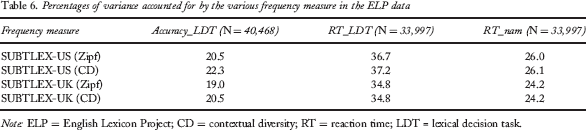

Percentages of variance accounted for by the various frequency measure in the ELP data

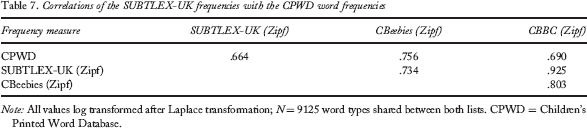

Correlations with the Children's Printed Word Database (CPWD)

Correlations of the SUBTLEX-UK frequencies with the CPWD word frequencies

Discussion

In this paper, we presented a new database of word frequencies for British English, based on television subtitles. On the basis of our previous research, we expected that these frequencies would better predict word processing performance than word frequencies based on written sources (in particular, the British National Corpus). This indeed turned out to be the case, when we tried to predict the lexical decision times and accuracies of the British Lexicon Project (Tables 4 and 5). The British subtitle frequencies were also better for predicting the BLP data than were the American subtitle frequencies, but they were inferior for accounting for the ELP data, in line with the observation that word usage is not completely the same in British and American English. The extra variance accounted for amounted to 3–5%, which is considerable given that many variables explain less than 1% of the variance once the effects of word frequency, length, and similarity to other words are partialled out (Brysbaert, Buchmeier, et al., 2011; Brysbaert & Cortese, 2011; Kuperman et al., 2012).

While analysing the findings, we were once again struck by how misleading the standardized word frequency measure fpmw (frequency per million words) is to understand the word frequency effect. Therefore, we proposed an alternative, the Zipf scale, which is better suited to the use of word frequencies in psychological research. This scale goes from slightly less than 1 to slightly more than 7 and can easily be interpreted as follows: Values of 3 and less are low-frequency words; values of 4 or more are high-frequency words. Words not in SUBTLEX-UK get a Zipf value of 0.696 when the frequencies are based on the complete corpus, 1.864 when the CBBC frequencies are used, and 2.231 when the CBeebies frequencies are used. The differences in minimal values are caused by the differences in corpus size and agree with the fact that missing words of interest in CBeebies or CBBC are likely to be more familiar than words not found in the entire corpus.

In addition to the word frequencies, the new database offers other information, which will allow British researchers to do cutting-edge investigations. These are:

Part-of-speech-related frequencies, which make it possible for researchers to better control their stimulus materials. A measure of contextual diversity (CD), which is particularly interesting for predicting which words will be known and which not (compare Tables 4 and 5). Word frequencies in materials aimed at very young (preschool) and young (primary school) children. Information about word bigrams.

Availability

The SUBTLEX-UK data are available in three easy-to-use files. The first one (SUBTLEX-UK_all) is a 332,988 × 15 matrix containing information of all word types (including numbers) encountered in the dehyphenated subtitles. The 15 columns give information about:

The spelling of the word type (Spelling). The number of times the word has been counted in all subtitles (Freq). The number of times the word started with a capital (CapitFreq). The percentage of broadcasts containing the word type in all subtitles (CD). The number of broadcasts containing the word in all subtitles (CDCount). The most frequent part of speech of the word (DomPoS). The number of times this dominant Pos was observed (DomPosFreq). The lemma associated with the dominant Pos (DomPosLemma). The number of times this lemma was observed in all subtitles (DomPosLemmaFreq). The summed frequencies of all the times this lemma was observed irrespective of the PoS (DomPosLemmaTotalFreq). All parts of speech taken by the word type (AllPos). The respective frequencies of these PoS (AllPosFreq). The associated lemma information (AllLemmaPos, AllLemmaPosFreq, AllLemma PosTotalFreq).

The second file (SUBTLEX-UK) contains more information about the 160,022 word types (159,235 single words and 787 hyphenated words) that are observed in more than one broadcast and which only contain letter information (i.e., no digits or nonalphanumerical symbols). This file is the file most psycholinguistic researchers will want to use. It has 27 columns, containing:

The word type. The frequency counts in all subtitles, the CBeebies subtitles, the CBBC subtitles, and the British National corpus. The Zipf values associated with the various frequencies. The CD counts and percentages in the three SUBTLEX corpora. The dominant PoS, its associated lemma, and their frequencies. All the PoS and frequencies of the word. The frequency of the word starting with a capital. Whether the lower-case spelling of the word type was accepted by a UK word spell checker (UK), a US word spell checker (US), both spell checkers (UK US), or none (X)

10

. This is an interesting column when words must be selected, and one wants to avoid the inclusion of names or other uninteresting entries. Whether the entry contains a hyphen (cf. the 787 added entries with hyphens). Whether the entry has another homophonic entry. This is interesting for finding homophones, but also to make sure selected low-frequency words do not have a higher frequency spelling alternative. Whether or not the word type has been encountered as a bigram in the subtitles. The frequency of the bigram (summed across all types of intervening symbols, in particular, blank spaces, punctuation marks, and hyphens).

Finally, the third file (SUBTLEX-UK_bigrams) contains information about word pairs. Because this file has nearly 2 million lines of information, it cannot be made available as an Excel file (although we have such a file with all entries observed 12 times or more). Each line contains information about Word 1 and Word 2, the frequency of the combination, the CD count of the combination, and which symbols were found between the two words with which frequencies. This is important information when researchers want to include transition probabilities in their investigations, or when expressions (e.g., object names, particle verbs) consist of two words.

Footnotes

1

On the basis of anecdotal evidence we can add that these subtitles are also appreciated by viewers with English as second language.

2

Brysbaert and New (2009) reported that the word type frequencies themselves show little difference once the corpus contains 30 million words, a finding that was replicated in the present analyses.

3

Dehyphenation also occurs in automatic text parsers, such as CLAWS and the Stanford parser (to be described later). Because the Stanford parser dehyphenates more words than CLAWS, the outcome of this parser outperformed that of CLAWS on the raw corpus, but no longer on the dehyphenated corpus.

4

The reason why these numbers are very similar is that both channels have a similar rotation of programmes with repeats after a rather short period of time.

5

A disadvantage of the Stanford tagger is that in its default mode it Americanizes the spellings of the words. So, one must be careful to change this when one is working with British spellings.

6

A notorious example is “horsefly”, which both CLAWS and Stanford parse as an adverb (arguably because the word is not in the programme's lexicon, so that too much reliance is put on the end letters –ly). Ironically, Stanford does correctly classify “horseflies” as a noun associated with the lemma “horsefly” (presumably because the end letters, –lies, are more likely to be associated with plural nouns than with other parts of speech).

7

These frequencies were not subtracted from the frequencies of the individual words, under the assumption that the component words of a hyphenated word get coactivated upon seeing the hyphenated word.

8

9

SUBTLEX-UK frequencies not including childhood frequencies can easily be obtained by subtracting the CBeebies and CBBC frequency counts from the total frequency counts.

10

The speller was the MS Office 2007 spellchecker, augmented with a list of lemmas one of the authors (M.B.) is compiling.