Abstract

An effective implementation approach is crucial for successful integration of structured risk assessment instruments into practice. This qualitative study explored barriers and facilitators to the implementation of the Short-Term Assessment of Risk and Treatability: Adolescent Version (START:AV) in a Dutch residential youth care service. Perceptions of staff members from various disciplines were gathered through focus group interviews on three consecutive occasions. After inductive coding of the interview extracts using thematic analysis, the identified codes were linked to the consolidated framework for implementation research. Through this framework, factors that influence an implementation project can be organized into multiple domains and constructs. In the present study, staff members described implementation barriers related to characteristics of the risk assessment instrument, staff, and the implementation process. In addition, features of the setting were frequently mentioned as hindering the implementation, such as hierarchy, culture, and communication, as well as implementation climate and readiness for change. Staff members also identified multiple facilitators, such as experienced advantages of the START:AV compared to the previous risk assessment practice and positive beliefs about the instrument. The article concludes with recommendations for successful implementation of structured risk assessment instruments in forensic-clinical practice.

Over the past decade, researchers have flagged the need for more research and policy on the implementation of risk assessment instruments (Desmarais, 2017; Nonstad & Webster, 2011). Implementation refers to the processes that bridge the gap between the decision to adopt a new practice and the committed use of this practice (Damschroder et al., 2009). Once risk assessment instruments with sufficient predictive validity became available, successful implementation was considered ‘the new challenge’ in risk assessment practice (Nonstad & Webster, 2011, p. 94). How can we effectively integrate risk assessment instruments in practice and ensure fidelity of application? This can be a challenging undertaking, for example, due to staff resistance and insufficient awareness of potential pitfalls (Schlager, 2009; Webster et al., 2006). Yet, the quality of implementation is crucial for the effectiveness of risk assessment instruments in reducing recidivism. Müller-Isberner et al. (2017) stated that “positive outcomes are achieved only when both the implementation process and the practice are effective” (p. 465). Other researchers have expressed concerns that even psychometrically sound instruments will fail to improve outcomes for clients if not implemented properly (Desmarais, 2017; Schlager, 2009). For example, studies have found that services with better implementation quality (e.g., adherence to the administration procedure) had significantly better results in terms of risk management and service allocation (Vincent et al., 2012; 2016). In turn, improved risk management, by matching identified needs with appropriate service provision, is associated with reduced reoffending (Peterson-Badali et al., 2015).

To ensure implementation quality, a greater awareness and understanding of the barriers to successful implementation of risk assessment instruments in forensic-clinical practice is needed (Haque, 2016). Webster and colleagues (2006) underscored the importance of developing an implementation plan that reflects upon potential obstacles, such as limited resources or poor communication. Factors that hinder effective implementation are referred to as implementation barriers (Damschroder et al., 2009). Reflecting upon factors that promote successful implementation (e.g., management support, staff buy-in) should also be part of the implementation plan. These factors are referred to as implementation facilitators. Insight into the barriers and facilitators is crucial when deciding on which implementation strategies to adopt.

In 2016, Levin and colleagues published a systematic review of studies that documented determinants of successful risk assessment implementation in adult and adolescent (forensic) psychiatric and correctional settings. They included 11 studies, published between 2000 and 2013, in which the authors discussed factors they perceived as hindering or facilitating the implementation. Levin et al. (2016) organized these determinants according to the consolidated framework for implementation research (CFIR; Damschroder et al., 2009). The CFIR is a typology of implementation determinants compiled from 19 theories, and consists of five domains and 39 constructs. The first domain ‘intervention characteristics’ (eight constructs) concerns features of the method that is being implemented, such as cost, complexity, and adaptability of the new method. The second domain ‘outer setting’ (four constructs) refers to external economic, political and social influences that can impact an implementation process within an organization. In addition to the political context and the professional network of an organization, the needs of service users are also considered determinants within the outer setting. The third domain ‘inner setting’ (14 constructs) concerns the organization’s internal context, such as culture and climate, communication structures, staff involvement, and available resources. The fourth domain ‘user characteristics’ (five constructs) pertains to the characteristics of the users of the implemented method. Users, usually staff members, are considered active recipients with beliefs, attitudes, and ambitions that affect their behavior in relation to the implementation. The last domain concerns the ‘process’ (eight constructs). An implementation process consists of various steps that require action, from planning and engaging, to executing and evaluating. Each step includes activities that can enable or hinder the implementation.
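As a quick consistency check on the structure of the framework, the domains and construct counts described above can be written out as a small mapping. This is only an illustrative sketch: the variable names are hypothetical, and the counts are taken directly from the description above rather than from the CFIR source materials.

```python
# Construct counts per CFIR domain, as listed in the paragraph above.
# The dictionary name and layout are illustrative assumptions, not part of the CFIR.
cfir_domains = {
    "intervention characteristics": 8,
    "outer setting": 4,
    "inner setting": 14,
    "user characteristics": 5,
    "process": 8,
}

# The per-domain counts should add up to the 39 constructs mentioned in the text.
total_constructs = sum(cfir_domains.values())
print(len(cfir_domains), total_constructs)
```

The five per-domain counts add up to the 39 constructs reported for the framework.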

Levin et al. (2016) used these domains and constructs to organize the hindering and enabling factors documented in the reviewed studies. They identified determinants linked to all CFIR domains, except outer setting. Although the external context (e.g., governmental guidelines and legislation) was mentioned in most studies, it was not reported as a factor affecting the implementation. Determinants related to the inner setting and the implementation process were most commonly reported. For example, all studies addressed the importance of ensuring staff engagement from an early stage as well as providing information and training to facilitate the implementation. Characteristics of the risk assessment instruments were also cited as determinants, mainly as barriers. For example, some risk assessment instruments were (initially) considered time-consuming or difficult to use. On the other hand, the potential to adjust an instrument to fit the local routines was repeatedly mentioned as a facilitator. Beliefs and concerns of the users were reported in all but one study as influencing the implementation. For example, the perception of clinical usefulness among users was identified as a facilitator, whereas a previous negative experience with structured risk assessment was considered a barrier to the implementation.

Central to the present study is the Short-Term Assessment of Risk and Treatability: Adolescent Version (START:AV; Viljoen et al., 2016). The START:AV is an evidence-based structured risk assessment instrument for use with boys and girls between 12 and 18 years old. It was developed, piloted and evaluated in North America, mainly within juvenile justice populations. The START:AV was deemed the most appropriate instrument for the present secure youth care facility, because of its emphasis on dynamic risk factors and its balanced approach that includes both risk and protective factors. The dynamic nature of the instrument, with a recommended reassessment interval of three to six months, matched the setting’s four-month cycle of care. Additionally, compared to other risk assessment instruments for adolescents, the START:AV evaluates a wider spectrum of adverse outcomes. Short-term risk is evaluated for violence to others, nonviolent reoffending, substance abuse, unauthorized absence, suicide, self-harm, victimization, and self-neglect. All of these adverse outcomes are highly prevalent among adolescents admitted to residential youth care settings in the Netherlands (Vermaes et al., 2014).

We are aware of one implementation study with the START:AV conducted by Sher and Gralton (2014) in a British medium secure adolescent service. This study aimed to establish gaps in training and involve staff in the implementation process by inquiring about their views and experiences with the instrument. Although the authors did not explore barriers and facilitators to the implementation, implementation determinants can be derived from their findings and the CFIR domains can be applied. With respect to intervention characteristics, staff members indicated that the START:AV was easy to use, with some difficulties in distinguishing between the item ratings (low, moderate, high) as well as differentiating between strengths and vulnerabilities. Some staff members also felt that the START:AV could be confusing and somewhat repetitive. With respect to the ‘inner setting’ domain, issues with untrained staff and insufficient practice with the instrument were reported. In addition, staff reported not having enough time to complete an assessment. Lastly, determinants related to user characteristics were noted: staff expressed facilitating beliefs about the START:AV as valuable and helpful, including the overall clinical usefulness of the instrument, and the added value of rating vulnerabilities as well as strengths. On the other hand, some believed that the START:AV might not be sensitive enough to measure change within a complex adolescent population. In addition, some raised the concern that the instrument was only as useful as the level of insight of the team into a patient’s problems. Nevertheless, most staff members felt confident in their ability to complete the START:AV and contribute meaningfully to the assessments. Aspects of the outer setting and implementation process could not be gathered from this study.

The present study

In line with Levin et al.’s review (2016), the present study explored which factors influenced the implementation of the START:AV in a residential youth care service. Implementation determinants from the perspective of staff members were gathered through focus group sessions and organized according to the CFIR. To our knowledge, this is the first primary study to apply the CFIR to risk assessment implementation research.

Method

This study is part of a larger evaluation study on the implementation of the START:AV, using a mixed-method design to assess various aspects of implementation within a youth care service. Previously, we used a quantitative web survey method to ask staff members about multiple implementation outcomes (e.g., feasibility, acceptability; De Beuf et al., 2019). Using a qualitative approach, we explored staff members’ perceptions of the implementation process and initial experiences with the START:AV, using a focus group interview method. The present article focuses on one particular issue that was addressed by the qualitative approach, that is, implementation determinants (i.e., barriers and facilitators) as perceived by staff members.

Setting

The study took place in one of 14 Dutch secure residential youth care facilities for boys and girls who suffer from severe behavioral and mental health problems. Youth are admitted under civil law, with a child protection order, to improve their safety (e.g., suicidal behavior, victimization) and/or the safety of others (e.g., violence toward others). At the time of the study, the service had three high secure (of which two were observation units) and six medium-secure (treatment) units, serving about 240 youths each year. In 2016, adolescents (58% girls) were admitted for 262 days on average, ranging from 4 to 717 days (A. Baanders, personal communication, January 31, 2019).

Prior to the implementation of the START:AV, no validated structured risk assessment instrument was routinely used within the service. Although the Dutch version of the Structured Assessment of Violence Risk in Youth (SAVRY; Borum et al., 2006/2006) was available and some treatment coordinators were trained in its use, the instrument was completed only a few times a year. Specifically, it was used when boys with severe aggressive and delinquent behavior, transferred from juvenile detention, were admitted to the service. Loosely based on the SAVRY, the service had constructed a 12-item risk checklist that was used systematically in decision making regarding the youths’ leave status (escorted/unescorted). The items of this risk list, such as ‘impulsivity’ and ‘association with deviant peers’, were rated by treatment coordinators as low, moderate or high without any rating criteria. Thus, the START:AV was introduced to replace both this 12-item risk list and the SAVRY. In addition, the START:AV substituted another self-constructed list: the ‘dimension list’. This list was used upon admission to the observation units to gather information on 15 developmental areas, the so-called dimensions (e.g., parent-child interaction, autonomy). Hence, in addition to risk assessment, the START:AV would serve as the service’s primary instrument to gather and structure treatment-relevant information on the adolescent.

The START:AV implementation took place in a turbulent social and political context. In 2015, a new Dutch Youth Act went into effect, resulting in major changes in the youth care system. One of the objectives of the new act was to decrease the use of costly specialized services such as residential youth care (Hilverdink et al., 2015). This affected the present service in terms of a reduction of beds, staff reorganization and lay-offs, and an increased workload for the remaining staff.

START:AV

The START:AV consists of 26 dynamic risk factors addressing characteristics of the adolescent (e.g., coping, social skills), their relationships and environment (e.g., peers, parental functioning), and their response to treatment (e.g., insight, treatability). The items are rated on a 3-point scale (low, moderate, high) based on functioning in the last three months: once as a protective factor (strength) and once as a risk factor (vulnerability). After weighing and integrating all available information, the evaluator formulates a final risk estimate (low, moderate, high) for eight adverse outcomes, relevant for the next three months. The START:AV is a so-called fourth-generation risk assessment instrument, which means that it explicitly links the assessment process to risk formulation and risk management (Haque, 2016). In terms of psychometric properties, there is evidence for fair to excellent interrater reliability with intraclass correlation coefficients (ICC1) ranging from .52 to .88 for the risk estimates, .86 for the vulnerabilities total score, and .92 for the strengths total score (Viljoen et al., 2012). Significant predictive validity was found for the majority of adverse outcomes, with ‘area under the curve’-values ranging from .63 to .83 for vulnerabilities total score, .63 to .80 for strengths total score, and .71 to .91 for the risk estimates. Thus far, the START:AV has been found to significantly predict all adverse outcomes, except unauthorized absences and health neglect (Bhanwer et al., 2016). The Dutch START:AV (De Beuf et al., 2019) was implemented at the current setting and research on the psychometric properties is currently being conducted.

Implementation process

The START:AV implementation project was based on the eight steps presented in Risk Assessment in Juvenile Justice: A Guidebook for Implementation by Vincent and colleagues (2012). The implementation was led by an implementation coordinator (first author) who was hired for the project, in collaboration with an (internal) implementation committee and an (external) risk assessment expert (second author). The coordinator and the implementation committee were responsible for the implementation procedure and the development of policies and procedures that detailed the incorporation of the START:AV into the service’s workflow. In the months between September 2014 and March 2015, the implementation coordinator trained treatment coordinators in using the instrument. The training included a one-day workshop in which they learned to rate the START:AV, followed by additional practice cases that were discussed during a two-hour workshop. Later, a third half-day workshop was organized in which treatment coordinators received guidance on how to translate the risk assessment findings into a risk management plan according to the Risk-Need-Responsivity (RNR) principles (Bonta & Andrews, 2017). All treatment coordinators who were hired after the initial training received one-on-one training from the same trainer shortly after recruitment. From June 2015 until November 2015, a pilot implementation was carried out on two units to test the new workflow and accompanying documents (e.g., treatment plan). The coordinator and the implementation committee evaluated the pilot and subsequent recommendations were implemented by the coordinator.

The official, service-wide implementation started in February 2016 on all units simultaneously, for new admissions only. Due to this gradual implementation, it was not until fall 2016 that all treatment coordinators had completed at least one START:AV assessment in their caseload. The assessments were initially a task of treatment coordinators and included completing the START:AV comprehensive rating form (in MS Word) as a ‘master file’ in which all available information about the youth was gathered (e.g., file information, interviews, psychological testing, and observations). The estimated completion time within this approach ranged from one hour to four hours. However, starting from March 2017, all frontline staff (i.e., teachers, group care workers, therapists, family social workers, occupational therapists, drug counselors) were instructed to report their evaluation of the past months directly into the START:AV form. The implementation coordinator organized information sessions to educate frontline staff on the instrument’s items. Although the information was now entered via a multidisciplinary approach, treatment coordinators remained responsible for rating the items and formulating a final risk judgment. Over the course of 2017, a computerized version of the START:AV was developed and tested.

Focus group design

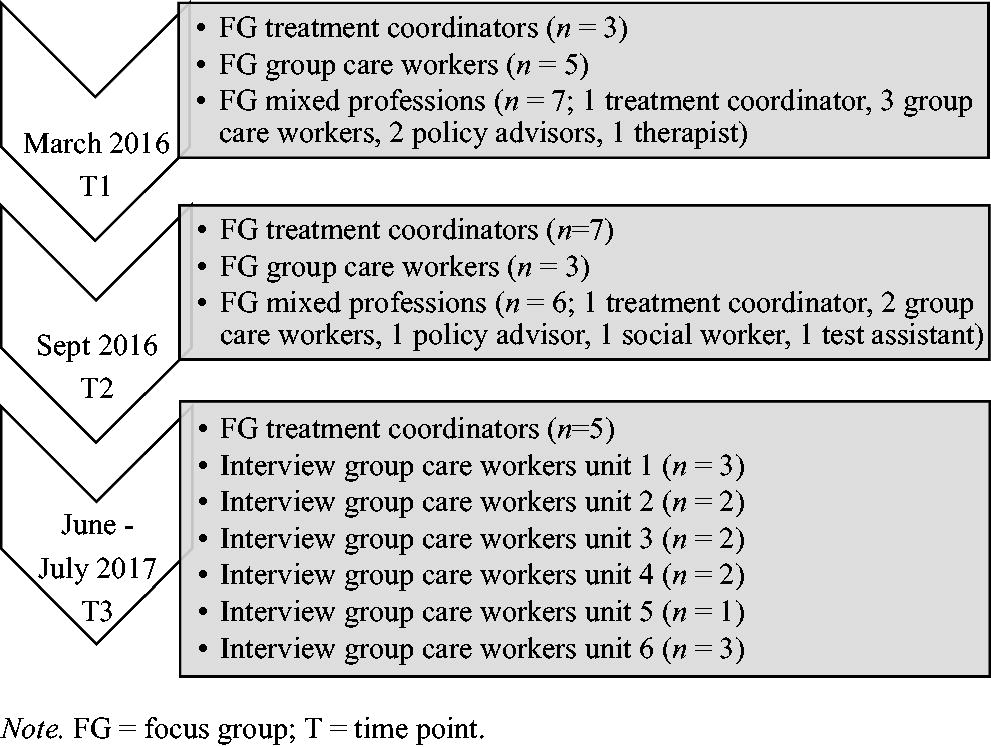

The initial design was to organize nine focus group discussions; three focus groups at three time points. The first time point was at the end of March 2016. Although this was almost two months into the implementation, most participants had not yet used the instrument due to the gradual process. The second time point took place in September 2016, and the last one was in June 2017. Each time point was scheduled to have one focus group exclusively with treatment coordinators (TC), one exclusively with group care workers (GCW), and one interdisciplinary group (Mix). The choice for two homogeneous groups and one heterogeneous group was guided by the assumption that homogeneous groups might generate more in-depth information about a group’s experience with the START:AV, while a heterogeneous group might generate a wider range of information (Schutt, 2004).

Sampling

All staff members who worked directly with adolescents were invited by a general invitation shared via email, newsletters, and announcements on the service’s intranet. In addition, two policy advisors were invited because of their involvement in the service’s quality assurance procedures for new and existing methods, as well as their involvement in the integration of the START:AV in the electronic patient file. However, this approach generated a poor response from group care workers and in the next step individual group care workers were invited via a personalized email. They were selected based on their active involvement in task forces and their interest in the START:AV observed during team meetings. We considered them as those who could best voice the implementation challenges experienced by the teams. At the follow-up time points, we started with personally inviting the participants from earlier sessions. Individuals in a purely managerial position (i.e., top-management, senior management, and operational middle management) were excluded from the study. Although treatment coordinators were considered middle management, they were invited because they supervised the treatment approach for the individual adolescent rather than the workforce. Higher management was not invited to the focus groups to prevent power differentials during the discussions. Moreover, because the authors did not focus on the perceptions of higher management, they were not considered as a separate user group in the design.

Focus group size

We aimed for five to ten participants per focus group. Although the literature is mixed about the ideal focus group size, recommendations typically range from 5 or 6 to 10 participants for noncommercial research (Masadeh, 2012). Small groups of 4 to 6 participants are recognized to be productive because they encourage participants to partake in the discussion, and they are increasingly popular because they are easier to organize (Krueger & Casey, 2014; Masadeh, 2012). Figure 1 shows that we reached this goal for five focus groups. Especially at time point three, it proved difficult to gather enough participants: only treatment coordinators responded. As a result, the study design was adjusted: the third mixed focus group was canceled as staff had expressed concerns that they could not meaningfully contribute to the group discussions due to a lack of experience with the START:AV. The focus group with group care workers was replaced by interviews for which the moderator visited frontline workers on their unit with a slightly adjusted interview guide. These interviews were held with one to three participants, depending on how many group care workers were available on the unit (see Figure 1). In total, seven focus groups and six interviews took place.

Figure 1. Focus Group Participants Throughout the Three Sessions.

Participants signed an informed consent form at the beginning of each focus group, after being informed about the content, procedure, audio recording, and their rights in relation to the research. Their participation was voluntary and took place during work hours. On average, focus groups lasted 64 minutes (range: 40-79 minutes) and interviews 12 minutes (range: 4-20 minutes). All interviews were held in Dutch and audiotaped with a voice-recorder. Afterwards, participants received a summary of findings via email and they had the opportunity to respond before the internal report was finalized.

Interview questions

During the first focus group interview, previous implementation efforts within the service were discussed, as well as expectations about the START:AV and potential barriers to the implementation. At the second and third time point, focus group interviews elaborated on the previously mentioned challenges and whether they had been resolved. In addition, positive and negative effects of the new risk assessment method, for example, on communication, were discussed, as well as workflow issues. An interview guide, modeled after Crocker and colleagues (2008), was used to guide the discussions and is available at the project page on Open Science Framework (OSF; https://osf.io/5gztr/). The open-ended questions provided a framework, and specific questions and prompts by the moderator followed from the discussion. The interview approach was informed by resources such as Krueger (2002), Krueger and Casey (2014), and Patton and Cochran (2002).

Role of moderator

In qualitative research, the investigator is considered an integral part of the research process and the final product (Galdas, 2017). Therefore, researcher influence should be disclosed and reflected upon. In the present study, due to resource constraints, the moderator of the focus groups was also the implementation coordinator as well as the data analyst. The investigator-moderator was not part of the treatment staff and she was hired by the organization to implement and evaluate the START:AV as a relative ‘outsider’ with a university affiliation. The moderator received one-on-one coaching from an experienced focus group facilitator and discussed the focus group sessions with the coauthors.

Participants

Across all time points, 36 unique staff members participated: 10 treatment coordinators, 21 group care workers, and 5 other professionals (see Figure 1). This reflects 100% of the treatment coordinators, 20% of group care workers, and 63% of other disciplines that were invited. Treatment coordinators, professionals with at least a master’s degree in psychology or special needs education, were responsible for the adolescents’ treatment process. In consultation with their team, they decided on the treatment approach in terms of type of therapy and treatment goals, and evaluated the treatment progress. They also provided treatment-relevant supervision and guidance to group care workers. Treatment coordinators, all female, were between 30 and 40 years old (M = 34) and had on average 10 years of work experience (range = 5-15) within the facility. Seventy percent had prior experience (i.e., use and/or training) with a structured risk assessment instrument, mainly the SAVRY. Group care workers were frontline staff who were responsible for implementing the treatment approach for the youths’ safety and wellbeing on the residential units. They supported adolescents with their everyday responsibilities. Of the participating group care workers, 43% were female; they were between 25 and 51 years old (M = 35), with 1 to 17 years of experience within the organization (M = 8.5). Among the other participants were professionals such as family social workers, psychotherapists and testing assistants, as well as policy advisors. Of these professionals, 80% were female and their average age was 44 years old (range = 36-56), with 7 to 29 years of service (M = 14).

Ethical considerations

The general director of the service gave permission to conduct the research within the facility. The Ethics Review Committee Psychology and Neuroscience (ERCPN) of Maastricht University approved the research protocol, procedures, and staff consent forms (ERCPN number 174_06_12_2016). All data were analyzed anonymously and stored according to the university’s Data Management Code of Conduct and the institution’s Data Protection Guidelines.

Data analysis

The focus group discussions were transcribed using free transcription software (NCH Software, 2016). The moderator was selected as transcriber because she was familiar with the context of the discussions, recognized the recorded voices, and was able to differentiate between relevant and irrelevant (e.g., interruptions) audio material. Furthermore, transcribing increased familiarity with the data, which was beneficial for subsequent thematic analysis. The transcription followed an edited approach; filler words, interruptions, self-corrected words and non-relevant content (e.g., small talk at the beginning of a session) were omitted while maintaining integrity of the recordings. All transcripts were anonymized: names were replaced by numbers. All transcriptions were double-checked against the audio recordings.

Thematic analysis

Transcriptions from the focus groups and interviews were analyzed using thematic analysis (Vaismoradi et al., 2013). This is a widely used qualitative method for identifying, analyzing, and reporting patterns within transcribed data (Braun & Clarke, 2006). It relies on a step-by-step process of coding and re-coding, and codes are collated into themes. The present study followed an inductive (data-driven) approach, which means that codes were identified independently of a theoretical framework. Applying a manual coding procedure, the first author started the thematic analysis by rereading the full transcript and entering the text in Excel with one sentence, or data extract, per row. Each data extract received one or more codes that summarized its content in one or two words. Examples of codes included ‘provide training’ or ‘resistance to change’. After working through all data extracts, initial codes with their definitions were listed in one file and examined for similarity. Similar codes were collated into a more manageable number of codes. Collated codes or newly identified codes were compared with the original statements of the participants to ensure they matched. Internal homogeneity of a code was examined by collating all data extracts labeled with this particular code and assessing coherence, while external heterogeneity was ensured by identifying clear distinctions between codes. This process was repeated for each focus group. The codes of the interviews at T3 were combined into one group.
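The collation step described above can be sketched in code. The analysis in this study was carried out manually in Excel, so the following is not the procedure that was used; it is a minimal illustration under stated assumptions. The extract texts are placeholders, the code labels other than ‘provide training’ and ‘resistance to change’ are invented, and the names `extracts`, `collate`, and `collate_codes` are hypothetical.

```python
from collections import defaultdict

# Hypothetical data extracts: one row per sentence, each with one or more initial codes.
# Extract texts are placeholders; "offer training" and "reluctance to change" are
# invented labels used only to show how similar codes might be merged.
extracts = [
    {"group": "T1 TC", "text": "<extract 1>", "codes": ["provide training"]},
    {"group": "T1 TC", "text": "<extract 2>", "codes": ["resistance to change", "offer training"]},
    {"group": "T1 TC", "text": "<extract 3>", "codes": ["reluctance to change"]},
]

# Hypothetical mapping that collates similar initial codes into one retained code.
collate = {
    "offer training": "provide training",
    "reluctance to change": "resistance to change",
}


def collate_codes(rows, mapping):
    """Group extract texts under their collated code so each code can be reviewed."""
    by_code = defaultdict(list)
    for row in rows:
        # A set avoids listing an extract twice when two of its codes merge into one.
        for code in sorted({mapping.get(c, c) for c in row["codes"]}):
            by_code[code].append(row["text"])
    return dict(by_code)


grouped = collate_codes(extracts, collate)
for code, texts in grouped.items():
    print(code, len(texts))
```

Listing all extracts per collated code in this way mirrors the check for internal homogeneity: every extract under one code can be read side by side to judge whether the code is coherent.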

In the next step, all codes were sorted into potential themes (Braun & Clarke, 2006). Five overarching themes were identified: ‘Implementation Determinants’, ‘Implementation Strategies’, ‘Implementation Outcomes’, ‘Feedback on Practice’, and ‘Suggestions for Practice’. The first three themes applied to the implementation process, whereas the latter two concerned the practicalities of the START:AV workflow. Because of the present article’s focus on determinants, only codes from the ‘Implementation Determinants’ theme were retained for further examination. For these codes, an inter-coder check was performed. The second and third authors independently coded 27% (i.e., 113 of 417) of the extracts related to ‘Implementation Determinants’ using a codebook compiled by the first author (see https://osf.io/5gztr/). They compared coding sheets and discrepancies were resolved by discussion. In the end, all three raters reached consensus on the allocated codes. Moreover, as a result of the discussion, one new code was added as a determinant and other codes were renamed for clarification.

Consolidated framework for implementation research

After the inductive approach to data gathering and data analysis, we applied a deductive approach to the classification and interpretation of the ‘Implementation Determinants’ codes. The CFIR codebook (Consolidated Framework for Implementation Research, n.d.) was consulted to connect our codes to the CFIR constructs, as it provides definitions and inclusion/exclusion criteria for the majority of the constructs.

Results

Descriptive information on the distribution of the 211 codes accumulated by the thematic analysis can be accessed via the OSF project page (https://osf.io/5gztr/). We focused on the codes related to the implementation determinants and their link with the CFIR domains and constructs (see Table 1). All five domains and 21 of the 39 constructs were addressed. In the following, we present the identified constructs per domain, illustrated with quotes. For each construct, it is also specified whether it was experienced as a facilitator, a barrier, or both.

Table 1. Overview of the Implementation Determinants Codes Categorized According to the CFIR

Note. T = time point; TC = treatment coordinators; GCW = group care workers; Mix = mixed group of staff members

Intervention characteristics

Features of the START:AV were mostly discussed by the treatment coordinators. Unlike other staff, they had considerable hands-on experience with the instrument and insight into its procedures, especially at the second and third time points.

Complexity (facilitator and barrier)

The majority of group care workers found it easy to provide information on the items because the item indicators on the form were felt to be self-evident. Although several group care workers reported difficulties separating strengths from vulnerabilities, which was corroborated by treatment coordinators, they supported the inclusion of strengths.

You have to be very aware of what is considered strengths and what is considered vulnerabilities, and without realizing you are documenting vulnerabilities among the strengths: “Oh no, that doesn’t belong here” and vice versa. I find the strengths very valuable because otherwise they wouldn’t be noted very often. (T3 GCW)

Relative advantage (facilitator and barrier)

When comparing the START:AV with the self-constructed risk checklist, treatment coordinators described benefits, such as more explicit documentation of the observed risks and the wide scope of potential adverse outcomes.

I notice that I reflect much more on how often the child has had such an aggressive outburst or hasn’t. While in the past, you just might have said “this is a very aggressive boy”, this time you are more looking for uh… That you make the connection: “Because of this lack in skills, the risk is high”. I think I … It provides more guidance to do this in a good way. (T2 TC)

The focus group interview with mixed staff members added that the START:AV provides a more comprehensive account compared to the setting’s self-constructed checklist. However, when comparing the START:AV with the dimension list, treatment coordinators felt that the START:AV did not add much in terms of information gathering and structuring. They felt that the items were not novel compared to the themes that were included in the dimension list: “I also think that it’s a good summary of current thinking in terms of the socio-emotional domain, the cognitive domain, social network, … but we had that already, it’s not like the START:AV added value to this” (T3). Neither did they experience benefits from the structured approach, “… because we were already working fairly structured prior to this. It is not like it was completely blank before and that we did not have any–how do you call it–structure or so” (T3 TC). Overall, they indicated that the START:AV did not produce new insights or different treatment goals. One treatment coordinator seemed to carry the expectation that the START:AV would lead to considerably different conclusions: “In the end, you actually want it to result in making completely different choices and if that’s not the case, then you just think…” (T2 TC). Others countered this argument by indicating that this, in fact, validated their prior decision making. Furthermore, on the observation units where teams had been using the dimension list (see Setting), treatment coordinators regretted the change in information they now received from frontline staff on the START:AV items. They argued that although they obtained information on more domains, this information was less detailed than before. Likewise, treatment coordinators commented on the loss of narrative due to reporting per item. Other treatment coordinators added that they were missing information on domains that were not included in the START:AV, such as developmentally-appropriate knowledge of sexuality.

Adaptability (barrier)

Although treatment coordinators understood the importance of adhering to the item ratings, there seemed to be some negligence when allocating scores. A treatment coordinator asked rhetorically: “Do I really have to worry five minutes about whether it is moderate or high?” (T2 TC). Treatment coordinators also commented on the utility of the START:AV for less restricted settings, such as community-based services, arguing that it could be useful to detect unsafe conditions. Yet, they hypothesized that in such settings only a small group of juveniles would benefit from the risk assessment and therefore wondered whether it would be worth the (implementation) effort.

Design (barrier)

The computerized version of the START:AV comprehensive form, piloted prior to the last focus group, was mentioned as a barrier. According to the treatment coordinators, the digital form “made it even more difficult” for group care workers to report the required information, because it did not include the items’ anchors (i.e., item descriptors) to rely on.

Cost (barrier)

Group care workers expressed that, in the past, their work on the unit had not benefited from completing questionnaires and forms. This made some staff apprehensive about the present implementation:

Completing many lists, completing many forms, to ultimately make one report. I think that half of those forms are not even being used to reach a proper conclusion. But because it’s obligated, we have to complete them. That brings along a lot of work that I’d rather spend on the ward instead of in the office. (T1 GCW)

Outer setting

The outer setting refers to the larger political, economic and social context in which the organization is embedded. It includes those who are served by the setting and the external network of the organization. This domain was mentioned least often.

External policy and incentives (facilitator)

Treatment coordinators indicated that the introduction of the START:AV within the service corresponded with national reforms in youth care policy. Legal mandates for residential treatment were reduced in length, and evidence such as risk assessment information was increasingly required when imposing or prolonging mandated treatment. According to treatment coordinators, it “certainly goes nicely hand-in-hand because with that [risk assessment] you can better indicate ‘I really find that this individual needs more time in mandated residential youth care’, or ‘I really find that we should continue because…’.” (T3).

Inner setting

In contrast to the outer setting, characteristics of the inner setting (i.e., the service itself) were often noted as factors that influenced the implementation. The inner setting includes both tangible aspects, such as size and structure of the service, and immaterial features, such as work culture and climate. Aspects of the inner setting were primarily discussed at the first time point. Statements on the inner setting in relation to previous implementation efforts were also coded as they were thought to be relevant to the START:AV implementation.

Compatibility (facilitator)

Treatment coordinators agreed that the START:AV was compatible with the objective of mandated residential youth care to reduce risks without trying to resolve all developmental challenges of the admitted youth: “Because you actually have to reduce the risks and should not want to change issues in all developmental areas” (T3). However, one treatment coordinator shared concerns that focusing on the risks and needs would not sufficiently address the youths’ problems.

Culture (barrier)

Staff members shared that, 10 years ago, the facility was a juvenile detention center. They believed that this background was still affecting today’s practice, which was perceived as strict and inflexible by some. A group care worker used the metaphor of a “slowly turning heavy tanker ship” (T1) to describe the organization. Overall, staff members believed that the service had difficulty adapting to change and preferred maintaining routine practice. Participants recognized both an aspiration to innovate and a rigidity to preserve the status quo among top management. Treatment coordinators speculated that this rigidity was fueled by worries about losing the service’s good reputation within the field.

It’s ambiguous because we see that many authorities, such as youth care offices, local authorities, refer [youths] to us because we deliver very good service, in their opinion. So it is somewhat ambiguous because on the other hand, this reluctance – sticking to what goes well– also makes us steady, well-functioning and therefore delivering a good job. To me, that is… uh well, difficult. (T1 TC)

This reluctance impacted treatment coordinators, because the service had not yet followed through on its promise to relieve them of other tasks. A treatment coordinator noticed: “In this case [of the START:AV], everyone thinks that we should do it, but there is still, for example, nothing else removed from our workload” (T1 TC).

Not only were those in charge thought to resist change, a group care worker voiced that it was quite common for frontline staff to respond reluctantly to new initiatives:

For some, it is more difficult than for others. And, it is precisely those who experience difficulty, who deserve attention, and it is precisely those who need to be involved in the process and perhaps those who you need to talk to more frequently. (T1 GCW)

Furthermore, group care workers felt new initiatives were often imposed upon them, in a top-down fashion, without sufficiently accounting for the team’s possibilities. This generated frustration among frontline staff: “When it comes out of the blue and someone says ‘You have to do that’, with no room for discussion, that causes irritability”. (T1 GCW)

Learning climate (facilitator)

Nevertheless, group care workers were positive about past implementation efforts in which they had been actively involved. For example, one group care worker enthusiastically described active involvement in the implementation of a group-oriented intervention: “What I really liked about implementing [intervention], what I found positive about it, is that we were allowed to contribute: when are we going to provide the training? How are we going to do it?” (T1 GCW). With regard to the START:AV implementation, participants did not express that they felt involved.

Structural characteristics (barrier)

The facility’s hierarchical management structure was reported as a barrier due to its many administrative levels. Staff explained that a decision had to pass multiple levels before it could lead to actual change.

This is also part of our culture. Here, everyone wants to have their say about it and everyone is allowed to have their opinion. At a certain point, because of this, we just stagnate: “Okay, when will we finally get started? When will we act? It still has to pass this committee and then that one has to give their opinion”. At times, this works against us because it takes three quarters of a year before anything finally seeps through in the organization. (T1 GCW)

A material barrier to the implementation was the physical scattering of units across the grounds, weakening the sense of unity within the service. Staff typically referred to the treatment units as ‘islets’.

Networks & communications (facilitator and barrier)

The structural barriers described above were found to affect communication. Group care workers did not always feel sufficiently informed on past implementations and other staff members also shared experiences of not being included in the information exchange. Concerning the START:AV implementation, a therapist explained that the communication was clear, but that the expectations for her particular position were unknown. Overall, participants found that the introduction of the START:AV was well-announced. A group care worker commented:

I do think, the communication… of course you [implementation coordinator] have been working on it for a while now and you have represented yourself pretty well. Everyone, in my opinion, knows something is going to change. (…) That makes a difference: the new system does not appear out of the blue. It is already [introduced], step-by-step, in the newsletter or in an email. Somewhere attention is paid to it, everyone is being reached. (T1 Mix)

Relative priority (barrier)

Treatment coordinators felt that, at the beginning of the implementation, the START:AV was not prioritized enough. In response to the question “What if you could change one thing?”, a treatment coordinator said:

(…) that our supervisor takes more responsibility as in “this has priority now and that means that we don’t do this or this or that for a while”, including some task forces or what not… That all of us agree as an organization: this has our priority now. (T1 TC)

Leadership engagement (barrier)

Frontline staff warned against the multitude of superiors to whom they were accountable. In the past, this had led to inconsistencies in approach and deviations from agreed strategies: “Exactly! One of the managers thinks it is really important, while my operational manager suddenly starts questioning the implementation, and you are in the middle of it” (T1 Mix). Receiving conflicting messages was perceived as frustrating and confusing. Treatment coordinators expected their leadership to take on a supportive attitude toward the increasing workload and be engaged in the implementation, for example, by completing START:AV assessments themselves.

The challenging part is that our direct supervisor has insufficient insight in how much time it takes. What he does, is personalize the problem: those who complain more, are considered to have a difficult personality, and those who don’t complain, are just not that busy. This is his strategy to ignore the problem and that is frustrating. (T1 TC)

Available resources (barrier)

Concerning the facility’s readiness to implement the START:AV, all disciplines addressed the lack of resources. The START:AV placed a burden on the treatment coordinators’ workload and this was, at least for some, a major barrier to adopting the instrument. They realized that becoming adept in using the START:AV required a substantial (time) investment: “We keep repeating this: time is really the biggest impeding factor in this.” (T1 TC). Similarly, group care workers found it difficult to carve out time to familiarize themselves with the forms they had to complete for their caseload. One group care worker linked this to the occupancy on the unit:

Usually there is not much time. That’s also complicated, it depends on how many adolescents you have on your unit. We already have a lot of lists to complete and there is already a lot that we must do. At the moment, we have six youth on the ward and then you have some extra time to do other things. When the unit is full with 11 adolescents, you’re lucky if you have managed to write the daily progress notes by 10:30 p.m. (T2 GCW)

Access to knowledge & information (facilitator and barrier)

With respect to accessing information, participants appreciated the visits of the implementation coordinator to inform the teams about the START:AV: “I do think that what you did —discussing it in the team meetings—is very useful to make it more concrete.” (T2 Mix). However, group care workers experienced difficulties accessing the forms as they were not stored in a location available to them. Furthermore, new group care workers did not receive a formal introduction to the START:AV; they learned it on the job, during case conferences and by asking colleagues. A group care worker considered this part of one’s own responsibility: “I mean, children are assigned to a mentor based on availability at the time, and when you become mentor of a child in an observation trajectory, you have to think for yourself what you need to do.” (T3 GCW)

User characteristics

Knowledge & beliefs about the intervention (facilitator and barrier)

Treatment coordinators were convinced about the significance of the START:AV and the usefulness of the adverse outcomes for the setting’s clients. Moreover, treatment coordinators argued that with the START:AV they were better equipped to substantiate their decisions about the treatment approach and the recommended level of supervision. In addition, they believed in the value of focusing on strengths. This was illustrated by a treatment coordinator: “I feel that it is fair to the youths to also consider strengths. I think that in our field there is a strong tendency to focus on the negative things. Therefore, I feel this really is of added value.” (T2). Frontline staff agreed: “This way, you are forced to pay attention to the positive things.” (T2). Another advantage of the START:AV, according to staff members, is its focus on facts and the unambiguous presentation of risks and concerns. A treatment coordinator in the mixed group explained:

What I find very positive, is that you substantiate the risks for an adolescent much more. Often, as a team, you agree that it is a very worrisome case, but if you put it [the risks] together like that, then “Yes indeed, this is very worrisome”. And you can write it down much more clearly and communicate to the child guardian agency or the parents that there are major concerns in these areas. It helps me to describe things more objectively. (T2)

Still, one participant expressed concerns about confidentiality because it was not clear to this staff member that the START:AV was treated as an internal document and would not be shared with third parties.

Individual stages of change (facilitator and barrier)

On the one hand, treatment coordinators reported enthusiasm and willingness among their team members to use the instrument; on the other hand, much resistance was noted. Reported reasons for resistance to the implementation were lack of time and not being able to complete a START:AV assessment without being interrupted. Furthermore, staff members’ response to the new practice appeared to depend on their openness to change. Some readily expressed enthusiasm, whereas others were more reluctant. A group care worker commented:

One person might immediately start thinking about it, getting excited, while another, when he does not see the benefits or when it is not yet clear, immediately thinks: “Ah, again another list”. I find it varies a lot, at least if you look at our team. (T1 Mix)

Other personal attributes (barrier)

One participant, who works closely with the adolescents’ relatives, expressed concerns about reporting incriminating information, without an extended narrative, in the START:AV. This staff member seemed to experience a conflict between providing information on the START:AV items and his role as counselor:

You are working with a human process and devastating situations, and then you can’t simply state in the report “Look, this is what this family has shared”. Then you fall short on these people and that is not okay. (T2 Mix)

Process

External change agent (facilitator)

Treatment coordinators considered the service’s choice to hire an implementation coordinator as a facilitator. In past implementations, they had noticed that management facilitated external project coordinators better and that staff members were more receptive to external agents. They said: “I think it makes a big difference when an external person is brought on board, as now happens with the START:AV, and this person takes charge” (T1 TC).

Planning (barrier)

Treatment coordinators regretted the long planning phase with extensive preparation, which they referred to as “an endless start-up phase” (T1). According to them, the implementation committee should have started sooner with the full implementation.

Executing (barrier)

Treatment coordinators were especially dissatisfied with the substantial time that passed between the training and the actual use of the instrument. One treatment coordinator explained how this impacted her:

It’s just really too bad that if you look back: when did we complete the [START:AV] practice cases? That was over a year ago! Look, if… for me that [knowledge] really is already disappearing. That’s just a waste of energy. (T1 TC)

For this reason, treatment coordinators supported the decision to inform group care workers later in the process, otherwise “they might become frustrated” (T1).

Discussion

This qualitative study explored staff members’ views on factors that affected the implementation of the START:AV risk assessment tool in a residential youth care service. Using the consolidated framework for implementation research, we organized a set of implementation determinants, which we derived from focus group interviews using a data-driven approach. Across multiple domains, staff members identified factors that they perceived as impeding or facilitating implementation of the instrument. Aspects of the inner setting were mentioned most frequently, followed by user characteristics and features of the risk assessment instrument itself. The implementation process itself and the outer setting were rarely mentioned.

The cultural and structural features of the service were widely discussed as barriers to the implementation. Structural barriers included the physical environment (e.g., physical scattering of units) and the ‘corporate’ environment (e.g., hierarchical levels). According to participants, these structural barriers hindered communication, which is an essential component of introducing change (Damschroder et al., 2009). Moreover, communication is key in earning staff buy-in, creating enthusiasm, and encouraging staff involvement. Multiple aspects of the implementation relied on communication, such as sharing the rationale for introducing structured risk assessment, providing practical information about the START:AV workflow, and updating staff on the implementation progress. In addition, resources were a frequently mentioned barrier: the START:AV increased staff members’ workload while operating within the same (time) conditions. Similarly, staff in Sher and Gralton’s (2014) study reported a lack of time to effectively complete the START:AV. The present approach of treating the START:AV as a master file, and the time investment that came with it, led to resistance among staff members. Overall, resistance was a common theme, from the board of directors to frontline staff, each for their own reasons. This is not surprising, because implementing change is “fighting against one’s inner desire to maintain the status quo” (Tran, 2019).

Nevertheless, despite the experienced strain in terms of workload, staff members were positive about the value of the START:AV and its usefulness for their practice, in line with Sher and Gralton’s findings (2014). In both settings, the focus on strengths was highly valued. In addition, in the present study, the multiple adverse outcomes were particularly appreciated and perceived as relevant for the setting, even more so than the individual items. Yet, one staff member expressed experiencing difficulties with reporting sensitive information in the START:AV form, while simultaneously working on building and sustaining a therapeutic alliance with the youths’ families. This concern is in line with staff’s expected loss of discretion described in three of the studies reviewed by Levin et al. (2016). For example, in an implementation study by Vincent et al. (2012), 21% of juvenile probation officers “feared that their years of experience would be discounted in favor of a score from a tool” (p. 573). However, this anticipation proved unwarranted as only four officers (4.7%) reported feeling invalidated by the instrument at 10 months into the implementation.

With respect to the instrument’s characteristics, there are several parallels with the findings of Sher and Gralton (2014). For instance, group care workers in our study also found the items to be straightforward and easy to complete; yet, some struggled with differentiating strengths from vulnerabilities, similar to the UK study. Most group care workers preferred a narrative approach in which they connect, and potentially counterbalance, vulnerabilities and strengths in one paragraph. Strictly separating strengths from vulnerabilities required additional effort. In addition, group care workers reported that differences between some of the items were quite subtle, making it more difficult to allocate information to the appropriate item without duplicating, a concern also mentioned by Sher and Gralton (2014). It is unclear from the UK study whether staff were already familiar with structured information gathering. In the current setting, such prior familiarity was experienced by treatment coordinators as a considerable barrier: they seemed disappointed by the limited novelty of the START:AV in terms of the included items and the provided structure, compared to what they were already used to. Moreover, replacing the dimension list with the START:AV was accompanied by a sense of loss (e.g., less detail, missing themes, loss of narrative).

The participants rarely commented on the planning and the execution phase of the implementation, perhaps because of the timing of the focus groups. The planning phase had ended and the official implementation had begun. Nevertheless, staff stated that preparation, training, and actual implementation had not followed one another within a reasonable time frame. The lengthy intervals between these steps were perceived as impeding the implementation.

Similar to findings reported by Levin et al. (2016) and Sher and Gralton (2014), staff members did not consider factors from the outer setting as affecting the implementation. This was somewhat surprising, because the implementation took place during turbulent times for youth care organizations, with many legal and budgetary changes occurring simultaneously. The constraints that were imposed on the service from the outside (e.g., reduced financial resources) may have hindered the implementation. On the other hand, growing political pressure to use evidence-based practices, such as structured risk assessment, could also have facilitated the implementation. Yet, staff did not allude to this. We speculate that staff members have a tendency to focus primarily on internal organizational factors. This might be especially true for (secure) residential settings that are more closed off from society than community-based services. Furthermore, the needs of the assessed adolescents themselves, and barriers and facilitators to their participation in the risk assessment, were not discussed. Such absence was also noted by Levin et al. (2016). In risk assessment practice, patients’ views are typically not included and assessments tend to be conducted top-down by professionals (Langan, 2010). Similarly, in the present setting, adolescents were not involved in the risk assessments. This approach likely limited staff members’ consideration of the adolescent as a stakeholder in the implementation.

The majority of determinants reported in the present study are recognized in the existing literature as important conditions for successful implementation. In accordance with prior work on risk assessment implementation (Levin et al., 2016; Müller-Isberner et al., 2017; Nonstad & Webster, 2011; Schlager, 2009; Sher & Gralton, 2014; Webster et al., 2006), our findings highlight the importance of involvement and commitment on all levels, dedicated leadership, inclusive and transparent communication, adequate resource allocation, training, timing, monitoring, and integration in existing structures.

Limitations and future research

A first limitation is the involvement of the implementation coordinator as moderator and data analyst. On the one hand, familiarity with the service organization helped the moderator to better understand participants’ comments and to know when to probe for further information. On the other hand, as an ‘insider’, the moderator may have relied on implicit information about the organization that was not checked for accuracy (Chenail, 2011). Including more moderators in the design, especially moderators without familiarity with the service, might have compensated for the potential bias stemming from having the implementation coordinator as the single moderator. However, triangulation was applied during data analysis: a subsample of the extracts was coded by raters who were external to the service, reaching satisfactory agreement. This procedure improves standardization and accuracy in the coding process and helps control for bias (Boeije, 2010). Researcher triangulation was not repeated, though, during the deductive phase when the determinant codes were linked to the CFIR constructs. Not having multiple coders leaves the process to one researcher’s judgment. For example, the code ‘fear of negative effects’ could be considered a characteristic of the organizational culture (i.e., ‘Culture’) or a characteristic of the leading CEO (i.e., ‘Individual Stage of Change’). Nevertheless, both the codes and the constructs were defined in their respective codebooks prior to the deductive phase, reducing the opportunity for interpretation.

Second, the sampling method might have influenced who participated in the focus groups. It is possible that because of the voluntary nature, staff members with strong resistance toward the implementation did not sign up for participation. The opposite could also be true; perhaps dissatisfied staff members took the focus group discussions as an opportunity to voice their negative opinions. Moreover, participants knew prior to accepting the invitation that the implementation coordinator would be moderating the sessions. It is plausible that individuals who felt uncomfortable talking to the implementation coordinator refrained from participating in the study. As a result, the breadth of experiences shared in the focus groups could have been affected, with less diversity in reported barriers and facilitators. Nevertheless, this issue might have been partially resolved by randomly approaching the teams for interviews at the third time point. We used this recruitment strategy as an alternative to the focus group discussions that could not be organized at that time. Although interviews with a maximum of three group care workers likely produced less discussion and reduced the range of experiences that were reported (Krueger & Casey, 2014), this strategy may have reached group care workers who would otherwise not have attended a focus group discussion.

Another way the focus group procedure may have impacted the findings is that familiarity with the moderator could have increased the likelihood that some participants made statements to please the interviewer (Chenail, 2011). However, because the topic was not particularly sensitive or personal and participation did not bring personal benefits, there was no obvious motive for socially desirable responding. Moreover, the participants in the present study were rather outspoken about the work environment. Considering the large number of experienced barriers to implementation gathered over the interview sessions, it is fair to assume that the majority of participants felt comfortable voicing criticism during the discussions.

A third limitation is that higher and middle management were not included in the study. This limits our understanding of the beliefs and attitudes of this group about the START:AV and what they perceive as implementation determinants. Higher management is typically more involved with external stakeholders, and from that perspective, they might have reported more external influences. Thus, not having this perspective embedded in the findings is a limitation. Nevertheless, the role of leadership and their potential influence on the START:AV implementation was discussed by other staff members.

Lastly, the reader should take into consideration that the present study reflects perceptions of staff members from a particular service with a particular client population, during a particular (political) time. Nevertheless, parallels with previous studies (Levin et al., 2016; Müller-Isberner et al., 2017; Nonstad & Webster, 2011; Schlager, 2009; Sher & Gralton, 2014; Webster et al., 2006) suggest that our results might be transferable to other contexts, at least to residential treatment settings. To enhance applicability of risk assessment implementation studies, we advocate for the use of implementation frameworks, such as the CFIR, to allow comparison between settings and instruments. Moreover, future research could work on identifying the most essential determinants of implementation success and how they can be facilitated. Ideally, this research would involve multiple sites, to allow comparisons and identification of common determinants.

Although we purposefully decided on using an inductive approach to data gathering and data coding, all codes could subsequently be linked to the CFIR. Therefore, this comprehensive framework might provide an adequate starting point for future risk assessment implementation studies, for example, when developing interview questions that explicitly prompt for certain domains and constructs, such as the outer setting or implementation climate (Kirk et al., 2015). In addition, we would like to encourage future studies to investigate relationships among determinants, as well as between determinants and implementation outcomes. Better understanding of what impedes and facilitates successful implementation (e.g., integration, adoption, satisfaction) paves the way for (more) effective risk assessment implementation strategies. A similar approach could be followed for the implementation of risk management in clinical practice. For example, studies have shown that programs that adhere to the Risk-Need-Responsivity (RNR) principles are more effective in reducing recidivism than programs that do not follow these principles (Koehler et al., 2013). Yet, a recent systematic review (Viljoen et al., 2018) found that professionals only showed moderate adherence to the risk principle and limited adherence to the need principle when making risk management decisions. Thus, it might be equally relevant to extend implementation research to risk management strategies and deepen our understanding of the barriers and facilitators that professionals face in adhering to the RNR principles.

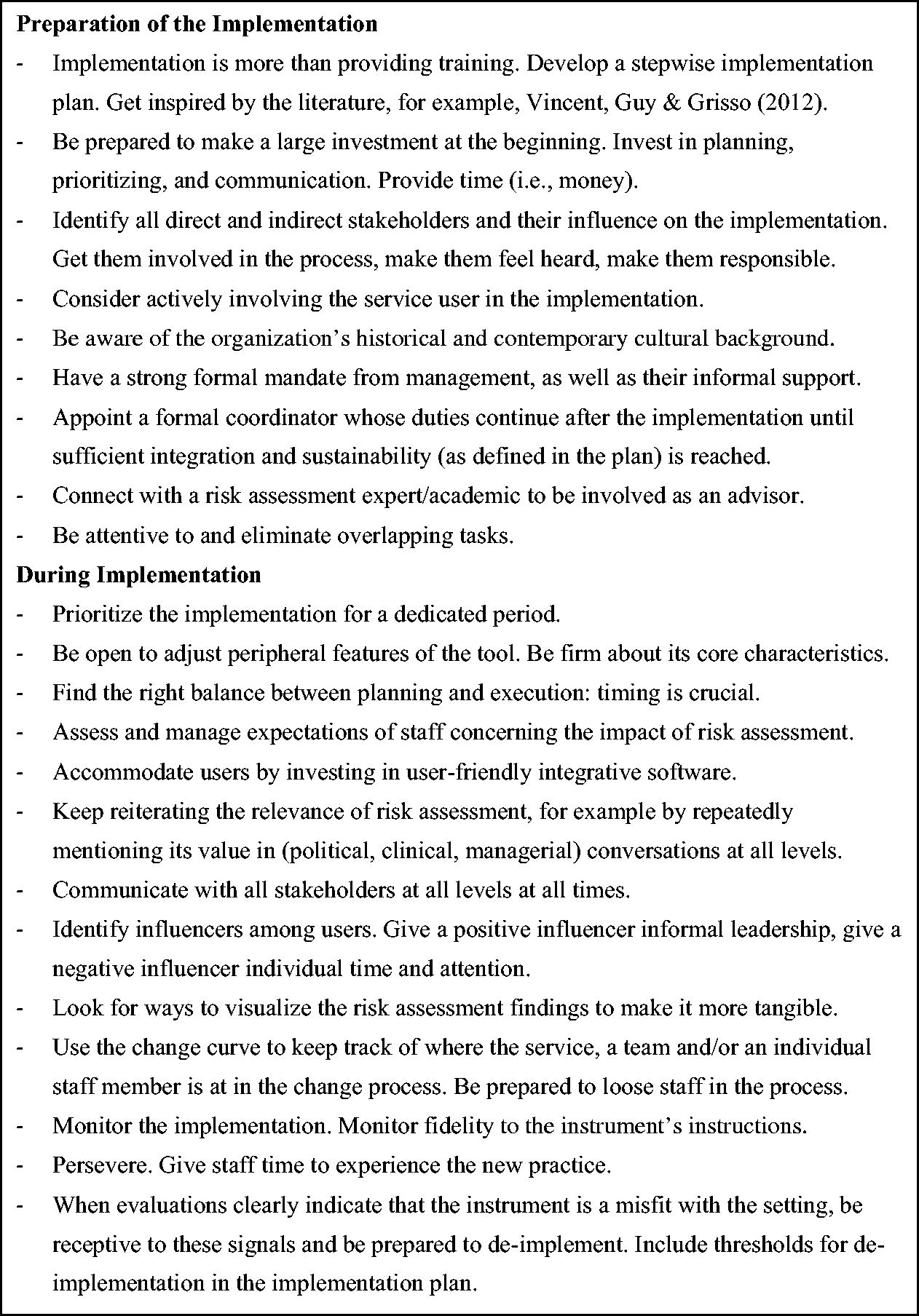

Practical implications

When planning an implementation, every coordinating committee could benefit from studying the CFIR. That way, potential barriers can be identified early in the implementation process and strategies can be adopted to increase the odds of a successful implementation. In addition, determinants and strategies should be reconsidered throughout the process as their relevance will change depending on the stage of the implementation (Damschroder et al., 2009). In Figure 2, we listed recommendations based on suggestions from staff and the experiences of the implementation coordinator. This list can be complemented with strategies from the ‘CFIR-ERIC implementation strategy matching tool’, which is freely available online (https://cfirguide.org). This matching tool assists in allocating strategies from the Expert Recommendations for Implementing Change (ERIC; Powell et al., 2015) compilation to the determinants of interest.

Figure 2. Suggestions for Implementing Risk Assessment Instruments.

Conclusion

In the past decade, there has been growing attention to the study of implementation of risk assessment instruments in forensic-clinical practice. The systematic review of Viljoen et al. (2018) suggested that the use of structured risk assessment instruments does not yet reliably result in violence reduction. One potential explanation for this finding lies in the challenges faced when implementing risk assessment with fidelity into practice. Moving risk assessment practice to a higher level would require mental health services to adopt a systematic, evidence-based approach to its implementation (Haque, 2016). Increased understanding of the implementation process and the conditions that create a foundation for successful implementation (e.g., adherence to the risk assessment guidelines) is necessary to optimize risk assessment. The present study provided insight into the impeding and promoting factors of an implementation as perceived by the users of a risk assessment instrument, in this case the START:AV. The CFIR was useful in organizing and synthesizing complex and multi-leveled information gathered via interviews with professional users of the instrument. The more knowledge about risk assessment implementation accumulates, the better the field will understand which approach works for which instruments in which settings. High-quality implementation of risk assessment instruments is one of the first, and probably crucial, steps in reducing adverse outcomes among adolescents.