Abstract

Nowhere is the issue of scarce resources more emotive than in the health-care system: people's lives are profoundly affected by their access to health care and the availability of appropriate types of care. Increasingly, as scarcity is recognized, economic evaluation is being asked of the health-care sector. Although it has not been used extensively to evaluate psychiatric services in Australia or New Zealand, there are emerging forces which will change this. These ensure that for psychiatrists, to paraphrase H.G. Wells' famous comment on statistics, economic thinking will one day be as necessary for practice as the ability to read and write is for citizenship.

The critical reasons for this judgement can be found in three interwoven historical patterns. First, with the rise of individual libertarianism as a philosophy and psychopharmacology as a means, mental illness has been transformed from ‘madness’ to ‘illness’: a transformation that implies psychiatric conditions can be treated and, if not cured, controlled. This transformation enables those with mental illness to live in the community (with their civil rights) rather than in institutions (where their civil rights may be heavily curtailed). Second, there has been a broadening in the range of conditions psychiatrists treat. Whereas it was once accepted that psychiatrists treated, in asylums, those with psychosis, there is now widespread acceptance that psychiatrists in office practice can and will treat a broader range of conditions. Third, as people live longer lives in industrial societies, the proportion of those with mental ill health (defined in its broadest terms, including dementia and depression) associated with chronic neurological diseases is increasing [1, 2].

Psychiatry is thus being integrated into both acute and public-health medicine as never before. As this occurs, the same constraints that apply to the provision of nonpsychiatric acute health-care interventions are being applied to psychiatry.

A principal constraint is the availability of health-care resources. In all developed countries, the cost of mental health care is increasing. For example, Fein [3] estimated the 1955 total cost of mental illness in the USA to be US$1931 million; in 1985 Rice et al. estimated it to be US$103 691 million: a 54-fold increase over a period when the US economy grew just 10-fold [4]. Throughout the 1980s and 1990s health-care managers, clinicians and governments have employed a variety of strategies seeking to ensure that finite resources are used as beneficially as possible. One strategy is to seek gains in efficiency; despite such gains it is predicted that the demand for mental health care will continue to rise: a demand exacerbated by increasing costs associated with the introduction of new technologies [5]. It was, for example, recently reported that in 1998/1999 pharmaceutical use in Australia increased by 3%, but that the cost increased by 10% due to the consumption of newer, more expensive pharmaceuticals [6, p.4].

Within this framework, economic evaluation can offer psychiatry three important mechanisms to assist with its tasks: it can describe the cost of the burden of psychiatric illness, it can predict the level of resources that will be needed in psychiatry, and it can provide information about the best use of those resources.

Recent reviews of economic evaluations in mental health care reported that there were few good economic evaluations. Using the search and selection procedures advocated by the Cochrane Schizophrenia Group, Johnstone and Zolese reported that of the 206 citations elicited in their electronic search, they were able to accept just four studies for their review of the effectiveness of planned short hospital stays for those suffering mental illness. They reported that basic information was often missing (including data on mental health, social functioning and family burden) and they rated the quality of economic information as poor [7]. A review of atypical antipsychotics reported that many economic studies lacked appropriate comparator groups and suffered methodological flaws, making it difficult to draw any firm conclusions regarding the cost-effectiveness of the interventions [8]. Another review of 48 studies reported that evaluations generally failed to measure costs or did so incorrectly [9]. In another it was reported that most evaluations were restricted to cost-analyses or cost-of-illness studies, which are not usually accepted as economic evaluations since there is no comparator. The authors reported that the methodological quality of most of the articles they reviewed was poor, and blamed this on the difficulties associated with measurement in the mental health-care field, a lack of understanding of economic evaluation, a failure to work in close cooperation with health economists, a failure to adequately operationalize costs and a failure to adhere to the basic principles of economic evaluation [10]. This paper seeks to remind psychiatrists of those basic principles.

Describing the cost of the burden of illness and treatment

In order to describe the costs associated with mental illness, these need to be defined and measured: illness is expensive to both the individual and society. Cost of illness studies are concerned with establishing these costs; they reveal the extent of health-care expenditure by illness. This enables economic comparisons between different illnesses thus drawing attention, and possibly additional resources, to areas that are particularly needy. As such, while cost of illness studies are not really economic evaluations, costs are one of the fundamental building blocks upon which economic evaluation depends.

Types of costs

Before 1997, health economists used Fein's (1958) cost definitions: costs were thought of as being direct, indirect or intangible [3]. In 1997, however, Drummond et al. redefined these as costs consumed within the health-care sector, costs borne by the patient and his or her family and costs borne by other sectors of the economy [11].

Health-care costs

These describe the resources consumed by health-care interventions (programmes), which may be aimed at either preventing or treating illness. Because they are formal costs they can be easily identified. As an example, diagnostic-related groups (DRGs) are premised on direct acute health-service costs, since they are based on indices of relative cost for hospital services. The assumption underpinning DRGs is that they provide a standardized mechanism for funding actual services provided. Where this assumption is proved true, the use of DRGs should lead to more consistent funding arrangements, greater efficiencies and more equitable funds distribution. For an early review of DRGs and psychiatry the reader should consult Hunter and McFarlane; although now dated, the general thrust of the argument is highly informative [12]. Although these costs are easily identified, they may be difficult to measure unless they are routinely captured. They include the costs of programmes associated with prevention, detection, treatment, rehabilitation, research, training and investment. Included in these costs are all factors involved, such as personnel, buildings, equipment, pharmaceuticals, dressings and tests carried out. When comparing programmes it is essential that the same services be included. Health-care costs are thus similar to Fein's ‘direct costs’, which were defined as ‘the actual dollar expenditure on mental illness’ [3, p.10].

Patient and family costs

In addition to health-care costs, the consequences of ill health are expensive (costly) to the individual and his or her family and friends. When an individual is ill, he or she may be unable to perform roles that would have been undertaken in the absence of such a condition, or performance in these roles may be compromised. Under these circumstances, there may be loss of income for either the patient or a caregiver (this foregone income may also include loss of social or leisure activities), travelling costs in attending hospital or medical treatment, copayments for medical care or treatment and modifications to motor vehicles or the home. Other non-wage costs include inability to perform usual non-paid activities such as child care, voluntary work, leisure activities or various activities of daily living, including housework. These patient and family costs are particularly important because of the long-term or episodic nature of mental illness, which may impact upon caregivers; Panzarino, for example, reported that depression caused greater functional loss than other chronic illnesses [13].

These foregone activities can, in theory, all be measured and counted. One of the difficulties is to assign monetary values to these costs; for this reason only a subset of patient and family costs are usually included in cost of illness studies, most usually foregone income.

Other sector costs

In addition to health-care costs and patient and family costs, someone who is ill may consume resources provided by other community sectors, such as private or public agencies (e.g. various forms of home help provided by city councils or private service providers) or voluntary organizations (e.g. church or social service agencies). Wherever possible, these costs should be assessed and included.

One of the difficulties, of course, lies in collecting all these various costs. The best advice is that all areas of life that might possibly be affected by an intervention should be monitored and costs assigned (regardless of who bears them), including both current and future costs. The exception is where the costs will not be different between the treatment groups being compared. In advancing this position, the Panel on Cost-Effectiveness in Health and Medicine recognized the difficulties and argued that other sector costs, typically service costs, should be estimated through the use of prices of the services provided, unless these were unrealistic. For further information on this topic, readers are referred to Manning [14].

Patient and family costs and other sector costs, then, are similar to Fein's ‘indirect’ and ‘intangible’ costs, which were defined as ‘the economic loss in dollars (or in work years) that society incurs because some part of society is suffering from mental illness’ [3, p.10].

Measuring and reporting costs

Which of the above costs will be included in any particular study will depend upon why the study is being done and the view taken by the researcher; this is referred to as the perspective of the study. The possible perspectives are those of the patient, the service provider, or society. Each of these leads to different cost estimates because each is concerned with different things. For example, Rosenheck et al. evaluated the cost-effectiveness of clozapine. The costs were presented from the point of view of the health-care system (i.e. the service provider); consequently the only costs included were those reported by participants attending Veterans Affairs medical centres and other direct health-care services [15]. If they had taken a societal perspective, it is possible their findings would have been different.

Generally, the societal perspective is the broadest since it includes all costs and outputs (e.g. benefits and unintended outcomes, such as cost shifting, or the use of non-health social services, such as legal costs). The other perspectives are narrower in scope. For psychiatric services the societal perspective is preferred from the evaluator's point of view, given that there is reason to believe there may be high patient and family and other sector costs (see below). This is because, when compared with the majority of other acute health states, psychiatric illness is both chronic and has multiple effects. This perspective is also recommended by the Panel on Cost-Effectiveness in Health and Medicine because it represents the public interest rather than that of any particular health-care sector [14].

A real problem facing researchers, however, is that collecting non-service costs (i.e. so as to reflect the societal perspective) is extremely difficult. For example, consider Johnston et al.'s study of intensive case management. In addition to service-provider costs, they collected caregiver costs, such as time spent caring, foregone income, medication, travel, accommodation, repairs due to damage caused by the patient, fire and legal services. Because of the difficulty of reliable data collection, they were only able to collect these for 46% of cases. Given this, they excluded these costs from their data analysis [16].

Which costs are measured also depends upon whether an evaluation is assessing a new or existing programme. If the programme is new, its full costs should be included. If it is an extension of an existing service, the provision of extra services has a marginal cost and it is these which should be included.

Regarding the reporting of costs, mean costs and 95% confidence intervals should be reported. Two difficulties commonly arise in doing this. The first is that economic data are often badly skewed, thus violating the assumptions underpinning many statistical procedures, including the analysis of means. The two conventional approaches to the analysis of skewed data are to use non-parametric tests or to transform the data, usually onto a log scale. The second difficulty is that there are often outliers (i.e. extreme cases). These outliers are very important because they represent people with extreme health need (they are therefore a constant feature of health care). Since outliers have a profound influence on mean calculations, the question of whether or not to trim them needs to be assessed very carefully. Although trimming may be seen as undesirable, there are times when it can be considered.
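The reporting approach described above can be illustrated with a minimal sketch. It assumes a small, entirely hypothetical sample of per-patient costs containing one extreme case, and it uses a normal approximation (mean ± 1.96 standard errors) for the 95% confidence interval; with samples this small a t-based interval would normally be preferred. The function name `mean_ci95` is illustrative only. Note that back-transforming from the log scale yields a geometric mean, which is not the same quantity as the arithmetic mean cost.

```python
import math
import statistics

def mean_ci95(values):
    """Mean and normal-approximation 95% CI (mean +/- 1.96 x standard error)."""
    m = statistics.mean(values)
    se = statistics.stdev(values) / math.sqrt(len(values))
    return m, m - 1.96 * se, m + 1.96 * se

# Hypothetical per-patient annual costs; one extreme case skews the sample.
costs = [1200, 1500, 1800, 2100, 2400, 3000, 3600, 4500, 6000, 48000]

# Analysis on the raw scale: the outlier pulls the mean up and widens the interval.
raw_mean, raw_lo, raw_hi = mean_ci95(costs)

# Analysis on the log scale, then back-transformation.
# The back-transformed centre is the geometric mean, not the arithmetic mean.
log_mean, log_lo, log_hi = mean_ci95([math.log(c) for c in costs])
geo_mean, geo_lo, geo_hi = math.exp(log_mean), math.exp(log_lo), math.exp(log_hi)

print(f"raw mean: {raw_mean:.0f} (95% CI {raw_lo:.0f} to {raw_hi:.0f})")
print(f"geometric mean: {geo_mean:.0f} (95% CI {geo_lo:.0f} to {geo_hi:.0f})")
```

Comparing the two printed lines shows how strongly a single extreme case can influence the raw mean, which is why the decision to trim or transform needs to be made and reported explicitly.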

One of the difficulties in economic evaluation is the uncertainty in deriving monetary costs. To overcome this difficulty, health economists have traditionally used sensitivity analysis. This involves exploring how sensitive the results from an economic evaluation are to varying parameter values by, for example, 5–10% (the values used are often derived from the magnitude of uncertainty surrounding the parameters). Thus if an investigator feels that data are not reliable he or she may use sensitivity analysis to provide estimates of how the costs would vary under various scenarios and assumptions. Recently, there has been a move towards modelling of multiple sources of uncertainty by simulation methods and presenting confidence intervals, instead of a limited number of scenarios in a sensitivity analysis.
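A one-way sensitivity analysis of the kind described above can be sketched as follows. The cost model, its parameter names and all values are hypothetical, and the ±10% variation is simply the upper end of the range mentioned in the text; in practice the range for each parameter would be taken from the uncertainty surrounding it.

```python
def total_cost(params):
    """Hypothetical cost model: inpatient days plus outpatient visits plus drugs."""
    return (params["bed_days"] * params["cost_per_bed_day"]
            + params["visits"] * params["cost_per_visit"]
            + params["drug_cost"])

def one_way_sensitivity(model, base, variation=0.10):
    """Vary each parameter by +/- variation in turn, holding the others at base."""
    results = {}
    for name, value in base.items():
        low = model({**base, name: value * (1 - variation)})
        high = model({**base, name: value * (1 + variation)})
        results[name] = (low, high)
    return results

# Hypothetical base-case values for one patient over one year.
base = {"bed_days": 20, "cost_per_bed_day": 400.0,
        "visits": 12, "cost_per_visit": 80.0, "drug_cost": 1500.0}

base_total = total_cost(base)
ranges = one_way_sensitivity(total_cost, base)
for name, (low, high) in ranges.items():
    print(f"{name}: {low:.0f} to {high:.0f} (base {base_total:.0f})")
```

Parameters whose range moves the total most are those to which the result is most sensitive. The simulation approach mentioned above differs in that it varies all parameters at once rather than one at a time.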

Other issues in estimating the cost of mental illness

Within the mental health-care field, much of the early cost of illness work was devoted to establishing the direct cost of mental illness. Although this may have partly reflected the antipsychiatry movement [1], it was also due to the fact that mental illness was perceived to be expensive because of the large number of hospital beds utilized for psychiatric disorders: the bed rate in 1955 was about 350/100 000 population in the UK and 450/100 000 in the USA. With de-institutionalization, the widespread acceptance of drug therapies, the transition of psychiatry to a therapeutic discipline embracing ‘milder’ case diagnosis and increasing awareness of patients' rights to a high quality of life [1], these rates dropped dramatically to 155/100 000 and 96/100 000 in 1981 and 1983 respectively [17]. Consequently, although health-care costs will have shifted (from institution to community), patient and family costs are likely to be an increasingly important component of psychiatric illness costs due to the chronic nature of specific mental health problems.

This implies that in many cases the full economic costs are unlikely to be adequately estimated and will depend upon the methodology used and the assumptions made by the researchers. We offer two very different examples providing different estimates depending upon the perspectives of the study.

Where service costs alone are measured, there will be considerable variation depending upon study perspective. Wolff et al., adopting a very narrow view which assumed that there were costs only where an insurer, provider or patient paid for resources, compared different perspectives for outpatient services and reported that the per unit (i.e. service provided) cost could range from US$108 from the management perspective to US$538 where the perspective was that of an economist [18].

In the other example, Hawthorne et al. presented a range of estimates of the cost of psychosis in Australia. They based their calculations upon three critical parameters. First they used estimates of the number of people living with psychosis in the Australian community; these ranged from 0.4 to 0.7% of the population: between 74 000 and 129 500 people. They then used a value of $50 000 per annum for the value of a life, based on court awards in the road safety industry using a payout of $750 000 to cover a presumed loss of 15 years of life. Third, they based their calculations on the loss of utility as determined by a sample of respondents (people suffering psychosis) who were registered with St Vincent's Mental Health Service in Melbourne, Australia. The dis-utility values ranged from 0.34 to 0.55. Using these parameters they provided estimates of the cost of psychosis which ranged from a low of $1258 million through to a high estimate of $3238 million: there was a 180% difference between the low and high estimates [19].
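The way the three parameters combine can be sketched as a simple product. This multiplicative form is an assumption rather than a statement of the authors' method, although it does reproduce the low estimate quoted above. Only the low end is shown, because the product of the upper-bound inputs as quoted here does not reproduce the reported high estimate exactly. The function name `burden_cost` is illustrative.

```python
def burden_cost(people, value_per_life_year, disutility):
    """Assumed form: people affected x value of a life year x utility lost."""
    return people * value_per_life_year * disutility

# $750 000 payout spread over a presumed loss of 15 years of life.
value_per_life_year = 750_000 / 15

# Lower-bound prevalence (74 000 people) and lower-bound disutility (0.34).
low = burden_cost(74_000, value_per_life_year, 0.34)
print(f"low estimate: ${low / 1e6:.0f} million")
```

Because the estimate is a product, uncertainty in any one parameter carries through proportionally to the total, which is why the plausible range is so wide.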

Measuring service outcomes

Economic evaluation can also provide estimates of service outcomes. Drummond et al. refer to outcomes as ‘consequences’ and define three types: changes in health states, the saving of resources and the creation of other value [11].

Changes in health states

Changes in health states are the effects of programmes on health, such as measures of mortality, morbidity or health status or functional capacity. That is, they reflect the clinical or social effects of a programme and are usually referred to as ‘natural units’. They are usually collected during programme evaluations, from clinical trials, epidemiological studies or health surveys. The indicators used can be either disease-specific or generic. Disease-specific indicators have the advantage of greater sensitivity to the disease in question; the disadvantage is that they preclude comparison with other diseases or interventions. Conversely, generic indicators permit comparisons, but may lack sensitivity. We recommend that both types of indicators are used.

For example, in a study of early intervention among young people experiencing an emerging psychotic disorder, psychosocial functioning was measured using the Quality of Life scale, symptoms were measured with the Scale for the Assessment of Negative Symptoms, and neuroleptic daily doses (in chlorpromazine equivalents) were recorded [20]. These measures would preclude comparison with a different intervention where these data were not collected. Other indicators which were collected in this study included contacts between clinicians and patients, and inpatient bed days; these could easily be used in a comparative study across different health fields.

Resources saved

These are defined as ‘costs saved’ as a result of an improvement in health status. The maximum saving would be where a patient has been restored to ‘normal health’ and they will not consume future resources.

As with costs, there are three levels of resources saved: health care resources, patient and family resources and other sector resources. Benefits could include reductions in demand for health-service provision, increased wages from return to work, reductions in family caregiving (thereby enabling the caregivers to undertake other activities, e.g. return to work), reductions in family breakdown, reductions in time spent travelling to and from health services, or reductions in social welfare consumption.

Other value

There are generally two forms of ‘value’ that a health-care programme may create. There is the direct value to the patient of his or her improved health state (usually measured, either directly or indirectly, as changes in health states), and there may be the value of being reassured that he or she is receiving appropriate health care (irrespective of the effects of that care). Where improved health states are measured indirectly, they may be measured using ‘utilities’, which are best thought of as people's ‘values’ for particular health states.

A key issue for the evaluation of mental health services is the reliability and validity of self-reported utilities. In general, self-reports are affected by adaptation [21], age [22], neuroticism [23], emotional adjustment [24], marital status [25], education [26], employment status [27] and spirituality [28]. A recent review of these and other factors can be found in Diener et al. [29]. In addition, there are particular issues for those suffering mental illness, including the effect of affective state [30], poor insight or distortion [31] and neuroleptic therapy [32]. On these grounds, it has been argued that self-report should be discarded altogether in favour of either clinician or proxy ratings [33].

However, there is some evidence suggesting these issues do not necessarily invalidate self-report. In a study involving patients suffering schizophrenia, Voruganti et al. reported that although the severity of illness, medication side-effects and cognitive ability all affected the estimates, the reliability of these estimates, based on repeated measures, was not compromised [34]. In another study involving people suffering psychosis, Herrman et al. contrasted self-report with clinician-report, and concluded there was no evidence that self-reports were invalid [35].

Utility measures

Utility measures provide preferences for a health state. For example, most people would prefer to be normally healthy over a 10-year period rather than suffer depression, experience considerable pain or be bed-ridden over the same period.

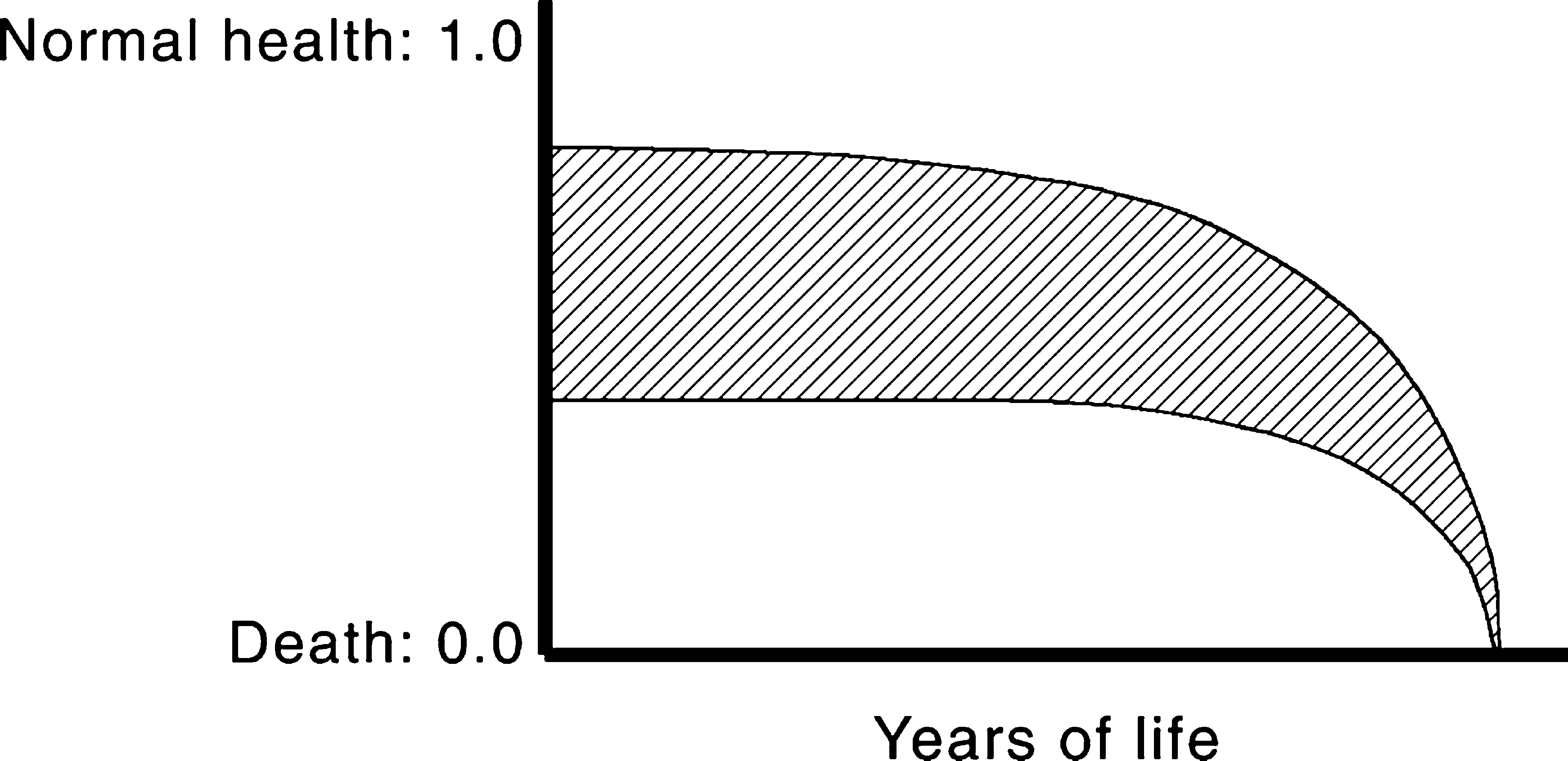

Generally, the term ‘utility’ refers to very particular ways of establishing these preferences such that a weighted index of preference is established on a scale from 0.00 to 1.00, where 0.00 = death and 1.00 = perfect (very good) health. If an intervention has a particular effect (e.g. where untreated, severe chronic depression with a preference value of 0.30 is improved to slight depression with a preference value of 0.70 through use of treatment A, an SSRI medication for depression), then by subtracting the preference values (0.70 – 0.30 = 0.40), the utility value of treatment A can be obtained (0.40). Where utilities exist over time, they are referred to as quality-adjusted life years (QALYs). This example is given in Fig. 1. The lines show the utility values for untreated severe chronic depression (lower line) and treated depression (upper line). Although both patient groups have the same life-length, the treated patients have a higher quality of life across their lives. If the years of life are weighted by the utility values, the area between the two lines represents the quality-adjusted life years (QALYs) gained from treatment A.

Figure 1. Quality-adjusted life years (QALYs). The lines show the utility values for untreated severe chronic depression (lower line) and treated depression (upper line). The area between the two lines represents the QALYs gained from treatment A.
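The area between the two lines in Fig. 1 can be computed with a short sketch. It uses the preference values from the depression example (0.30 untreated, 0.70 treated) and assumes, purely for illustration, a 10-year horizon with constant utilities and no discounting; the text does not specify a life-length.

```python
def qalys(utilities_by_year):
    """Sum of yearly utility weights: each year of life counts as its utility."""
    return sum(utilities_by_year)

years = 10  # hypothetical horizon, not given in the text

untreated = [0.30] * years   # severe chronic depression, untreated
treated = [0.70] * years     # slight depression after treatment A

# QALYs gained = area between the treated and untreated utility lines.
gained = qalys(treated) - qalys(untreated)
print(f"QALYs gained over {years} years: {gained:.1f}")
```

With constant utilities this is simply the utility difference multiplied by the number of years; the list form is used so that utilities changing from year to year could be handled in the same way.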

The Disability Adjusted Life Year (DALY) is an alternative to the QALY and is used for estimating the burden of illness [36]. DALYs combine the expected length of life lost due to premature mortality with the severity-adjusted future stream of life lived with disability that arises from incident cases of disease or injury. Given recent publicity and the use of DALYs to calculate the burden of disease, it is likely DALYs will become a standard measure in health-sector-wide economic evaluation.

The rationale behind utilities is that preference measurement provides a common metric for directly comparing interventions in different health fields. For example, the QALYs gained from treatment A for depression could be compared with those gained from treatment B, which might be surgical intervention for incontinence. Utility measurement has been popularized (demonized?) through its association with the cost-per-QALY or the cost-per-DALY.

So how are utilities obtained? There are two ways. In the first, a ‘composite’ health state scenario is constructed describing the health state to be evaluated. This scenario is then scored (weighted) using the standard gamble (SG), time trade-off (TTO) or, more recently, the person trade-off (PTO).

Standard gamble

Suppose we need to know the value Patient A with chronic depression attaches to her quality of life. A treatment option is presented to her that has two possible outcomes: either full health for the remainder of her life, or death. She is free to choose either the treatment or to remain with chronic depression. If the probability of full health is 1.0 (i.e. she will be cured of depression and there is no chance of death), then obviously she will choose to have the treatment. If the probability of full health is 0.90 and death 0.10, she may still choose the treatment. However there would be a point, for example at 0.80 for full health and 0.20 for death, where she is not clear as to whether she would want the treatment or would choose to remain in her current health state. This point of indifference is the ‘value’ of her health state.
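The reasoning behind the point of indifference can be made explicit with a small sketch. It relies on the standard expected-utility interpretation, in which full health is scored 1.0 and death 0.0; at indifference the certain health state is worth the same as the gamble, so its value equals the probability of full health. The function names are illustrative.

```python
def gamble_expected_utility(p_full_health, u_full_health=1.0, u_death=0.0):
    """Expected utility of a treatment ending in either full health or death."""
    return p_full_health * u_full_health + (1 - p_full_health) * u_death

def standard_gamble_utility(p_indifference):
    """At indifference, the current health state is valued equally to the gamble."""
    return gamble_expected_utility(p_indifference)

# Patient A is indifferent at 0.80 for full health and 0.20 for death.
u = standard_gamble_utility(0.80)
print(f"utility of current health state: {u:.2f}")
```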

Time trade-off

In the TTO, patient B with chronic depression would be asked to choose how many years of full health he would accept as a substitute for, say, 10 years of life in his current health state, followed by death. At first he may be offered 5 years. If he rejected this choice, he would then be offered 9 years. If he accepted this choice, he would be offered 6 years. The choice would continue back-and-forth like this until he indicated that the point of indifference was reached. For example, if the point of indifference was that 8 years of full health was the equivalent of 10 years with chronic depression, then the quality of life value for his current health state is simply 8/10 = 0.8.
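The back-and-forth procedure can be sketched as a search for the point of indifference. The sketch below substitutes a simple bisection for the interviewer's sequence of offers described above, and models the respondent as a hypothetical function that accepts any offer of at least 8 years of full health; both are assumptions made for illustration.

```python
def time_trade_off(accepts, years_in_state=10.0, tolerance=0.01):
    """Narrow the offers of full-health years until accept/reject bounds meet."""
    low, high = 0.0, years_in_state   # `low` is rejected, `high` is accepted
    while high - low > tolerance:
        offer = (low + high) / 2
        if accepts(offer):
            high = offer   # accepted: try offering fewer years
        else:
            low = offer    # rejected: offer more years
    indifference = (low + high) / 2
    # Utility = years of full health judged equivalent / years in current state.
    return indifference / years_in_state

# Hypothetical respondent whose true indifference point is 8 years.
utility = time_trade_off(lambda offer: offer >= 8.0)
print(f"TTO utility: {utility:.2f}")
```

In practice the offers would be put to a real respondent rather than a function, and the stopping rule would reflect how finely he or she can discriminate between offers.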

Person trade-off

Unlike SG or TTO, in PTO the respondent usually is not a patient, but a health-care worker or representative of the normal population. He or she is asked to choose between extending the life of (say) 100 healthy individuals for 1 year, and extending the life of N individuals who are in health state X (e.g. suffering severe chronic schizophrenia). The number of individuals (N) in health state X can be varied until the point of indifference is reached. The value of N at this point is used to derive the utility value.

The alternative to the composite health state scenario approach is the ‘multi-attribute utility’ (MAU) approach, where each aspect of health is asked about separately. Each question asked has several responses corresponding to different levels of health, each of which is weighted using one of the scaling methods above. The weighted responses are then combined to form the utility value. The key advantage of MAU instruments is that they permit the rapid and economical computation of standard utility scores across a multitude of health and illness conditions (they take 5–10 min for the respondent to complete).

One of the reasons why the three different valuation procedures (SG, TTO and PTO) give different evaluations is that they reflect different perspectives: SG is concerned with the individual's perspective where the results are uncertain; TTO is premised on the individual's valuations of a given health state, but where the outcomes are known; and PTO presents a societal perspective where the results are known.

For both composite and MAU approaches, but particularly for MAU instruments, there are key issues relating to (i) instrument coverage; (ii) the measurement of preferences; and (iii) the methods used to combine evaluations into single utility scores with the properties outlined above. The literature suggests that some utility instruments have poor coverage of health-related quality of life (HRQoL), that there are doubts concerning the validity of reported preference evaluation, that different instruments use different methods to obtain utility estimates and that these give different utility values for the same interventions [37–40]. These limitations suggest that utility measurement selection is critically important and should be undertaken carefully.

In addition to these measurement caveats, it is assumed that QALYs are unbiased indicators of preference. The literature on this point is equivocal at best. It is known that the validity of several leading utility instruments is questionable [37, 38]. There are also debates over whose perspective should be used for the value-weights; the evidence suggests that patients', proxies' and the general population's values differ considerably and give different QALYs for the same health outcomes. Generally population and proxy estimates for illnesses are lower (i.e. the illness is seen as less desirable) than those provided by patients who have experienced the illness. Whether this is due to fear or horror of the illness or to patients' adaptation to their circumstances is unclear [41]. Given that it is taxpayers who fund the health-care sector, it is usually argued that population values should be used [39].

Types of economic evaluation

Economic evaluation employs a family of evaluation techniques. Although the techniques share some elements, they measure outcomes differently with the consequence that they seek to answer different types of research and policy questions [2]. One feature of economic evaluation is that it always seeks to compare between programmes, or between a programme and the conventional treatment, that is, it is always searching for efficiency [42].

Cost-minimization analysis

This form of analysis considers only costs. It is therefore relevant only when the clinical effects of two alternative programmes are identical. If the programmes do have identical outputs in terms of health gain, then cost minimization is a simple analysis of the costs of the alternative programmes, where the preferred programme is that with the lowest cost, as shown by a recent exercise in cost minimization of care for schizophrenic patients living in the community.

Cost minimization: care for schizophrenic patients living in the community

Salize and Rossler [43] reported on the costs of maintaining comprehensive care for 66 patients with schizophrenia living in the community, and compared them with the costs of residential care. The study was conducted in Mannheim, Germany. Subjects were patients who had been discharged from either of the two Mannheim psychiatric hospitals, but who had had at least one inpatient episode within the previous 12 months.

To investigate actual costs, the researchers developed the Mannheim Service Recording Sheet (MSRS); all service interventions were recorded, including therapy, medication, supportive services, activities of daily living services, general consultations, advice on occupation, accommodation (including sheltered accommodation where required), crisis intervention and somatic treatment. Care needs were identified at the beginning and end of the study. Costs per service were computed for all services, including capital costs, staff costs, overheads and other costs associated with providing psychiatric services. Because a psychiatric perspective was adopted, the social costs of housing (when not sheltered care), informal caregiving and foregone employment were not included.

The average cost of community care was reported to be US$18 377 per case per annum, which was compared with the cost of care in a long-term state psychiatric hospital of US$43 000 per case. The cost of a permanently incarcerated patient was US$61 261 per annum. When the costs were broken down, 38% were due to sheltered accommodation, 38% to rehospitalization, 10% to outpatient care, 6% for medication, 5% for rehabilitative care and 2% for other. The authors reported that community care cost 43% of institutional care; and concluded that community care was the better choice.
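The comparison reported above reduces to a small calculation, sketched here using the two annual per-case costs quoted in the text. The dictionary keys are illustrative labels, and the sketch presumes, as cost minimization requires, that the outcomes of the two forms of care are equivalent.

```python
# Annual cost per case in US$, as quoted above.
annual_cost_per_case = {
    "community care": 18_377,
    "long-term hospital care": 43_000,
}

# Cost minimization: with equivalent outcomes, prefer the cheapest programme.
preferred = min(annual_cost_per_case, key=annual_cost_per_case.get)

ratio = (annual_cost_per_case["community care"]
         / annual_cost_per_case["long-term hospital care"])
print(f"preferred: {preferred}; community care costs {ratio:.0%} of hospital care")
```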

The caveats reported by the authors on the findings included that Mannheim was a special case because of the well-established specialized services available to support community care, that there was no improvement in outcomes at the end of the 12-month study period, and that indirect costs (e.g. family caregiving) were not assessed. In addition, it could also be argued that their method of establishing institutional costs may have resulted in artificial estimates since these were average costs across Germany; no consideration was given to the real costs associated with the psychiatric hospitals in Mannheim.

Cost-effectiveness analysis

The difficulty with cost minimization is that health outcomes usually vary between programmes. Where this is the case, cost-effectiveness analysis (CEA) is used to determine which programme is the better means of achieving desired health outcomes for particular patient groups. Cost-effectiveness analysis involves determining the cost per health outcome unit for the programme of interest and then comparing this with those from a different programme (e.g. a new drug therapy compared with the conventional therapy). That is, it is concerned with productive efficiency. An example is Davies and Drummond's evaluation of clozapine drug therapy for treatment-resistant schizophrenia. They compared the costs of therapy with the gains measured in years of life with no disability or only mild disability [44].
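
As a minimal sketch of the calculation described above, the cost per health outcome unit can be computed for each programme and the results compared. The programme names echo the example in the text, but the costs and outcome figures are hypothetical and chosen only for illustration; they are not taken from any study cited here.

```python
from dataclasses import dataclass


@dataclass
class Programme:
    name: str
    total_cost: float     # cost of delivering the programme
    outcome_units: float  # health outcome in natural units (e.g. life years gained)

    def cost_per_outcome(self) -> float:
        """Cost-effectiveness ratio: cost per unit of health outcome."""
        return self.total_cost / self.outcome_units


# Hypothetical figures, for illustration only.
conventional = Programme("conventional therapy", total_cost=200_000, outcome_units=40)
new_drug = Programme("new drug therapy", total_cost=270_000, outcome_units=60)

ratios = {p.name: p.cost_per_outcome() for p in (conventional, new_drug)}
better = min(ratios, key=ratios.get)  # programme with the lowest cost per outcome unit

for name, ratio in ratios.items():
    print(f"{name}: {ratio:,.0f} per outcome unit")
print(f"Lower cost per outcome unit: {better}")
```

In this invented case the new therapy costs more in total but less per unit of outcome, which is the kind of result a comparison of costs alone would miss.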

Subject to the caveats below, CEA is very flexible and robust, in that it can be applied across a wide variety of settings, evaluate a broad spectrum of health programmes and is relatively easy to undertake. For all these reasons CEA is the most widely used of the economic evaluation methods.

To overcome the difficulty of programme heterogeneity, outcomes are usually expressed in common units which are based on the natural health outcomes of programmes [45]. These are the improvements in health status arising from an intervention (e.g. restoration to full health following treatment for depression). These may be expressed, for example, as the cost per life year gained, or the cost per smoker who quits. Where these direct measures of health are not available, ‘surrogate performance indicators’ (indirect measures of the underlying health state) may be used. Examples of these indicators are the cost per case treated, or change scores on standard measures such as the Health of the Nation Outcome Scales (HoNOS) [46] or the Hamilton rating scale for depression [47]. Changes in QALYs can also be used as an indicator, although it has been argued that QALYs are not directly health-related in the way that measures used by clinicians are, such as the HoNOS. However, QALYs are derived from health-related quality of life instruments, usually where MAU models directly ask respondents to report on their health status. This captures the patient's health status perspective in a way that clinician-completed measures cannot. The two approaches (clinician-assessment and patient perspective) thus complement each other. In addition, the distinction between HRQoL and quality of life is important. The HRQoL only covers those areas of quality of life which are directly affected by health: personal care, social relationships, physical wellbeing and psychological state. Quality of life may embrace these and economic wellbeing, socioeconomic status, the environment and employment opportunities among other things. A critical issue which arises is that of interpretation of the findings. Because there is often no ‘gold standard’ for programme outcomes, interpretation of CEA may be quite subjective unless some benchmarks are available for comparison.

Cost-effectiveness: early psychosis intervention

This cost-effectiveness study was aimed at evaluating the Early Psychosis Prevention and Intervention Centre (EPPIC), Melbourne, Australia. EPPIC is a programme aimed at young people who are experiencing an emerging psychotic disorder. The programme activities included an assessment team, an inpatient unit, a case management team for outpatients, a day programme and several therapeutic subprogrammes. The aims of EPPIC are to reduce delays in initial treatment, to initiate treatment in a friendly manner and to provide phase-specific interventions to young people and their families.

In their evaluation, Mihalopoulos et al. [20] examined the cost-effectiveness ratios for 51 cases following their first year of treatment. In the absence of an adequate concurrent comparator group, comparisons were made with the treatment model offered to all psychiatric patients in the western half of Melbourne immediately prior to the establishment of EPPIC. The outcomes were the average cost per point improvement on the Quality of Life Scale (QLS) and Scale for the Assessment of Negative Symptoms (SANS), the cost of bed days, the number of outpatient services utilized as determined through completion of the Service Utilization Rating Scale, and the average cost of neuroleptic medication. Only direct costs were included in the study.

The findings were calculated on the probability of service use, based on the cases for which data were available. Given the uncertainties surrounding the costings, a sensitivity analysis was included in which costs and outcomes were varied by up to 50%. The findings showed that the average cost per pre-EPPIC patient was $24 074, whereas per EPPIC patient costs were $16 964. On this basis it was concluded EPPIC was more cost-effective than the pre-EPPIC service. This finding was due to the reduction in inpatient bed days: the costs of outpatient care were actually higher for EPPIC patients than for non-EPPIC patients ($5666 vs $2688 respectively), caused by a higher number of contacts under EPPIC. When the cost per point improvement on the QLS and SANS was calculated for EPPIC and pre-EPPIC the differences were startling: $1081 versus $12 671 for the SANS; and $380 versus $836 for the QLS, respectively. Given these large differences in cost per natural outcome unit, the advantage of EPPIC was maintained during the sensitivity analysis.
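
The size of these differences can be made explicit with a short calculation. The sketch below uses only the figures reported above; it does not reproduce the authors' sensitivity analysis, whose exact procedure is not described here, and the variable names are ours.

```python
# Average direct cost per patient, as reported in the text.
cost_pre_eppic = 24_074
cost_eppic = 16_964
saving_per_patient = cost_pre_eppic - cost_eppic

# Reported cost per point of improvement: (EPPIC, pre-EPPIC).
cost_per_point = {"SANS": (1_081, 12_671), "QLS": (380, 836)}

# How many times more the pre-EPPIC model cost per point of improvement.
relative_cost = {
    scale: pre / eppic for scale, (eppic, pre) in cost_per_point.items()
}

print(f"Saving per patient under EPPIC: ${saving_per_patient:,}")
for scale, factor in relative_cost.items():
    print(f"{scale}: pre-EPPIC cost per point was {factor:.1f} times the EPPIC cost")
```

On these figures the pre-EPPIC service cost roughly twice as much per QLS point and more than eleven times as much per SANS point.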

The limitations of the study included the use of historical controls which were matched with EPPIC cases. Although it was asserted by the researchers that ‘little else had changed in the social environment’ the period of the study was immediately prior to major psychiatric reform in Victoria and it is possible the results were due to ‘anticipatory’ changes as much as to EPPIC. A second caveat on the findings was that the evaluation covered the early developmental period of EPPIC. The Hawthorne effect may therefore have played an important part in the findings, through heightened staff and patient motivation. A final caveat was in relation to the imprecise estimate of the costs used in the study.

Cost-utility analysis

Essentially, cost-utility analysis (CUA) is an extension of CEA in which the health gains (outputs) are measured in QALYs or DALYs. It is the convention that both natural units (morbidity for example) and utility values should be collected and presented in the evaluation.

Cost-utility analysis assumes that QALYs or DALYs provide a more complete picture of the benefits accruing from a programme as they incorporate the preferences for health states rather than just clinical outcomes. As such it is often claimed that CUA provides the necessary data for allocative efficiency evaluations; if the assumption is made that all else is equal, the preferred health programme is that with the lowest cost per QALY or, with fixed health resources, where there is the greatest gain in the number of QALYs per cost.

Given that CUA depends upon utility measurement, the critical issues in CUA are all related to either utility measurement (discussed above) or to the perspective from which the evaluation is undertaken. Regarding the evaluation perspective, if the health budget is fixed (i.e. a political or social decision has been reached on the proportion of GDP to be allocated to health care), then CUA may be an appropriate method of allocating resources between different programmes such that funds are directed to those programmes producing the most QALYs. As such, it has a role to play in helping to inform resource allocation decisions through making available to decision makers information about the value of programmes.
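
A minimal sketch of this allocation rule under a fixed budget follows. The programmes, costs and QALY gains are hypothetical, and the function `allocate_budget` is our own illustration of ranking by cost per QALY, not a method taken from the literature cited here. It treats programmes as indivisible, which is itself a simplifying assumption.

```python
def allocate_budget(programmes, budget):
    """Fund programmes in order of increasing cost per QALY until the budget runs out.

    programmes: list of (name, total_cost, qalys_gained) tuples.
    Returns (list of funded names, total QALYs gained). Programmes are treated
    as indivisible: one that does not fit in the remaining budget is skipped.
    """
    ranked = sorted(programmes, key=lambda p: p[1] / p[2])
    funded, qalys, remaining = [], 0.0, budget
    for name, cost, gain in ranked:
        if cost <= remaining:
            funded.append(name)
            qalys += gain
            remaining -= cost
    return funded, qalys


# Hypothetical programmes, for illustration only.
programmes = [
    ("programme C", 80_000, 10),   # 8000 per QALY
    ("programme A", 100_000, 50),  # 2000 per QALY
    ("programme B", 150_000, 30),  # 5000 per QALY
]
funded, total_qalys = allocate_budget(programmes, budget=250_000)
print(funded, total_qalys)
```

With a budget of 250 000 the two programmes with the lowest cost per QALY are funded and the third is not. The rule is only as sound as the utility measurement behind the QALY estimates.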

Given these caveats, many questions remain unanswered at this time regarding the validity of utility measurement and consequently CUA. It would appear that the role of CUA is not sufficiently well defined at this point of time for it to be uncritically accepted. However, this assessment must be balanced by recognition that cost per QALY/DALY is the only evidence available that, for a given disease, a treatment is cost effective when compared with other diseases and treatments; and research to date suggests that cost-effectiveness ratios vary by orders of magnitude. Thus the fact that there are problems with CUA should not preclude its use as it is the type of economic analysis which is most informative to policy makers.

The World Bank's World development report 1993: investing in health raised interest in the cost-effectiveness of health systems [48]. However, the methodology for examining the cost-effectiveness of a large number of interventions at once is relatively new; only a handful of studies have been conducted and there are no published academic studies in psychiatry. We describe here an early example from Mauritius [49, 50].

Using the Disability Adjusted Life Year to estimate programme effectiveness in mental health

In 1994, the Government of Mauritius embarked upon a health systems reform programme. A national burden of disease study and subsequently a cost-effectiveness study were undertaken to inform the reform process. Both studies used the DALY as a measure of health outcome [49, 50].

Based on the results of the burden of disease study and discussions with the Ministry of Health and the Ministry of Economic Planning and Development, 12 interventions and 24 potential new interventions were selected for cost-effectiveness analyses. A number of existing and prospective interventions for schizophrenia were included.

The existing interventions for people with schizophrenia consisted of intermittent admissions of short duration (2 to 4 weeks) for neuroleptic treatment and, in some cases, electroconvulsive therapy. A smaller number of people with schizophrenia were admitted long-term. After admission, patients were advised to come for follow-up outpatient visits to the central psychiatric hospital; a high proportion failed to attend. Two alternative interventions were analysed. Both involved the deployment of community-based psychiatric teams to provide simple counselling and long-term treatment with neuroleptics and short-term hospitalization for acute exacerbations. The only difference between the two new intervention options was the use of typical or new neuroleptics. The community-based psychiatric teams could be established rather easily as there were already a number of experienced, UK-trained community psychiatric nurses employed as ward sisters.

The current treatment strategy of intermittent short-term admissions and centralized follow up for schizophrenia was estimated at 174 000 Mauritian rupees (MUR) per DALY. Long-term hospitalization was estimated to cost MUR4 500 000 per DALY averted. Electroconvulsive therapy was considered an ineffective treatment for almost all cases of schizophrenia and only one DALY was estimated as the potential benefit at a cost of MUR3 000 000. The alternative interventions of community psychiatric teams providing long-term treatment with typical and newer-generation neuroleptics were estimated to cost MUR35 000 and MUR34 000 per DALY averted respectively. The latter cost-effectiveness ratio was slightly more favourable despite the higher cost of the drugs because a greater benefit was assumed through greater effectiveness and fewer side-effects.

To put these findings into perspective, the cost-effectiveness ratios for a selected group of other interventions analysed were: (i) the existing medical management of angina pectoris at MUR78 000 per DALY averted; (ii) expanded access to overseas invasive cardiac services at MUR420 000 per DALY; (iii) periodic mass screening combined with tight control of patients (willing to comply) at non-communicable disease clinics for diabetes and hypertension at MUR1 500 000 per DALY; (iv) establishment of a neonatal intensive care unit at MUR22 600 per DALY; and (v) improved antenatal and obstetrical services at MUR171 000 per DALY.
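
One way to read these figures together is to rank them in a simple league table, from the lowest to the highest cost per DALY averted. The sketch below uses only the ratios reported above; the two community psychiatric team options are labelled by the size of their ratio rather than by drug type, and the dictionary keys are our own shorthand.

```python
# Cost per DALY averted in Mauritian rupees (MUR), as reported in the text.
cost_per_daly = {
    "community psychiatric teams (lower of the two reported ratios)": 34_000,
    "community psychiatric teams (higher of the two reported ratios)": 35_000,
    "current short-term admissions with centralized follow up": 174_000,
    "long-term hospitalization": 4_500_000,
    "medical management of angina pectoris": 78_000,
    "overseas invasive cardiac services": 420_000,
    "screening and tight control of diabetes and hypertension": 1_500_000,
    "neonatal intensive care unit": 22_600,
    "improved antenatal and obstetrical services": 171_000,
}

# League table: most favourable (lowest cost per DALY averted) first.
league_table = sorted(cost_per_daly.items(), key=lambda item: item[1])

for name, ratio in league_table:
    print(f"MUR {ratio:>9,} per DALY  {name}")
```

Ranked this way, the community psychiatric team options sit near the top of the table, just behind the neonatal intensive care unit, while long-term hospitalization sits at the bottom.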

Cost–benefit analysis

Cost–benefit analysis (CBA) attempts to put monetary values on costs and benefits in relation to valuing a programme; and it has the potential to compare this with all alternative uses of the resources whether these alternatives are within the health-care sector or not.

The critical issue that CBA seeks to address is to determine the ‘worth’ of a programme when compared with other programmes through the comparison of costs with benefits. Where benefits exceed costs, programmes may be preferred. Once this is done, due to the use of a common metric (money), it is assumed that direct comparison can be made across all interventions with the implication that the most efficient (as determined by the greatest social benefit per cost) are the most desirable.

Issues which are particularly important in CBA are the problems of being able to (i) measure all costs and benefits (notoriously difficult to do); (ii) reduce these to a monetary indicator; and (iii) adjust the costs and benefits by discounting future benefits (usually done at 3–5% per annum). Most generally, in trying to come to grips with these issues, CBA has usually been conducted from the human capital (i.e. the cost of labour) perspective. This perspective, however, has been criticized on equity grounds because it assigns a high value to those in the workforce since it is these people who are ‘productive’ in the market paradigm. Thus the elderly, those out of the paid workforce (e.g. volunteers, homemakers), the unemployed and those from low socioeconomic backgrounds are systematically discriminated against [51], as may be those suffering mental illness.
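
The discounting step in (iii) can be sketched as follows. This is the standard present-value calculation with a constant annual rate; the benefit stream is hypothetical, and the convention that the first year is undiscounted is an assumption that varies between studies.

```python
def present_value(amounts, rate):
    """Discount a stream of yearly amounts to its present value.

    amounts[t] is the amount arising in year t, where t = 0 is the present
    and is left undiscounted (an assumed convention).
    """
    return sum(amount / (1 + rate) ** t for t, amount in enumerate(amounts))


# Hypothetical benefit of 1000 monetary units a year for five years.
benefits = [1_000] * 5

for rate in (0.03, 0.05):
    print(f"Discount rate {rate:.0%}: present value {present_value(benefits, rate):,.0f}")
```

The higher the discount rate, the smaller the present value of benefits that arrive in the future, so the choice of rate within the usual 3–5% range can affect whether a programme's benefits appear to exceed its costs.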

Cost–benefit analysis: general practitioner training in depression

Rutz et al. [52] examined the effectiveness of a GP training programme in the diagnosis and treatment of depression. Ninety per cent of GPs on the Swedish island of Gotland participated in the programme in 1983, which consisted of instruction in the aetiology of depression and long-term and prophylactic treatments for depression in the elderly. The training incorporated videotaped case studies. Twelve months later the second part of the programme was given; this time the focus was on children and adolescents.

The effectiveness of the programme was evaluated in two studies. An impact evaluation compared baseline data (1982) with data collected in the 12 months following programme completion (1985 data); the data were sick leave due to depressive symptoms, emergency case patterns, utilization of psychiatric care, prescription of pharmaceuticals for depression, suicide rate and suicidal contacts with psychiatric and primary care. A summative evaluation was conducted between 1986 and 1988, using suicide, inpatient care and depression-related pharmaceutical prescriptions. The data were contrasted with previous trends on the island and with trends across Sweden generally. The findings were that there was a reduction in suicides, the sick-leave rate was reduced, institutionalization declined and the prescription pattern of pharmaceuticals changed, including a reduction in the consumption of sedatives.

The intervention costs included all educational material preparation, programme delivery costs, the loss of ordinary employment for the teachers and participating GPs, accommodation costs for the duration of the training and the total expenses incurred by the programme organizers (the Swedish Committee for the Prevention and Treatment of Depression). Pharmaceutical cost increases since 1982 were estimated on the assumption that the 1982 prescription level would have continued in the absence of the programme. These estimates were then compared with actual antidepressants prescribed during the follow-up period. Average costs per defined daily dosage were obtained for the three most commonly prescribed types of drug (antidepressants, sedatives and tranquillisers). Inpatient care costs were also estimated, using the average costs per day at the Department of Psychiatry at Visby (the largest town on Gotland). The same procedure as for the pharmaceuticals was used with respect to the baseline and follow-up periods. Sick-leave costs were calculated based on the sick-leave benefit paid by the National Insurance Office. Regarding suicide costs, police suicide records were used as the indicator, and the value of the number of years of life saved was estimated using the statistical model espoused by the Swedish National Road Safety Office.

The findings were that the programme cost 369 000 Swedish crowns (SEK); there was an increase in antidepressant costs, but a reduction in tranquillisers and sedatives leading to a net gain of SEK227 000. There was a 51% reduction in psychiatric admissions causing benefits of SEK11 250 000. Sick-leave days declined by 19%, providing a benefit of SEK3 400 000. Regarding suicides, there was a decline of 49% during the study period, leading to benefits of SEK140 600 000.

The programme benefits were estimated to be SEK155 000 000, giving rise to a net benefit of SEK154 631 000. A sensitivity analysis was conducted by varying the value of life; instead of using the road safety estimates, estimates provided by the National Board of Health and Welfare were used. The authors also varied suicides; instead of police records they estimated the costs using the Swedish National Statistics Office's suicide reports.
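
The net benefit calculation can be retraced from the figures reported above. In this sketch the pharmaceutical net gain is treated as a benefit, which is our reading of the text, and the variable names are ours.

```python
# All amounts in Swedish crowns (SEK), as reported in the text.
programme_cost = 369_000

benefit_components = {
    "net pharmaceutical gain": 227_000,
    "reduced psychiatric admissions": 11_250_000,
    "reduced sick leave": 3_400_000,
    "reduced suicides": 140_600_000,
}

# The itemized benefits sum to slightly more than the reported total,
# which appears to have been rounded to SEK155 000 000.
itemized_benefits = sum(benefit_components.values())
reported_benefits = 155_000_000

net_benefit = reported_benefits - programme_cost
benefit_cost_ratio = reported_benefits / programme_cost

print(f"Itemized benefits: SEK {itemized_benefits:,}")
print(f"Net benefit:       SEK {net_benefit:,}")
print(f"Benefits per SEK of programme cost: {benefit_cost_ratio:.0f}")
```

The result matches the reported net benefit of SEK154 631 000. Because about 90% of the itemized benefit comes from the valuation of suicides averted, the findings depend heavily on the value-of-life estimate, which is what the sensitivity analysis varied.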

The first caveat on this study was that the calculations were based on rough estimates and the assumptions outlined above. A second was the assumption that the estimates for the baseline year (1982) would have held true for the following extrapolated years (i.e. the assumption was made of steady state indicators, such as that depressed persons did not recover). A third caveat is that no effort was made to measure the intangible effects of the programme (improved quality of life, leisure time, etc.).

On conducting economic evaluation in the mental health care sector

In 1992 Drummond estimated that 25% of all healthcare programmes had been assessed in a formal evaluation [53]. When this low level of evaluation activity is placed in the context of ever-increasing demand for health care and arguments over resource constraint, it is obvious that evidence-based medicine will continue to become more important. Clinicians of all types will be asked to evaluate their programmes and those evaluations will be increasingly taken into account by healthcare planners when determining which programmes to prioritize. That mental health care programmes will be part of this process of scrutiny and prioritization is highly likely given the increasing number of people with mental illness living in the community.

Under these circumstances, psychiatrists will increasingly be requested to contribute knowledge about health care in the psychiatric field: they will be asked to justify their health care programmes at a time when the demand for their services will be increasing. It is therefore important that they not only have an understanding of economic evaluation techniques, but that they also understand the requirements of economic evaluation so that they are better able to work with health economists.

The following checklist, based upon that designed by Harris et al. [54] for use in the pharmaceutical industry, may help alert psychiatrists to these issues. When undertaking an economic evaluation, the following critical questions should be considered.

When should an economic evaluation be included?

Generally, economic evaluations should be included alongside all clinical trials in the interests of providing economic as well as clinical evidence about the intervention.

Where economic evaluation is undertaken, the best conditions are those relating to ‘usual’ clinical practice, as these provide realistic estimates of costs and benefits. The reason why this is preferred is that the more realistic the estimates, the greater their use in informing policy decisions.

What is the purpose of the evaluation?

This is the most fundamental question of all. The answer will determine what kind of an evaluation is undertaken. For example, where societal perspectives are adopted, a greater range of information about costs and benefits will need to be collected. There are clear implications for the complexity and cost of the economic evaluation. Given that most evaluations only make marginal differences to programmes (i.e. there is political accommodation: decision-makers take a range of considerations into account when determining the future of any particular programme, and changes are usually at the periphery of programmes [55]), Campbell argued that full-blown experimental evaluations (which are very costly to carry out) should be reserved for ‘proud programmes’: those programmes which were extremely important [56]. The question regarding the purpose of evaluation also involves consideration of several subsidiary issues.

1. The nature of the problem being considered. Different programmes operate at different levels of development, they have different target audiences, different activities and operate in different settings. All these affect how an evaluation would be designed and carried out.

2. Whether the programme is new or well-established. Where a new programme is being proposed the evaluation may involve a modelling exercise to assess estimated costs and outcomes; that is, the model will use a hypothetical estimate of expected programme costs and benefits based on previously established data published in the literature or from another programme. The evaluation activities will be very different to those involved in evaluating a mature, well-established programme (which may have never been evaluated previously).

3. The existence of previous evaluations. Where previous evaluations are available, they may provide information directly relevant to the conduct of the evaluation of the programme of interest. Usually, previous studies have not included an economic evaluation (since they are comparatively rare in the psychiatric literature). Where this is the case it may be possible to conduct a partial evaluation where economic data are synthesized with existing results.

Is there a comparator programme?

For most existing programmes there are comparator programmes; for example, there are alternative pharmaceuticals available for treating psychosis. When economic evaluation takes place it is important the evaluand (the programme or new proposed programme) is compared with the existing programme most likely to be replaced. Where several alternative programmes exist, the appropriate comparator is the most widely used programme. Where there is no comparator programme, a pre–post design may be used. It has also been argued that for sector-wide economic evaluations the null option (doing nothing; the absence of a programme) can be used as a comparator [57]. Although it is often argued that a mirror design can be used to overcome the lack of a contemporary comparator, this may provide misleading results due to historical changes occurring outside the treatment regime [8].

What type of economic evaluation is most appropriate?

The type of economic evaluation which is most appropriate will, of course, depend on the question to be asked. Most clinical studies are structured around clinical needs and criteria; economic evaluations are usually conducted alongside the clinical trial. This has important ramifications affecting the type of economic evaluation which can be undertaken. One of the difficulties is that when economic evaluations are included after protocol design, the options for the economic evaluation are constrained; it is better for economic evaluation to be planned for as part of the clinical trial protocol.

What costs and outcomes should be included and how should they be measured?

Generally outcomes should be measured at the three levels already described: clinical outcomes, changes in health status and quality of life measures. Which of these should have priority in any particular study depends on the researchers and the circumstances under which they are working. In practice, costs should include those associated with all major events (regardless of their specific cause) that can reasonably be attributed to either the illness of interest or to the programme [58].

Conclusion

Across all developed countries there is mounting pressure on mental health care systems: new interventions which allow more effective treatment increase ‘need’ for the treatment. If resources are held constant or increase more slowly than the rate of mental health-care innovation, this inevitably leads to a widening gap between ‘total need’ (the burden of ill health) and ‘met need’. Under this scenario the ability of health-care systems to ‘meet all needs’ will be increasingly compromised [59].

Governments have responded to this crisis by pursuing the goal of efficiency in health-care service provision. As shown above there are various definitions of efficiency, and the most comprehensive include not only cost information, but cost per outcome and cost per outcome value. In a climate where health-care services are being openly rationed, the use of economic evaluation to provide critical information about these matters will increase. Increases in demand for psychiatric services will ensure that psychiatrists are held accountable for their programmes; they will be expected to undertake comprehensive evaluations, including economic evaluation, so that their programmes can be compared with other health-care interventions.

Given this, it is essential that psychiatrists educate themselves as to the types, function, activities and issues surrounding economic evaluation. It is only from this informed base that psychiatrists will be able to use economic evaluation to further their health-care programmes.

The evidence suggests that to date there have been few good economic evaluations of psychiatric services, although given current levels of activity this situation is changing. Where evaluations have been undertaken, it has been reported that most were methodologically poor. This has been blamed on measurement difficulties, poor understanding of economic evaluation, a failure to work closely with health economists, and a failure to adhere to the basic principles of economic evaluation [9, 10].

This paper has sought to remind psychiatrists of those basic principles. If these principles are applied, the position described above can be turned around so that economic evaluation is used as a tool for psychiatry, rather than a master, in providing the evidence necessary to assist with determining health-care priorities.

Acknowledgements

We thank Cathy Mihalopoulos for her comments on the manuscript. Graeme Hawthorne's position is funded by the Victorian Consortium for Public Health.