Abstract

A not uncommon methodology in psychiatric research has been the retrospective assignment of diagnoses from case notes and other sources, often buttressed by statements that such methodologies provide a valid and reliable source of classification. This has been an attractive and potentially cost-effective approach which avoids the time and expense associated with case-finding and prospective psychopathological assessment. It can also be used with pre-existing data sets complemented by case records to assign diagnoses according to more modern diagnostic criteria. However, given the potential problems associated with rating psychopathology and assigning valid psychiatric diagnoses [1–4], a number of conditions need to be satisfied before accepting the validity of such methods, particularly when they are used in studies aiming to clarify the aetiology of, and risk factors for, specific disorders such as schizophrenia.

The first clear method for making the most of incomplete or patchy pre-existing clinical material was provided by the development of the PSE syndrome checklist as one of the elements of the 9th edition of the Present State Examination (PSE) [5]. This was a positive initial step, developed for pragmatic reasons, but it was complicated both by the idiosyncrasy of the data reduction rules underpinning the PSE and by the lack of careful examination of its validity as a method. More recently, a more systematic and soundly based clinical tool known as the Operational Criteria Checklist (OPCRIT) for psychotic illness has been developed [6] and used in a series of clinical and biological studies [7–11]. This instrument represents an attempt to develop a standardised polydiagnostic approach to existing clinical data sets. The authors sought to combine the ‘top-down’ approach, the major aim of which is to produce operational diagnoses, with the ‘bottom-up’ method, which involves a careful definition of a range of clinical phenomena. It has demonstrated good inter-rater reliability, particularly at the full diagnostic level. Earlier checklists used by the authors on data from the Maudsley Schizophrenia Twin Series [12] produced polydiagnostic assignments which manifested different levels of heritability [13,14], hence providing some evidence of the external validity of the criteria sets and, in the process, of the method itself.

A recent study by Craddock et al. [15] investigated the concurrent validity of OPCRIT with consensus best-estimate lifetime diagnoses and found good to excellent concordance. However, there were a number of serious methodological flaws associated with this study. First, the raters who rated the OPCRIT checklists also derived the consensus diagnosis and this may have influenced the diagnoses arising from the consensus procedure. Second, the study only compared OPCRIT to one other diagnostic procedure (the consensus, best-estimate lifetime diagnosis). The consensus procedure involved the two raters discussing available information, notably case note information, and then agreeing on a diagnosis. The conclusion that OPCRIT has good concurrent validity compared to other diagnostic procedures is thus somewhat premature, as it remains unknown how concordant OPCRIT is with interview-based methods of diagnostic assignment.

McGorry et al. [16] recently showed that alternative procedures to assign common sets of operational criteria have only moderate concordance. Hence it may be argued that the conclusions drawn from any particular study requiring rigorous diagnostic classification are critically dependent on the validity of the procedure employed. Unfortunately, this important limitation has been consistently overlooked by developers of new diagnostic procedures as well as the researchers using these procedures. The impact of diagnostic misclassification can be significant and may constitute a possible obstacle to progress in aetiological research [17].

The term ‘procedural validity’ was originally coined by Spitzer and Williams [18] and refers to the extent to which new diagnostic procedures yield results similar to existing diagnostic procedures. Failure to assess procedural validity is an important latent source of classification error, with a definite contribution to misclassification rates. Although the term ‘procedural validity’ assumes that some procedures are more valid than others, it remains unclear what criteria should be used to determine this.

The present study is an attempt to examine the procedural validity of the most rigorous retrospective case note diagnostic method, the OPCRIT system, by separately rating the case notes and clinical abstracts of 50 patients admitted to the Early Psychosis Prevention and Intervention Centre (EPPIC) (Melbourne, Australia) who participated in the DSM-IV field trial for psychotic disorders. A previous study by McGorry et al. [16] examined the procedural validity of four methods of assigning a DSM-III-R diagnosis and the current study compares the OPCRIT method of assigning diagnoses with these methods. The original study examined the concordance of 2 validity-oriented interview-based methods of assigning diagnoses, with kappa values ranging from 0.53 to 0.67 [16]. The problem of misclassification, even with kappa values which were moderate to good, was highlighted. As a secondary focus, ‘historical’ diagnoses assigned by both OPCRIT and the Royal Park Multidiagnostic Instrument for Psychosis (RPMIP) are compared. It is hypothesised that the OPCRIT method of retrospective assignment of diagnoses using files or abstracts will show even more pairwise divergence than the other paired diagnostic procedures, and hence be found to seriously lack validity.

Method

The case notes and clinical abstracts of 50 first-episode patients consecutively admitted to EPPIC were requested. These 50 people were the same people who had also participated in the procedural validity study of the DSM-IV field trial instrument [19], the RPMIP [2,3], the Munich Diagnostic Checklists [20,21] and a consensus diagnostic procedure [16]. In that study, 50 consecutively admitted patients with first-episode psychotic illness treated at the EPPIC [22], a specialist first-episode psychosis program which has a catchment area of 800 000 people, were recruited during 1992. The mean age for the study group was 26.3 years (SD = 6.8, range = 18–45). There were more men (n = 31, 62%) than women (n = 19, 38%), the majority (n = 42, 84%) had never married, and 56% (n = 28) were unemployed at the time of index assessment. Mean number of years of education was 11.1 (SD = 2.2). Organic aetiology and mental retardation were exclusion criteria. Written, informed consent was obtained from all subjects. The study group approximated an incidence sample of first-episode psychosis for a defined area of Melbourne. The study formed part of the multicentre DSM-IV Field Trial for Schizophrenia and Related Psychotic Disorders [19] that examined the reliability and concordance of three sets of options for diagnosing DSM-IV psychotic disorders plus the criteria from DSM-III, DSM-III-R, and ICD-10. Forty-six abstracts and 45 sets of case notes were collected for the present study. The remaining abstracts and case notes were not located during the study period. An attrition rate of approximately 10% of case notes is comparable to other studies of this nature [6].

OPCRIT is described in detail by McGuffin et al. [6], but is essentially a checklist built up of operational criteria defined by a comprehensive glossary. The items are mainly psychopathology ratings with some historical and course ratings. A computer algorithm which assigns diagnostic criteria is then applied to all ratings. There are relatively few complex criteria, which is somewhat surprising, given the importance of rating the sequence and prominence of symptoms for accurate diagnostic assignment in modern diagnostic systems. OPCRIT is reported to be reliable when used with case notes, clinical abstracts, and even correspondence between clinicians. Hence, it is implied that it can be used as a stand-alone procedure though it is also characterised as an ‘accessory’ or a complementary tool [6].

Three raters (CM, CMcF and SR) shared the task of rating the case notes and clinical abstracts of the study sample. Before the study began, the raters achieved a baseline level of rater concordance by rating and subsequently discussing the case notes and abstracts of four patients who were not part of the study sample. The rating of the case notes and abstracts of the study sample occurred in two stages. Stage 1 consisted of clinical abstract ratings alone. Twenty randomly selected abstracts were rated by all three raters for the purpose of measuring inter-rater reliability. The remaining 26 abstracts were then distributed approximately equally among the raters. Each rater therefore rated approximately 30 abstracts.

In stage 2, each rater rated approximately 15 sets of case notes. To maintain independence of ratings, the case note and abstract diagnostic ratings for each subject used in the final pairwise comparisons were never completed by the same rater. The method used in the four other diagnostic procedures is described in McGorry et al. [16].

Results

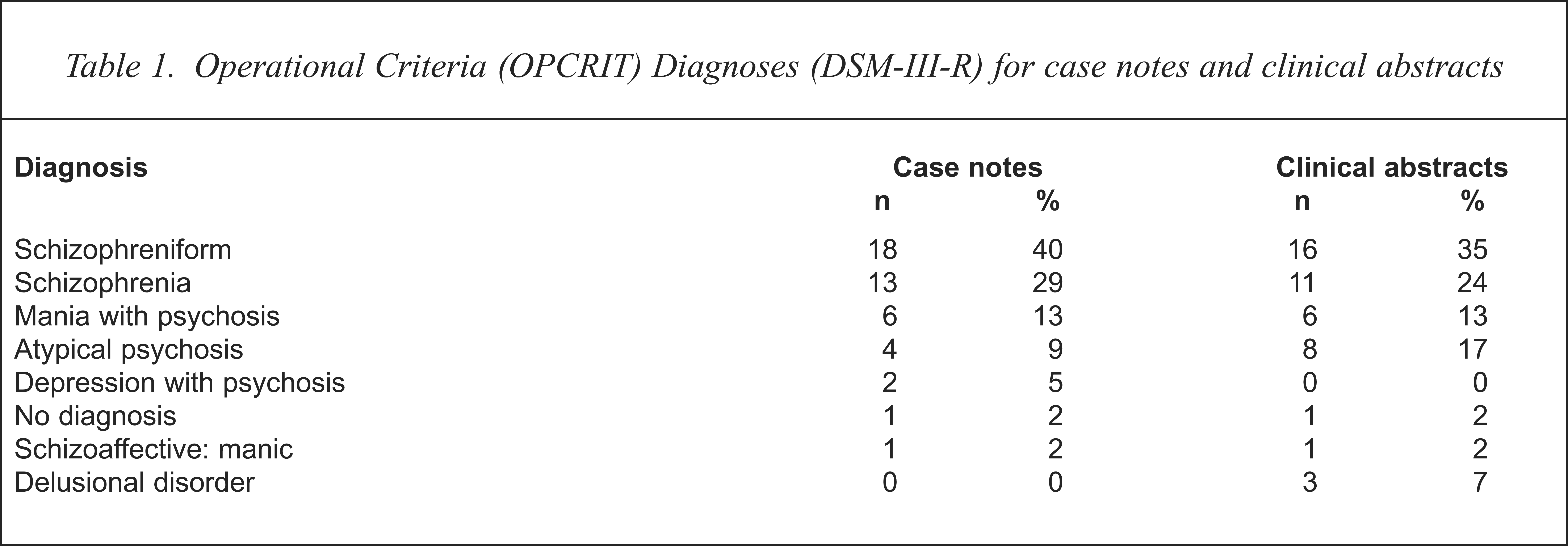

The OPCRIT DSM-III-R diagnostic profile of the study sample is shown in Table 1.

Operational Criteria (OPCRIT) Diagnoses (DSM-III-R) for case notes and clinical abstracts

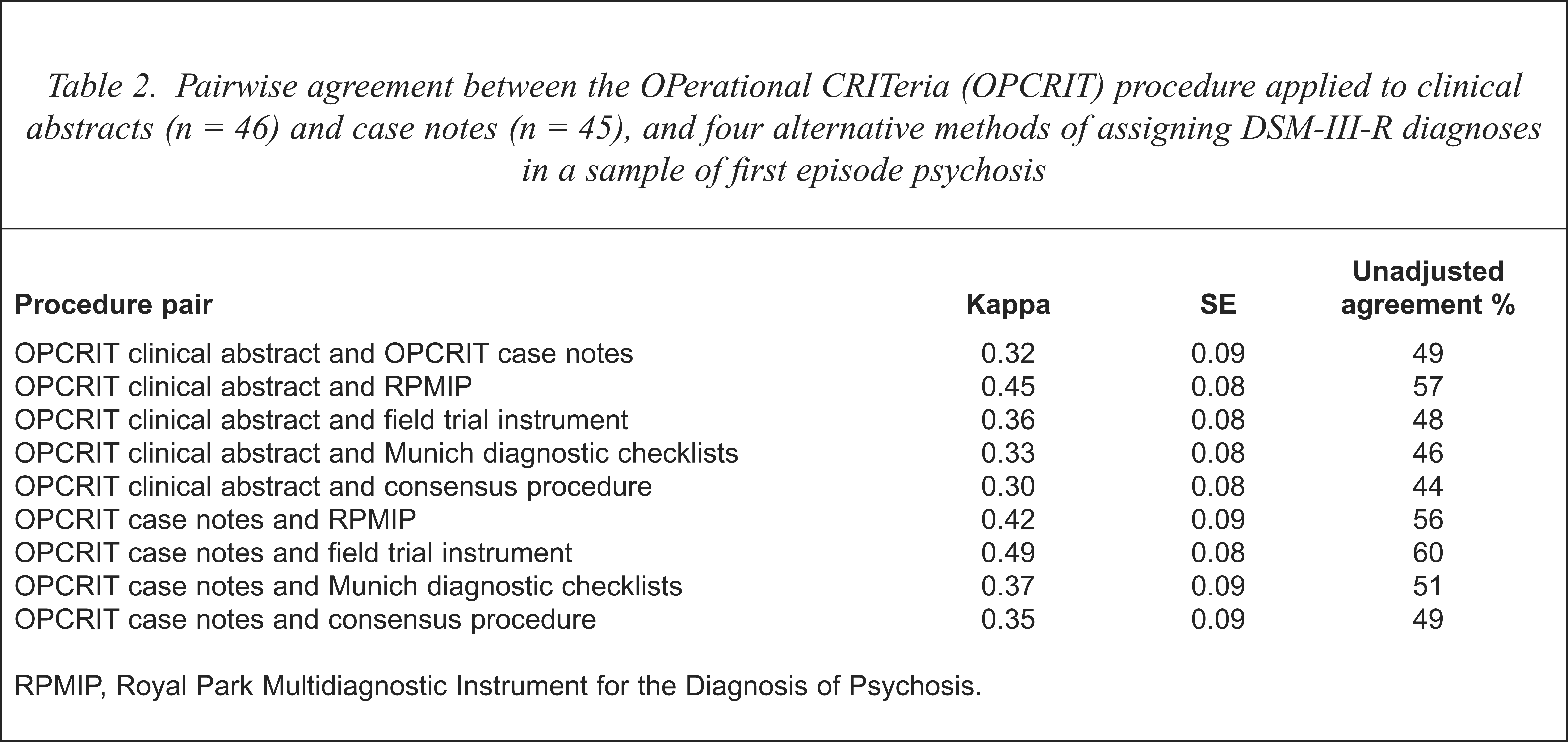

Inter-rater reliabilities for item-by-item agreement between the three raters were assessed and found to be satisfactory (median κ = 0.84, semi-IQR = 0.19). Levels of agreement between the two data sources for assigning an OPCRIT diagnosis (clinical abstract and case notes), together with pairwise comparisons of the OPCRIT case note and clinical abstract derived diagnoses with each of the four DSM-III-R diagnostic procedures used in McGorry et al. [16], are shown in Table 2.
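The summary statistics quoted for item-level agreement (a median kappa and a semi-interquartile range) can be illustrated with a minimal sketch. The kappa values below are hypothetical and are not the study data, and the quartile convention (Python's "inclusive" method) is an assumption, since the convention used in the study is not stated.

```python
from statistics import median, quantiles


def median_and_semi_iqr(kappas):
    """Return (median, semi-interquartile range) for a list of kappa values.

    The semi-IQR is half the distance between the first and third quartiles.
    Quartiles are computed with the 'inclusive' method, which is an assumed
    convention; other conventions give slightly different results.
    """
    if len(kappas) < 2:
        raise ValueError("need at least two kappa values")
    q1, _, q3 = quantiles(kappas, n=4, method="inclusive")
    return median(kappas), (q3 - q1) / 2


# Hypothetical item-level kappa values, for illustration only.
item_kappas = [0.5, 0.6, 0.7, 0.8, 0.9]
med, semi_iqr = median_and_semi_iqr(item_kappas)
print(f"median kappa = {med:.2f}, semi-IQR = {semi_iqr:.2f}")
```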

Pairwise agreement between the OPerational CRITeria (OPCRIT) procedure applied to clinical abstracts (n = 46) and case notes (n = 45), and four alternative methods of assigning DSM-III-R diagnoses in a sample of first episode psychosis

RPMIP, Royal Park Multidiagnostic Instrument for the Diagnosis of Psychosis.

Pairwise comparison of OPCRIT and the four diagnostic procedures produced an 8 × 9 matrix for each pair of comparisons. Cohen's unweighted nominal kappa and associated standard errors [23] are presented as the index of agreement for the eight pairs of comparisons, together with the per cent agreement for each pair.
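For readers less familiar with the agreement index, the following is a minimal sketch of Cohen's unweighted kappa and raw per cent agreement for two procedures rating the same cases. The diagnostic labels and ratings are hypothetical, and the standard error uses Cohen's simple large-sample approximation, which is an assumption and may differ from the formula in reference [23].

```python
from collections import Counter
from math import sqrt


def cohen_kappa(ratings_a, ratings_b):
    """Return (kappa, approximate SE, observed agreement) for two raters.

    ratings_a and ratings_b are equal-length sequences of nominal labels,
    one label per case.
    """
    if len(ratings_a) != len(ratings_b) or len(ratings_a) == 0:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(ratings_a)

    # Observed proportion of cases given the same label by both procedures.
    p_o = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n

    # Agreement expected by chance, from each procedure's marginal totals.
    count_a = Counter(ratings_a)
    count_b = Counter(ratings_b)
    p_e = sum(count_a[label] * count_b[label] for label in count_a) / n**2
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")

    kappa = (p_o - p_e) / (1 - p_e)
    # Assumed approximation: SE = sqrt(p_o(1 - p_o) / (n(1 - p_e)^2)).
    se = sqrt(p_o * (1 - p_o) / (n * (1 - p_e) ** 2))
    return kappa, se, p_o


# Hypothetical diagnoses for ten cases (not the study data).
method_1 = ["SZ", "SZ", "SZ", "BP", "BP", "NOS", "NOS", "SZ", "BP", "NOS"]
method_2 = ["SZ", "SZ", "BP", "BP", "BP", "NOS", "SZ", "SZ", "NOS", "NOS"]
kappa, se, agreement = cohen_kappa(method_1, method_2)
print(f"kappa = {kappa:.2f}, SE = {se:.2f}, agreement = {agreement:.0%}")
```

Because kappa discounts the agreement expected by chance, it is always lower than the raw per cent agreement unless agreement is perfect, which is why both figures are reported side by side.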

Pairwise kappa values as well as per cent agreement between OPCRIT and each of the four comparison diagnostic procedures ranged from poor to moderate. Full diagnostic concordance for DSM-III-R diagnosis between the OPCRIT case note procedure and all of the other four procedures occurred in only 35.6% of cases and full diagnostic concordance between the OPCRIT clinical abstract procedure and all of the other four procedures occurred in only 32.6% of cases. By contrast, the pairwise comparisons in our initial study [16] were considerably better than the current results. Pairwise kappa values obtained in the original study ranged from 0.53 to 0.67, unadjusted agreement values between the pairs of procedures ranged from 66% to 76% and full diagnostic concordance between the four procedures occurred in 54% of the cases. The level of concordance between the two sets of OPCRIT ratings applied to the case notes and the clinical abstracts was also poor to moderate. However, neither data source was associated with better concordance when OPCRIT was compared with the other diagnostic procedures.
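Full diagnostic concordance, as used above, is the proportion of cases for which every procedure assigns the same diagnosis. The sketch below shows one way to compute it; the procedure names and diagnoses are hypothetical. If the reported percentages were calculated over all collected cases, 35.6% and 32.6% would correspond to 16 of 45 and 15 of 46 cases respectively, though the underlying counts are not given in the text.

```python
def full_concordance(diagnoses_by_procedure):
    """Return the fraction of cases on which all procedures agree.

    diagnoses_by_procedure maps a procedure name to a list of diagnoses,
    with every list ordered by the same case sequence.
    """
    columns = list(diagnoses_by_procedure.values())
    if not columns or not columns[0]:
        raise ValueError("at least one procedure with one case is required")
    n_cases = len(columns[0])
    if any(len(column) != n_cases for column in columns):
        raise ValueError("all procedures must rate the same number of cases")

    # A case is fully concordant when its set of diagnoses has one member.
    concordant = sum(len(set(case)) == 1 for case in zip(*columns))
    return concordant / n_cases


# Hypothetical diagnoses for four cases under three procedures.
example = {
    "opcrit_case_notes": ["SZ", "BP", "NOS", "SZ"],
    "rpmip": ["SZ", "BP", "SZ", "SZ"],
    "consensus": ["SZ", "BP", "SZ", "BP"],
}
rate = full_concordance(example)
print(f"full concordance = {rate:.1%}")
```

Full concordance can only fall or stay the same as more procedures are added, so it is a stricter criterion than any single pairwise agreement figure.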

Since OPCRIT and the RPMIP are both polydiagnostic procedures, kappa values for common historical diagnoses, including Feighner, RDC, Schneider, Taylor and Abrams (schizophrenia) and Bouffée Délirante (Licet-S) [2,3] were also derived. These again proved to be very poor, with the highest agreement occurring between the OPCRIT case note rating and the RPMIP for Schneider's classification of schizophrenia (κ = 0.38, SE = 0.14).

Discussion

This study has demonstrated that the pairwise concordance of DSM-III-R diagnoses assigned by OPCRIT alone using two forms of case material with DSM-III-R diagnoses assigned by four other methods is poor and substantially lower than the pairwise comparisons between the remaining four methods. It is acknowledged that one of the original four methods, the consensus procedure, was to some extent retrospective, like OPCRIT. However, there was always one clinician present who knew the patient well during this procedure and who could answer specific questions regarding missing data. The overall results are of some concern and have important implications.

The sources of discordance are relatively simple to identify and principally involve information variance. A case record or abstract is a clinical tool, not a research database, as emphasised by Lützoft et al. [24]. Features not recorded in the abstract or case notes are not necessarily absent. They may have been missed, not recorded, not felt to be important for diagnosis or management or interpreted differently. The sequence and prominence of symptoms, critical for diagnostic assignment, may not have been clearly recorded and those features that have been recorded may not have been rated according to strict glossary definitions.

A clue to the weakness of the data source and the method can be found in earlier OPCRIT studies, where 20–40% of cases met criteria for atypical psychosis (DSM-III) or Psychotic Disorder NOS (DSM-III-R), although the rate in the present study was somewhat lower, especially where the complete case notes were used. In the earlier work, however, the authors inappropriately blamed the diagnostic system rather than the retrospective methodology, which is compromised by missing or limited data [25]. This is particularly the case with clinical abstracts, though OPCRIT applied to detailed case notes did not achieve any higher concordance in this study. A further issue contributing to discordance could relate to the content of the algorithms, which often blend low-quality, elemental data (even when the data set is complete). This relates to the complexity and the quality of the checklist itself.

This raises the question of what level of quality of information (if any) would suffice for OPCRIT or any similar approach to be used as a stand-alone procedure. OPCRIT was developed in an academic unit which prides itself on producing exemplary case notes. However, the situation was confused somewhat in the original research [6], since the authors were not clear as to the extent to which case notes were augmented by the PSE ratings, and they included OPCRIT ratings made from correspondence to general practitioners. This suggests that the quality of the data set must have been extremely variable, yet buttressed in many cases. Our study was also conducted in a very active research environment with a 12-year focus on diagnostic research, including participation in both the ICD-10 and DSM-IV field trials for psychotic disorders. We believe the quality of our case notes and clinical abstracts to be also very good, and more than adequate for the purpose for which they were intended, namely clinical management. They have also functioned as a useful accessory source of information to contribute to psychopathological ratings in our research and feed into the RPMIP procedure [2,3].

In our opinion, the results of this study indicate that the role of case notes and related material in diagnostic assignment should be restricted to an adjunctive one. Consequently, procedures such as OPCRIT should function solely as accessories in a more global task of diagnostic assignment centred around direct subject interview. The authors of OPCRIT suggested this accessory role as one option, although they also indicated that the instrument can be used on its own [6]. This appears to be the approach adopted in the Schedules for Clinical Assessment in Neuropsychiatry (SCAN) [26], where the Item Group Checklist (IGC) functions in a complementary manner to the PSE 10. Given that the dominant research strategy in psychosis is based around the reduction of heterogeneity within psychotic disorders [27], the minimisation of the risk of misclassification seems essential. The findings of the present study must bring into question the conclusions of any studies that have relied solely on OPCRIT or similar procedures to assign diagnoses retrospectively using only case record material.

Acknowledgments

The authors would like to acknowledge the statistical support of Susan Harrigan and Paul Dudgeon and the financial support of VicHealth (Victorian Health Promotion Foundation) and the National Health and Medical Research Council.