Abstract

Objective:

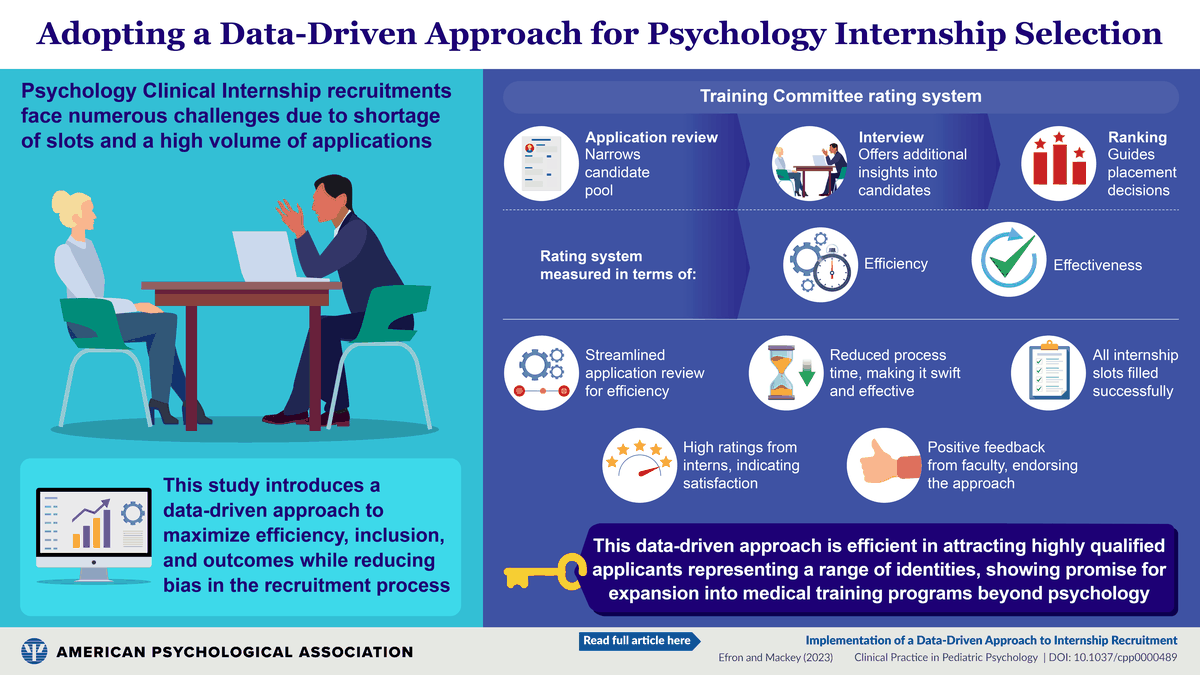

Recruitment for Psychology Clinical Internship is a complex and labor-intensive process. The objective of this study is to describe a data-driven procedure to maximize objectivity and optimize outcomes of internship recruitment.

Method:

The Psychology Training Committee designed a structured rating system for online applications and interviews that incorporates both objective and subjective data. Perceptions of the efficiency and effectiveness of this approach were assessed via a survey distributed to the approximately 50 faculty members who have participated in the internship recruitment process. Intern perceptions following completion of the program were also assessed.

Results:

This recruitment procedure is highly efficient: reviewing applications, developing a list of candidates to interview, and generating final rankings of the candidates interviewed are completed quickly, with limited burden on training faculty. The system has also demonstrated effectiveness: there have been no unfilled internship slots, and all matched applicants have been in the top half of each year's rank list. All interns during this period successfully completed the program, and data suggest that the training they received met or exceeded their expectations. Survey data from training faculty echo these findings.

Conclusions:

This data-driven approach to internship recruitment has been easy to implement. This approach provides a highly effective and flexible system for ranking a diverse and qualified group of applicants and has the potential to be utilized by other training programs within psychology, as well as across a wide range of disciplines within the medical field.

Implications for Impact Statement

This study describes a data-driven procedure developed to maximize objectivity and optimize outcome for internship recruitment. The approach reviewed has demonstrated efficiency and effectiveness for recruiting a diverse and qualified group of applicants. It has the potential to be utilized by other training programs within psychology and medicine more generally.

Recruitment for Psychology Clinical Internship Programs occurs yearly, as a 1-year clinical internship is required to receive a doctorate in Clinical Psychology. The number of applicants to Psychology Clinical Internship Programs frequently exceeds the number of available internship slots, creating a chronic internship shortage nationwide (Baker et al., 2007; Brock et al., 2015; Rodolfa et al., 2007). In the 2021 internship match, there were 3,515 APA-accredited internship slots available and 4,139 applicants (Association of Psychology Postdoctoral and Internship Centers [APPIC], 2021). The imbalance between internship positions and applicants has received considerable attention, resulting in significant efforts to increase the number of internship opportunities available nationwide. Nevertheless, because of the chronic shortage, students often apply to a large number of internship programs in the hope of increasing their likelihood of matching; the median number of applications submitted by applicants in Phase 1 of the 2021 match was 15 (APPIC, 2021). Consequently, because of both the internship shortage and the requirement of internship for a doctorate in Clinical Psychology, internship programs receive a high volume of applications every year.

For APA-accredited internship programs, a standardized application process takes place each fall and winter. Specifically, applications are due in or around November, interviews are conducted in December or January, and rankings are due to the national match service at the beginning of February. Thus, the timeline is relatively short; internship sites complete the entire screening and selection process within a 3-month period. Given the volume of applications that require review, the time involved in interviewing a subset of applicants, and the short duration of the entire process, internship recruitment is complex and labor-intensive for internship sites nationwide. It is therefore highly desirable to develop efficient recruitment procedures. In doing so, however, it is imperative to maintain focus on the ultimate goal, which is to match with highly competent students who are a good fit for the particular program. The internship year is a critical component of training for future clinical psychologists; it is an intensive clinical experience during which students are trained in the clinical competencies necessary to deliver clinical services effectively. It is therefore important for graduate students to be matched with programs that are consistent with their training background and goals.

Efficient internship recruitment must include efforts to ensure diversity in internship cohorts. Increasing diversity among pediatric and child clinical psychologists is of critical importance (American Psychological Association, 2003). Therefore, it is crucial to consider the ways in which representation is captured in all stages of the recruitment process, and it is important to develop standardized procedures for doing so.

Finally, it is ideal to use efficient recruitment procedures that are structured to allow adaptation to changing circumstances. The COVID-19 pandemic, and the corresponding shift to virtual interviewing, highlighted the need for flexible recruitment procedures. More common situations, such as sudden changes in funding or in faculty involvement in training, can also necessitate flexibility in the recruitment process. Furthermore, because internship programs may change their focus over the years for a variety of reasons, and because training trends change over time, it is optimal to use recruitment procedures that are easily adapted to the changing landscape of the training program.

Internship training directors continuously explore avenues to streamline recruitment while maintaining three goals: matching with highly competent trainees who are a good fit for their particular program, ensuring diversity in each cohort, and maintaining enough flexibility to adapt to changing circumstances. Previous research aimed at developing screening measures of internship readiness (e.g., competencies and experiences) has highlighted the need for such tools while demonstrating the difficulty of capturing the full range of applicant experiences in a single measure (Power et al., 2011). Recent work also emphasizes the importance of socially responsible practices in internship recruitment (Glover et al., 2022). However, no official standard or guidance exists for this process, and none examines the process across both application review and interviews. As such, the primary objective of this study is to describe a data-driven procedure developed by our site to maximize efficiency and optimize outcomes of recruitment to a Psychology Clinical Internship program.

Method

Recruitment Procedure

The Psychology Training Committee at Children’s National Hospital designed a multistage process to maximize efficiency and objectivity in the internship recruitment process (see Figure 1). Although we do not publish all of the proprietary forms and ranking formulas, programs are invited to contact the authors for additional information regarding specifics of these processes. First, a rating system to review applications submitted for the Psychology Clinical Internship Program was developed; the overall goal of this system is to determine which 40–45 applicants, from a pool of approximately 200 applications, would be invited to interview for one of four positions in our Psychology Clinical Internship.

Figure 1. Recruitment Process Overview (infographic)

Application Review

A detailed Application Rating Form was developed, which includes minimum criteria for the internship (e.g., number of child and adolescent therapy cases, number of assessment cases) as well as more advanced criteria (e.g., quality of experiences, quality of cover letters, essays, and recommendations). Each application submitted to our program is reviewed by two psychology faculty members using this form. Raters have the option of completing a paper version of the form or completing it online (via REDCap). Over the years, there has been a clear shift toward the online version; in the 2020–2021 recruitment season, all faculty completed the form online.

More specifically, the criteria on the Application Rating Form include information about the quality and quantity of assessment and treatment experiences, as well as the caliber of academic achievements reported by applicants. Information pertaining to applicants’ membership in underrepresented groups (e.g., with regard to race/ethnicity, ability status, sexual orientation/gender identity) is also noted. Ratings include an overall score based on criteria that the Psychology Training Committee has determined to be important for success in this particular program. Each year, the form is reviewed and small modifications are made with input from both the Psychology Training and Diversity Committees. As such, the number of items on the form may change from year to year, but reviewing each application generally takes a faculty member approximately 30 min. The two faculty reviewers assigned to each application rate it independently. Reviewer pairings are made thoughtfully, with consideration of each faculty member’s experience in the recruitment process (i.e., faculty members newer to the process are paired with more experienced ones) as well as their areas of specialization (e.g., a faculty member with expertise in neuropsychology would be paired with someone with expertise in a different area, such as pediatric psychology). Faculty members rate applications over a period of approximately 2 weeks. After faculty members complete their ratings, the data are exported into SPSS for analysis. A list of the 40–45 top-rated applicants is generated, and those applicants are then invited to attend one of two interview days (either in person or, as in the 2020–2021 recruitment season, virtually). Until 2021, the list of top-rated applicants was generated using the overall ratings as final scores.
In the 2020–2021 recruitment season, the Application Rating Form was modified so that raters provided individual ratings on sections of the form (e.g., clinical, research, commitment to health equity, and match with the program) rather than one global rating. These ratings, rather than one overall rating, were then used in a weighted formula to provide a total application score. The application score was used to select the top applicants who would be invited to interview for a slot in our program. It was also ultimately entered into our algorithm for ranking candidates who interviewed at our site.
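The weighted application score described above can be illustrated with a minimal sketch. The program does not publish its section weights or rating scale, so the weights in `SECTION_WEIGHTS`, the assumed 1 to 5 rating scale, and the function names `application_score` and `shortlist` are hypothetical placeholders. The sketch computes each rater's weighted total, averages the two raters' totals, and keeps the highest-scoring applicants for interview invitations.

```python
from statistics import mean

# Hypothetical section weights (the program's actual weights are not published).
SECTION_WEIGHTS = {
    "clinical": 0.35,
    "research": 0.20,
    "health_equity": 0.20,
    "program_match": 0.25,
}


def application_score(section_ratings: dict[str, float]) -> float:
    """Weighted total for one rater's section ratings (assumed 1-5 scale)."""
    return sum(weight * section_ratings[section] for section, weight in SECTION_WEIGHTS.items())


def shortlist(applications: dict[str, list[dict[str, float]]], n_invites: int) -> list[str]:
    """Average each applicant's raters' weighted totals and return the top n applicants."""
    averaged = {
        applicant: mean(application_score(ratings) for ratings in rater_forms)
        for applicant, rater_forms in applications.items()
    }
    return sorted(averaged, key=averaged.get, reverse=True)[:n_invites]
```

In practice `n_invites` would be set to the 40–45 interview slots; adjusting the program's priorities amounts to editing the weight table rather than the procedure.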

Interview Process

The Psychology Training Committee also developed a semistructured interview system for our faculty to use on the two internship interview days. Approximately 35 of our roughly 50 faculty members participate in internship interviews each year. The number of interviews each faculty member conducts across the two interview days ranges from two to eight, with most faculty members interviewing a total of four candidates. Each applicant is interviewed by three faculty members over the course of their interview day, on topics including clinical experience, research/dissertation experience, training, professional goals, and match with the program. Before the interview days, training directors review the cover letters submitted by interviewees to determine each applicant’s primary areas of interest. Efforts are then made to pair each applicant with two interviewers who share their primary areas of interest and one interviewer who does not. During each interview, the faculty member uses an Interview Rating Form that outlines the specific information to be obtained from the applicant in that particular interview. The questions depend on whether the interview is the applicant’s first, second, or third of the day: regardless of who the interviewer is, every applicant’s first interview covers one set of questions, the second interview covers a different set, and the third covers yet another set. Following each interview, the interviewer completes the Interview Rating Form, rating the applicant on a series of dimensions (e.g., match with our program, ability to articulate information about clinical cases and working with diverse populations, interpersonal skills).
This form incorporates both quantitative and more subjective data. After all interviews are completed (i.e., after the second interview day), each faculty member ranks all of the applicants they personally interviewed across the two interview days. Notably, this procedure represents a change from our previous system, in which each faculty member determined the focus and content of the interview as well as what information factored into a single global rating of the applicant.
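The interviewer-pairing rule above (two interviewers who share the applicant's primary interest, one who does not) can be sketched as a simple greedy assignment. This is a hypothetical illustration, not the site's actual scheduling method: the `Interviewer` class, the per-faculty `capacity` limit, the preference for the least-loaded faculty, and the function name `assign_panel` are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Interviewer:
    name: str
    interests: set[str]
    capacity: int  # hypothetical cap on interviews across both days (text: two to eight)
    assigned: list[str] = field(default_factory=list)


def assign_panel(applicant: str, interest: str, faculty: list[Interviewer]) -> list[str]:
    """Pick two interviewers sharing the applicant's primary interest and one who does not."""
    available = [f for f in faculty if len(f.assigned) < f.capacity]
    # Prefer the least-loaded faculty so interview counts stay balanced (stable sort).
    available.sort(key=lambda f: len(f.assigned))
    matching = [f for f in available if interest in f.interests][:2]
    other = [f for f in available if interest not in f.interests][:1]
    if len(matching) < 2 or not other:
        raise ValueError(f"not enough available interviewers for {applicant}")
    panel = matching + other
    for f in panel:
        f.assigned.append(applicant)
    return [f.name for f in panel]
```

The position of each interviewer in the returned panel could then determine which of the three question sets that interviewer uses.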

Match Ranking

Finally, the Psychology Training Committee created a ranking formula that combines the information obtained from the application ratings and the faculty interviews, yielding 20 data points per candidate (i.e., two overall application rating scores, three overall interview rating scores, three rankings from the interviewers, each of whom ranks all of the applicants they interviewed, and four factor ratings from each of the three interviewers). Additionally, a yes/no variable indicating whether an applicant is known to represent a minoritized group (e.g., one rating for membership in a racial/ethnic minoritized group and a second for gender identity/sexual orientation, lower socioeconomic status, first-generation scholar status, etc.) is obtained from the application. This information is included in the data set but is used only after the initial rankings are generated. SPSS syntax runs the algorithm on the entered data, providing an efficient, data-driven method for rank-ordering candidates. Specifically, a 50% weight is given to the average of the three overall interview scores, a 25% weight to the average of the applicant’s rank orders across the three interviewers, a 20% weight to the average of the ratings of important factors (i.e., enthusiasm, match, interest in supervising, interpersonal skills), and a 5% weight to the average of the two application ratings. Once the ranks are complete, the diversity variable is considered. First, the overall percentage of interviewees representing diverse backgrounds is tallied, and then the percentage in each of the top, middle, and bottom thirds of the rank list is reviewed to ensure that diverse candidates are not disproportionately represented in the middle or bottom thirds. If diverse candidates are evenly distributed, diverse status is used to break any ties in the rankings in favor of the diverse candidates.
If they are not evenly distributed, all diverse candidates receive a 5% score adjustment in their favor, and the ranking formula is rerun to generate the final rankings.
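The ranking formula and diversity check described above can be sketched in Python as follows. The 50/25/20/5 weights come from the text; everything else is an assumption, because the site's SPSS syntax is not published: all components are assumed to be on one common scale, interviewer ranks are assumed to have been rescaled so that higher is better, "even distribution" is operationalized with a hypothetical 10-percentage-point `TOLERANCE`, and the "5% change in score" is read as a multiplicative 5% increase.

```python
from dataclasses import dataclass
from statistics import mean

# Weights stated in the text: 50% interview, 25% rank, 20% factors, 5% application.
WEIGHTS = {"interview": 0.50, "rank": 0.25, "factors": 0.20, "application": 0.05}
DIVERSITY_ADJUSTMENT = 0.05  # assumption: the "5% change" is a 5% score increase
TOLERANCE = 0.10             # hypothetical threshold for "disproportionate"


@dataclass
class Candidate:
    name: str
    interview_scores: list[float]    # three overall interview ratings
    rank_scores: list[float]         # three interviewer ranks, rescaled so higher = better (assumption)
    factor_ratings: list[float]      # four factors x three interviewers = 12 ratings
    application_scores: list[float]  # two application ratings
    diverse: bool = False


def composite(c: Candidate, adjustment: float = 0.0) -> float:
    """Weighted composite; all components are assumed to share one scale."""
    score = (
        WEIGHTS["interview"] * mean(c.interview_scores)
        + WEIGHTS["rank"] * mean(c.rank_scores)
        + WEIGHTS["factors"] * mean(c.factor_ratings)
        + WEIGHTS["application"] * mean(c.application_scores)
    )
    return score * (1 + adjustment) if c.diverse else score


def rank(cands: list[Candidate], adjustment: float = 0.0) -> list[Candidate]:
    """Sort by composite, highest first; exact ties go to diverse candidates."""
    return sorted(cands, key=lambda c: (composite(c, adjustment), c.diverse), reverse=True)


def share_by_third(ranked: list[Candidate]) -> list[float]:
    """Proportion of diverse candidates in the top, middle, and bottom thirds."""
    n = len(ranked)
    cuts = [0, round(n / 3), round(2 * n / 3), n]
    shares = []
    for start, stop in zip(cuts, cuts[1:]):
        third = ranked[start:stop]
        shares.append(sum(c.diverse for c in third) / len(third) if third else 0.0)
    return shares


def final_ranking(cands: list[Candidate]) -> list[Candidate]:
    ranked = rank(cands)
    overall = sum(c.diverse for c in cands) / len(cands)
    _, middle, bottom = share_by_third(ranked)
    if middle <= overall + TOLERANCE and bottom <= overall + TOLERANCE:
        return ranked  # evenly distributed: diverse status only breaks ties
    # Otherwise adjust diverse candidates' scores and rerun the formula.
    return rank(cands, DIVERSITY_ADJUSTMENT)
```

Keeping the weights in one table mirrors the text's point that the formula can be reweighted as program priorities change.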

Faculty Survey Procedure

To assess faculty perceptions of the current recruitment process and how it compares with the previously used system, the study authors designed a survey and distributed it to the 50 current psychology and neuropsychology faculty members who have, at some point, participated in internship recruitment at our institution. Survey questions focused on overall impressions of the recruitment process, positive and negative aspects of the process, and perceptions of how diversity is considered in recruitment; respondents who had also participated in the former recruitment process were asked to compare the two approaches.

It should be noted that the former system utilized at our site differed from the one currently used in several ways. First, the internship applicants had two, rather than three, interviews. Second, as mentioned previously, the content of each interview was determined entirely by the faculty member conducting the interview (oftentimes resulting in much duplication of information obtained from the applicant). Third, following the two interview days, a 3-hr faculty discussion of all candidates was held. Finally, based on that discussion, all faculty members ranked all candidates, without the use of data, and these rankings were then summarized by the training directors to generate the final rankings of internship candidates.

Measures

We examined indicators of efficiency, effectiveness, and faculty perceptions of efficiency and effectiveness of the recruitment process.

Efficiency

To evaluate efficiency, we used the length of time required for all faculty application reviews to be completed so that interview invitations could be extended (faculty were not asked to time how long they spent reviewing each application), as well as the length of time required to generate a rank list after interviews. We also surveyed faculty about their perceptions of the efficiency of the recruitment process overall and of specific aspects of the review process. Questions on efficiency asked whether the efficiency of the process, and the procedure for completing and submitting ratings, were positive or negative aspects of the application rating experience. The percentages of faculty reporting these aspects as strengths or challenges were tallied as indicators of perceived efficiency.

Effectiveness

Intern Ratings of Internship Program Satisfaction by Training Domain

In addition, we surveyed the faculty who have participated in the internship recruitment process about their perceptions of its effectiveness. Visual slider scales ranging from 0 to 100 were used to capture overall perceptions of the effectiveness of the application and interview rating processes and to compare the effectiveness and ease of the current system with those of the prior system. Space for qualitative feedback was provided. These questions covered the process as a whole as well as specific items related to the effectiveness of the current system in recruiting a diverse intern class.

This project was unfunded and was certified exempt by the institution’s Institutional Review Board (IRB) based on its status as a quality improvement project with no risk. Regarding the faculty survey component of the study, an information sheet approved by the institution’s IRB was included at the start of the anonymous survey for the faculty, which described the study, voluntary nature of participation, and potential risks involved in participation.

Results

Participants

Efficiency and effectiveness are reported for the 2014 to 2020 application cycles. Data on intern perceptions of whether the program met their training goals include interns from 2016 to 2020, because 2016 was the year that the Standards of Accreditation changed and the Application Rating Form was altered to reflect these updates. Additionally, surveys were sent to the 50 current faculty members who have participated in the internship recruitment process; 38 (76%) returned the survey.

Efficiency

To evaluate efficiency, we assessed the speed of review of internship applications. Under our current recruitment system, faculty members have 2 weeks to rate the 12–15 applications assigned to them. The Application Rating Form has been designed to maximize efficiency: the items on the rating scale follow the order in which the information appears in the actual application, and minimum criteria are rated first so that the remainder of the form need not be completed if minimum criteria are not met. All faculty have consistently completed their ratings within the allotted 2 weeks, making this a very efficient process. After application ratings are submitted, the training directors resolve any significant discrepancies between raters by reviewing the applications in question and determine which 40–45 applicants will be invited to interview. This process takes less than 2 hr and involves only the training directors. The use of REDCap improves efficiency, as data are available immediately for analysis without needing to be entered into a database by hand, which also reduces the likelihood of data-entry errors. Interviewers also use REDCap to submit their interview ratings, giving the training directors immediate access to those data. Reviewing and cleaning the interview data takes approximately 30 min, and running the scoring algorithm to produce the initial rank list takes approximately 5 min. Reviewing the ranks, breaking ties, and checking for “red flags” noted by faculty interviewers takes approximately 60 min. This quick process of generating rankings is in striking contrast to the former procedure, which included a very lengthy ranking meeting that all faculty were required to attend.
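A back-of-the-envelope estimate of the application-review workload follows from the approximate figures given in the text: about 200 applications, two raters per application, about 30 min per review, and 12–15 applications per rater. These are rough derived quantities under those approximations, not reported measurements.

```latex
\begin{aligned}
\text{ratings per season} &\approx 200 \times 2 = 400 \\
\text{total rating time} &\approx 400 \times 0.5\,\text{hr} = 200\ \text{faculty-hours} \\
\text{time per rater} &\approx (12 \text{ to } 15) \times 0.5\,\text{hr} = 6 \text{ to } 7.5\ \text{hr over 2 weeks} \\
\text{raters implied} &\approx 400/15 \text{ to } 400/12 \approx 27 \text{ to } 33
\end{aligned}
```

Under the same assumptions, having each application read by one rather than two faculty members would halve the first two quantities.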

Effectiveness

The primary outcomes of interest are whether slots are filled in the match, which candidates match, and whether interns successfully complete the program and feel that it has met its training goals. To date, there have been no unfilled internship slots, and all matched applicants have been in the top half of the rank list each year. All interns have successfully completed the program. On completing the program, interns rate whether it has met each of the training objectives set out by the Commission on Accreditation (i.e., research; ethical and legal standards; individual and cultural diversity; professional values, attitudes, and behaviors; communication and interpersonal skills; assessment; intervention; and supervision) on a scale of 0 (not met), 1 (met), or 2 (exceeded expectations). Intern ratings indicated that the program met or exceeded expectations for training in all competency domains (see Table 1).

Another indicator of the system’s effectiveness is the level of agreement among raters using it. Across the 6 years tracked using this system, application raters agreed 77% of the time on whether an applicant should be recommended for an interview, indicating a relatively high level of agreement.
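The agreement figure above is simple percent agreement on the yes/no interview recommendation. A minimal sketch of that calculation follows, together with Cohen's kappa, a standard chance-corrected statistic that is swapped in here for illustration and was not reported in the study. The function names and the input format (one pair of raters' recommendations per application) are assumptions.

```python
from collections import Counter


def percent_agreement(pairs: list[tuple[bool, bool]]) -> float:
    """Share of applications on which both raters made the same interview recommendation."""
    return sum(a == b for a, b in pairs) / len(pairs)


def cohens_kappa(pairs: list[tuple[bool, bool]]) -> float:
    """Agreement corrected for chance: (p_o - p_e) / (1 - p_e)."""
    n = len(pairs)
    p_o = percent_agreement(pairs)
    first = Counter(a for a, _ in pairs)
    second = Counter(b for _, b in pairs)
    # Expected agreement if each rater recommended at their observed rate independently.
    p_e = sum(first[k] * second[k] for k in first.keys() | second.keys()) / n**2
    if p_e == 1:
        raise ValueError("kappa is undefined when expected agreement is 1")
    return (p_o - p_e) / (1 - p_e)
```

Because a large share of applicants may be declined by both raters, raw percent agreement can look high partly by chance; kappa makes that visible.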

Faculty Perception of Recruitment Efficiency and Effectiveness

Qualitative Feedback From Faculty Survey

Note. APPIC = Association of Psychology Postdoctoral and Internship Centers; REDCap = Research Electronic Data Capture.

Faculty Responses to Recruitment Survey

It is noteworthy that of the 15 faculty members who had participated in both the former and current recruitment systems, there was an overwhelming preference for the current, more data-driven system. For example, on a visual scale of 0 (preferred former system) to 100 (preferred current system), the current application rating process was deemed preferable (M = 86.0, SD = 17.2, range = 50–100) with no respondent preferring the former system. There was also preference for the current interview system (M = 83.9, SD = 19.3, range = 29–100) and for the current ranking process (M = 84.2, SD = 19.5, range = 40–100).

Discussion

The current study is the first to describe a pragmatic procedure for managing the complex process of internship recruitment. The absence of thorough descriptions such as this one, or of basic guidelines, is striking, given that all internship programs must find a way to manage recruitment effectively and efficiently. Descriptions of this sort are likely to be especially helpful for training faculty involved in developing new internship programs.

Study findings suggest that the recruitment procedures developed and used at our site are effective from the standpoint of both the interns we train and the program itself. To date, there have been no unfilled internship slots at our site following the Match, and all matched applicants have been in the top half of the rank list each year. Furthermore, all interns have successfully completed the program and have rated the internship as meeting or exceeding expectations. Because internship bridges the gap between graduate school and the professional world, it is a critical year of training for future psychologists, and applicants seek out internship programs that fit their training goals. Our survey data, collected from interns upon completion of the program, suggest that interns recruited by our site felt they received the training they expected from their internship experience. Of note, we attempt to give applicants access to as much information as possible about our program before they submit their rankings, so that they are in the best possible position to determine whether our program is a good fit for their goals. To this end, we strive to provide detailed and up-to-date information in our brochure and on the hospital website. We also make the interview day as informative as possible, so that applicants can get an accurate sense of what our program has to offer. Data from a yearly survey of the applicants we interview (conducted after rankings are made but before match results are revealed) suggest that the opportunities to talk in depth with faculty and current interns are among the most important components of the interview day (Mackey & Efron, 2019).

From the standpoint of the program, too, the recruitment procedures described above are effective. To make the most of the internship year, it is critical for internship programs to match with trainees who have the educational background, experience, and interest in the training opportunities available at a given site. Because there are no standardized measures of clinical or professional competency (Callahan et al., 2014), what constitutes effective recruitment will be specific to individual programs. Poor “fit” between interns and the site often leaves interns unable to meet the program’s training goals, contributes to intern dissatisfaction, and increases the burden on training faculty. Furthermore, there are no established mechanisms for interns to transfer from one internship program to another in the middle of the training year, making it critical for programs to use effective recruitment procedures and ultimately match with trainees who are a good fit.

Ideally, an effective recruitment system is also efficient. The recruitment timeline is short and the volume of applications is large, making internship recruitment a substantial task to complete in a relatively brief period. Furthermore, the recruitment season falls at a busy time of year, when faculty face many competing demands (e.g., winter holidays, deadlines at the end/beginning of the calendar year and semester). The procedure described above limits the burden on training faculty while ensuring their substantial input in the recruitment process. Although survey data suggest that training faculty perceive the system to be efficient, we continually strive to improve our procedures and increase efficiency without compromising effectiveness. Over the years, we have implemented a number of changes to streamline our recruitment procedure, and data from our survey of faculty who participated in both the former and current procedures suggest a strong preference for the current system. Of note, some faculty felt that the application rating procedure was too long and cumbersome; we have explored ways to streamline the Application Rating Form and have also considered having each application read by one, rather than two, faculty members, which would halve the number of applications each faculty member reviews.

Internship recruitment must prioritize efforts to increase diversity among pediatric and child clinical psychologists (American Psychological Association, 2003). As such, there is a real need to develop recruitment procedures in which diverse representation is monitored at all stages of the recruitment process (Glover et al., 2022). This requires that training faculty have a proactive plan for addressing imbalances in minority representation among applicants. There currently exists no official guidance for how to do this, making it especially important for programs to share the procedures they have developed for this purpose. Additionally, APPIC does not include information on identity in the application. Therefore, at our site, the Psychology Training Committee and Psychology Diversity Committee work closely together to determine how best to communicate to applicants the value placed on diversity by our program and institution. Our brochure and website highlight the importance we place on recruiting and training interns from diverse backgrounds. We are intentional in this not only to ensure that applicants to our program are invested in the same goals with regard to health equity, but also to encourage applicants to self-disclose aspects of their identity that are not gathered in the APPIC application. This information on equity and inclusion is reiterated and expanded upon during interview day, during an introduction session provided by the Psychology Diversity Committee. In addition, our Application Rating Form and Interview Rating Form include variables relating to applicants’ diverse status as well as their commitment to work with individuals from diverse backgrounds (e.g., additional experiences sought, focus on health equity in research, participation in committees/working groups); in this way, issues pertaining to diversity are incorporated into our overall ratings of applicants’ fit with our program. 
Once the recruitment data are subjected to the algorithm and preliminary rankings are generated, a specific procedure is utilized to ensure that diverse applicants are represented in the top third of the rankings. Lastly, when rankings are generated, any ties that exist that involve applicants from diverse backgrounds are broken in favor of those individuals. These procedures have evolved over the years and are reviewed annually.

Finally, the COVID-19 pandemic highlighted the importance of flexibility in internship recruitment. The recruitment procedure described above has proven adaptable to changing circumstances during the pandemic and, more generally, over the years. When this procedure was initially developed, most of it was conducted in person. However, as we learned in the 2020–2021 recruitment year, the system can be implemented entirely remotely if necessary (i.e., remote review of applications, remote applicant interviews, remote data analysis, and remote generation of rankings). Furthermore, the algorithm at the core of our data analysis can easily be modified to reflect changes in the preferences of the training team and in the focus of the program. Relatedly, research suggests that what training programs prioritize in recruitment has changed over time (Ingram et al., 2021); the weighted algorithm used at our site is easily adjusted to reflect trends in internship training. For example, over the years, we have increased our focus on commitment to health equity and representation of minoritized groups in our internship classes, and we have adjusted research expectations with the development of new clinical promotion tracks in academic medical centers. Lastly, the number of training faculty at our site has varied greatly over the years. We currently implement this system with a large training faculty; however, for a number of years the very same system was used with a much smaller group of faculty. The system is easily adapted to changes in the constellation of those using it.

There are important limitations to this approach. Notably, the review and ranking system for interviews relies on data that may be duplicative, because all of the interview data points come from the same interview process. Although this system is not perfect, we have found that allowing interviewers to rate applicants along dimensions of clinical skill, research ability, commitment to health equity, and other indicators of applicant strengths, rather than providing a single rating, allows for greater flexibility and important distinctions (e.g., when a candidate has strengths in research but weaknesses in clinical experience). Additionally, because these data were collected across a number of recruitment years, factors such as variability in forms across years, changes in the faculty reviewing applications, and other process changes can affect the internal validity of the findings as well as their interpretation.

The procedure described earlier provides a framework for managing recruitment that allows those using it to be flexible in how it is implemented and reflective about the process being used. For example, programs can modify the ratings of competencies and experiences to match their specific program focus, change the weighting of the ranking formula to match their site’s priorities, or make any number of other modifications. The purpose of this article is to illustrate this process as a guide to how it might be accomplished across a broad range of programs. Self-assessment is central to this system; as program values shift and other circumstances related to training opportunities change, adjustments can easily be made while the overall approach is retained. Given its adaptability, the system has the potential to be used by a range of training programs within psychology, as well as across a wide range of disciplines within the medical field.